Meet the new Intel processors

Yesterday, April 2, 2019, Intel announced the long-awaited update to the Intel® Xeon® Scalable Processors family, which was introduced in mid-2017. The new processors are based on the microarchitecture codenamed Cascade Lake and are built on an improved 14 nm process.

Features of the new processors

First, take a look at the differences in labeling. In the previous article about Skylake-SP, we mentioned that all processors are divided into four series: Bronze, Silver, Gold, and Platinum. The first digit of the model number indicates the series the processor belongs to:

- 3 - Bronze,

- 4 - Silver,

- 5, 6 - Gold,

- 8 - Platinum.

The second digit indicates the processor generation. For the Intel® Xeon® Scalable Processors family, the generations are code-named as follows:

- 1 - Skylake,

- 2 - Cascade Lake.

The next two digits indicate the so-called SKU (Stock Keeping Unit). In practice, this is simply an identifier for a CPU with a specific set of available features.

The model number may also be followed by a suffix of one or two letters. The first letter indicates an architectural feature or optimization of the processor itself, and the second indicates the memory capacity supported per socket.

For example, take a processor labeled Intel® Xeon® 6240. Decoding it:

- 6 - Gold series processor,

- 2 - Cascade Lake generation,

- 40 - SKU.
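The labeling scheme above can be sketched as a small lookup. This is only an illustration of the rules listed in this article: the function name `decode_model` and the returned field names are made up for the example, and the suffix letters are returned as-is because their individual meanings are not enumerated here.

```python
# Lookup tables taken from the lists in this article.
SERIES = {"3": "Bronze", "4": "Silver", "5": "Gold", "6": "Gold", "8": "Platinum"}
GENERATIONS = {"1": "Skylake", "2": "Cascade Lake"}


def decode_model(model: str) -> dict:
    """Split a model string such as '6240' into series, generation, SKU and suffix."""
    digits, suffix = model[:4], model[4:]
    if len(digits) != 4 or not digits.isdigit():
        raise ValueError(f"expected four leading digits, got {model!r}")
    if digits[0] not in SERIES or digits[1] not in GENERATIONS:
        raise ValueError(f"unknown series or generation digit in {model!r}")
    return {
        "series": SERIES[digits[0]],           # first digit
        "generation": GENERATIONS[digits[1]],  # second digit
        "sku": digits[2:],                     # last two digits
        "suffix": suffix,                      # optional one or two letters
    }


print(decode_model("6240"))
```
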

Performance

The new generation of processors is designed for virtualization, artificial intelligence, and high-performance computing. The first noticeable change is the increase in clock frequency. This was expected, since many server applications depend more on clock speed than on the number of processor cores. One example is the financial product 1C, whose system requirements state explicitly that the higher the processor frequency, the faster the end user gets a result.

In some cases, the number of cores was also increased. For clarity, we have compiled comparison tables for several first- and second-generation processors of the Intel® Xeon® Scalable Processors family:

| | Intel® Xeon® Silver 4114 (10 cores) | Intel® Xeon® Silver 4214 (12 cores) |
| --- | --- | --- |
| Clock frequency | 2.20 GHz | 2.20 GHz |
| In turbo mode | 3.00 GHz | 3.20 GHz |

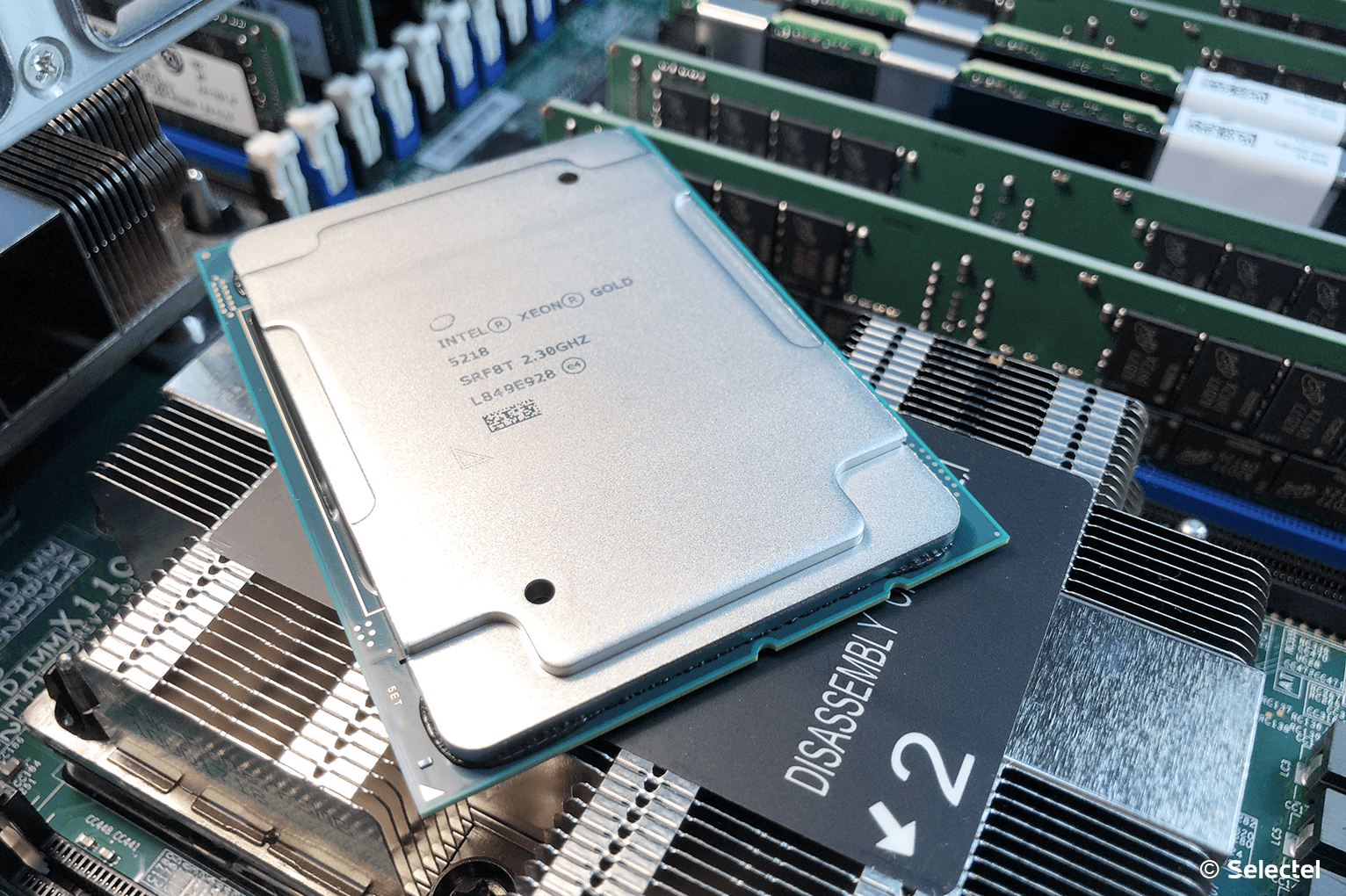

| | Intel® Xeon® Gold 5118 (12 cores) | Intel® Xeon® Gold 5218 (16 cores) |
| --- | --- | --- |
| Clock frequency | 2.30 GHz | 2.30 GHz |
| In turbo mode | 3.20 GHz | 3.90 GHz |

| | Intel® Xeon® Gold 6140 (18 cores) | Intel® Xeon® Gold 6240 (18 cores) |
| --- | --- | --- |
| Clock frequency | 2.30 GHz | 2.60 GHz |
| In turbo mode | 3.70 GHz | 3.90 GHz |

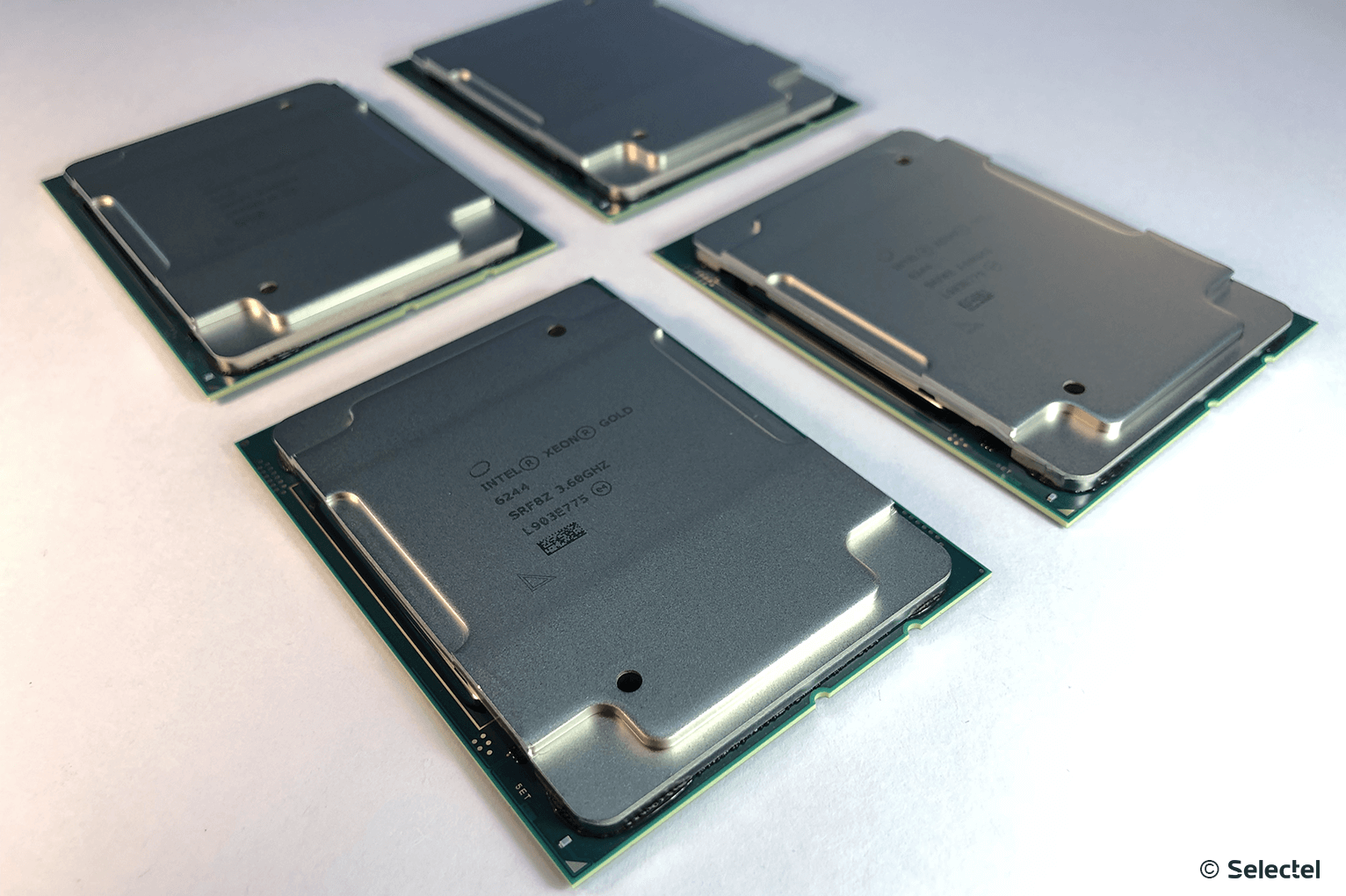

| | Intel® Xeon® Gold 6144 (8 cores) | Intel® Xeon® Gold 6244 (8 cores) |
| --- | --- | --- |
| Clock frequency | 3.50 GHz | 3.60 GHz |
| In turbo mode | 4.20 GHz | 4.40 GHz |

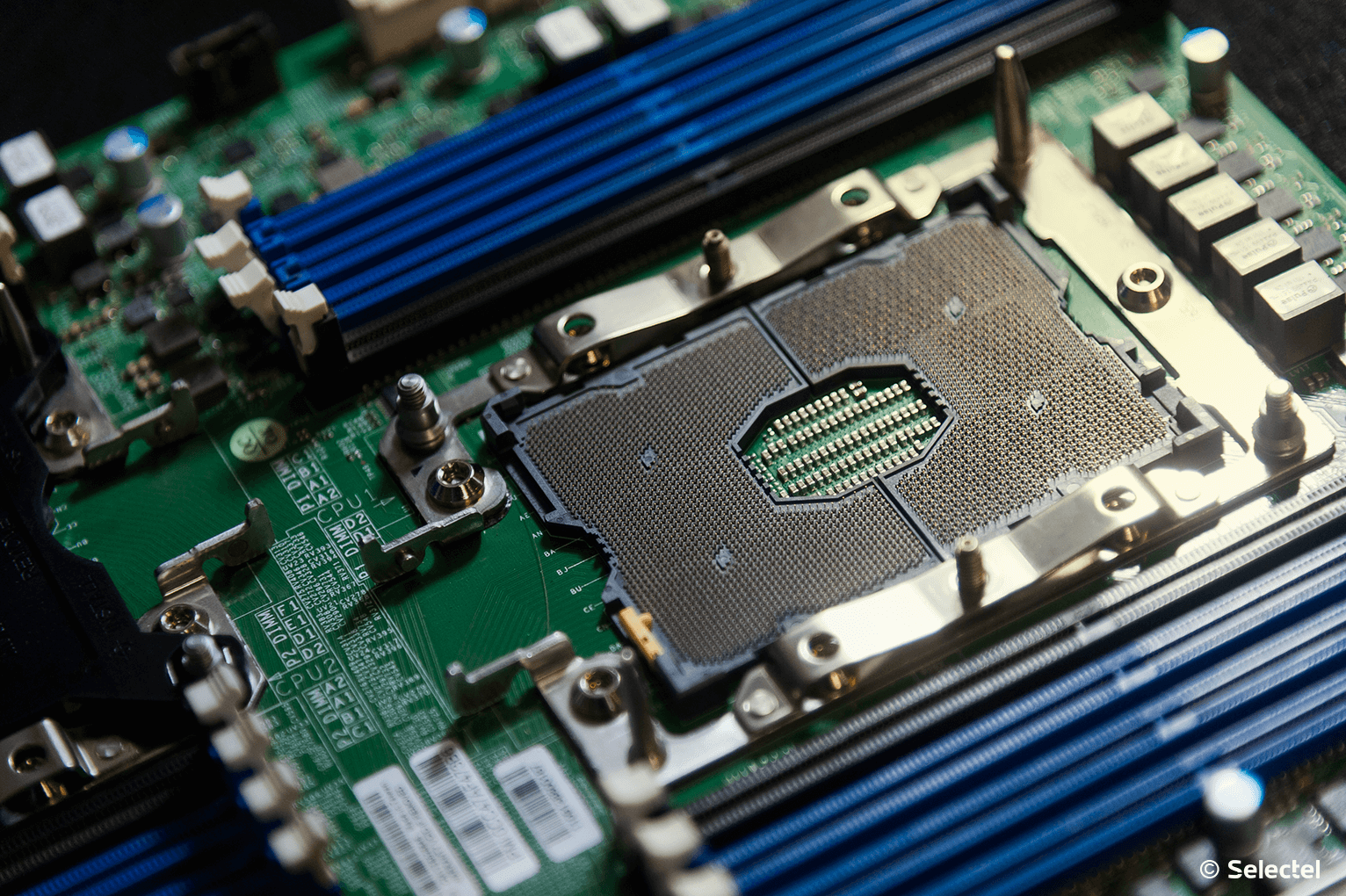

As with the previous Skylake-SP generation, the processors are installed in the LGA3647 socket (Socket P), which is due to the use of a 6-channel memory controller (up to 2 memory modules per channel). The memory speed is 2666 MT/s; however, with 6000- and 8000-series processors you can use 2933 MT/s memory (no more than 1 module per channel).
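To put the two memory speeds in perspective, here is a rough estimate of the theoretical peak bandwidth per socket. It assumes DDR4 modules with a 64-bit data bus per channel (8 bytes per transfer), which this article does not state explicitly; real sustained bandwidth will be lower. The function name is illustrative.

```python
# Assumption: each memory channel moves 8 bytes (64 bits) per transfer.
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gb_s(channels: int, mega_transfers_per_s: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    return channels * mega_transfers_per_s * BYTES_PER_TRANSFER / 1000


for rate in (2666, 2933):
    print(f"6 channels at {rate} MT/s: {peak_bandwidth_gb_s(6, rate):.1f} GB/s")
```

Under these assumptions, moving from 2666 MT/s to 2933 MT/s raises the theoretical ceiling by about 10%, at the cost of being limited to one module per channel.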

The Ultra Path Interconnect (UPI) bus, used successfully in the first-generation Intel Xeon SP processors, remains in the second generation and provides data exchange between processors at 9.6 GT/s or 10.4 GT/s per link. This allows the hardware platform to scale efficiently to 8 physical processors while optimizing bandwidth and energy efficiency.

Tests

We began testing the new generation of processors with the SPEC test suite, which simulates load based on real-world tasks. These tests cover both the simplest calculations and the modeling of various physical processes, for example problems of molecular physics and hydrodynamics.

So far, we have results for some of the SPEC integer tests, using the Intel® Xeon® Gold 6140 and Intel® Xeon® Gold 6240 processors as examples.

Intrate

| Test | Intel® Xeon® Gold 6140 | Intel® Xeon® Gold 6240 |
| --- | --- | --- |
| 500.perlbench_r | 147 | 157 |
| 531.deepsjeng_r | 127 | 139 |
| 541.leela_r | 125 | 127 |
| 548.exchange2_r | 176 | 203 |

Intspeed

| Test | Intel® Xeon® Gold 6140 | Intel® Xeon® Gold 6240 |
| --- | --- | --- |
| 600.perlbench_s | 5.67 | 6.33 |
| 602.gcc_s | 6.95 | 8.74 |
| 641.leela_s | 3.24 | 3.62 |
| 648.exchange2_s | 5.94 | 7.90 |

Test description

- perlbench_r - a stripped-down version of the Perl interpreter. The test load imitates the work of the popular SpamAssassin anti-spam system;

- deepsjeng_r - simulation of a chess game. The server performs an in-depth analysis of game positions using the alpha-beta pruning algorithm;

- leela_r - simulation of a game of Go. The test analyzes move patterns and performs a selective tree search based on upper confidence bounds;

- exchange2_r - generator of non-trivial sudoku puzzles. Written in Fortran 95, it uses most of the array processing functions;

- gcc_s - C language compiler. The test load “compiles” the GCC compiler from source code for the IA-32 microprocessor architecture.

According to the results of the tests, it becomes clear that the new generation processors perform integer calculations faster than the previous generation. We will share the results of other tests in one of the following articles.
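To quantify the difference, the per-test ratios can be computed directly from the tables above. The sketch below uses only the published numbers; the geometric mean over this subset is our own summary figure and is not an official SPEC composite score. It relies on `math.prod`, so it assumes Python 3.8 or later.

```python
from math import prod

# (Gold 6140 score, Gold 6240 score), copied from the tables above.
INTRATE = {
    "500.perlbench_r": (147, 157),
    "531.deepsjeng_r": (127, 139),
    "541.leela_r": (125, 127),
    "548.exchange2_r": (176, 203),
}
INTSPEED = {
    "600.perlbench_s": (5.67, 6.33),
    "602.gcc_s": (6.95, 8.74),
    "641.leela_s": (3.24, 3.62),
    "648.exchange2_s": (5.94, 7.90),
}


def speedups(results: dict) -> dict:
    """Ratio of the new score to the old score for every test."""
    return {name: new / old for name, (old, new) in results.items()}


def geometric_mean(values) -> float:
    """Geometric mean, the usual way to average ratios."""
    values = list(values)
    return prod(values) ** (1 / len(values))


for title, table in (("Intrate", INTRATE), ("Intspeed", INTSPEED)):
    ratios = speedups(table)
    for name, ratio in ratios.items():
        print(f"{title} {name}: {(ratio - 1) * 100:+.1f}%")
    print(f"{title} geometric mean: {(geometric_mean(ratios.values()) - 1) * 100:+.1f}%")
```
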

Intel® Optane™ DC Persistent Memory Support

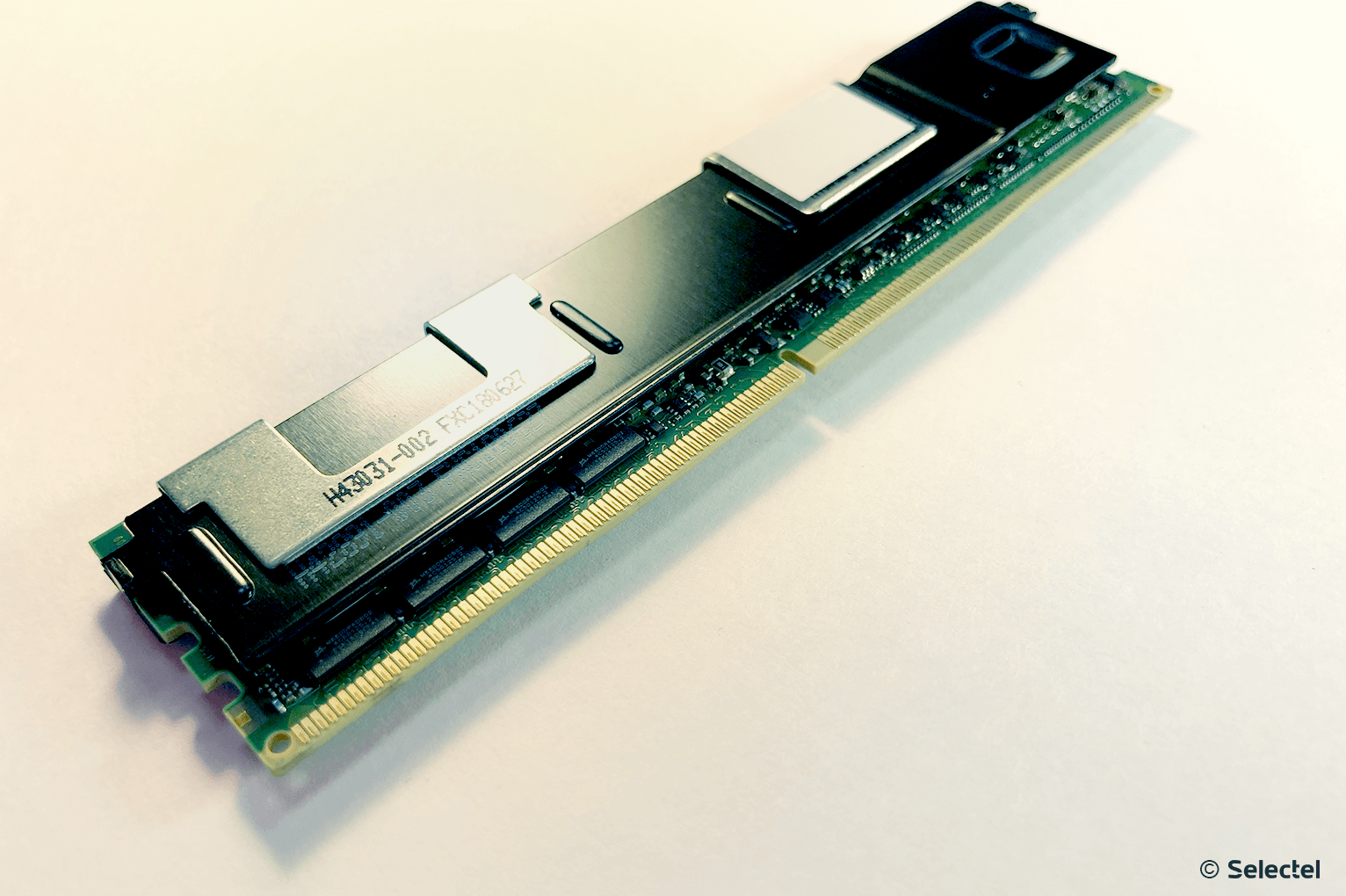

Customers expected this update to speed up heavily loaded databases and applications. A key innovation is therefore support for Intel® Optane™ DC Persistent Memory, better known under the code name Apache Pass.

This memory is intended for cases where installing the required amount of DRAM is not cost-effective, while even flagship SSDs are not fast enough.

A clear example is placing databases directly in Intel® Optane™ DC Persistent Memory, which eliminates the constant data exchange between RAM and a storage device that is inherent in traditional systems.

The new type of memory is installed directly in a DIMM slot and is fully compatible with it. Modules are available in the following capacities:

- 128 GB

- 256 GB

- 512 GB

Modules of this size let you configure the hardware platform flexibly and obtain very large and very fast storage for heavily loaded systems. Intel® Optane™ DC Persistent Memory has considerable potential in many applications, including machine learning.

Faster deep learning

In addition to supporting a new type of memory, Intel engineers worked on accelerating deep learning. Since convolutional neural networks require many multiplications of 8- and 16-bit values, the new processors support the AVX-512 VNNI (Vector Neural Network Instructions) extension, which can speed up these calculations several times.

The best efficiency is achieved with the following instructions:

- VPDPBUSD (for INT8 calculations),

- VPDPWSSD (for INT16 calculations).

The idea is to reduce the number of instructions needed for each multiply-accumulate step. The VPDPWSSD instruction multiplies pairs of INT16 values and adds the products to an INT32 accumulator, replacing the two existing instructions VPMADDWD and VPADDD. The VPDPBUSD instruction likewise replaces the three existing instructions VPMADDUBSW, VPMADDWD, and VPADDD.
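What the two instructions compute can be modeled in plain Python for a single 32-bit lane (a 512-bit register holds 16 such lanes). This is a behavioral sketch of the non-saturating forms, not real SIMD code; the function names are ours and simply mirror the instruction mnemonics.

```python
from typing import Sequence


def wrap_int32(value: int) -> int:
    """Wrap an integer to signed 32-bit two's complement (no saturation)."""
    value &= 0xFFFFFFFF
    return value - 0x100000000 if value >= 0x80000000 else value


def vpdpbusd_lane(acc: int, a_u8: Sequence[int], b_s8: Sequence[int]) -> int:
    """acc += sum of four (unsigned 8-bit * signed 8-bit) products."""
    if len(a_u8) != 4 or len(b_s8) != 4:
        raise ValueError("a lane holds exactly four byte pairs")
    if not all(0 <= x <= 255 for x in a_u8):
        raise ValueError("first operand must be unsigned 8-bit")
    if not all(-128 <= y <= 127 for y in b_s8):
        raise ValueError("second operand must be signed 8-bit")
    return wrap_int32(acc + sum(x * y for x, y in zip(a_u8, b_s8)))


def vpdpwssd_lane(acc: int, a_s16: Sequence[int], b_s16: Sequence[int]) -> int:
    """acc += sum of two (signed 16-bit * signed 16-bit) products."""
    if len(a_s16) != 2 or len(b_s16) != 2:
        raise ValueError("a lane holds exactly two word pairs")
    if not all(-32768 <= v <= 32767 for v in (*a_s16, *b_s16)):
        raise ValueError("operands must be signed 16-bit")
    return wrap_int32(acc + sum(x * y for x, y in zip(a_s16, b_s16)))


print(vpdpbusd_lane(10, [1, 2, 3, 4], [1, -1, 1, -1]))
print(vpdpwssd_lane(0, [1000, -2000], [30, 40]))
```

Each call stands for work that previously took separate multiply, widen, and add instructions per lane.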

Thus, when the new instructions are applied correctly, the number of instructions per multiply-accumulate step drops by a factor of two to three, which increases data processing speed. Support for the new instructions will become part of popular machine learning software libraries.

Load balancing optimization

Balancing the load across computing resources is easier with Intel® Speed Select Technology (available on processors with the Y index). The idea is that each workload is associated with a number of active cores and a clock speed. Depending on the selected profile, resources are allocated in one of two ways:

- more cores, but with a lower clock speed;

- fewer cores, but with increased clock speed.

This approach lets you use resources fully, which is especially important in virtualized environments, and reduces costs by optimizing the load on virtualization hosts.
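The trade-off between the two kinds of profile can be illustrated with a toy selection rule. Everything in this sketch is hypothetical: the profile names, core counts, and frequencies are invented for illustration and do not describe any real processor, and the selection logic is our own simplification, not how Intel® Speed Select Technology is actually configured.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str
    active_cores: int
    base_ghz: float


# Hypothetical profiles, NOT the specification of any real CPU.
PROFILES = (
    Profile("more-cores", active_cores=16, base_ghz=2.0),
    Profile("higher-clock", active_cores=8, base_ghz=3.0),
)


def pick_profile(threads_needed: int, profiles=PROFILES) -> Profile:
    """Pick the highest-clocked profile that still has enough active cores."""
    candidates = [p for p in profiles if p.active_cores >= threads_needed]
    if not candidates:
        # Nothing fits: fall back to the profile with the most cores.
        return max(profiles, key=lambda p: p.active_cores)
    return max(candidates, key=lambda p: p.base_ghz)


for threads in (4, 12):
    chosen = pick_profile(threads)
    print(f"{threads} threads -> {chosen.name} "
          f"({chosen.active_cores} cores at {chosen.base_ghz} GHz)")
```
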

Acceleration of Scientific Computing

Processing scientific data, especially when modeling physical processes at the particle level (for example, calculating electromagnetic interactions), requires an enormous amount of parallel computation. This problem can be solved using a CPU, GPU, or FPGA.

Multi-core CPUs are versatile thanks to the large number of software tools and libraries for data processing. Using a GPU for these purposes is also very effective, because you can run thousands of parallel threads directly on hardware graphics cores. Convenient development frameworks, such as OpenCL and CUDA, let you create applications of any complexity that use GPU computing.

However, there is another hardware tool, which we discussed in previous articles: the FPGA. The ability to program such devices to perform specific calculations lets you speed up data processing and partially offload the CPU. A similar scenario can be implemented on the new Cascade Lake processors in conjunction with discrete Intel® Stratix® 10 SX FPGAs.

Despite a lower clock speed than conventional CPUs, an FPGA can deliver ten times the performance. For some types of tasks, such as digital signal processing, the Intel® Stratix® 10 SX can reach up to 10 TFLOPS (tera floating-point operations per second).

Platform scaling

Doing business in real time requires not only stability but also the ability to scale on demand. A good example is the high-performance SAP HANA platform used for data storage and processing. The physical deployment of this platform requires very powerful hardware resources.

Intel® Xeon® Scalable processors are designed to turn multi-socket systems into core elements of the IT infrastructure, providing scalability to meet the demands of business applications.

This is implemented as support for external node controllers, which let you build configurations larger than a single platform can provide. For example, you can create a configuration of 32 physical processors by combining the resources of several multi-socket platforms into a single system.

Conclusion

Higher clock frequencies and core counts, better performance, and support for Intel® Optane™ DC Persistent Memory: all these improvements significantly increase the computing power of each platform, reducing the amount of equipment required and increasing data processing efficiency. Scalability, built in at the architecture level, lets you build an IT infrastructure of any complexity and achieve high performance and energy efficiency.

As Selectel is an Intel Platinum partner, next-generation Intel® Xeon® Scalable processors are already available to our customers for order in custom-configuration servers.

Renting a server with next-generation processors is easy! Just go to the configurator page and select the necessary components. If you have questions about how the services work, you can ask our specialists by creating a ticket in the control panel. If you pay for a server several months in advance, you get a discount of up to 15%.

If you are interested in participating in testing the latest technologies, then join our Selectel Lab.

We will be glad to hear your questions and suggestions in the comments.