Overview of PlayCanvas features for creating Web VR applications

PlayCanvas is a visual platform for developing interactive web applications. Everything built with PlayCanvas relies on the capabilities of HTML5. PlayCanvas itself is a web application, so there is nothing to install and you can open your project from any device with an Internet connection. Any project you create can be published online with a single click.

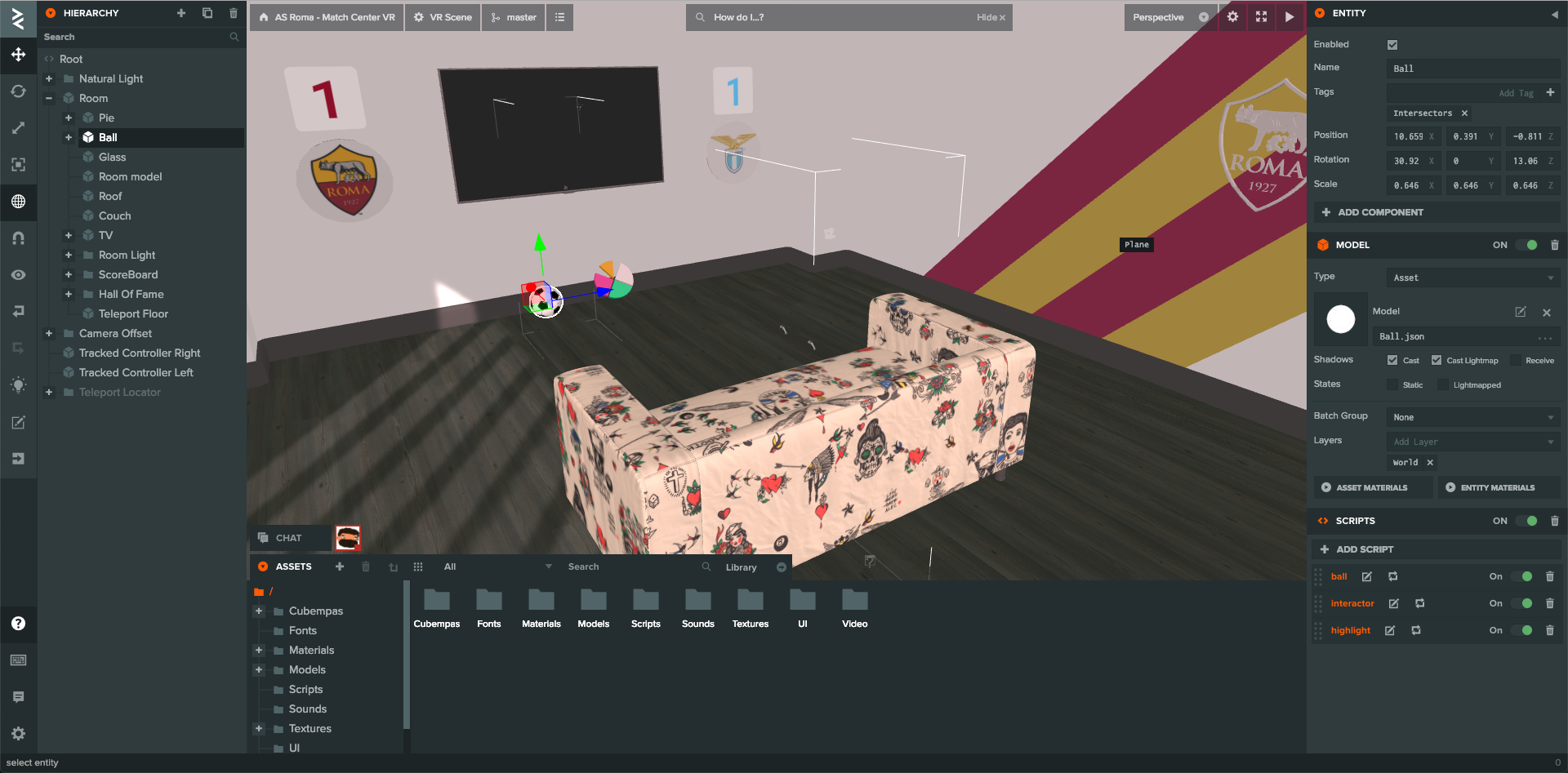

Workflow in PlayCanvas

Everything in PlayCanvas starts with a visual editor.

On the left side of the screen is the entity hierarchy panel. It lets you create both empty entities and predefined ones, such as cameras, lights, primitives, audio sources, user interface elements, particle systems or models. Any entity added to the hierarchy automatically appears in the scene.

In the center of the screen is the scene editor. Here you can rearrange entities, select them for editing, and preview how your application scene will look.

Below the scene editor is the assets panel. Assets are the files and other resources that can be attached to your entities. There are several types of assets in PlayCanvas: folder, CSS, cubemap, HTML, JSON, material, script, shader and text. Each has its own purpose.

And finally, on the right side of the screen is the entity properties panel. Every entity has basic properties: position, rotation, scale, name, tags, and the settings of its components. The properties shown depend on which entity is selected. For example, a cube has the following properties: type, material, shadow settings, layers and groups.

The general process of developing applications and games in PlayCanvas looks roughly like this:

- Add the necessary assets. For example: models, materials, audio, video.

- Create the environment of the scene. For example: a city, a house, a landscape.

- Add interactive elements. For example: a player and their enemies.

- Add application logic using scripts.

- Publish the game or application online.

PlayCanvas and JavaScript

To add logic to a game or application, PlayCanvas provides a dedicated component: the script. Scripts can be global, in which case they are attached to the root entity of the scene hierarchy, or local, in which case they are attached directly to an entity within the hierarchy (for example, the model of the game character). All scripts are written in JavaScript, since we are building games for the browser after all. ES6 fans will unfortunately be disappointed: PlayCanvas still uses ES5, and the built-in linter complains as soon as you use an ES6 construct. In general, a script follows this template:

    var NewScript = pc.createScript('newScript');

    // Attribute that can be edited through the editor interface
    NewScript.attributes.add('someString', {
        type: 'string',
        default: 'any',
        title: 'Some string'
    });

    // Called once, before the first update
    NewScript.prototype.initialize = function () {
        // getPosition() returns a vector owned by the entity, so keep copies
        this.startPosition = this.entity.getPosition().clone();
        this.newPosition = this.startPosition.clone();
    };

    // Called every frame; dt is the time in seconds since the previous frame
    NewScript.prototype.update = function (dt) {
        this.calculateNewPosition();
        this.entity.setPosition(this.newPosition);
    };

    // Custom helper: places the entity one unit above its start position
    NewScript.prototype.calculateNewPosition = function () {
        this.newPosition.add2(this.startPosition, pc.Vec3.UP);
    };

Here we create a new script with two main methods. initialize is called once, when the script starts running on its entity. update is called on every rendered frame. The last method, calculateNewPosition, is a custom one; methods like it help structure the code.

The dt parameter of update is the delta time: the time in seconds that has passed since the previous frame. The following example illustrates it well. Suppose you need to rotate an object by 360 degrees in one second. We write the following code:

    this.entity.rotate(0, 360 * dt, 0);
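To see why multiplying by dt makes the rotation independent of the frame rate, here is a minimal sketch in plain JavaScript, outside of PlayCanvas. The simulateRotation helper is hypothetical: it only adds up the per-frame angles that a call like rotate(0, 360 * dt, 0) would apply, assuming every frame takes exactly the same time.

```javascript
// Hypothetical helper: sums the per-frame angle (degreesPerSecond * dt)
// over a given duration, the way update(dt) would apply it on every frame.
function simulateRotation(degreesPerSecond, fps, seconds) {
    var dt = 1 / fps;                       // time per frame, in seconds
    var frames = Math.round(fps * seconds); // number of update() calls
    var angle = 0;
    for (var i = 0; i < frames; i++) {
        angle += degreesPerSecond * dt;     // same expression as 360 * dt
    }
    return angle;
}

var at30 = simulateRotation(360, 30, 1);
var at60 = simulateRotation(360, 60, 1);
console.log(at30.toFixed(3), at60.toFixed(3));
```

Both totals come out at about 360 degrees, even though the second run takes twice as many, smaller steps.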

The code also declares a new attribute, someString. This construct lets you define parameters that can later be set through the editor interface. To do this, add the script to the selected entity and click the “Parse” button. If the script declares attributes, a field appears for each one so you can fill in its value. This value overrides the default. PlayCanvas supports many attribute types for scripts; the PlayCanvas documentation on script attributes covers them in more detail.

Scripts can be edited both in the built-in editor and on your local machine in the IDE of your choice. In the second case, however, you will have to spend some time on setup, because you need to run a local server that is paired with PlayCanvas.
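As an illustration of attributes of other types, here is a small sketch of a script with a numeric and a boolean attribute. The spinner script, its attribute names and the registerSpinner wrapper are all hypothetical; the wrapper receives the pc namespace as a parameter only so that the example is explicit about what it depends on.

```javascript
// Sketch only: registerSpinner and the 'spinner' script are hypothetical names.
function registerSpinner(pc) {
    var Spinner = pc.createScript('spinner');

    // Numeric attribute; 90 is used until the value is overridden in the editor.
    Spinner.attributes.add('speed', {
        type: 'number',
        default: 90,
        title: 'Speed (deg/s)'
    });

    // Boolean attribute.
    Spinner.attributes.add('spinning', {
        type: 'boolean',
        default: true,
        title: 'Spinning'
    });

    // Attribute values are read as properties of the script instance.
    Spinner.prototype.update = function (dt) {
        if (this.spinning) {
            this.entity.rotate(0, this.speed * dt, 0);
        }
    };

    return Spinner;
}

// Inside a PlayCanvas project the engine exposes pc as a global.
if (typeof pc !== 'undefined') {
    registerSpinner(pc);
}
```

After parsing, both values can be changed per entity in the editor without touching the code.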

Well, now that we have covered the main features of PlayCanvas, we can talk about how to create virtual reality scenes in PlayCanvas.

VR out of the box

PlayCanvas lets you create a VR scene out of the box. To do this, select the VR Starter Kit option when creating a new project. So, let's see what PlayCanvas offers by default (spoiler: not as much as we would like).

When you run the scene, you will see three cubes in front of you. Looking at one of them (gaze control) starts a progress bar, which makes the cube transparent. There are no controllers and no WASD controls for PC. In essence, this control scheme is only enough for a small Cardboard application, since touch events are supported by default.

The starter kit's code is, frankly, not very well structured, and parts of it are tied directly to the logic of this particular scene. To do anything different, you have to work out how it all fits together and adapt it to your needs. There is no API that lets you plug in individual pieces of functionality.

Now let's go through the starter kit files to find out what each one is responsible for and how you can use it for your own purposes.
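The gaze-and-progress-bar behaviour can be reduced to a small dwell timer. The sketch below is not the starter kit's code: GazeTimer is a hypothetical helper, and both the reset on looking away and the link between progress and opacity are assumptions made for illustration.

```javascript
// Hypothetical helper: tracks how long the user has been looking at one object.
function GazeTimer(dwellSeconds) {
    this.dwellSeconds = dwellSeconds; // time needed to complete the selection
    this.elapsed = 0;
}

// Call once per frame. Returns the progress in the range 0..1.
GazeTimer.prototype.update = function (dt, isGazedAt) {
    if (isGazedAt) {
        this.elapsed = Math.min(this.elapsed + dt, this.dwellSeconds);
    } else {
        this.elapsed = 0; // assumption: looking away resets the progress bar
    }
    return this.elapsed / this.dwellSeconds;
};

// Usage: the progress value can drive both the bar and the cube's opacity.
var timer = new GazeTimer(2);
var progress = timer.update(0.5, true); // half a second of a two-second dwell
var opacity = 1 - progress;             // fully transparent once progress reaches 1
```

In a real script, update would be called from the script's own update(dt) with the result of the gaze raycast.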

- look-camera.js. Contains the logic that pairs the VR display with the camera. In addition, mouse-controller.js or touch-controller.js can pass pitch and yaw to it to control the camera from a PC or mobile phone.

- selector-camera.js. This file holds the logic that implements gaze control. Every element that should be interactive must be registered through the selectorcamera:add event, and its AABB has to be calculated manually. The raycasting logic lives here too: PlayCanvas provides a pc.Ray object (this._ray = new pc.Ray();) that can be tested for intersection with a BoundingBox or BoundingSphere.

- web-vr-ui.js. Simply adds the interface for entering VR. Frankly, it is not very elegant: all the styles and HTML sit directly in this script. Apparently this is because the 2D Screen used for interfaces has its own limitations, and the button has to be strictly in the lower right corner.

- box.js. Contains all the logic associated with the cube: managing the progress bar and so on.
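To make the raycasting part of selector-camera.js less of a black box, here is a plain JavaScript sketch of the classic slab test that decides whether a ray hits an AABB. It is an illustration, not the starter kit's code: vectors are plain [x, y, z] arrays and rayIntersectsAabb is a hypothetical name. In a PlayCanvas project you would rather rely on the engine, where pc.BoundingBox has an intersectsRay method that takes a pc.Ray.

```javascript
// Slab test: does a ray hit an axis-aligned bounding box?
// origin, dir, min and max are plain [x, y, z] arrays (an assumption of this sketch).
function rayIntersectsAabb(origin, dir, min, max) {
    var tNear = 0;        // the ray starts at its origin, so only t >= 0 counts
    var tFar = Infinity;
    for (var axis = 0; axis < 3; axis++) {
        if (dir[axis] === 0) {
            // The ray is parallel to this pair of planes: it must start between them.
            if (origin[axis] < min[axis] || origin[axis] > max[axis]) {
                return false;
            }
            continue;
        }
        var t1 = (min[axis] - origin[axis]) / dir[axis];
        var t2 = (max[axis] - origin[axis]) / dir[axis];
        tNear = Math.max(tNear, Math.min(t1, t2));
        tFar = Math.min(tFar, Math.max(t1, t2));
        if (tNear > tFar) {
            return false; // the slabs do not overlap along the ray
        }
    }
    return true;
}

// Usage: a camera at the origin looking down the negative Z axis.
var lookingAtCube = rayIntersectsAabb(
    [0, 0, 0], [0, 0, -1],
    [-0.5, -0.5, -5.5], [0.5, 0.5, -4.5]
);
```

Because the test needs the box's min and max corners, it also shows why the AABB of every interactive element has to be known up front.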

As you can see, there is not much to build on in the VR starter kit. All you can make with it is a Cardboard application, which in my opinion is not very interesting: Cardboard viewers are more of a toy and do not convey what a proper VR experience is like. You can truly immerse yourself in virtual reality with an Oculus Go, Oculus Rift or HTC Vive.

Now let's talk about how we can add controller support to our application.

VR controllers

It would be nice if PlayCanvas adapted its store so that ready-made elements, together with the logic they need, could be added to an application at the press of a button. Today this is not possible, so let's try a different approach. To avoid writing all the logic for matching the virtual controllers to the position of the real ones, we can use existing solutions. One great example is Web VR Lab. It has a lot of interesting things, but the code is so tangled that it is very hard to follow. There is also a small VR Tracked Controllers scene: just a basic scene with two controllers. That one is exactly what we need, and it is well suited for borrowing elements into your own project.

Open the VR Tracked Controllers scene for editing. First we need to transfer the controller model:

- Select the controller, find the model in the properties panel and click on it to open it as an asset.

- The asset settings contain a Download button. Click it to download the model and textures.

- Unzip the assets and upload them to your application by dragging them into the Assets panel at the bottom. You need to transfer everything: the model in JSON format and all the textures.

- The model appears in the list of assets. Drag it into the scene and name it Left Controller.

Now we need to add the material:

- Create a new material by clicking on the “+” button on the assets panel. Name the material Controller Material.

- Open the source project, find the tracked-controller material there and copy all of its settings into our material, including the normal, emissive, specular and diffuse maps.

Now you can copy the controller using the Duplicate button in the hierarchy panel and name the copy Right Controller.

That's it, the controllers are in our scene. So far, though, they are just two models, and for everything to work we need to transfer the scripts as well. Let's look in more detail at what is needed and how it works:

- vr-gamepad-manager.js essentially contains all the logic your virtual controllers need to receive the position and rotation of the real controller. It also implements the fake elbow logic for 3-DoF headsets such as Oculus Go, Gear VR or Daydream. _updatePadToHandMappings is responsible for finding the connected controllers and mapping them to our controller entities. All the logic for matching the real and virtual controller is in the _poseToWorld function. The data itself is taken from the WebXR API through the controller instance: padPose.position, padPose.poseRotation. The logic that follows handles the nuances of different device types. The script itself must be global (i.e. added to the root of the hierarchy).

- input-vr.js is responsible for registering our controllers and handling buttons. In fact, it simply detects a button press and sends the index of the pressed button. This is not very convenient, because different devices have different buttons and different mappings in the Gamepad API, and there is no guarantee that the button that acts as the trigger on Oculus Go has the same index on the HTC Vive controller. You therefore have to work out the mapping by hand. This script needs to be attached to the controller entity.
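One way to tame the raw button numbers is a small lookup table that turns a device id and a button index into a named action. The sketch below is hypothetical and not part of input-vr.js; in particular, the id fragments and button indices in BUTTON_MAPS are illustrative placeholders that have to be checked on real hardware.

```javascript
// Placeholder table: the id fragments and button indices are illustrative
// assumptions, not verified values. Check them on the actual devices.
var BUTTON_MAPS = [
    { idContains: 'Oculus Go', buttons: { 0: 'touchpad', 1: 'trigger' } },
    { idContains: 'OpenVR', buttons: { 0: 'touchpad', 1: 'trigger', 2: 'grip' } }
];

// Translates a raw button index into an action name for a given gamepad id.
// Returns null for an unknown device or an unmapped button.
function resolveAction(gamepadId, buttonIndex) {
    for (var i = 0; i < BUTTON_MAPS.length; i++) {
        var map = BUTTON_MAPS[i];
        if (gamepadId.indexOf(map.idContains) !== -1) {
            return map.buttons[buttonIndex] || null;
        }
    }
    return null;
}

// Usage: game code reacts to 'trigger' instead of a device-specific number.
var action = resolveAction('Oculus Go Controller', 1);
```

With a table like this, supporting another device means adding one entry rather than touching the game logic.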

If everything is done correctly, you can enter virtual reality and wave your controllers. Not bad, although the process of integrating the necessary functionality is quite inconvenient and tedious.
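For the curious, the idea behind the fake elbow logic for 3-DoF controllers can be sketched in a few lines. Such a controller reports only its rotation, so its position has to be guessed: we assume an elbow at a fixed offset from the head and swing a forearm of fixed length by the controller's rotation. This is a simplified illustration, not the code from vr-gamepad-manager.js: the offsets are made-up values, vectors are plain arrays, and the elbow offset is not rotated with the head.

```javascript
// Rotates vector v = [x, y, z] by the unit quaternion q = [x, y, z, w].
function rotateByQuat(v, q) {
    // t = 2 * cross(q.xyz, v)
    var tx = 2 * (q[1] * v[2] - q[2] * v[1]);
    var ty = 2 * (q[2] * v[0] - q[0] * v[2]);
    var tz = 2 * (q[0] * v[1] - q[1] * v[0]);
    // v' = v + w * t + cross(q.xyz, t)
    return [
        v[0] + q[3] * tx + (q[1] * tz - q[2] * ty),
        v[1] + q[3] * ty + (q[2] * tx - q[0] * tz),
        v[2] + q[3] * tz + (q[0] * ty - q[1] * tx)
    ];
}

// Assumed offsets in metres for a right hand (hypothetical values).
var ELBOW_FROM_HEAD = [0.2, -0.5, 0.0];
var HAND_FROM_ELBOW = [0.0, 0.0, -0.3];

// Estimates the hand position from the head position and controller rotation.
function estimateHandPosition(headPos, controllerQuat) {
    var forearm = rotateByQuat(HAND_FROM_ELBOW, controllerQuat);
    return [
        headPos[0] + ELBOW_FROM_HEAD[0] + forearm[0],
        headPos[1] + ELBOW_FROM_HEAD[1] + forearm[1],
        headPos[2] + ELBOW_FROM_HEAD[2] + forearm[2]
    ];
}

// Usage: head at standing height, controller pointing straight ahead.
var hand = estimateHandPosition([0, 1.6, 0], [0, 0, 0, 1]);
```

Turning the controller then moves the virtual hand on an arc around the assumed elbow, which is what makes a rotation-only controller feel as if it were tracked in space.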

Summary

PlayCanvas is an excellent engine for creating WebGL games and applications. I must admit, however, that it is poorly adapted for WebVR. It seems the goal was to demonstrate what PlayCanvas can do in order to attract public interest, but this direction apparently never got developed further. You can therefore make a VR game or application, but you will have to copy a lot and make sense of complicated code that was written only for demonstration purposes (Web VR Lab).

In the next article, I would like to give a short tutorial on creating teleport controls, so that we have at least a minimal toolkit for starting a Web VR game or application. Thank you all for your attention!