We write an operating system on Rust. Paging memory organization

- Transfer

In this article we present the page , a very common memory management scheme, which we also apply in our OS. The article explains why memory isolation is necessary, how segmentation works , what virtual memory is and how pages solve the problem of fragmentation. We also explore the layout of multi-level page tables in the x86_64 architecture.

This blog is posted on github . If you have any questions or problems, open the corresponding request there.

One of the main tasks of the operating system is to isolate programs from each other. For example, the browser should not interfere with the text editor. There are different approaches depending on the hardware and the implementation of the OS.

For example, some ARM Cortex-M processors (on embedded systems) have a memory protection unit (MPU) that defines a small number (for example, 8) of memory areas with different access permissions (for example, no access, read only, read records). With each memory access, the MPU ensures that the address is in the area with the correct permissions, otherwise it throws an exception. By changing areas and access permissions, the OS ensures that each process has access only to its memory in order to isolate processes from each other.

On x86, two different approaches to memory protection are supported: segmentation and page organization .

Segmentation was implemented in 1978, initially to increase the amount of addressable memory. At that time, CPUs supported only 16-bit addresses, which limited the amount of addressable memory to 64 KB. To increase this volume, we introduced additional segment registers, each of which contains an offset address. The CPU automatically adds this offset at every memory access, thus addressing up to 1 MB of memory.

The CPU automatically selects the segment register depending on the type of memory access: the code segment register is used for instructions

In the first version of segmentation, the registers directly contained offset and access control was not performed. With the advent of protected mode, the mechanism has changed. When the CPU is operating in this mode, segment descriptors store the index in a local or global descriptor table, which in addition to the offset address contains the segment size and access permissions. By loading separate global / local descriptor tables for each process, the OS can isolate processes from each other.

By changing the memory addresses before the actual access, the segmentation implemented a method that is now used almost everywhere: it is virtual memory .

The idea of virtual memory is to abstract the memory addresses from a physical device. Instead of directly accessing the storage device, the conversion step is first performed. In the case of segmentation, the offset address of the active segment is added at the translation stage. Imagine a program that accesses a memory address

To distinguish between two types of addresses, addresses before conversion are called virtual , and addresses after conversion are called physical.. There is one important difference between them: the physical addresses are unique and always refer to the same unique memory location. On the other hand, virtual addresses depend on the conversion function. Two different virtual addresses may well refer to the same physical address. In addition, identical virtual addresses can refer to different physical addresses after conversion.

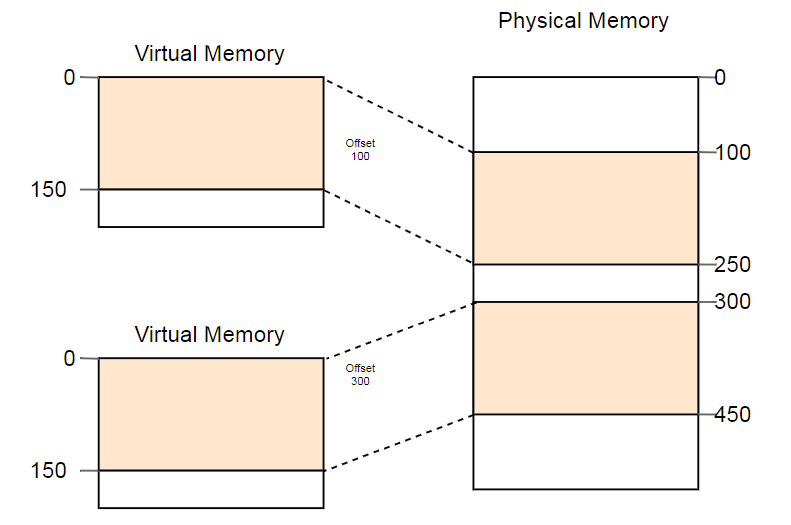

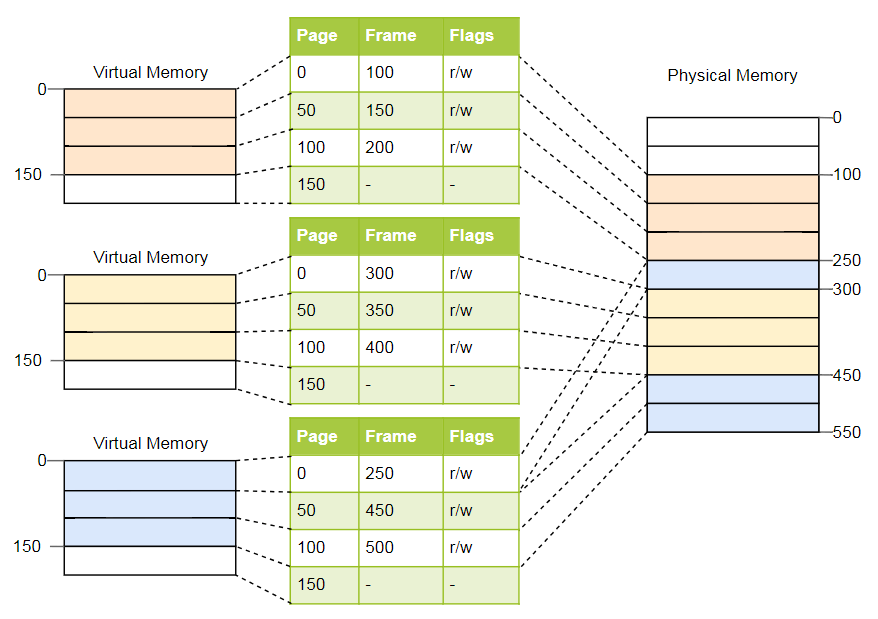

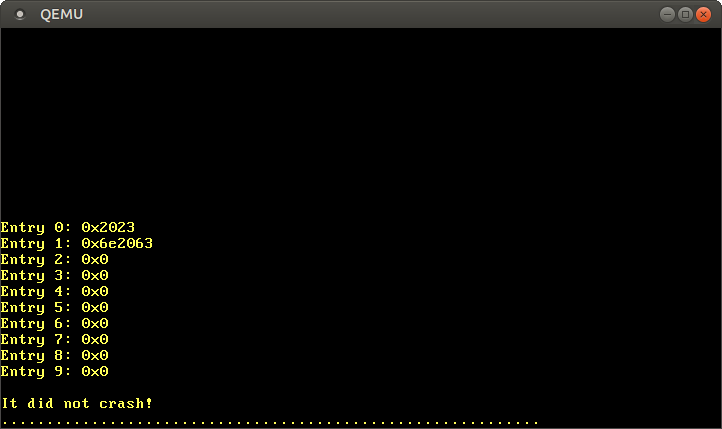

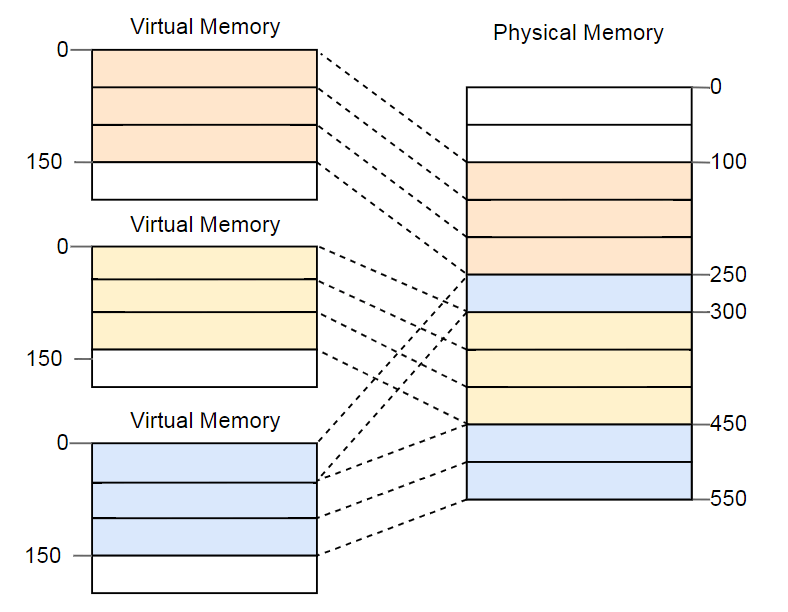

As an example of the useful use of this property we can cite the parallel launch of the same program twice:

Here the same program is launched twice, but with different conversion functions. In the first instance, the segment offset is 100, so its virtual addresses 0-150 are converted to physical addresses 100-250. The second instance has an offset of 300, which converts virtual addresses 0-150 into physical addresses 300-450. This allows both programs to execute the same code and use the same virtual addresses without interfering with each other.

Another advantage is that now programs can be placed in arbitrary locations of physical memory. Thus, the OS uses the entire amount of available memory without the need to recompile programs.

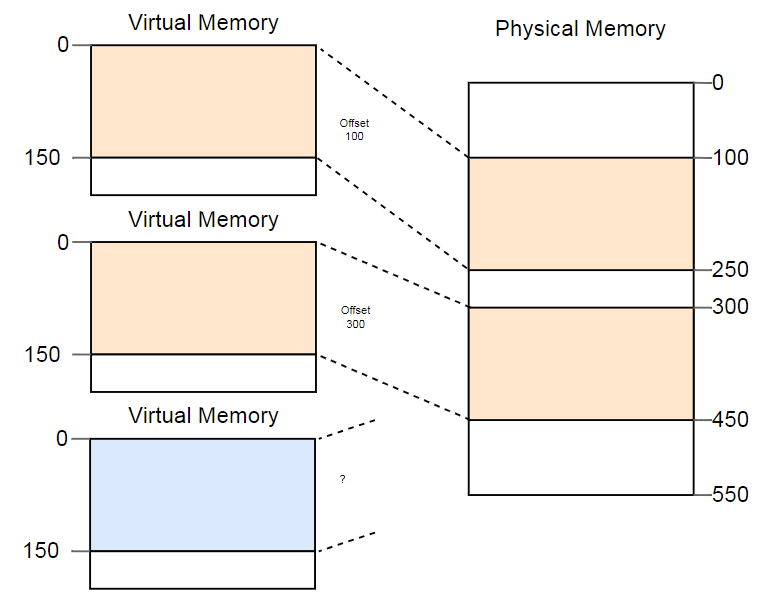

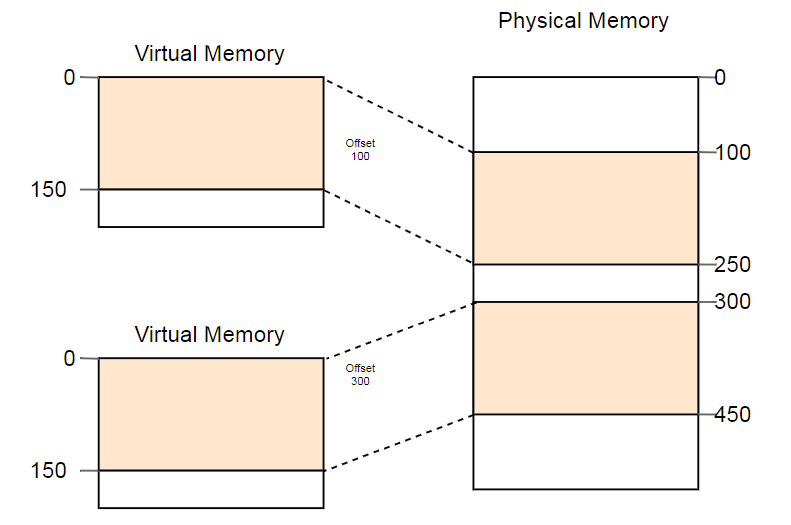

The distinction between virtual and physical addresses is a real segmentation achievement. But there is a problem. Imagine that we want to run a third copy of the program that we saw above:

Although there is more than enough space in the physical memory, the third copy does not fit anywhere. The problem is that he needs a continuous piece of memory and we can not use separate free areas.

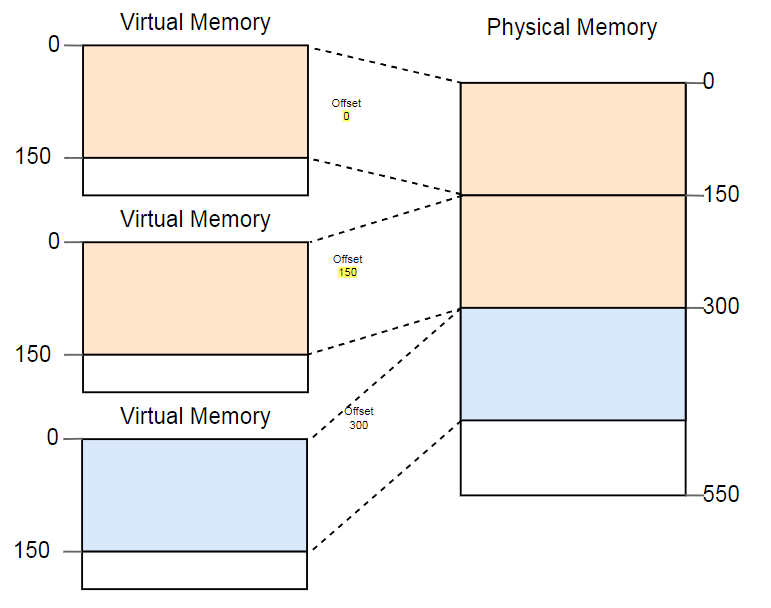

One way to deal with fragmentation is to pause the execution of programs, move the used parts of memory closer to each other, update the transformation, and then resume execution:

Now there is enough space to run the third instance.

The disadvantage of such defragmentation is the need to copy large amounts of memory, which reduces performance. This procedure has to be performed regularly until the memory becomes too fragmented. Performance becomes unpredictable, programs stop at an arbitrary time and may stop responding.

Fragmentation is one of the reasons why segmentation is not used in most systems. In fact, it is no longer supported even in 64-bit mode on x86. Instead of segmentation, pages that completely eliminate the problem of fragmentation are used.

The idea is to divide the space of virtual and physical memory into small blocks of fixed size. Blocks of virtual memory are called pages, and blocks of physical address space are called frames. Each page is individually mapped to a frame, which makes it possible to divide large areas of memory between non-adjacent physical frames.

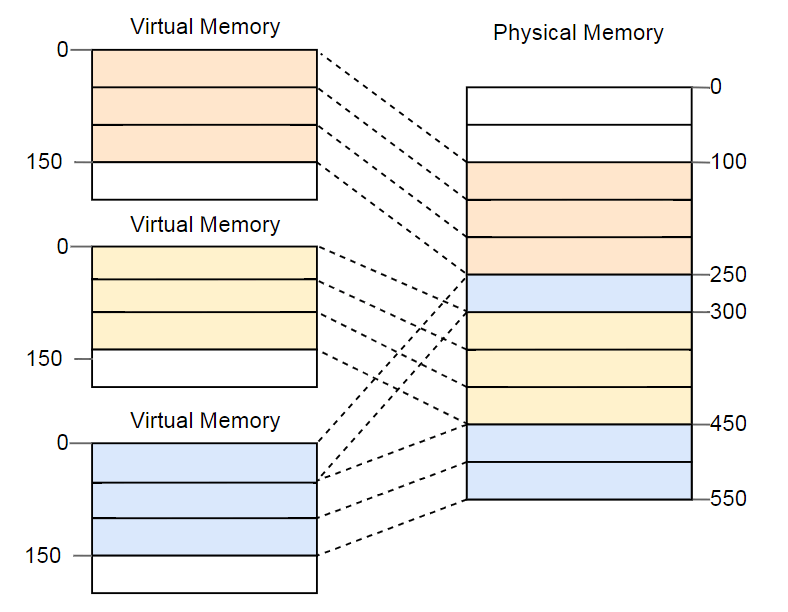

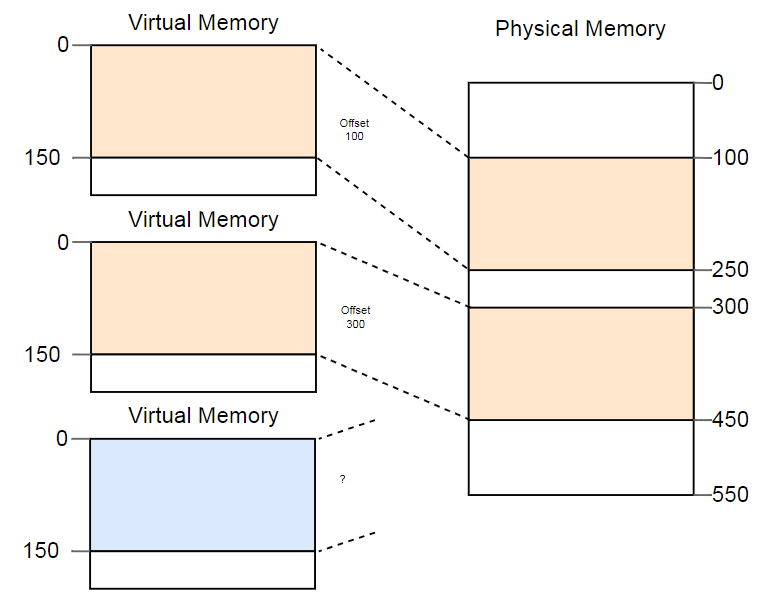

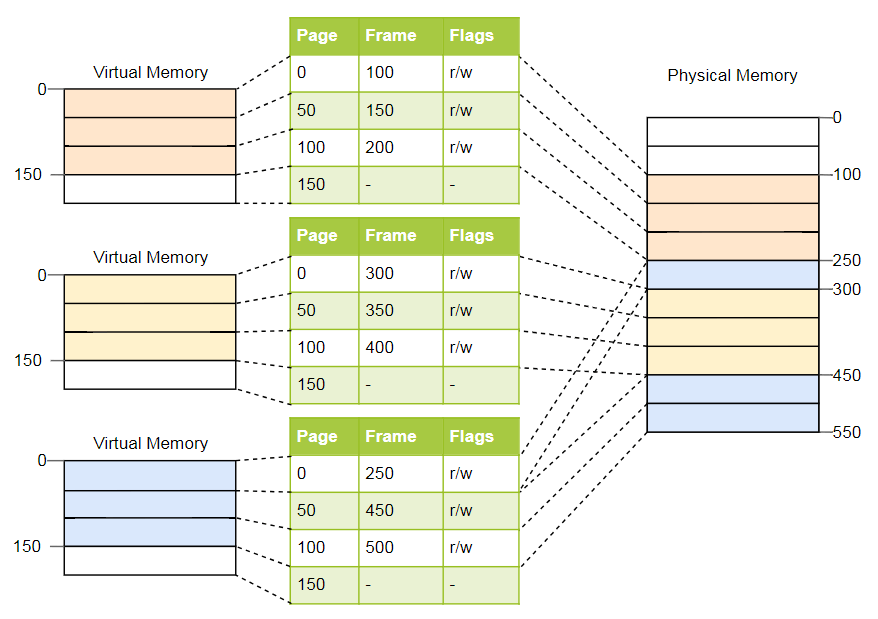

The advantage becomes obvious if we repeat the example with fragmented memory space, but this time using pages instead of segmentation:

In this example, the page size is 50 bytes, that is, each of the memory areas is divided into three pages. Each page is mapped to a separate frame, so a continuous area of virtual memory can be mapped to isolated physical frames. This allows you to run a third copy of the program without defragmentation.

Compared to segmentation, the paging organization uses many small fixed-size memory areas instead of several large variable-size areas. Each frame has the same size, so that fragmentation due to too small frames is impossible.

But this is only an appearance . In fact, there is a hidden kind of fragmentation, the so-called internal fragmentation due to the fact that not every area of memory is exactly a multiple of the page size. Imagine in the above example a program of size 101: it will still need three pages of size 50, so it will take 49 bytes more than necessary. For clarity, fragmentation due to segmentation is called outer fragmentation .

Internal fragmentation is no good, but often it is a lesser evil than external fragmentation. Extra memory is still consumed, but now there is no need to defragment, and the amount of fragmentation is predictable (on average, half a page per each memory area).

We saw that each of the millions of possible pages is individually matched to a frame. This address translation information needs to be stored somewhere. Segmentation uses separate segment registers for each active memory area, which is impossible in the case of pages, because there are many more than registers. Instead, it uses a structure called a page table .

For the above example, the tables will look like this:

As you can see, each copy of the program has its own page table. A pointer to the current active table is stored in a special register CPU. On

With each memory access, the CPU reads the table pointer from the register and searches for the corresponding frame in the table. This is a fully hardware function that runs completely transparent to the running program. To speed up the process, many processor architectures have a special cache that remembers the results of the latest transformations.

Depending on the architecture, attributes such as access rights can be stored in the flags field of the page table. In the example above, the flag

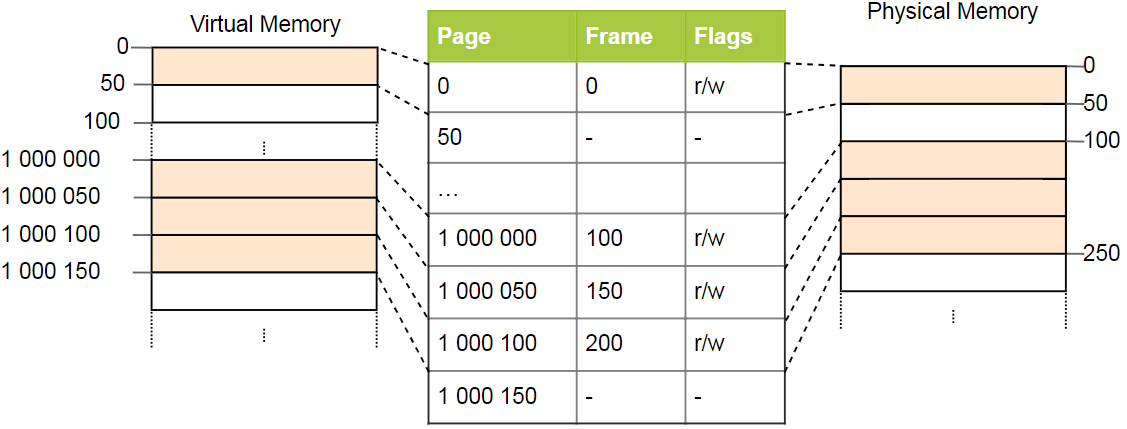

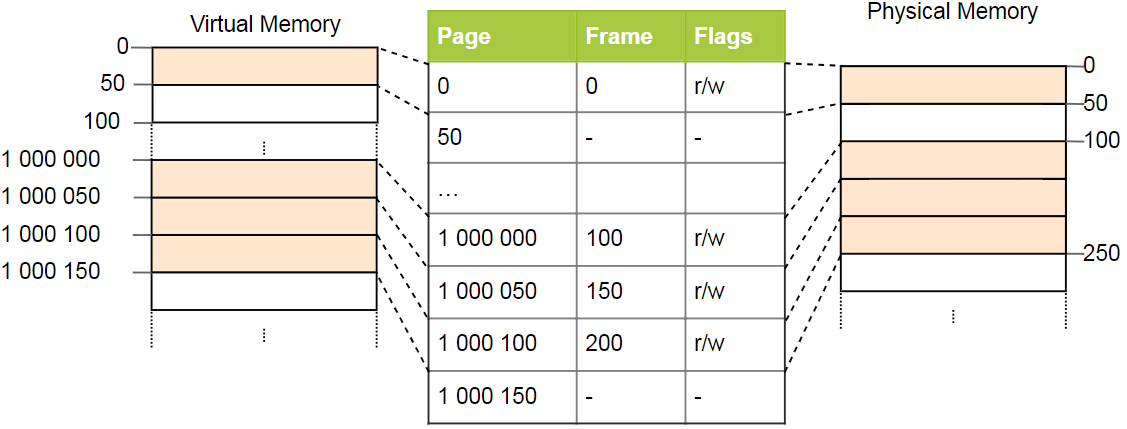

Simple page tables have a problem with large address spaces: memory is wasted. For example, the program uses four virtual pages

It takes only four physical frame, but in the pages of the table more than a million records. We cannot skip empty records, because then the CPU in the conversion process will not be able to go directly to the correct record (for example, it is no longer guaranteed that the fourth page uses the fourth record).

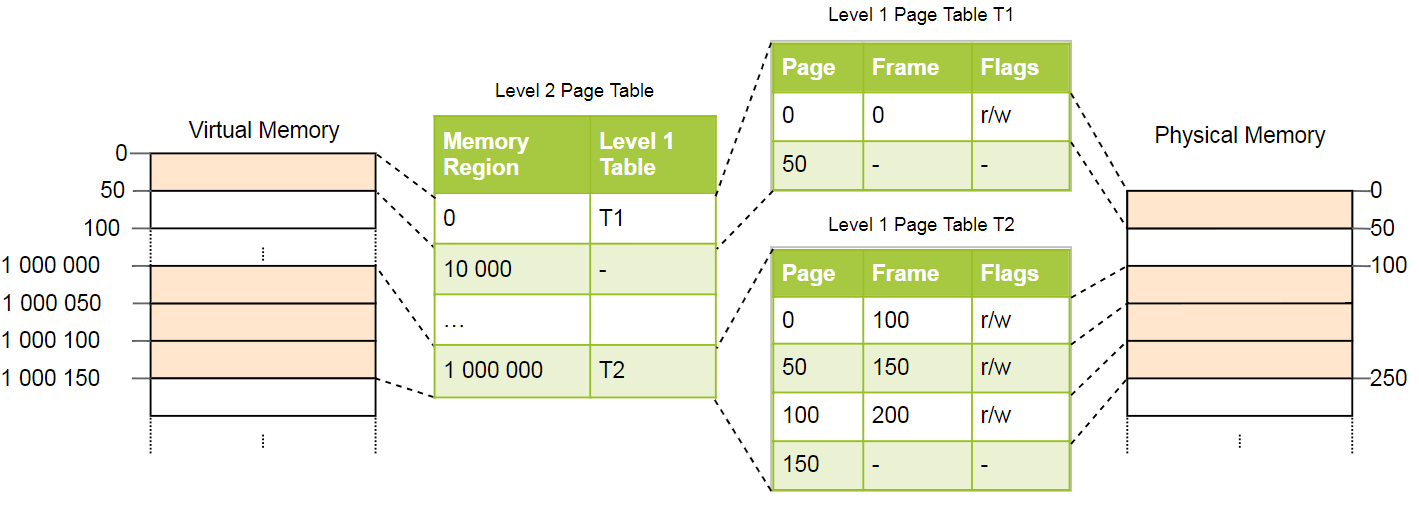

To reduce memory loss, you can use a two-tier organization . The idea is that we use different tables for different areas. Additional table called page tablesecond level , performs conversion between address areas and first level page tables.

This is best explained by example. We define that each page table of level 1 is responsible for the area size

Page 0 falls into the first

Pages

We still have 100 empty entries in the second level table, but this is much less than the previous million. The reason for the savings is that you do not need to create tables of the first level pages for unmatched memory areas between

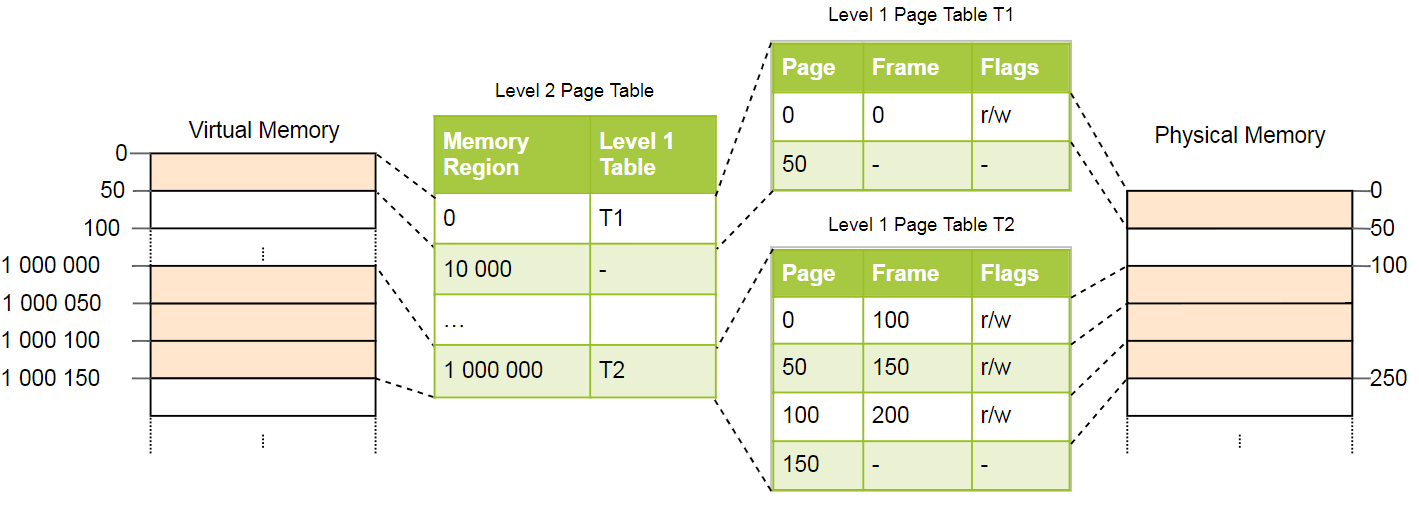

The principle of two-level tables can be extended to three, four or more levels. In general, such a system is called multi-level or hierarchicalpage table.

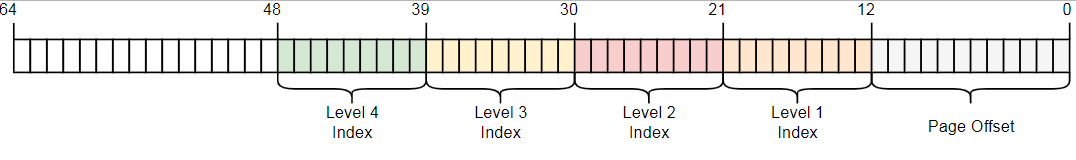

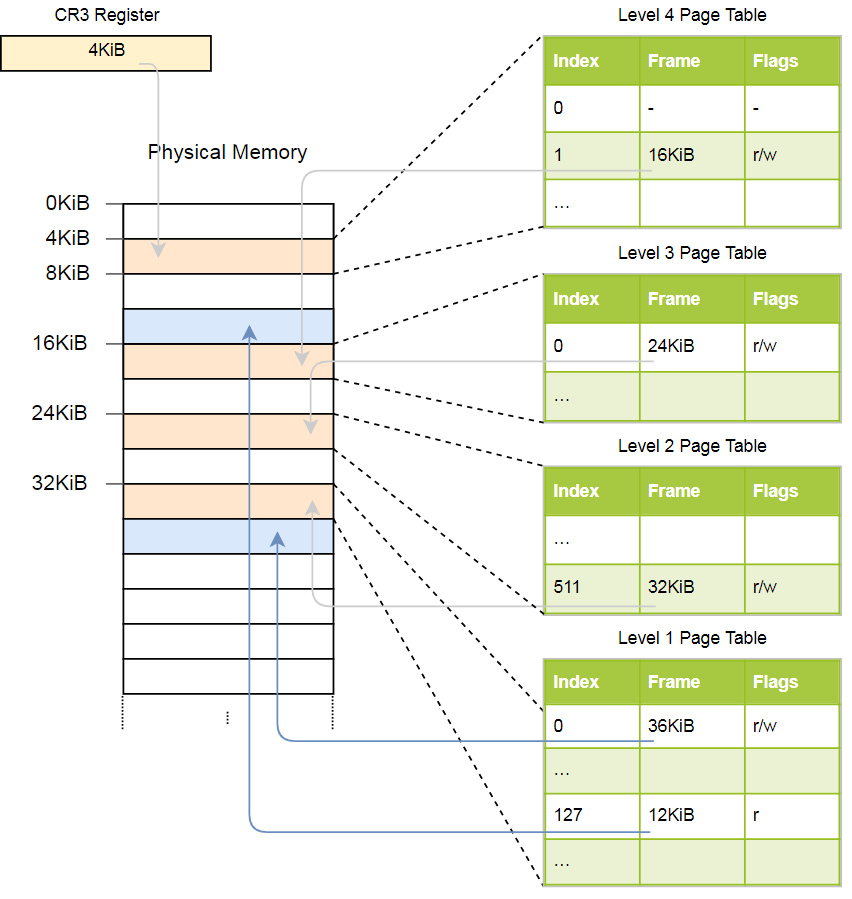

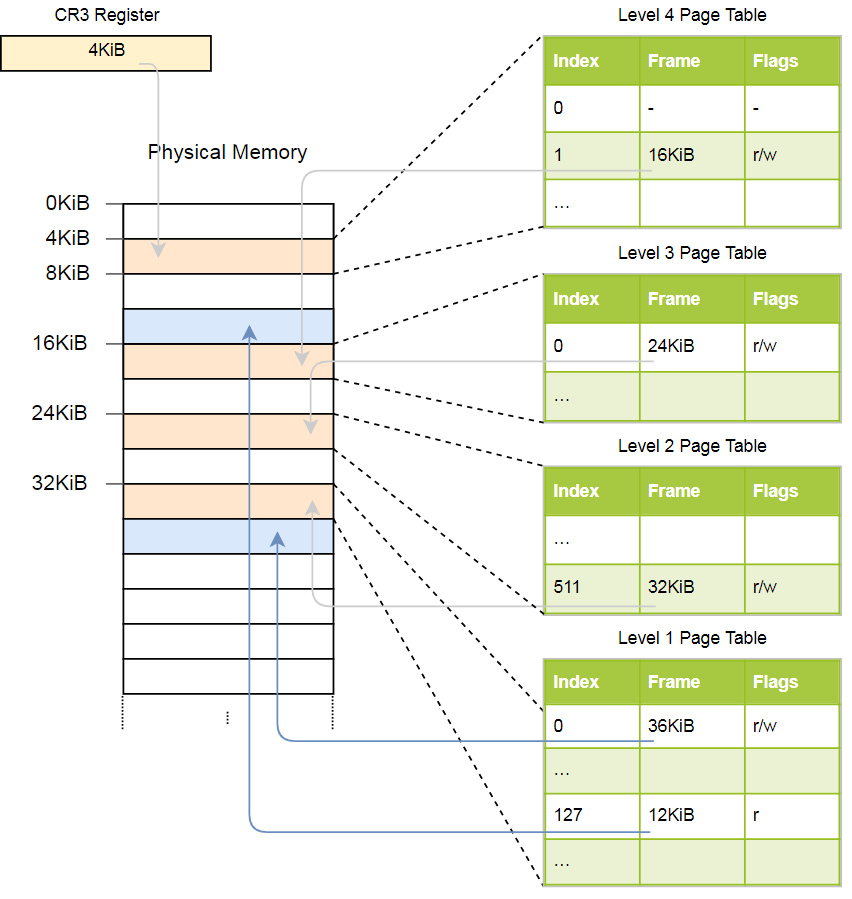

Knowing about page organization and multi-level tables, you can see how page organization is implemented in the x86_64 architecture (we assume that the processor is running in 64-bit mode).

The x86_64 architecture uses a four-level table with a 4 KB page size. Regardless of the level, each page table has 512 elements. Each record has a size of 8 bytes, so the size of the tables is 512 × 8 bytes = 4 KB.

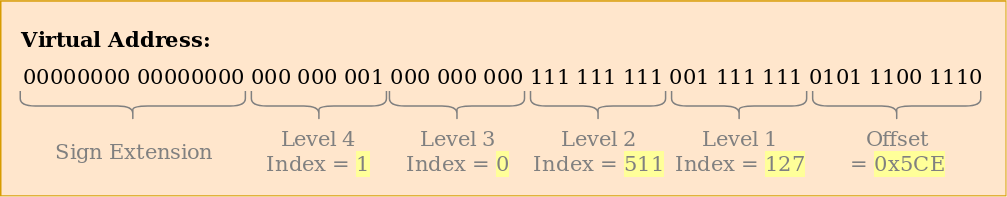

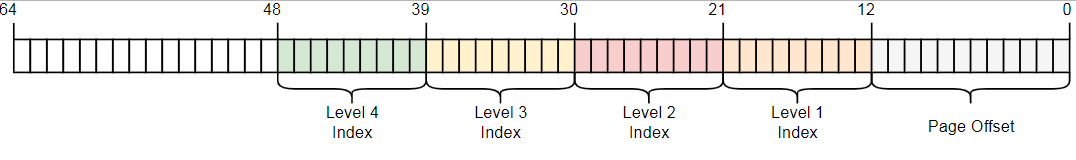

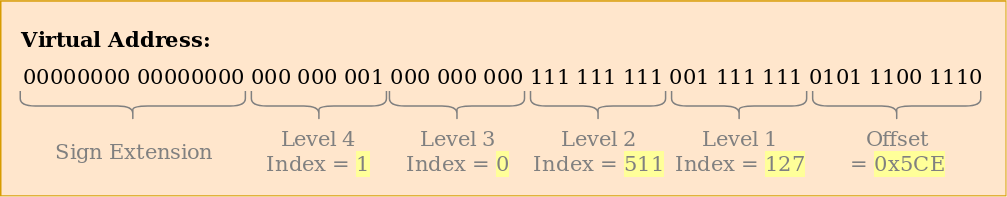

As you can see, each table index contains 9 bits, which makes sense, because in the tables 2 ^ 9 = 512 records. The lower 12 bits is the offset to the 4-kilobyte page (2 ^ 12 bytes = 4 KB). Bits 48 to 64 are discarded, so x86_64 is not really a 64-bit system, but only supports 48-bit addresses. There are plans to expand the size of the address to 57 bits through a 5-level page table , but such a processor has not yet been created.

Although bits 48 through 64 are discarded, they cannot be set to arbitrary values. All bits in this range must be copies of bit 47 in order to maintain unique addresses and allow future expansion, for example, to a 5-level page table. This is called a sign extension (sign-extension), because it is very similar to a sign extension in an additional code . If the address is incorrectly expanded, the CPU throws an exception.

Let us consider an example of how the address translation works: The

physical address of the current active page table of level 4, which is the root page table of this level, is stored in a register

The above hierarchy converts two pages (in blue). From the indices we can conclude that the virtual addresses of these pages

With these indexes, we can now go through the hierarchy of the page tables and find the appropriate frame:

For a page in the first-level table, a flag is indicated

Although this example uses only one instance of each table, usually there are several instances of each level in each address space. Maximum:

In the x86_64 architecture, page tables are essentially arrays of 512 entries. In Rust syntax:

As indicated in the attribute

The size of each entry is 8 bytes (64 bits) and the following format:

We see that only bits 12-51 are used to store the physical address of the frame, while the rest work as flags or can be used freely by the operating system. This is possible because we always point either to the address aligned to 4096 bytes, or to the aligned page of the tables, or to the beginning of the corresponding frame. This means that bits 0-11 are always zero, so they can not be stored, they are simply reset at the hardware level before using the address. The same applies to bits 52-63, since the x86_64 architecture only supports 52-bit physical addresses (and only 48-bit virtual addresses).

In more detail we will consider available flags:

The x86_64 architecture defines the format of the page tables and their entries , so we don’t have to create these structures ourselves.

Because of the four levels, for each address translation, four memory accesses are required. For the sake of performance, x86_64 caches the last few translations in a so-called associative translation buffer (TLB). This allows you to skip the conversion if it is still in the cache.

Unlike other processor caches, TLB is not completely transparent, does not update or remove conversions when the content of the page tables changes. This means that the kernel must update the TLB on its own whenever it changes the page table. To do this, there is a special CPU instruction called

It is important to remember to clean the TLB after each change of the page table, otherwise the CPU will continue to use the old translation, which will lead to unpredictable errors that are very difficult to debug.

We did not mention one thing: our core already supports paging . The loader from the article “Minimal core on Rust” has already established a four-level hierarchy that maps every page of our core with a physical frame, because page organization is mandatory in 64-bit mode on x86_64.

This means that in our kernel all memory addresses are virtual. Access to the VGA buffer at the address

Thanks to paging, the kernel is already relatively safe: every access beyond the permissible memory causes a page error, and does not allow writing to physical memory. The loader even set the correct access permissions for each page: only the pages with the code will be executable, and only the pages with the data will be available for writing

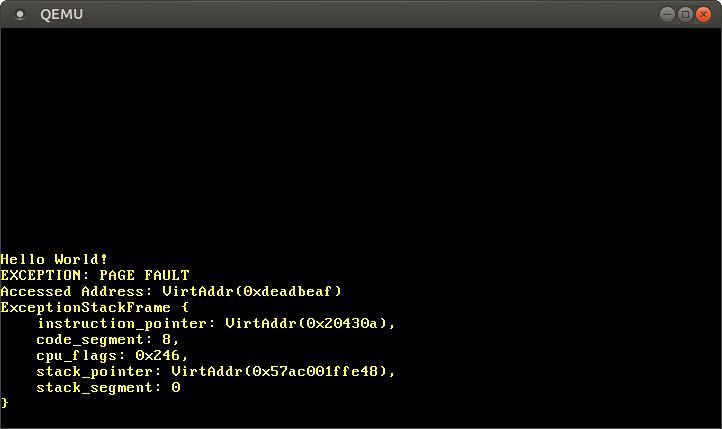

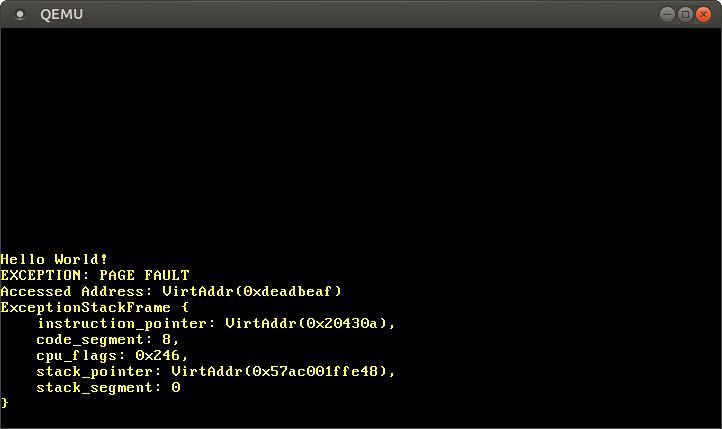

Let's try to call PageFault by accessing memory outside the kernel. First, we create an error handler and register it with our IDT in order to see a specific exception instead of a generic double error :

If the page fails, the CPU automatically sets the register

Now we get access to memory outside the kernel:

After launch, we see that the page error handler is being invoked: The

register

The current instruction pointer is

If we comment out the last line, we can make sure that the read is working, and the write causes a PageFault error.

Now take a look at the page tables for the kernel:

The function

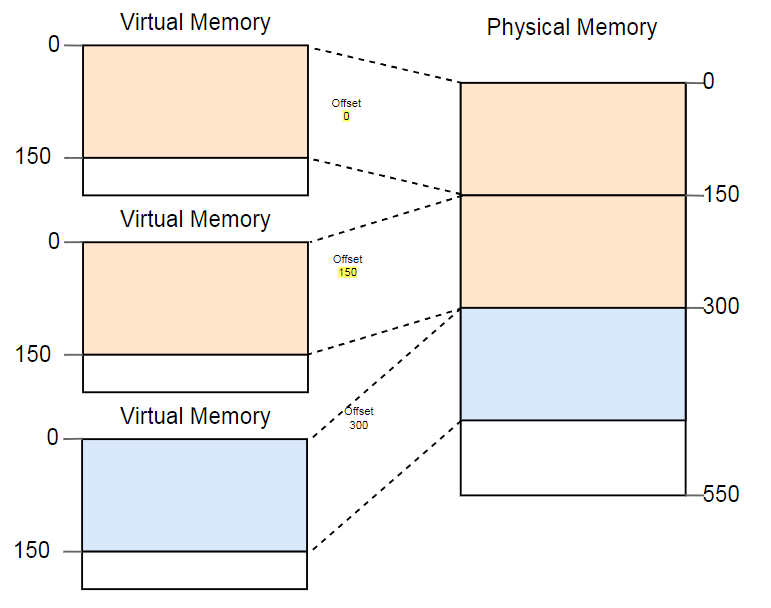

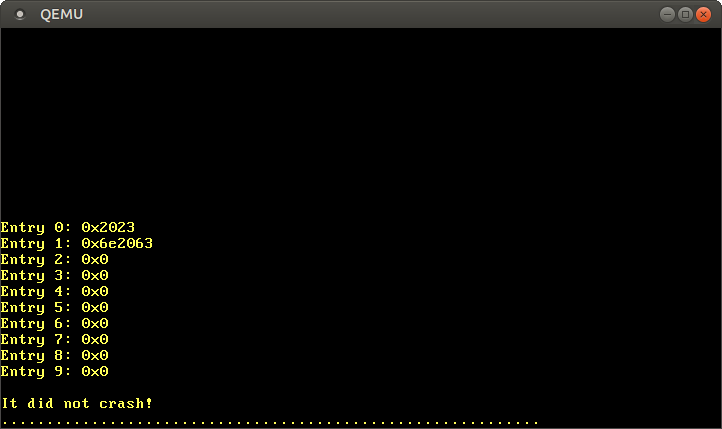

After the launch, we see the following result:

Thus, the currently active fourth-level page table is stored in physical memory at the address

With paging, direct access to physical memory is not possible, otherwise programs can easily bypass protection and gain access to the memory of other programs. Thus, the only way to access is through a virtual page that is translated into a physical frame at

We will describe the solutions to this problem in detail in the next article. For now, just say that the loader uses a method called recursive page tables . The last page of the virtual address space is

We have reduced the address of the last virtual page to a pointer to

After starting see the following results:

According to the format described above, the value

Instead of working with unsafe pointers, you can use the type

Here we assign a pointer to the pointer

The next step - to follow the signs to write 0 or write 1 to the page table level 3. But now we have again the problem arises that

The article presents two methods of memory protection: segmentation and page organization. The first method uses variable-size memory areas and suffers from external fragmentation, the second uses fixed-size pages and allows much more detailed control over access rights.

The page organization stores information about the pages broadcast in tables of one or several levels. The x86_64 architecture uses four-level tables with a page size of 4 KB. The equipment automatically bypasses the page tables and caches the conversion results in an associative translation buffer (TLB). When changing the page tables, it should be forcibly cleared.

We learned that our core already supports paging, and with unauthorized memory access, PageFault falls out. We tried to access the current active page tables, but managed to access only the fourth-level table, since the page tables store the physical addresses, and we cannot access them directly from the kernel.

The following article is based on the fundamentals that we have now learned. To access the page tables from the kernel, an advanced technique called recursive page tables is used to cross the table hierarchy and implement software translation of addresses. The article also explains how to create new broadcasts in the page tables.

This blog is posted on github . If you have any questions or problems, open the corresponding request there.

Memory protection

One of the main tasks of the operating system is to isolate programs from each other. For example, the browser should not interfere with the text editor. There are different approaches depending on the hardware and the implementation of the OS.

For example, some ARM Cortex-M processors (on embedded systems) have a memory protection unit (MPU) that defines a small number (for example, 8) of memory areas with different access permissions (for example, no access, read only, read records). With each memory access, the MPU ensures that the address is in the area with the correct permissions, otherwise it throws an exception. By changing areas and access permissions, the OS ensures that each process has access only to its memory in order to isolate processes from each other.

On x86, two different approaches to memory protection are supported: segmentation and page organization .

Segmentation

Segmentation was implemented in 1978, initially to increase the amount of addressable memory. At that time, CPUs supported only 16-bit addresses, which limited the amount of addressable memory to 64 KB. To increase this volume, we introduced additional segment registers, each of which contains an offset address. The CPU automatically adds this offset at every memory access, thus addressing up to 1 MB of memory.

The CPU automatically selects the segment register depending on the type of memory access: the code segment register is used for instructions

CS, and the stack segment register for push operations (push / pop) SS. Other instructions use a data segment DSregister or an additional segment register.ES. Later, two additional segment registers were added for free use FSand GS. In the first version of segmentation, the registers directly contained offset and access control was not performed. With the advent of protected mode, the mechanism has changed. When the CPU is operating in this mode, segment descriptors store the index in a local or global descriptor table, which in addition to the offset address contains the segment size and access permissions. By loading separate global / local descriptor tables for each process, the OS can isolate processes from each other.

By changing the memory addresses before the actual access, the segmentation implemented a method that is now used almost everywhere: it is virtual memory .

Virtual memory

The idea of virtual memory is to abstract the memory addresses from a physical device. Instead of directly accessing the storage device, the conversion step is first performed. In the case of segmentation, the offset address of the active segment is added at the translation stage. Imagine a program that accesses a memory address

0x1234000in a segment with an offset 0x1111000: in fact, the address goes to the address 0x2345000. To distinguish between two types of addresses, addresses before conversion are called virtual , and addresses after conversion are called physical.. There is one important difference between them: the physical addresses are unique and always refer to the same unique memory location. On the other hand, virtual addresses depend on the conversion function. Two different virtual addresses may well refer to the same physical address. In addition, identical virtual addresses can refer to different physical addresses after conversion.

As an example of the useful use of this property we can cite the parallel launch of the same program twice:

Here the same program is launched twice, but with different conversion functions. In the first instance, the segment offset is 100, so its virtual addresses 0-150 are converted to physical addresses 100-250. The second instance has an offset of 300, which converts virtual addresses 0-150 into physical addresses 300-450. This allows both programs to execute the same code and use the same virtual addresses without interfering with each other.

Another advantage is that now programs can be placed in arbitrary locations of physical memory. Thus, the OS uses the entire amount of available memory without the need to recompile programs.

Fragmentation

The distinction between virtual and physical addresses is a real segmentation achievement. But there is a problem. Imagine that we want to run a third copy of the program that we saw above:

Although there is more than enough space in the physical memory, the third copy does not fit anywhere. The problem is that he needs a continuous piece of memory and we can not use separate free areas.

One way to deal with fragmentation is to pause the execution of programs, move the used parts of memory closer to each other, update the transformation, and then resume execution:

Now there is enough space to run the third instance.

The disadvantage of such defragmentation is the need to copy large amounts of memory, which reduces performance. This procedure has to be performed regularly until the memory becomes too fragmented. Performance becomes unpredictable, programs stop at an arbitrary time and may stop responding.

Fragmentation is one of the reasons why segmentation is not used in most systems. In fact, it is no longer supported even in 64-bit mode on x86. Instead of segmentation, pages that completely eliminate the problem of fragmentation are used.

Paging memory organization

The idea is to divide the space of virtual and physical memory into small blocks of fixed size. Blocks of virtual memory are called pages, and blocks of physical address space are called frames. Each page is individually mapped to a frame, which makes it possible to divide large areas of memory between non-adjacent physical frames.

The advantage becomes obvious if we repeat the example with fragmented memory space, but this time using pages instead of segmentation:

In this example, the page size is 50 bytes, that is, each of the memory areas is divided into three pages. Each page is mapped to a separate frame, so a continuous area of virtual memory can be mapped to isolated physical frames. This allows you to run a third copy of the program without defragmentation.

Hidden fragmentation

Compared to segmentation, the paging organization uses many small fixed-size memory areas instead of several large variable-size areas. Each frame has the same size, so that fragmentation due to too small frames is impossible.

But this is only an appearance . In fact, there is a hidden kind of fragmentation, the so-called internal fragmentation due to the fact that not every area of memory is exactly a multiple of the page size. Imagine in the above example a program of size 101: it will still need three pages of size 50, so it will take 49 bytes more than necessary. For clarity, fragmentation due to segmentation is called outer fragmentation .

Internal fragmentation is no good, but often it is a lesser evil than external fragmentation. Extra memory is still consumed, but now there is no need to defragment, and the amount of fragmentation is predictable (on average, half a page per each memory area).

Page tables

We saw that each of the millions of possible pages is individually matched to a frame. This address translation information needs to be stored somewhere. Segmentation uses separate segment registers for each active memory area, which is impossible in the case of pages, because there are many more than registers. Instead, it uses a structure called a page table .

For the above example, the tables will look like this:

As you can see, each copy of the program has its own page table. A pointer to the current active table is stored in a special register CPU. On

x86it is called CR3. Before launching each instance of the program, the operating system must load a pointer to the correct page table there.With each memory access, the CPU reads the table pointer from the register and searches for the corresponding frame in the table. This is a fully hardware function that runs completely transparent to the running program. To speed up the process, many processor architectures have a special cache that remembers the results of the latest transformations.

Depending on the architecture, attributes such as access rights can be stored in the flags field of the page table. In the example above, the flag

r/wmakes the page readable and writable.Multi-level page tables

Simple page tables have a problem with large address spaces: memory is wasted. For example, the program uses four virtual pages

0, 1_000_000, 1_000_050and 1_000_100(use _as the thousands separator):

It takes only four physical frame, but in the pages of the table more than a million records. We cannot skip empty records, because then the CPU in the conversion process will not be able to go directly to the correct record (for example, it is no longer guaranteed that the fourth page uses the fourth record).

To reduce memory loss, you can use a two-tier organization . The idea is that we use different tables for different areas. Additional table called page tablesecond level , performs conversion between address areas and first level page tables.

This is best explained by example. We define that each page table of level 1 is responsible for the area size

10_000. Then in the example above, the following tables will exist:

Page 0 falls into the first

10_000byte area , so it uses the first entry of the second-level page table. This entry points to the T1 first-level page table, which indicates that page 0 refers to frame 0. Pages

1_000_000, 1_000_050and 1_000_100fall into the 100th byte region10_000, therefore, they use the 100th entry of the level 2 page table. This entry points to another first level table T2, which translates three pages into frames 100, 150 and 200. Note that the page address in the first level tables does not contain region offsets, therefore for example, a record for a page 1_000_050is total 50. We still have 100 empty entries in the second level table, but this is much less than the previous million. The reason for the savings is that you do not need to create tables of the first level pages for unmatched memory areas between

10_000and 1_000_000. The principle of two-level tables can be extended to three, four or more levels. In general, such a system is called multi-level or hierarchicalpage table.

Knowing about page organization and multi-level tables, you can see how page organization is implemented in the x86_64 architecture (we assume that the processor is running in 64-bit mode).

Paging on x86_64

The x86_64 architecture uses a four-level table with a 4 KB page size. Regardless of the level, each page table has 512 elements. Each record has a size of 8 bytes, so the size of the tables is 512 × 8 bytes = 4 KB.

As you can see, each table index contains 9 bits, which makes sense, because in the tables 2 ^ 9 = 512 records. The lower 12 bits is the offset to the 4-kilobyte page (2 ^ 12 bytes = 4 KB). Bits 48 to 64 are discarded, so x86_64 is not really a 64-bit system, but only supports 48-bit addresses. There are plans to expand the size of the address to 57 bits through a 5-level page table , but such a processor has not yet been created.

Although bits 48 through 64 are discarded, they cannot be set to arbitrary values. All bits in this range must be copies of bit 47 in order to maintain unique addresses and allow future expansion, for example, to a 5-level page table. This is called a sign extension (sign-extension), because it is very similar to a sign extension in an additional code . If the address is incorrectly expanded, the CPU throws an exception.

Conversion example

Let us consider an example of how the address translation works: The

physical address of the current active page table of level 4, which is the root page table of this level, is stored in a register

CR3. Each page table entry then points to the physical frame of the next level table. A level 1 table entry indicates a displayed frame. Note that all the addresses in the page tables are physical, not virtual, since otherwise the CPU will need to convert these addresses (which can lead to endless recursion). The above hierarchy converts two pages (in blue). From the indices we can conclude that the virtual addresses of these pages

0x803fe7f000and 0x803FE00000. Let's see what happens when a program tries to read the memory at0x803FE7F5CE. First, we translate the address into binary and determine the indexes of the page table and the offset for the address:

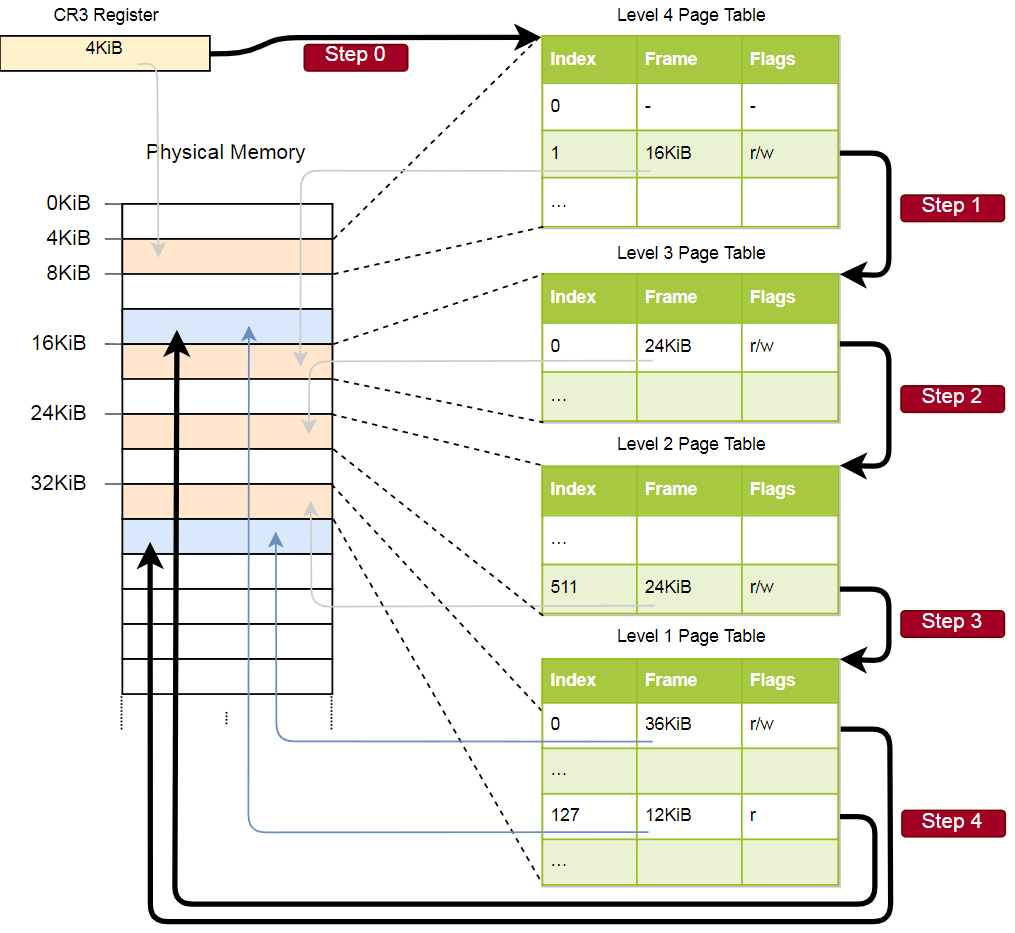

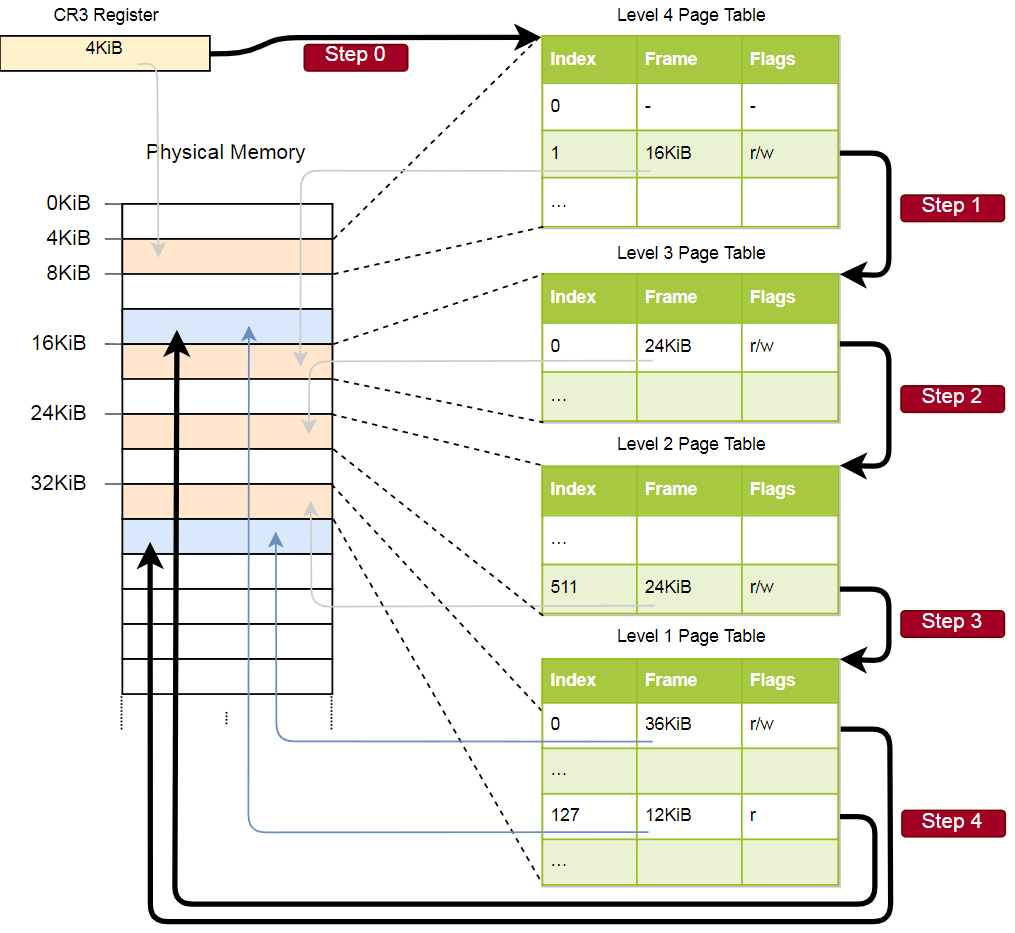

With these indexes, we can now go through the hierarchy of the page tables and find the appropriate frame:

- We read the address of the table of the fourth level of the register

CR3. - The fourth-level index is 1, so we are looking at the record with index 1 in this table. She says that the level 3 table is stored at 16 KB.

- We load a third-level table from this address and look at the entry with the index 0, which indicates the second-level table at 24 KB.

- The second level index is 511, so we are looking for the last record on this page to find out the address of the first level table.

- By recording with index 127 in the first level table, we finally find out that the page corresponds to a frame of 12 KB or 0xc000 in hexadecimal format.

- The final step is to add an offset to the frame address to get the physical address: 0xc000 + 0x5ce = 0xc5ce.

For a page in the first-level table, a flag is indicated

r, that is, only reading is allowed. At the hardware level, an exception will be thrown if we try to write there. Higher-level table permissions spread to lower levels, so if we set the read-only flag on the third level, no subsequent lower-level page will be writable, even if the flags that allow writing are specified there. Although this example uses only one instance of each table, usually there are several instances of each level in each address space. Maximum:

- one table of the fourth level

- 512 tables of the third level (since there are 512 records in the fourth level table),

- 512 * 512 second-level tables (since there are 512 entries in each of the third-level tables), and

- 512 * 512 * 512 tables of the first level (512 records for each table of the second level).

Page Table Format

In the x86_64 architecture, page tables are essentially arrays of 512 entries. In Rust syntax:

#[repr(align(4096))]pubstructPageTable {

entries: [PageTableEntry; 512],

}As indicated in the attribute

repr, the tables must be aligned with the page, i.e., at the border of 4 KB. This requirement ensures that the table always optimally fills the entire page, making the entries very compact. The size of each entry is 8 bytes (64 bits) and the following format:

| Bit (s) | Title | Value |

|---|---|---|

| 0 | present | page in memory |

| one | writable | recording allowed |

| 2 | user accessible | if the bit is not set, then only kernel access to the page |

| 3 | write through caching | write directly to memory |

| four | disable cache | disable cache for this page |

| five | accessed | The CPU sets this bit when the page is in use. |

| 6 | dirty | The CPU sets this bit when writing to the page. |

| 7 | huge page / null | zero bit in P1 and P4 creates 1 KB pages in P3, 2 MB pages in P2 |

| eight | global | the page is not filled out from the cache when switching the address space (the PGE register bit CR4 must be set) |

| 9-11 | available | OS can use them freely |

| 12-51 | physical address | the page-aligned 52-bit physical address of a frame or the following page table |

| 52-62 | available | OS can use them freely |

| 63 | no execute | disables code execution on this page (the NXE bit must be set in the EFER register) |

We see that only bits 12-51 are used to store the physical address of the frame, while the rest work as flags or can be used freely by the operating system. This is possible because we always point either to the address aligned to 4096 bytes, or to the aligned page of the tables, or to the beginning of the corresponding frame. This means that bits 0-11 are always zero, so they can not be stored, they are simply reset at the hardware level before using the address. The same applies to bits 52-63, since the x86_64 architecture only supports 52-bit physical addresses (and only 48-bit virtual addresses).

In more detail we will consider available flags:

- The flag

presentdistinguishes the displayed pages from not displayed. It can be used to temporarily save pages to disk when main memory is full. Upon subsequent access to the page, a special PageFault exception occurs, to which the OS responds by pumping up the page from the disk - the program continues. - Flags

writableandno executedetermine whether the content of the page is writable or contains executable instructions, respectively. - Flags

accessedanddirtyautomatically sets the processor when reading or writing to the page. The OS can use this information, for example, if it changes pages in places or when checking whether the content of the page has changed since the last download to disk. - Flags

write through cachinganddisable cacheallow you to manage the cache for each page separately. - The flag

user accessiblemakes the page available to code from user space, otherwise it is available only to the kernel. This function can be used to speed up system calls by keeping the address mapping for the kernel while the user program is running. However, Specter’s vulnerability allows programs to read these pages from user space. - The flag

globalsignals to the hardware that the page is available in all address spaces and cannot be removed from the address translation cache (see the TLB section below) on the address space switch. Usually, the user flag is simultaneously clearedaccessibleto translate the kernel code into all address spaces. - The flag

huge pageallows the creation of large-sized pages so that the records of tables of level 2 or 3 pages directly point to the displayed frame. This increases the page size by 512 times: at the second level up to 2 MB = 512 × 4 KB, and at the third level up to 1 GB = 512 × 2 MB. Larger pages require fewer address translation cache lines and fewer page tables.

The x86_64 architecture defines the format of the page tables and their entries , so we don’t have to create these structures ourselves.

Associative translation buffer (TLB)

Because of the four levels, for each address translation, four memory accesses are required. For the sake of performance, x86_64 caches the last few translations in a so-called associative translation buffer (TLB). This allows you to skip the conversion if it is still in the cache.

Unlike other processor caches, TLB is not completely transparent, does not update or remove conversions when the content of the page tables changes. This means that the kernel must update the TLB on its own whenever it changes the page table. To do this, there is a special CPU instruction called

invlpg(invalidate page), which removes the translation of the specified page from the TLB, so that the next time it is accessed, it is loaded again from the page table. TLB is completely cleared by resetting the register.CR3which imitates the address space switch. Through the tlb module in Rust both options are available. It is important to remember to clean the TLB after each change of the page table, otherwise the CPU will continue to use the old translation, which will lead to unpredictable errors that are very difficult to debug.

Implementation

We did not mention one thing: our core already supports paging . The loader from the article “Minimal core on Rust” has already established a four-level hierarchy that maps every page of our core with a physical frame, because page organization is mandatory in 64-bit mode on x86_64.

This means that in our kernel all memory addresses are virtual. Access to the VGA buffer at the address

0xb8000worked only because the bootloader ID translated this page into memory, that is, it associated the virtual page 0xb8000with a physical frame 0xb8000.Thanks to paging, the kernel is already relatively safe: every access beyond the permissible memory causes a page error, and does not allow writing to physical memory. The loader even set the correct access permissions for each page: only the pages with the code will be executable, and only the pages with the data will be available for writing

Page errors (PageFault)

Let's try to call PageFault by accessing memory outside the kernel. First, we create an error handler and register it with our IDT in order to see a specific exception instead of a generic double error :

// in src/interrupts.rs

lazy_static! {

staticref IDT: InterruptDescriptorTable = {

letmut idt = InterruptDescriptorTable::new();

[…]

idt.page_fault.set_handler_fn(page_fault_handler); // new

idt

};

}

use x86_64::structures::idt::PageFaultErrorCode;

extern"x86-interrupt"fnpage_fault_handler(

stack_frame: &mut ExceptionStackFrame,

_error_code: PageFaultErrorCode,

) {

use crate::hlt_loop;

use x86_64::registers::control::Cr2;

println!("EXCEPTION: PAGE FAULT");

println!("Accessed Address: {:?}", Cr2::read());

println!("{:#?}", stack_frame);

hlt_loop();

}If the page fails, the CPU automatically sets the register

CR2. It contains the virtual address of the page that caused the crash. To read and display this address, use the function Cr2::read. Typically, the type PageFaultErrorCodegives more information about the type of access to the memory that caused the error, but because of the LLVM bug, an invalid error code is transmitted, so for the time being we ignore this information. Program execution cannot be continued until we eliminate the page error, therefore we insert at the end hlt_loop. Now we get access to memory outside the kernel:

// in src/main.rs#[cfg(not(test))]#[no_mangle]pubextern"C"fn_start() -> ! {

use blog_os::interrupts::PICS;

println!("Hello World{}", "!");

// set up the IDT first, otherwise we would enter a boot loop instead of// invoking our page fault handler

blog_os::gdt::init();

blog_os::interrupts::init_idt();

unsafe { PICS.lock().initialize() };

x86_64::instructions::interrupts::enable();

// newlet ptr = 0xdeadbeafas *mutu32;

unsafe { *ptr = 42; }

println!("It did not crash!");

blog_os::hlt_loop();

}After launch, we see that the page error handler is being invoked: The

register

CR2does contain the address 0xdeadbeafwe wanted to access. The current instruction pointer is

0x20430a, so we know that this address points to a code page. Code pages are displayed as read-only by the loader, so reading from this address is working, and writing will cause an error. Try changing the pointer 0xdeadbeafto 0x20430a:// Note: The actual address might be different for you. Use the address that// your page fault handler reports.let ptr = 0x20430aas *mutu32;

// read from a code page -> worksunsafe { let x = *ptr; }

// write to a code page -> page faultunsafe { *ptr = 42; }If we comment out the last line, we can make sure that the read is working, and the write causes a PageFault error.

Access to page tables

Now take a look at the page tables for the kernel:

// in src/main.rs#[cfg(not(test))]#[no_mangle]pubextern"C"fn_start() -> ! {

use x86_64::registers::control::Cr3;

let (level_4_page_table, _) = Cr3::read();

println!("Level 4 page table at: {:?}", level_4_page_table.start_address());

[…]

}The function

Cr3::readof the x86_64returns from the register of CR3the currently active page table of the fourth level. Returns a pair PhysFrameand Cr3Flags. We are only interested in the first. After the launch, we see the following result:

Level 4 page table at: PhysAddr(0x1000)Thus, the currently active fourth-level page table is stored in physical memory at the address

0x1000, as indicated by the type PhysAddr. Now the question is: how to access this table from the kernel? With paging, direct access to physical memory is not possible, otherwise programs can easily bypass protection and gain access to the memory of other programs. Thus, the only way to access is through a virtual page that is translated into a physical frame at

0x1000. This is a typical problem, because the kernel should regularly refer to page tables, for example, when allocating a stack for a new thread. We will describe the solutions to this problem in detail in the next article. For now, just say that the loader uses a method called recursive page tables . The last page of the virtual address space is

0xffff_ffff_ffff_f000, use it to read some of the records in this table:// in src/main.rs#[cfg(not(test))]#[no_mangle]pubextern"C"fn_start() -> ! {

let level_4_table_pointer = 0xffff_ffff_ffff_f000as *constu64;

for i in0..10 {

let entry = unsafe { *level_4_table_pointer.offset(i) };

println!("Entry {}: {:#x}", i, entry);

}

[…]

}We have reduced the address of the last virtual page to a pointer to

u64. As mentioned in the previous section, each page table entry is 8 bytes (64 bits) in size, so it u64represents exactly one entry. With the help of the cycle forwe display the first 10 records of the table Inside the loop, we use an unsafe block to read directly from the pointer and метод offsetto calculate the pointer. After starting see the following results:

According to the format described above, the value

0x2023recording means having flags 0 present, writable, accessedand translation into a frame 0x2000. Record 1 is broadcast to the frame 0x6e2000and it has the same flags, plusdirty. Records 2-9 are missing, so these virtual address ranges are not mapped to any physical addresses. Instead of working with unsafe pointers, you can use the type

PageTablefrom x86_64:// in src/main.rs#[cfg(not(test))]#[no_mangle]pubextern"C"fn_start() -> ! {

use x86_64::structures::paging::PageTable;

let level_4_table_ptr = 0xffff_ffff_ffff_f000as *const PageTable;

let level_4_table = unsafe {&*level_4_table_ptr};

for i in0..10 {

println!("Entry {}: {:?}", i, level_4_table[i]);

}

[…]

}Here we assign a pointer to the pointer

0xffff_ffff_ffff_f000first, and then convert it to the Rust link. The operation is still unsafe, as the compiler cannot know that access to this address is allowed. But after conversion, we have a safe type &PageTable, which gives access to individual records through safe, proven boundaries of indexing operations . x86_64also provides some abstraction for individual records to instantly see the flags set:

The next step - to follow the signs to write 0 or write 1 to the page table level 3. But now we have again the problem arises that

0x2000, and 0x6e5000are physical addresses, so we do not we can get direct access to them. This problem will be solved in the next article.Summary

The article presents two methods of memory protection: segmentation and page organization. The first method uses variable-size memory areas and suffers from external fragmentation, the second uses fixed-size pages and allows much more detailed control over access rights.

The page organization stores information about the pages broadcast in tables of one or several levels. The x86_64 architecture uses four-level tables with a page size of 4 KB. The equipment automatically bypasses the page tables and caches the conversion results in an associative translation buffer (TLB). When changing the page tables, it should be forcibly cleared.

We learned that our core already supports paging, and with unauthorized memory access, PageFault falls out. We tried to access the current active page tables, but managed to access only the fourth-level table, since the page tables store the physical addresses, and we cannot access them directly from the kernel.

What's next?

The following article is based on the fundamentals that we have now learned. To access the page tables from the kernel, an advanced technique called recursive page tables is used to cross the table hierarchy and implement software translation of addresses. The article also explains how to create new broadcasts in the page tables.