Eye Tracking, Emotion and VR: Technology Convergence and Current Research

Virtual reality, emotion recognition and eye tracking are three independently developing fields and commercially attractive technology markets. In recent years they have increasingly been converging, with approaches merged and synthesized to create next-generation products. There is hardly anything surprising in this natural process of rapprochement. Its results can be discussed with some caution, but also with considerable user enthusiasm (incidentally, Steven Spielberg's recent film “Ready Player One” literally visualizes many of the expected scenarios). Let's discuss this in more detail.

Virtual reality is, first of all, the opportunity to feel fully immersed in a frankly fantastic world: to fight evil spirits, befriend unicorns and dragons, imagine yourself as a huge centipede, or travel through endless space to new planets. However, for virtual reality to be perceived on a sensory level as real, to the point of being confused with the surrounding reality, it must contain elements of natural communication: emotions and eye contact. In VR, a person can and should also possess additional superpowers (for example, moving objects with their eyes) as something taken for granted. But it is not that simple: implementing these capabilities raises many problems, and interesting technological solutions are being designed to address them.

The gaming industry is rapidly adopting a wealth of technological know-how. For example, simple eye trackers (in the form of game controllers) are already available to a wide range of computer game users, while in other areas the widespread application of these developments is yet to come. It is no surprise, then, that one of the fastest-growing trends in VR is the combination of virtual reality systems and eye tracking. Recently, the Swedish eye tracking giant Tobii entered into a collaboration with Qualcomm; the virtual reality developer Oculus (owned by Facebook) teamed up with The Eye Tribe, a well-known startup making low-cost eye trackers; and Apple, in its traditionally secretive manner, acquired the large German eye tracking company SMI (though no one knows the motive or what drove the choice).

Building the game controller function into virtual reality lets users control objects with their eyes. In the simplest case, to “grab” something or select a menu item, you just look at the object for slightly longer than usual: a fixation-duration (dwell-time) threshold is used. However, this gives rise to the so-called Midas touch problem, when the user only wants to examine an object carefully and, as a result, involuntarily activates a programmed function they did not want to use at all.
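To make the fixation-duration threshold concrete, here is a minimal Python sketch. It does not come from any of the systems mentioned here: the class name `DwellSelector`, the 0.8 s threshold and the object ids are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DwellSelector:
    """Fires a selection once gaze has rested on one object long enough."""
    threshold_s: float = 0.8          # assumed dwell threshold, in seconds
    _target: Optional[str] = None     # object currently under the gaze
    _since: float = 0.0               # time the gaze arrived on that object
    _fired: bool = False              # avoid re-firing during one fixation

    def update(self, target: Optional[str], t: float) -> Optional[str]:
        """Feed one gaze sample; return the object id if it was just selected."""
        if target != self._target:
            # Gaze moved to another object (or to empty space): restart timing.
            self._target, self._since, self._fired = target, t, False
            return None
        dwell = t - self._since
        if target is not None and not self._fired and dwell >= self.threshold_s:
            self._fired = True
            return target
        return None
```

Feeding it samples such as `("sword", 0.0)`, `("sword", 0.5)`, `("sword", 0.9)` selects the sword on the third sample, even if the user only meant to look at it. That is the Midas touch problem in miniature.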

Virtual King Midas

Researchers from the Hong Kong University of Science and Technology (Pi & Shi, 2017) propose solving the Midas problem with a dynamic fixation-duration threshold driven by a probabilistic model. The model takes the subject's previous choices into account and calculates the probability that each object will be chosen next; objects with a high probability get a shorter threshold fixation time than objects with a low probability. The proposed algorithm proved faster than the fixed-threshold method. Note that the system was designed for gaze typing, so it can be applied only in interfaces where the number of objects does not change over time.
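The idea of tying the threshold to a probability can be sketched as follows. This is not the authors' model: a simple count of which key followed which stands in for their probabilistic model, and the names `AdaptiveDwell`, `min_s` and `max_s`, as well as the linear mapping from probability to threshold, are assumptions.

```python
from collections import Counter, defaultdict


class AdaptiveDwell:
    """Maps each key's estimated probability to a dwell threshold."""

    def __init__(self, keys, min_s=0.3, max_s=1.0):
        self.keys = list(keys)
        self.min_s, self.max_s = min_s, max_s   # assumed threshold bounds
        self.counts = defaultdict(Counter)      # previous key -> next-key counts

    def observe(self, prev_key, next_key):
        """Record one confirmed selection made after prev_key."""
        self.counts[prev_key][next_key] += 1

    def probability(self, prev_key, key):
        """Add-one smoothed estimate of P(key | prev_key)."""
        seen = self.counts[prev_key]
        return (seen[key] + 1) / (sum(seen.values()) + len(self.keys))

    def threshold(self, prev_key, key):
        """Likely keys get a threshold near min_s, unlikely ones near max_s."""
        p = self.probability(prev_key, key)
        return self.max_s - (self.max_s - self.min_s) * p
```

Because the estimate is normalized over a fixed list of keys, the sketch shares the limitation noted above: it assumes the set of selectable objects does not change.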

A group of developers from the Technical University of Munich (Schenk, Dreiser, Rigoll, & Dorr, 2017) proposed GazeEverywhere, a system for interacting with computer interfaces by gaze, which incorporates the SPOCK method (Schenk, Tiefenbacher, Rigoll, & Dorr, 2016) to solve the Midas problem. SPOCK is a two-step object selection method: after a fixation on an object that lasts longer than the set threshold, two circles appear above and below the object and slowly move in different directions. The subject's task is to follow one of them with their eyes; detecting this smooth pursuit eye movement is what activates the selected object. The GazeEverywhere system is also notable in that it includes an online recalibration algorithm.
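The second step, deciding which moving circle the eyes are following, might be approximated by correlating the gaze trajectory with each circle's trajectory. The sketch below is an illustration under that assumption, not the published SPOCK detector; `followed_target` and the 0.8 correlation cutoff are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if undefined)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def followed_target(gaze_y, targets_y, min_corr=0.8):
    """Return the name of the moving target the gaze tracks, or None.

    gaze_y: vertical gaze positions; targets_y: name -> positions per sample.
    """
    best_name, best_corr = None, min_corr
    for name, path in targets_y.items():
        corr = pearson(gaze_y, path)
        if corr >= best_corr:
            best_name, best_corr = name, corr
    return best_name
```

A gaze that stays still on the object has zero variance and matches neither circle, so simply continuing to look at the object does not trigger anything.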

As an alternative to the fixation-duration threshold for selecting objects and solving the Midas problem, a group of researchers from Weimar (Huckauf, Goettel, Heinbockel, & Urbina, 2005) proposes using anti-saccades as the selection action (an anti-saccade is an eye movement made in the direction opposite to the target). This method selects objects faster than the fixation-duration threshold, but it is less accurate. Its use outside the laboratory also raises questions: after all, looking at the desired object is more natural for a person than deliberately looking away from it.
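A rough geometric check for an anti-saccade could look like this. It is a sketch under the assumption that the saccade's start and landing points are already known; the function name, the cosine cutoff and the minimum amplitude are illustrative values, not taken from the study.

```python
from math import hypot


def is_anti_saccade(start, target, landing, max_cos=-0.8, min_amplitude=50.0):
    """True if the saccade from start to landing points away from target.

    Points are (x, y) in pixels. max_cos and min_amplitude are assumed values.
    """
    tx, ty = target[0] - start[0], target[1] - start[1]
    sx, sy = landing[0] - start[0], landing[1] - start[1]
    t_len, s_len = hypot(tx, ty), hypot(sx, sy)
    if t_len == 0 or s_len < min_amplitude:
        return False  # no defined direction, or the eye barely moved
    cosine = (tx * sx + ty * sy) / (t_len * s_len)
    return cosine <= max_cos
```

For a target to the right of the starting point, a landing point well to the left counts as a selection, while a glance toward the target or a tiny drift does not.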

Wu et al. (Wu, Wang, Lin, & Zhou, 2017) developed an even simpler solution to the Midas problem: they suggested using a wink as the marker for selecting objects, i.e., an explicit pattern in which one eye is open and the other is closed. However, integrating such a selection method into dynamic gameplay is hardly feasible, if only because it is uncomfortable.
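The "one eye open, the other closed" pattern can be sketched as below, assuming some upstream detector already reports per-frame eye openness. The function `detect_winks` and the five-frame minimum are assumptions for illustration and are not described in the cited paper.

```python
def detect_winks(frames, min_frames=5):
    """Return (eye, start_index) for each wink in a sequence of frames.

    frames: iterable of (left_open, right_open) booleans, one pair per frame.
    A wink is one eye closed while the other stays open for min_frames frames
    in a row; frames with both eyes closed (a blink) reset the count.
    """
    winks, run_eye, run_len = [], None, 0
    for i, (left_open, right_open) in enumerate(frames):
        if left_open == right_open:
            # Both open or both closed: not a wink, reset the run.
            run_eye, run_len = None, 0
            continue
        eye = "right" if left_open else "left"   # the closed eye
        run_len = run_len + 1 if eye == run_eye else 1
        run_eye = eye
        if run_len == min_frames:
            winks.append((eye, i - min_frames + 1))
    return winks
```

Requiring several consecutive frames is one way to keep ordinary blinks and detector noise from being read as a selection.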

Pfeuffer et al. (Pfeuffer, Mayer, Mardanbegi, & Gellersen, 2017) propose moving away from interfaces in which objects are selected and manipulated by eye movements alone, and using the Gaze + pinch interaction technique instead. In this method, an object is selected when the gaze rests on it and the user simultaneously brings their fingers together in a “pinch” gesture (with one hand or with both). Gaze + pinch has advantages over “virtual hands”, since it lets you manipulate virtual objects from a distance, and over device controllers, because it frees the user's hands and leaves room to extend the functionality with other gestures.
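The division of labor between gaze and hand can be sketched as a tiny state machine. This is an illustration only, assuming that the gazed-at object and the pinch state arrive once per frame from other components; `GazePinch` is a hypothetical name, not part of the published technique.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GazePinch:
    """Gaze picks the object; the pinch confirms and holds it."""
    held: Optional[str] = None      # object currently grabbed, if any
    _was_pinching: bool = False

    def update(self, gazed: Optional[str], pinching: bool) -> Optional[str]:
        """Feed one frame; return the object being manipulated, if any."""
        if pinching and not self._was_pinching:
            self.held = gazed       # pinch started: grab what the gaze is on
        elif not pinching:
            self.held = None        # pinch released: drop the object
        self._was_pinching = pinching
        return self.held
```

Looking alone never selects anything here, which is how this kind of design sidesteps the Midas problem. Once an object is grabbed, the gaze is free to wander while the pinch is held.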

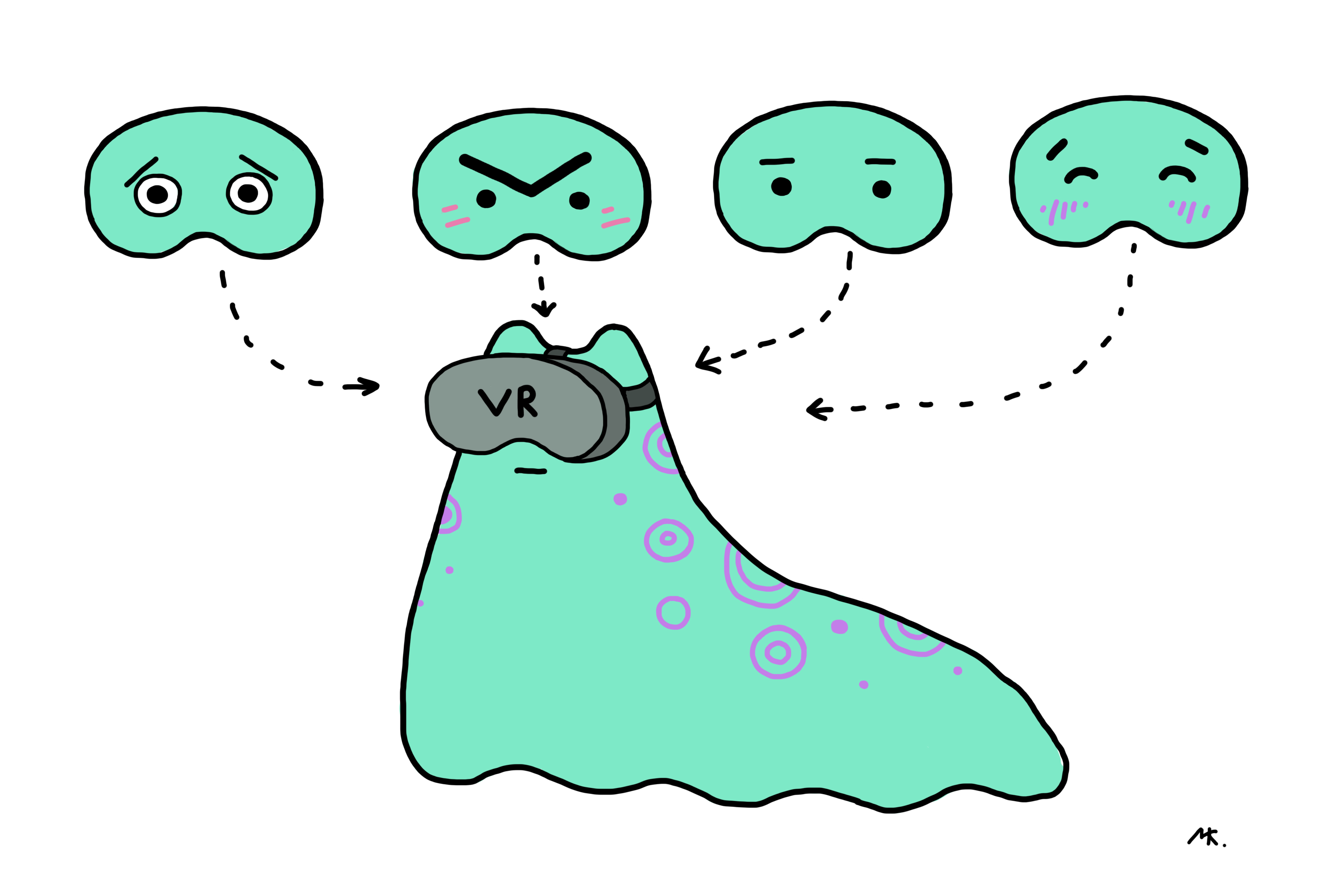

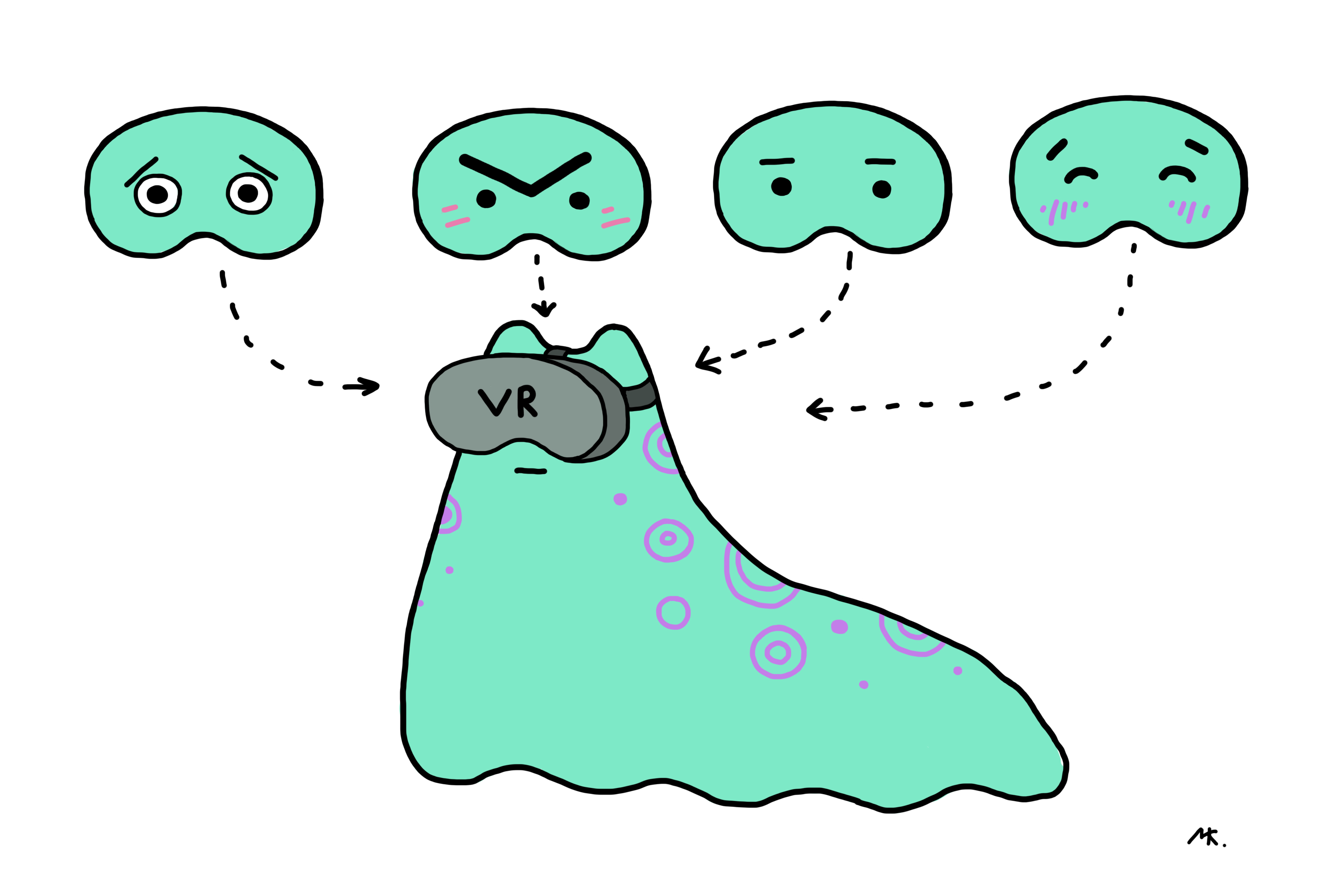

Emotions in VR

For virtual communication, social networks and multiplayer games in VR to succeed, user avatars must first of all be able to express emotions naturally. At present, two types of solutions for adding emotionality to VR can be distinguished: installing additional devices and purely software solutions.

For example, the MindMaze Mask project offers a solution in the form of sensors, located inside the headset, that measure the electrical activity of the facial muscles. Its creators also demonstrated an algorithm that predicts facial expressions from the current electrical activity of the muscles for high-quality avatar rendering. The development from FACEteq (emotion sensing in VR) is based on similar principles.

The startup Veeso supplemented virtual reality glasses with an additional camera that records the movements of the lower half of the face. By combining images of the lower half of the face and the eye area, the system recognizes the expression of the entire face and transfers it to a virtual avatar.

A team from the Georgia Institute of Technology, in partnership with Google (Hickson, Dufour, Sud, Kwatra, & Essa, 2017), proposed a more elegant solution: technology that recognizes human emotions in virtual reality glasses without adding any extra hardware to the frame. The algorithm is based on a convolutional neural network and recognizes facial expressions ("anger", "joy", "surprise", "neutral facial expression", "closed eyes") in 74% of cases on average. To train it, the developers used their own dataset of eye images from the infrared cameras of the built-in eye tracker, recorded while participants (23 in total) displayed an emotion wearing virtual reality glasses. The dataset also included full-face emotional expressions of the study participants, recorded with a regular camera. To detect facial expressions, they chose the well-known action-unit-based system FACS (Facial Action Coding System), though they used only the action units of the upper half of the face. Each action unit was recognized by the proposed algorithm in 63.7% of cases without personalization and in 70.2% with personalization.

There are similar developments in detecting emotions from images of the eye area alone, without reference to virtual reality (Priya & Muralidhar, 2017; Vinotha, Arun, & Arun, 2013). For example, Priya and Muralidhar (2017) also designed a system based on action units and derived an algorithm that recognizes seven emotional facial expressions (joy, sadness, anger, fear, disgust, surprise, and a neutral expression) using seven points (three along the eyebrow, two in the corners of the eye and two in the centers of the upper and lower eyelids), with an accuracy of 78.2%.

Research and experimentation are ongoing around the world, and the Neurodata Lab eye tracking team will be sure to tell you about them. Stay with us.

Bibliography:

Hickson, S., Dufour, N., Sud, A., Kwatra, V., & Essa, I. (2017). Eyemotion: Classifying facial expressions in VR using eye-tracking cameras. Retrieved from arxiv.org/abs/1707.07204

Huckauf, A., Goettel, T., Heinbockel, M., & Urbina, M. (2005). What you don't look at is what you get: anti-saccades can reduce the midas touch-problem. In Proceedings of the 2nd Symposium on Applied Perception in Graphics and Visualization, APGV 2005, A Coruña, Spain, August 26-28, 2005 (pp. 170-170). doi.org/10.1145/1080402.1080453

Pfeuffer, K., Mayer, B., Mardanbegi, D., & Gellersen, H. (2017). Gaze + pinch interaction in virtual reality. Proceedings of the 5th Symposium on Spatial User Interaction - SUI '17, (October), 99–108. doi.org/10.1145/3131277.3132180

Pi, J., & Shi, B. E. (2017). Probabilistic adjustment of dwell time for eye typing. Proceedings - 2017 10th International Conference on Human System Interactions, HSI 2017, 251–257. doi.org/10.1109/HSI.2017.8005041

Priya, V. R., & Muralidhar, A. (2017). Facial Emotion Recognition Using Eye. International Journal of Applied Engineering Research, 12 (September), 5655–5659. doi.org/10.1109/SMC.2015.387

Schenk, S., Dreiser, M., Rigoll, G., & Dorr, M. (2017). GazeEverywhere: Enabling Gaze-only User Interaction on an Unmodified Desktop PC in Everyday Scenarios. CHI '17 Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, 3034–3044. doi.org/10.1145/3025453.3025455

Schenk, S., Tiefenbacher, P., Rigoll, G., & Dorr, M. (2016). SPOCK: A smooth pursuit oculomotor control kit. Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems - CHI EA '16, 2681–2687. doi.org/10.1145/2851581.2892291

Vinotha, S. R., Arun, R., & Arun, T. (2013). Emotion Recognition from Human Eye Expression. International Journal of Research in Computer and Communication Technology, 2 (4), 158–164.

Wu, T., Wang, P., Lin, Y., & Zhou, C. (2017). A Robust Noninvasive Eye Control Approach For Disabled People Based on Kinect 2.0 Sensor. IEEE Sensors Letters, 1 (4), 1–4. doi.org/10.1109/LSENS.2017.2720718

Material author:

Maria Konstantinova, researcher at Neurodata Lab, biologist, physiologist, specialist in the visual sensory system, oculography and oculomotor function.
