SOA: send a request to the server? What could be easier?

You may have heard that Booking.com experiments a lot and often deploys without testing. You may also have heard that it has one large 4 GB repository with 4 million lines of Perl code and a largely monolithic architecture.

Booking.com is changing, though. This is not an abrupt, radical change but a slow and steady transformation. The stack is changing: Java is gradually being introduced where it makes sense. The term service-oriented architecture (SOA) is also heard more and more often in internal discussions.

What follows is the talk that Ivan Kruglov (@vian) gave at Highload Junior++ 2017 about these changes, seen from the point of view of how internal components interact. Having fallen into the trap of cyclically dependent workers, we had to work out exactly what was happening and how it could be fixed.

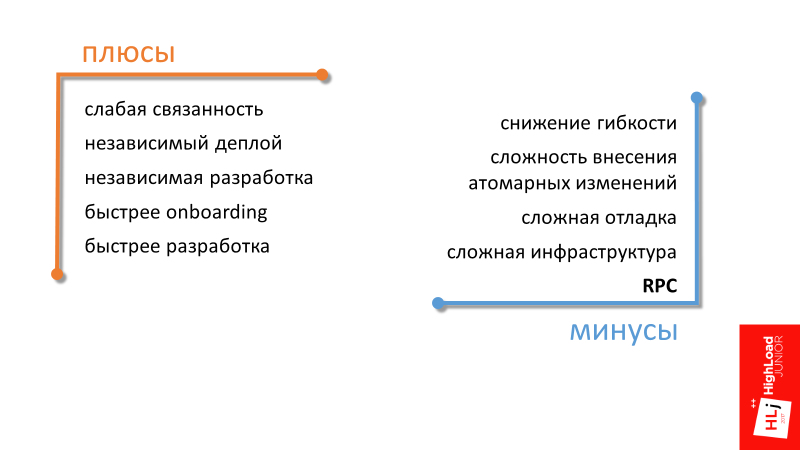

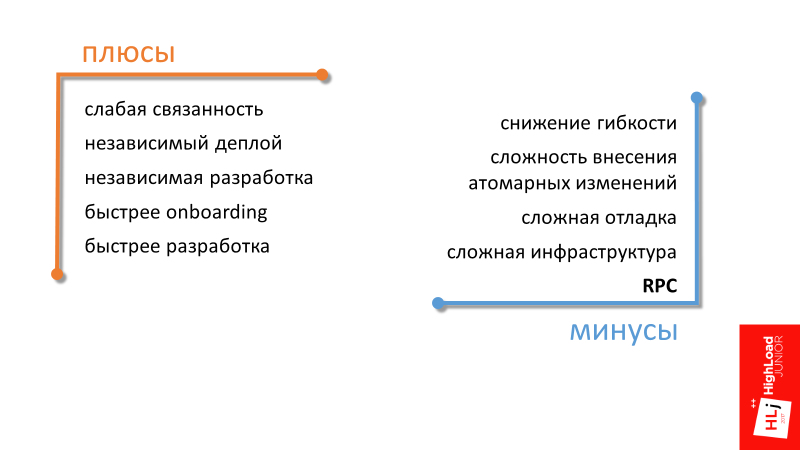

Pros of moving to a service-oriented architecture

The first 3 advantages of a service-oriented architecture (loose coupling, independent deployment, independent development) are self-explanatory, so I will not dwell on them. Let's move straight on to the next ones.

Faster onboarding

In a company going through intensive growth, a lot of attention is paid to getting new employees up to speed quickly. A service-oriented architecture can help here by letting a new employee focus on one specific, small area. It is easier to learn a separate part of the system than the whole of it.

Faster development

This last point sums up the others. By moving to a service-oriented architecture we hope, if not to speed development up significantly, then at least to keep the current pace thanks to less tightly coupled components.

Cons of switching to a service-oriented architecture

Reduced flexibility

By flexibility I mean flexibility in reallocating people. In a monolithic architecture, to change the code without breaking anything you need to know how a large area works. As a result, moving a person between projects is simple, because that person will very likely already know a fairly large area of the new project. From a management point of view, the system is more flexible.

In a service-oriented architecture the opposite is true. If different teams use different technology stacks, moving between teams can be like moving to another company where everything is completely different.

This is a managerial drawback; the rest are technical.

The complexity of making atomic changes (not just data)

In a distributed system we lose transactions, and with them atomic changes, not only to data but also to code: a single commit cannot span several repositories.

Complex debugging

A distributed system is fundamentally harder to debug. At the most basic level, there is no debugger in which we could see where execution goes next or where the data will travel in the next step. Even in the elementary task of analyzing logs, when there are 2 servers and each has its own logs, it is hard to tell what relates to what.

As a result, the infrastructure of a service-oriented architecture is more complicated overall. Many supporting components appear: for example, you need a distributed logging system and a distributed tracing system.

This article will focus on another drawback.

Remote Procedure Call (RPC)

It stems from the fact that in a monolithic architecture it is enough to call the desired function to perform an operation, while in SOA you have to make a remote call.

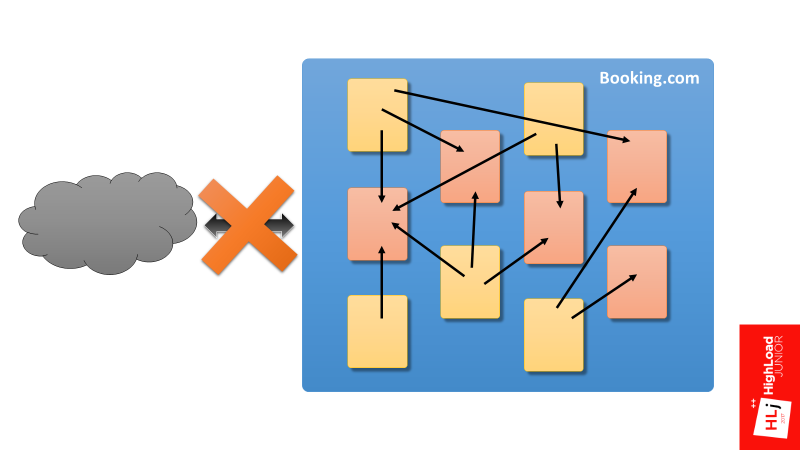

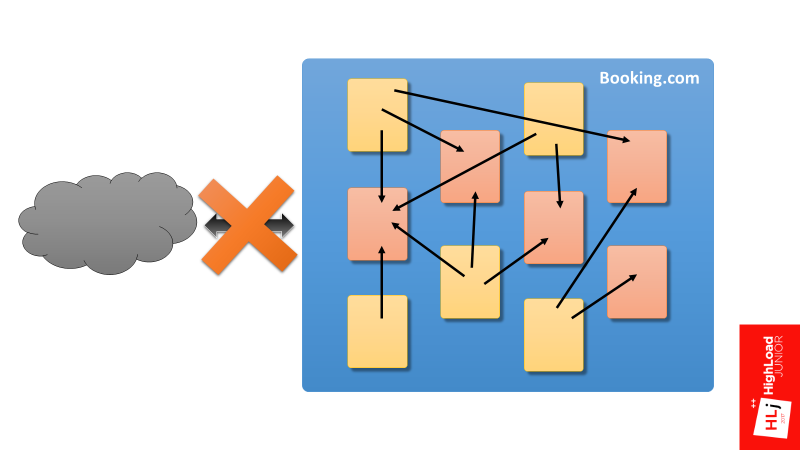

I want to say right away what I will and what I will not talk about. I will not talk about the interaction of Booking.com with the outside world. My talk is about the internal components of Booking.com and their interactions.

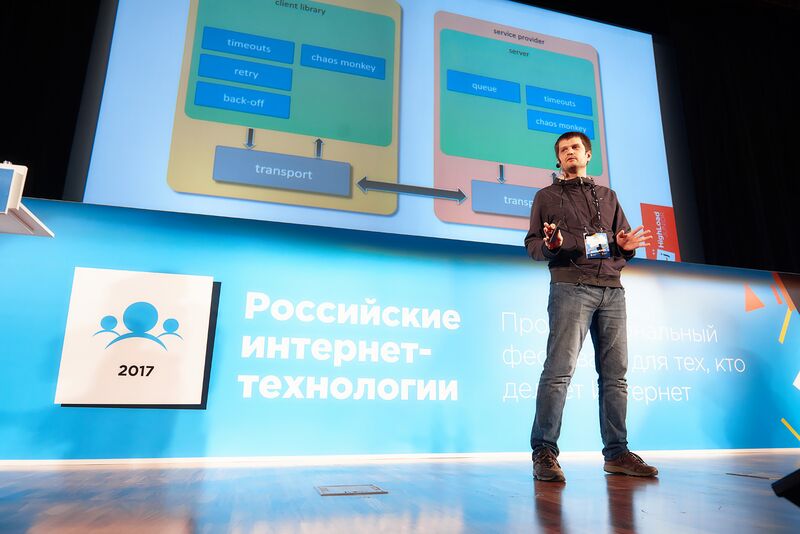

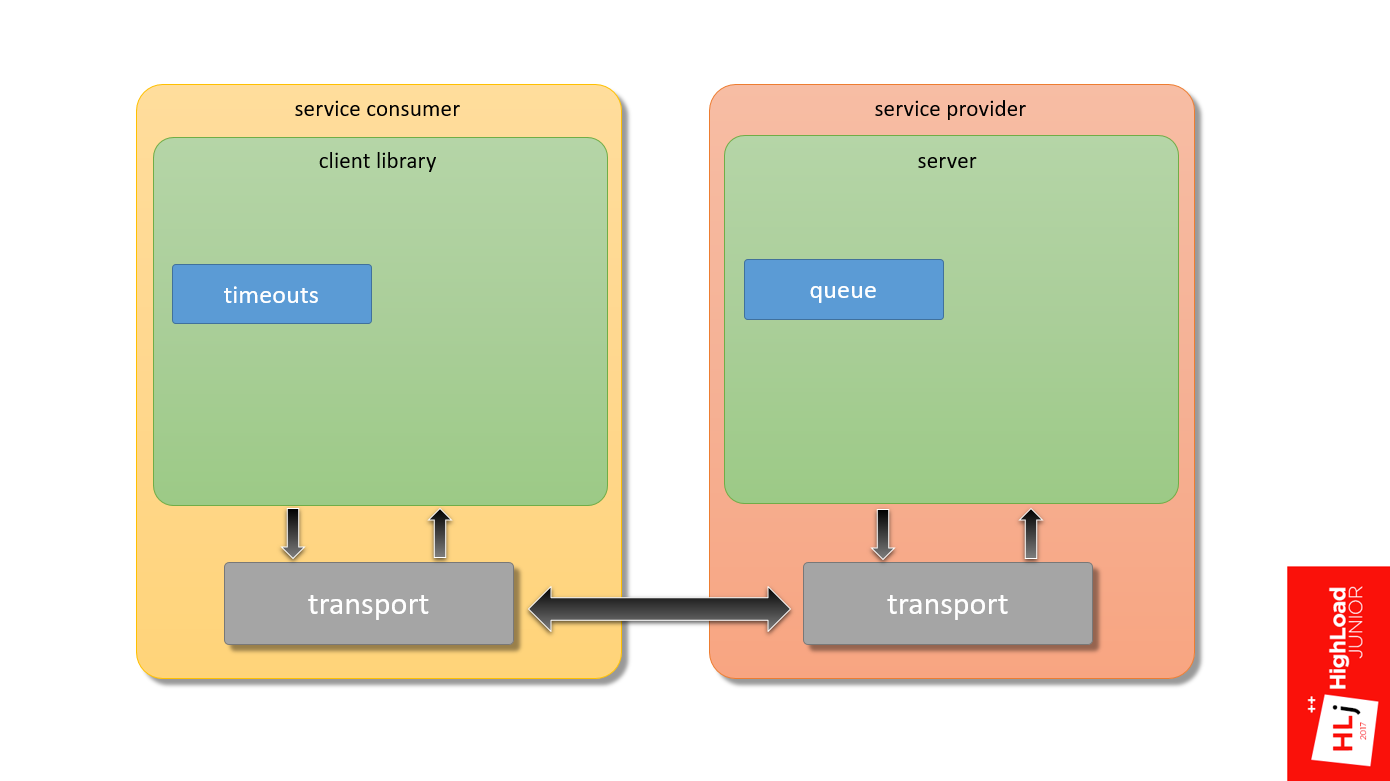

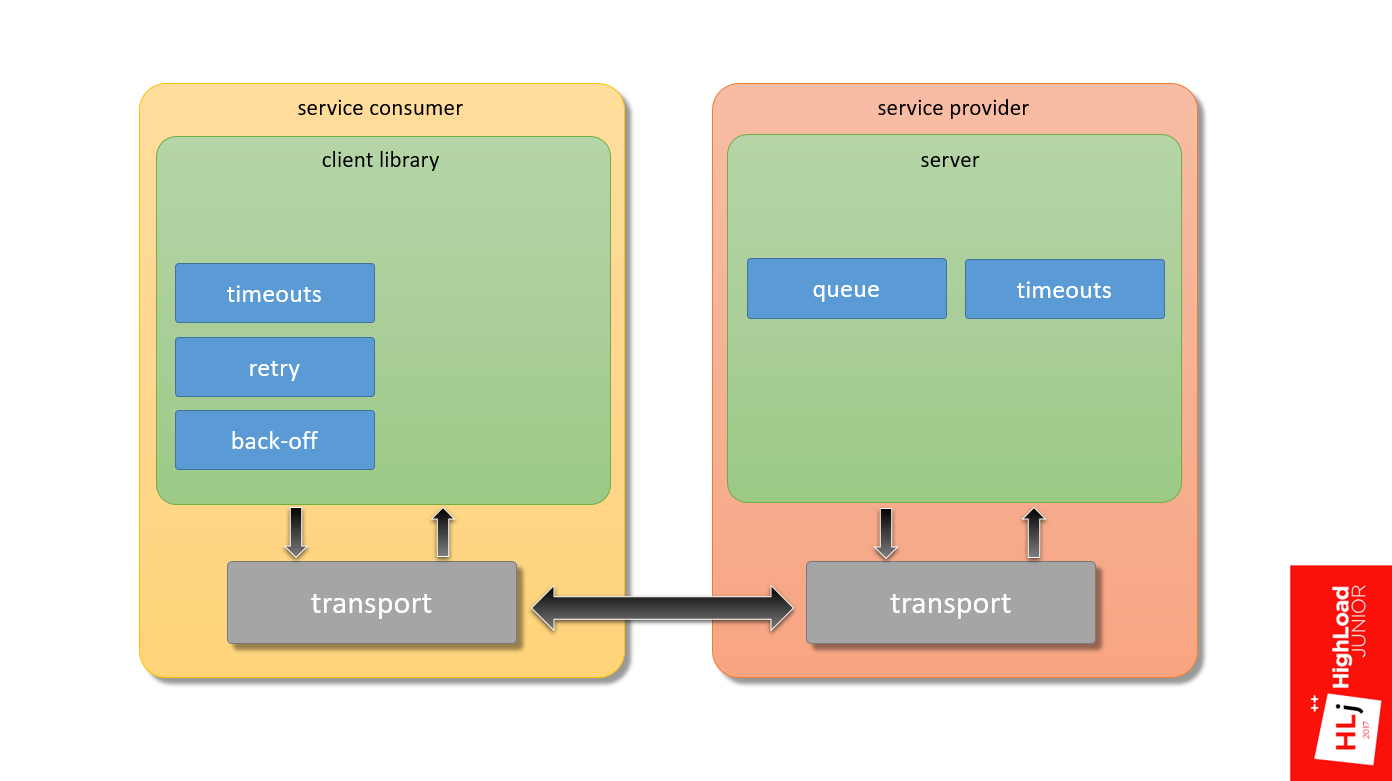

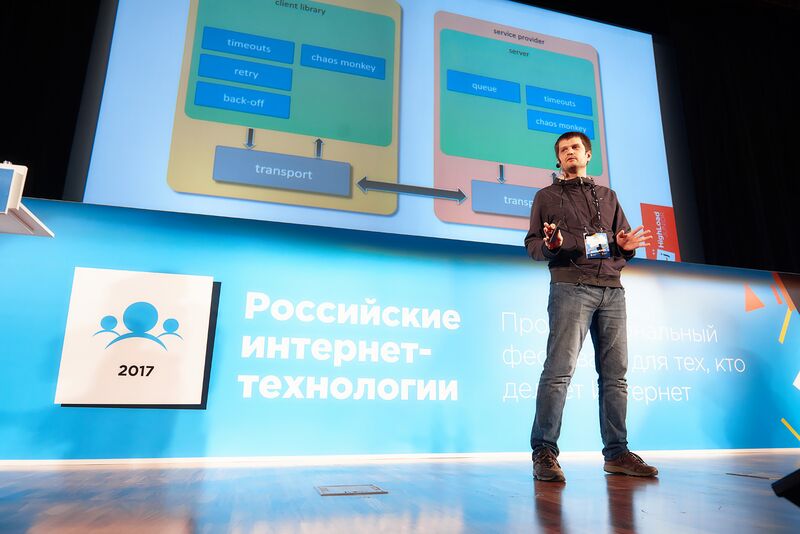

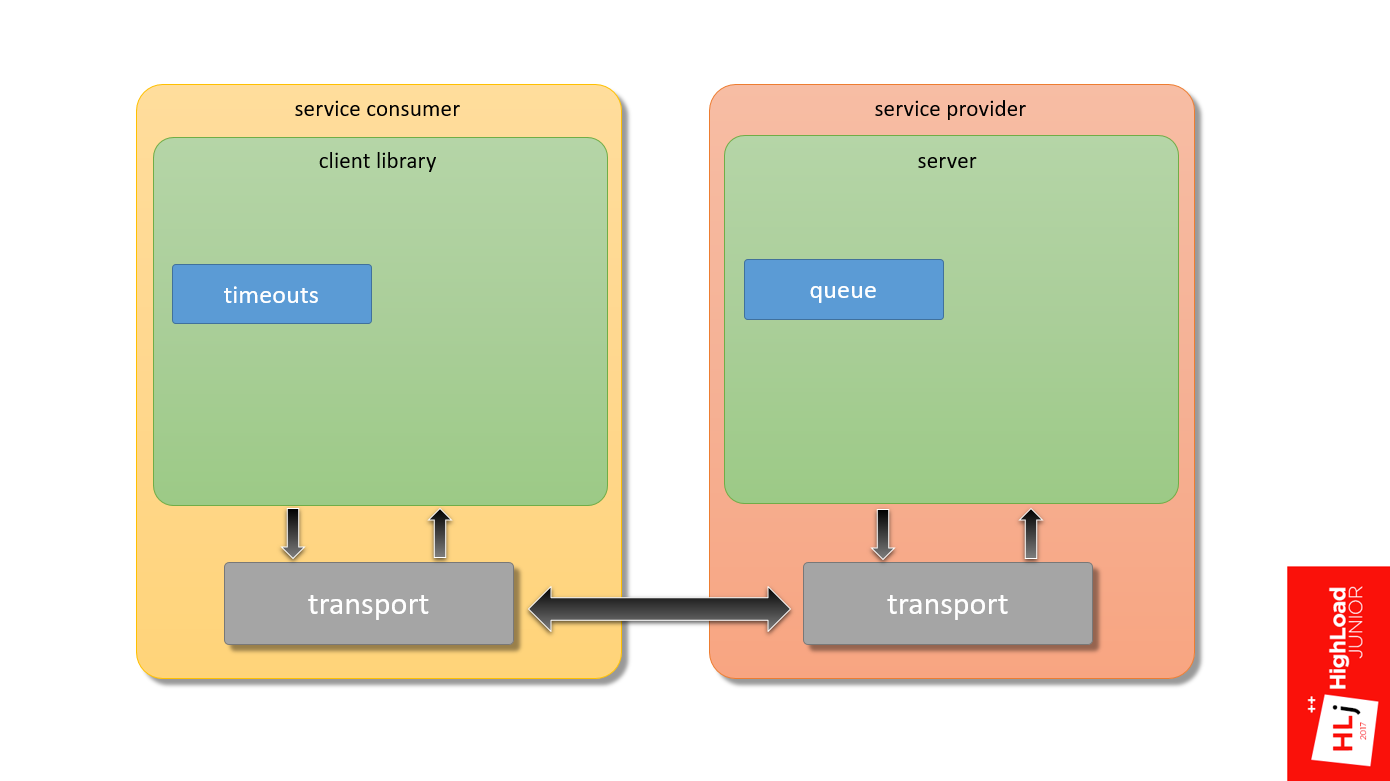

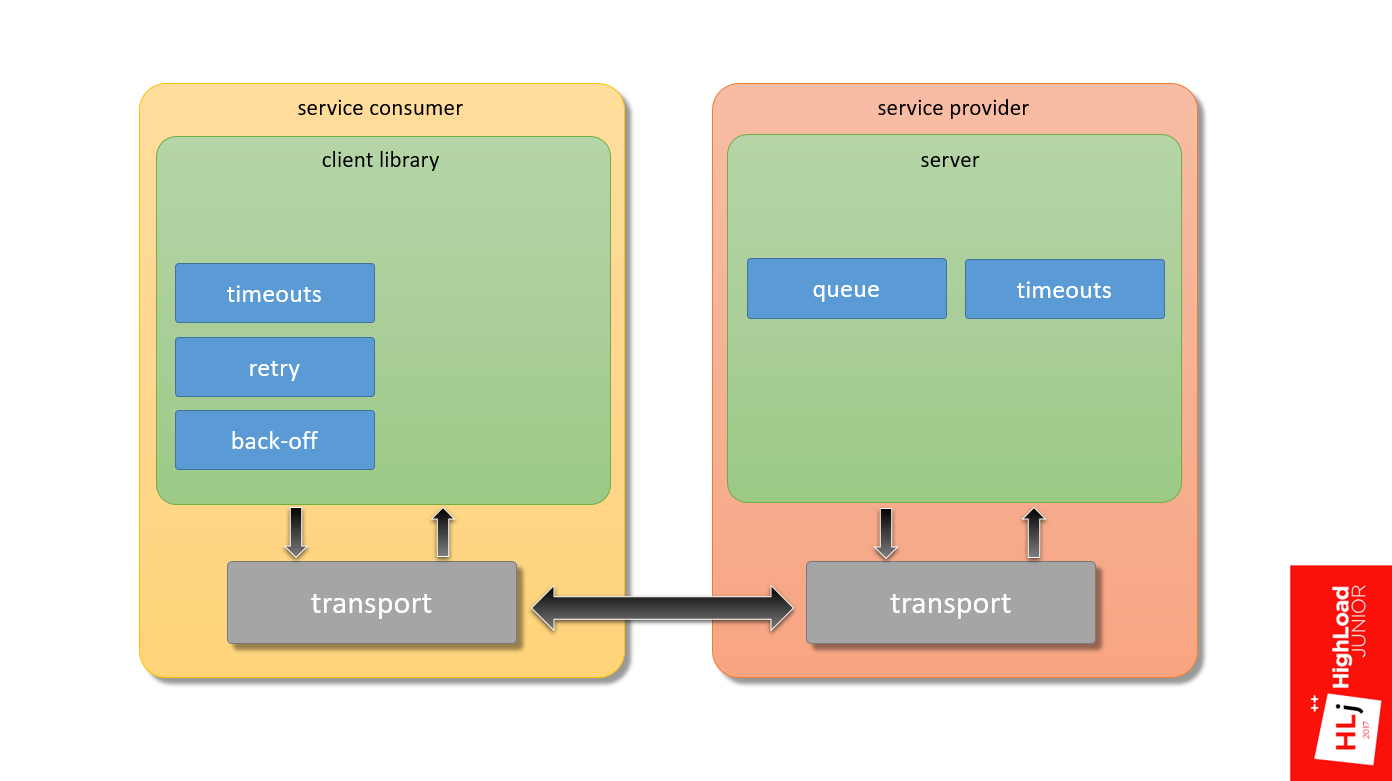

Moreover, take a hypothetical service consumer whose application talks, through a client library and a transport, to a service provider and gets its response back the same way. My focus is precisely the framework that makes the interaction between these two components possible.

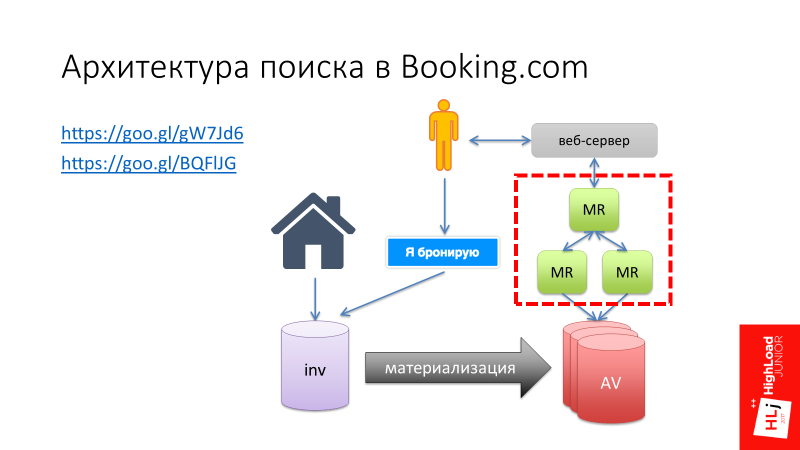

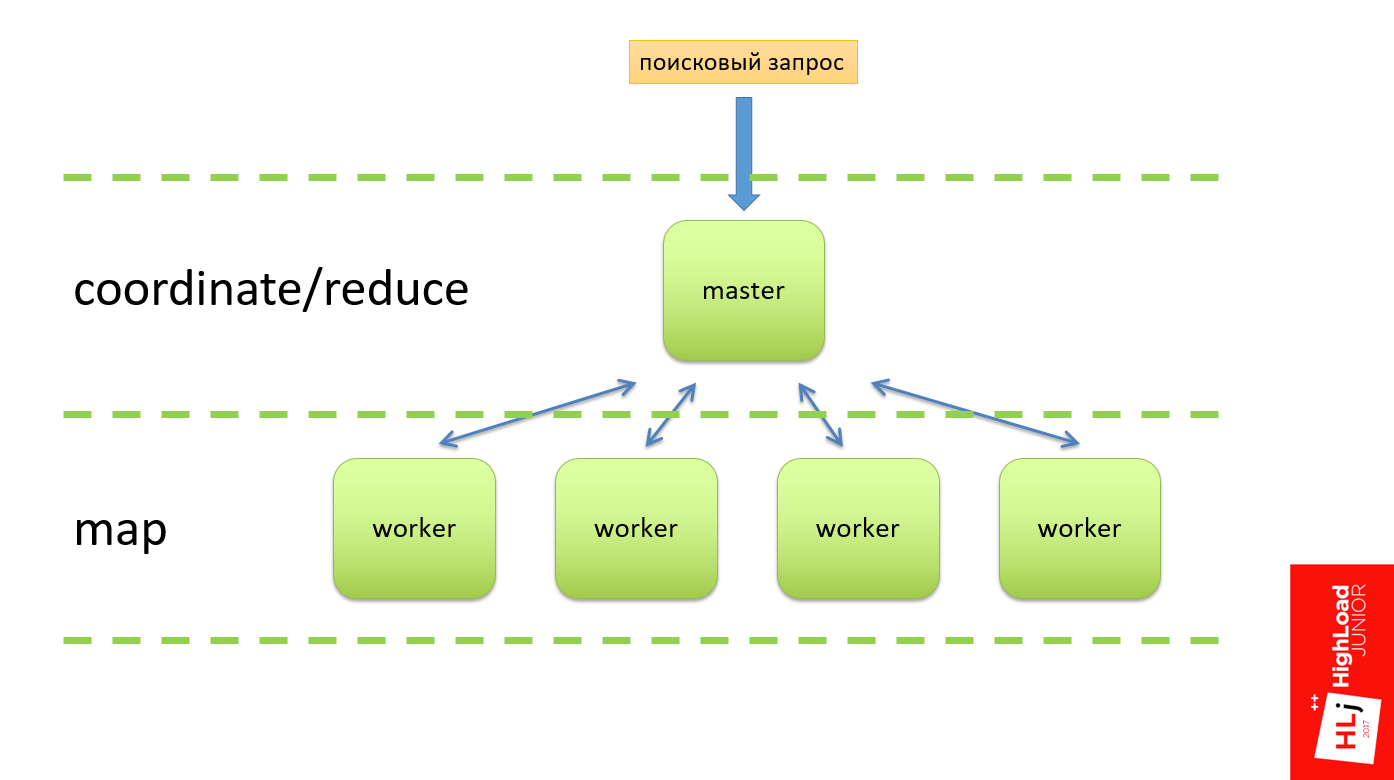

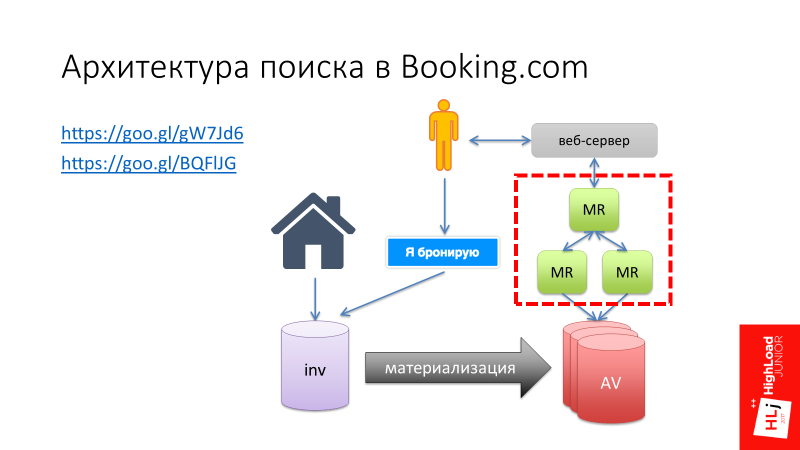

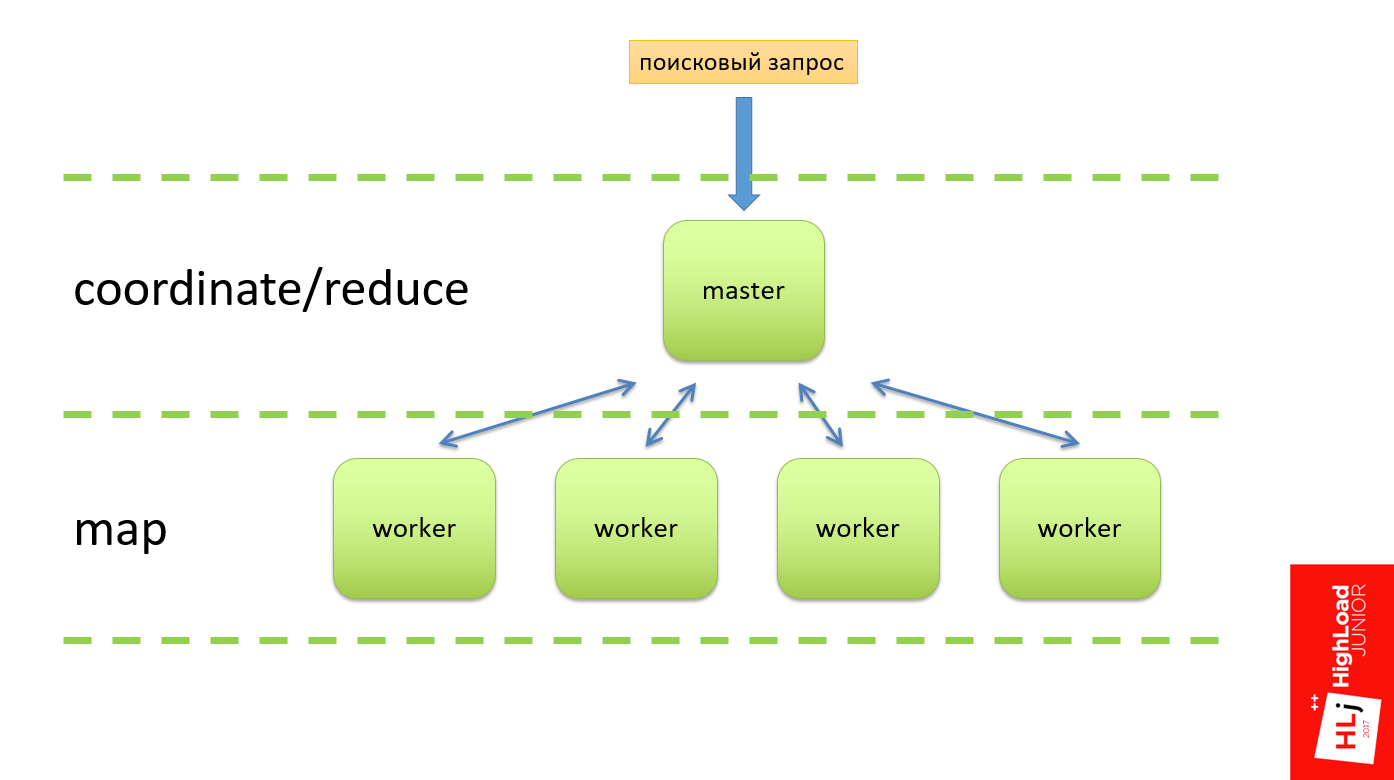

History of one problem

Let me tell you a story. Here I refer to my talk “Search.com Search Architecture”, in which I described how Booking.com evolved. At one stage we decided to create our own Map-Reduce framework (highlighted in red in the figure below).

This Map-Reduce framework was supposed to work as follows (diagram below). A search query arrives at the master node, which splits it into several subqueries and sends them to the workers. Each worker does its work, forms a result, and sends it back to the master. It is the classic scheme: the reduce function runs on the master and the map phase on the workers. That is how it should have worked in theory.

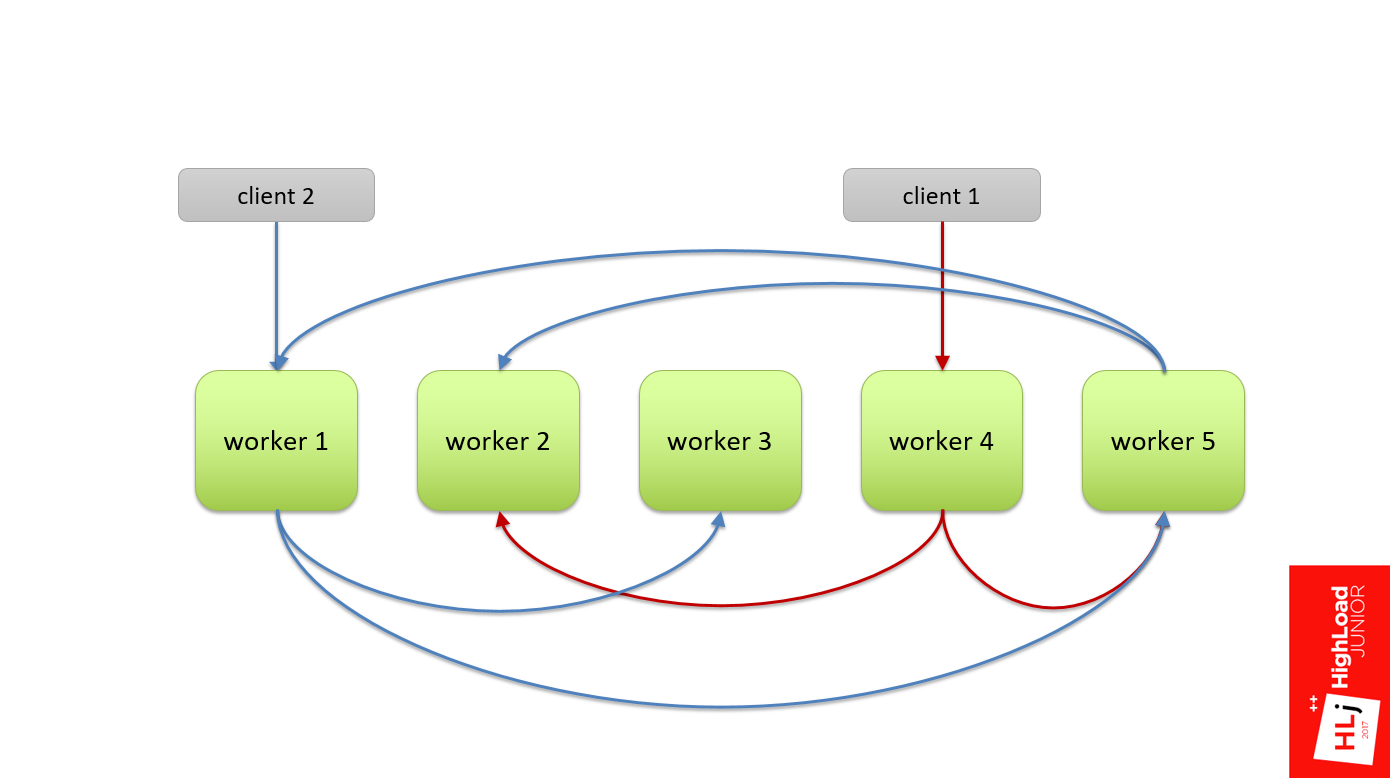

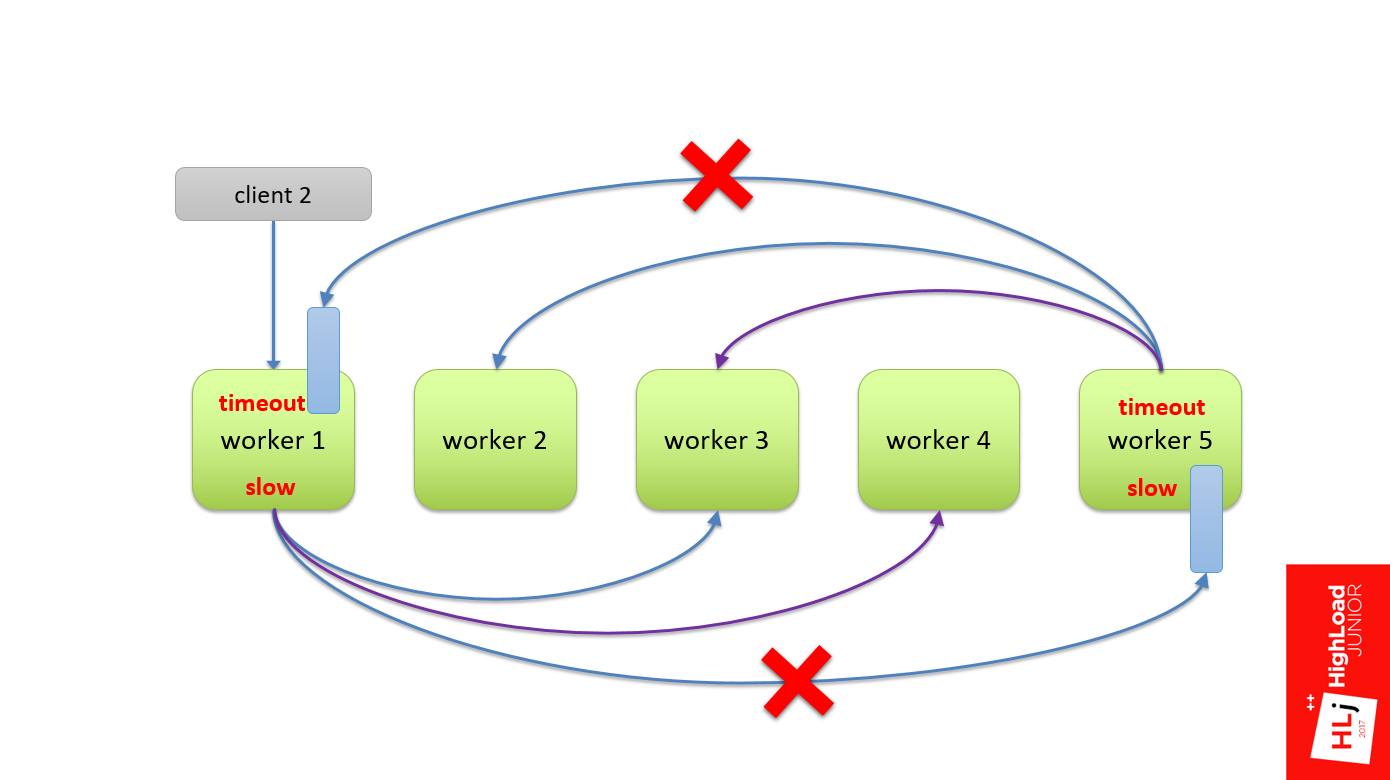

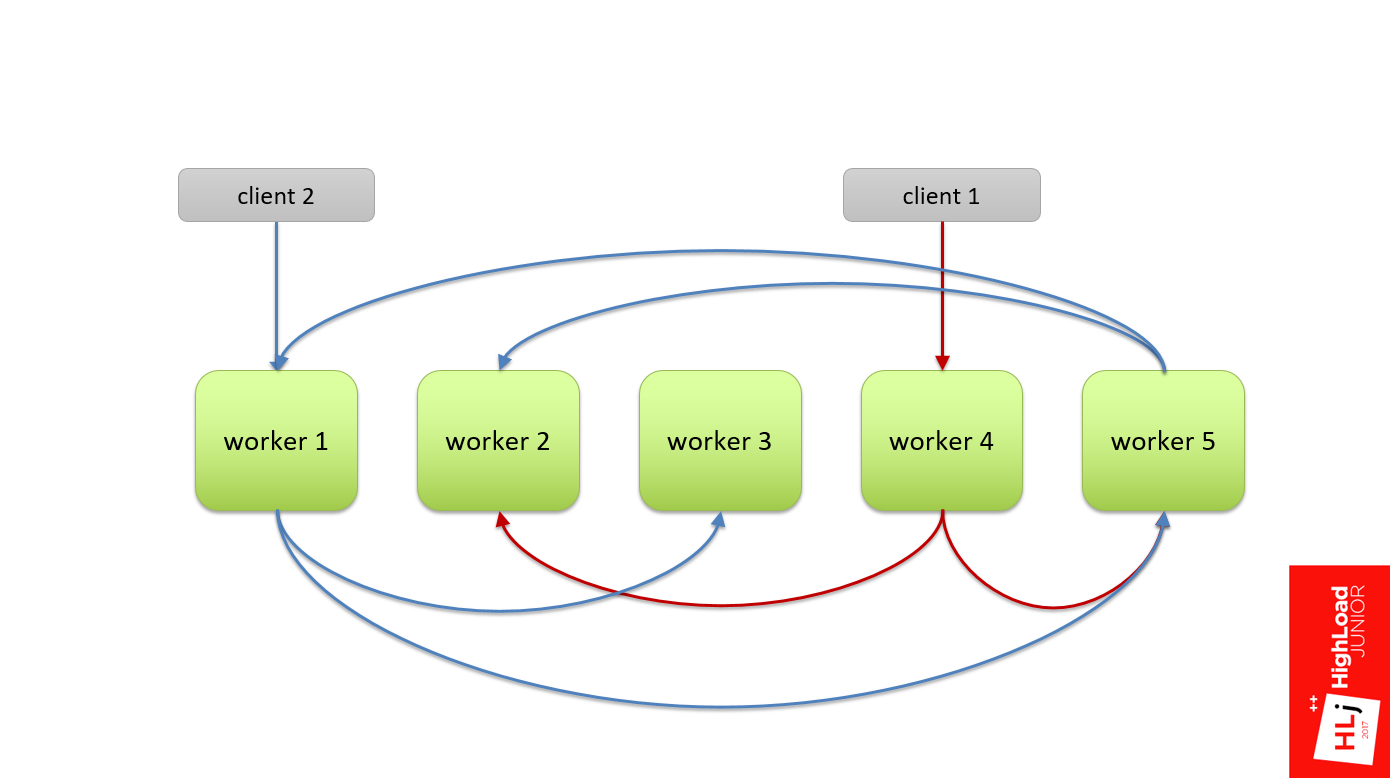

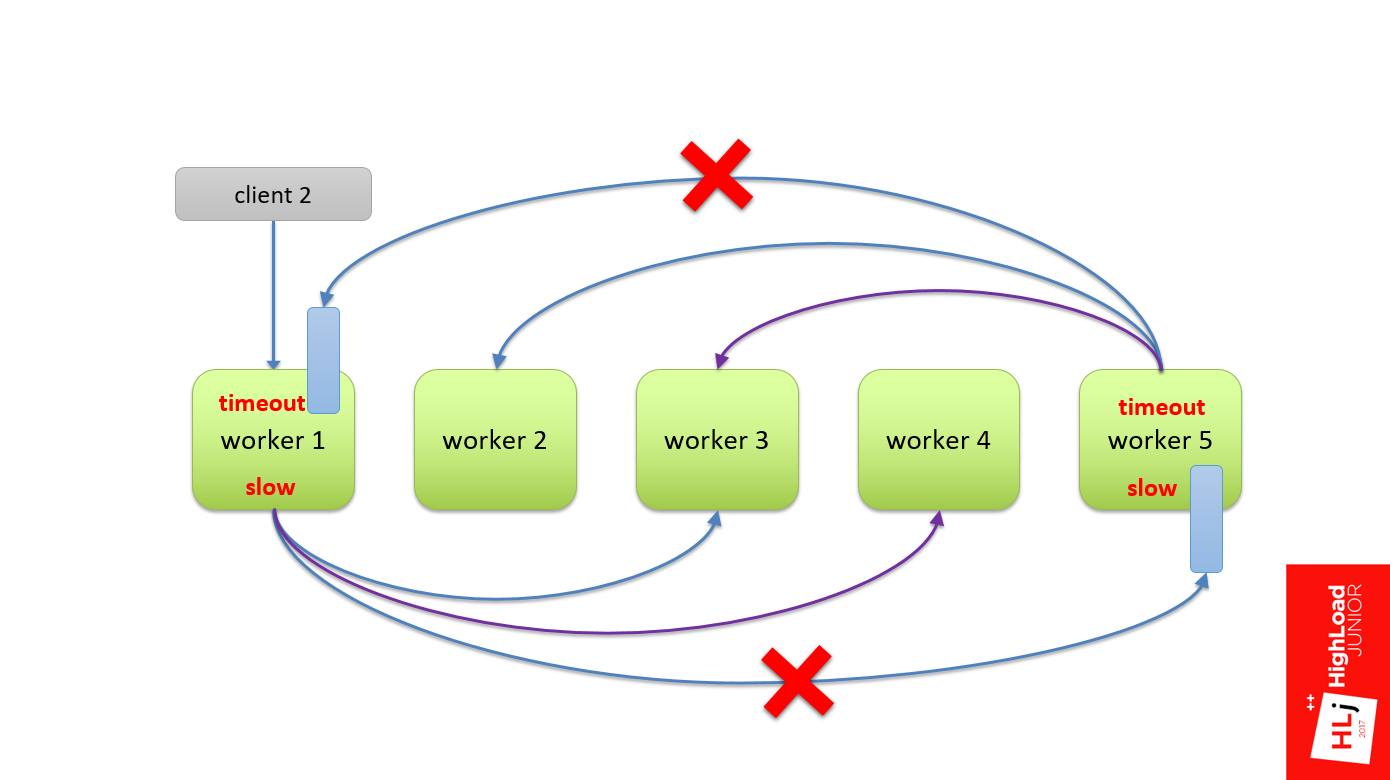

In practice we had no hierarchy: all workers were equal. When a client arrived, it picked a worker at random (worker 4 in the figure). For that request, this worker became the master: it split the request into subqueries and, again at random, picked helpers for itself, for example workers 2 and 5.

The second request is handled the same way: worker 1 is picked at random, splits the request, and sends the parts to workers 3 and 5.

There was one more twist, though: the query tree was multi-level, so worker 5 could in turn decide to split its request into several subqueries. It also picked its helpers from the same cluster.

Since nodes are picked at random, worker 5 could happen to pick nodes 1 and 2 as its helpers. The figure shows the cyclic dependency that forms between workers 1 and 5: worker 1 needs resources from worker 5 to complete its request, and worker 5 needs resources from worker 1.

This must not be allowed to happen. It is a recipe for disaster, and disaster is what we got.
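To make the cyclic dependency concrete, here is a minimal sketch that treats "worker A waits on worker B" as an edge in a graph and looks for a cycle with a depth-first search. The function name `find_cycle` and the dictionary layout are assumptions made for illustration; the edges are the ones from the example above, not data from the real system.

```python
def find_cycle(waits_on):
    """Return one cycle in the 'waits on' graph as a list of nodes, or None."""
    WHITE, GREY, BLACK = 0, 1, 2   # unvisited, on the current path, finished
    color = {}
    path = []

    def visit(node):
        color[node] = GREY
        path.append(node)
        for nxt in waits_on.get(node, ()):
            state = color.get(nxt, WHITE)
            if state == GREY:
                # nxt is already on the current path: we closed a loop.
                return path[path.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in list(waits_on):
        if color.get(node, WHITE) == WHITE:
            found = visit(node)
            if found:
                return found
    return None


# Edges from the example: worker 4 fans out to 2 and 5, worker 1 to 3 and 5,
# and worker 5 happens to pick 1 and 2 as its own helpers.
waits_on = {4: [2, 5], 1: [3, 5], 5: [1, 2]}
cycle = find_cycle(waits_on)
```

With these edges the search reports the loop between workers 5 and 1. If worker 5 does not fan out at all, no cycle is found.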

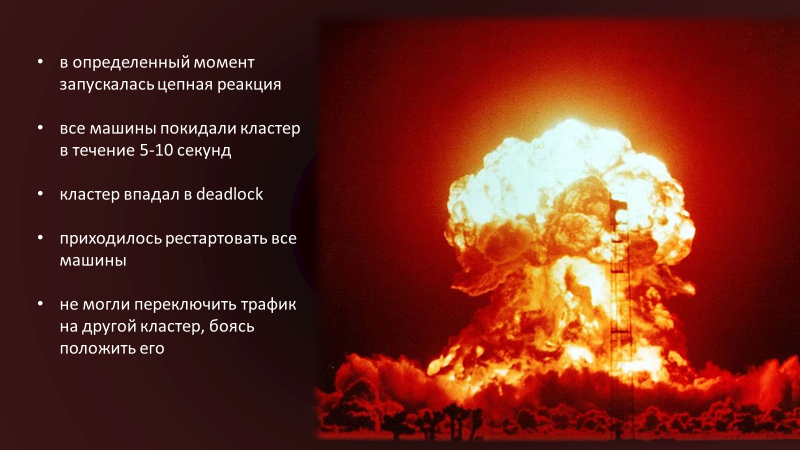

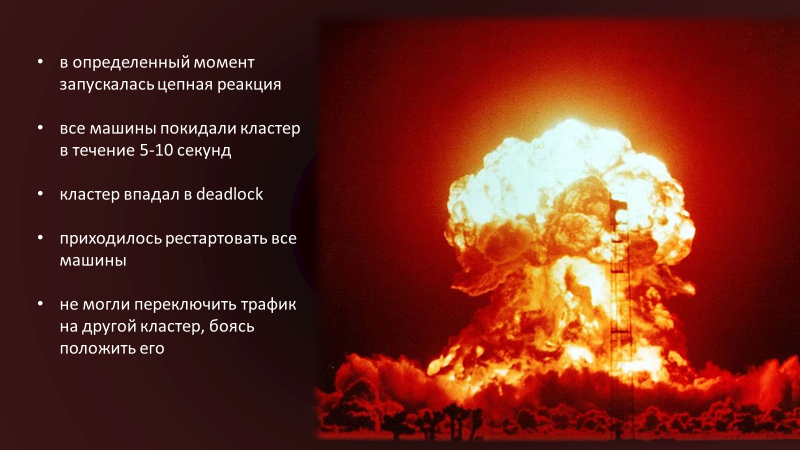

At some point a chain reaction started in the cluster, and within 5-10 s all the machines dropped out of it. The cluster was deadlocked. Even when we removed all incoming traffic, it stayed blocked.

To get it out of this state we had to restart every machine in the cluster. That was the only way. We did it live, because we did not know the cause of the problem. We were afraid to switch traffic to another cluster in another data center, because we could have started the same chain reaction there. The choice was between losing 50% and losing 100% of the traffic, and we chose the former.

Demo

The easiest way to explain what happened is a demonstration. I should say right away that this demonstration does not reproduce the original system 100%, because that system no longer exists. We abandoned it, partly because of its architectural problems. My example highlights the key points, but it does not fully emulate the situation.

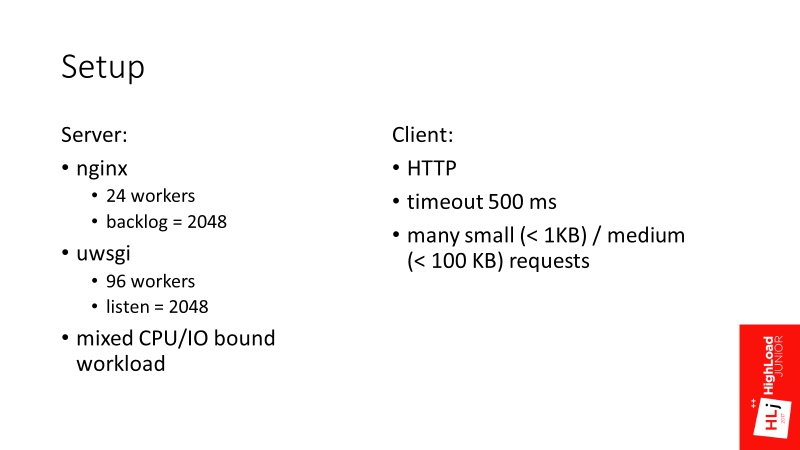

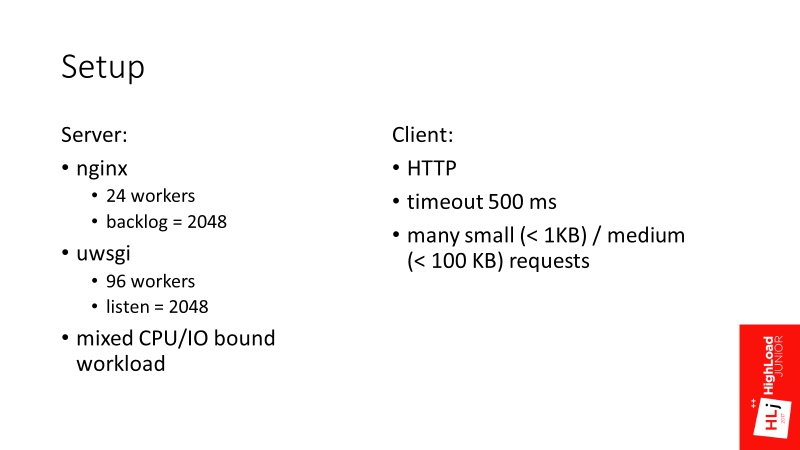

For now, take two parameters as given: backlog = 2048 and listen = 2048. You will see later why they matter.

Scenario

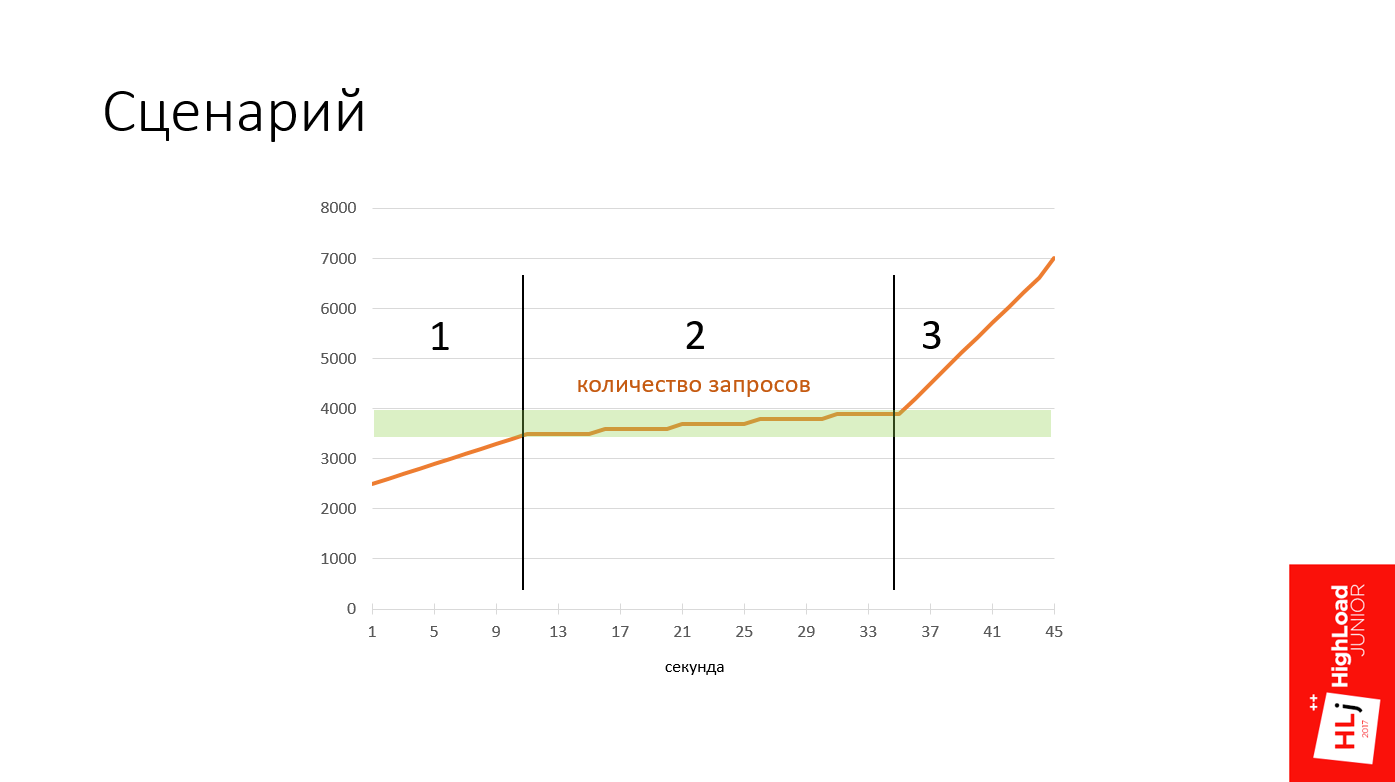

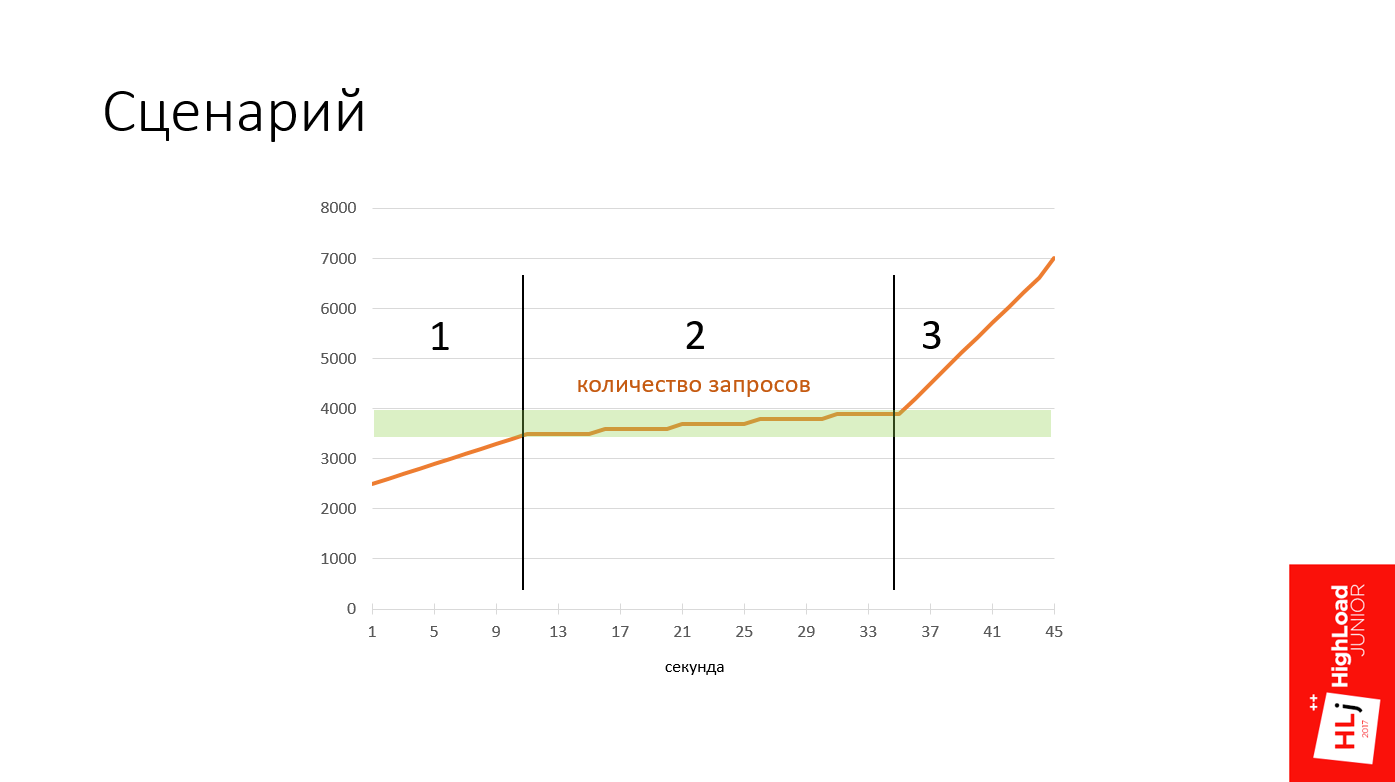

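All my demos follow the same scenario. The graph shows the plan of action.

There are 3 phases here:

- From 0 to 3500 requests per second.

- From 3500 to 4500 requests per second. The zone highlighted in green in the second phase is where the server reaches its saturation point. It is interesting to see how it reacts at that moment.

- Server behavior past the saturation point, when the number of requests per second grows to 7000.

I will demonstrate all this with a self-written tool that can be found on my GitHub. This is how it looks.

Explanation:

- Histogram of request results for the last 10 s:

  - an asterisk (*) means that a certain number of requests completed successfully;

  - E means requests that completed with an error.

- On the left are time intervals from 0 to ~1 s on a logarithmic scale.

- The current and the required RPS are highlighted in yellow at the top.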

Go!

We start at 2500 requests per second and the number grows: 2800, 2900. Response times are distributed roughly normally, somewhere around 5 ms.

As the server approaches the congestion zone, requests become slower. They drift toward higher latencies, and then at a certain moment the quality of service degrades sharply. Every request ends in an error and the system collapses.

In the resulting state 100% of the requests fail, and they clearly fall into 2 categories: slow failures, which take ~0.5 s, and very fast ones (~1 ms).

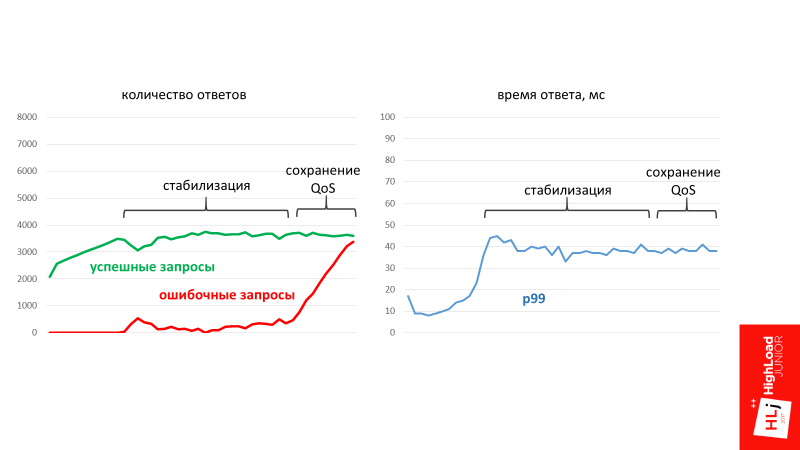

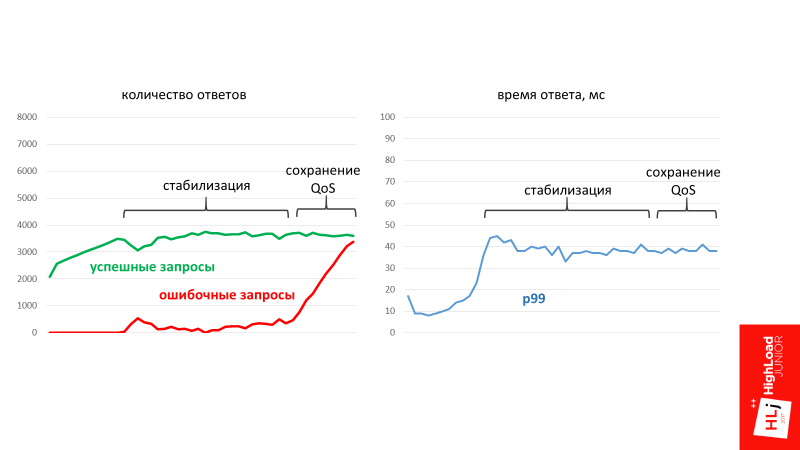

The request graphs look something like the image below. At a certain moment a qualitative change occurs: all successful requests are replaced by failed ones, and the 99th percentile of the response time degrades significantly.

Step by step

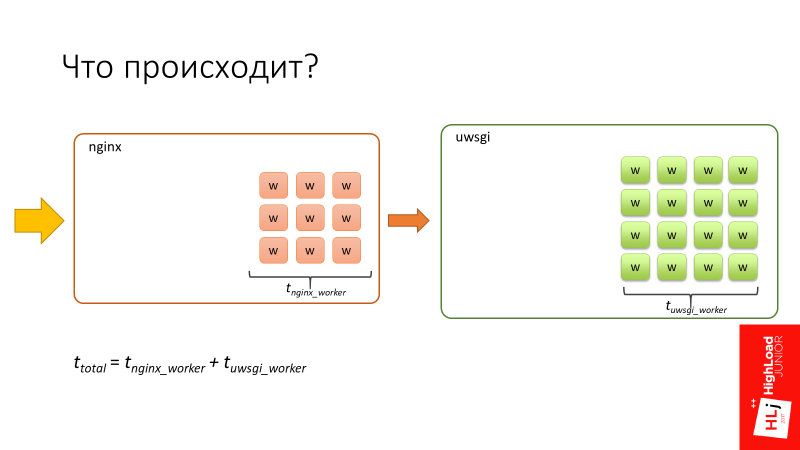

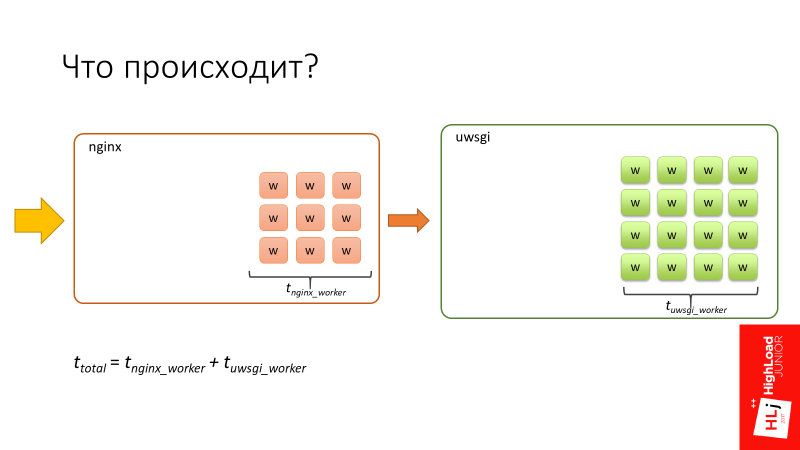

We begin with how the system processes requests in its normal state, when it is not under load. Once again, the explanation is simplified.

Let's look carefully at the degradation zone, where requests drift toward slower response times. The pause was set at an interesting point: the number of in-flight requests (requests being processed right now) is 94. Let me remind you that we have 96 uWSGI workers.

It is at this moment that the quality of service degrades significantly. All requests abruptly become very slow, and eventually everything fails.

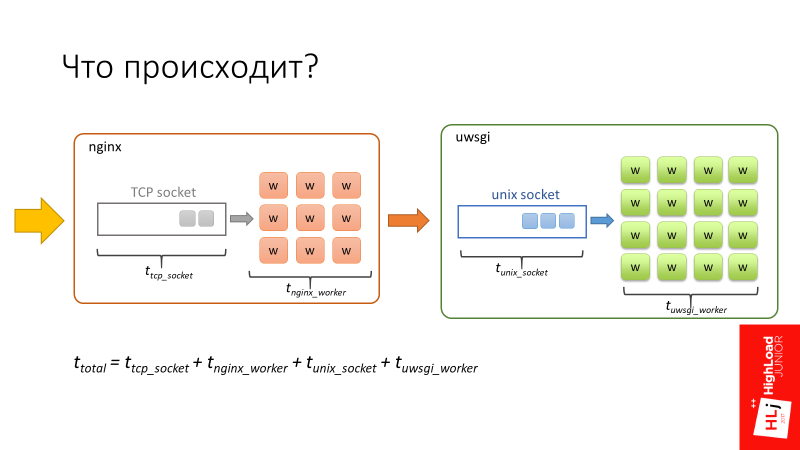

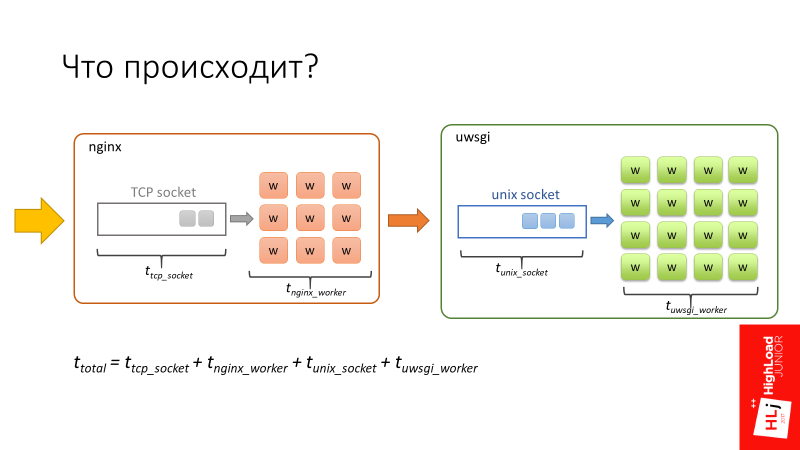

We return to the diagram.

The total request time is made up of these 4 components.

There is one peculiarity. nginx is great, fast software. In addition, nginx workers are asynchronous, so nginx drains the queue in the TCP socket very quickly.

uWSGI workers, on the other hand, are synchronous. In essence they are Perl processes, and when the number of requests entering the system starts to exceed the number of available workers, a queue begins to form in the Unix socket.

In the setup I drew attention to 2 parameters: backlog = 2048 and listen = 2048. They determine the length of this queue, which in this case can reach 2048.

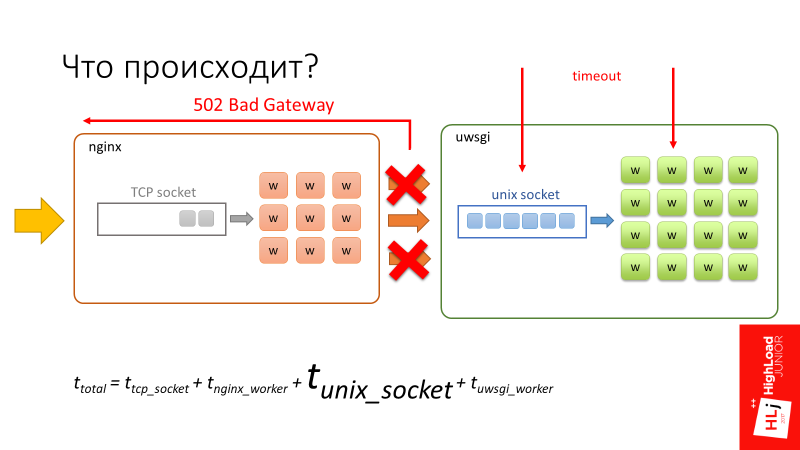

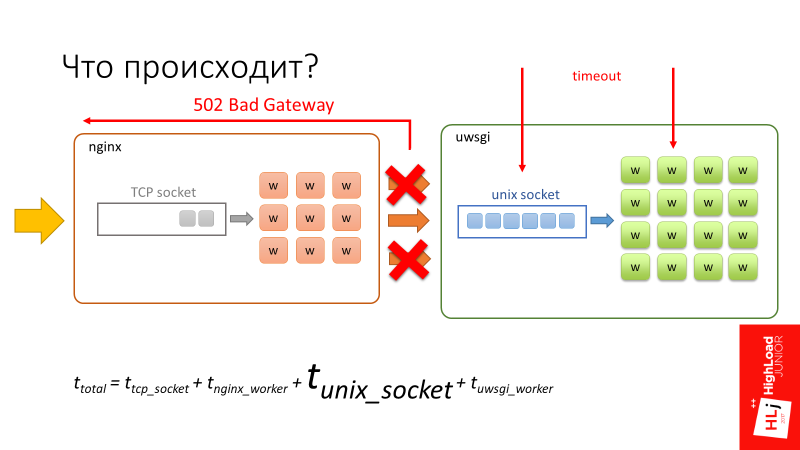

A request starts spending considerable time in the Unix socket, simply sitting in the queue and waiting for a uWSGI worker to become free and start processing it. At some point the 500 ms timeout fires. The client drops the connection, but only the connection to nginx.

From uWSGI's point of view nothing has happened. For it the connection is fully established, and since the requests are small, they all sit in the buffer. When a worker frees up and picks up the request, from its point of view this is a perfectly valid request, and it goes on to process it.

The figure does not show it, but if you look at the server at this moment, it is 100% loaded. It keeps processing these dangling connections and dangling requests.

So the client does its work and sends requests, but gets 100% errors. The server, for its part, receives requests and honestly processes them, but when it tries to send the data back, nginx tells it: “Nobody needs this response anymore!”

In other words, the client and the server are trying to talk, but they cannot hear each other.

The next stage

All requests have degraded into errors, and the number of requests per second keeps growing. At this point the nginx worker fails to connect to the uWSGI socket because the queue is full, and the request ends with a 502 (Bad Gateway) error.

Since the request time is now limited only by the processing in nginx, the response is very, very fast. In essence, nginx only has to parse the HTTP request and attempt a connection to the Unix socket, and that is it.

Now I want to return to the original problem and describe in more detail what happened there.

For some reason some workers became slow. Let's not dwell on why and take it as given. Because of this, free uWSGI workers start to run out and a queue forms in front of them.

When a client request arrives, worker 1 sends a request to worker 5, and this request has to wait its turn in the queue. Once it has waited and worker 5 has started processing it, worker 5 in turn splits its request into subqueries. A subquery arrives at worker 1, where it also has to wait in the queue.

Now a timeout fires on worker 1's side. The request is dropped, but worker 5 knows nothing about it and carries on processing the original request. Meanwhile worker 1 retries the same request.

Worker 5 carries on processing, and a timeout fires there too, because its subquery was waiting in worker 1's queue. It terminates its request and also retries.

I think it is clear that if this flywheel keeps spinning, the whole system ends up clogged with requests that nobody needs. In effect, we got a system with positive feedback. This is what brought our system down.

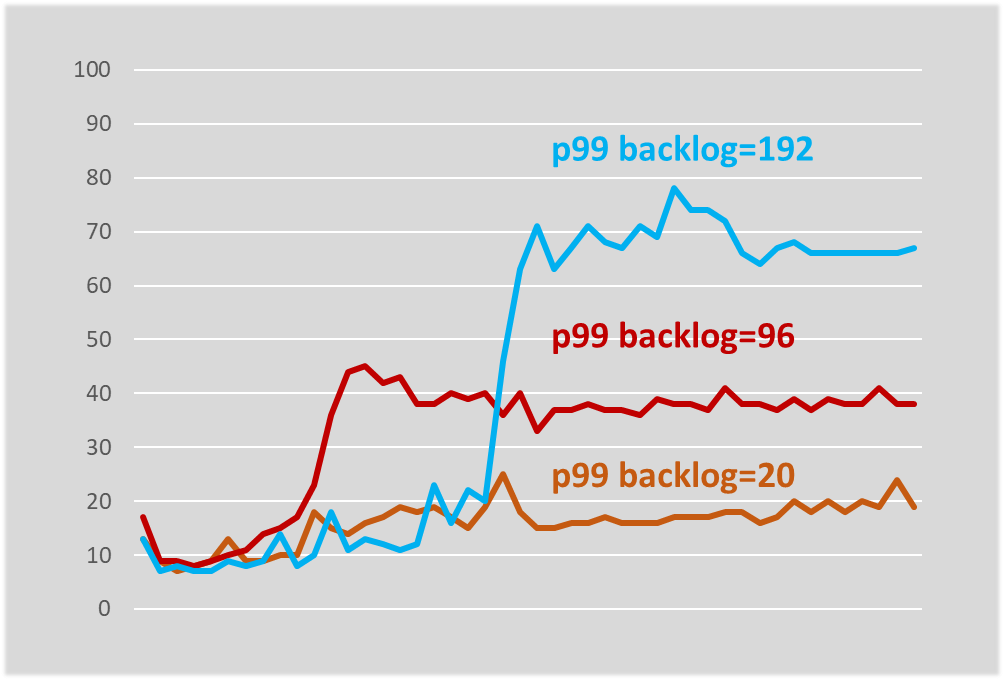

When we analyzed this case (which, incidentally, took a long time, because it was completely unclear what was happening), we thought: since requests spend so much time in the Unix socket queue, and ours is very long at 2048 elements, let's make it shorter, so that there is only 1 queue element per worker.

Let's now see how exactly the same system behaves with a single parameter changed: the queue length.

The scenario is the same as last time: we start at 2500 and raise the request rate to 2900, 3000, 3100, 3200. We enter the zone where the system is no longer stable. Requests gradually become slower, but they do not degrade as drastically. The system stabilizes at around 45 ms. It keeps processing requests successfully, although some of them end in errors.

On the chart it looks like this.

At a certain point the number of requests that the system can process successfully stabilizes. At the same moment failed requests start to appear. These are the requests that the system is unable to process at that time.

It turns out that thanks to the short queue, the system only accepts a request that will most likely complete successfully. Statistically, this volume of requests is constant. Everything else the system simply rejects with a 502 error.

In the third phase, when the number of requests rises sharply, the system still remains operational. Moreover, it maintains its quality of service: the p99 response time does not grow, it has stabilized.
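The effect of the queue length can be reproduced with a toy discrete-time model. This is only a sketch under invented assumptions: 4 synchronous workers, a 10 ms service time, a 50 ms client timeout and one arrival per millisecond (2.5 times the capacity). None of these numbers come from the demo above, and `simulate` is a hypothetical name. As in the story, the server keeps processing a queued request even after the client has given up on it.

```python
import heapq
from collections import deque


def simulate(queue_limit, workers=4, service_ms=10, timeout_ms=50,
             arrivals_per_ms=1, duration_ms=2000):
    """Count outcomes for a FIFO socket queue in front of synchronous workers."""
    free_at = [0] * workers   # min-heap: time at which each worker becomes idle
    queue = deque()           # arrival times of requests waiting in the queue
    stats = {"ok": 0, "slow_error": 0, "fast_error": 0}

    for now in range(duration_ms):
        # New requests either join the queue or are rejected at once (the 502).
        for _ in range(arrivals_per_ms):
            if len(queue) >= queue_limit:
                stats["fast_error"] += 1
            else:
                queue.append(now)
        # Idle workers take the oldest request, even if its client timed out.
        while queue and free_at[0] <= now:
            arrived = queue.popleft()
            finished = now + service_ms
            heapq.heapreplace(free_at, finished)
            if finished - arrived <= timeout_ms:
                stats["ok"] += 1
            else:
                stats["slow_error"] += 1   # work done, but nobody is listening
    return stats


long_queue = simulate(queue_limit=2048)   # the original setting
short_queue = simulate(queue_limit=4)     # one queue slot per worker
```

In this model the long queue never rejects anything, yet almost every answer arrives after the client has timed out, so the workers are busy doing useless work. The short queue rejects the excess immediately and every accepted request completes in time. Requests still waiting in the queue when the run ends are not counted.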

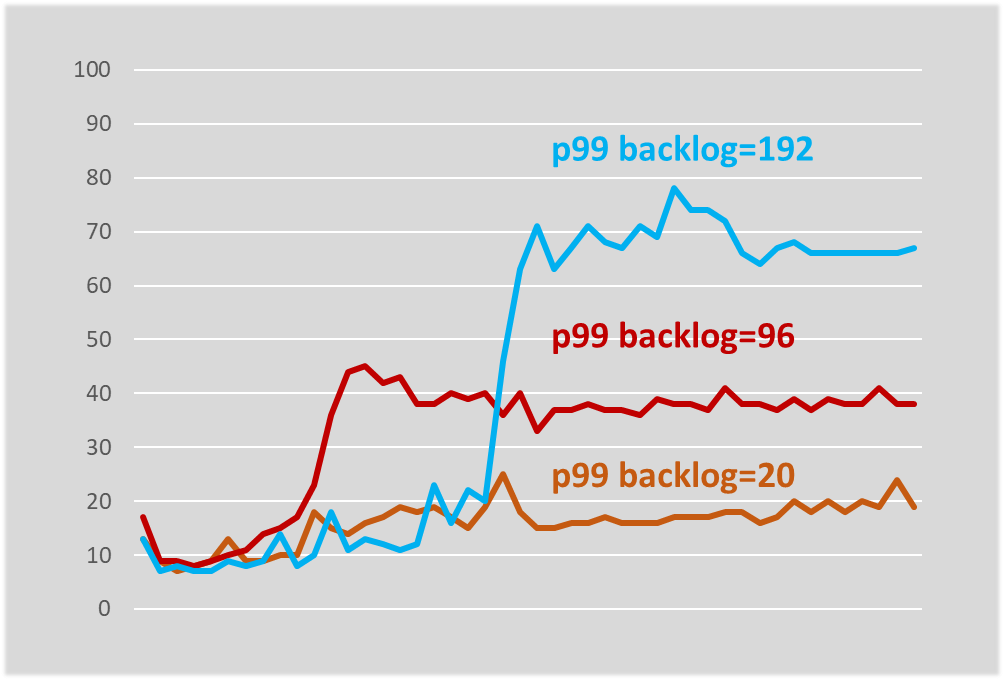

This is what we started with in production, and I will say more about it. I experimented with the queue length, and it turned out that, at least in our system, the queue length determines the worst-case response time.

The system stabilized at a slightly higher response time, but it was still stable. If you limit the queue to as few as 20 elements, the response time hardly grows at all and changes by only a few milliseconds.

In the short term:

Reducing the queue length first of all broke the cycle, conditionally speaking. Conditionally, because the cyclic dependency is still there, but its properties have changed. A given server that lacks the resources to process an incoming request simply rejects it, and does so very quickly, with minimal overhead. If the server has enough resources, the request is accepted. As a result, when a particular server is overloaded, the cycle breaks.

There is one more nuance. In the first example there were 2 classes of errors: very fast (~1 ms) and very slow (~500 ms). By reducing the queue length we turned all the slow errors into cheap, very fast ones. This gave us cheap retries. We no longer had the situation where a retry effectively loads some other component a second time.

In the long run, of course, we had to move to a two-tier architecture that matches the logical architecture. This is what we did in the next iteration, when we developed a replacement for the search service.

Now let's talk about what components you need and what to keep in mind if you want to build a predictable RPC system, that is, a framework that behaves predictably under boundary conditions. I will describe what we have and what approaches we use, without tying it to a specific service.

Returning to the first diagram (the interaction of the client and the server with each other), we have already touched on the first 2 bricks that need to be configured correctly:

We have already dealt with the slow errors by turning them into fast ones. What should we do with the fast errors?

We need to consider 2 different cases.

First, what to do when the system is in the saturation phase?

Here, in fact, nothing can be done! When the system is saturated, it has run out of resources and cannot process anything new. For example, I can carry 100 kg, but give me 101 kg and I will fall.

This is a very good property, because here we are in fact talking about 2 components that are, in my opinion, very important for any framework:

Secondly, what if the queue overflows only briefly?

This can happen when, for example, there is a delay in the network, requests accumulate in it, and then a batch of them arrives at the server all at once, briefly overflowing the queue.

In this case a very simple and reliable mechanism is to retry. Another brick goes onto our diagram.

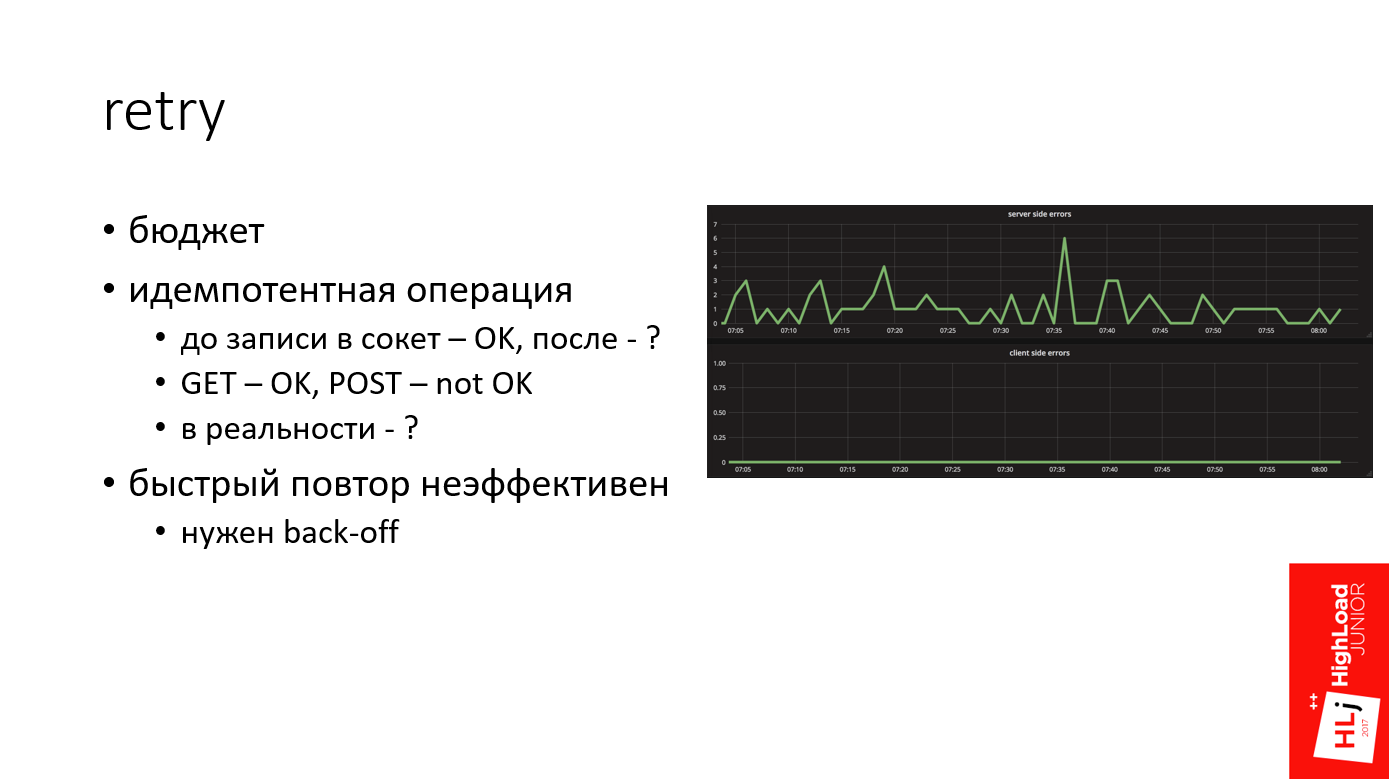

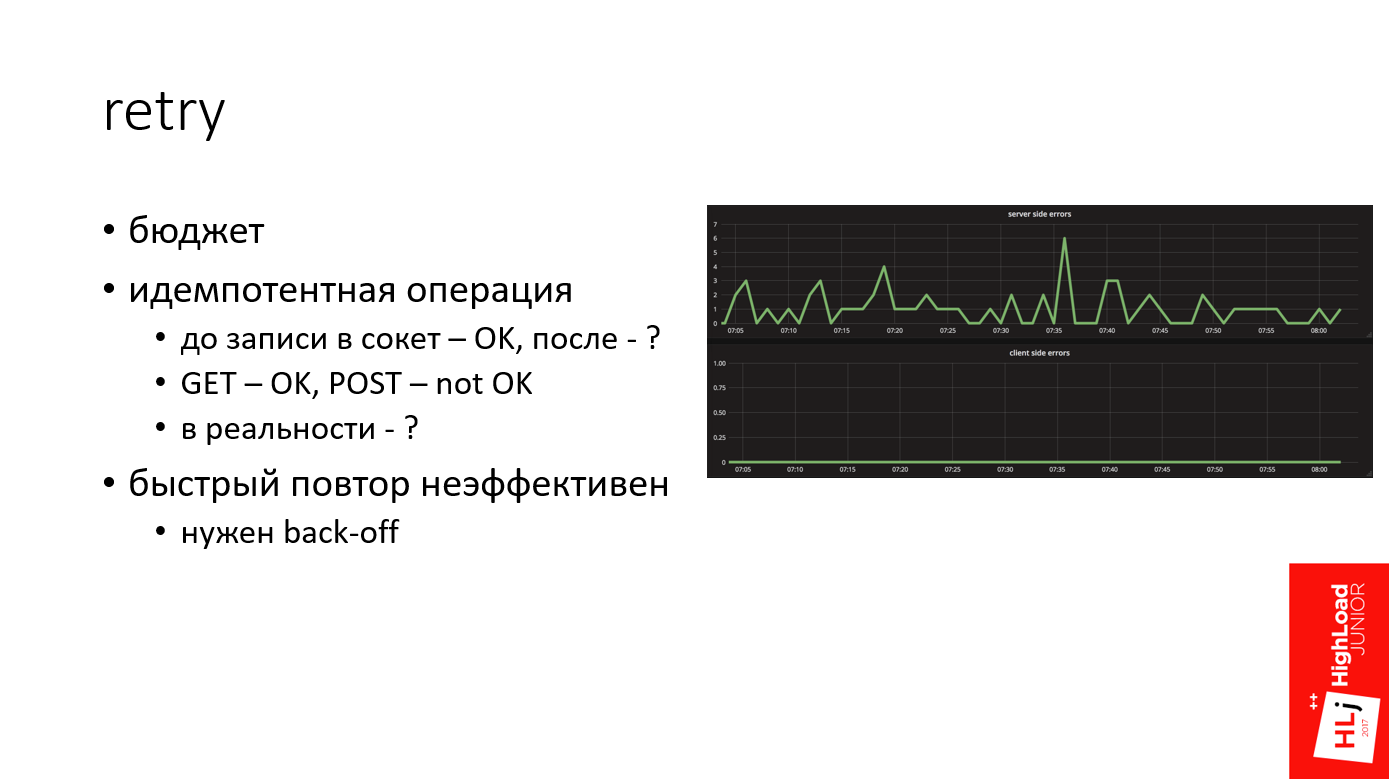

In my experience, retry is a powerful mechanism that in many cases lets you get away unscathed. In the graphs above, for example, the top one shows the number of errors on the server side and the bottom one the number of errors on the client side. You can see that the server keeps generating errors for some reason, yet nothing is visible on the client side.

It happens quite often that problems on the server side, whatever their cause, never reach the client thanks to a well-designed retry.

When we talk about Retry, it's important to keep in mind:

When we send an HTTP request, everything that happens before the data is sent to the server, that is, before we actually write to the socket, is safe to retry, because nothing has been sent to the server yet. For example, if DNS resolution failed or the server was simply unreachable, retrying is fine.

Everything that happens afterwards is questionable. According to the HTTP standard, read operations performed with the GET method are idempotent by default, which cannot be said of POST.

That is the theory; in practice it depends on the system. For example, there are systems that write something somewhere when they handle a GET request. So you have to look at the specific system.
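The rule of thumb above can be written down as a small decision function. This is a sketch, not the logic of the Booking.com client: `is_safe_to_retry` and its parameters are hypothetical names, and the method set follows the HTTP specification's list of idempotent methods. As noted above, a service that writes on GET would need to be excluded by hand.

```python
# Methods that the HTTP specification defines as idempotent.
IDEMPOTENT_METHODS = frozenset({"GET", "HEAD", "OPTIONS", "TRACE", "PUT", "DELETE"})


def is_safe_to_retry(method, request_written):
    """Decide whether a failed request may be repeated automatically.

    request_written is False when the failure happened before any bytes
    were written to the socket (DNS failure, connection refused, ...).
    """
    if not request_written:
        # Nothing reached the server, so repeating cannot duplicate work.
        return True
    # After the write we only know the method's declared semantics.
    return method.upper() in IDEMPOTENT_METHODS
```

For example, `is_safe_to_retry("POST", request_written=False)` is true because the server never saw the request, while the same POST after a write is not retried.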

Imagine a short-lived network problem: for example, a route is being rebuilt and some host becomes unavailable for a short time. If you retry once, then immediately a second and a third time, you burn your whole retry budget in a few milliseconds. That is not effective: you gave the system no chance to recover.

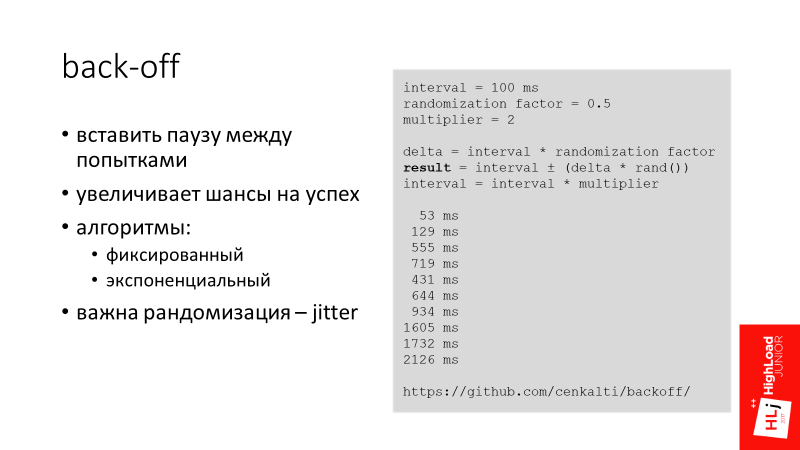

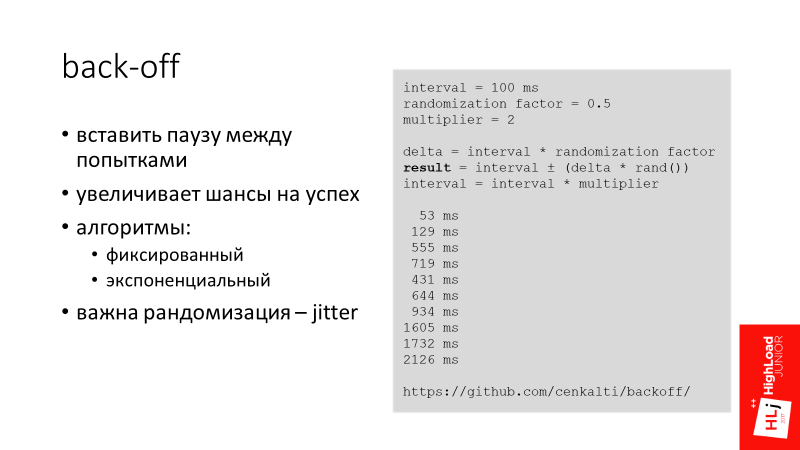

We put another brick on the diagram, in the service consumer block: back-off.

The main idea of back-off is to insert a pause between attempts in order to increase the chances of success. We give the system a chance and wait on our side, hoping that it will recover by itself.

I am aware of several back-off algorithms:

Another important aspect of back-off is jitter, the randomization of the pause intervals.

Imagine that 100 requests arrive at the server at the same moment and briefly overload it. All of them get an error. Each client now pauses for 100 ms, and the same requests hit the server together again. The situation simply repeats itself: they overload the server in exactly the same way.

If each client adds a random delta to its pause, the retried requests are spread out in time and no longer arrive at the server all at once. The server is much more likely to cope with them.

At Booking.com we use an exponential randomized interval. For example, the first pause is 53 ms, then 129 ms, then 555 ms, and so on.
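One common way to get an exponential randomized interval is sketched below. The exact formula used at Booking.com is not given in the talk, so everything here is an assumption: the name `backoff_delays`, the 50 ms base, the 2000 ms cap, and the choice to draw each pause uniformly from the upper half of an exponentially growing window.

```python
import random


def backoff_delays(attempts, base_ms=50, cap_ms=2000, rng=None):
    """Return one randomized pause (in ms) per retry attempt."""
    if rng is None:
        rng = random.Random()
    delays = []
    for attempt in range(attempts):
        # The window doubles with every attempt, up to a fixed cap.
        ceiling = min(cap_ms, base_ms * 2 ** attempt)
        # Jitter: pick a point in the upper half of the window so that
        # clients that failed together do not retry together.
        delays.append(rng.uniform(ceiling / 2, ceiling))
    return delays


# A seeded generator makes the sequence reproducible in tests.
delays = backoff_delays(4, rng=random.Random(42))
```

Each client would sleep for the next value in its own sequence before retrying. Because the values differ from client to client, a burst of 100 failed requests comes back spread over the window instead of as a second burst.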

The next brick is timeouts on the server side, or more precisely, the consistency of timeouts between the client and the server.

I have already touched on this topic. It is important that when the client gives up, the server does not go on processing the request.

Modern frameworks have a mechanism for this called request cancellation. Our framework, unfortunately, has no such feature, so we had to work around the problem.

When our client sends a request to the server, it sets an HTTP header, X-Booking-Timeout-Ms, which says what its timeout is. The server takes this value and sets its local timeout to the client's timeout plus some delta that allows for the time the request needs to reach the server:

server timeout = client timeout + delta

So when the client gives up after 100 ms, the server gives up after, say, 110 ms. In other words, the request is effectively canceled.
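On the server side, the formula above could look roughly like this. Only the header name X-Booking-Timeout-Ms comes from the talk. The function name, the 10 ms delta, and the fallback used when the header is missing or malformed are assumptions for the sake of the sketch.

```python
TIMEOUT_HEADER = "X-Booking-Timeout-Ms"


def server_timeout_ms(headers, delta_ms=10, default_ms=1000):
    """Derive the server-side deadline from the client's declared timeout."""
    raw = headers.get(TIMEOUT_HEADER)
    try:
        client_timeout_ms = int(raw)
    except (TypeError, ValueError):
        # Header absent or not a number: fall back to a local default.
        return default_ms
    if client_timeout_ms <= 0:
        return default_ms
    # server timeout = client timeout + delta
    return client_timeout_ms + delta_ms
```

A request handler would then stop working on the request once this many milliseconds have passed, for example by arming an alarm or by checking a deadline between processing steps.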

There are already 5 elements on the diagram that are not needed while the system is stable. They are needed when everything goes wrong, much like backups. These components are not needed in everyday life, but when they are needed, they are really needed.

There are people who do not make backups yet. Those who have had a bad experience, though, periodically test restoring from their backups. For essentially the same purpose, to test our entire stack, we use Chaos Monkey.

Originally, the idea of Chaos Monkey was to switch off a data center and watch how the system reacts. We have not reached that scale yet; ours is more modest. But there are some interesting things I want to talk about.

We have 3 types of Chaos Monkey.

1. Checking the HTTP client

We expect particular behavior from the client when, for example, it receives a 502 response from nginx. We know this is a cheap response, so the client automatically retries the request. To test this logic, a certain percentage of requests is artificially answered with a 502. If I remember correctly, it is 1% of artificially created errors in production.

That is, Chaos Monkey really does spoil 1% of internal requests to make sure that when real errors occur, the system handles them correctly.
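A fault injector of this kind can be as small as a wrapper around the request handler. The sketch below is not the Booking.com implementation: `inject_502` is a hypothetical name, handlers are assumed to return a `(status, body)` tuple, and the 1% default simply mirrors the figure mentioned above.

```python
import random


def inject_502(handler, failure_rate=0.01, rng=None):
    """Wrap a handler so that a fraction of calls fail with a fake 502."""
    if rng is None:
        rng = random.Random()

    def wrapped(request):
        # rng.random() is in [0, 1), so a rate of 0 never fires
        # and a rate of 1 always fires.
        if rng.random() < failure_rate:
            return 502, "injected by chaos monkey"
        return handler(request)

    return wrapped


def echo_handler(request):
    """Stand-in for a real internal service."""
    return 200, request


always_failing = inject_502(echo_handler, failure_rate=1.0)
never_failing = inject_502(echo_handler, failure_rate=0.0)
```

A client with a working retry should hide these injected 502s completely, which is exactly what the experiment is meant to verify.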

2. Soft degradation of applications

The second type of Chaos Monkey is more interesting. What does soft degradation of an application mean?

Imagine a search page. It has the main search functionality, which is our core business. Attached to it are minor components that also make remote calls.

If such a minor component fails, we do not want the whole page to fail. We expect the developer of a minor component to design it so that an error from a minor request does not turn into a 500 response for our customer. To check this, our servers periodically generate 400 responses, which mean that the request was malformed. The HTTP client therefore passes the error all the way up the stack to the application, where the developer can see it.

This Chaos Monkey mechanism runs only internally. Clearly, if we ran it on real business traffic, we could reduce conversion, which is not good.

Since we really are talking about the failure of part of the functionality, we always keep a list of critical requests, such as that same main search query, that are excluded from this logic.

3. Readiness for delays in replication.

The last type of Chaos Monkey stands a little apart. We have a data retrieval system that a client asks: “I have 1000 records, give me all of them!” The system stores its data in MySQL, and it may happen that some of these records have only just been written on the master and have not reached the slave yet. So from time to time the system answers that it has 900 records and does not have the other 100 yet, because they have not reached the slave and will appear later.

We test this functionality with the Chaos Monkey mechanism. The system generates a correct 200 response but emulates a logical error in which some of the records are simply missing. This also runs in production.
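Emulating replication lag amounts to returning a valid response with some records withheld. The sketch below assumes a response shaped as a dictionary with `status`, `requested` and `records` fields; that shape, the function name and the 10% drop fraction are all invented for illustration.

```python
import random


def fetch_with_simulated_lag(record_ids, drop_fraction=0.1, rng=None):
    """Return a successful response that is missing part of the records."""
    if rng is None:
        rng = random.Random()
    dropped = int(len(record_ids) * drop_fraction)
    kept = rng.sample(record_ids, len(record_ids) - dropped)
    # Status is 200: at the HTTP level nothing is wrong, the gap is logical.
    return {"status": 200, "requested": len(record_ids), "records": kept}


response = fetch_with_simulated_lag(list(range(1000)), rng=random.Random(7))
missing = response["requested"] - len(response["records"])
```

A well-behaved client compares the number of records it asked for with the number it received and handles the difference, for example by asking again later for the missing ones.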

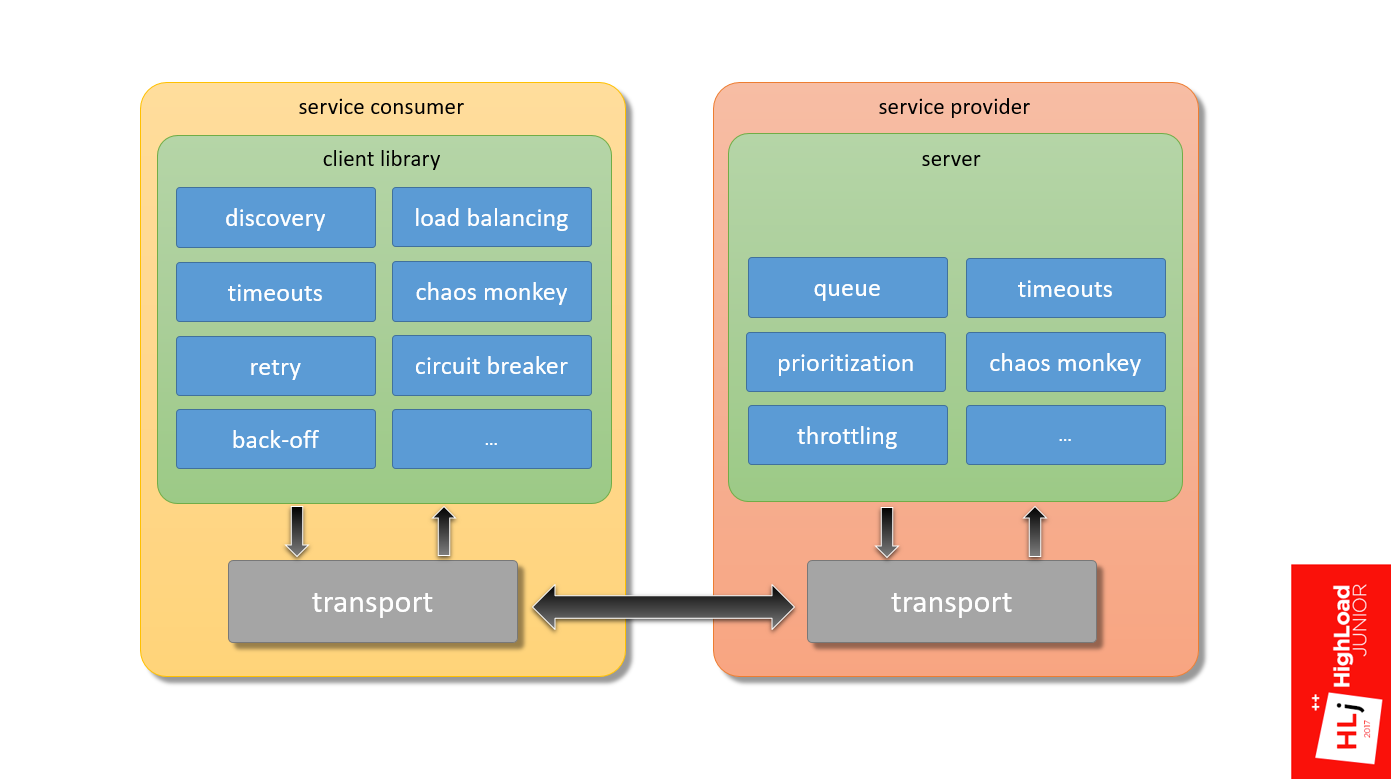

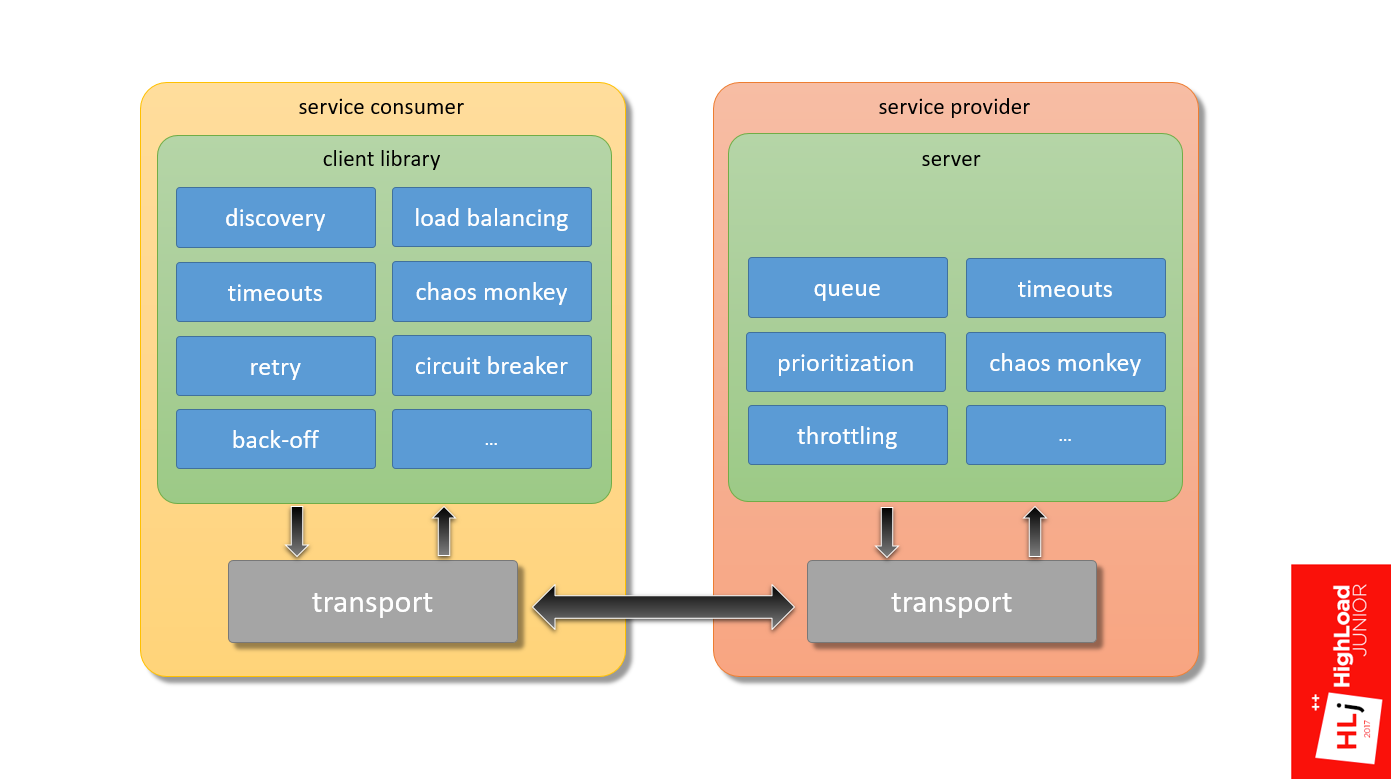

We return to the diagram.

In fact, there are many more components. Only from what I know:

And that is only the stack above the transport. Inside the transport there is a lot more that you need to configure correctly for the system to behave predictably.

Sending an HTTP request predictably is a difficult task! There are many components that need to be configured correctly. All systems are different, and there are no silver bullets.

In short, test and verify! In my opinion, the only way to make a system work is to put it under boundary conditions and see how it reacts.

At Booking we had to reinvent the wheel a little and write our own framework. Most likely you are not working with Perl, so you are a bit luckier.

Take a look at frameworks such as gRPC and Finagle. If you prefer proxies, look at Linkerd and Envoy. I must say right away that I have no production experience with these systems, so I cannot recommend anything specific.

Finally, experiment with queues. From our own experience we learned that the queue length can radically change the behavior of a system, which is what happened to us. So make a note to yourself: try playing around with it.

But please do not copy my example blindly: measure and check for yourself.

One important point: if you want to experiment, control over the client matters, because the behavior of your system changes and the client has to be adapted to it.

What I showed applies only when nginx talks to the application server through a Unix socket. A TCP socket behaves differently.

That's all for today, and below are links that can help sort out the details:

Booking.com is changing at the same time. This is not to say that this is a cardinal abrupt change, but a slow and confident transformation. The stack is changing, Java is gradually being introduced in those places where it is relevant. Including the term service-oriented architecture (SOA) is heard more and more often in internal discussions.

Further, the story of Ivan Kruglov ( @vian ) about these changes in terms of the interaction of internal components on Highload Junior ++ 2017 . Having fallen into the trap of cyclically dependent workers, we had to qualitatively figure out what was happening, and by what means, all this could be fixed.

Pros of moving to a service-oriented architecture

The first 3 advantages of a service-oriented architecture - weak connectivity, independent deployment, independent development - are understandable, I will not dwell on them in detail. We proceed immediately to the next.

Faster onboarding

In a company in a phase of intensive growth, a lot of attention is paid to the topic of quickly including a new employee in the work process. A service-oriented architecture can help here by focusing a new employee on a specific small area. It is easier for him to gain knowledge about a separate part of the entire system.

Faster development

The last paragraph summarizes the rest. From the transition to a service-oriented architecture, if we cannot significantly speed up development, then at least maintain the current pace due to less connected components.

Cons of switching to a service-oriented architecture

Reduced flexibility

By flexibility I mean flexibility in redistributing human resources. For example, in a monolithic architecture, in order to make any changes to the code and break anything, you need to have knowledge of how a large area works. With this approach, it turns out that transferring a person between projects becomes simple, because with a high degree of probability this person will already know a fairly large area in a new project. From a management point of view, the system is more flexible.

In a service-oriented architecture, the opposite is true. If you have a different technological stack in different teams, the transition between the teams may be equivalent to switching to another company, where everything can be completely different.

This is a managerial minus, the rest are technical minuses.

The complexity of making atomic changes (not just data)

In a distributed system, we lose the possibility of transactions and atomic changes in the code. One commit to several repositories cannot be extended.

Complex debugging A

distributed system is fundamentally more difficult to debug. Elementary, we have no debugger in which we could see where we will go next, where the data will fly in the next step. Even in the elementary task of analyzing logs, when there are 2 servers, each of which has its own logs, it is difficult to understand what is related to what.

It turns out that in a service-oriented architecture, the infrastructure as a whole is more complicated. Many supporting components appear, for example, a distributed logging system and a distributed tracing system are needed.

This article will focus on another drawback.

Remote Procedure Call (RPC)

It stems from the fact that if in a monolithic architecture, in order to perform some operation, it is enough to call the desired function, then in SOA you will have to make a remote call.

I want to say right away what I will and what I will not talk about. I will not talk about the interaction of Booking.com with the outside world. My talk is about the internal components of Booking.com and their interactions.

Moreover, if we take a hypothetical service consumer in which the application interacts with the server through the client library and transport to the service provider and vice versa, my focus is precisely the framework that ensures the interaction of these two components.

History of one problem

I want to tell one story. Here I will refer to my report “ Search.com Search Architecture ”, in which I talked about how Booking.com developed, and at one stage we decided to create our own unique Map-Reduce framework (highlighted in red in the figure below) .

This Map-Reduce framework was supposed to work as follows (diagram below). There should have been a search query at the entrance, which arrives at the master node, which divides this query into several subqueries and sends them to the workers. The worker does his work, forms the result, and sends it back to the master. Classic scheme: on Master - reduce function, on Worker - phase Map. So it should have worked in theory.

In practice, we did not have a hierarchy, that is, all the workers were equal. When a client arrives, he randomly selects a worker (in figure this is worker 4). This worker, as part of the request, became a master and divided this request into subqueries and also randomly selected for himself apprentices, for example, the 2nd and 5th workers.

The second request is the same: randomly select the 1st worker, he divides it and sends it to the 3rd and 5th.

But there was such a feature that this query tree was multi-level, and the 5th worker could, in turn, also decide to divide his request into several subqueries. He also chose some nodes from the same cluster as an apprentice.

Since the nodes are picked at random, the 5th worker could well pick the 1st and 2nd nodes as its sub-workers. The figure shows that a cyclic dependency formed between the 1st and 5th workers: the 1st worker needs resources from the 5th to complete its request, and the 5th needs resources from the 1st.

This must not happen. It is a recipe for disaster, and the disaster did happen to us.
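The cycle described above can be made explicit with a small sketch. It only models "which worker waits for which" as a directed graph and checks it for a cycle; the dictionary layout and the `has_cycle` helper are illustrative assumptions, not part of the original framework.

```python
def has_cycle(calls):
    """Return True if the 'who waits for whom' graph contains a cycle.

    calls maps a worker id to the list of workers it sends subqueries to.
    """
    WHITE, GRAY, BLACK = 0, 1, 2   # unvisited, on the current path, finished
    state = {}

    def visit(node):
        state[node] = GRAY
        for nxt in calls.get(node, ()):
            s = state.get(nxt, WHITE)
            if s == GRAY:          # we came back to a worker that is still waiting
                return True
            if s == WHITE and visit(nxt):
                return True
        state[node] = BLACK
        return False

    return any(state.get(n, WHITE) == WHITE and visit(n) for n in list(calls))


# The situation from the text: worker 1 fans out to 3 and 5, worker 5 to 1 and 2.
print(has_cycle({1: [3, 5], 5: [1, 2]}))  # True
# The first request alone (worker 4 fans out to 2 and 5) has no cycle.
print(has_cycle({4: [2, 5]}))             # False
```

With random selection inside one flat cluster, nothing prevents the first kind of graph from appearing.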

At a certain moment a chain reaction started in the cluster, and within 5-10 s all the machines dropped out of it. The cluster was deadlocked. Even when we removed all incoming traffic, it stayed blocked.

To get it out of this state, we had to restart every machine in the cluster. That was the only way. We did this live, because we did not know what was causing the problem. We were afraid to switch traffic to another cluster in another data center, because that could have started the same chain reaction there. The choice was between losing 50% and 100% of the traffic, and we chose the former.

Demo

Explaining what happened is easiest through a demonstration. I want to say right away that this demonstration does not 100% reflect what was in that system, because that system no longer exists. We abandoned it, partly because of its architectural problems. My example highlights the key points, but it does not fully emulate the situation.

For now, take two parameters as given: backlog = 2048 and listen = 2048. It will become clear later why they matter.

Scenario

All my demos follow the same scenario. The graph shows the plan.

There are 3 phases here:

- From 0 to 3500 requests per second;

- From 3500 to 4500 requests per second. In this second phase, the zone where the server reaches the saturation point is highlighted in green. It is interesting to see how it reacts at this moment.

- Server response after the saturation point, when the number of requests per second grows to 7000.

I will demonstrate all this with a tool I wrote myself, which can be found on my GitHub. This is what it looks like.

Explanation:

- Histogram of query results for the last 10 s:

- * means that a certain number of requests completed successfully;

- E - requests completed with an error.

- On the left are time intervals from 0 to ~1 s on a logarithmic scale.

- The current and required RPS are highlighted in yellow above.

Go!

We start at 2500 requests per second, and the number grows: 2800, 2900. You can see a normal distribution centered somewhere around 5 ms.

As the server approaches the congestion zone, requests become slower. They drift further and further into the slow buckets, and then at a certain moment the quality of service degrades sharply. All requests start failing. Everything went bad, the system collapsed.

In the resulting state, 100% of the requests failed, and they clearly fell into 2 categories: slow failures, which take ~0.5 s, and very fast ones (~1 ms).

The request graphs look something like the image below. At a certain moment a qualitative change occurred: all successful requests were replaced by failed ones, and the 99th percentile of the response time degraded significantly.

Step by step

We begin with how the system processes requests in the normal state when it is not under load. Once again, the explanation is simplified.

- Nginx at the input receives a request.

- There are 24 workers in nginx.

- The request is processed in nginx for some time.

- Nginx passes the request on to uWSGI.

- There are 96 uWSGI workers; these are Perl processes, which also take some time.

- The sum of the time that the request spent in nginx and in uWSGI is the total request time.

Let's look carefully at the degradation zone, where requests drift into the slower buckets. The demo is paused at an interesting point: note the number of in-flight requests (requests being processed right now), there are 94 of them. Let me remind you that we have 96 uWSGI workers.

It is at this moment that the quality of service degrades significantly. All requests abruptly become very slow, and eventually everything fails.

We return to the diagram.

- When a request gets into nginx, it first gets into the queue associated with the TCP socket at the input of nginx.

- Further, when the nginx worker connects to uWSGI, the request also spends some time in the queue associated with the uWSGI Unix socket.

The total request time is made up of these 4 components.

There is one peculiarity. nginx is great, fast software. In addition, nginx workers are asynchronous, so nginx can drain the queue in the TCP socket very quickly.

uWSGI workers, on the other hand, are synchronous. They are in fact Perl processes, and when the number of requests entering the system starts to exceed the number of available workers, a queue begins to form in the Unix socket.

In the demo setup, I drew attention to 2 parameters: backlog = 2048 and listen = 2048. They determine the length of this queue, which in this case can hold up to 2048 requests.

The request begins to spend considerable time in the Unix socket, just sitting in the queue and waiting for a uWSGI worker to become free and pick it up. At this point the 500 ms timeout fires. The client side drops the connection, but only the connection to nginx.

From the point of view of uWSGI, nothing has happened. For it, this is a fully established connection: since the requests are small, they fit entirely in the buffer. When a worker becomes free and takes the request, from its point of view this is an absolutely valid request, and it goes on to process it.

This is not shown in the figure, but if you look at the server at this moment, it is 100% loaded. It keeps processing these dangling connections, dangling requests.
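A rough back-of-the-envelope check shows why a full queue of 2048 and a 500 ms timeout do not go together. The sketch below assumes that the server drains about 3500 requests per second (the lower edge of the saturation zone in the scenario); that figure and the helper name are assumptions for illustration, and 96 is used only as an example of a much shorter queue.

```python
def full_queue_wait_ms(queue_len, drain_rps):
    """Approximate time a request waits behind a full queue drained at drain_rps."""
    return 1000.0 * queue_len / drain_rps


CLIENT_TIMEOUT_MS = 500   # client timeout from the text
DRAIN_RPS = 3500          # assumption: throughput near the saturation point

for queue_len in (2048, 96):
    wait = full_queue_wait_ms(queue_len, DRAIN_RPS)
    # Prints the queue length, the estimated wait in ms, and whether it exceeds the timeout.
    print(queue_len, round(wait), wait > CLIENT_TIMEOUT_MS)
```

Under these assumptions a request at the back of a full 2048-element queue waits longer than the client is willing to wait, so by the time a worker picks it up nobody needs the answer.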

That is, it turns out that the client does the work, sends requests, but receives 100% errors. The server, for its part, receives requests, honestly processes them, but when it tries to send the data back, nginx tells it: “This answer is no longer needed by anyone!”

That is, the client and the server are trying to talk, but they do not hear each other.

The next stage

All requests have degraded into errors, and the number of requests per second keeps growing. At this point an nginx worker trying to connect to the uWSGI socket fails because the queue is full, and the result is error 502 (Bad Gateway).

Since in this case the request time consists only of processing in nginx, the answer is very, very fast. In essence, nginx only needs to parse the HTTP request, try to connect to the Unix socket, and that is it.

Here I want to return to the original problem and explain in more detail what was happening there.

The following happens. For some reason, some workers have become slow; let's not dwell on why, just take it as given. Because of this, free uWSGI workers start to run out, and a queue builds up in front of them.

When a client's request arrives, the 1st worker sends a subrequest to the 5th, and this subrequest has to wait its turn in the queue. Once it has waited and the 5th worker starts processing it, the 5th worker in turn splits its request into subqueries. One subquery arrives at the 1st worker, where it also has to wait in the queue.

Now a timeout fires on the 1st worker's side. The request is dropped, but the 5th worker knows nothing about it and keeps processing the original request. Meanwhile the 1st worker retries the same request.

The 5th worker keeps processing, and a timeout fires there too, because its subquery was waiting in the 1st worker's queue. It aborts its subquery and also retries.

I think it is clear that if this process keeps going and the flywheel keeps spinning, the whole system ends up clogged with requests that nobody needs. In effect, we got a system with positive feedback. This is what brought our system down.

When we analyzed this case (which, incidentally, took a lot of time, because it was completely unclear what was happening), we thought: since requests spend so much time in the Unix socket queue, which is very long (2048 elements), let's make it shorter, so that there is only 1 queue slot per worker.

Let's now see how the exact same system behaves with the only parameter changed - the queue length.

The scenario is the same as last time: we start at 2500 and raise the request rate: 2900, 3000, 3100, 3200. We enter the zone where the system is unstable. Requests slowly drift into the slower buckets, but they do not degrade as drastically. The system stabilizes at around 45 ms. It keeps processing requests successfully, although some of them end in errors.

On the chart it looks like this.

At a certain point, the number of requests the system can process successfully stabilized. At the same moment, failed requests began to appear. This is the share of requests that the system cannot process right now.

It turns out that with a short queue the system only accepts requests that will most likely complete successfully. Statistically, this volume of requests is roughly constant. Everything else the system simply rejects with a 502 error.

In the third phase, when the number of requests rises sharply, the system still maintains its operability. Moreover, it maintains its quality of service, that is, p99 of the response time does not increase, it has stabilized.

This is what we started with in production; more on that later. I experimented with the queue length, and it turned out that, at least in our system, the queue length determines the worst-case response time.

The system stabilized at a slightly higher response time, but it was still stable. If you limit the queue to just 20, the response time hardly grows at all, changing by only a few milliseconds.
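The effect of the queue length can be reproduced with a toy model. The sketch below is not the demo tool from the talk: it is a deterministic simulation with made-up numbers (4 synchronous workers, 10 ms per request, one arrival every 2 ms, a 50 ms client timeout), chosen only so that the arrival rate exceeds the capacity.

```python
import heapq
from collections import deque


def simulate(queue_limit, workers=4, service_ms=10, arrival_every_ms=2,
             timeout_ms=50, total=5000):
    """Toy model: synchronous workers behind a bounded FIFO queue."""
    free_at = [0] * workers      # min-heap: moment each worker becomes free
    waiting = deque()            # start times of accepted, not yet started requests
    stats = {"ok": 0, "timeout": 0, "rejected": 0}

    for i in range(total):
        now = i * arrival_every_ms
        # Requests whose start time has passed have already left the queue.
        while waiting and waiting[0] <= now:
            waiting.popleft()
        worker_idle = free_at[0] <= now
        if not worker_idle and len(waiting) >= queue_limit:
            stats["rejected"] += 1       # fast failure, like a 502 from nginx
            continue
        start = max(now, heapq.heappop(free_at))
        finish = start + service_ms
        heapq.heappush(free_at, finish)
        if start > now:
            waiting.append(start)
        # The server always does the work; the client only benefits if it is in time.
        if finish - now <= timeout_ms:
            stats["ok"] += 1
        else:
            stats["timeout"] += 1
    return stats


print("queue 2048:", simulate(queue_limit=2048))
print("queue 4:   ", simulate(queue_limit=4))
```

In this model the long queue accepts everything and almost every answer arrives after the client has given up, while the short queue rejects the excess immediately and serves the rest within the timeout.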

How we solved our problem

In the short term:

Reducing the queue length, first of all, conditionally broke the cycle. Conditionally, because the cyclic dependency is still there, but its properties have changed. If a specific server does not have enough resources to process an incoming request, it simply rejects it, and does so very quickly, with minimal overhead. If the server has enough resources, the request is accepted. As a result, the cycle breaks whenever that particular server is overloaded.

There is one more nuance. In the first example there were 2 classes of errors: very fast (~1 ms) and very slow (~500 ms). By shortening the queue, we turned all the slow errors into cheap ones, that is, made them very fast. Thanks to this, we got cheap retries. We no longer have the situation where a Retry essentially loads some other component one more time.

In the long run, of course, we had to move to a 2-level architecture that matches the logical architecture. This is what we did in the next iteration, when we developed a replacement for the search service.

Build a predictable RPC system

Now let's talk about which components you need and what to keep in mind if you want to build a predictable RPC system: a framework whose behavior in boundary conditions is predictable. I will describe what we have and which approaches we use, without being tied to a specific service.

Returning to the first diagram (the interaction of the client and the server with each other), we have already touched on the first 2 bricks that need to be configured correctly:

- Queues on the server side

- Client-side timeouts.

We have already dealt with slow errors by turning them into fast ones. But what should we do with fast errors?

We need to consider 2 different cases.

First, what to do when the system is in the saturation phase?

Here, in fact, nothing can be done! When the system is in the saturation phase, it has run out of resources, and it cannot process anything new. For example, I can carry 100 kg, but give me 101 kg, and I will fall.

This is a very good property, because here we are talking, in fact, about 2 very important, in my opinion, components of any framework:

- Fail fast. If an error has to be returned, we return it very quickly.

- Graceful degradation. Under significant load, the system still reliably processes some percentage of requests successfully instead of crashing completely.

Second, what if the queue overflows only for a short time?

This is possible if, for example, there is some delay in the network, requests pile up in it, and then a batch of them arrives at the server all at once, briefly overflowing the queue.

In this case, a very simple and reliable mechanism is to simply repeat the request (Retry). That puts another brick on our diagram.

Retry

In my experience, Retry is a powerful mechanism that in many cases lets you come out unscathed. For example, the upper graph shows the number of errors on the server side, and the lower one the number of errors on the client side. You can see that the server keeps generating errors for some reason, while nothing is visible on the client side.

Quite often, even if we have some problems on the server side for whatever reason, they do not reach the client thanks to a well-designed Retry.

When we talk about Retry, it's important to keep in mind:

- Retry must not be infinite. It should always be limited by a budget. We usually use 3 attempts.

- Only an idempotent operation can be safely repeated. This is an operation whose result is the same no matter how many times you apply it.

When we send an HTTP request, everything that happens before the moment we send data to the server, that is, before we actually write to the socket, is safe to retry, because nothing has been sent to the server yet. For example, if the error occurred while resolving the DNS name, or if the server is simply unreachable, retrying is fine.

Everything that happens afterwards is questionable. According to the HTTP standard, read operations performed with the GET method are idempotent by default, which cannot be said about POST.

That is the theory; in practice it varies from system to system. For example, there are a number of systems that write something somewhere while serving GET requests. Therefore, you need to look at the specific system.

- Another important point: a quick retry is not effective (in my experience).

Imagine that a short-term problem occurred on the network, for example, a route is being rebuilt and some host has become unavailable for a short period of time. If you retry once and immediately follow with a second and a third attempt, you burn your whole budget in just a few milliseconds. That is not effective: you gave the system no chance to recover.
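The first two rules (a fixed budget, and retrying only idempotent operations) can be sketched as follows. This is a minimal illustration, not the Booking.com client: `TransientError` and `call_with_retry` are hypothetical names, and the sketch deliberately retries immediately, which is exactly the weakness the last point describes.

```python
class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""


def call_with_retry(operation, idempotent, max_attempts=3):
    """Run operation(); retry transient failures within a fixed budget.

    Non-idempotent operations get exactly one attempt.
    """
    attempts = max_attempts if idempotent else 1
    last_error = None
    for _ in range(attempts):
        try:
            return operation()
        except TransientError as err:
            last_error = err         # budget not exhausted yet: try again
    raise last_error


# Usage: an operation that fails twice and then succeeds.
state = {"calls": 0}

def flaky_read():
    state["calls"] += 1
    if state["calls"] < 3:
        raise TransientError("temporary failure")
    return "ok"

print(call_with_retry(flaky_read, idempotent=True))  # ok
```

The third attempt succeeds here only because the failure is scripted; against a real short-lived outage, three attempts fired back to back would most likely all fail.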

Back-off

We put another brick on the diagram in the service consumer block - back-off.

The main idea of back-off is to insert a pause between attempts in order to increase the chances of success. So we give the system a chance, and wait on our side, hoping that the system itself will recover.

I am aware of several back-off algorithms:

- Fixed: the pause between attempts is always the same. For example, the first request failed, we backed off for 100 ms and tried a second time; it failed again, and we wait another 100 ms.

- Exponential. For example, the first time we back off for 10 ms, then for 20, 40, and so on.

Another important factor when we talk about back-off is randomization of the pause intervals: jitter.

Imagine that 100 requests are flying to you, arrive at the same moment, and briefly overload the server. All of them get an error. Now they all back off for 100 ms, and the same requests fly to the server again. The situation essentially repeats: they overload the server in the same way.

If you add a random delta to the back-off interval locally, the second attempts are spread out in time and do not arrive at the server all at once. The server is much more likely to handle them.

At Booking.com, we use an exponential randomized interval (see example). For example, the first pause is 53 ms, then 129 ms, 555 ms, etc.
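An exponential back-off with jitter could look roughly like this. The exact formula used at Booking.com is not given in the text, so the base of 50 ms, the factor of 2, and the ±50% jitter are assumptions chosen for illustration.

```python
import random


def backoff_delays_ms(attempts, base_ms=50, factor=2.0, jitter=0.5, rng=random):
    """Yield one randomized, exponentially growing pause per retry."""
    for attempt in range(attempts):
        nominal = base_ms * factor ** attempt
        # Spread the pause uniformly within +/- jitter of the nominal value.
        yield nominal * rng.uniform(1.0 - jitter, 1.0 + jitter)


# Usage: three pauses; each client gets different values, so retries spread out.
for delay in backoff_delays_ms(3):
    print(f"sleep {delay:.0f} ms before the next attempt")
```

Because every client draws its own random values, a burst of 100 failed requests does not come back as another burst of 100.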

Timeout

The next brick is timeouts on the server side, more precisely, the consistency of timeouts on the client and server sides.

I have already touched on this topic a little. It is important that when the client gives up, the server does not keep processing that request.

Modern frameworks have a mechanism for this: request cancellation. Our framework, unfortunately, does not, so we had to work around the problem.

When our client calls the server, it sets an HTTP header, X-Booking-Timeout-Ms, which states the client's timeout. The server takes this value and sets its own local timeout to the client timeout plus some delta that allows for the time the request needs to reach the server:

server timeout = client timeout + delta

So when the client gives up after 100 ms, the server gives up shortly after, for example at 110 ms. In effect, the request is canceled.

There are already 5 elements on the diagram that are not needed while the system is stable. They are required when everything goes wrong, much like backups. You do not need these components in normal life, but when you need them, you really need them.

There are people who do not make backups yet. But those who have had a bad experience also periodically test restoring from their backups. For essentially the same purpose, that is, to test our entire stack, we use Chaos Monkey.
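On the server side, the rule above comes down to a few lines. In this sketch the header name is taken from the text, while the 10 ms delta, the fallback value, and the function name are assumptions; it also assumes the headers arrive as a plain dictionary with this exact key.

```python
def server_timeout_ms(headers, delta_ms=10, default_ms=1000):
    """Derive the server-side timeout from the timeout declared by the client."""
    raw = headers.get("X-Booking-Timeout-Ms")
    try:
        client_ms = int(raw)
    except (TypeError, ValueError):
        return default_ms            # header missing or malformed
    if client_ms <= 0:
        return default_ms
    return client_ms + delta_ms      # server timeout = client timeout + delta


print(server_timeout_ms({"X-Booking-Timeout-Ms": "100"}))  # 110
print(server_timeout_ms({}))                               # 1000
```

The worker would then abandon the request once this timeout expires instead of finishing work that nobody is waiting for.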

Chaos monkey

Initially, the idea of Chaos Monkey was that we turn off the data center and watch how our system reacts. We have not reached this scale yet - our scale is more modest. But there are interesting things that I want to talk about.

We have 3 types of Chaos Monkey.

1. Checking the HTTP client

We expect special behavior from the client when, for example, it receives a 502 response from nginx. We know that this is a cheap response, so the client automatically retries the request. To test this logic, we artificially return a 502 response for a certain percentage of requests. As far as I remember, it is 1% of artificially created errors in production.

That is, Chaos Monkey deliberately breaks 1% of internal requests to make sure that the system behaves correctly when real failures arrive.
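The injection itself can be as simple as a wrapper around a request handler. This is a sketch under assumptions: the handler signature (a function returning a status and a body) and the name `chaos_502` are hypothetical; only the 502 status and the 1% rate come from the text.

```python
import random


def chaos_502(handler, failure_rate=0.01, rng=random):
    """Wrap a handler so that a fraction of calls gets an artificial 502."""
    def wrapped(request):
        if rng.random() < failure_rate:
            return 502, "Bad Gateway (injected)"
        return handler(request)
    return wrapped


def real_handler(request):
    return 200, f"result for {request}"


# Usage: roughly 1 call in 100 is answered with an injected 502.
handler = chaos_502(real_handler)
status, body = handler("search")
print(status)
```

A client with working retry logic should hide these injected errors completely; if it does not, the problem shows up long before a real outage.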

2. Soft degradation of applications

The second type of Chaos Monkey is more interesting. What does soft application degradation mean?

Imagine that there is a search page, it has the main search functionality, which is our main business. This functionality is tied to minor components that also make remote calls.

If such a minor component fails, we do not want the whole page to fail. We expect developers who write minor components to design them so that an error coming back from a minor query does not turn into a 500 response for our customer. To test this, our servers periodically generate 400 responses, which mean that the request was malformed; the HTTP client therefore passes the error all the way up the stack to the application, where the developer can see it.

This Chaos Monkey mechanism works only internally. Clearly, if we ran it on real customers, it could reduce conversion, which is not good.

Since we really are talking about part of the functionality failing, we always keep a list of critical queries, for example that same main search query, that are excluded from this logic.
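From the application developer's side, the expected behavior might be sketched like this. The function and component names are hypothetical, and real page rendering is of course more involved; the point is only that a failing minor call is swallowed while a failing critical call is not.

```python
def render_page(components, critical):
    """Call every component; only a critical failure fails the whole page."""
    parts = {}
    for name, fetch in components.items():
        try:
            parts[name] = fetch()
        except Exception:
            if name in critical:
                raise                # the core functionality must not fail silently
            parts[name] = None       # a minor block is simply left out
    return parts


def broken_banner():
    raise RuntimeError("remote call failed")


# Usage: the banner fails, the search results are still rendered.
page = render_page(
    {"search": lambda: ["hotel A", "hotel B"], "banner": broken_banner},
    critical={"search"},
)
print(page)  # {'search': ['hotel A', 'hotel B'], 'banner': None}
```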

3. Readiness for delays in replication.

The last type of Chaos Monkey stands a bit apart. We have a data retrieval system to which a client comes and says: “I have 1000 records, give me all of them!” But this system stores its data in MySQL, and it may turn out that some of these records have only just been written to the master and have not yet reached the slave. So from time to time the system answers that it has 900 records and that 100 are not there yet, because they have not reached the slave and will appear later.

Using the Chaos Monkey mechanism, we test this behavior. The system generates a valid 200 response but emulates a logical error in which some of the records are simply missing. This also runs in production.
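Emulating such a lag does not require touching replication at all; it is enough to withhold part of a correct answer. The sketch below is an assumption about how that could be done: the function name, the 10% rate, and the return shape are all made up for illustration.

```python
import random


def drop_some_records(records, drop_rate=0.1, rng=random):
    """Return (present, missing_count), pretending part of the data lags behind."""
    present = [r for r in records if rng.random() >= drop_rate]
    return present, len(records) - len(present)


# Usage: the caller asked for 1000 records and must cope with getting fewer.
present, missing = drop_some_records(list(range(1000)))
print(len(present), "records returned,", missing, "reported as not yet available")
```

A well-behaved client treats the missing part as "come back later" rather than as data loss.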

We return to the diagram.

In fact, there are many more components. Just from what I know:

- On the server side, request prioritization and throttling are needed.

- On the client side, there is such an interesting thing as a circuit breaker, where the client decides locally not to send a request to the server, simply because the service consumer knows who it is talking to.

- We need discovery logic, smart load balancing, and much more.

And this is only the stack above the transport; inside the transport there is still a lot that you need to configure correctly for the system to work predictably.
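To give an idea of what a circuit breaker does, here is a minimal sketch. It is a generic textbook variant, not something described in the talk: the thresholds, the class name, and the single-trial behavior after the cool-down are all assumptions.

```python
import time


class CircuitBreaker:
    """Stop calling a failing service for a while instead of hammering it."""

    def __init__(self, max_failures=5, reset_after_s=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None        # None means the circuit is closed

    def call(self, operation):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise RuntimeError("circuit open: request not sent")
            self.opened_at = None    # cool-down is over: let one request through
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0            # any success closes the circuit again
        return result
```

While the circuit is open, the failure is produced locally and instantly, which is the cheapest error of all: the overloaded server never sees the request.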

Conclusion

Predictably sending an HTTP request is a difficult task! There are so many components that need to be configured correctly. All systems are different, there are no silver bullets.

In short, test and verify! In my opinion, the only way to make sure the system works is to put it in boundary conditions and see how it reacts.

At Booking, we had to reinvent the wheel a little and write our own framework. Most likely you are not working with Perl, so you are a little luckier.

Look at frameworks like gRPC and Finagle. If you prefer proxies, then Linkerd and Envoy. I must say right away that I have no production experience with these systems, so I cannot recommend anything specific.

One last thing: experiment with the queues. From our own experience, we learned that the queue length can radically change the behavior of the system, which is exactly what happened to us. So make a note to yourself: try playing around with it.

But please do not just copy my example: try it and check for yourself.

There is an important point here: if you want to experiment, you need control over the client, because the behavior of your system changes and the client has to be adapted accordingly.

What I showed only works when nginx talks to the web server behind it through a Unix socket. A TCP socket behaves differently.

That's all for today, and below are links that can help sort out the details:

- https://github.com/ikruglov/slapper

- SRE book

- resiliency patterns

- circuit breaker

- back-off

- tcp_abort_on_overflow
