Overview of server monitoring systems. Replacing munin with ...

- Tutorial

I wanted to write an article for a very long time, but I did not have enough time. Nowhere (including on Habré) has I found such a simple alternative to munin as described in this article.

I’m a backend developer and very often there are no dedicated admins on my projects (especially at the very beginning of the product’s life), so I’ve been doing basic server administration for a long time (initial setup, configuration, backups, replication, monitoring, etc.). I really like it and I always learn something new in this direction.

In most cases, a single server is enough for a project, and as a senior developer (and just a responsible person), I always had to control its resources in order to understand when we run into its limitations. Munin was enough for these purposes.

It is easy to install and has small requirements. It is written in perl and uses a ring database ( RRDtool ).

It was always enough for monitoring server resources, and for monitoring server availability a free service like uptimerobot.com was used .

I use this combination to monitor my home projects on a virtual server.

If the project grows from one server, then on the second server it is enough to install munin-node, and on the first - add one line in the config to collect metrics from the second server. The graphs for both servers will be separate, which is not convenient for viewing the overall picture - which server runs out of free space on the disk, and which RAM. This situation can be corrected by adding a dozen lines to the config for aggregation of one chart.with metrics from both servers. Accordingly, it is advisable to do this only for the most basic metrics. If you make a mistake in the config, you will have to read for a long time in the logs what exactly led to it and, not finding the information, try to correct the situation using the “poke method”.

Needless to say, for more servers this turns into a real hell. Maybe this is due to the fact that munin was developed in 2003 and was not originally designed for this.

I determined for myself the necessary qualities that a new monitoring system should have:

I will list all that I considered.

Almost the same as munin only in php . As a database, you can use rrdtool like munin or mysql . First release: 2001 .

Almost the same as the previous ones, written in php , rrdtool as the database . First release: 1998 .

An even simpler system than the previous ones. Written in c , rrdtool as the database . First release: 2005 .

It consists of three components written in python :

carbon collects metrics, writes them to the db

whisper - own rrdtool -like db

graphite-web - interface

First release: 2008 .

Professional monitoring system used by most admins. There is almost everything, including email notifications (for slack and telegram, you can write a simple bash script). Heavy for user and server. Previously, I had to use, impressions, as if I returned from jira to mantis.

The kernel is written in c , the web interface is in php . As a database it can use: MySQL, PostgreSQL, SQLite, Oracle or IBM DB2 . First release: 2001 .

A worthy alternative to Zabbix. Written in p . First release: 1999 .

Fork Nagios. As a database it can use: MySQL, Oracle, and PostgreSQL . First release: 2009 year.

All of the above systems are worthy of respect. They are easily installed from packages in most linux distributions and have long been used in production on many servers, they are supported, but they are very poorly developed and have an outdated interface.

Half of the products use sql databases, which is not optimal for storing historical data (metrics). On the one hand, these databases are universal, and on the other hand, they create a large load on disks, and data takes up more storage space.

For such tasks, modern time series databases such as ClickHouse are more suitable .

New generation monitoring systems use time series databases, some of them include them as an inseparable part, others are used as a separate component, and the third can work without any databases.

It does not require a database at all, but it can upload metrics to Graphite, OpenTSDB, Prometheus, InfluxDB . Written in c and python . First release: 2016 .

It consists of three components written in go :

prometheus - the kernel, its own built-in database and web interface.

node_exporter - an agent that can be installed on another server and forward metrics to the kernel only works with prometheus.

alertmanager - notification system.

First release: 2014 .

It consists of four components written in go that can work with third-party products:

telegraf - an agent that can be installed on another server and send metrics, as well as logs to influxdb , elasticsearch , prometheus or graphite databases , as well as to several queue servers .

influxdb is a database that can receive data from telegraf , netdata or collectd .

chronograf - web interface for visualization of metrics from the database.

kapacitor - notification system.

First release:2013 year.

I would also like to mention a product such as grafana , it is written in go and allows you to visualize data from influxdb, elasticsearch, clickhouse, prometheus, graphite , as well as send notifications by e-mail, to slack and telegram.

First release: 2014 .

On the Internet and on Habré, including, it is full of examples of using various components from different products to get what you need.

carbon (agent) -> whisper (db) -> grafana (interface)

netdata (as agent) -> null / influxdb / elasticsearch / prometheus / graphite (as db) -> grafana (interface)

node_exporter (agent) -> prometheus (as a database) -> grafana (interface)

collectd (agent) -> influxdb (database) -> grafana (interface)

zabbix (agent + server) -> mysql -> grafana (interface)

telegraf (agent) -> elasticsearch (db) -> kibana (interface)

... etc.

I even saw a mention of such a bunch:

... (agent) -> clickhouse (db) -> grafana (interface)

In most cases, grafana was used as the interface, even if it was in conjunction with a product that already contained its own interface (prometheus, graphite-web).

Therefore (and also due to its versatility, simplicity and convenience) as an interface, I settled on grafana and proceeded to choosing a database: prometheus dropped out because I did not want to pull all its functionality together with the interface because of only one database, graphite - database of the previous decade, reworked by rrdtool-bd of the previous century, well, actually I settled on influxdb and as it turned out - not one I made that choice .

Also, I decided to choose telegraf for myself, because it met my needs (a large number of metrics and the ability to write my own plugins in bash), and also works with different databases, which may be useful in the future.

The final bunch I got is this:

telegraf (agent) -> influxdb (db) -> grafana (interface + notifications)

All components do not contain anything superfluous and are written in go . The only thing I was afraid of was that this bunch would be difficult to install and configure, but as you can see below, it was in vain.

So, a short installation guide for TIG:

influxdb

Now you can make queries to the database (although there is no data there yet):

telegraf

Telegraf will automatically create a base in influxdb with the name "telegraf", the username "telegraf" and the password "metricsmetricsmetricsmetrics".

grafana

The interface is available at

Initially, nothing will be in the interface, because the graphan knows nothing about the data.

1) You need to go to the sources and specify influxdb (db: telegraf)

2) You need to create your own dashboard with the necessary metrics (it will take a lot of time) or import the finished one, for example:

928 - allows you to see all the metrics for the selected host

914 - the same

61 - allows metrics for selected hosts on one chart

Grafana has an excellent tool for importing third-party dashboards (just specify its number), you can also create your own dashboard and share it with the community.

Here is a list all dashboards working with influxdb data that were collected using the telegraf collector.

All ports on your servers should be open only from those ip that you trust or authorization must be enabled in the products used and the default passwords changed (I do both).

influxdb

In influxdb, authorization is disabled by default and anyone can do anything. Therefore, if there is no firewall on the server, then I highly recommend enabling authorization:

telegraf

grafana

In the source settings, you need to specify a new login for influxdb: "grafana" and the password "password_for_grafana" from the item above.

Also in the interface you need to change the default password for the admin user.

Update: added an item to his criteria “free and open source”, forgot to specify it from the very beginning, and now I am advised of a bunch of paid / shareware / trial / closed software. There would be a deal with free.

Update2: now a group of enthusiasts is creating a table in google docs , comparing various monitoring systems by key parameters (Language, Bytes / point, Clustering). Work in full swing, the current slice under the cut.

Update3: another comparison of Open-Source TSDB in Google Docs. A little more elaborate, but the systems are smaller than AnyKey80lvl

P.S .: if I omitted some points in the description of the setup-installation, then write in the comments and I will update the article. Typos - in PM.

PPS: of course, no one will hear this (based on previous experience in writing articles), but I still have to try: do not ask questions in PM on Habr, VK, fb, etc., but write comments here.

PPPS: the size of the article and the time spent on it are strongly out of the initial "budget", I hope that the results of this work will be useful to someone.

I’m a backend developer and very often there are no dedicated admins on my projects (especially at the very beginning of the product’s life), so I’ve been doing basic server administration for a long time (initial setup, configuration, backups, replication, monitoring, etc.). I really like it and I always learn something new in this direction.

In most cases, a single server is enough for a project, and as a senior developer (and just a responsible person), I always had to control its resources in order to understand when we run into its limitations. Munin was enough for these purposes.

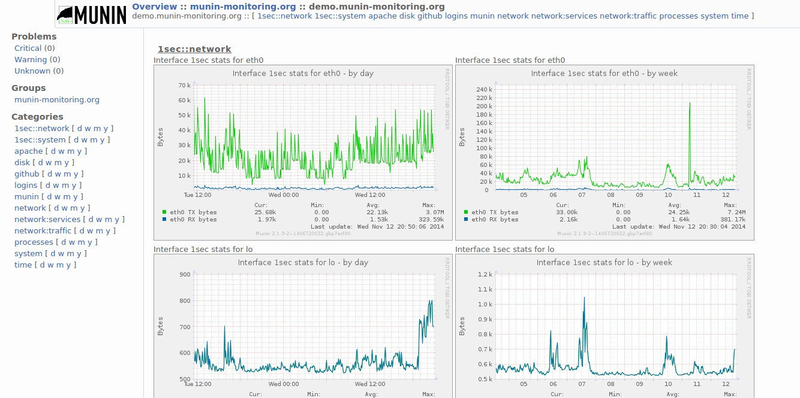

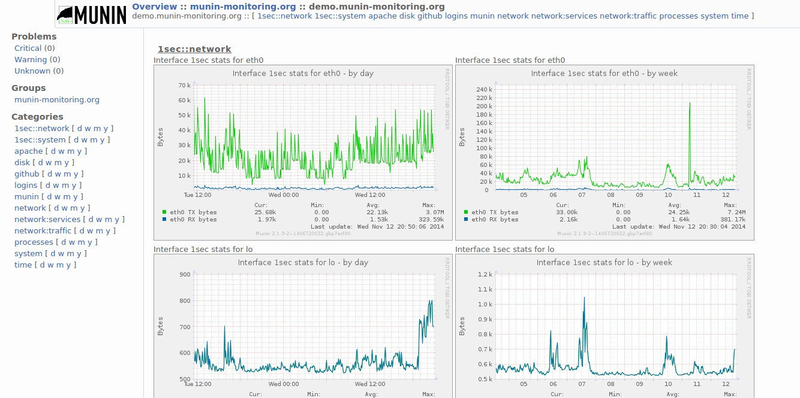

Interface

Munin

It is easy to install and has small requirements. It is written in perl and uses a ring database ( RRDtool ).

Installation example

We execute the commands: apt-get install munin munin-node

service munin-node start

Now munin-node will collect system metrics and write them to the database, and munin will generate html reports from this database every 5 minutes and put them in the / folder var / cache / munin / www

For convenient viewing of these reports, you can create a simple config for nginx

Actually that's all. You can already watch any graphs of CPU, memory, hard disk, network and much more for a day / week / month / year. Most often, I was interested in the read / write load on the hard disk, because the database was always the bottleneck.

We execute the commands: apt-get install munin munin-node

service munin-node start

Now munin-node will collect system metrics and write them to the database, and munin will generate html reports from this database every 5 minutes and put them in the / folder var / cache / munin / www

For convenient viewing of these reports, you can create a simple config for nginx

server {

listen 80;

server_name munin.myserver.ru;

root /var/cache/munin/www;

index index.html;

}Actually that's all. You can already watch any graphs of CPU, memory, hard disk, network and much more for a day / week / month / year. Most often, I was interested in the read / write load on the hard disk, because the database was always the bottleneck.

It was always enough for monitoring server resources, and for monitoring server availability a free service like uptimerobot.com was used .

I use this combination to monitor my home projects on a virtual server.

If the project grows from one server, then on the second server it is enough to install munin-node, and on the first - add one line in the config to collect metrics from the second server. The graphs for both servers will be separate, which is not convenient for viewing the overall picture - which server runs out of free space on the disk, and which RAM. This situation can be corrected by adding a dozen lines to the config for aggregation of one chart.with metrics from both servers. Accordingly, it is advisable to do this only for the most basic metrics. If you make a mistake in the config, you will have to read for a long time in the logs what exactly led to it and, not finding the information, try to correct the situation using the “poke method”.

Needless to say, for more servers this turns into a real hell. Maybe this is due to the fact that munin was developed in 2003 and was not originally designed for this.

Alternatives to munin for monitoring multiple servers

I determined for myself the necessary qualities that a new monitoring system should have:

- the number of metrics is not less than that of munin (it has about 30 basic graphs and about 200 more plug-ins in the kit)

- the ability to write custom plugins in bash (I had two of these plugins)

- have small server requirements

- the ability to display metrics from different servers on the same chart without editing configs

- notifications on mail, in slack and telegram

- Time Series Database more powerful than RRDtool

- easy installation

- nothing extra

- free and open source

I will list all that I considered.

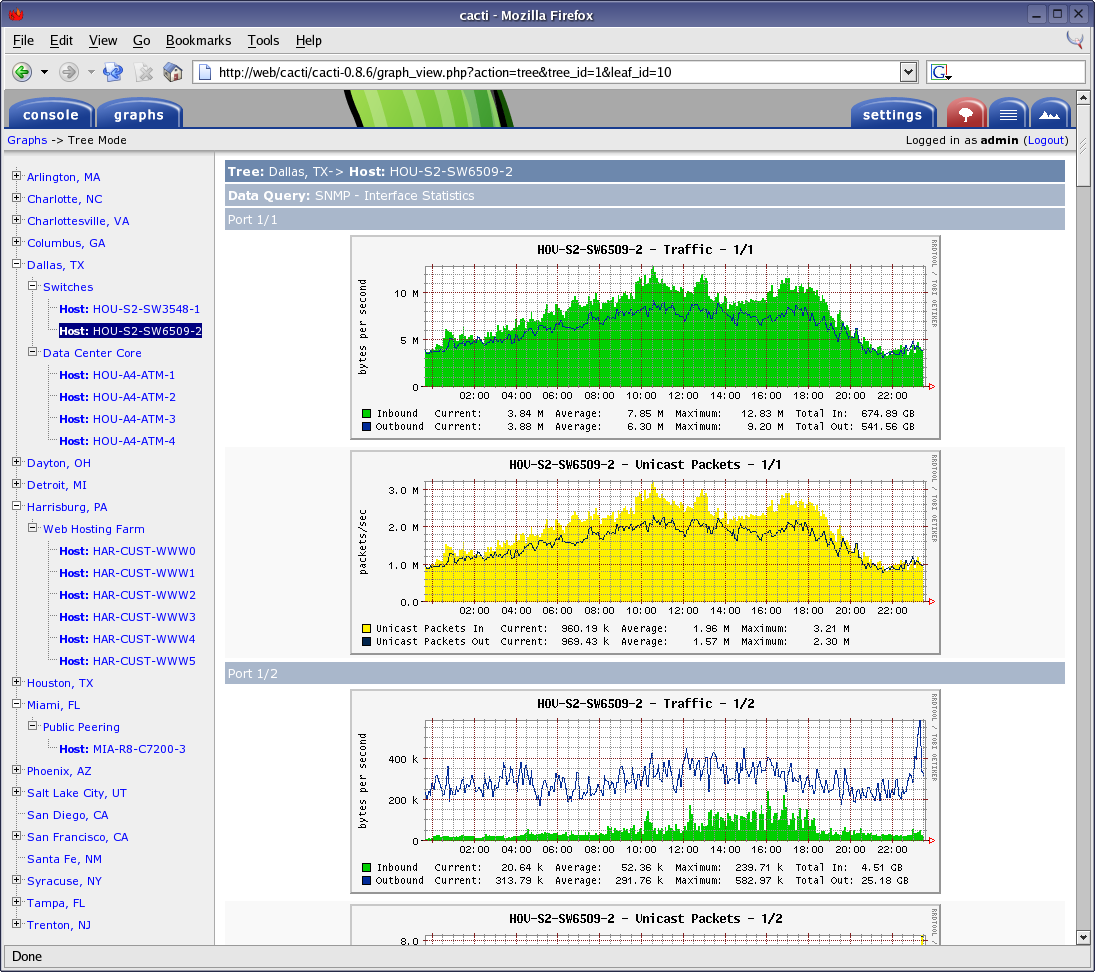

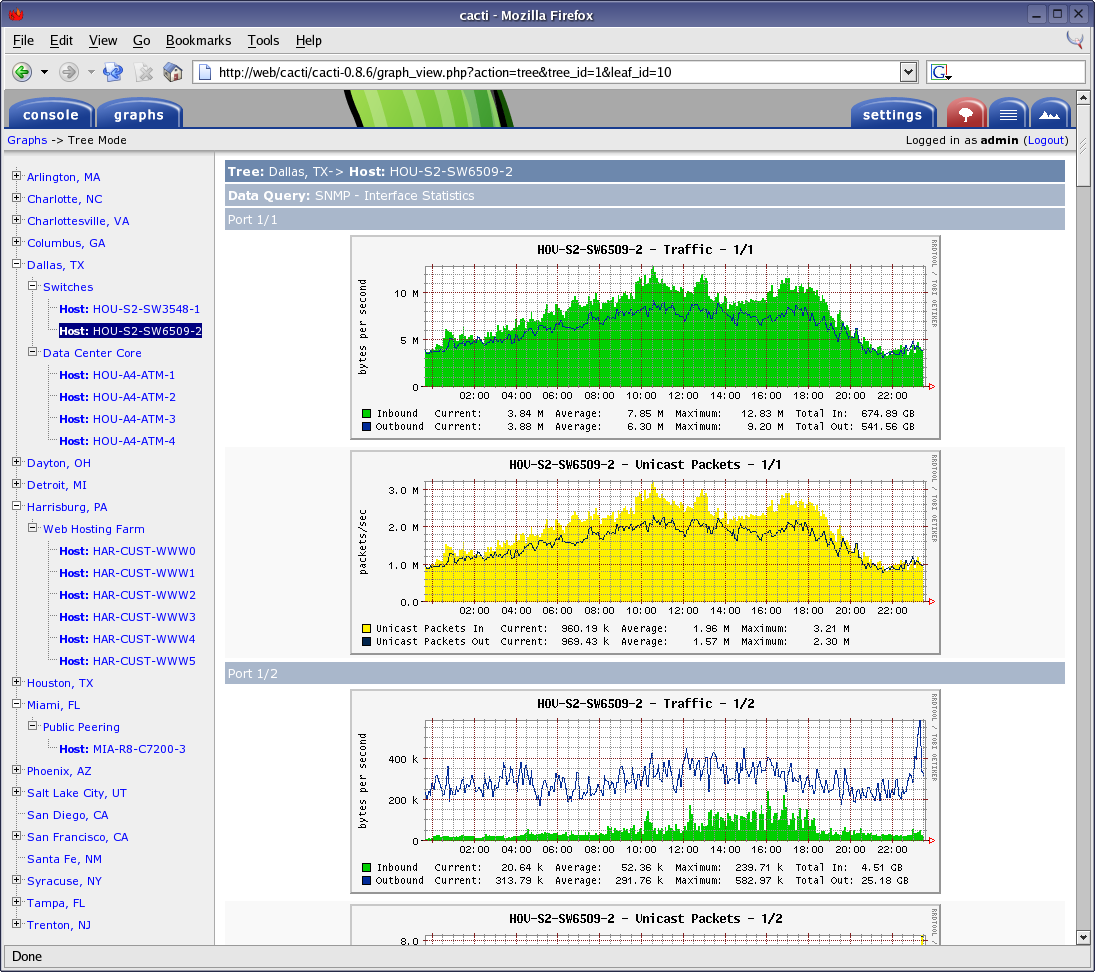

Cacti

Almost the same as munin only in php . As a database, you can use rrdtool like munin or mysql . First release: 2001 .

Interface

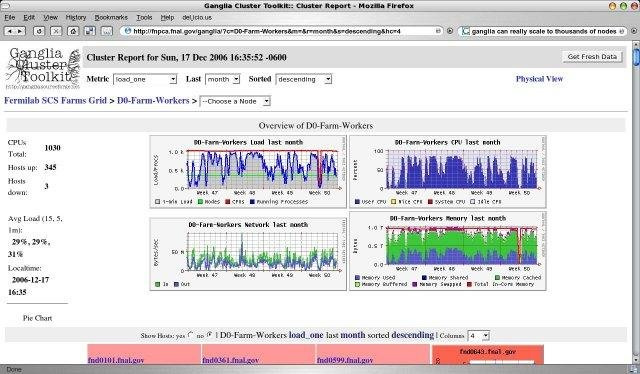

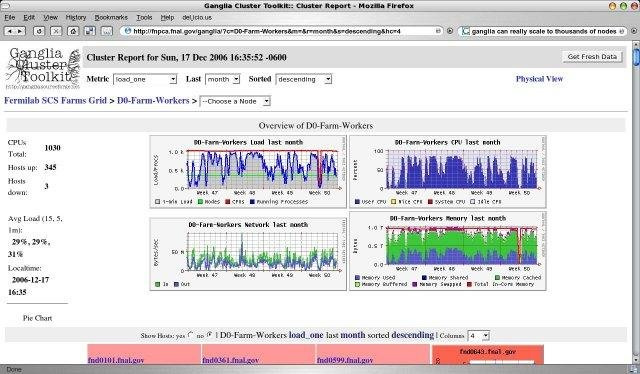

Ganglia

Almost the same as the previous ones, written in php , rrdtool as the database . First release: 1998 .

Interface

Collectd

An even simpler system than the previous ones. Written in c , rrdtool as the database . First release: 2005 .

Interface

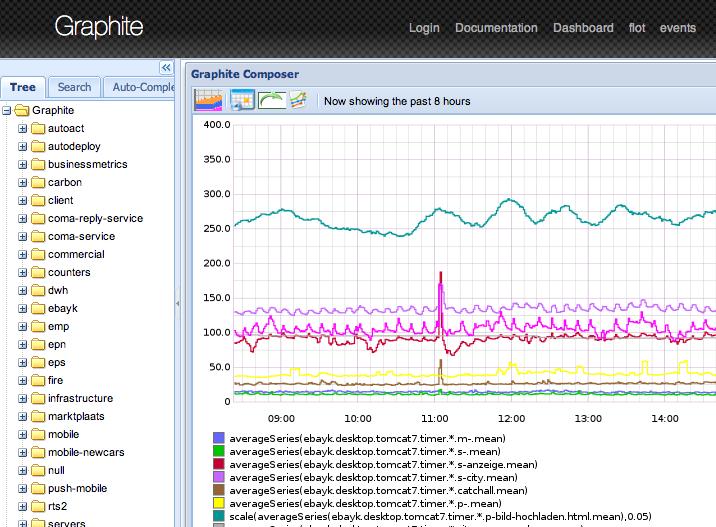

Graphite

It consists of three components written in python :

carbon collects metrics, writes them to the db

whisper - own rrdtool -like db

graphite-web - interface

First release: 2008 .

Interface

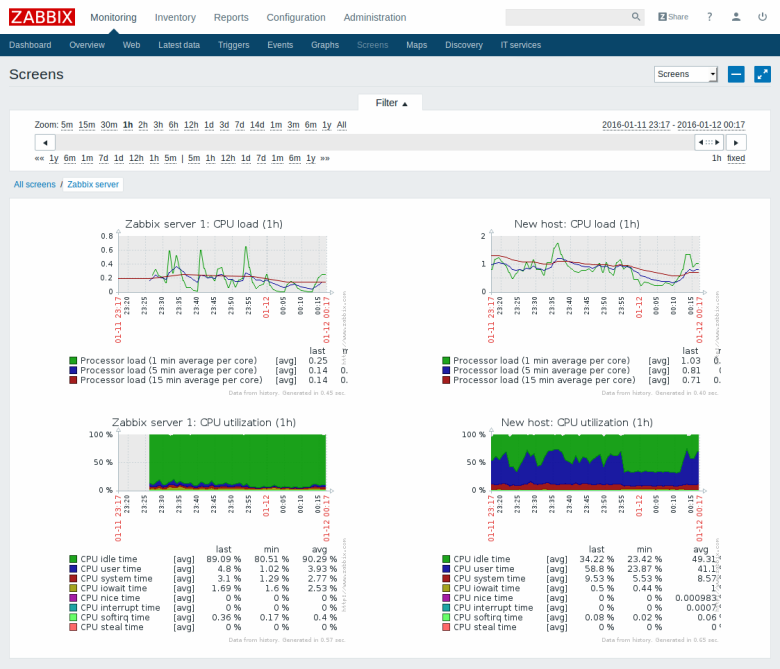

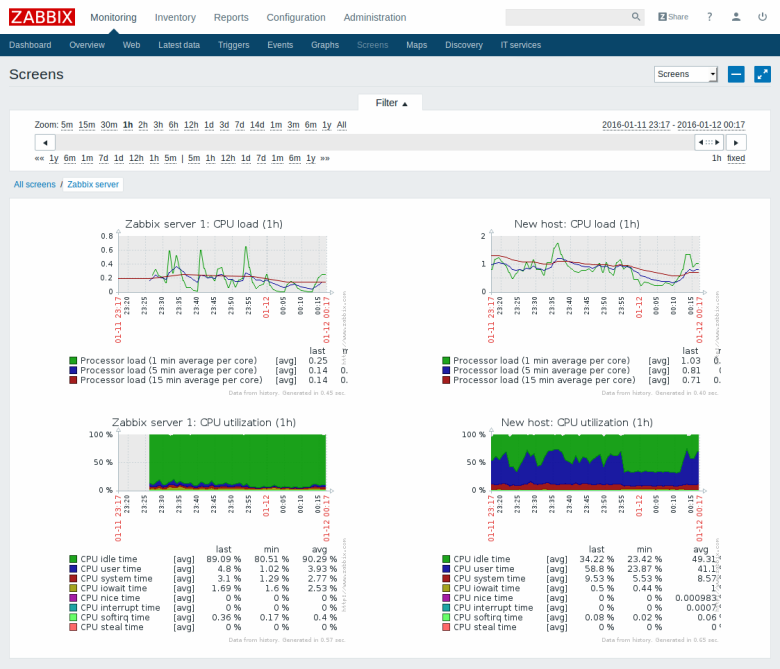

Zabbix

Professional monitoring system used by most admins. There is almost everything, including email notifications (for slack and telegram, you can write a simple bash script). Heavy for user and server. Previously, I had to use, impressions, as if I returned from jira to mantis.

The kernel is written in c , the web interface is in php . As a database it can use: MySQL, PostgreSQL, SQLite, Oracle or IBM DB2 . First release: 2001 .

Interface

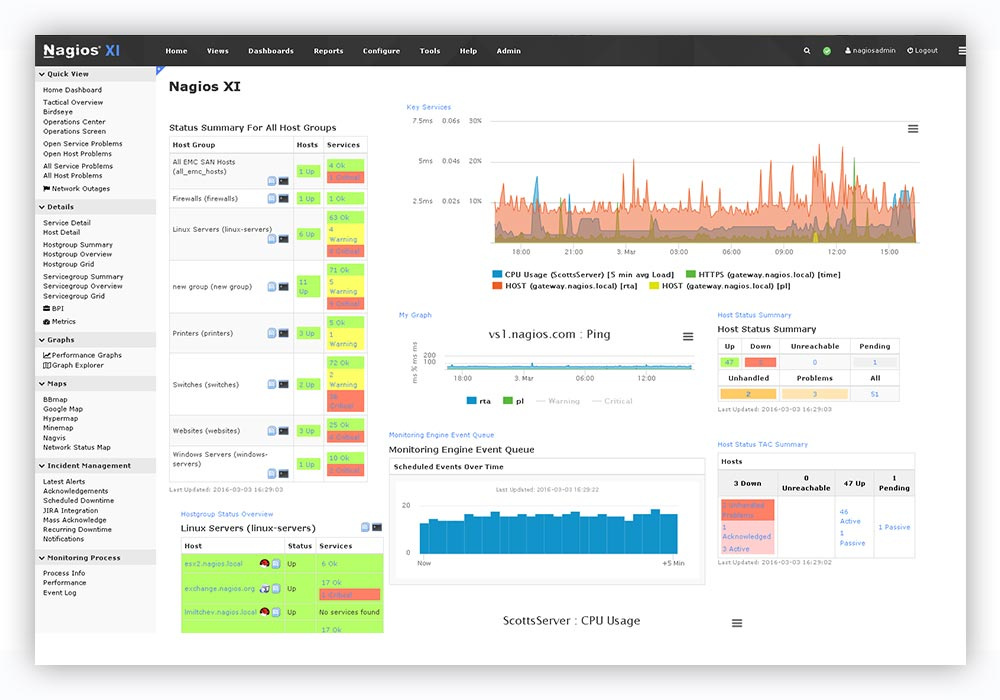

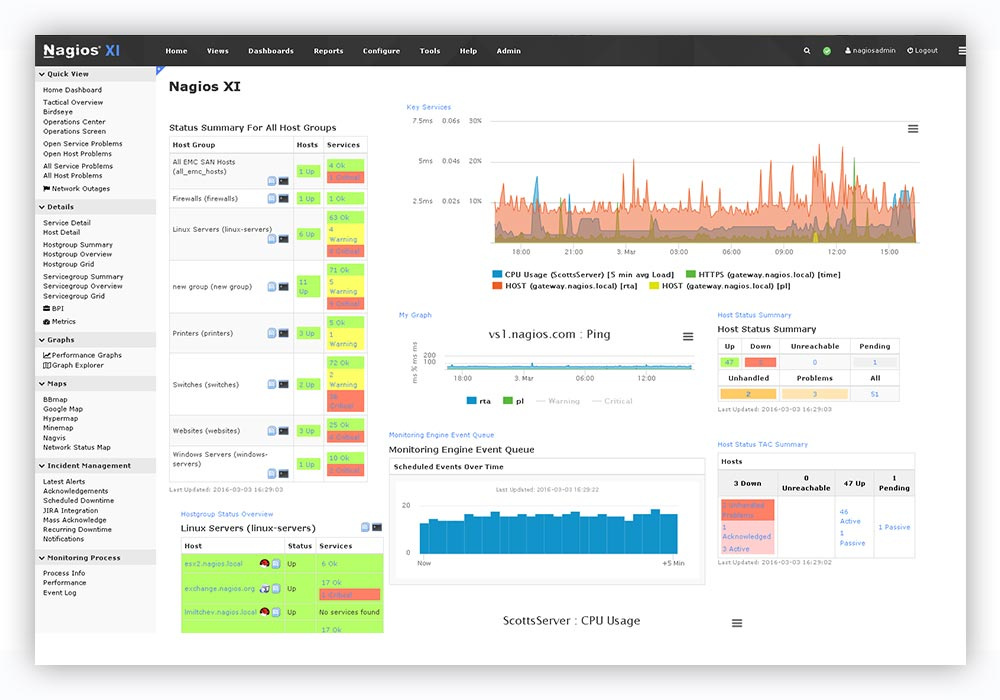

Nagios

A worthy alternative to Zabbix. Written in p . First release: 1999 .

Interface

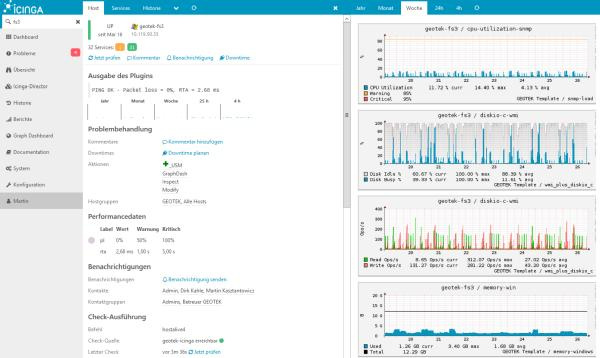

Icinga

Fork Nagios. As a database it can use: MySQL, Oracle, and PostgreSQL . First release: 2009 year.

Interface

Small digression

All of the above systems are worthy of respect. They are easily installed from packages in most linux distributions and have long been used in production on many servers, they are supported, but they are very poorly developed and have an outdated interface.

Half of the products use sql databases, which is not optimal for storing historical data (metrics). On the one hand, these databases are universal, and on the other hand, they create a large load on disks, and data takes up more storage space.

For such tasks, modern time series databases such as ClickHouse are more suitable .

New generation monitoring systems use time series databases, some of them include them as an inseparable part, others are used as a separate component, and the third can work without any databases.

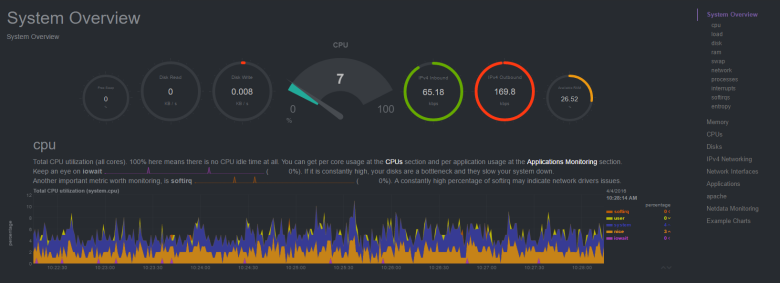

Netdata

It does not require a database at all, but it can upload metrics to Graphite, OpenTSDB, Prometheus, InfluxDB . Written in c and python . First release: 2016 .

Interface

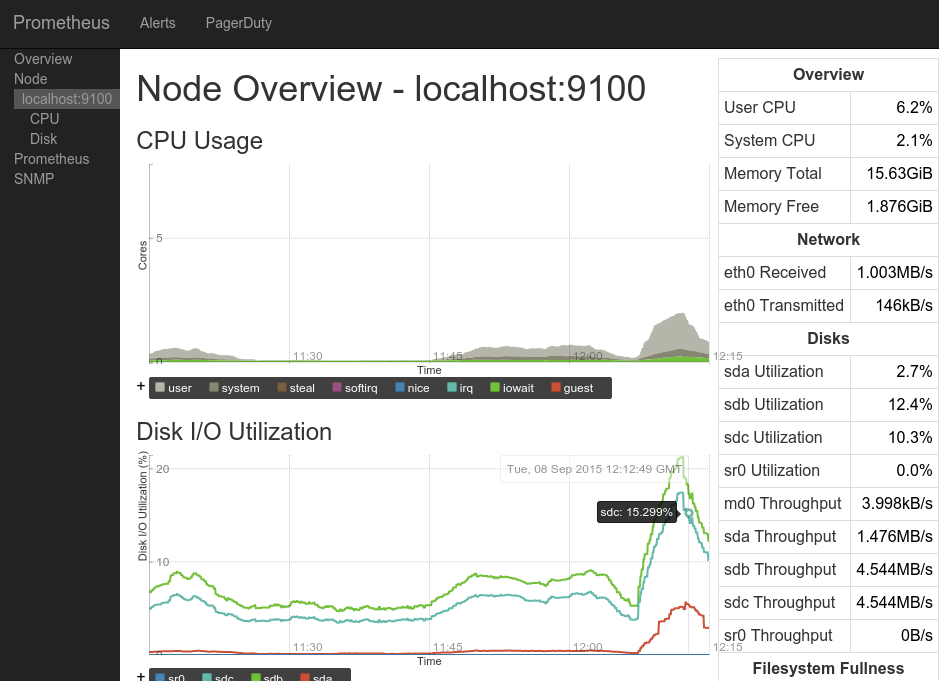

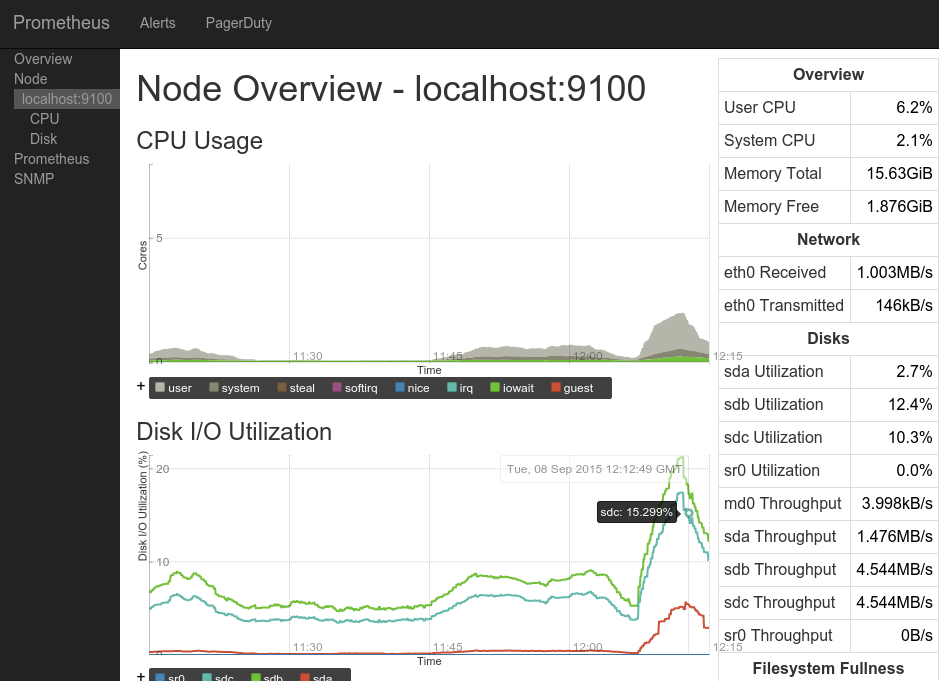

Prometheus

It consists of three components written in go :

prometheus - the kernel, its own built-in database and web interface.

node_exporter - an agent that can be installed on another server and forward metrics to the kernel only works with prometheus.

alertmanager - notification system.

First release: 2014 .

Interface

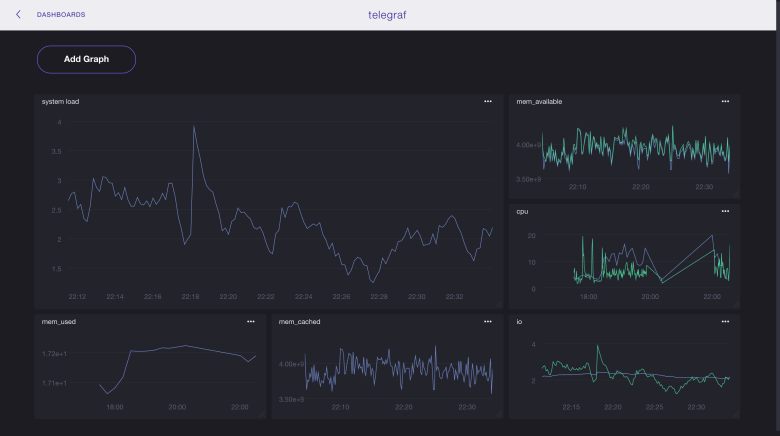

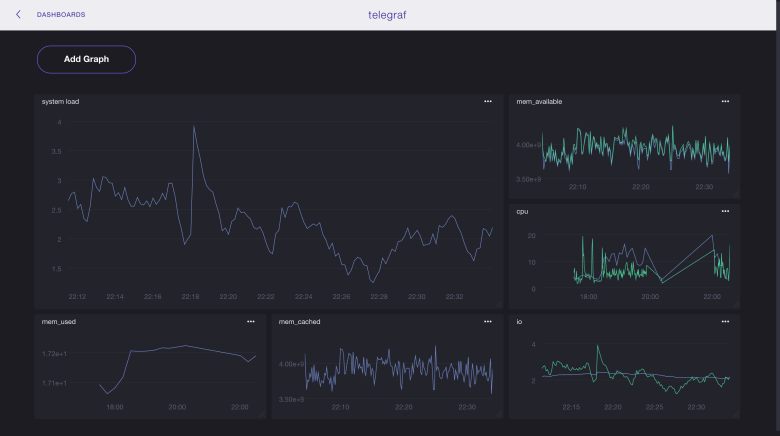

InfluxData (TICK Stack)

It consists of four components written in go that can work with third-party products:

telegraf - an agent that can be installed on another server and send metrics, as well as logs to influxdb , elasticsearch , prometheus or graphite databases , as well as to several queue servers .

influxdb is a database that can receive data from telegraf , netdata or collectd .

chronograf - web interface for visualization of metrics from the database.

kapacitor - notification system.

First release:2013 year.

Interface

I would also like to mention a product such as grafana , it is written in go and allows you to visualize data from influxdb, elasticsearch, clickhouse, prometheus, graphite , as well as send notifications by e-mail, to slack and telegram.

First release: 2014 .

Interface

Choose the best

On the Internet and on Habré, including, it is full of examples of using various components from different products to get what you need.

carbon (agent) -> whisper (db) -> grafana (interface)

netdata (as agent) -> null / influxdb / elasticsearch / prometheus / graphite (as db) -> grafana (interface)

node_exporter (agent) -> prometheus (as a database) -> grafana (interface)

collectd (agent) -> influxdb (database) -> grafana (interface)

zabbix (agent + server) -> mysql -> grafana (interface)

telegraf (agent) -> elasticsearch (db) -> kibana (interface)

... etc.

I even saw a mention of such a bunch:

... (agent) -> clickhouse (db) -> grafana (interface)

In most cases, grafana was used as the interface, even if it was in conjunction with a product that already contained its own interface (prometheus, graphite-web).

Therefore (and also due to its versatility, simplicity and convenience) as an interface, I settled on grafana and proceeded to choosing a database: prometheus dropped out because I did not want to pull all its functionality together with the interface because of only one database, graphite - database of the previous decade, reworked by rrdtool-bd of the previous century, well, actually I settled on influxdb and as it turned out - not one I made that choice .

Also, I decided to choose telegraf for myself, because it met my needs (a large number of metrics and the ability to write my own plugins in bash), and also works with different databases, which may be useful in the future.

The final bunch I got is this:

telegraf (agent) -> influxdb (db) -> grafana (interface + notifications)

All components do not contain anything superfluous and are written in go . The only thing I was afraid of was that this bunch would be difficult to install and configure, but as you can see below, it was in vain.

So, a short installation guide for TIG:

influxdb

wget https://dl.influxdata.com/influxdb/releases/influxdb-1.2.2.x86_64.rpm && yum localinstall influxdb-1.2.2.x86_64.rpm #centos

wget https://dl.influxdata.com/influxdb/releases/influxdb_1.2.4_amd64.deb && dpkg -i influxdb_1.2.4_amd64.deb #ubuntu

systemctl start influxdb

systemctl enable influxdbNow you can make queries to the database (although there is no data there yet):

http://localhost:8086/query?q=select+*+from+telegraf..cputelegraf

wget https://dl.influxdata.com/telegraf/releases/telegraf-1.2.1.x86_64.rpm && yum -y localinstall telegraf-1.2.1.x86_64.rpm #centos

wget https://dl.influxdata.com/telegraf/releases/telegraf_1.3.2-1_amd64.deb && dpkg -i telegraf_1.3.2-1_amd64.deb #ubuntu

#в случае установки на сервер отличный от того где находится influxdb необходимо в конфиге /etc/telegraf/telegraf.conf в секции [[outputs.influxdb]] поменять параметр urls = ["http://localhost:8086"]:

sed -i 's| urls = ["http://localhost:8086"]| urls = ["http://myserver.ru:8086"]|g' /etc/telegraf/telegraf.conf

systemctl start telegraf

systemctl enable telegrafTelegraf will automatically create a base in influxdb with the name "telegraf", the username "telegraf" and the password "metricsmetricsmetricsmetrics".

grafana

yum install https://s3-us-west-2.amazonaws.com/grafana-releases/release/grafana-4.3.2-1.x86_64.rpm #centos

wget https://s3-us-west-2.amazonaws.com/grafana-releases/release/grafana_4.3.2_amd64.deb && dpkg -i grafana_4.3.2_amd64.deb #ubuntu

systemctl start grafana-server

systemctl enable grafana-server

The interface is available at

http://myserver.ru:3000. Login: admin, password: admin. Initially, nothing will be in the interface, because the graphan knows nothing about the data.

1) You need to go to the sources and specify influxdb (db: telegraf)

2) You need to create your own dashboard with the necessary metrics (it will take a lot of time) or import the finished one, for example:

928 - allows you to see all the metrics for the selected host

914 - the same

61 - allows metrics for selected hosts on one chart

Grafana has an excellent tool for importing third-party dashboards (just specify its number), you can also create your own dashboard and share it with the community.

Here is a list all dashboards working with influxdb data that were collected using the telegraf collector.

Emphasis on security

All ports on your servers should be open only from those ip that you trust or authorization must be enabled in the products used and the default passwords changed (I do both).

influxdb

In influxdb, authorization is disabled by default and anyone can do anything. Therefore, if there is no firewall on the server, then I highly recommend enabling authorization:

#Создаём базу и пользователей:

influx -execute 'CREATE DATABASE telegraf'

influx -execute 'CREATE USER admin WITH PASSWORD "password_for_admin" WITH ALL PRIVILEGES'

influx -execute 'CREATE USER telegraf WITH PASSWORD "password_for_telegraf"'

influx -execute 'CREATE USER grafana WITH PASSWORD "password_for_grafana"'

influx -execute 'GRANT WRITE ON "telegraf" TO "telegraf"' #чтобы telegraf мог писать метрики в бд

influx -execute 'GRANT READ ON "telegraf" TO "grafana"' #чтобы grafana могла читать метрики из бд

#в конфиге /etc/influxdb/influxdb.conf в секции [http] меняем параметр auth-enabled для включения авторизации:

sed -i 's| # auth-enabled = false| auth-enabled = true|g' /etc/influxdb/influxdb.conf

systemctl restart influxdbtelegraf

#в конфиге /etc/telegraf/telegraf.conf в секции [[outputs.influxdb]] меняем пароль на созданный в предыдущем пункте:

sed -i 's| # password = "metricsmetricsmetricsmetrics"| password = "password_for_telegraf"|g' /etc/telegraf/telegraf.conf

systemctl restart telegrafgrafana

In the source settings, you need to specify a new login for influxdb: "grafana" and the password "password_for_grafana" from the item above.

Also in the interface you need to change the default password for the admin user.

Admin -> profile -> change passwordUpdate: added an item to his criteria “free and open source”, forgot to specify it from the very beginning, and now I am advised of a bunch of paid / shareware / trial / closed software. There would be a deal with free.

How did i choose

1) first looked at the comparison of monitoring systems on the English Wikipedia

2) looked at the top-end projects on the github

3) looked at what is on this topic on the hub

4) google what systems are now in the trend

2) looked at the top-end projects on the github

3) looked at what is on this topic on the hub

4) google what systems are now in the trend

Update2: now a group of enthusiasts is creating a table in google docs , comparing various monitoring systems by key parameters (Language, Bytes / point, Clustering). Work in full swing, the current slice under the cut.

Update3: another comparison of Open-Source TSDB in Google Docs. A little more elaborate, but the systems are smaller than AnyKey80lvl

P.S .: if I omitted some points in the description of the setup-installation, then write in the comments and I will update the article. Typos - in PM.

PPS: of course, no one will hear this (based on previous experience in writing articles), but I still have to try: do not ask questions in PM on Habr, VK, fb, etc., but write comments here.

PPPS: the size of the article and the time spent on it are strongly out of the initial "budget", I hope that the results of this work will be useful to someone.

Only registered users can participate in the survey. Please come in.

Что вы используете для мониторинга?

- 11.9%munin186

- 7.7%cacti121

- 0.3%ganglia6

- 3.9%collectd61

- 5.3%graphite84

- 52.3%zabbix819

- 11.1%nagios175

- 3.1%icinga50

- 2.8%netdata45

- 7.8%prometheus122

- 7.8%influxdb+telegraf123

- 21.5%grafana (в качестве интерфейса)337

- 3.1%внешняя система мониторинга (saas)50

- 6%самописная система95

- 10.8%другое170