Why I don't trust microbenchmarks

I think one screenshot of a real-life performance measurement is enough to convey the point of this article, but if you are interested in my thoughts on the subject, read on.

Programmers are obsessed with execution speed. We chase speed even where it hardly matters, sometimes against common sense and logic, and often without fully understanding what the words “speed” or “performance” actually mean in a particular case. We still want the fastest hardware, the fastest language, and the lightest framework.

I readily believe that you, username, are not like that: that you can write a correct benchmark yourself, you know how this or that runtime works, you hate optimizing speed just for the sake of it, and you know a lot about hardware. But the people from the previous paragraph outnumber you. I've checked.

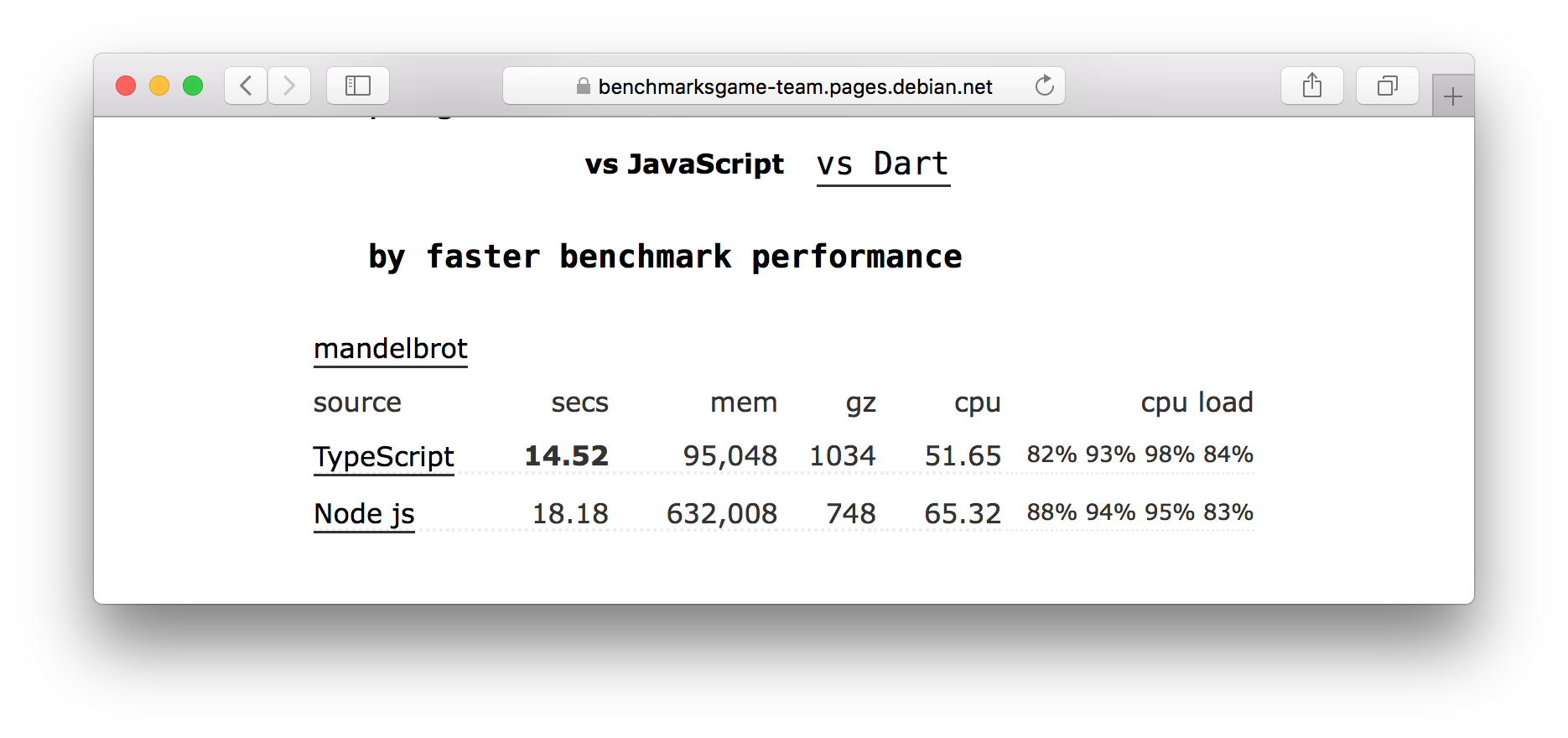

When I saw the example in the picture above, I nearly choked on my coffee...

- How can TypeScript run faster than JavaScript and at the same time use several times less memory?!

- No!

TypeScript does not have its own runtime. That is, you cannot run TypeScript directly anywhere, or almost anywhere. You first have to compile it to JavaScript, which you then run in a runtime that understands that JavaScript. In this case, node v11.3434 served as that runtime. By a happy coincidence, the JavaScript example runs on exactly the same runtime.
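To make the "no runtime of its own" point concrete, here is a tiny illustration. The function `add` is hypothetical and not taken from the benchmark. It shows that type annotations exist only at compile time. The comment shows roughly what `tsc` emits for it: the same function with the types erased. That erased output is all the runtime ever sees.

```typescript
// Hypothetical example: type annotations exist only at compile time.
function add(a: number, b: number): number {
  return a + b;
}

// Compiling the function above with `tsc` emits roughly:
//
//   function add(a, b) {
//       return a + b;
//   }
//
// The annotations are erased, so the runtime executes plain JavaScript.
console.log(add(2, 3));
```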

So instead of a comparison of languages, we get a micro-contest in competitive programming.

→ TypeScript code

→ JavaScript code

It turns out that execution time, memory consumption and other characteristics depend critically on who wrote the benchmark code and how. Of course, one could drag in the argument that TypeScript forces you to write "the right code". But nothing stops anyone from compiling the TypeScript version to JavaScript, and I did not see any optimizations in it either.
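The task and both functions below are hypothetical and not the code from the screenshot. They illustrate how much the author's choices matter. Both versions are written in the same language, run on the same runtime and return the same answer. However, version A performs a quadratic number of comparisons, while version B sorts a copy (O(n log n)). On large inputs, a "language benchmark" built on A versus one built on B would mostly measure that choice.

```typescript
// Hypothetical task (not the one from the screenshot): count distinct integers.

// Version A: for each value, scan everything seen so far. O(n^2) comparisons.
function countDistinctNaive(values: number[]): number {
  const seen: number[] = [];
  for (const v of values) {
    if (seen.indexOf(v) === -1) {
      seen.push(v);
    }
  }
  return seen.length;
}

// Version B: sort a copy, then count positions where the value changes. O(n log n).
function countDistinctSorted(values: number[]): number {
  if (values.length === 0) {
    return 0;
  }
  const sorted = values.slice().sort((a, b) => a - b);
  let count = 1;
  for (let i = 1; i < sorted.length; i++) {
    if (sorted[i] !== sorted[i - 1]) {
      count++;
    }
  }
  return count;
}

// Same language, same runtime, same answer.
const data = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3, 5];
console.log(countDistinctNaive(data), countDistinctSorted(data));
```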

By the way, when did you last see someone write code like this and get it through review? There is no trace of SOLID, OOP or FP here. The code is written in an explicitly procedural style, because its purpose is different. Don't get me wrong: the code solves the problem, but it would need refactoring before going to production, and nobody knows how that refactoring would affect performance. But that is probably nitpicking.

So much for this example. It is obviously not a fair comparison.

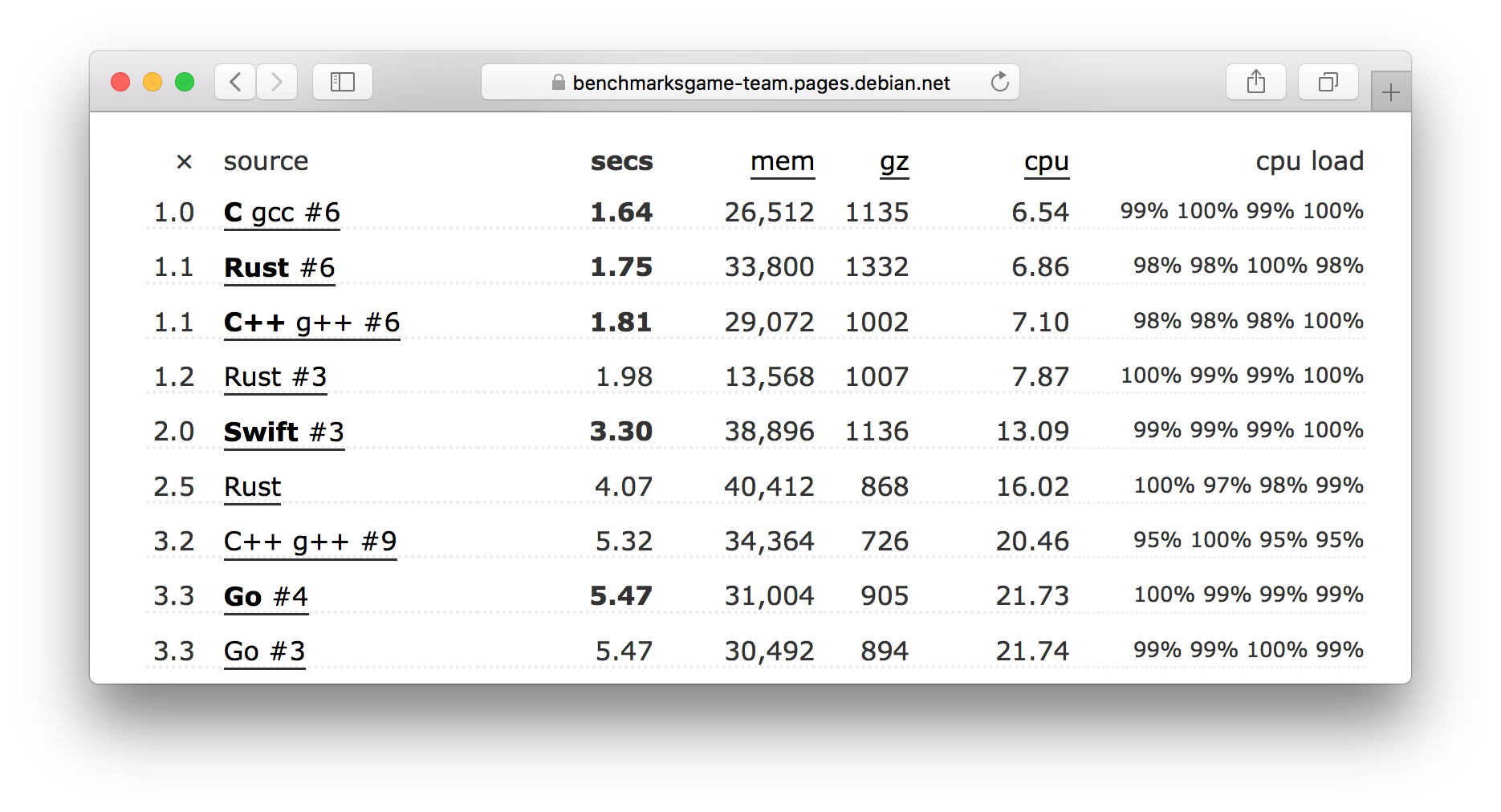

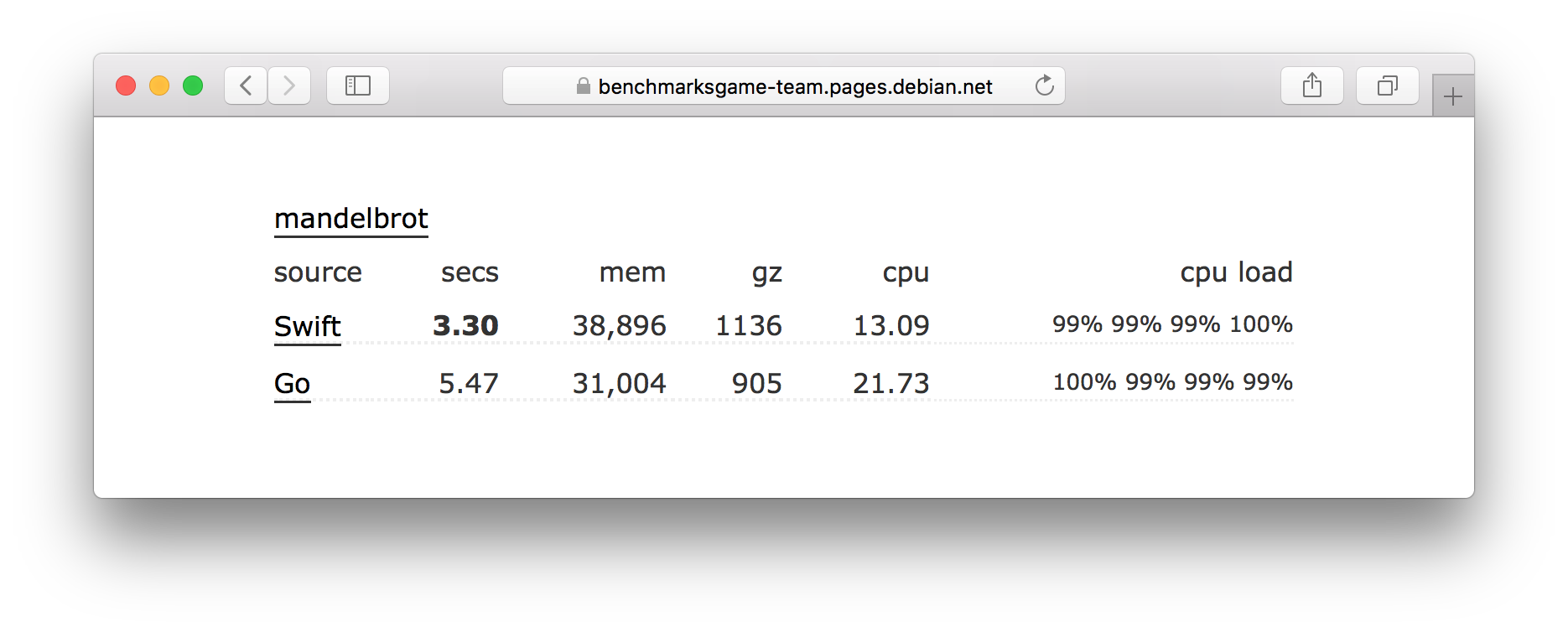

But let's look at how other programming languages did on the same test.

How valid do you think it is to conclude that Swift is faster than Go? I think it is not. It is enough to notice that the two Rust implementations (rows 2 and 6) differ in running time by a factor of two.

Here the problem looks more serious. The TypeScript vs. JavaScript comparison reads as a joke, but with other languages the problem is far less obvious. Would you have spotted what was wrong if you had seen such a report?

It turns out that the benchmark code needs to be checked closely.

Question: when did you last see someone who, right after seeing the usual pretty performance charts, went and double-checked how the benchmark code was written?

I meet such people very rarely. I suspect such engineers are vanishingly few.

On the other hand, we see plenty of examples where conclusions about a technology's performance and lightness are drawn from exactly such tests. And that, by the way, is how public opinion gets formed.

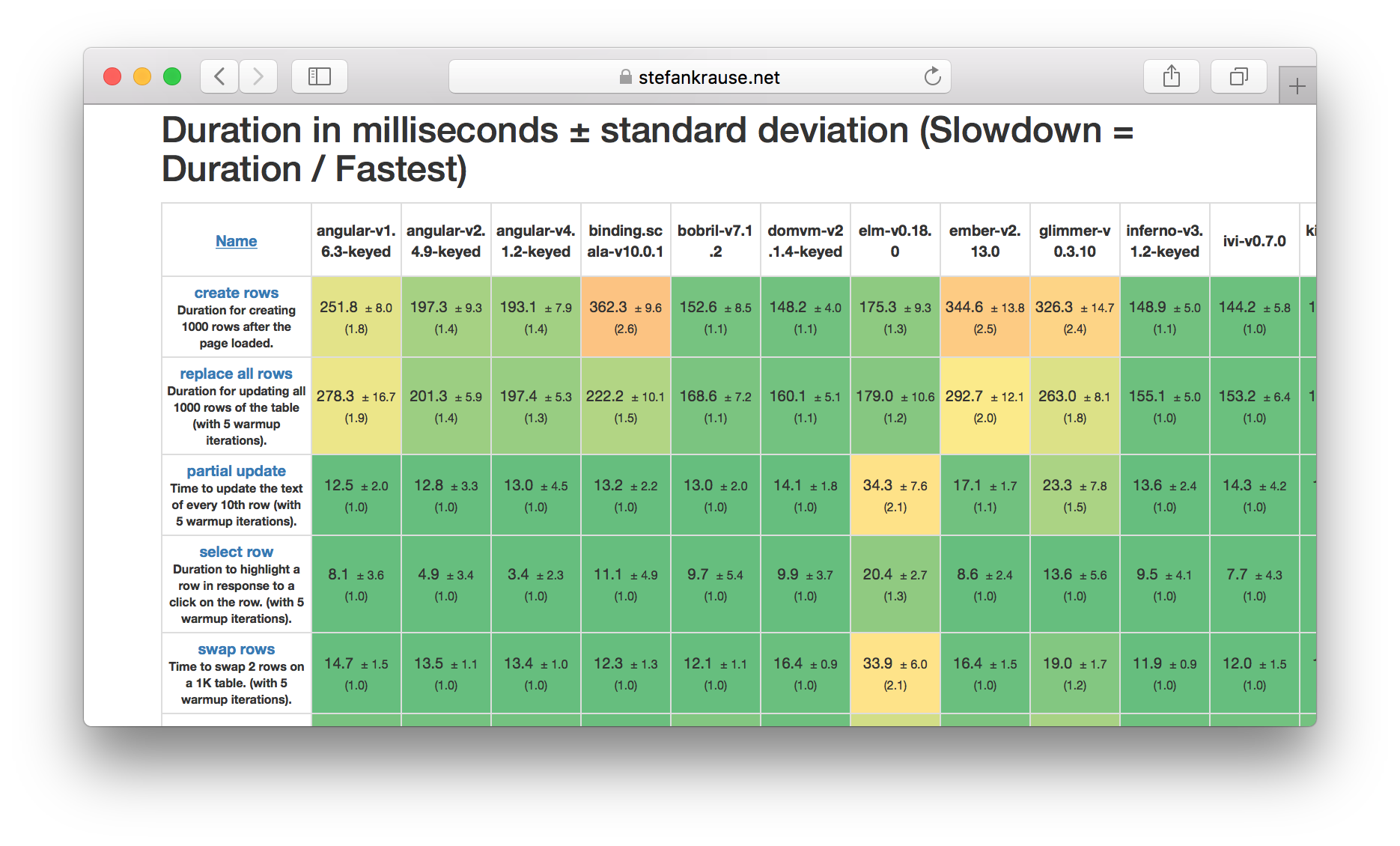

Here you can compare the performance of the major JavaScript frameworks.

Do you still trust the results? I don't.

That's all. But there is good news: the programmer's skill still has a strong influence on program performance.

Conclusion:

Microbenchmarks will not tell you anything unless you are a performance expert on the specific platform. Better still, write such benchmarks yourself, taking your own requirements and conditions into account and understanding what you are doing.
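If you do write your own, below is a minimal TypeScript harness sketch that covers a few basics. It warms up first, takes several timed samples and reports the median. The names `bench` and `BenchResult` and all default counts are my own illustrative assumptions. The code also assumes a global `performance.now()`, which is available in browsers and in Node.js 16+. This is not a substitute for a dedicated benchmarking tool, and it does not defeat every JIT optimization.

```typescript
// Minimal microbenchmark harness sketch. Assumptions: a global performance.now()
// (browsers, Node.js 16+); the default run counts are illustrative only.
interface BenchResult {
  name: string;
  medianMs: number; // median time per call, in milliseconds
  minMs: number;
  maxMs: number;
  checksum: number; // consumed results, so the work is harder to optimize away
}

function bench(
  name: string,
  fn: () => number,
  warmupCalls: number = 1000,
  samples: number = 21,
  callsPerSample: number = 100
): BenchResult {
  let checksum = 0;

  // Warm-up: give the JIT a chance to compile the hot path before measuring.
  for (let i = 0; i < warmupCalls; i++) {
    checksum += fn();
  }

  // Timed samples: each one averages several calls to reduce timer noise.
  const times: number[] = [];
  for (let s = 0; s < samples; s++) {
    const start = performance.now();
    for (let i = 0; i < callsPerSample; i++) {
      checksum += fn();
    }
    times.push((performance.now() - start) / callsPerSample);
  }

  // The median is less sensitive than the mean to outliers such as GC pauses.
  times.sort((a, b) => a - b);
  const mid = Math.floor(times.length / 2);
  const medianMs =
    times.length % 2 === 0 ? (times[mid - 1] + times[mid]) / 2 : times[mid];

  return { name, medianMs, minMs: times[0], maxMs: times[times.length - 1], checksum };
}

// Usage: the function under test should mirror your real workload and input sizes.
const result = bench("sum 0..999", () => {
  let s = 0;
  for (let i = 0; i < 1000; i++) {
    s += i;
  }
  return s;
});
console.log(`${result.name}: median ${result.medianMs.toFixed(4)} ms/call`);
```

The absolute numbers will differ between machines, runtime versions and runs. Compare results only under the same conditions, and only for code that resembles what you will actually ship.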

PS: This post was written in response to hasty comparisons and conclusions about future performance. Of course, all the conclusions and arguments in the article could be made without this example, but with numbers and specifics it is more fun and clearer.
