Attempts to discover a new checker tactics or What to do with a pipe dream

Introduction

The sports game "Checkers" is one of the games of humanity that the computer has not yet fully calculated. There is news that scientists have found a strategy in which the computer never loses. In my 9 years devoted to this game, I met only one program that I could not defeat in any way. Perhaps my sports experience will make it possible to assume that it was a program that implements the strategy described above. To my great surprise, it took up only 60 MB. Or maybe there was a well-trained neural network at the core? But still I can’t believe that it’s impossible to calculate them. There are only 10 ^ 20 positions, can my computer not cope with such a task? And also, is there really no tactic in which, at the beginning of a game, an opponent gives a saber and finds themselves in a tactical advantage ?! I have never seen such a debut. I'll go check ...

Implementation of the algorithm for solving combinational problems

The first attempt was made at the end of the 2nd year. After a quality study of the C language, I thought that it would not hurt to learn C ++. After watching a large number of lectures on this language, I wanted to take up some project. And he took up the checkers. Finer work with variables allowed us to spend more time rationally calculating positions. If these actions are likened to actions with graphs, then this algorithm could be called a breadth-first search. The stop of the algorithm was a complete passage through the graph.

One object described the situation on the board. In one object was stored:

class Queue

{

public:

Queue *pnr; /*pointer to next record*/

Queue *ppr; /*pointer to prev record*/

Map *pdb; /*pointer to draught board*/

char *action; /*actions of parents*/

Queue *pp; /*pointer to parent*/

Queue *pfc; /*pointer to first child*/

int nmd; /*Approval of the number of moves to a draw*/

int color;

};

Since the breadth-first search algorithm was implemented here, the data could be stored in a doubly connected sheet, where pnr and ppr pointers implement this collection.

pdb - Pointer to the placement of the board.

action - data on the progress made by the parent, in order to achieve this position. It looked like a regular record of moves in checkers “c3 – d4” when moving the checkers or “c3: e5” when cutting. The variable was necessary for more accurate debugging of the current situation.

pp , pfc- pointers to the parent and to the first child position. Because the search algorithm was implemented in width, then all the generated positions in the doubly linked list were located side by side, one after the other. Therefore, in order to save data in the form of a tree, it is enough to store a pointer to the first child, and all other subsequent children referred to the same parent. This algorithm allowed the parent to extract the results of the children, that is, to analyze the current situation, looking only at what this or that move that gave rise to the child can lead to.

nmd- A number indicating how many moves are left before the game is considered a draw. For example, in the situation of 3 ladies versus the 1st, only 15 moves are allocated to complete the game. When the number of checkers changes, and also after the checkers become a queen, this number is recalculated.

color - Whose move is now.

I was very afraid of the stack overflow and thought that passing pointers to functions would still be better, so I decided to avoid directly passing the object as a parameter. The implementation was simple: take an element from the queue, examine it, generating subsequent positions, then take the next element from the queue and so on in a circle.

Result:

Viewed: 61.133 positions

Stored entries in the queue: 264.050 positions

RAM (2 GB) ended only with such data. Such results are only suitable for short combinational problems.

But this way of working with checkers positions allowed us to carefully debug the work of the "core" of the program.

Transition to the "version control system"

In the algorithm, a lot of memory was spent saving data about the board. Each position required to allocate a piece of memory. Moreover, how to code the current checker arrangement better, I did not come up with:

/* info about one checker */

class Checkers

{

public:

chlive live;

chstatus status;

};

/* info about position a checker */

class PosCheckers

{

public:

chcolor color;

Checkers *ptrCheckers;

};

/* battlefield */

class Map

{

public:

PosCheckers coordinate[8][8];

};

color - Checker color. Black, white, or empty.

ptrCheckers - If there are no checkers in this field, then do not allocate memory.

status - Queen or checker.

live - Is the checker alive. Only in order not to cut down this checker again.

I understand that instead of coordinate [8] [8], only coordinate [8] [4] could be dispensed with, but understanding your own code would be very affected.

In general, a lot of memory was required to maintain the position, so I decided to assign the responsibility for identifying the positions of the pieces to action - a record of the parent’s progress, which contained data of type “c3-d4”.

Now, when we take an element from the queue, we, starting from the very beginning. We take the starting position of the checkers and from it already on the way back, we carry out moves that attracted to this child. So the arrangement of checkers on the board was built for each element of the queue.

Result:

Viewed: 1.845.265 items

Stored entries in the queue: 8.209.054 items

Rejection of the "version control system"; go to deep search

Unfortunately, the breadth-first search had at least a few significant problems: Sometimes it created the same batch layouts. And to recognize an already created party, with a lot of data, was time-consuming. It was also not clear what to do when we ran out of memory. All the stored data was necessary in order to restore the ideological chain, so that, if necessary, “scoop” the memory from somewhere, it did not turn out to be.

The search “in depth” allowed us to move from local problems (solutions to combinational problems) to global ones (miscalculation of the entire batch). This algorithm considers the current arrangement of checkers, spawning descendants, selects the first of them and assigns it to the role of the current one. If there are no descendants, then we indicate that in this position we lost and go on to consider the next arrangement with the parent.

Also, in order not to calculate repeated positions in the presence of ladies, it was decided to look at all the parent positions. If there is one, I do not consider it, but proceed to the next.

It was decided to remove from the memory of the great-grandchildren at the constellation, in which the result was known. Thus, it was possible to avoid its overflow. If overflow does not occur when searching for the deepest element.

It was also decided to add a new way to store a position - a list of shapes:

class ListCheckers

{

public:

ListCheckers *pnr;

chcolor color;

chstatus status;

int x,y; /* coordinate */

};

With it, we began to more quickly find the possibility of a log house, as well as sort out checkers to check the possibility of a move. Now our “position” object stored in the queue had the following fields:

class Queue

{

public:

ListCheckers *plch; /*pointer to list of checkers*/

ListChilds *pcs; /*pointer to list of childs*/

chcolor color;

int draw;

chresult result;

};

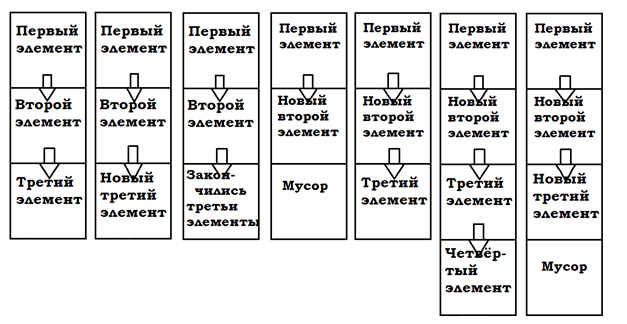

I did not expect that the free command does not guarantee 100% free memory . During debugging, it turned out that after executing the free command, memory was not freed at all. Somewhere in the dark of the forums, I found out that free only gives the OS instructions about freeing up memory, and the OS alone decides whether to free up memory or not. In general, my OS was “greedy”, so I had to work with memory in a different way. I allocated memory as needed to keep the chain of the first “children”. And instead of deleting the “first” child that we looked at, the data about it was overwritten with data about the next child.

For this, an element was developed that is responsible for dynamic work with memory:

class MainQueue

{

public:

MainQueue* parent; /*pointer to parent’s record*/

MainQueue* next_record; /*pointer to child’s record*/

Queue* now; /*pointer to record on data with position*/

int draw;

};

Result:

For 1 day of continuous work:

2.040.963 lots were created; 1.241.938 lots were

viewed; RAM

occupied: 1.378 MB

Work with files

Unfortunately, with such a choice of the next element, we would have "hung" for a long time with the same positions. Especially with miscalculations for the ladies. Therefore, I wanted to implement a database where the results of already viewed positions would be stored. Therefore, I decided to store data about each batch.

Moving away from the memory problem, I ran into it again. Since I did not have enough RAM, I decided to use an external medium. All the positions I wrote to the index.txt and queue.txt file. In queue.txt, I stored data about each batch. And in index.txt - the batch identifier and the location of information about this batch in queue.txt, that is, the offset. I wanted to compress the data, but leave it readable. Because I thought that the finish line was still far away. Therefore, I saved the data in this form:

queue.txt :

aaaaeaaaaacaaaaa aaaaeaaaaaakaaaa 50 U

Aaaaaaeaaacaaaaa Aaaaaaauaacaaaaa 49 U

aaaaaaeakaaaaaaa aaaaaaeacaaaaaaa 48 W

Aaaaaaaaaauaaaaa 47 L

…

index.txt :

Aaaaeaaaaaaacaaa000000000000000000000

aaaaeaaaaacaaaaa000000000000000000040

aaaaeaaaaaakaaaa---------------------

Aaaaaaeaaacaaaaa000000000000000000080

Aaaaaaauaacaaaaa000000000000000000178

…On the board, a game cell can take 5 states: white / black checker, white / black queen, or empty. So, two cells will have 25 states in various combinations. Note that the Latin alphabet has 26 letters and it is quite suitable in order to describe the state of 2 game cells at once with one letter. This means that the entire board with 32 game cells can be described with 16 letters of the Latin alphabet, which have a more or less readable look. It is also necessary to save whose move in this position. Then, if the first letter is capitalized, then the move is now black. We should also remember the number of moves from the current lineup to accepting a positional draw. And also the result: W-win, L-lose, D-draw, U-unknown. And debugging has not become more difficult.

Result:

For two hours, the program required only 4 MB of RAM, but counted very few parties. There were 2918 entries in queue.txt and was equal to 401 KB. The index.txt file contained 6095 records and weighed 232 KB. At such a pace, only 500 million = 5 * 10 ^ 8 positions can be calculated, and my 1TB drive reported that he did not have enough memory. Yes, and it will happen very, very soon. My calculated positions will have a weak effect on the whole game.

Data compression

I was able to come up with only 3 options for promoting the project:

- 5 ^ 32 - various arrangements of figures,

2 * 5 ^ 32 - considering whose move

2 * (2 * 5 ^ 32) - the maximum amount of occupied space is ~ 9.32 * 10 ^ 22 bits , provided that each arrangement is sufficient indicate the result equal to 2 bits.

Moreover, in a regular batch there are 5 * 10 ^ 20 different positions.

So, about 2 * (2 * 5 * 10 ^ 20) = 2 * 10 ^ 21 bits will be informational, and the rest ~ (9,12 * 10 ^ 21) will indicate that such a placement of drafts in this party does not exist.

1TB = 8 * 10 ^ 12 bits are available.

It is necessary to develop a compression algorithm for this task, while maintaining fast data indexing. - In a regular batch there are 5 * 10 ^ 20 different positions.

For quick indexing, we will save each position of its “children”, as well as the result. On average, a position has about Y descendants.

We encode the “links” to descendants in X bits, and the result is 2 bits, as well as the separator between entries in Z. We get (X * Y + 2 + Z) bits for each position. Total (X * Y + 2 + Z) * 5 * 10 ^ 20 bits are required to be allocated for storage of all positions used in initial arrangement.

1TB = 8 * 10 ^ 12 bits are available.

It is necessary to develop a compression algorithm for this task, while maintaining fast data indexing. - Let's try to find repeating patterns in the records and implement replacing the replays with shorter ones. The algorithm is ideally similar to the Huffman code.

Negatively affect not so fast indexing.

In total, you need to compress the data from ≈10 ^ 22 to 10 ^ 13 bits. So, it was necessary to write an algorithm that would allow compressing data by only 99.9999999914163%. I doubt that any algorithm is capable of this, moreover, without considering yet that it is necessary to maintain fast indexing.

Result:

Saving any data about all parties is not at all applicable.

Return to the project only on RAM. Creating a "processor"

It was customary to store data that was stored in files in RAM. To do this, it was decided to change the data storage structure:

class list_strings

{

public:

list_strings* next;

char* data;

list_strings()

{

data = new char[17];

data[0] = '\0';

next = nullptr;

}

};

class Queue

{

public:

ListCheckers *plch; /*pointer to list of checkers*/

list_strings *pcs; /*list of data of childs*/

chcolor color;

chresult result;

int to_draw;

}

Also, the principle of storing the Queue object has been changed. After the MainQueue has been rewritten, the Queue object pointed to by MainQueue is also rewritten.

The “pcs” field for storing “children” is now implemented as a singly linked list with data such as “Aaaaaaaaacaaaaa”, expanding as necessary. In order to use the allocated memory repeatedly (when overwriting), the data fields became equal to '\ 0' - zero, to indicate that the fields do not contain important information. Because The “object” has changed.

Result:

Maximum available memory space: 844 KB. Running for 7 hours allowed viewing 8.865.798.818 positions. But, positions can be repeated. These results are insufficient to achieve a complete calculation of the party for an acceptable lead time.

Now we can say that there is a “processor” that will grind the positions and it will only need 844 KB of RAM, which means that the remaining memory can be spent usefully, for example, implement “cache memory” so as not to calculate the positions that have already been calculated .

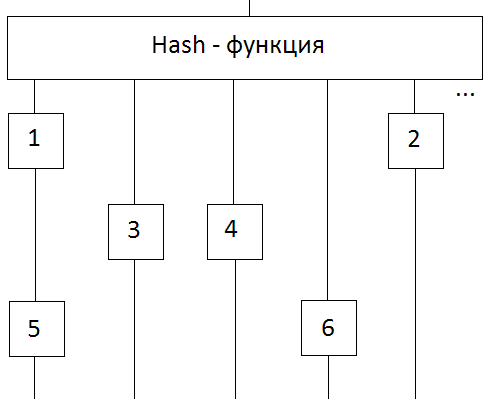

Creating a "cache"

For the fastest data retrieval from memory, a hash table was selected that fills the maximum possible space in RAM. As the hash function, the last numbers of the md5 algorithm were selected, the input of which was fed the encoded described position. That is, the position “Aaauaueaaacckaaa” with md5 0237d0d0b76bcb8872ecc05a455e5dcf will be stored at: f * 2 ^ 12 + c * 2 ^ 8 + d * 2 ^ 4 + 5 = 15 * 4096 + 12 * 256 + 13 * 16 + 5 = 64725.

The unit of storage for a record in a hash table was a cell. This approach allows you to delete data from "obsolete" cells and reuse their space. Obsolescence is implemented using a ring buffer:

At the first address in the cache memory cells No. 1 and No. 5 are stored, in the second No. 3 ... And in the ring buffer the cells are stored in chronological order. If we could store a maximum of 5 cells, then cell No. 1 was “pulled out” from the place where it is now and placed in place of the 6th cell. Moreover, now in the cache-memory at the first address will be stored only cell number 5.

class mem_cel

{

public:

mem_cel* pnhc; /* pointer to next in hash collection */

mem_cel* pphc; /* pointer to prev in hash collection */

mem_cel* pnrb; /* pointer to next "mem_cel" record in ring buffer */

mem_cel* pprb; /* pointer to prev "mem_cel" record in ring buffer */

chresult result;

int draw;

stringMap condition;

};

The condition field is necessary for identifying the desired checker arrangement, because different arrangements can be stored at the same cache address.

The draw field is necessary to identify a match request. If the memory contains a draw of the position for which only 3 moves were allocated, then if there are more moves, you can either win or lose.

Result:

Running for 1 hour allowed viewing 10,400.590 positions. It is necessary to implement something to speed up this miscalculation. But, if you make calculations, then my computer, at best, will calculate 10 ^ 20 positions within: 10 ^ 20 / 10.400.590 = 9.6 * 10 ^ 12 hours = 4 * 10 ^ 11 days.

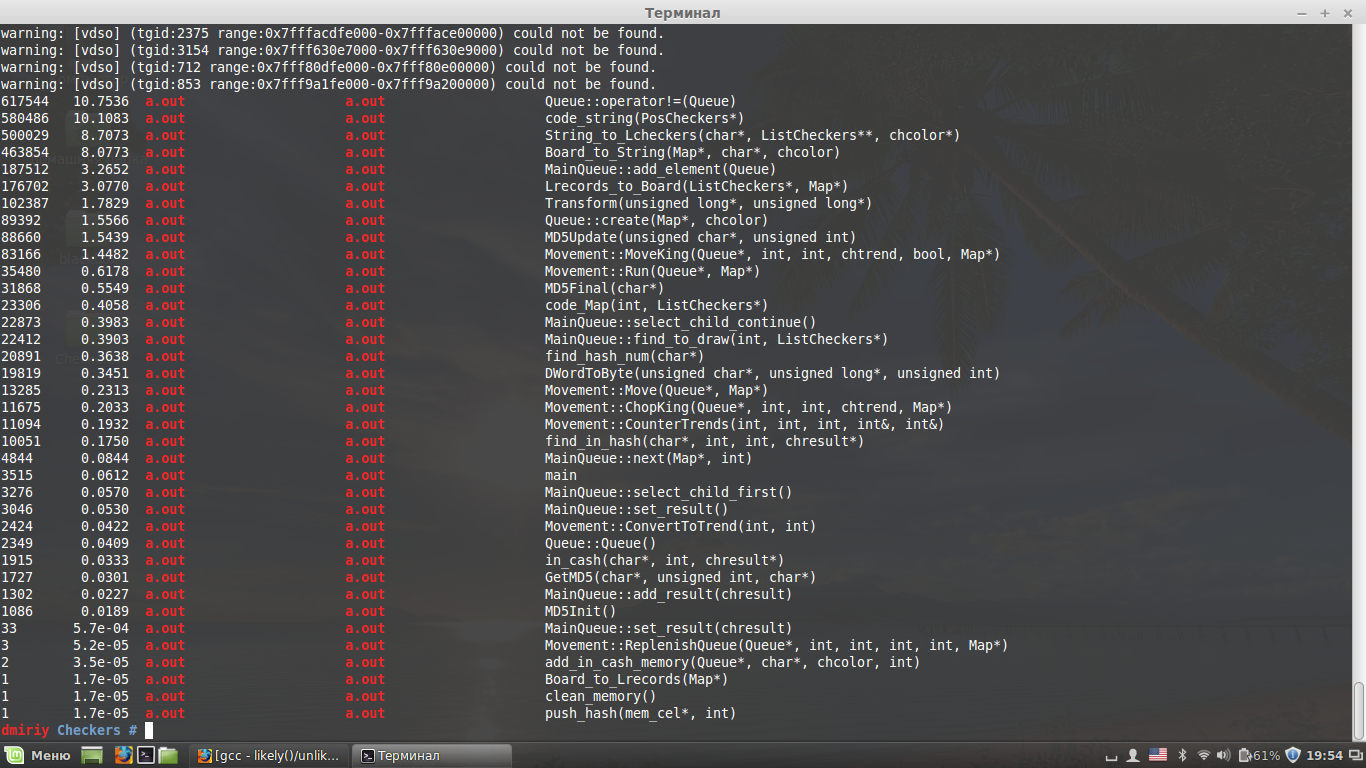

Let's see which piece of code is the “narrow neck”. For this purpose, I used the OProfile profiler:

1.

Queue::operator!=(Queue)Checking the difference in queue elements. Called when a new item is added to the queue to check for a triplicate position. The check is performed with all the elements that led to the current position.

Optimization: When changing the number of pieces on the board or their status, mark. In order to with positions that have a different set of shapes, do not check.

2.

code_string(PosCheckers*)Used to convert a checkered item element to a letter. Required for Board_to_String (Map *, char *, chcolor).

Optimization: First, reduce the number of calls to the Board_to_String (Map *, char *, chcolor)

3 function .

String_to_Lcheckers(stringMap, ListCheckers**, chcolor*)4.

Board_to_String(Map*, char*, chcolor)Called often enough. So it is necessary to reduce the number of calls to them.

String_to_Lcheckers is needed to convert text to a collection of checkers data.

Board_to_String is needed to translate the position into text that can be stored in the OP.

Optimization: Since I need to work with three data structures to represent the same position on the board:

Lcheckers - a list of checkers on the field. Needed to quickly select checkers to see the possibility of a move. Map or “Board” - an array of [8] [8]. It contains a complete balance of power.

stringMap or “String” - a string for storing data. Therefore, it is necessary to reduce the number of conversions from one data representation to another, or try to reduce the number of data structures.

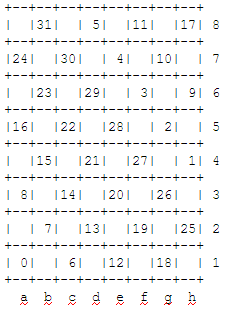

Magical bitBoards

Unexpectedly for myself, I found a solution on habrahabr: Magical bitboards and Russian checkers . In which the author of the article proposes to encode information about the board using 4 32-bit words.

Words:

1) the positions of all figures in white

2) the positions of all figures in black

3) the positions of checkers in white

4) the positions of checkers in black

And the positions of the figures are numbered as follows:

Now, to find out the possibility of a log house with at least one checker, it is enough to move the position of all the checkers in the corresponding direction:

bitboard_t try_left_up = ((((checkers & possible_left_up) << 1) & enemy) << 1) & empty;

This accelerates the search for opportunities, as a log house, and a simple move. Unfortunately, I still did not understand the author of this article, how he decided to work with the lady. At the moment I don’t have a beautiful for, which, of course, needs to be fixed in the future.

Thus, you can replace the 3 data structures described above with one:

typedef uint32_t bitboard_t;

bitboard_t bbMap[4];

Also, this change allowed not to use md5 to find the number in the hash table. This mission was entrusted with this formula:

#define HASH_NUM 0xFFFFFF

hash_num = ((bbMap[0] ^ ~bbMap[1]) ^ ReverseBits(~bbMap[2] ^ bbMap[3])) & HASH_NUM;

Where ReverseBits is a bit reversal. Example: there was a number 0x80E0, it became 0x0701.

Result:

Running for 1 hour allowed viewing 15.140.000 positions, which is undoubtedly better. But this is still not enough for a complete miscalculation.

Fixed bug

Previously, I indicated 754,000,000 positions per hour. Shameful typo in code ...

I believe that the project algorithm has been implemented. Therefore, you can focus on speeding up the functions.

Speeding up functions

Having read about likely / unlikely, I decided to check its "acceleration" of the code. In general, likely / unlikely indicates what instructions the processor should load during the execution of the if statement. Either those that are after then, or after else. If the choice of instructions is unsuccessful, for example, then, the processor is idle, waiting for instructions indicated after else. Under the MS compiler, such an instruction is called __assume.

Having implemented each if in this way, I decided to test:

__assume(selected == nullptr);

if (selected != nullptr)

{

return selected;

}

Result:

To my great surprise, the code really accelerated a lot. For the first hour, 16.750.000 positions were calculated.

I did not dare to implement assembler inserts. My code immediately becomes unreadable, and this is an important aspect for me. Since interruptions in the project I sometimes reached several months.

Limit the number of calculated moves in depth

Unfortunately, this can be called like this: over time, I really realized that it is not yet possible to calculate all the positions. Therefore, I added a move limiter with the resulting function:

checker = +1 point, lady = +3 points. I realize that this approach is not entirely true, but for the most part it will give valid values. Therefore, I decided to dwell on this.

Result:

I returned to where I started. With a limiter on the number of calculated moves, my program is currently a program for solving combination problems. But this is not scary, the limiter can be removed.

Overall result:

In the first hour, ≈17 million positions are calculated.

What to do next? Unclear. You can use CUDA, parallelize the calculations ... But I don’t think that with this you can calculate at least 50 moves for each player on one computer. To know how many positions you have to sort out ...

You need to change the algorithm ... How? There are no ideas ... Can use advertised neural networks? I do not think that the neural network will appreciate the loss of checkers at the beginning of the game.

So far, I’ll wait for the coming time of the appearance / cost reduction of faster computers. Perhaps then more advanced technologies in code acceleration will already appear. And while I go to study neural networks, maybe I'm wrong and they will be at the head of the computational algorithm ... Wait and see.

Some data:

The maximum miscalculation depth - The number of positions viewed

1 - 7

2 - 56 (We make the first move of white. Then we generate 7 new positions for black. We look at each of them and make sure that we don’t need to calculate further. We make the second move of white ... 7 + 7 * 7)

3 - 267

4 - 1017

5 - 3570

6 - 11 460

7 - 34 455

8 - 95 921

9 - 265 026

10 - 699 718

11 - 1 793 576

12 - 4 352 835

13 - 10 571 175