MIT course "Computer Systems Security". Lecture 19: “Anonymous Networks”, part 1 (lecture from the creator of the Tor network)


Massachusetts Institute of Technology. Course 6.858, "Computer Systems Security." Nickolai Zeldovich, James Mickens. 2014.

Computer Systems Security is a course on the design and implementation of secure computer systems. Lectures cover threat models, attacks that compromise security, and techniques for achieving security, based on recent research papers. Topics include operating system (OS) security, capabilities, information flow control, language security, network protocols, hardware security, and security in web applications.

Lecture 1: “Introduction: threat models” Part 1 / Part 2 / Part 3

Lecture 2: “Control hijacking attacks” Part 1 / Part 2 / Part 3

Lecture 3: “Buffer overflow exploits and defenses” Part 1 / Part 2 / Part 3

Lecture 4: “Privilege Separation” Part 1 / Part 2 / Part 3

Lecture 5: “Where Security System Errors Come From” Part 1 / Part 2

Lecture 6: “Capabilities” Part 1 / Part 2 / Part 3

Lecture 7: “Native Client Sandbox” Part 1 / Part 2 / Part 3

Lecture 8: “Network Security Model” Part 1 / Part 2 / Part 3

Lecture 9: “Web Application Security” Part 1 / Part 2 / Part 3

Lecture 10: “Symbolic execution” Part 1 / Part 2 / Part 3

Lecture 11: “Ur / Web programming language” Part 1 / Part 2 / Part 3

Lecture 12: “Network security” Part 1 / Part 2 / Part 3

Lecture 13: “Network Protocols” Part 1 / Part 2 / Part 3

Lecture 14: “SSL and HTTPS” Part 1 / Part 2 / Part 3

Lecture 15: “Medical Software” Part 1 / Part 2 / Part 3

Lecture 16: “Side-channel attacks” Part 1 / Part 2 / Part 3

Lecture 17: “User authentication” Part 1 / Part 2 / Part 3

Lecture 18: “Private browsing” Part 1 / Part 2 / Part 3

Lecture 19: “Anonymous Networks” Part 1 / Part 2 / Part 3

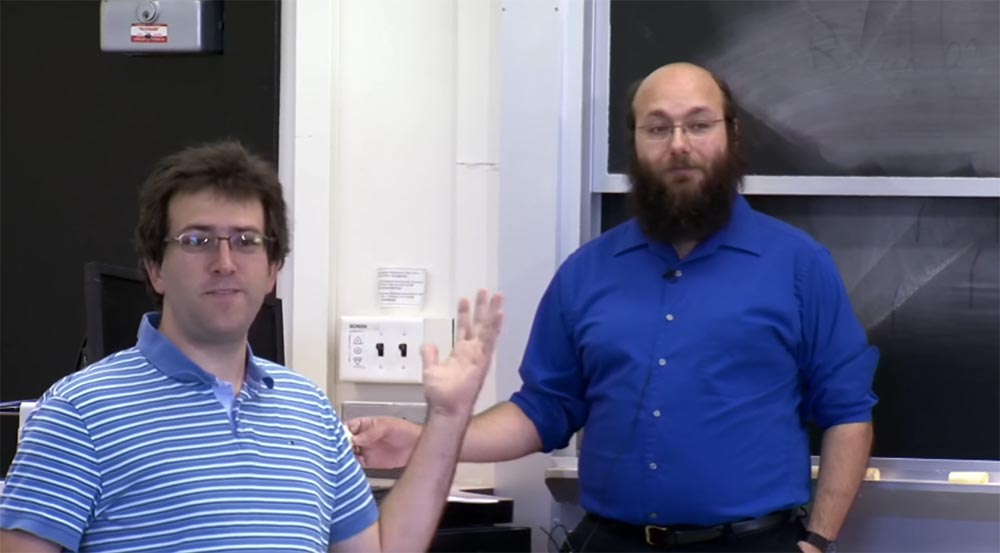

Nickolai Zeldovich: All right, guys, let's get started! Today we will talk about Tor. With us is one of the authors of the paper you read for today, Nick Mathewson. He is also one of the main developers of Tor, and he is going to tell you about this system in detail.

Nick Mathewson: I could start by saying “please raise your hand if you haven't read the paper,” but that would not work, because people are ashamed to admit they haven't read it. So I will ask another way: think about your date of birth. If the last digit of your date of birth is odd, or if you have not read the paper, please raise your hand. All right, almost half the audience. I take it that most of you did read the paper.

So, communication tools that preserve our privacy let us communicate more honestly and gather better information about the world, because fear of social or other consequences, justified or not, otherwise makes us less open in what we say.

This leads us to Tor, which is an anonymity network. Together with my friends and colleagues, I have been working on this network for the last 10 years. We have a group of volunteers who provide and operate over 6,000 servers to keep Tor running. At first they were friends whom Roger Dingledine and I knew from our time at MIT.

After that, we advertised our network, and more people started running servers. Tor is now run by non-profit organizations, private individuals, some university teams, perhaps some of the people here, and, no doubt, some very dubious individuals. Today we have about 6,000 nodes that serve hundreds of thousands to hundreds of millions of users, depending on how you count. It is difficult to count users because they are anonymous, so you have to estimate with statistical methods. Our traffic is on the order of terabytes per second.

Many people need anonymity for their everyday work, and not everyone who needs anonymity thinks of it as anonymity. Some people say that they do not need anonymity because they are happy to identify themselves.

But there is a widespread understanding that privacy is necessary or useful. When ordinary people use anonymity, they tend to do it because they want privacy in their search results or in the research they do online. They want to be able to take part in local politics without offending local politicians, and so on. Researchers often use anonymity tools to avoid collecting biased data, because sites may serve them different versions of things depending on geolocation.

Companies use anonymity technology to protect sensitive data. For example, if I can track all the movements of a group of employees of a large Internet company just by watching how they connect to their web server from different places around the world, or watch which companies around the world visit it, then I can learn a lot about who they work with. Companies prefer to keep such information secret. Companies also use anonymity technology to conduct research.

For example, one major router manufacturer, I don't know if it still exists, regularly sent completely different versions of its product specifications to IP addresses associated with its competitors, to make reverse engineering harder for them. The competitors discovered this with the help of our network and told the manufacturer: “Hey, wait a minute, we got a completely different specification when we went through Tor than the one we got from you directly!”

Law enforcement agencies also need anonymity technology so that they do not tip off suspects by watching them. You do not want the IP address of the local police station to show up in the web logs of a suspect's computer. As I have already said, ordinary people need anonymity to avoid harassment over their online activity when they are looking into very sensitive things.

If you live in a country with uncertain health legislation, you do not want your illnesses to become public, or others to find out about some of your unsafe hobbies. Many criminals also use anonymity technology. It is not their only option: if you are willing to buy time on a botnet, you can buy pretty good privacy that is unavailable to people who consider botnets immoral.

Tor, like anonymity tools in general, is not the only multi-purpose privacy technology. Let's see... the average graduate student is about 20 years old. In your lifetime, has anyone talked to you about the crypto wars? No!

Meanwhile, during the 1990s in the United States, the question of how legitimate civilian use of cryptography was, and how far its export in public applications should be allowed, was up in the air. It was resolved only in the late 1990s and early 2000s. And although there is still some debate about anonymity technology, it is nothing more than a debate. I think it will end the same way, with anonymity being recognized as legal.

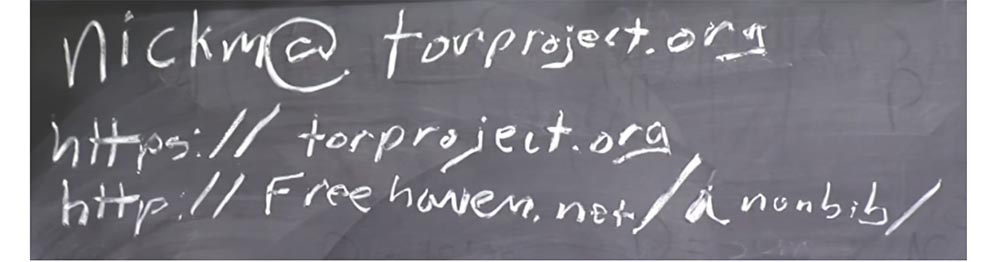

So on the board you see an outline of my talk. I have given you a little introduction; next we will discuss what anonymity means in a technical sense and talk a little about the motivation for using it.

After that, I am going to take you step by step along a path that starts with the idea that we need some anonymity and ends with what the Tor design should look like given that idea. I will also mention some of the branch points along the way that could lead you to other designs. I also intend to spend time on some of the interesting questions you all sent in as homework for this lecture.

I will tell you a little about how node discovery works, which is an important topic. After that, I will take a vote on which of the additional topics listed here to cover.

I think we call them additional because they come after the main material of the lecture, and I cannot cover them all, but these are really cool topics.

I will mention some Tor-related systems whose designs you should read about if this topic interests you and you want to know more.

I will talk about the future work we want to do on Tor, which I hope we will someday have time for. And if after all this there is time left for your questions, I will answer them all. I hope I will not need an extra hour of lecture time. My colleague David is sitting over there in the audience; he will hang around during the lecture and talk to anyone who wants to discuss the subject.

So, anonymity. What do we mean when we talk about anonymity? There are many informal notions that people use in informal discussions, on forums, on the Internet, and so on. Some people think anonymity simply means “I will not put my name on it.” Some people think anonymity is when “no one can prove that it is me, even if they strongly suspect it.”

We mean a number of notions that describe an attacker's ability to link a user to actions on the network. These notions come from the terminology in Pfitzmann and Hansen's paper; you will find a link to it at freehaven.net/anonbib/, the anonymity bibliography, which I help maintain.

This bibliography includes most of the good work in this area. We still need to bring it up to date through 2014, but even in its current form it is a rather useful resource.

So when I say “anonymity”, I mean the following. Alice is engaged in some kind of activity. Suppose Alice is buying new socks. And here we have some kind of attacker; let's call her Eve. Eve can tell that Alice is doing something. Preventing that is not what we mean by anonymity; that is called unobservability. Perhaps Eve can also tell that someone is buying socks. Again, preventing that is not what we mean by anonymity. But we hope that Eve will not be able to tell that Alice is the person buying new socks.

By this we mean that Eve not only cannot prove with mathematical certainty that it is Alice who buys socks, but also cannot conclude that Alice is more likely to be buying socks than any random person. I would also like Eve, watching Alice's activity, to be unable to conclude that Alice sometimes buys socks, even if Eve does not learn about any particular sock purchase.

There are other notions related to anonymity. One of them is unlinkability, the absence of a direct link. Unlinkability concerns a profile that Alice maintains over time. For example, Alice writes a political blog under the pseudonym “Bob”, and it could ruin her career if her superiors found out. So she writes as Bob. Unlinkability, then, is Eve's inability to link Alice to a specific user profile, in this case the profile named Bob.

The final notion is unobservability: some systems try to make it impossible even to tell that Alice is on the Internet, that she is connecting to anything on the network, or that she is showing any network activity at all.

Such systems are quite difficult to build; later I will say a little about how useful they are. What can be useful in this area is hiding the fact that Alice uses an anonymity system, rather than the fact that she is on the Internet. That is more achievable than completely hiding the fact that Alice is on the Internet.

Why did I start working on this in the first place? Well, partly because of an “engineering itch.” It is a cool problem, an interesting problem, and nobody was working on it yet. In addition, my friend Roger got a contract to finish a stalled research project that had to be completed before the grant expired. He did so well at it that I said, “Hey, I will join in too.” After some time, we formed a non-profit organization and released our project as open source.

As for deeper motivations, I think humanity has many problems that can be solved only through better and more focused communication, freer expression, and greater freedom of thought. I do not know how to solve those problems. The only thing I can do is try to keep freedom of communication, thought, and conversation from being infringed.

Student: I know there are many good reasons to use Tor. Please do not take this as criticism, but I am curious how you feel about criminal activity?

Nick Mathewson: What is my opinion on criminal activity? Some laws are good, some are bad. My lawyer would tell me never to advise anyone to break the law. My goal was not to make it possible for criminals to break the majority of laws, which I agree with. But where criticizing the government is illegal, I am in favor of that kind of criminal activity, and in that case you can say I support it.

My position on the use of an anonymity network for criminal activity is that where the existing laws are just, I would prefer that people not break them. In addition, I think that any computer security system that is not used by criminals is a very bad computer security system.

I think that if we ban security that works for criminals, we will end up with completely insecure systems. That is my opinion, although I am more of a programmer than a philosopher, so I will give very banal answers to philosophical and legal questions. In addition, I am not a lawyer and cannot give a legal assessment of this problem, so do not take my statements as legal advice.

Nevertheless, many of the research problems I will talk about are far from solved. So why did we go ahead and build the system anyway? One reason is that we believed anonymity research could not advance without the necessary test platform. That view has been fully confirmed: Tor has become a research platform for work on low-latency anonymity systems and has been a great help in this area.

But even now, 10 years later, many big problems are still unsolved. So if we had waited 10 years for everything to be fixed, we would have waited in vain. We also expected that the existence of such an anonymity system would have long-term effects on the world. It is very easy to argue that something that does not exist should be banned. Arguments against civilian use of cryptography were much easier to make in 1990 than they are today, because at that time there was practically no strong encryption in civilian use. Opponents of civilian cryptography could argue that if you legalized anything stronger than DES, civilization would collapse, criminals would never be caught, and organized crime would take over everything.

But in 2000 you could no longer argue that the spread of cryptography would be a disaster for society, because by then civilian cryptography already existed, and it turned out not to be the end of the world. In addition, by 2000 it would have been much harder to advocate a ban on cryptography, because the majority of voters favored its use.

So if someone in 1985 had said “let's ban strong cryptography,” one could have assumed that banks needed it and made an exception for the banking sector. But apart from the banks, nobody in the public, civilian sphere had an acute need to encrypt information.

But if someone in 2000 had demanded a ban on strong encryption, it would have been a blow to every Internet company, and everyone running HTTPS pages would have started screaming and waving their hands.

So today a ban on strong cryptography is practically infeasible, although people occasionally return to the idea. But again, I am neither the philosopher nor the political analyst of this movement.

Some people ask me, “What is your threat model?” It is good to think in terms of threat models, but unfortunately our threat model is rather strange. We started not from requirements for resisting an adversary, but from usability requirements. We decided that the first requirement for our product was that it be usable for web browsing and interactive protocols, and given that, we intended to provide as much security as possible. So our threat model looks rather strange if you try to write down what an attacker can do, under what circumstances, and how. That is because our goal was, first of all, for the product to work on the real Internet.

I will come back to this in a couple of minutes, but for now let's talk about how we build anonymity.

So, here is Alice, who wants to buy socks. Suppose Alice controls a computer. We will call it R, a relay, which forwards Alice's traffic to the site... I would like to call it socks.com, but I am afraid that would turn out to be something terrible, so I will call it zappos.com; they sell socks too.

So Alice wants to buy socks at zappos.com, and she goes through this relay that she controls. Anyone eavesdropping on it will say: “Most likely this is Alice; it is her computer.”

Now suppose that two more people use the same relay. Let's just call them A2, Alice 2, and A3, Alice 3, because I have run out of standard cryptographic names. They buy books and post photos of cats, which is 80% of what people usually do on the Internet, right?

All three users go through one computer, and three streams now come out of it. Someone watching this computer will not easily be able to tell, or at least we hope so, and we will come back to this later, that the first Alice is buying socks, the second Alice is buying books, and the third Alice is posting photos of cats.

Well, except that if he watches the connection on Alice's side, he will see her tell R: “please connect me to zappos.com”. To handle that, we add some encryption between A and R; for example, we use TLS on all these links. As long as the attacker cannot break TLS and so cannot link Alice to the request for zappos.com, Alice gets some privacy.

But this is still not enough, because, first of all, we are assuming that R is fully trusted. I hope you know the definition of the term “trusted” and why it does not really mean “trustworthy”. R is trusted in the sense that it can break the entire system, not in the sense that it truly deserves trust.

So we can use several relays. We can give different relays to different people. That is not actually the topology we use in our system, but my drawing skills are terrible and I do not want to redraw anything. We can imagine that these connections pass through several relays, each of which removes one layer of encryption.

So all that the first, leftmost relay sees is that Alice is doing something. All that the last, rightmost relay sees is that someone is buying socks, and it sees that only through a connection that came from the middle relay. The first relay simply sees Alice doing something and passes the connection on to the second relay. No single party along the path can link Alice to what she is doing.

Now we come to the basic system design. Suppose Eve is observing here, on the link between Alice and R, and here, on the link between R and the sock shop. What I have not mentioned yet is that nothing here hides the timing and volume of Alice's traffic. Of course, there is some noise from computation, decryption, network latency, and the like.

But in the end, if Alice sends a kilobyte of data, the construction I have drawn emits the same kilobyte at the other end. And if the sock shop's web page is 64 KB long and the web server sends it at 11:26, then Alice will receive 64 KB at 11:26 or 11:27.

Now, with some statistics, Eve can match up these data streams if we do not hide their volume and timing. There are designs that do hide the volume and timing of communication, for example anonymous remailers such as Mixmaster and systems such as DC-nets.
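The matching Eve performs on unpadded traffic can be sketched roughly as follows. This is only a toy illustration: the flow records, the site names ending in `.example`, and the `max_delay` and `size_slack` thresholds are all made-up assumptions, and a real attack would use far more careful statistics.

```python
# Toy flow-correlation sketch. All flows and thresholds below are invented.

def correlate(entry_flows, exit_flows, max_delay=120, size_slack=0.05):
    """Return, for each user, the sites whose flow matches in size and time.

    Each flow is (timestamp_seconds, byte_count). A pair matches when the
    entry-side flow appears 0..max_delay seconds after the exit-side flow
    and the byte counts differ by at most size_slack (as a fraction).
    """
    matches = {}
    for user, (t_entry, n_entry) in entry_flows.items():
        matches[user] = [
            site
            for site, (t_exit, n_exit) in exit_flows.items()
            if 0 <= t_entry - t_exit <= max_delay
            and abs(n_entry - n_exit) <= size_slack * n_exit
        ]
    return matches


T_1126 = 11 * 3600 + 26 * 60  # 11:26, in seconds since midnight

# What Eve sees between the relay and the web sites (responses going in).
exit_flows = {
    "zappos.com": (T_1126, 65536),
    "books.example": (T_1126 + 5, 20000),
    "cats.example": (T_1126 + 9, 300000),
}

# What Eve sees between the relay and the users (responses coming out).
entry_flows = {
    "Alice": (T_1126 + 30, 65700),
    "Alice 2": (T_1126 + 40, 20100),
    "Alice 3": (T_1126 + 70, 301000),
}

guesses = correlate(entry_flows, exit_flows)
for user, sites in guesses.items():
    print(user, "->", sites)
```

Because nothing pads or delays the traffic, each user's flow lines up with exactly one site, even though Eve never decrypts anything.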

Each of these nodes might collect a large number of requests over an hour, then shuffle them and send them all out at the same time. We can also require all requests and all responses to be the same size, for example 1 KB requests and 1 MB responses. With a little work on this, you get something that can deliver an e-mail message within an hour, or return a web page an hour after you request it. This assumes you optimize for a single round trip. Such systems exist, and they existed when we started developing Tor.
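A minimal sketch of such a batching node, assuming a fixed 1 KB cell and a simple length-prefix padding format, might look like this. The `BatchMix` class and the padding layout are hypothetical, chosen for illustration; they are not the format of Mixmaster or any other real remailer, and a real mix would also encrypt each cell.

```python
import random

CELL_SIZE = 1024  # every request is padded to exactly 1 KB (assumed size)


def pad(message: bytes, size: int = CELL_SIZE) -> bytes:
    """Prefix a 2-byte length, then zero-fill up to the fixed cell size."""
    if len(message) > size - 2:
        raise ValueError("message too long for one cell")
    filler = b"\x00" * (size - 2 - len(message))
    return len(message).to_bytes(2, "big") + message + filler


def unpad(cell: bytes) -> bytes:
    """Recover the original message from a padded cell."""
    length = int.from_bytes(cell[:2], "big")
    return cell[2:2 + length]


class BatchMix:
    """Collects padded messages and releases them together in random order."""

    def __init__(self, rng=None):
        self.pool = []
        # SystemRandom draws from the OS entropy source.
        self.rng = rng or random.SystemRandom()

    def accept(self, message: bytes) -> None:
        self.pool.append(pad(message))

    def flush(self) -> list:
        """Called once per batching interval (for example, once an hour)."""
        batch, self.pool = self.pool, []
        self.rng.shuffle(batch)
        return batch


mix = BatchMix()
for msg in (b"buy socks", b"buy books", b"post cat photo"):
    mix.accept(msg)

released = mix.flush()
print([len(cell) for cell in released])  # every cell has the same length
```

All cells leave at the same moment with the same length and in shuffled order, so arrival time, size, and ordering no longer tell an observer which output belongs to which input. The price is the latency of waiting for the batch.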

They are not really used. I wrote one such program, Mixminion, the successor to the Mixmaster remailer, but for the last 3 years I have not received a single message through a remailer. Tor has millions of users; remailers have no more than a few hundred.

You might think that these systems still provide better anonymity for people who really need it. But there is a catch: if you have only about a hundred users, you cannot really give them the anonymity they are counting on. An attacker will see that there are about a hundred people and that the message he cares about was addressed to a Bulgarian site. How many of those hundred people speak Bulgarian? Five! So breaking that anonymity will not be much of a problem.
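The arithmetic behind this example can be written down as a small sketch. The user records and the `speaks_bulgarian` field are invented for illustration; the point is only how quickly one side clue shrinks a small anonymity set.

```python
import math


def narrow(candidates, clue):
    """Keep only the candidates consistent with what the attacker observed."""
    return [user for user in candidates if clue(user)]


# 100 hypothetical users of the system, 5 of whom speak Bulgarian.
users = [{"id": i, "speaks_bulgarian": i < 5} for i in range(100)]

suspects = narrow(users, lambda u: u["speaks_bulgarian"])

# log2 of the set size: how many bits the attacker still has to learn.
bits_before = math.log2(len(users))
bits_after = math.log2(len(suspects))

print(len(users), "users ->", len(suspects), "suspects")
print(round(bits_before, 2), "bits ->", round(bits_after, 2), "bits")
```

With millions of users, the same clue would still leave a large crowd to hide in, which is the sense in which a bigger user base protects everyone.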

It is said that anonymity loves company. Without a large user base, no system can actually provide anonymity. So even in this construction, if all our Alices belong to the same organization, they should share a public system, not a private corporate one. If they all legitimately work at MIT, are investigating some fake MIT website that sells fake diplomas, and use the official MIT anonymizer, that does not really hide who they are. But if a large number of different organizations all use the same system, then it can provide some privacy.

We will come back to correlation attacks, but for now let's say that we do not resist them. Instead, we have to minimize the chance that an attacker will control both ends of such a system and so be able to deanonymize its users.

I just talked about message-based systems. Suppose we have something like a mix network, where each of these relays is given a public key: K3, K2, K1. When Alice wants to send something to the site selling socks, she encrypts her message under the three keys like this: K1(K2(K3("socks"))).

But public-key cryptography, as you know, is quite expensive, so you do not want to use it for bulk traffic.

So instead you negotiate a set of keys with the servers. Alice shares one symmetric key with the first relay, another symmetric key with the second, and a third with the third relay. This is tied to what we call a circuit, a path through the network. Once the initial public-key step has set up these keys, Alice can use symmetric encryption to send data through the network.
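Assuming the three symmetric keys have already been negotiated, the layering can be sketched as below. The cipher is a toy XOR keystream derived from SHA-256, used only so that the example runs with the standard library; it is an illustrative stand-in, not what a real system should use, and the names `keystream`, `apply_layer`, and `onion_wrap` are hypothetical.

```python
import hashlib
import os


def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key plus a counter. Illustration only."""
    blocks = []
    for counter in range((length + 31) // 32):
        blocks.append(hashlib.sha256(key + counter.to_bytes(8, "big")).digest())
    return b"".join(blocks)[:length]


def apply_layer(key: bytes, data: bytes) -> bytes:
    """XOR data with the keystream; the same call adds or removes a layer."""
    return bytes(d ^ k for d, k in zip(data, keystream(key, len(data))))


def onion_wrap(message: bytes, hop_keys) -> bytes:
    """Encrypt for the last relay first, so the first relay's layer is outermost."""
    for key in reversed(hop_keys):
        message = apply_layer(key, message)
    return message


# One symmetric key per relay, assumed to be already shared with Alice.
hop_keys = [os.urandom(32) for _ in range(3)]

cell = onion_wrap(b"socks", hop_keys)

# Each relay in turn strips exactly one layer and forwards the rest.
after_each_relay = []
for key in hop_keys:
    cell = apply_layer(key, cell)
    after_each_relay.append(cell)

print(after_each_relay[-1])  # only after the third relay is the message readable
```

One caveat of this toy: plain XOR layers commute, so the order of wrapping does not change the final bytes. The structure is still the one described above, with each relay holding one key and able to remove only its own layer.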

If you stop at this stage, you get onion routing as it was developed in the 1990s by Syverson, Goldschlag, and Reed. Paul Syverson is still doing research in this area; the other two are working on other things.

Moreover, once you have such a scheme, in which data travels along a fixed path through the relays, you can easily build a reply channel: data sent back to Alice travels the same path in reverse, with each relay encrypting at its step instead of decrypting.
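The reply direction can be sketched in the same toy style. Here a hypothetical `Relay` class holds one shared key, each relay on the way back adds its layer, and Alice, who knows all three keys, removes them all. The fixed keys and the SHA-256-based XOR keystream are illustrative assumptions only, not a real cipher choice.

```python
import hashlib


def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key plus a counter. Illustration only."""
    blocks = []
    for counter in range((length + 31) // 32):
        blocks.append(hashlib.sha256(key + counter.to_bytes(8, "big")).digest())
    return b"".join(blocks)[:length]


def xor_with_key(key: bytes, data: bytes) -> bytes:
    return bytes(d ^ k for d, k in zip(data, keystream(key, len(data))))


class Relay:
    """A relay that shares one symmetric key with Alice (hypothetical class)."""

    def __init__(self, key: bytes):
        self.key = key

    def backward(self, cell: bytes) -> bytes:
        # On the way back to Alice the relay ADDS its layer.
        return xor_with_key(self.key, cell)


# Hypothetical per-relay keys, listed from the first relay to the last.
keys = [b"key-shared-with-relay-1", b"key-shared-with-relay-2",
        b"key-shared-with-relay-3"]
path = [Relay(k) for k in keys]

reply = b"order confirmed"

# The reply enters at the last relay and moves toward Alice.
cell = reply
for relay in reversed(path):
    cell = relay.backward(cell)

# Alice knows all three keys and strips every layer.
received = cell
for key in keys:
    received = xor_with_key(key, received)

print(received)
```

With an XOR stream cipher, adding and removing a layer are the same operation, which is why `backward` looks identical to what a relay would do in the forward direction. What differs is who holds which keys: only Alice can remove all three layers.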

And, of course, you need some kind of integrity checking, both between nodes and end to end, because without it the following can happen. Suppose you are using an XOR-based stream cipher. If there is no integrity check, this node, the first relay, can XOR something like “Alice, Alice, Alice, Alice, Alice” into the encrypted message. The scheme is quite malleable, so when the message is finally decrypted after the third relay, the mark is still there. If the same attacker controls the last node, or watches the link between the third relay and the target site as well as this spot here, he will see “Alice, Alice, Alice, Alice, Alice” XORed into the plaintext and be able to conclude that this stream belongs to Alice.
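This tagging attack is easy to reproduce with a toy XOR stream cipher. In the sketch below, the keystream construction, the key values, the zero-padded 32-byte payload, and the use of HMAC-SHA256 as the end-to-end check are all assumptions made for illustration; the sketch shows the general idea of malleability and of detecting it, not any particular system's cell format.

```python
import hashlib
import hmac


def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key plus a counter. Illustration only."""
    blocks = []
    for counter in range((length + 31) // 32):
        blocks.append(hashlib.sha256(key + counter.to_bytes(8, "big")).digest())
    return b"".join(blocks)[:length]


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def apply_layer(key: bytes, data: bytes) -> bytes:
    return xor_bytes(data, keystream(key, len(data)))


# Hypothetical keys: one per relay, plus an end-to-end integrity key.
hop_keys = [b"key-relay-1", b"key-relay-2", b"key-relay-3"]
mac_key = b"end-to-end-integrity-key"

# A 32-byte payload: the request followed by zero padding.
payload = b"GET /socks".ljust(32, b"\x00")
expected_mac = hmac.new(mac_key, payload, hashlib.sha256).digest()

# Alice wraps the payload in three layers.
cell = payload
for key in reversed(hop_keys):
    cell = apply_layer(key, cell)

# The malicious first relay removes its layer, then XORs a mark into the
# ciphertext. It cannot read the payload, but it does not need to.
cell = apply_layer(hop_keys[0], cell)
mark = b"\x00" * 10 + b"Alice" * 4 + b"\x00" * 2  # 32 bytes in total
cell = xor_bytes(cell, mark)

# The honest second and third relays remove their layers as usual.
cell = apply_layer(hop_keys[1], cell)
exit_plaintext = apply_layer(hop_keys[2], cell)

# Without an integrity check, the mark shows up where the padding was.
tag_visible = b"AliceAliceAliceAlice" in exit_plaintext

# With an end-to-end MAC, the modification is detected.
actual_mac = hmac.new(mac_key, exit_plaintext, hashlib.sha256).digest()
tamper_detected = not hmac.compare_digest(actual_mac, expected_mac)

print(exit_plaintext)
print("tag visible:", tag_visible, "| tamper detected:", tamper_detected)
```

The mark survives the two honest relays because XOR layers simply pass bit flips through to the plaintext. The MAC comparison at the end is the kind of check that lets the receiving side reject the cell instead of forwarding the attacker's signal.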


MIT course "Computer Systems Security". Lecture 19: "Anonymous Networks", part 2
