DotHill 4824 Storage Overview

This review covers the modest DotHill 4824 storage system. Many of you have probably heard that DotHill, as an OEM partner, builds entry-level storage for Hewlett-Packard: the very popular HP MSA (Modular Storage Array), now in its fourth generation. The DotHill 4004 line corresponds to the HP MSA2040 with slight differences, which are described in detail below.

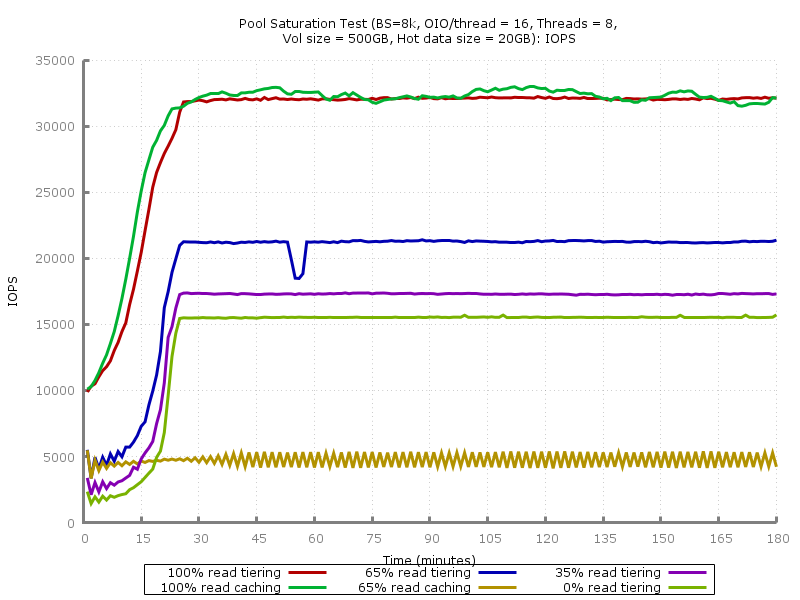

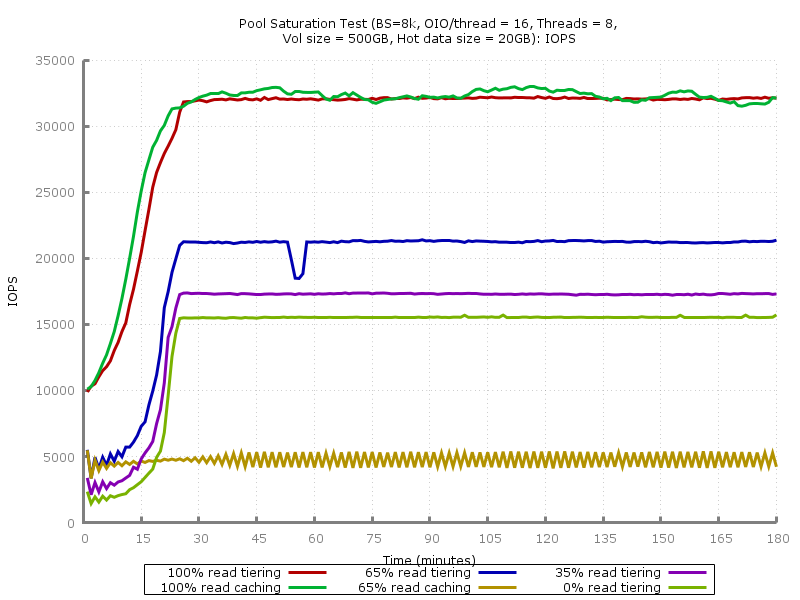

DotHill is a classic entry-level storage system: a 2U form factor, two chassis options for different drive sizes, and a wide variety of host interfaces. It has two controllers with mirrored cache working in asymmetric active-active mode with ALUA. Last year new functionality was added: disk pools with three-level tiering (tiered data storage) and SSD cache.

Specifications

- Form factor: 2U, 24x 2.5" or 12x 3.5"

- Interfaces (per controller): 4524C / 4534C - 4x SAS3 SFF-8644; 4824C / 4834C - 4x FC 8Gbps / 4x FC 16Gbps / 4x iSCSI 10Gbps SFP+ (depending on the transceivers used)

- Scaling: 192 2.5" drives or 96 3.5" drives, supports up to 7 additional disk shelves

- RAID support: 0, 1, 3, 5, 6, 10, 50

- Cache (per controller): 4GB with flash protection

- Functionality: snapshots, volume cloning, asynchronous replication (except SAS), thin provisioning, SSD cache, 3-level tiering (SSD, 10k/15k HDD, 7.2k HDD)

- Configuration limits: 32 arrays (vDisk), up to 256 volumes per array, 1024 volumes per system

- Management: CLI, Web-based, SMI-S support

DotHill Disk Pools

For those unfamiliar with the theory, it is worth explaining how disk pools and tiered storage work, or more precisely, how they are implemented in DotHill storage.

Before the pools, we had two limitations:

- The maximum size of a disk group. RAID-10, 5, and 6 can have a maximum of 16 disks, RAID-50 up to 32. If you needed a volume with a large number of spindles (for performance and/or capacity), you had to combine LUNs on the host side.

- Suboptimal use of fast disks. You can create many disk groups for several load profiles, but with a large number of hosts and services on them, constantly monitoring performance and capacity and periodically making changes becomes difficult.

A disk pool in DotHill storage is a combination of several disk groups with the load distributed between them. In terms of performance, you can think of the pool as RAID-0 across several subarrays, which already solves the problem of short disk groups. Only two disk pools, A and B, are supported on the storage system (one per controller), and each pool can have up to 16 disk groups. The main architectural difference is that pools make full use of the free allocation of stripes across disks. Several technologies and features build on this:

- Thin provisioning. The technology is not new; even those who have never seen a storage system have probably met TP, for example in desktop hypervisors. Volumes are created on top of a pool or a regular linear disk group and then presented to hosts. Disk space for a "thin" volume is not reserved up front but allocated only as it fills with data. This lets you pre-allocate disk space with growth forecasts in mind and allocate more space than physically exists. Later, either the system will not need expanding at all (not all volumes will actually use their allocated capacity), or we can add the necessary number of disks without resorting to complex manipulations (data migration, growing LUNs and the file systems on them, etc.). If you are afraid of a possible situation with a lack of volume,

When using thin provisioning, it is important to support the reverse process: returning unused disk space to the pool (space reclamation). When a file is deleted, the storage system cannot learn that the corresponding blocks were freed unless additional steps are taken. Storage systems with space reclamation once had to rely on the host periodically writing zeros (for example, with SDelete), but now almost all of them (DotHill included, of course) support the SCSI UNMAP command, the analogue of the well-known TRIM in the ATA command set.

- Ability to transparently add and remove pool elements. In traditional RAID arrays it is often possible to expand the array by adding disks, but most disk pool implementations, including DotHill's, let you add or remove a whole disk group without losing data. Blocks from a removed group are redistributed to the remaining groups in the pool, provided, naturally, that they have enough free blocks.

- Tiering (tiered data storage). Instead of distributing volumes with different performance requirements across different disk groups, you can combine disks with different performance into a common pool and rely on the automatic migration of blocks between three tiers. The criterion for migration is the intensity of random access. Frequently requested blocks "float up" to the top, and rarely used blocks "settle" on slow disks.

DotHill storage systems have three fixed tiers: Archive (7200 rpm drives), Standard ("real" SAS disks at 10/15 thousand rpm) and Performance (SSD). Because of this, it is possible to end up with a badly behaving configuration consisting, for example, of a dozen 7200 rpm disks and a pair of 15k disks: DotHill will consider the mirror of the two 15k disks faster and move "hot" data to it.

When planning a configuration with tiering, keep in mind that the performance gain always arrives with some delay, because the system needs time to move blocks to the right tier. The second point is that tiering in DotHill 4004 is primitive: there is no way to prioritize volumes. There are no elaborate schemes with profiles and tier affinity as in DataCore or some midrange storage systems; this is still an entry-level storage system. Want guaranteed performance immediately and always? Use regular disk groups. Another alternative to tiering in DotHill is the SSD cache, which is discussed below.
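As a rough illustration of the "hot blocks float up, cold blocks settle" idea described above, here is a toy Python sketch. It is not DotHill's algorithm: the access-count ranking, the `rebalance` function and the tier capacities are all assumptions made purely for illustration.

```python
# Tier names follow the article; capacities (in pages) are made-up toy values.
TIERS = [("Performance", 2), ("Standard", 3), ("Archive", 100)]


def rebalance(access_counts):
    """Place the most frequently accessed pages on the fastest tier.

    access_counts maps page id -> number of random accesses seen in the
    last sampling interval (a hypothetical metric).
    """
    # Hottest pages first; ties are broken by page id for determinism.
    ranked = sorted(access_counts, key=lambda p: (-access_counts[p], p))
    placement = {}
    start = 0
    for tier_name, capacity in TIERS:
        for page in ranked[start:start + capacity]:
            placement[page] = tier_name
        start += capacity
    return placement


if __name__ == "__main__":
    counts = {"p0": 50, "p1": 3, "p2": 40, "p3": 0, "p4": 7, "p5": 1}
    for page, tier in sorted(rebalance(counts).items()):
        print(page, "->", tier)
```

Because placement is recomputed only from past access counts, a block that becomes hot stays on a slow tier until the next rebalance, which is the delay mentioned above.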

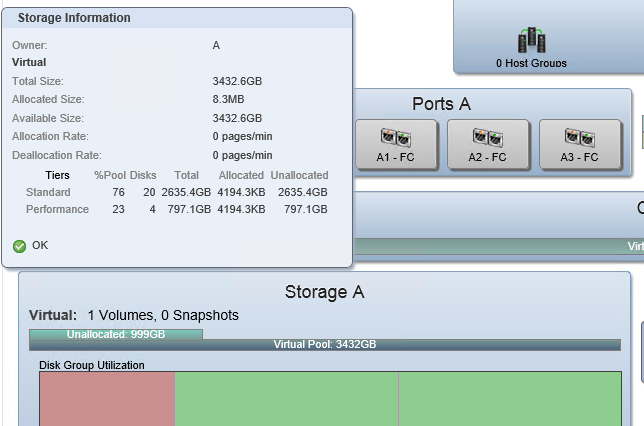

DotHill 4004 has a simple and intuitive tool for analyzing how efficiently the pool is used:

This is a screenshot of the Pools tab in the new web-based Storage Management Console (SMC) v3 (HP calls it SMU). It shows a pool consisting of two tiers: Standard (2 disk groups) and Performance. You can see at a glance how the data is distributed in the pool and how much space is allocated on each tier: only one volume has been created and it holds no real data yet, so just over 8 MB is actually allocated.

Second screenshot, performance:

A load test is running here after the volume was pre-filled. You can see how many IOPS and how much throughput each tier in the pool delivers. Space for new blocks is initially allocated on the Standard tier, and the migration to the Performance tier under load is already visible.

Differences from HP MSA2040

- The MSA 2040 is an HP product with all the consequences and benefits. First of all, there is an extensive network of service centers and the option to purchase various extended support packages. So far, only distributors and their partners handle Russian-language support and maintenance of DotHill. The only option on offer is 8x5 support with next-business-day response and next-business-day dispatch of spare parts.

- The documentation is completely identical to HP's except for names and logos, i.e. equally detailed and of equally high quality. Of course, HP has many additional FAQs, best practices, and reference architecture descriptions for the MSA2040. The HP web interface (HP SMU) differs only in the corporate font and icons.

- Price. Of course, nothing comes for free. The price of the MSA2040 in common configurations (two controllers, 24 450-600GB 10k drives, 8Gbps FC transceivers) is about 30% higher than that of the DotHill 4004. That is without CarePacks, and without the features HP licenses separately: the expanded number of snapshots (from 64 to 512) and asynchronous replication (Remote Snap), which add a few thousand more to the cost of the solution. Our statistics show that additional CarePacks for the MSA are bought extremely rarely in Russia; this is, after all, a budget segment. In most cases customers do the implementation themselves, sometimes the supplier does it as part of a larger project, and very rarely HP engineers do it.

- Disks. Both DotHill and HP use "their own" disks, that is, HDDs and SSDs with non-standard firmware, and the rails come with the disks. In the 3.5" form factor DotHill offers only nearline SAS drives with a spindle speed of 7200 rpm, so all fast drives are 2.5" only*. HP offers 300, 450, and 600GB 15,000 rpm drives in the 3.5" form factor.

* Update as of March 25, 2015: DotHill's 10k and 15k drives in the 3.5" version are still available on request.

- Related products. HP MSA 1040 is a budget version of the MSA 2040 with certain restrictions:

- No support for SSD. The optional auto-tiering is still there, it's just 2-level.

- Less host ports - 2 per controller instead of 4. There is no support for 16Gbps FC and SAS.

- Fewer drives - only 3 additional disk shelves instead of 7.

So if you do not need all the features of the MSA 2040, you can save about 20% and get close to the price of the “original” DotHill 4004 (which, unlike the MSA 1040, is full-featured).

DotHill has another variation: the AssuredSAN Ultra series. This is a storage system with exactly the same controllers, functionality and management interface as the 4004 series, but with a high disk density: Ultra48 - 48x 2.5" in 2U and Ultra56 - 56x 3.5" in 4U.

Performance

Storage Configuration

- DotHill 4824 (2U, 24x 2.5")

- Firmware Version: GL200R007 (the latest at the time of testing)

- Activated RealTier 2.0 License

- Two controllers with CNC ports (FC / 10GbE), 4 transceivers 8Gbit FC (installed in the first controller)

- 20x 146GB 15K rpm SAS HDD (Seagate ST9146852SS)

- 4x 400GB SSD (HGST HUSML4040ASS600)

Host configuration

- Supermicro 1027R-WC1R Platform

- 2x Intel Xeon E5-2620v2

- 8x 8GB DDR3 1600MHz ECC RDIMM

- 480GB SSD Kingston E50

- 2x Qlogic QLE2562 (2-Port 8Gbps FC HBA)

- CentOS 7, fio 2.1.14

The host was connected directly to one controller through four 8Gbit FC ports. Naturally, the volumes were mapped to the host through all four ports, and multipath was configured on the host.

Pool with tier-1 and cache on SSD

This test is a three-hour load (180 cycles of 60 seconds) of random access in 8KiB blocks (8 threads with a queue depth of 16 each) with different read/write ratios. The entire load is concentrated in the 0-20GB region, which is guaranteed to be smaller than the performance tier or the SSD cache (800GB). This was done so that the cache or tier would fill in an acceptable time.

Before each test run, the volume was created anew (to clear the SSD tier or SSD cache) and filled with random data (sequential write in 1MiB blocks), and read-ahead was turned off on the volume. IOPS and the average and maximum latency were recorded for each 60-second cycle.

Tests with 100% reads and with a 65/35 read/write mix were run both with an SSD tier (a disk group of 4x 400GB SSDs in RAID-10 added to the pool) and with an SSD cache (2x 400GB SSDs in RAID-0; the storage system does not allow more than two SSDs in the cache of each pool). The volume was created on a pool of two RAID-6 disk groups of ten 146GB 15k rpm SAS disks each (in effect, RAID-60 in a 2x10 layout). Why not RAID-10 or 50? To deliberately make random writes harder for the storage system.
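The load described above could be expressed as a fio job roughly like the one generated below. This is a reconstruction, not the job file actually used: the device path `/dev/mapper/mpatha`, the `libaio` engine, `direct=1` and the helper name `build_fio_job` are assumptions, while the block size, thread count, queue depth, region size and cycle length come from the text.

```python
def build_fio_job(device, read_pct, runtime_s=60):
    """Return fio job-file text for one 60-second cycle of the test load."""
    lines = [
        "[global]",
        "ioengine=libaio",      # assumption: Linux async I/O engine
        "direct=1",             # assumption: bypass the host page cache
        f"filename={device}",
        "rw=randrw",            # random access with a read/write mix
        f"rwmixread={read_pct}",
        "bs=8k",                # 8KiB blocks
        "numjobs=8",            # 8 threads...
        "iodepth=16",           # ...with a queue depth of 16 each
        "size=20g",             # load confined to the 0-20GB region
        "time_based",
        f"runtime={runtime_s}",
        "group_reporting",
        "",
        "[hot-region]",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # 65/35 read/write mix; the multipath device name is hypothetical.
    print(build_fio_job("/dev/mapper/mpatha", 65))
```

Running such a job 180 times in a row and collecting IOPS and latency after each run would give the per-cycle series plotted in the charts.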

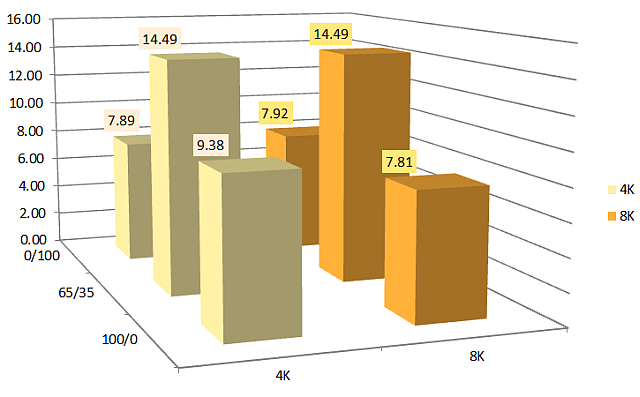

IOPS

The results were quite predictable. As the vendor claims, the advantage of an SSD cache over an SSD tier is that it fills faster, i.e. the storage system reacts more quickly to the appearance of “hot” areas under intense random access: with 100% reads, IOPS rise and latency falls faster than with tiering.

This advantage ends as soon as a significant write load is added. RAID-60 is, to put it mildly, poorly suited to random writes in small blocks, but this configuration was chosen specifically to show the essence of the problem: the storage system cannot keep up with the writes because they bypass the cache and go to the slow RAID-60, the queue quickly clogs, and little time is left for servicing read requests, even with caching. Some blocks still make it into the cache but quickly become invalid because writes are ongoing. This vicious circle makes a read-only cache ineffective for such a load profile. Exactly the same situation could be observed with early versions of SSD caching (before write-back) in LSI and Adaptec PCI-E RAID controllers. Solution - use an initially more productive volume, i.e.

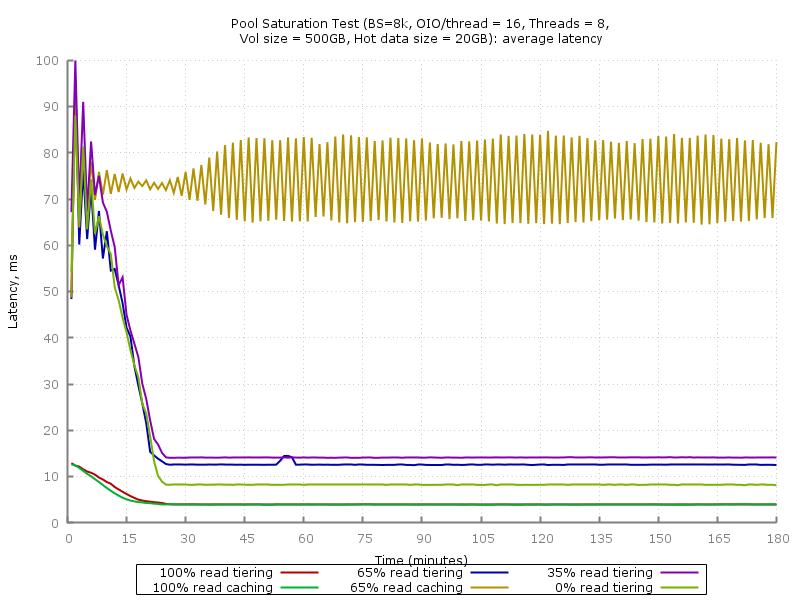

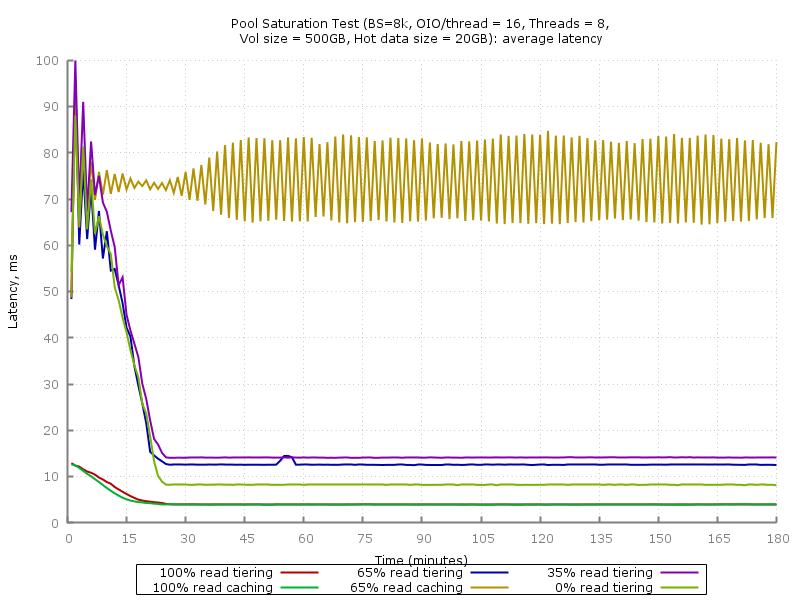

Average delay

Maximum delay

This chart uses a logarithmic scale. With 100% reads and the SSD cache, latency is more stable: once the cache has filled, peak values do not exceed 20ms.

What conclusions can be drawn on the "caching versus tiering" dilemma?

What to choose?

- Filling the cache is faster. If your load consists predominantly of random reads and the hot area changes periodically, choose the cache.

- Saving “fast” capacity. If the "hot" data fits entirely in the cache but not in the SSD tier, the cache may be more efficient. The SSD cache in DotHill 4004 is read-only, so a RAID-0 disk group is created for it. For example, with 4 SSDs of 400GB each you can get 800GB of cache for each of the two pools (1600GB in total), or half as much with tiering (800GB for one pool or 400GB for each of two). Of course, there is also a 1200GB option in RAID-5 for one pool, if the second pool does not need SSDs.

On the other hand, the total usable pool size with tiering will be larger, because only one copy of each block is stored.

- Cache does not affect sequential access performance. Caching does not move blocks, it only copies them. With a suitable load profile (random reads in small blocks with repeated access to the same LBAs), the storage system returns data from the SSD cache if it is there, or reads it from the HDDs and copies it to the cache. When a sequential load appears, the data is read from the HDDs. Example: a pool of 20 10k or 15k HDDs can deliver about 2000MB/s on sequential reads, but if the required data sits on a disk group of a pair of SSDs, we get only about 800MB/s. Whether that is critical depends on the real usage scenario.
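The capacity arithmetic from the "fast capacity" item can be checked with a short sketch. The `usable_gb` helper is purely illustrative and covers only the RAID levels mentioned there; the disk counts and sizes are the ones from the text.

```python
def usable_gb(raid_level, disks, disk_gb):
    """Usable capacity of one disk group for the RAID levels used here."""
    if raid_level == "RAID-0":
        return disks * disk_gb            # striping, no redundancy
    if raid_level in ("RAID-1", "RAID-10"):
        return disks * disk_gb // 2       # every block is mirrored
    if raid_level == "RAID-5":
        return (disks - 1) * disk_gb      # one disk's worth of parity
    raise ValueError(f"unsupported RAID level: {raid_level}")


if __name__ == "__main__":
    ssd_gb = 400
    # Read cache: two SSDs in RAID-0 per pool, two pools.
    cache_per_pool = usable_gb("RAID-0", 2, ssd_gb)
    print("cache per pool:", cache_per_pool, "total:", 2 * cache_per_pool)
    # Tiering: all four SSDs mirrored in one pool, or a mirror pair per pool.
    print("tier, one pool (RAID-10):", usable_gb("RAID-10", 4, ssd_gb))
    print("tier, per pool (RAID-1):", usable_gb("RAID-1", 2, ssd_gb))
    # Tiering with RAID-5 across all four SSDs in a single pool.
    print("tier, one pool (RAID-5):", usable_gb("RAID-5", 4, ssd_gb))
```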

4x SSD 400GB HGST HUSML4040ASS600 RAID-10

The volume was tested on a linear disk group: RAID-10 of four 400GB SSDs. In this DotHill shipment, the generic “400GB SFF SAS SSD” turned out to be the HGST HUSML4040ASS600. This is an Ultrastar SSD of the SSD400M series with fairly high rated performance (56000/24000 IOPS for 4KiB reads/writes) and, most importantly, an endurance of 10 drive writes per day for 5 years. HGST now has the more powerful SSD800MM and SSD1600MM in its lineup, but for the DotHill 4004 these are quite sufficient.

We used tests designed for single SSDs, the “IOPS Test” and “Latency Test” from the SNIA Solid State Storage Performance Test Specification Enterprise v1.1:

- IOPS Test. IOPS (input/output operations per second) are measured for blocks of various sizes (1024KiB, 128KiB, 64KiB, 32KiB, 16KiB, 8KiB, 4KiB) under random access with different read/write ratios (100/0, 95/5, 65/35, 50/50, 35/65, 5/95, 0/100). 8 threads with a queue depth of 16 were used.

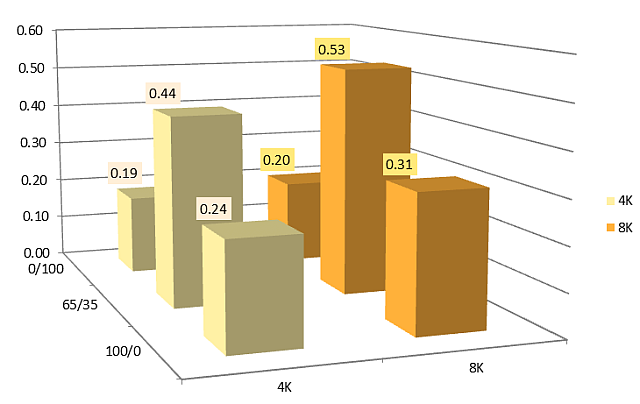

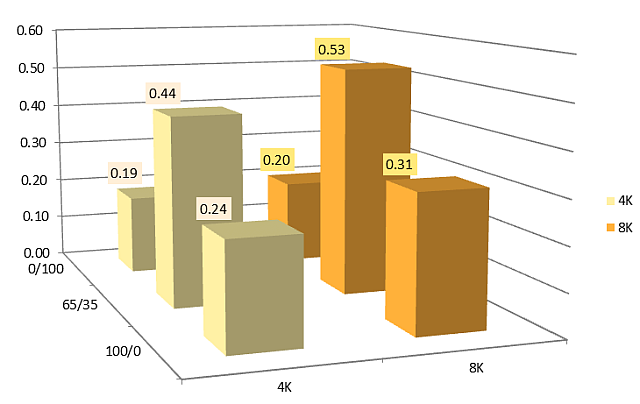

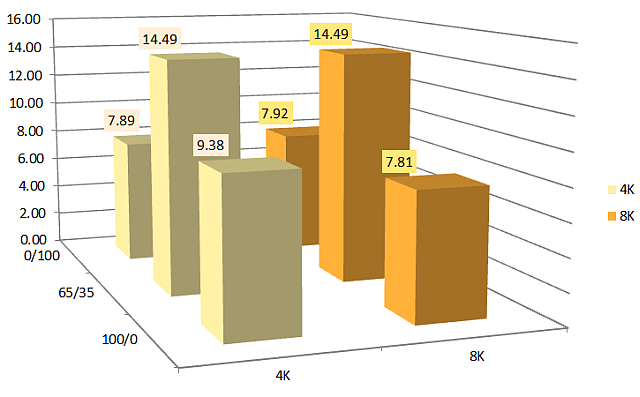

- Latency Test. The average and maximum latencies are measured for various block sizes (8KiB, 4KiB) and read/write ratios (100/0, 65/35, 0/100) at the minimum queue depth (1 thread with QD = 1).

The test consists of a series of measurements: 25 rounds of 60 seconds. Preconditioning is a sequential write in 128KiB blocks until twice the capacity has been written. The steady-state window (4 rounds) is checked by plotting. The steady-state criterion: the linear fit within the window must stay within 90%/110% of the average value.
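The steady-state check can also be done numerically instead of by eye. The sketch below follows the wording of the criterion above (a least-squares line over the window must stay within 90%/110% of the window average) rather than the full text of the specification; the function name and the sample values are illustrative.

```python
def is_steady_state(window, tolerance=0.10):
    """Check the steady-state criterion as worded in the text above.

    window is a list of per-round results (e.g. IOPS) with at least 2 items.
    """
    n = len(window)
    mean_y = sum(window) / n
    mean_x = (n - 1) / 2
    # Ordinary least-squares slope and intercept over rounds 0..n-1.
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(window))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # A straight line is extreme at the window edges,
    # so checking both ends is sufficient.
    ends = (intercept, intercept + slope * (n - 1))
    low, high = (1 - tolerance) * mean_y, (1 + tolerance) * mean_y
    return all(low <= value <= high for value in ends)


if __name__ == "__main__":
    print(is_steady_state([40000, 40500, 39800, 40200]))  # flat window
    print(is_steady_state([20000, 30000, 40000, 50000]))  # still ramping up
```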

SNIA PTS: IOPS test

As expected, we hit the claimed small-block IOPS limit of a single controller. For some reason DotHill quotes 100,000 read IOPS, while HP quotes a more realistic 80,000 IOPS for the MSA2040 (which works out to 40 thousand per controller), and that is what we see on the graph.

To verify the test, a single HGST HUSML4040ASS600 SSD was tested connected to a SAS HBA. With 4KiB blocks it delivered about 50 thousand IOPS for both reads and writes; under saturation (SNIA PTS Write Saturation Test) writes dropped to 25-26 thousand IOPS, which matches the figures declared by HGST.
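One way to sanity-check such numbers is Little's law, which ties the number of outstanding requests to throughput and average latency. The sketch below applies it to the load parameters from the text (8 threads with a queue depth of 16) and the roughly 40 thousand IOPS per controller; the function name is illustrative, and the result is only an estimate that assumes the queue stays full for the whole run.

```python
def mean_latency_ms(threads, queue_depth, iops):
    """Average latency implied by Little's law: outstanding I/Os / throughput."""
    outstanding = threads * queue_depth
    return outstanding / iops * 1000.0


if __name__ == "__main__":
    # 128 outstanding requests at about 40,000 IOPS.
    print(f"{mean_latency_ms(8, 16, 40000):.1f} ms")
```

With these inputs the estimate comes out at about 3.2 ms per request, so once the controller limit is reached, a deeper queue only adds latency without adding IOPS.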

SNIA PTS: Latency Test

Average Latency (ms):

Maximum Latency (ms):

The average and peak latencies are only 20-30% higher than those for a single SSD connected to a SAS HBA.

Conclusion

Of course, the article turned out somewhat chaotic and leaves several important questions unanswered:

- Comparison in a similar configuration with products from other vendors: IBM v3700, Dell PV MD3 (and other descendants of the LSI CTS2600), Infortrend ESDS 3000. Storage systems come to us in different configurations and, as a rule, not for long: they have to be put under load and/or into production.

- The storage bandwidth limit has not been tested. I managed to see about 2100MiB/s (RAID-50 of 20 disks), but I did not test sequential load in detail because there were too few disks. I am sure the claimed 3200/2650 MB/s for reads/writes could be reached.

- There is no IOPS vs. latency chart, useful in many cases, which shows how many IOPS can be obtained at an acceptable latency as the queue depth varies. Alas, there was not enough time.

- Best practices. I did not want to reinvent the wheel, since there is a good document for the HP MSA 2040. Only the recommendations on the proper use of disk pools (see above) could be added to it.