Deconvolutional neural network

- Tutorial

The use of classical neural networks for image recognition is complicated, as a rule, by the high dimensionality of the input vector, the large number of neurons in the intermediate layers and, as a consequence, the high computational cost of training and running the network. Convolutional neural networks suffer from these drawbacks to a lesser extent.

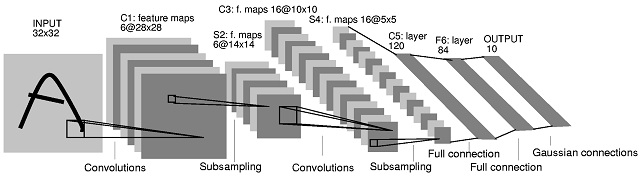

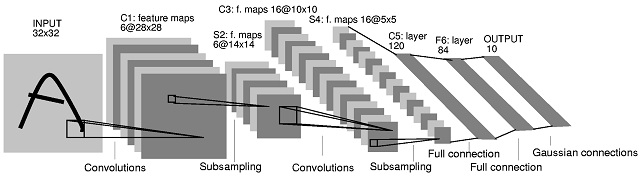

A convolutional neural network (CNN) is a special architecture of artificial neural networks proposed by Yann LeCun and aimed at efficient image recognition; it is part of deep learning technologies. The technology is built by analogy with the principles of the visual cortex of the brain, in which so-called simple cells were discovered, reacting to straight lines at different angles, as well as complex cells, whose response is tied to the activation of a certain set of simple cells. Thus, the idea of convolutional neural networks is to alternate convolution layers and subsampling layers. [6]

Figure 1. Architecture of a convolutional neural network.

The key to understanding convolutional neural networks is the concept of so-called “shared” weights: some of the neurons of a given layer can use the same weights. Neurons that share weights are grouped into feature maps, and each neuron of a feature map is connected to a part of the neurons of the previous layer. When the network is evaluated, each neuron performs a convolution over a certain region of the previous layer (determined by the set of neurons connected to it). Layers constructed in this manner are called convolutional layers. In addition to convolutional layers, a convolutional neural network may contain subsampling layers (which reduce the dimensionality of the feature maps) and fully connected layers (the output layer, as a rule, is fully connected). All three types of layers can alternate in arbitrary order, which makes it possible to build feature maps from feature maps; in practice this means the ability to recognize complex feature hierarchies [3].
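To make the idea of shared weights more concrete, here is a minimal sketch in plain Python. It is not taken from any toolbox: the function name `conv2d_valid`, the toy image and the kernel values are our own illustrative assumptions. Like most CNN implementations it slides the kernel without flipping it (strictly speaking, a cross-correlation).

```python
def conv2d_valid(image, kernel):
    """Slide one shared kernel over the image ("valid" region only).

    Every output neuron reuses the same kernel weights and sees only a
    small window of the previous layer; together the outputs form one
    feature map.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum over the receptive field of neuron (i, j).
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        feature_map.append(row)
    return feature_map


# Toy 4x4 image with a vertical edge and a 2x2 edge-detecting kernel
# (both are made-up illustration values).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
kernel = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d_valid(image, kernel)
```

In this toy case the nine neurons of the 3x3 feature map share just four weights, and the map responds only where the window straddles the edge.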

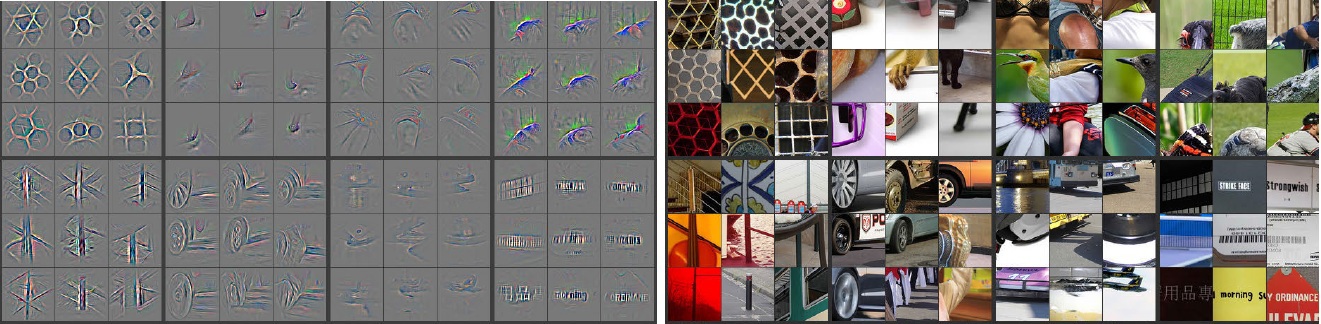

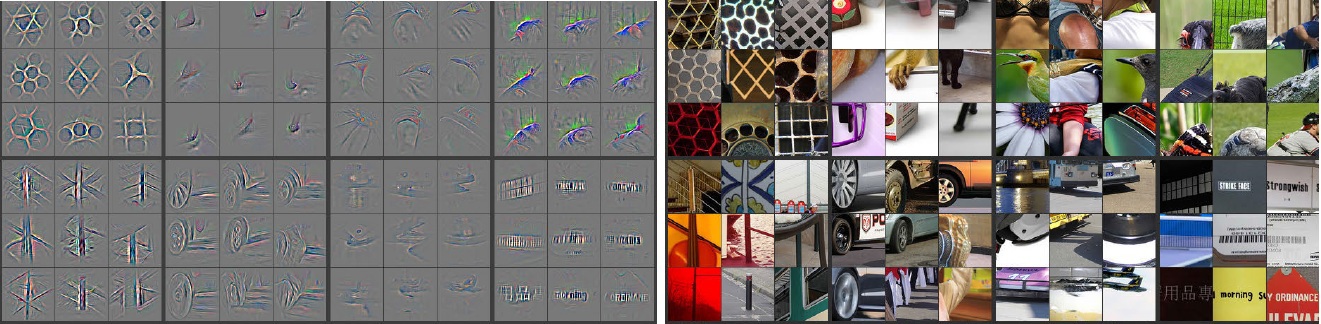

What exactly affects the quality of pattern recognition when training convolutional neural networks? Puzzling over this question, we came across an article by Matthew Zeiler. He developed the concept and technology of Deconvolutional Neural Networks (DNN) to understand and analyze how existing convolutional networks operate. The article proposes a technology that builds hierarchical representations of an image (Fig. 2), taking into account the filters and parameters obtained during CNN training. These representations can be used for low-level signal processing tasks, such as noise reduction, and can also provide low-level features for object recognition. Each level of the hierarchy can form more complex features based on the features of the levels below it.

Figure 2. Image Representations

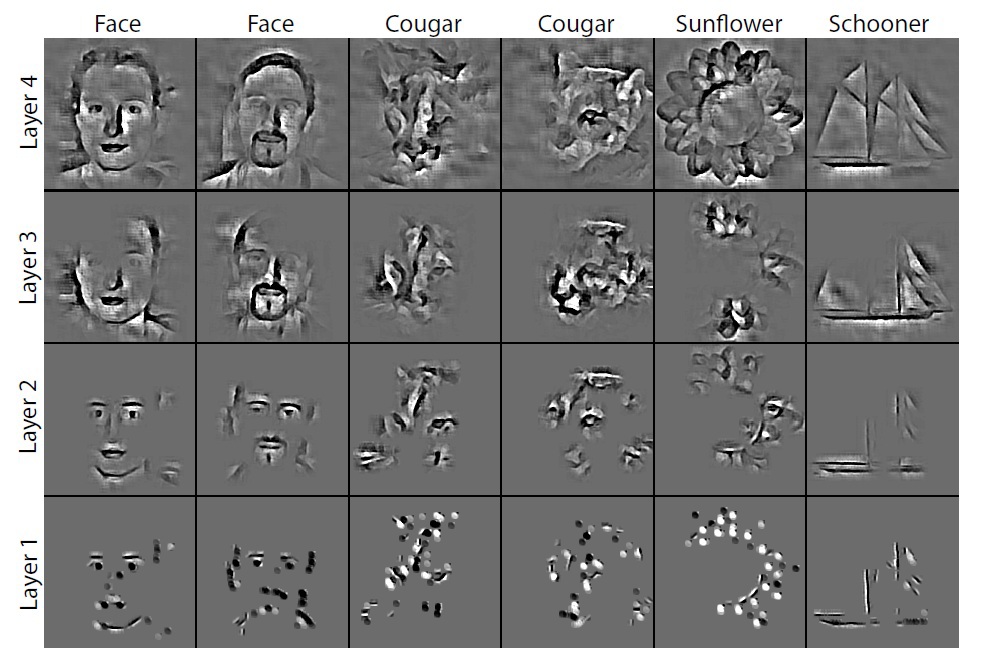

The main difference between CNN and DNN is that in a CNN the input signal passes through several layers of convolution and subsampling. A DNN, on the contrary, seeks to generate the input signal as a sum of convolutions of feature maps with the filters used (Fig. 3). To solve this problem, a wide range of tools from pattern recognition theory is used, for example, deblurring algorithms. Matthew Zeiler's work is an attempt to connect the recognition of objects in images with low-level tasks and algorithms for data processing and filtering.

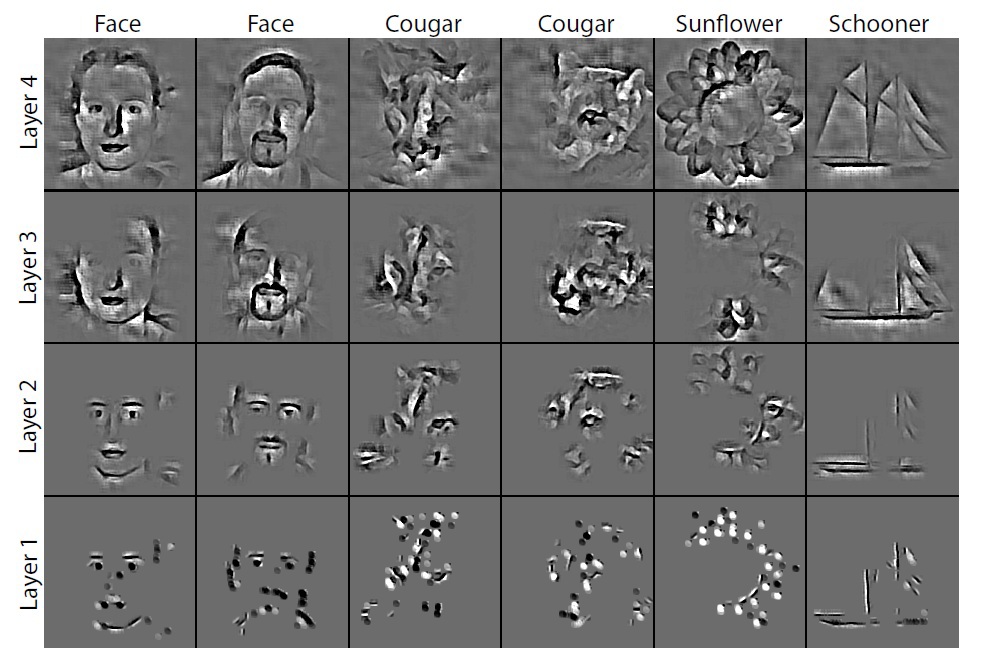

To understand how a convolutional network operates, the behavior of the feature maps in its intermediate layers has to be interpreted. To study a convolutional neural network, a DNN is attached to each of its layers, as shown in Fig. 3, providing a continuous path from the network outputs back to the pixels of the input image. First, the input image is passed through the CNN and the feature maps are computed for all layers. Then, to study a given activation in the CNN, all other activations in the layer are set to zero and the resulting feature maps are used as input to the attached deconvnet layer. After that we successively perform three operations: (I) unpooling, (II) rectification and (III) filtering. In this way the feature maps in the layer below are reconstructed, showing the activity that gave rise to the chosen activation. The operation is repeated until the pixels of the input image are reached.

Fig. 3. Studying a convolutional neural network with a DNN.

Unpooling operation: in convolutional neural networks the pooling operation is irreversible; however, an approximate inverse can be obtained by recording the location of the maximum within each pooling region in a set of switch variables. Pooling here means max pooling, which replaces each region of a feature map with its largest value. In the DNN, the unpooling operation uses these switches to place the values from the layer above at the appropriate locations in the layer currently being processed (see Figure 2).
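The idea of recording the locations of the maxima can be sketched as follows. This is only an illustration in plain Python, assuming non-overlapping square pooling regions and a feature map whose sides are divisible by the region size; the names `max_pool_with_switches` and `unpool` are ours and do not come from the toolbox.

```python
def max_pool_with_switches(fmap, size=2):
    """Non-overlapping max pooling that also records where each maximum was."""
    pooled, switches = [], []
    for i in range(0, len(fmap), size):
        pooled_row, switch_row = [], []
        for j in range(0, len(fmap[0]), size):
            # Find the largest value in the size x size region and its position.
            best_val, best_pos = fmap[i][j], (i, j)
            for di in range(size):
                for dj in range(size):
                    if fmap[i + di][j + dj] > best_val:
                        best_val = fmap[i + di][j + dj]
                        best_pos = (i + di, j + dj)
            pooled_row.append(best_val)
            switch_row.append(best_pos)
        pooled.append(pooled_row)
        switches.append(switch_row)
    return pooled, switches


def unpool(pooled, switches, height, width):
    """Approximate inverse: put each value back at its recorded location."""
    restored = [[0] * width for _ in range(height)]
    for pooled_row, switch_row in zip(pooled, switches):
        for value, (r, c) in zip(pooled_row, switch_row):
            restored[r][c] = value
    return restored


# Made-up 4x4 feature map for illustration.
fmap = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 2],
    [2, 0, 3, 6],
]
pooled, switches = max_pool_with_switches(fmap)
restored = unpool(pooled, switches, 4, 4)
```

The restored map keeps each maximum in its original position and fills everything else with zeros, which is why the inverse is only approximate.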

Rectification operation: the convolutional neural network uses a nonlinear function (relu(x) = max(x, 0), where x is the input value), thereby ensuring that the resulting feature maps are never negative. The DNN passes the reconstructed signal through the same function.
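As a small illustration, rectification of a feature map can be written in a couple of lines of plain Python; the helper name `rectify` and the sample values are our own.

```python
def relu(x):
    """Rectified linear unit: negative inputs become zero."""
    return max(x, 0.0)


def rectify(fmap):
    """Apply relu to every element of a 2-D feature map."""
    return [[relu(v) for v in row] for row in fmap]


# Made-up feature map with both negative and positive responses.
rectified = rectify([[-1.5, 2.0], [0.0, -0.1]])
```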

Filtering operation: the convolutional neural network uses the filters obtained during training to convolve the feature maps of the previous layer. To approximately invert this step, the deconvnet uses transposed versions of the same filters. Projecting down from the higher layers uses the switch settings recorded by pooling in the CNN on the way up. Since these switches are specific to the given input image, the reconstruction obtained from a single activation resembles a small piece of the original image, with structures (Fig. 4) weighted according to their contribution to the feature map. Since the model is trained to discriminate between classes, these structures implicitly show which parts of the input image (or parts of two different images) are discriminative in terms of the learned features [4]. The resulting structures also allow us to draw conclusions about which low-level features of the image are key to its classification.
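What "transposed versions of the same filters" means can be shown on a one-dimensional toy example. The sketch below is our own illustration in plain Python, not the toolbox code: `correlate_valid` plays the role of the forward filtering step, and `correlate_transposed` applies the transpose of the same linear operation. The transpose is not an exact inverse; it only projects the feature map back into the space of the previous layer.

```python
def correlate_valid(x, f):
    """Forward filtering as in a convolutional layer (1-D, "valid" mode)."""
    n = len(x) - len(f) + 1
    return [sum(x[i + k] * f[k] for k in range(len(f))) for i in range(n)]


def correlate_transposed(y, f, input_len):
    """Apply the transposed filter: spread each output back over its window."""
    x_rec = [0.0] * input_len
    for i, y_i in enumerate(y):
        for k, f_k in enumerate(f):
            x_rec[i + k] += y_i * f_k
    return x_rec


def dot(a, b):
    """Inner product of two equally long lists."""
    return sum(p * q for p, q in zip(a, b))


x = [1.0, 2.0, 0.0, -1.0, 3.0]             # toy "previous layer"
f = [1.0, 0.0, -1.0]                       # toy learned filter
y = correlate_valid(x, f)                  # feature map on the way up
back = correlate_transposed(y, f, len(x))  # projection on the way down
```

A quick way to check that the second function really is the transpose of the first is the identity dot(correlate_valid(x, f), z) == dot(x, correlate_transposed(z, f, len(x))), which must hold for any z of the right length.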

Fig. 4. Image structures.

Although in theory the global minimum can always be found, in practice this is difficult. The reason is that the elements of the feature maps are coupled to one another through the filters: one element of a map can affect other elements located far away from it, which means that minimization can take a very long time.

Advantages of using DNN:

1) conceptually simple learning schemes. DNN training relies on unpooling, rectification and image filtering, as well as on the feature maps obtained during CNN training;

2) by applying a DNN to the original images, one can obtain a large set of filters that cover the entire structure of the image using primitive representations. The filters thus apply to the whole image rather than to each small patch of it. This is a great advantage, as it gives a better understanding of the processes that occur during CNN training;

3) the representations (Fig. 2) can be obtained without tuning specific parameters or adding extra modules such as unpooling, rectification and filtering. These representations are obtained in the course of CNN training;

4) the DNN approach is based on finding the global minimum and on the filters obtained during CNN training, and is intended to minimize the ill-conditioned cost functions that arise in the convolutional approach.

The reviewed article also presents the results of experiments conducted by Matthew Zeiler. The network he proposed for ImageNet 2013 showed the best result in the image classification task: the error was only 14.8%. The task is to classify objects into 1000 categories. The training set consisted of 1.2 million images and the test set of 150 thousand images. For each test image, the recognition algorithm must produce 5 class labels in descending order of confidence. When the error is computed, an answer counts as correct if the best-matching of these labels coincides with the class label of the object actually present in the image, which is known for each image. Five labels are used so that the algorithm is not “punished” when it recognizes objects of other classes that may be implicitly present in the image [1].
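The error measure can be illustrated with a short sketch. We assume here the usual top-5 convention, in which an answer is counted as correct if any of the five labels matches the true class; the function name `top5_error` and the toy labels are made up for the example.

```python
def top5_error(predictions, true_labels):
    """Fraction of images whose true label is not among the 5 guesses."""
    misses = 0
    for guesses, truth in zip(predictions, true_labels):
        # Only the first five labels of each answer are considered.
        if truth not in guesses[:5]:
            misses += 1
    return misses / len(true_labels)


# Four toy "images", each with five guessed labels in descending confidence.
predictions = [
    ["cat", "dog", "fox", "wolf", "lynx"],
    ["car", "bus", "van", "truck", "tram"],
    ["oak", "elm", "fir", "ash", "yew"],
    ["cup", "mug", "bowl", "jar", "pot"],
]
true_labels = ["fox", "bike", "oak", "pot"]
error = top5_error(predictions, true_labels)
```

Only the second image is missed here, so the error for this toy set is one in four.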

The results of the Deconvolutional Neural Network are shown in Figure 5.

Figure 5. DNN results

Zeiler plans to further develop DNN technology in the following areas:

1) improvement of image classification in DNN. DNNs were introduced in order to understand the specifics of how a convolutional network learns. Using the parameters obtained during the training of the convolutional neural network, in addition to the high-level features, a mechanism can be provided to improve the quality of classification in DNN. Further work is related to the classification of source images, so we can say that a template-based method will be applied. Images will be classified based on the class to which the object belongs.

2) DNN scaling. The inference methods used in DNN are inherently slow, because many iterations are required. Numerical approximation methods based on feed-forward computation should also be investigated. These would require only the feed-forward functions and the pooling parameters for the current batch of images, which would allow DNN to scale to large data sets.

3) improvement of convolutional models for detecting several objects in an image. Convolutional networks have been used for classification for many years and have recently been applied to very large data sets. However, the algorithms used in the convolutional approach need further development in order to detect several objects present in an image. For a CNN to detect several objects in an image at once, it requires a large set of training data and a significantly larger number of trainable parameters. [4]

Having studied his article, we decided to conduct our own study of DNN. Matthew Zeiler has developed the Deconvolutional Network Toolbox for Matlab. We immediately ran into a problem: installing this toolbox is a non-trivial task. After a successful installation, we decided to share the experience with Habr readers.

So, let's move on to the installation process. The Deconvolutional Network Toolbox was installed on a computer with the following configuration:

• Windows 7 x64

• Matlab R2014a

Let's start by preparing the software that needs to be installed:

1) Windows SDK. During installation, uncheck the boxes for Visual C++ Compilers and Microsoft Visual C++ 2010.

If the VS 2010 redistributable (x64 or x86) is already installed on the computer, it will have to be removed.

Complete the installation of the Windows SDK and install the patch.

2) After that, download and install VS 2010.

3) The toolbox also requires the icc compiler; in our case it is Intel C++ Composer XE Compiler 2011.

4) In Matlab, run the command

mbuild -setup

and SDK 7.1 is selected automatically. The same happens with

mex -setup

If the compiler has been installed successfully, you can now start building the toolbox.

Software preparation is complete. We begin the compilation process.

1) Download the toolbox from www.matthewzeiler.com/software/DeconvNetToolbox/DeconvNetToolbox.zip and unzip it.

2) In Matlab, go to the directory where the unpacked toolbox is located and run the file “setupDeconvNetToolbox.m”.

3) Go to the PoolingToolbox folder and open the compilemex.m file.

A number of changes need to be made to this file, since it was written for Linux.

In MEXOPTS_PATH you need to specify the paths to Matlab, to the libraries located in the compiler folder for the 64-bit system, and to the header files of the compiler and of Visual Studio 2010.

4) We make a few more changes; they look like this:

exec_string = strcat({'mex '},MEXOPTS_PATH,{' '},{'-liomp5mt max_pool.cpp'});

eval(exec_string{1});

The same needs to be done for the rest of the compiled files.

5) We make the same changes in mexopts.sh. There you also need to specify the paths to the 64-bit and 32-bit compilers.

6) Now go to the IPP Convolution Toolbox directory.

7) Go into the MEX folder and run the compilemex.m file. Here you also need to specify the same paths as in step 4 and add the paths to ipp_lib and ipp_include.

Matlab will report that some libraries are missing; they need to be placed in c:\Program Files (x86)\Intel\ComposerXE-2011\ipp\lib\intel64\

8) We make changes similar to step 5:

exec_string = strcat({'mex '},MEXOPTS_PATH,{' '},{IPP_INCLUDE_PATH},{' '},{IPP_LIB64_PATH},{' '},{IPP_LIB_PATH},{' '},{'-liomp5mt -lippiemerged -lippimerged -lippcore -lippsemerged -lippsmerged -lippi ipp_conv2.cpp'});

eval(exec_string{1});

Run the file; if everything worked correctly, continue.

9) Go to the GUI folder

10) Open the gui.m file. Here you need to specify the path to the folder with the unpacked Deconvolutional Toolbox; for us it looks like this:

START_DATASET_DIRECTORY = 'C:/My_projects/DeconvNetToolbox/DeconvNetToolbox';

START_RESULTS_DIRECTORY = 'C:/My_projects/DeconvNetToolbox/DeconvNetToolbox';

11) Run the gui.m file. Did it work? Close it and move on.

12) Now, in the folder where the toolbox was downloaded, create a Results folder and inside it a temp folder. Then run gui.m from the Results folder: a graphical interface appears in which the parameters are set. In the lower right corner, set “Save Results” to 1 and click “Save”. As a result, a file gui_has_set_the_params.mat is generated in the GUI directory with the parameters of the demo DNN model proposed by Matthew Zeiler.

13) Now you can start training the resulting model by calling the trainAll.m script. If everything was done correctly, then after training completes you can see the results in the Results folder. Now you can start researching! A separate article will be devoted to the research results.

References:

1) habrahabr.ru/company/nordavind/blog/206342

2) habrahabr.ru/company/synesis/blog/238129

3) habrahabr.ru/post/229851

4) www.matthewzeiler.com/pubs

5) geektimes.ru/post/74326

6) nordavind.ru/node/550