Risk Protection Machine Learning System Architecture

Our business is largely built on mutual trust between Airbnb, homeowners and travelers. Therefore, we are trying to create one of the most trusted communities . One of the tools for building such a community has become a review system that helps users find members who have earned a high reputation.

We all know that users who try to trick other people from time to time come across the network. Therefore, in Airbnb, a separate development team deals with the security problem. The tools they create are aimed at protecting our user community from deceivers, and much attention is paid to the "quick response" mechanisms so that these people do not have time to harm the community.

Any network business faces a wide variety of risks. Obviously, they need to be protected, otherwise you risk the very existence of your business. For example, Internet providers are making considerable efforts to protect users from spam, and payment services from scammers.

We also have a number of opportunities to protect our users from malicious intent.

- Product Changes . Some problems can be avoided by introducing additional user verification. For example, requiring confirmation of an email account or implementing two-factor authentication to prevent theft of accounts.

- Deviation detection . Some attacks can often be detected by changing some parameters over a short period of time. For example, an unexpected 1000% increase in bookings could result from either an excellent marketing campaign or fraud.

- A model based on heuristics and machine learning . Fraudsters often act according to some well-known schemes. Knowing some kind of scheme, heuristic analysis can be used to recognize such situations and prevent them. In complex and multi-stage schemes, heuristics become too cumbersome and inefficient, therefore, it is necessary to use machine learning.

If you are interested in a deeper look at online risk management, you can refer to the book of Ohad Samet.

Machine Learning Architecture

Different risk vectors may require different architectures. Say, for a number of situations, time is not critical, but large computational resources must be used for detection. In such cases, offline architecture is best suited. However, as part of this post, we will only consider systems that work in real time and close to it. In general, the machine learning pipeline for these types of risks depends on two conditions:

- The framework should be fast and reliable . It is necessary to reduce to zero the possible time of downtime and inoperability, and the framework itself must constantly provide feedback. Otherwise, fraudsters can take advantage of the lack of performance or the buggy framework by launching several attacks at the same time or continuously attacking in some relatively simple way, in anticipation of an imminent overload or system shutdown. Therefore, the framework should work, ideally, in real time, and the choice of model should not depend on the speed of evaluation or other factors.

- The framework must be flexible . Since the vectors of fraud are constantly changing, it is necessary to be able to quickly test and implement new models of detection and countermeasures. The process of building models should allow engineers to freely solve problems.

Optimizing real-time computing during transactions is primarily about speed and reliability, while optimizing model building and iteration should be more focused on flexibility. Our engineering and data processing teams have jointly developed a framework that meets the above requirements: it is fast, reliable in detecting attacks and has a flexible pipeline for building models.

Feature Highlighting

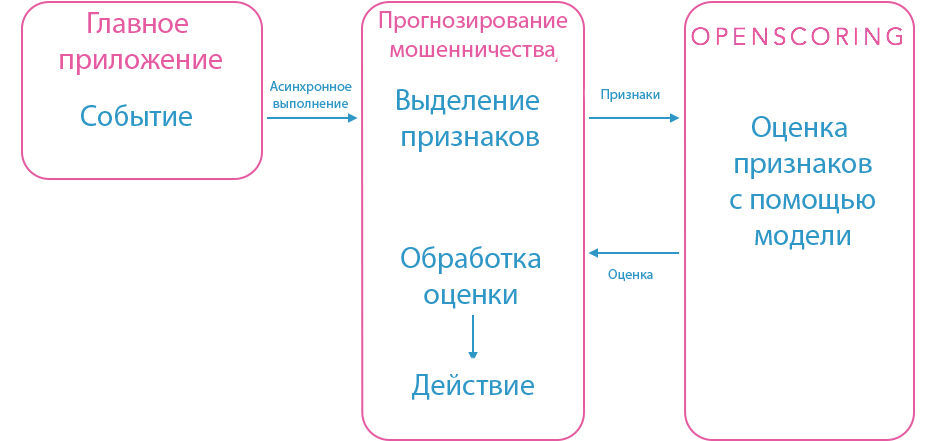

In order to preserve our service-oriented architecture, we have created a separate fraud forecasting service that highlights all the necessary features for a particular model. When the system registers a critical event, for example, housing is reserved, a processing request is sent to the fraud forecasting service. After that, he selects the signs in the event in accordance with the model of “housing reservation” and sends them to our Openscoring service. He issues an assessment and decision based on the criteria laid down. All this information can use the forecasting service.

These processes must proceed quickly in order to be able to respond in time in case of danger. The forecasting service is written in Java, like many of our other backend services, for which performance is critical. Database queries are parallelized. At the same time, we need freedom in cases when heavy calculations are made in the process of distinguishing features. Therefore, the forecasting service is executed asynchronously so as not to block the booking procedure, etc. The asynchronous model works well in cases where a delay of several seconds does not play a special role. However, there are situations when you need to instantly respond and block the transaction. In these cases, synchronous queries and the evaluation of pre-calculated features are applied. Therefore, the forecasting service consists of various modules and internal API, which makes it easy to add new events and models.

Openscoring

This is a Java service providing the JSON REST interface for JPMML-Evaluator . JPMML and Openscoring are open source projects and are distributed under the AGPL 3.0 license. The JPMML backend uses PMML , an XML language that encodes several common machine learning models, including tree-based, log-transform, SVM, and neural networks. We have implemented Openscoring in our production environment, adding additional features: Kafka logging, statsd monitoring, etc.

What, in our opinion, are the advantages of Openscoring:

- Open project . This allows us to modify it to our requirements.

- Supports a variety of trees . We tested several training methods and found that random populations provide an appropriate level of accuracy.

- High speed and reliability . During stress testing, most responses were received in less than 10 ms.

- Multiple Models . After our refinement, Openscoring allows you to run many different models at the same time.

- PMML format . PMML allows our analysts and developers to use any compatible machine learning packages (R, Python, Java, etc.). The same PMML files can be used with Cascading Pattern to evaluate groups of large-scale distributed models.

But Openscoring has its drawbacks:

- PMML does not support some kinds of models . Only relatively standard ML models are supported, so we cannot launch new models or significantly modify standard ones.

- There is no native support for online learning . Models cannot train themselves on the fly. An additional mechanism is needed to automatically access new situations.

- Embryonic error handling . PMML is hard to debate, manually editing an XML file is a risky task. JPMML is known for providing cryptic error messages when problems with PMML occur.

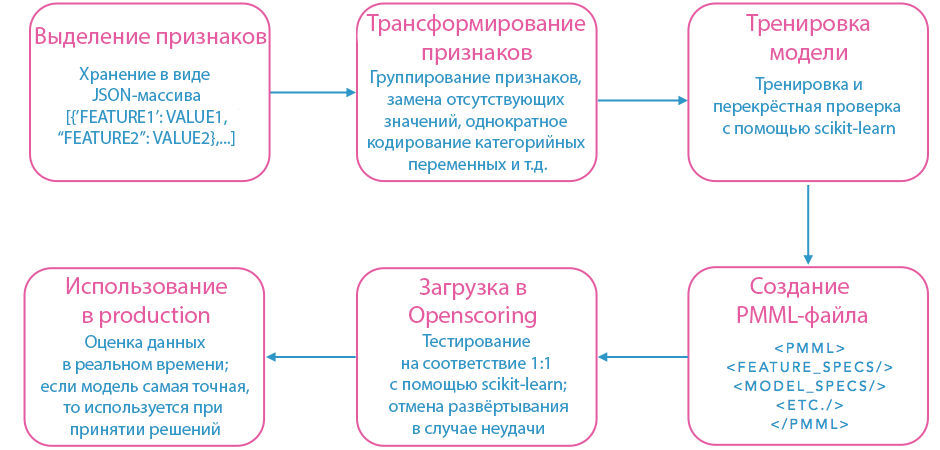

Model Build Pipeline

Above is a diagram of our model building pipeline using PMML. First, signs are highlighted from the data stored on the site. Since the combination of signs that give the optimal signal is constantly changing, we store them in JSON format. This allows you to generalize the process of loading and transforming features based on their names and types. Then the signs are grouped, and to improve the signal, appropriate variables are inserted instead of the missing variables. Statistically unimportant signs are discarded. All these operations take considerable time, so you have to sacrifice many details in order to increase productivity. Then, the transformed features are used to train and cross-validate the model with our favorite PMML-compatible machine learning library. After that, the resulting model is loaded into Openscoring.

The model training phase can take place in any language that has a PMML library. For example, the R PMML package is often used . It supports many types of transformations and data manipulation methods, but it cannot be called a universal solution. We deploy the model as a separate stage, after its training, so we have to check it manually, which can take a lot of time. Another drawback of R is the low implementation rate of the PMML exporter with a large number of attributes and trees. However, we found that simply rewriting a function export in C ++ reduces the execution time by about 10,000 times, from a few days to a few seconds.

We are quite able to circumvent the flaws of R, at the same time using its advantages in building a pipeline based on Python and scikit-learn . Scikit-learn is a package that supports many standard machine learning models. It also includes useful utilities for checking patterns and performing character transformations.

For us, Python has become a more appropriate language than R for situational data management and feature extraction. The automated extraction process relies on a set of rules encoded in the names and types of variables in JSON, so new features can be embedded in the pipeline without changing the existing code. Deployment and testing can also be done automatically in Python using standard network libraries for interacting with Openscoring. Performance tests of the standard model (precision-recall, ROC curves, etc.) are performed using sklearn. It does not support PMML export out of the box, so I had to rewrite the internal export mechanism for certain classifiers. When the PMML file is uploaded to Openscoring, it is automatically tested for compliance with the scikit-learn model it represents.

The main stages: situation -> signs -> choice of algorithm

The process we built showed excellent results for some models, but with the rest we got a poor level of precision-recall. First, we decided that the reason was errors, and tried to use more data and more features. However, there was no improvement. We analyzed the data more deeply and found out that the problem was that the situations themselves were incorrect.

Take, for example, cases with refund requirements. Causes of returns may be situations where the product is “Not As Described (NAD) or fraud. But to group both of these reasons in one model was a mistake, since conscientious users can document the validity of NAD returns. This problem is easily solved, like a number of others. However, in many cases, it is quite difficult to separate the behavior of fraudsters from the activities of normal users, it is necessary to create additional data warehouses and logging pipelines.

For most specialists working in the field of machine learning, they will find this obvious, but we still want to emphasize once again: if your analyzed situations are not entirely correct, then you have already set the upper limit on the accuracy of accuracy and completeness of classification. If the situations are essentially erroneous, then this limit turns out to be rather low.

Sometimes it happens that until you come across a new type of attack, you don’t know what data you need to determine it, especially if you haven’t worked before under conditions of risk. Or they worked, but in a different field. In this case, you can advise logging everything that is possible. In the future, this information may not only be useful, but even prove invaluable for protection against a new attack vector.

Future prospects

We are constantly working to further improve and develop our fraud forecasting system. The current architecture of the company and the amount of available resources are reflected in the current architecture. Often, smaller companies can afford to use in production only a few ML-models and a small team of analysts, they have to manually process the data and train models in non-standard cases. Larger firms can afford to create numerous models, widely automate processes and get significant returns from training models online. Working in an extremely fast growing company makes it possible to work in a landscape that is radically changing from year to year, so the training conveyors need to be constantly updated.

With the development of our tools and techniques, investing in the further improvement of learning algorithms is becoming increasingly important. Probably, our efforts will be shifted towards testing new algorithms, wider implementation of online model training and upgrading the framework so that it can work with more voluminous data sets. Some of the most significant opportunities for improving models are based on the uniqueness of the information we accumulate, sets of attributes, and other aspects that we cannot sound for obvious reasons. However, we are interested in sharing experiences with other development teams. Write us!