Fourier Transform in Action: Accurately Determining Signal Frequency and Highlighting Notes

The latest version of the article is available at makeloft.xyz.

Let's start with the piano. Very simplified, this musical instrument is a set of white and black keys, when you click on each of them a certain sound of a predetermined frequency from low to high is extracted. Of course, each keyboard instrument has its own unique timbre of sound, thanks to which we can distinguish, for example, an accordion from a piano, but if roughly generalized, each key is simply a generator of sinusoidal acoustic waves of a certain frequency.

When a musician plays a composition, he alternately or simultaneously pinches and releases the keys, as a result of which several sinusoidal signals overlap each other to form a pattern. It is this pattern that we perceive as a melody, thanks to which we can easily recognize one piece performed on various instruments in different genres or even unprofessionally hummed by a person.

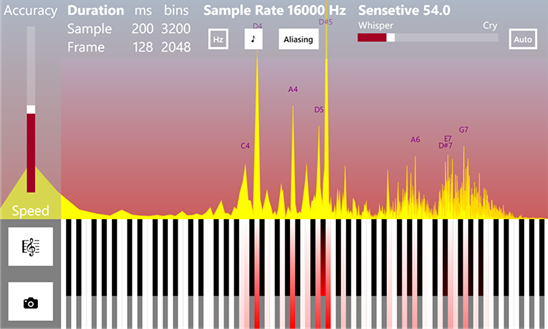

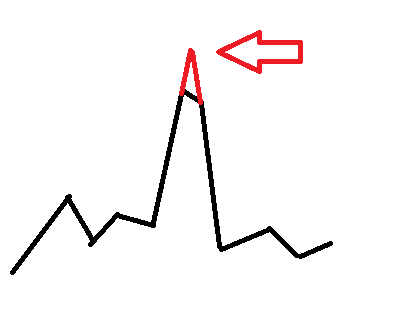

Visual illustration of musical drawing.

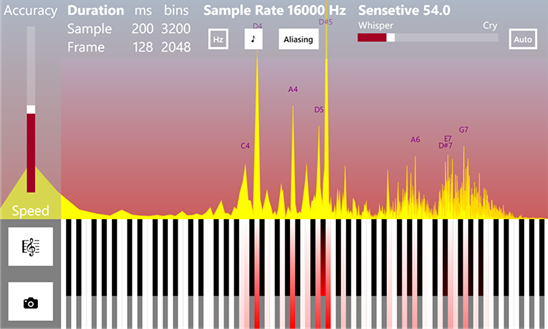

Determining the frequency (guitar tuner mode) The

inverse problem is to parse the sounding musical composition into notes. That is, decompose the total acoustic signal captured by the ear into the original sinusoids. In fact, this process is a direct Fourier transform. But keystrokes and sound extraction is the process of the inverse Fourier transform.

Mathematically, in the first case, the decomposition of a complex periodic (at a certain time interval) function into a series of more elementary orthogonal functions (sine and cosine) occurs. And in the second, their inverse summation, that is, the synthesis of a complex signal.

Orthogonality, in some way, denotes the immiscibility of functions. For example, if we take a few pieces of colored plasticine and glue them together, then we can still figure out which colors were originally, but if we mix several jars of gouache paints well, it will be impossible to accurately restore the original colors without additional information.

(!) It is important to understand that when we undertake to analyze a real signal using the Fourier transform, we idealize the situation and proceed from the assumption that it is periodic in the current time interval and consists of elementary sinusoids. This is often the case, since acoustic signals, as a rule, have a harmonic nature, but more complex cases are generally possible. Any of our assumptions about the nature of the signal usually leads to partial distortions and errors, but without this it is extremely difficult to extract useful information from it.

Now we describe the entire analysis process in more detail:

1. It all starts with the fact that sound waves vibrate the membrane of the microphone, which converts them into analog oscillations of the electric current.

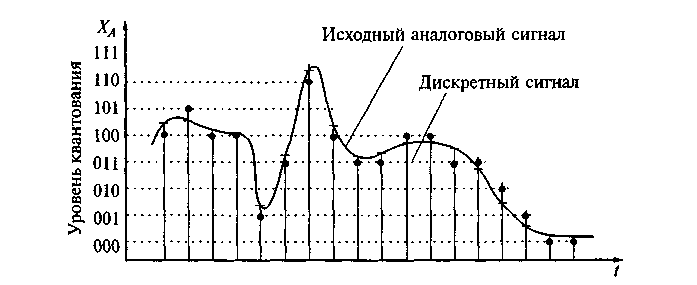

2. Then digitalization of the analog electrical signal takes place . At this point, it is worth stopping in detail.

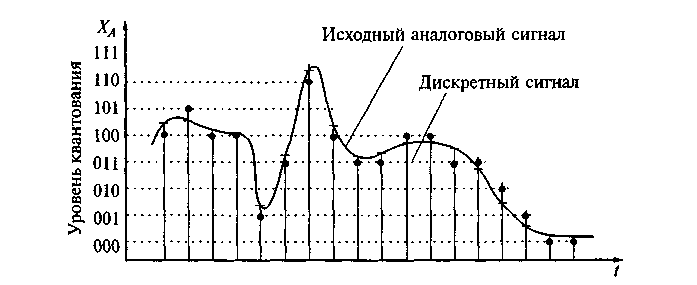

Since the analog signal mathematically consists of an infinite continuous set of time points of amplitude values, during the measurement process we can select from it only a finite series of values at discrete time instants, that is, in fact, perform time quantization ...

As a rule, sample values taken at small equal time intervals, that is, with a certain frequency, for example, 16000 or 22000 Hz. However, in the general case, discrete readings can go unevenly, but this complicates the mathematical apparatus of analysis, and therefore is usually not used in practice.

There is an important Kotelnikov-Nyquist-Shannon theorem, which states that an analog periodic signal having a finite (limited in width) spectrum can be uniquely restored without distortion and loss from its samples taken with a frequency greater than or equal to twice the upper frequency of the spectrum (called the sampling frequency or Nyquist).

For this restoration, it is necessary to use special interpolating functions, but the problem is that when using these functions, calculations must be performed at an infinite time interval, which is impossible in practice. Therefore, in real life it is impossible to arbitrarily increase the sampling rate artificially without distortion even if it initially satisfies the Kotelnikov-Nyquist-Shannon theorem. Farrow filters are used for this operation.

Also, discretization occurs not only in time, but also in terms of amplitude values, since the computer is able to manipulate only a limited set of numbers. It also introduces small errors.

3. The next step is the discrete direct Fourier transform itself .

We select a short frame (interval) of the composition, consisting of discrete samples, which we conventionally consider periodic and apply the Fourier transform to it. As a result of the conversion, we obtain an array of complex numbers containing information about the amplitude and phase spectra of the analyzed frame. Moreover, the spectra are also discrete with a step equal to (sampling frequency) / (number of samples). That is, the more samples we take, the more accurate the resolution we get in frequency. However, at a constant sampling rate, increasing the number of samples, we increase the analyzed time interval, and since notes in real musical works have different sound durations and can quickly replace each other, they overlap, so the amplitude of long notes “eclipses” the amplitude of short notes.

There is also a fairly simple trick to increasing the resolution in frequency - you need to fill in the original discrete signal with zeros between the samples. However, as a result of this filling, the phase spectrum is strongly distorted, but the resolution of the amplitude increases. It is also possible to use Farrow filters and artificially increase the sampling frequency, but it also introduces distortions in the spectra.

The frame duration is usually from about 30 ms to 1 s. The shorter it is, the better resolution we get in time, but worse in frequency, the longer the sample, the better in frequency, but worse in time. This is very reminiscent of Heisenberg’s uncertainty principle in quantum mechanics ... and not easy, as Wikipedia says,the uncertainty relation in quantum mechanics in the mathematical sense is a direct consequence of the properties of the Fourier transform ...

It is also interesting that, as a result of the analysis of a sample of a single sinusoidal signal, the amplitude spectrum is very similar to the diffraction picture ... A

sinusoidal signal limited by a rectangular window and its "diffraction"

Diffraction of light waves

On in practice, this is an undesirable effect that complicates the analysis of signals; therefore, they try to reduce it by applying window functions. A lot of such functions have been invented, the implementations of some of them are presented below, as well as the comparative effect on the spectrum of a single sinusoidal signal.

Applying the window function to the input frame is very simple:

As for computers, at one time an algorithm was developed for the fast Fourier transform , which minimizes the number of mathematical operations needed to calculate it. The only requirement of the algorithm is that the number of samples be a multiple of the power of two (256, 512, 1024, and so on).

Below is its classic recursive implementation in C #.

There are two varieties of the FFT algorithm - with decimation in time and frequency, but both give an identical result. The functions take an array of complex numbers filled with real values of the signal amplitudes in the time domain, and after their execution they return an array of complex numbers containing information about the amplitude and phase spectra. It is worth remembering that the real and imaginary parts of a complex number are far from the same as its amplitude and phase!

magnitude = Math.Sqrt (x.Real * x.Real + x.Imaginary * x.Imaginary)

phase = Math.Atan2 (x.Imaginary, x.Real)

Результирующий массив комплексных чисел заполнен полезной информацией ровно на половину, другая половина является лишь зеркальным отражением первой и спокойно может быть исключена из рассмотрения. Если вдуматься, то этот момент хорошо иллюстрирует теорему Котельникова-Найквиста-Шеннона, о том, что частота дискретизации должна быть не меньше максимальной удвоенной частоты сигнала…

Также существует разновидность алгоритма БПФ без рекурсии по Кули-Тьюки, которая часто применяется на практике, но она чуть более сложна для восприятия.

Сразу после вычисления преобразования Фурье удобно нормализовать амплитудный спектр:

Это приведёт к тому, что величина значений амплитуды получится одного порядка не зависимо от размеров сэмпла.

By calculating the amplitude and frequency spectra, it is easy to process the signal, for example, apply frequency filtering or perform compression. In fact, in this way you can make the equalizer: by performing the direct Fourier transform, it is easy to increase or decrease the amplitude of a certain frequency range, and then perform the inverse Fourier transform (although the work of real equalizers is usually based on another principle - the phase shift of the signal). And to compress the signal is very simple - you just need to make a dictionary where the key is frequency and the value is the corresponding complex number. Only frequencies with a signal amplitude that exceeds a certain minimum threshold should be entered into the dictionary. Information about the "quiet" frequencies that are not heard by the ear will be lost, but tangible compression will be obtained while maintaining an acceptable sound quality.

4. Accurate determination of frequency The

discrete Fourier transform gives us a discrete spectrum, where each amplitude value is separated from the neighboring ones by equal intervals in frequency. And if the frequency in the signal is a multiple of the step equal to (sampling frequency) / (number of samples), then we get a pronounced pointed peak, but if the signal frequency lies somewhere between the boundaries of the step closer to the middle, we get a peak with a “cut off” peak and we it will be difficult to say what kind of frequency is there. It may well be that the signal contains two frequencies lying next to each other. This is the limitation of frequency resolution. Just as in a low-resolution photograph, small objects stick together and become indistinguishable, so the fine details of the spectrum can be lost.

But the frequencies of musical notes lie far from the grid of Fourier transform steps, and for everyday tasks, tuning musical instruments and recognizing notes, you need to know exactly the exact frequency. Moreover, at low octaves with a resolution of 1024 samples or lower, the Fourier frequency grid becomes so rare that it just becomes possible to fit a few notes at one step and it becomes virtually impossible to determine which one actually plays.

To somehow circumvent this restriction, approximating functions are sometimes used, for example, parabolic ones.

www.ingelec.uns.edu.ar/pds2803/Materiales/Articulos/AnalisisFrecuencial/04205098.pdf

mgasior.web.cern.ch/mgasior/pap/biw2004_poster.pdf

But all these are artificial measures that, while improving some indicators, may distort others.

Is there a more natural way to accurately determine the frequency?

Yes, and it is hidden precisely in the use of the phase spectrum of the signal, which is often neglected.

This method of refining the signal frequency is based on calculating the phase delay of the spectra of two frames superimposed on each other, but slightly shifted in time.

You can read more about it at the links:

www.guitarpitchshifter.com/algorithm.html

www.dspdimension.com/admin/pitch-shifting-using-the-ft (+ code examples)

eudl.eu/pdf/10.1007/978-3 -642-29157-9_43

ctuner.googlecode.com (examples of using the algorithm in C ++ and Java)

In C #, the implementation of the method looks pretty simple:

The application is also simple:

Usually the original frames are shifted by 1/16 or 1/32 of their length, that is, ShiftsPerFrame is 16 or 32.

As a result, we get a dictionary of frequency-amplitude, where the frequency values will be pretty close to real ones. However, “cut peaks” will still be observed, albeit less pronounced. To eliminate this drawback, you can simply "finish" them.

Prospects

Note analysis of musical works opens up a number of interesting opportunities. After all, having a ready-made musical notation available, you can search for other musical compositions with a similar pattern.

For example, the same work can be performed on another instrument, in a different manner, with a different timbre, or transposed in octaves, however, the musical note will remain similar, which will allow you to find different versions of the same work. This is very similar to the “guess the tune” game.

In some cases, such an analysis will help to identify plagiarism in musical works. Also, in musical notation, theoretically, one can search for works of a certain mood or genre, which raises the search to a new level.

Summary

This article outlines the basic principles for accurately determining the frequencies of acoustic signals and highlighting notes. And also some subtle intuitive relationship of the discrete Fourier transform with quantum physics is shown, which prompts reflection on a single picture of the world.

PS The Rainbow Framework containing all the above code examples can be downloaded from the Codex .

PPS Perhaps this article will someday come in handy for you in your professional activities and help to save a lot of time and effort, so if you want to thank the author for his work, you can make a donation , buy an application ( free version with advertising), due to which the article arose, or simply to express gratitude with a kind word.

Literature

1. Fundamentals of spectral analysis of sounds pandia.org/text/77/481/644.php

2. Cooley-Tukey algorithm www.codeproject.com/Articles/32172/FFT-Guitar-Tuner

3. Resampling (resampling)

www.dsplib .ru / forum / viewtopic.php? f = 5 & t = 11

www.dsplib.ru/content/farrow/farrow.html

4. Frequency correction by phase offset

www.guitarpitchshifter.com/algorithm.html

www.dspdimension.com/admin / pitch-shifting-using-the-ft (+ code examples)

eudl.eu/pdf/10.1007/978-3-642-29157-9_43

ctuner.googlecode.com (examples of using the algorithm in C ++ and Java)

Let's start with the piano. Very simplified, this musical instrument is a set of white and black keys, when you click on each of them a certain sound of a predetermined frequency from low to high is extracted. Of course, each keyboard instrument has its own unique timbre of sound, thanks to which we can distinguish, for example, an accordion from a piano, but if roughly generalized, each key is simply a generator of sinusoidal acoustic waves of a certain frequency.

When a musician plays a composition, he alternately or simultaneously pinches and releases the keys, as a result of which several sinusoidal signals overlap each other to form a pattern. It is this pattern that we perceive as a melody, thanks to which we can easily recognize one piece performed on various instruments in different genres or even unprofessionally hummed by a person.

Visual illustration of musical drawing.

Determining the frequency (guitar tuner mode) The

inverse problem is to parse the sounding musical composition into notes. That is, decompose the total acoustic signal captured by the ear into the original sinusoids. In fact, this process is a direct Fourier transform. But keystrokes and sound extraction is the process of the inverse Fourier transform.

Mathematically, in the first case, the decomposition of a complex periodic (at a certain time interval) function into a series of more elementary orthogonal functions (sine and cosine) occurs. And in the second, their inverse summation, that is, the synthesis of a complex signal.

Orthogonality, in some way, denotes the immiscibility of functions. For example, if we take a few pieces of colored plasticine and glue them together, then we can still figure out which colors were originally, but if we mix several jars of gouache paints well, it will be impossible to accurately restore the original colors without additional information.

(!) It is important to understand that when we undertake to analyze a real signal using the Fourier transform, we idealize the situation and proceed from the assumption that it is periodic in the current time interval and consists of elementary sinusoids. This is often the case, since acoustic signals, as a rule, have a harmonic nature, but more complex cases are generally possible. Any of our assumptions about the nature of the signal usually leads to partial distortions and errors, but without this it is extremely difficult to extract useful information from it.

Now we describe the entire analysis process in more detail:

1. It all starts with the fact that sound waves vibrate the membrane of the microphone, which converts them into analog oscillations of the electric current.

2. Then digitalization of the analog electrical signal takes place . At this point, it is worth stopping in detail.

Since the analog signal mathematically consists of an infinite continuous set of time points of amplitude values, during the measurement process we can select from it only a finite series of values at discrete time instants, that is, in fact, perform time quantization ...

As a rule, sample values taken at small equal time intervals, that is, with a certain frequency, for example, 16000 or 22000 Hz. However, in the general case, discrete readings can go unevenly, but this complicates the mathematical apparatus of analysis, and therefore is usually not used in practice.

There is an important Kotelnikov-Nyquist-Shannon theorem, which states that an analog periodic signal having a finite (limited in width) spectrum can be uniquely restored without distortion and loss from its samples taken with a frequency greater than or equal to twice the upper frequency of the spectrum (called the sampling frequency or Nyquist).

For this restoration, it is necessary to use special interpolating functions, but the problem is that when using these functions, calculations must be performed at an infinite time interval, which is impossible in practice. Therefore, in real life it is impossible to arbitrarily increase the sampling rate artificially without distortion even if it initially satisfies the Kotelnikov-Nyquist-Shannon theorem. Farrow filters are used for this operation.

Also, discretization occurs not only in time, but also in terms of amplitude values, since the computer is able to manipulate only a limited set of numbers. It also introduces small errors.

3. The next step is the discrete direct Fourier transform itself .

We select a short frame (interval) of the composition, consisting of discrete samples, which we conventionally consider periodic and apply the Fourier transform to it. As a result of the conversion, we obtain an array of complex numbers containing information about the amplitude and phase spectra of the analyzed frame. Moreover, the spectra are also discrete with a step equal to (sampling frequency) / (number of samples). That is, the more samples we take, the more accurate the resolution we get in frequency. However, at a constant sampling rate, increasing the number of samples, we increase the analyzed time interval, and since notes in real musical works have different sound durations and can quickly replace each other, they overlap, so the amplitude of long notes “eclipses” the amplitude of short notes.

There is also a fairly simple trick to increasing the resolution in frequency - you need to fill in the original discrete signal with zeros between the samples. However, as a result of this filling, the phase spectrum is strongly distorted, but the resolution of the amplitude increases. It is also possible to use Farrow filters and artificially increase the sampling frequency, but it also introduces distortions in the spectra.

The frame duration is usually from about 30 ms to 1 s. The shorter it is, the better resolution we get in time, but worse in frequency, the longer the sample, the better in frequency, but worse in time. This is very reminiscent of Heisenberg’s uncertainty principle in quantum mechanics ... and not easy, as Wikipedia says,the uncertainty relation in quantum mechanics in the mathematical sense is a direct consequence of the properties of the Fourier transform ...

It is also interesting that, as a result of the analysis of a sample of a single sinusoidal signal, the amplitude spectrum is very similar to the diffraction picture ... A

sinusoidal signal limited by a rectangular window and its "diffraction"

Diffraction of light waves

On in practice, this is an undesirable effect that complicates the analysis of signals; therefore, they try to reduce it by applying window functions. A lot of such functions have been invented, the implementations of some of them are presented below, as well as the comparative effect on the spectrum of a single sinusoidal signal.

Applying the window function to the input frame is very simple:

for (var i = 0; i < frameSize; i++)

{

frame[i] *= Window.Gausse(i, frameSize);

}

using System;

using System.Numerics;

namespace Rainbow

{

public class Window

{

private const double Q = 0.5;

public static double Rectangle(double n, double frameSize)

{

return 1;

}

public static double Gausse(double n, double frameSize)

{

var a = (frameSize - 1)/2;

var t = (n - a)/(Q*a);

t = t*t;

return Math.Exp(-t/2);

}

public static double Hamming(double n, double frameSize)

{

return 0.54 - 0.46*Math.Cos((2*Math.PI*n)/(frameSize - 1));

}

public static double Hann(double n, double frameSize)

{

return 0.5*(1 - Math.Cos((2*Math.PI*n)/(frameSize - 1)));

}

public static double BlackmannHarris(double n, double frameSize)

{

return 0.35875 - (0.48829*Math.Cos((2*Math.PI*n)/(frameSize - 1))) +

(0.14128*Math.Cos((4*Math.PI*n)/(frameSize - 1))) - (0.01168*Math.Cos((4*Math.PI*n)/(frameSize - 1)));

}

}

}

As for computers, at one time an algorithm was developed for the fast Fourier transform , which minimizes the number of mathematical operations needed to calculate it. The only requirement of the algorithm is that the number of samples be a multiple of the power of two (256, 512, 1024, and so on).

Below is its classic recursive implementation in C #.

using System;

using System.Numerics;

namespace Rainbow

{

public static class Butterfly

{

public const double SinglePi = Math.PI;

public const double DoublePi = 2*Math.PI;

public static Complex[] DecimationInTime(Complex[] frame, bool direct)

{

if (frame.Length == 1) return frame;

var frameHalfSize = frame.Length >> 1; // frame.Length/2

var frameFullSize = frame.Length;

var frameOdd = new Complex[frameHalfSize];

var frameEven = new Complex[frameHalfSize];

for (var i = 0; i < frameHalfSize; i++)

{

var j = i << 1; // i = 2*j;

frameOdd[i] = frame[j + 1];

frameEven[i] = frame[j];

}

var spectrumOdd = DecimationInTime(frameOdd, direct);

var spectrumEven = DecimationInTime(frameEven, direct);

var arg = direct ? -DoublePi/frameFullSize : DoublePi/frameFullSize;

var omegaPowBase = new Complex(Math.Cos(arg), Math.Sin(arg));

var omega = Complex.One;

var spectrum = new Complex[frameFullSize];

for (var j = 0; j < frameHalfSize; j++)

{

spectrum[j] = spectrumEven[j] + omega*spectrumOdd[j];

spectrum[j + frameHalfSize] = spectrumEven[j] - omega*spectrumOdd[j];

omega *= omegaPowBase;

}

return spectrum;

}

public static Complex[] DecimationInFrequency(Complex[] frame, bool direct)

{

if (frame.Length == 1) return frame;

var halfSampleSize = frame.Length >> 1; // frame.Length/2

var fullSampleSize = frame.Length;

var arg = direct ? -DoublePi/fullSampleSize : DoublePi/fullSampleSize;

var omegaPowBase = new Complex(Math.Cos(arg), Math.Sin(arg));

var omega = Complex.One;

var spectrum = new Complex[fullSampleSize];

for (var j = 0; j < halfSampleSize; j++)

{

spectrum[j] = frame[j] + frame[j + halfSampleSize];

spectrum[j + halfSampleSize] = omega*(frame[j] - frame[j + halfSampleSize]);

omega *= omegaPowBase;

}

var yTop = new Complex[halfSampleSize];

var yBottom = new Complex[halfSampleSize];

for (var i = 0; i < halfSampleSize; i++)

{

yTop[i] = spectrum[i];

yBottom[i] = spectrum[i + halfSampleSize];

}

yTop = DecimationInFrequency(yTop, direct);

yBottom = DecimationInFrequency(yBottom, direct);

for (var i = 0; i < halfSampleSize; i++)

{

var j = i << 1; // i = 2*j;

spectrum[j] = yTop[i];

spectrum[j + 1] = yBottom[i];

}

return spectrum;

}

}

}

There are two varieties of the FFT algorithm - with decimation in time and frequency, but both give an identical result. The functions take an array of complex numbers filled with real values of the signal amplitudes in the time domain, and after their execution they return an array of complex numbers containing information about the amplitude and phase spectra. It is worth remembering that the real and imaginary parts of a complex number are far from the same as its amplitude and phase!

magnitude = Math.Sqrt (x.Real * x.Real + x.Imaginary * x.Imaginary)

phase = Math.Atan2 (x.Imaginary, x.Real)

Результирующий массив комплексных чисел заполнен полезной информацией ровно на половину, другая половина является лишь зеркальным отражением первой и спокойно может быть исключена из рассмотрения. Если вдуматься, то этот момент хорошо иллюстрирует теорему Котельникова-Найквиста-Шеннона, о том, что частота дискретизации должна быть не меньше максимальной удвоенной частоты сигнала…

Также существует разновидность алгоритма БПФ без рекурсии по Кули-Тьюки, которая часто применяется на практике, но она чуть более сложна для восприятия.

Сразу после вычисления преобразования Фурье удобно нормализовать амплитудный спектр:

var spectrum = Butterfly.DecimationInTime(frame, true);

for (var i = 0; i < frameSize; i++)

{

spectrum[i] /= frameSize;

}

Это приведёт к тому, что величина значений амплитуды получится одного порядка не зависимо от размеров сэмпла.

By calculating the amplitude and frequency spectra, it is easy to process the signal, for example, apply frequency filtering or perform compression. In fact, in this way you can make the equalizer: by performing the direct Fourier transform, it is easy to increase or decrease the amplitude of a certain frequency range, and then perform the inverse Fourier transform (although the work of real equalizers is usually based on another principle - the phase shift of the signal). And to compress the signal is very simple - you just need to make a dictionary where the key is frequency and the value is the corresponding complex number. Only frequencies with a signal amplitude that exceeds a certain minimum threshold should be entered into the dictionary. Information about the "quiet" frequencies that are not heard by the ear will be lost, but tangible compression will be obtained while maintaining an acceptable sound quality.

4. Accurate determination of frequency The

discrete Fourier transform gives us a discrete spectrum, where each amplitude value is separated from the neighboring ones by equal intervals in frequency. And if the frequency in the signal is a multiple of the step equal to (sampling frequency) / (number of samples), then we get a pronounced pointed peak, but if the signal frequency lies somewhere between the boundaries of the step closer to the middle, we get a peak with a “cut off” peak and we it will be difficult to say what kind of frequency is there. It may well be that the signal contains two frequencies lying next to each other. This is the limitation of frequency resolution. Just as in a low-resolution photograph, small objects stick together and become indistinguishable, so the fine details of the spectrum can be lost.

But the frequencies of musical notes lie far from the grid of Fourier transform steps, and for everyday tasks, tuning musical instruments and recognizing notes, you need to know exactly the exact frequency. Moreover, at low octaves with a resolution of 1024 samples or lower, the Fourier frequency grid becomes so rare that it just becomes possible to fit a few notes at one step and it becomes virtually impossible to determine which one actually plays.

To somehow circumvent this restriction, approximating functions are sometimes used, for example, parabolic ones.

www.ingelec.uns.edu.ar/pds2803/Materiales/Articulos/AnalisisFrecuencial/04205098.pdf

mgasior.web.cern.ch/mgasior/pap/biw2004_poster.pdf

But all these are artificial measures that, while improving some indicators, may distort others.

Is there a more natural way to accurately determine the frequency?

Yes, and it is hidden precisely in the use of the phase spectrum of the signal, which is often neglected.

This method of refining the signal frequency is based on calculating the phase delay of the spectra of two frames superimposed on each other, but slightly shifted in time.

You can read more about it at the links:

www.guitarpitchshifter.com/algorithm.html

www.dspdimension.com/admin/pitch-shifting-using-the-ft (+ code examples)

eudl.eu/pdf/10.1007/978-3 -642-29157-9_43

ctuner.googlecode.com (examples of using the algorithm in C ++ and Java)

In C #, the implementation of the method looks pretty simple:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Numerics;

namespace Rainbow

{

// Δ∂ωπ

public static class Filters

{

public const double SinglePi = Math.PI;

public const double DoublePi = 2*Math.PI;

public static Dictionary GetJoinedSpectrum(

IList spectrum0, IList spectrum1,

double shiftsPerFrame, double sampleRate)

{

var frameSize = spectrum0.Count;

var frameTime = frameSize/sampleRate;

var shiftTime = frameTime/shiftsPerFrame;

var binToFrequancy = sampleRate/frameSize;

var dictionary = new Dictionary();

for (var bin = 0; bin < frameSize; bin++)

{

var omegaExpected = DoublePi*(bin*binToFrequancy); // ω=2πf

var omegaActual = (spectrum1[bin].Phase - spectrum0[bin].Phase)/shiftTime; // ω=∂φ/∂t

var omegaDelta = Align(omegaActual - omegaExpected, DoublePi); // Δω=(∂ω + π)%2π - π

var binDelta = omegaDelta/(DoublePi*binToFrequancy);

var frequancyActual = (bin + binDelta)*binToFrequancy;

var magnitude = spectrum1[bin].Magnitude + spectrum0[bin].Magnitude;

dictionary.Add(frequancyActual, magnitude*(0.5 + Math.Abs(binDelta)));

}

return dictionary;

}

public static double Align(double angle, double period)

{

var qpd = (int) (angle/period);

if (qpd >= 0) qpd += qpd & 1;

else qpd -= qpd & 1;

angle -= period*qpd;

return angle;

}

}

}

The application is also simple:

var spectrum0 = Butterfly.DecimationInTime(frame0, true);

var spectrum1 = Butterfly.DecimationInTime(frame1, true);

for (var i = 0; i < frameSize; i++)

{

spectrum0[i] /= frameSize;

spectrum1[i] /= frameSize;

}

var spectrum = Filters.GetJoinedSpectrum(spectrum0, spectrum1, ShiftsPerFrame, Device.SampleRate);

Usually the original frames are shifted by 1/16 or 1/32 of their length, that is, ShiftsPerFrame is 16 or 32.

As a result, we get a dictionary of frequency-amplitude, where the frequency values will be pretty close to real ones. However, “cut peaks” will still be observed, albeit less pronounced. To eliminate this drawback, you can simply "finish" them.

using System;

using System.Collections.Generic;

using System.Linq;

using System.Numerics;

namespace Rainbow

{

public static class Filters

{

public static Dictionary Antialiasing(Dictionary spectrum)

{

var result = new Dictionary();

var data = spectrum.ToList();

for (var j = 0; j < spectrum.Count - 4; j++)

{

var i = j;

var x0 = data[i].Key;

var x1 = data[i + 1].Key;

var y0 = data[i].Value;

var y1 = data[i + 1].Value;

var a = (y1 - y0)/(x1 - x0);

var b = y0 - a*x0;

i += 2;

var u0 = data[i].Key;

var u1 = data[i + 1].Key;

var v0 = data[i].Value;

var v1 = data[i + 1].Value;

var c = (v1 - v0)/(u1 - u0);

var d = v0 - c*u0;

var x = (d - b)/(a - c);

var y = (a*d - b*c)/(a - c);

if (y > y0 && y > y1 && y > v0 && y > v1 &&

x > x0 && x > x1 && x < u0 && x < u1)

{

result.Add(x1, y1);

result.Add(x, y);

}

else

{

result.Add(x1, y1);

}

}

return result;

}

}

}

Prospects

Note analysis of musical works opens up a number of interesting opportunities. After all, having a ready-made musical notation available, you can search for other musical compositions with a similar pattern.

For example, the same work can be performed on another instrument, in a different manner, with a different timbre, or transposed in octaves, however, the musical note will remain similar, which will allow you to find different versions of the same work. This is very similar to the “guess the tune” game.

In some cases, such an analysis will help to identify plagiarism in musical works. Also, in musical notation, theoretically, one can search for works of a certain mood or genre, which raises the search to a new level.

Summary

This article outlines the basic principles for accurately determining the frequencies of acoustic signals and highlighting notes. And also some subtle intuitive relationship of the discrete Fourier transform with quantum physics is shown, which prompts reflection on a single picture of the world.

PS The Rainbow Framework containing all the above code examples can be downloaded from the Codex .

PPS Perhaps this article will someday come in handy for you in your professional activities and help to save a lot of time and effort, so if you want to thank the author for his work, you can make a donation , buy an application ( free version with advertising), due to which the article arose, or simply to express gratitude with a kind word.

Literature

1. Fundamentals of spectral analysis of sounds pandia.org/text/77/481/644.php

2. Cooley-Tukey algorithm www.codeproject.com/Articles/32172/FFT-Guitar-Tuner

3. Resampling (resampling)

www.dsplib .ru / forum / viewtopic.php? f = 5 & t = 11

www.dsplib.ru/content/farrow/farrow.html

4. Frequency correction by phase offset

www.guitarpitchshifter.com/algorithm.html

www.dspdimension.com/admin / pitch-shifting-using-the-ft (+ code examples)

eudl.eu/pdf/10.1007/978-3-642-29157-9_43

ctuner.googlecode.com (examples of using the algorithm in C ++ and Java)