Introduction to .NET Core

- Translation

At the Connect(); conference, we announced that .NET Core will be released entirely as open source software. In this article, we will take a closer look at .NET Core. We will explain how we are going to ship it, how it relates to the .NET Framework, and what all of this means for cross-platform and open source development.

Looking Back - Motivation for .NET Core

First, let's look back at how the .NET platform was structured in the past. This will help explain the motivation behind the decisions and ideas that led to .NET Core.

.NET - a set of verticals

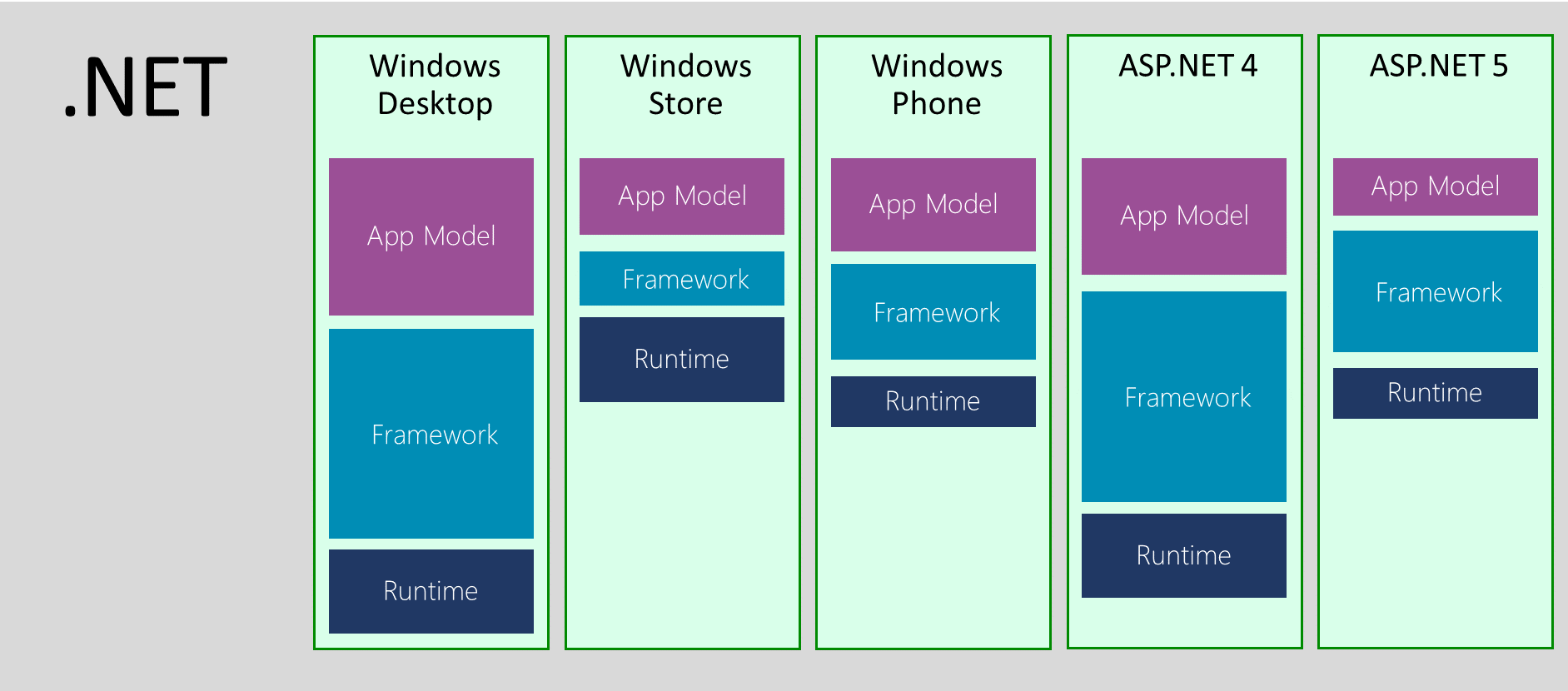

When we originally shipped the .NET Framework in 2002, it was the only framework. Shortly after, we released the .NET Compact Framework, a subset of the .NET Framework that fit on small devices such as Windows Mobile. The Compact Framework had a separate code base and comprised an entire vertical: a runtime, a framework, and an application model on top.

Since then, we have repeated this subsetting exercise several times: Silverlight, Windows Phone, and most recently the Windows Store. This leads to fragmentation, because the .NET platform is not a single entity. It is a set of platforms owned and maintained independently by different teams.

Of course, there is nothing wrong with offering specialized feature sets that address specific needs. It becomes a problem when there is no systematic approach and specialization happens at every layer without regard for the corresponding layers of the other verticals. The result is a set of platforms that share some APIs only because they once started from a common code base. Over time, the differences grow unless explicit (and expensive) work is done to keep the APIs aligned.

When does fragmentation become a problem? If you target only one vertical, there isn't really a problem: you get an API set optimized for your vertical. The problem shows up as soon as you want to do something horizontal that spans multiple verticals. Now you have to think about which APIs are available where, and how to build components that work across all the verticals you target.

Today, it is very common for an application to span multiple devices. There is almost always a back end running on a web server, often an administrative front end on the Windows desktop, and a set of mobile apps for a wide variety of devices. That is why it is critical for us to help developers build components that span all .NET verticals.

The Birth of Portable Class Libraries (PCL)

Initially, there was no dedicated concept for sharing code across verticals: no portable class libraries and no shared projects. You literally had to create multiple projects, use linked files, and write lots of #if directives. This made targeting multiple verticals really hard.

By the time Windows 8 shipped, we had a plan to address this problem. When we designed the Windows Store profile, we introduced a new concept for modeling subsets better: contracts.

When the .NET Framework was designed, the assumption was that it would always ship as a single unit, so factoring was not considered a critical concern. The core assembly that everything else depends on is mscorlib. The mscorlib that ships with the .NET Framework contains many features that cannot be supported everywhere (for example, remoting and AppDomains). As a result, each vertical ends up subsetting even the very core of the platform, which makes it hard to build class libraries that target multiple verticals.

The idea behind contracts is to provide a well-factored API surface. Contracts are simply assemblies that you compile against. Unlike regular assemblies, contract assemblies are designed specifically for proper factoring. We carefully control the dependencies between contracts and design each one to have a single responsibility rather than being a grab bag of APIs. Contracts are versioned independently and follow the corresponding versioning rules. For example, adding an API results in a newer version of the contract assembly.

Today, we use contracts to model APIs in all verticals. A vertical can simply pick and choose which contracts it supports. The important point is that a vertical must support a contract either entirely or not at all. It cannot include only a subset of a contract.

This lets us reason about API differences between verticals at the assembly level, instead of at the level of individual APIs as before. That, in turn, made it possible to build code libraries that target multiple verticals. Such libraries are now known as portable class libraries.
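The contract model lends itself to a small illustration. The Python sketch below models each vertical as the set of contracts it supports in full. Because a vertical supports a contract entirely or not at all, the contracts a portable library can compile against are simply the intersection across its target verticals. The vertical names and contract lists are hypothetical, chosen for illustration, and are not the real profile definitions.

```python
# Illustrative model of contract-based portability.
# Each vertical supports a contract entirely or not at all, so a vertical can be
# described as a plain set of contract (assembly) names.
# Hypothetical contract sets; the real profiles differ.
VERTICALS: dict[str, frozenset[str]] = {
    "Framework": frozenset({"System.Runtime", "System.Collections", "System.IO", "System.Reflection"}),
    "WindowsStore": frozenset({"System.Runtime", "System.Collections", "System.IO"}),
    "WindowsPhone": frozenset({"System.Runtime", "System.Collections"}),
}

def portable_contracts(targets: list[str]) -> frozenset[str]:
    """Contracts a portable library may reference when targeting every vertical in `targets`."""
    if not targets:
        raise ValueError("at least one target vertical is required")
    sets = [VERTICALS[t] for t in targets]
    # Only contracts supported by every target are usable.
    return sets[0].intersection(*sets[1:])

print(sorted(portable_contracts(["Framework", "WindowsStore", "WindowsPhone"])))
```

Adding a target can only shrink the usable set. That is why, in this model, reasoning at the contract (assembly) level stays tractable as more verticals are added.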

Unifying API shape versus unifying implementation

You can think of portable class libraries as an experience that unifies the various .NET verticals based on their API shape. This addresses the most pressing need: the ability to create libraries that run on different .NET verticals. The approach also serves as an architectural planning tool for bringing verticals closer together, in particular Windows 8.1 and Windows Phone 8.1.

However, we still have different implementations, that is, forks of the .NET platform. These implementations are owned by different teams, are versioned independently, and have different delivery mechanisms. As a result, unifying API shape is a moving target. APIs are only portable when their implementations move forward across all verticals. Because the code bases are different, that is expensive and therefore always subject to (re)prioritization. And even if we could achieve perfect API convergence, the verticals' different delivery mechanisms mean that some parts of the ecosystem will always lag behind.

A much better approach is to unify the implementations: instead of only providing a well-factored view, we should provide a well-factored implementation. That allows verticals to simply share the same implementation. Converging verticals then no longer requires extra work; it follows from the design itself. Of course, there are still cases where multiple implementations are needed. A good example is file I/O, which requires different technologies depending on the environment. Even then, it is much easier to ask each team that owns a component to think about how its APIs will work across verticals than to try to retrofit a consistent API stack on top after the fact. Portability is not something you can bolt on later. For example, our file APIs include support for Windows access control lists (ACLs), which are not available in all environments. An API design has to take this into account, for example by providing such functionality in separate assemblies that can be omitted on platforms that don't support ACLs.

Machine-wide frameworks versus application-local frameworks

Another interesting challenge is how the .NET Framework is distributed.

The .NET Framework is a machine-wide framework. Any change made to it affects all applications that depend on it. Having a single machine-wide framework was a sensible decision, because it solves the following problems:

- Centralized servicing

- Reduced disk space requirements

- Sharing native code between applications

But everything has a price.

First, it is difficult for application developers to adopt a newly released framework right away. You either have to depend on the latest version of the operating system or provide an application installer that can also install the .NET Framework when needed. If you are a web developer, you may not even have that option, because the IT department dictates which version you are allowed to use. And if you are a mobile developer, you have no choice at all: you only pick the target OS.

And even if you go to the trouble of writing an installer that makes sure the .NET Framework is present, you may find that upgrading the framework breaks other applications.

Hold on, don't we (Microsoft) say our updates are highly compatible? We do, and we take compatibility very seriously. We carefully review every change we make to the .NET Framework, and for anything that could be a breaking change, we do a separate study of its impact. We run a dedicated compatibility lab where we test many popular .NET applications to make sure we don't break them. We also know which version of the .NET Framework an application was built against. This lets us stay compatible with existing applications while offering improved behavior and features to applications that are ready to work with the latest version of the .NET Framework.

Unfortunately, we also know that even compatible changes can break applications. Here are some examples:

- Adding an interface to an existing type can break applications, because it can affect how the type is serialized.

- Adding an overload to a method that previously had no overloads can break reflection code that does not handle the possibility of finding more than one method.

- Renaming an internal type can break applications that rely on the type name, for example as returned by ToString().

These are all very rare cases, but with an installed base of 1.8 billion machines, being 99.9% compatible still means that 1.8 million machines are affected.
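Spelled out, the arithmetic behind that figure is:

```latex
1.8 \times 10^{9}\ \text{machines} \times (1 - 0.999) = 1.8 \times 10^{9} \times 10^{-3} = 1.8 \times 10^{6}\ \text{machines}
```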

Interestingly, in many cases fixing an affected application takes fairly little work. The problem is that the application developer is not necessarily around when the breakage occurs. Let's look at a concrete example.

Suppose you tested your application with the .NET Framework 4, and that is the version you install with your application. One day, one of your customers installs another application that upgrades the machine to the .NET Framework 4.5. You don't know that your application is broken until that customer calls your support line. At that point, fixing the compatibility issue can be expensive. You have to locate the right sources, set up a machine to reproduce the problem, and debug the application. Then you make the necessary changes, integrate them into the release branch, produce and test a new version of the software, and finally ship the update to your customers.

Compare this with the following situation: you decide to take advantage of a feature added in the latest version of the .NET Framework. At this point you are in development and ready to make changes to your application anyway. If there is a minor compatibility issue, you can easily handle it as part of that work.

Because of these issues, shipping a new version of the .NET Framework takes a considerable amount of time, and the more significant the change, the more lead time we need. This leads to the paradoxical situation where our betas are already nearly locked down and we have almost no room left to act on design feedback.

Two years ago, we started shipping libraries via NuGet. Because these libraries are not part of the .NET Framework, we refer to them as “out-of-band”. Out-of-band libraries don't suffer from the problems we just discussed, because they are application-local. In other words, they are deployed as if they were part of your application.

This approach removes almost all of the concerns that prevent you from upgrading to the latest version. Your ability to upgrade is limited only by your ability to ship a new version of your application. It also means that you control which version of a library a given application uses. Upgrades happen per application, without affecting other applications on the same machine.

This lets us ship updates in a much more agile way. NuGet also supports prerelease versions, which allows us to publish builds without making firm commitments about specific APIs. This supports a workflow in which we can show you our latest thinking on an assembly's design and, if you don't like it, simply change it. A good example is immutable collections: the beta lasted about nine months, and we spent a lot of time getting the design right before shipping the first version. Needless to say, thanks to your extensive feedback, the final design is much better than the initial one.

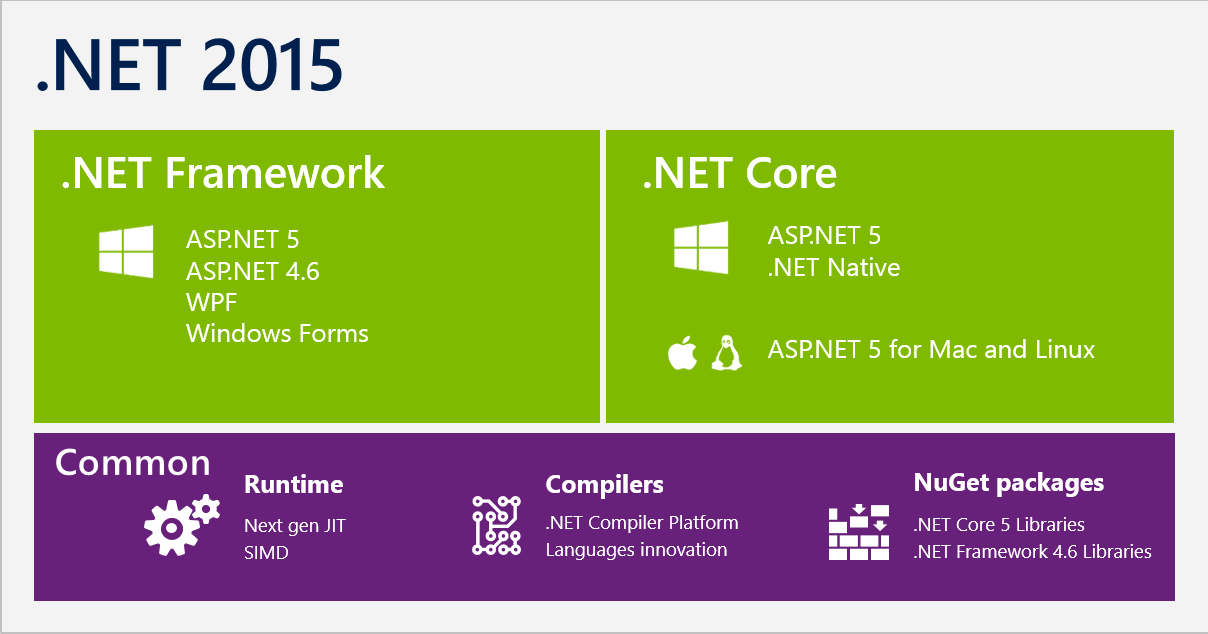

Welcome to .NET Core

All these considerations made us rethink how we shape the .NET platform going forward. This led to the creation of .NET Core:

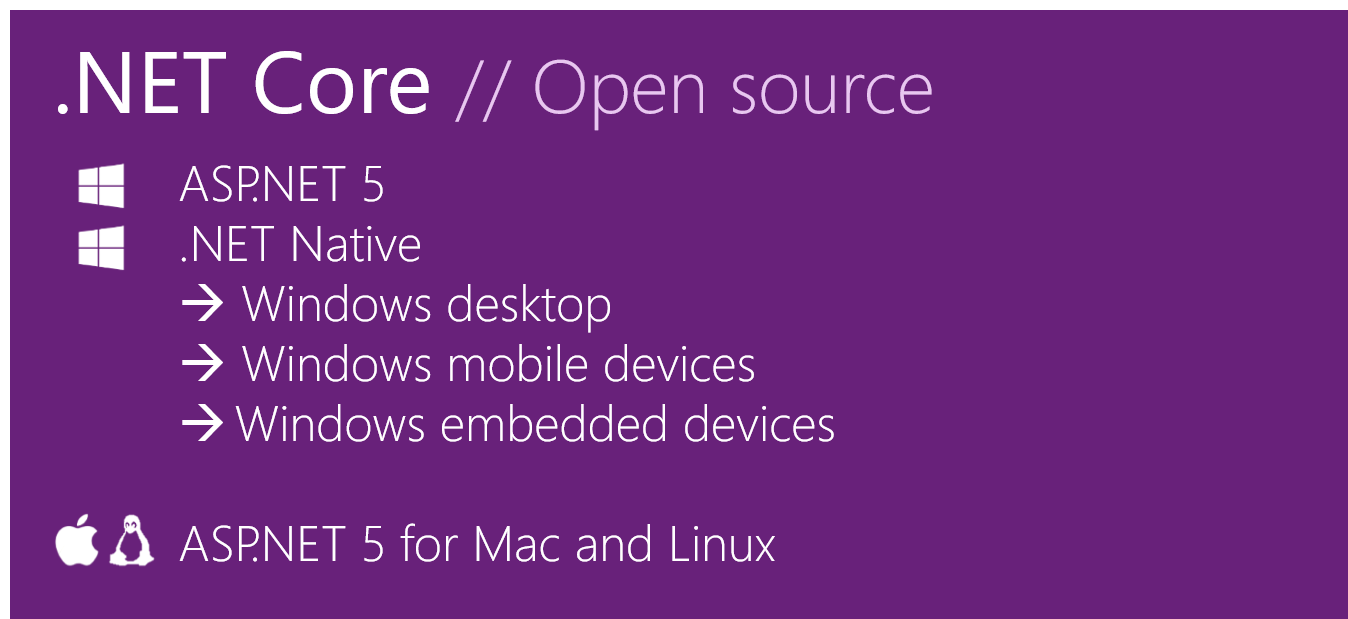

.NET Core is a modular implementation that can be used across a wide range of verticals, from the data center to touch devices. It is available as open source and is supported by Microsoft on Windows, Linux, and Mac OS X.

Let's take a closer look at what .NET Core is and how the new approach addresses the issues we discussed above.

Single implementation for .NET Native and ASP.NET

When we designed .NET Native, it was clear that we could not use the .NET Framework as the foundation for the framework class libraries. That is why .NET Native essentially merges the framework with the application. It then removes the parts the application doesn't need before generating native code (this is a rough simplification; you can read about the process in more detail here). As I explained earlier, the .NET Framework implementation is not factored. This makes it hard for the linker to reduce how much of the framework gets compiled into the application, because the dependency closure is simply very large.

ASP.NET 5 faced similar challenges. Although it does not use .NET Native, one of the goals of the new ASP.NET 5 web stack was to provide a stack that can simply be copied into place. That way, web developers don't have to coordinate with their IT department to take advantage of features in the latest version. In this scenario, it is important to minimize the size of the framework, because it has to be deployed alongside the application.

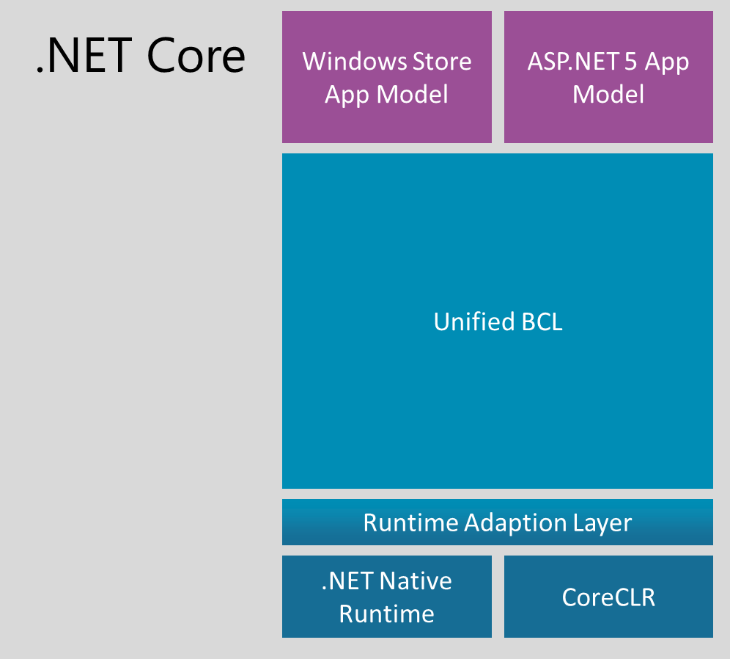

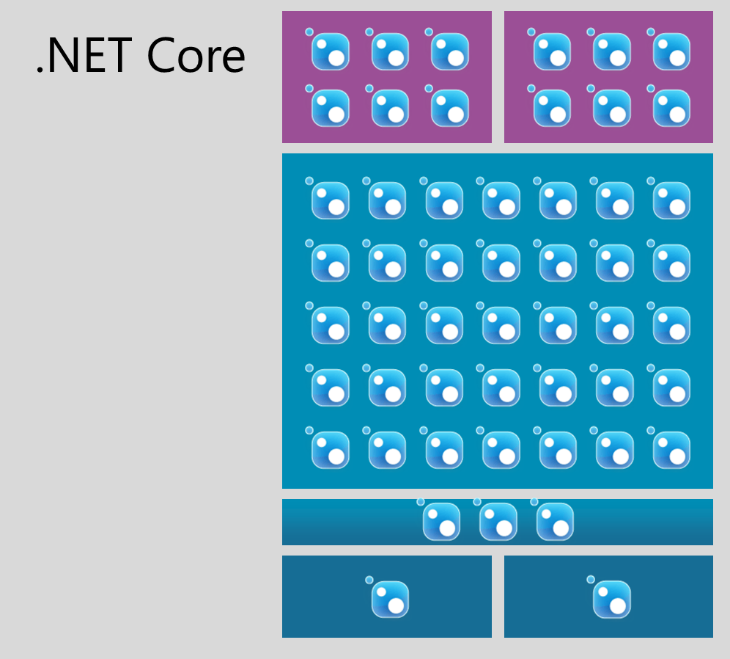

Essentially, .NET Core is a fork of the .NET Framework whose implementation has been optimized for factoring. Although the scenarios for .NET Native (touch devices) and ASP.NET 5 (server-side web development) are quite different, we were able to provide a unified Base Class Library (BCL).

The API surface of the .NET Core BCL is identical for both verticals, .NET Native and ASP.NET 5. At the bottom of the BCL is a very thin layer that is specific to the .NET runtime. We currently have two implementations of this layer: one for .NET Native and one for CoreCLR, which is used by ASP.NET 5. This layer rarely changes; it contains types such as String and Int32. Most of the BCL consists of pure MSIL assemblies that can be shared as-is. In other words, the APIs don't just look the same, they share the same implementation. For example, there is no reason to have different implementations of collections.

On top of the BCL sit APIs specific to each application model. For example, .NET Native provides APIs specific to Windows client development, such as WinRT interop. ASP.NET 5 adds APIs, such as MVC, that are specific to server-side web development.

We think of .NET Core as not being specific to either .NET Native or ASP.NET 5: the BCL and the runtimes are general-purpose and designed to be modular. As such, they form the foundation for future .NET verticals.

NuGet as a first-class delivery mechanism

Unlike the .NET Framework, .NET Core will be delivered as a set of NuGet packages. We rely on NuGet because that is where most of the library ecosystem already lives.

To continue our efforts towards modularity and good decomposition, instead of providing the entire .NET Core as a single NuGet package, we split it into a set of NuGet packages:

For the BCL layer, we will have a direct correspondence between assemblies and NuGet packages.

Going forward, each NuGet package will have the same name as its assembly. For example, immutable collections will no longer ship in a package called Microsoft.Bcl.Immutable. Instead, they will be in a package called System.Collections.Immutable.

In addition, we decided to use semantic versioning for our assemblies. The version number of each NuGet package will align with the assembly version.

This consistency in naming and versioning between assemblies and packages makes them much easier to discover. There should be no question about which package contains System.Foo, Version=1.2.3.0: it is the System.Foo package, version 1.2.3.
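The naming and versioning rule can be expressed as a tiny function. This Python sketch assumes a four-part assembly version (Major.Minor.Patch.Revision) whose first three parts form the package version, as in the System.Foo example. The function name is hypothetical and is not part of any NuGet tooling.

```python
import re

# Matches identities such as "System.Foo, Version=1.2.3.0"; trailing fields
# (Culture, PublicKeyToken, ...) are ignored because only the prefix is matched.
_IDENTITY = re.compile(
    r"^\s*(?P<name>[^,\s]+)\s*,\s*Version\s*=\s*(?P<ver>\d+\.\d+\.\d+\.\d+)"
)

def package_for_assembly(identity: str) -> tuple[str, str]:
    """Map an assembly identity to the (package id, package version) that ships it.

    Assumes the 1:1 naming rule (package id == assembly name) and that the
    package version is the first three parts of the assembly version.
    """
    m = _IDENTITY.match(identity)
    if m is None:
        raise ValueError(f"unrecognized assembly identity: {identity!r}")
    major, minor, patch, _revision = m.group("ver").split(".")
    return m.group("name"), f"{major}.{minor}.{patch}"

print(package_for_assembly("System.Foo, Version=1.2.3.0"))
```

The point of the convention is that no lookup table is needed: under these assumptions, the package is derived mechanically from the assembly identity.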

NuGet allows us to deliver .NET Core in an agile manner. If we ship an update to any of the NuGet packages, you can simply update the corresponding NuGet reference.

Delivering the framework itself via NuGet also removes the distinction between first-party .NET dependencies and third-party dependencies: they are all just NuGet dependencies. This allows a third-party package to declare that it needs, for example, a newer version of the System.Collections library. Installing that third-party package can then prompt you to upgrade your reference to System.Collections. You don't need to understand the dependency graph; you only need to consent to the changes to it.
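Here is a simplified model of the upgrade prompt described above. When a new package declares a minimum version of a dependency, the lowest version that satisfies both the new package and your existing reference is the higher of the two. This Python sketch assumes only "at least version X" constraints. Real NuGet resolution also handles version ranges, prerelease labels, and rules such as nearest-wins, all of which are omitted here. The package versions are hypothetical.

```python
Version = tuple[int, ...]

def parse(v: str) -> Version:
    """Turn "4.0.10" into (4, 0, 10) so versions compare numerically, not as strings."""
    return tuple(int(part) for part in v.split("."))

def proposed_upgrades(current: dict[str, str], new_package_deps: dict[str, str]) -> dict[str, str]:
    """References to add or raise so that a new package can be installed.

    `current` maps package id -> version the project references today;
    `new_package_deps` maps package id -> minimum version the new package requires
    (assumed to be a plain ">=" constraint).
    """
    upgrades: dict[str, str] = {}
    for pkg, minimum in new_package_deps.items():
        have = current.get(pkg)
        if have is None or parse(have) < parse(minimum):
            # The lowest version that satisfies both constraints is the new minimum.
            upgrades[pkg] = minimum
    return upgrades

project = {"System.Collections": "4.0.0", "System.Runtime": "4.0.20"}
third_party = {"System.Collections": "4.0.10", "System.Runtime": "4.0.0"}
print(proposed_upgrades(project, third_party))
```

The returned dictionary is exactly the change you are asked to consent to. The comparison uses integer tuples because comparing version strings directly would wrongly rank "4.0.9" above "4.0.10".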

NuGet-based delivery turns .NET Core into an application-local framework. The modular design of .NET Core ensures that each application only needs to deploy what it actually uses. We are also working on smart sharing of resources when multiple applications use the same framework assemblies. However, the goal is for each application to logically have its own framework, so that upgrading it doesn't affect other applications on the same machine.

Our decision to use NuGet as a delivery mechanism doesn't change our commitment to compatibility. We continue to take compatibility extremely seriously and will not make API or behavioral changes that could break applications once a package is marked as stable. However, application-local deployment ensures that the rare cases in which a change does break an application are confined to development time. In other words, with .NET Core such breaks can only occur when you update your package references. At that point you have two options: fix the compatibility issues in your application or roll back to the previous version of the NuGet package. Unlike with the .NET Framework, such breaks will not occur after you have deployed your application.

Ready for the enterprise

The NuGet delivery model enables an agile release process and faster updates. However, we don't want to give up the one-stop-shop experience that the .NET Framework provides.

In fact, one of the great things about the .NET Framework is that it ships as a single unit, which means Microsoft has tested and supports its components as a whole. For .NET Core, we will provide this as well by introducing the concept of a .NET Core distribution. Simply put, a distribution is a snapshot of all the packages at the specific versions we have tested together.

The idea is that individual teams own individual packages. When a team ships a new version of its package, it only has to test its component in the context of the components it depends on. Because you can mix and match NuGet packages, you could end up with a combination of components that don't work well together. .NET Core distributions won't have this problem, because all of their components are tested in combination with each other.
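To illustrate what a distribution buys you, this Python sketch treats a distribution as a mapping from package id to tested version. It reports every reference in a project that deviates from that tested snapshot. The distribution contents and the Contoso.Widgets package are hypothetical and do not describe an actual .NET Core distribution.

```python
from typing import Optional

# Hypothetical distribution: a snapshot of package versions tested together.
DISTRIBUTION: dict[str, str] = {
    "System.Runtime": "4.0.20",
    "System.Collections": "4.0.10",
    "System.Collections.Immutable": "1.1.36",
}

def off_distribution(project: dict[str, str], distribution: dict[str, str]) -> dict[str, Optional[str]]:
    """Package references whose version was not tested as part of `distribution`.

    Each value is the distribution's version of that package, or None if the
    package is not part of the distribution at all.
    """
    return {
        pkg: distribution.get(pkg)
        for pkg, version in project.items()
        if distribution.get(pkg) != version
    }

project = {
    "System.Runtime": "4.0.20",       # matches the snapshot
    "System.Collections": "4.0.11",   # newer than the tested version
    "Contoso.Widgets": "2.0.0",       # hypothetical third-party package
}
print(off_distribution(project, DISTRIBUTION))
```

An empty result means every reference sits on the tested snapshot. Anything else is a mix-and-match combination that only the owners of the individual packages have tested.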

We expect distributions to ship at a lower cadence than individual packages. We are currently thinking of about four releases per year. This gives us the time we need for testing, fixing bugs, and signing the code.

Although .NET Core is delivered as a set of NuGet packages, this doesn't mean you have to download packages every time you create a project. We will provide an offline installer for distributions and also include them with Visual Studio. Creating a new project will therefore be as fast as it is today and won't require an internet connection during development.

Although application-local deployment is great for isolating the impact of taking dependencies on newer features, it is not appropriate for every case. Critical fixes must be deployed quickly and holistically to be effective. We are fully committed to shipping security fixes, as has always been the case for .NET.

To avoid the compatibility issues we have seen in the past with centralized updates to the .NET Framework, it is essential that such updates target only security vulnerabilities. Even so, there remains a small chance that they will break applications. That is why we will only ship these updates for truly critical issues. In those cases, it is more acceptable for a very small set of applications to stop working than for all applications to keep running with the vulnerability.

The foundation for open source and cross-platform

To make .NET cross-platform in a sustainable way, we decided to open source .NET Core.

From past experience, we know that the success of open source depends on the community around it. A key aspect of this is an open and transparent development process that allows the community to participate in code reviews, familiarize themselves with design documents, and make changes to the product.

Open source also allows us to extend the unification of .NET to cross-platform development. Having to implement basic components such as collections multiple times hurts the ecosystem. The goal of .NET Core is to have a single code base that can be used to build and support all platforms, including Windows, Linux, and Mac OS X.

Of course, some components, such as the file system, require different implementations. The NuGet delivery model allows us to abstract away these differences: we can have a single NuGet package that provides a separate implementation for each environment. The important point is that this is an implementation detail of the component. From the developer's point of view, it is a single API that works across platforms.

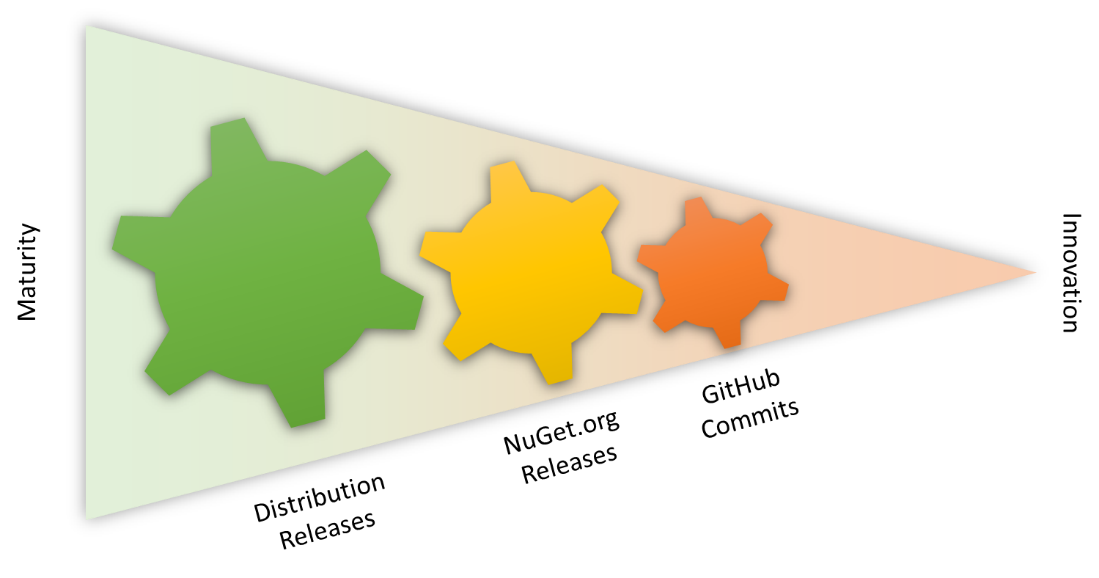

Another way to look at it is that open sourcing is a continuation of our desire to ship .NET components in a more agile way:

- Open source offers a real-time view of the implementation and the overall direction of development

- Shipping packages via NuGet.org provides agility at the component level

- Distributions provide agility at the platform level

Having all three elements allows us to offer a broad spectrum of agility and maturity:

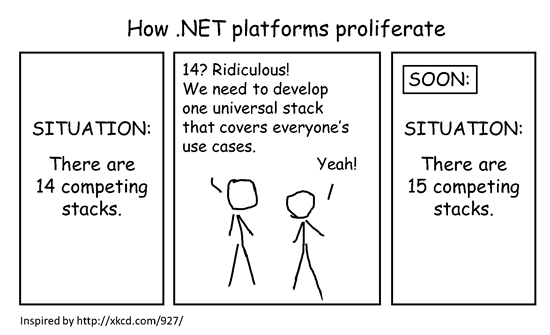

Relationship of .NET Core to existing platforms

Although we designed .NET Core to become the foundation of all future stacks, we are well aware of the dilemma of creating the “one universal stack” that everyone can use. We believe we have found a good balance between laying the foundation for the future and maintaining great compatibility with existing stacks. Let's take a closer look at some of these platforms.

.NET Framework 4.6

The .NET Framework is still the key platform for building rich desktop applications, and .NET Core doesn't change that.

In the Visual Studio 2015 timeframe, our goal is to make sure that .NET Core is a pure subset of the .NET Framework. In other words, there should be no feature gaps. After Visual Studio 2015 ships, we expect .NET Core to evolve faster than the .NET Framework. This means that at some point, some features will be available only on .NET Core-based platforms.

We will continue to ship updates to the .NET Framework. Our current plan is to keep the same release cadence as today, that is, roughly once a year. In these updates, we will port innovations from .NET Core to the .NET Framework. However, we won't blindly port every new feature; each decision will be based on a cost-benefit analysis. As I noted above, even purely additive changes to the .NET Framework can cause problems for existing applications. Our goal is to minimize API and behavioral differences without breaking compatibility with existing .NET Framework applications.

Note that, conversely, some investments are made exclusively in the .NET Framework, for example the plans we announced in the WPF roadmap.

Mono

Many of you have asked what the .NET Core cross-platform story means for Mono. The Mono project is essentially an open source reimplementation of the .NET Framework. As a result, it shares not only the rich API of the .NET Framework but also its challenges, especially around factoring.

Mono is alive and has a large ecosystem. That is why, independently of .NET Core, we also released parts of the .NET Framework Reference Source on GitHub under an open-source-friendly license. We did this specifically to help the Mono community close the gaps between the .NET Framework and Mono by using the same code. However, due to the complexity of the .NET Framework, we are not in a position to run it as an open source project on GitHub. In particular, we can't accept pull requests for it.

Another way to look at it: the .NET Framework effectively has two forks. One fork is provided by Microsoft and works only on Windows. The other fork is Mono, which you can use on Linux and Mac.

With .NET Core, we can develop the entire .NET stack as an open source project. As a result, it is no longer necessary to maintain separate forks: together with the Mono community, we will make .NET Core work great on Windows, Linux, and Mac OS X. It also allows the Mono community to innovate on top of the leaner .NET Core stack and even port it to environments that Microsoft is not interested in.

Windows Store and Windows Phone

The Windows Store 8.1 and Windows Phone 8.1 platforms are both fairly small subsets of the .NET Framework. However, they are also subsets of .NET Core. This allows us to keep using .NET Core as the underlying implementation for both platforms. So if you are developing for these platforms, you can use all the innovations directly, without waiting for a framework update.

It also means that the set of BCL APIs available on both platforms will be identical to what you see in ASP.NET 5 today, including, for example, non-generic collections. This will make it much easier to port existing .NET Framework code to touch-device applications.

Another obvious effect is that the BCL APIs in Windows Store and Windows Phone are fully converged and will stay that way, because both platforms are based on .NET Core.

Sharing code between .NET Core and other .NET platforms

Since .NET Core forms the foundation for all future .NET platforms, code sharing between .NET Core-based platforms comes built in.

However, the question remains how code sharing works with platforms that are not based on .NET Core, such as the .NET Framework. The answer is that, just as today, you can continue to use portable class libraries and shared projects:

- Portable class libraries are great when your common code is not platform-specific, and for reusable libraries in which platform-specific code can be factored out.

- Shared projects are a good fit when your shared code contains a small amount of platform-specific code, because you can adapt it with #if.

The choice between the two approaches is described in more detail in the article “Sharing code across platforms”.

In the future, portable class libraries will also support targeting .NET Core-based platforms. The only difference is that if you target only .NET Core-based platforms, you are not limited to a fixed set of APIs. Instead, you depend on NuGet packages that you can upgrade as you wish.

If you add at least one platform that is not based on .NET Core, you are limited to the APIs you share with it. In this mode you can still upgrade NuGet packages, but you may be required to select a higher platform version or drop support for that platform entirely.
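The two targeting modes described above can be captured in a few lines. This Python sketch assumes that each target is either .NET Core-based or has a fixed API set. When every target is .NET Core-based, the library can use whatever its NuGet packages provide. Each non-Core target narrows that to the APIs it shares. All platform names and API sets are hypothetical.

```python
# Hypothetical fixed API sets for platforms that are NOT based on .NET Core.
# Any target name not listed here is treated as .NET Core-based.
FIXED_APIS: dict[str, frozenset[str]] = {
    "framework_4_5": frozenset({"System.Runtime", "System.Collections", "System.IO"}),
    "framework_4_0": frozenset({"System.Runtime", "System.Collections"}),
}

def available_apis(targets: list[str], package_apis: frozenset[str]) -> frozenset[str]:
    """APIs a portable library may use across all `targets`.

    .NET Core-based targets impose no fixed set: the library gets whatever its
    NuGet packages provide (`package_apis`). Each non-Core target narrows the
    result to the APIs it has in common with those packages.
    """
    result = package_apis
    for target in targets:
        if target in FIXED_APIS:
            result = result & FIXED_APIS[target]
    return result

def blocking_targets(targets: list[str], required: frozenset[str]) -> dict[str, frozenset[str]]:
    """For each non-Core target, the required APIs it lacks (empty dict: nothing blocks)."""
    return {
        t: required - FIXED_APIS[t]
        for t in targets
        if t in FIXED_APIS and not required <= FIXED_APIS[t]
    }

packages = frozenset({"System.Runtime", "System.Collections", "System.IO", "System.Collections.Immutable"})
print(sorted(available_apis(["core_platform"], packages)))
print(sorted(available_apis(["core_platform", "framework_4_5"], packages)))
print(blocking_targets(["core_platform", "framework_4_0"], frozenset({"System.IO"})))
```

A non-empty result from `blocking_targets` corresponds to the situation in the text. You either move that target to a higher platform version with a larger API set, or drop it.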

This approach allows two worlds to coexist, while taking advantage of .NET Core.

Summary

The .NET Core platform is the new .NET stack optimized for open source development and flexible delivery through NuGet. We are working with the Mono community to make it work perfectly on Windows, Linux, and Mac, and Microsoft will support it on all three platforms.

We are keeping the qualities that make the .NET Framework great for enterprise development. We will ship .NET Core distributions, which are sets of NuGet packages that we have tested and support as a whole. Visual Studio remains your one-stop shop for development, and consuming the NuGet packages that are part of a distribution doesn't require an internet connection.

We remain committed to shipping critical security updates that require no action from application developers, even if the affected component is delivered exclusively as a NuGet package.

If you have questions or concerns, let us know by commenting on this article (in English), by tweeting to @dotnet, or by starting a thread on the .NET Foundation forums. We'd love to hear what you think.
