New optimizations for x86 in the upcoming GCC 5.0

So, the actual development of new optimizations in GCC 5.0 can be considered complete. The GCC 5.0 product is now in the phase3 phase , that is, the optimization is already being finalized. In this and subsequent articles, I will talk about optimizations implemented in GCC 5.0 for x86 and their impact on the performance of programs for Intel Atom and Intel Core processors . Today we will focus on the vectorization of group memory accesses. In subsequent articles, I will talk about accelerations in 32-bit PIC mode and additional amplification of vectorization.

As you probably already guessed, in GCC 5.0 the vectorization of group memory accesses has been significantly improved. Group memory access refers to an iterable sequence of calls. For example:

iterable over i is a group of memory downloads of length 3. The length of the group of memory accesses is the distance between the lowest and highest addresses in the group. For the example above, this is (i + 2) - (i) + 1 = 3 The

number of memory accesses in the group does not exceed its length. For example:

iterable over i is a group of length 3, despite the fact that there are only 2 memory accesses.

GCC 4.9 vectorizes groups where length is a power of 2 (2, 4, 8 ...).

GCC 5.0 vectorizes groups where the length is 3 or degree 2 (2, 4, 8 ...). Other lengths are not vectorized, as they are very rare in real applications.

Most often, vectorization of a group of memory accesses is used when working with arrays of structures.

1. Image conversion, say, in the RGB structure. An example .

2. Work with N-dimensional coordinates (say, to normalize three-dimensional points). An example .

3. Multiplication of vectors by a constant matrix:

Overall in GCC 5.0 (compared to 4.9)

The following tables provide estimates of the performance increase when using GCC 5.0 on byte structures (the largest number of elements in a vector) compared to GCC 4.9. The following cycle is used for evaluation:

Where:

Such a matrix is used to minimize calculations inside the loop to relatively fast additions and subtractions.

Compilation options "-Ofast" plus "-march = slm" for Silvermont , "-march = core-avx2" for Haswell and all combinations -DLDGSIZE = {1,2,3,4,8} -DSTGSIZE = {1,2 , 3,4,8}

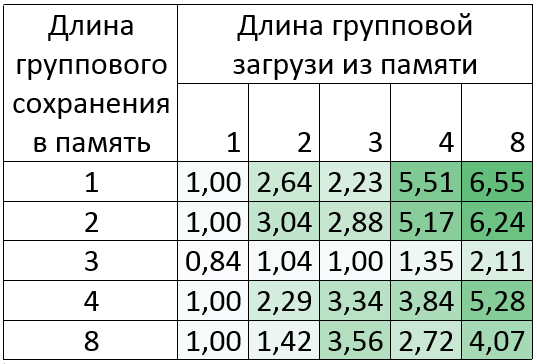

GCC 5.0 performance increase compared to 4.9 (how many times it accelerated, the more the better).

Silvermont : Intel Atom (TM) CPU C2750 @ 2.41GHz

Increase up to 6.5 times!

As can be seen from the table, the results for memory groups of length 3 are not very good. This is because such a conversion requires 8 “pshufb” instructions, the execution time of which on Silvermont is about 5 clock cycles. Despite this, the vectorization of other teams in the loop can give a greater increase. This can be seen in the example of a group of memory downloads of length 2, 3, 4 and 8.

Example GCC assembler for a memory load group of length 2:

Here, as in the example for Haswell below , it is worth noting that most of the ".LC" constants will be invariants in the loop, but only if there are free registers. Otherwise, they will have to be installed directly in phufb: “pshufb .LC0,% xmm3”. Such pshufb will be larger by the size of the address and potentially take longer to execute.

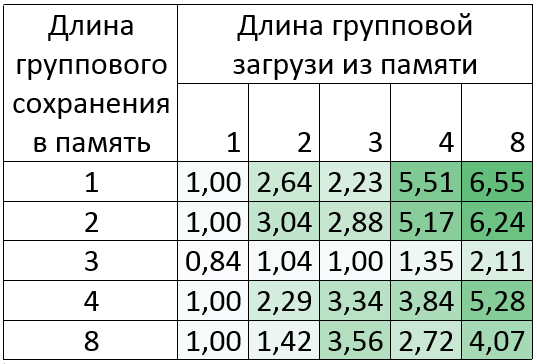

Haswell : Intel Core (TM) i7-4770K CPU @ 3.50GHz

Increase up to 3 times!

In this case, the results for memory groups of length 3 are much better, since on Haswell the instruction “pshufb” is executed in 1 clock cycle. Moreover, the largest increase here is for embedded vectorization of memory access groups of length 3.

Example GCC assembler for a memory load group of length 2:

From the foregoing, the conclusion follows: using GCC 5.0, you can significantly accelerate the performance of applications such as those described above. You can start trying now! Most edits are planned to be ported to Android NDK.

Compilers used in measurements:

You can download the example on which measurements were taken from the original text of the article in English .

As you probably already guessed, in GCC 5.0 the vectorization of group memory accesses has been significantly improved. Group memory access refers to an iterable sequence of calls. For example:

x = a[i], y = a[i + 1], z = a[i + 2]iterable over i is a group of memory downloads of length 3. The length of the group of memory accesses is the distance between the lowest and highest addresses in the group. For the example above, this is (i + 2) - (i) + 1 = 3 The

number of memory accesses in the group does not exceed its length. For example:

x = a[i], z = a[i + 2]iterable over i is a group of length 3, despite the fact that there are only 2 memory accesses.

GCC 4.9 vectorizes groups where length is a power of 2 (2, 4, 8 ...).

GCC 5.0 vectorizes groups where the length is 3 or degree 2 (2, 4, 8 ...). Other lengths are not vectorized, as they are very rare in real applications.

Most often, vectorization of a group of memory accesses is used when working with arrays of structures.

1. Image conversion, say, in the RGB structure. An example .

2. Work with N-dimensional coordinates (say, to normalize three-dimensional points). An example .

3. Multiplication of vectors by a constant matrix:

a[i][0] = 7 * b[i][0] - 3 * b[i][1];

a[i][1] = 2 * b[i][0] + b[i][1];

Overall in GCC 5.0 (compared to 4.9)

- There was a vectorization of a group of memory accesses of length 3

- Significantly improved vectorization of a group of memory downloads for any length

- The techniques of vectorizing memory access groups optimal for a particular x86 processor began to be used.

The following tables provide estimates of the performance increase when using GCC 5.0 on byte structures (the largest number of elements in a vector) compared to GCC 4.9. The following cycle is used for evaluation:

int i, j, k;

byte *in = a, *out = b;

for (i = 0; i < 1024; i++)

{

for (k = 0; k < STGSIZE; k++)

{

byte s = 0;

for (j = 0; j < LDGSIZE; j++)

s += in[j] * c[j][k];

out[k] = s;

}

in += LDGSIZE;

out += STGSIZE;

}

Where:

- c is a constant matrix:

const byte c[8][8] = {1, -1, 1, -1, 1, -1, 1, -1,

1, 1, -1, -1, 1, 1, -1, -1, 1, 1, 1, 1, -1, -1, -1, -1, -1, 1, -1, 1, -1, 1, -1, 1, -1, -1, 1, 1, -1, -1, 1, 1, -1, -1, -1, -1, 1, 1, 1, 1, -1, -1, -1, 1, 1, 1, -1, 1, 1, -1, 1, 1, 1, -1, -1, -1}; Such a matrix is used to minimize calculations inside the loop to relatively fast additions and subtractions.

- in and out are pointers to the global arrays “a [1024 * LDGSIZE]” and “b [1024 * STGSIZE]”

- byte is unsigned char

- LDGSIZE and STGSIZE are macros that determine the length of a group of memory downloads and save to memory, respectively

Compilation options "-Ofast" plus "-march = slm" for Silvermont , "-march = core-avx2" for Haswell and all combinations -DLDGSIZE = {1,2,3,4,8} -DSTGSIZE = {1,2 , 3,4,8}

GCC 5.0 performance increase compared to 4.9 (how many times it accelerated, the more the better).

Silvermont : Intel Atom (TM) CPU C2750 @ 2.41GHz

Increase up to 6.5 times!

As can be seen from the table, the results for memory groups of length 3 are not very good. This is because such a conversion requires 8 “pshufb” instructions, the execution time of which on Silvermont is about 5 clock cycles. Despite this, the vectorization of other teams in the loop can give a greater increase. This can be seen in the example of a group of memory downloads of length 2, 3, 4 and 8.

Example GCC assembler for a memory load group of length 2:

| GCC 4.9 | Gcc 5.0 |

| movdqa .LC0 (% rip),% xmm7 movdqa .LC1 (% rip),% xmm6 movdqa .LC2 (% rip),% xmm5 movdqa .LC3 (% rip),% xmm4 movdqu a (% rax,% rax), % xmm1 movdqu a + 16 (% rax,% rax),% xmm0 movdqa% xmm1,% xmm3 pshufb% xmm7,% xmm3 movdqa% xmm0,% xmm2 pshufb% xmm5,% xmm1 pshufb% xmm6,% xmm2 pshufb % xmm0 por% xmm2,% xmm3 por% xmm0,% xmm2 | movdqa .LC0 (% rip),% xmm3 movdqu a (% rax,% rax),% xmm0 movdqu a + 16 (% rax,% rax),% xmm1 movdqa% xmm3,% xmm4 pand% xmm0,% xmm3 psrlw $ 8 ,% xmm0 pand% xmm1,% xmm4 psrlw $ 8,% xmm1 packuswb% xmm4,% xmm3 packuswb% xmm1,% xmm0 |

Haswell : Intel Core (TM) i7-4770K CPU @ 3.50GHz

Increase up to 3 times!

In this case, the results for memory groups of length 3 are much better, since on Haswell the instruction “pshufb” is executed in 1 clock cycle. Moreover, the largest increase here is for embedded vectorization of memory access groups of length 3.

Example GCC assembler for a memory load group of length 2:

| GCC 4.9 | Gcc 5.0 |

| vmovdqa .LC0 (% rip),% ymm7 vmovdqa .LC1 (% rip),% ymm6 vmovdqa .LC2 (% rip),% ymm5 vmovdqa .LC3 (% rip),% ymm4 vmovdqu a (% rax,% rax), % ymm0 vmovdqu a + 32 (% rax,% rax),% ymm2 vpshufb% ymm7,% ymm0,% ymm1 vpshufb% ymm5,% ymm0,% ymm0 vpshufb% ymm6,% ymm2,% ymm3 vpshufb% ymm4 % ymm2 vpor% ymm3,% ymm1,% ymm1 vpor% ymm2,% ymm0,% ymm0 vpermq $ 216,% ymm1,% ymm1 vpermq $ 216,% ymm0,% ymm0 | vmovdqa .LC0 (% rip),% ymm3 vmovdqu a (% rax,% rax),% ymm0 vmovdqu a + 32 (% rax,% rax),% ymm2 vpand% ymm0,% ymm3,% ymm1 vpsrlw $ 8,% ymm0 ,% ymm0 vpand% ymm2,% ymm3,% ymm4 vpsrlw $ 8,% ymm2,% ymm2 vpackuswb% ymm4,% ymm1,% ymm1 vpermq $ 216,% ymm1,% ymm1 vpackuswb% ymm2,% ymm0,% ymm0 vpermq ymm0,% ymm0 |

Compilers used in measurements:

You can download the example on which measurements were taken from the original text of the article in English .