6 A/B tests you can run today (and get results)

- Translation

Email marketing is one of the main secrets of business success for a significant number of entrepreneurs. But there is a catch: it does not work automatically, no matter how exceptional you are. You have to do some serious work: build a mailing list and write emails that you constantly test and improve. In this article, we share the most effective A/B tests for email marketing that we have found.

Testing is a major component of email marketing that is often overlooked. The surest way to get great results is to check what exactly in your emails has the most tangible impact.

When you start testing your emails, you will gradually discover things you never knew were working, or not working.

That alone gives you a new perspective, but there is more. Testing leads to more successful email marketing, which in turn leads to overall business success.

How do you test your emails?

First, answer a simple question: “How do you conduct your A/B testing?”

It's easy. Most email marketing programs have built-in split-testing functionality. All you have to do is turn it on (sometimes you have to buy it separately), set up your tests, and wait for the results.

Here are some popular email marketing programs with built-in A/B testing:

• Infusionsoft, Constant Contact, HubSpot Email

• AWeber, Marketo, Mailchimp

This article is not a guide to each of these programs. It is a guide to running A/B tests with the built-in tools of any email marketing platform.

A small warning

As soon as you start testing your emails, you may begin to think that split testing is the solution to all your email marketing problems. It is not. It is, however, the best tool you have.

There is one problem in email marketing that I would like to draw your attention to - statistical significance. The problem is not in the testing itself, but in how some programs report the results.

And although most email marketing programs can run A/B tests, not all of them report the results accurately.

As Peter Borden explained in his Sumall article:

I have not seen an email program that actually takes the results and tries to determine whether they are statistically significant. Most of them do not. The program just looks at which version got the most opens or the most responses, and that one wins.

However, nothing prevents you from looking at the data yourself instead of trusting the declared “winner”. Checking significance is fairly easy.

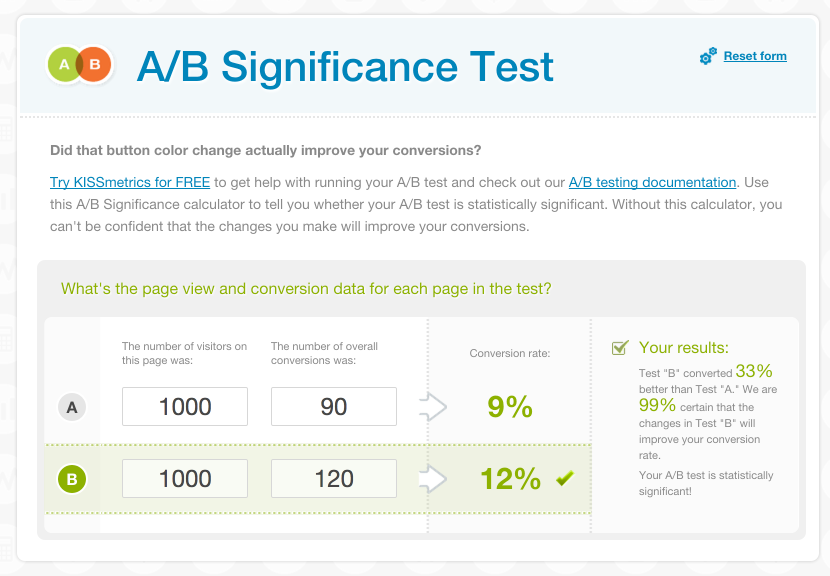

To determine the significance, use a special calculator. For example, this one. Just enter the data and analyze the results.

This calculator lets you work with the data yourself and determine the significance and reliability of your A/B tests.
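For readers who prefer to check the numbers themselves, here is a minimal sketch of the kind of calculation such a calculator typically performs: a two-proportion z-test on open rates. The function name and the counts are hypothetical, and this is one common test among several, not necessarily the method used by any particular calculator or email platform.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(opens_a, sent_a, opens_b, sent_b):
    """Return (z, two-sided p-value) for the difference in open rates."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled rate under the null hypothesis that both versions perform the same.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts, not data from the article.
z, p = two_proportion_z_test(opens_a=200, sent_a=1000, opens_b=250, sent_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("significant at 5%" if p < 0.05 else "not significant at 5%")
```

The sketch assumes both versions had at least some opens and some non-opens; with tiny lists the normal approximation behind it becomes unreliable.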

Now let's look at the tests you can start running today to get results that will change your business.

Timing: Which day of the week or time of day gives the highest open rate?

Timing is the question everyone in email marketing asks. What is the best time to send emails?

Everyone wants to know, but only a few actually try to find out in practice. Save yourself the time and the agony, and simply test it.

You see, there is no right answer to the question: "What is the best time?" Like everything in marketing (and in life), the answer depends on many circumstances.

Everything has a perfect time - tweets, posts, retweets, and so on. For email, timing has a major impact on whether anyone even sees your message, not to mention on open rate, click-through rate, and conversion.

Finding the ideal time is not only about the maximum open rate; it is also one of the main factors in getting the highest click-through and conversion rates.

For example, suppose you think an email sent at 7:30 in the morning will get the best open rate. Perhaps it will: people turn on their computers and start going through their inbox. But there is a catch. They are in a hurry, so they open the email but may not have time to act on it. You will get a high open rate, but your conversion... it will be just awful.

But what if you send the email at 4:30 in the afternoon, when most working people are bored, waiting for the end of the working day and looking for a distraction? They may see your email, open it and, quite likely, become your customers. Lower open rate? Maybe. Higher conversion? Of course.

See? It all depends on many things. Test and ultimately you will find the perfect time.
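A send-time test is only fair if the groups are comparable, so subscribers should be assigned to time slots at random rather than, say, alphabetically or by signup date. The sketch below shows one way to do that; the function name, addresses, and seed are hypothetical, and most email platforms perform this split for you.

```python
import random


def split_into_groups(subscribers, slots, seed=None):
    """Randomly assign each subscriber to one send-time slot, as evenly as possible."""
    rng = random.Random(seed)
    shuffled = list(subscribers)
    rng.shuffle(shuffled)
    groups = {slot: [] for slot in slots}
    # Deal the shuffled list out round-robin so group sizes differ by at most one.
    for i, subscriber in enumerate(shuffled):
        groups[slots[i % len(slots)]].append(subscriber)
    return groups


# Hypothetical list; the two slots are the times discussed above.
subscribers = [f"user{i}@example.com" for i in range(10)]
groups = split_into_groups(subscribers, ["07:30", "16:30"], seed=42)
for slot, members in groups.items():
    print(slot, len(members))
```

For each group, record opens, clicks, and conversions separately, since the slot with the best open rate is not necessarily the one with the best conversion.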

Subject: Which subject line increases open rates and conversion rates?

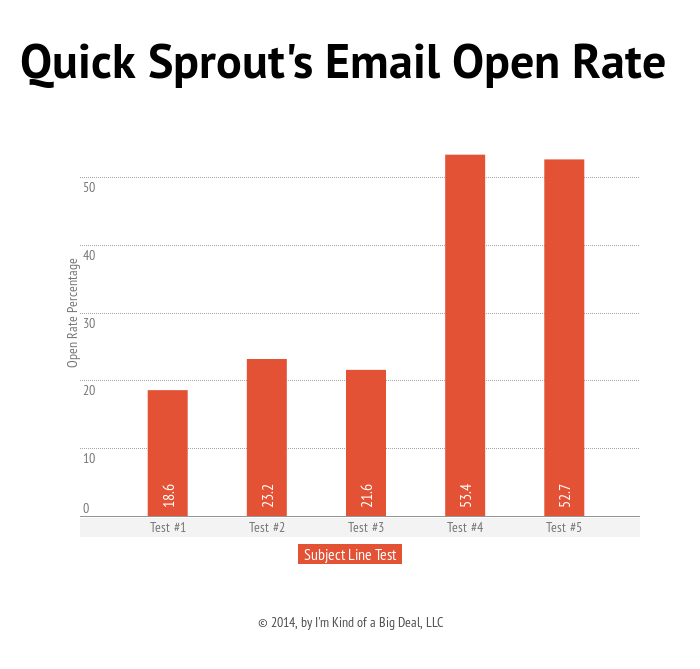

The most successful tests so far have involved subject lines. Look at the results one such test produced:

Testing subject lines can send your open rate sky-high. It took some effort, but in the end a formula was found that increased the open rate by 203%.

In email marketing, 203% can easily translate into tens of thousands of dollars in additional income. Think about it: what good is an email that no one opens? Statistically speaking, most people do not open messages that contain advertising. The global open rate is only 32%, and the click-through rate is a shocking 4%. Sorry, but that is life.

If you manage to get people to open your email, that in itself is a success.

The subject line exists to raise your open rate, but that is not all it does. It also shapes how readers perceive the message as a whole and whether they take the action you ask for.
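To see what a figure like this means in absolute terms, here is a small worked calculation. It reads "increased by 203%" as a relative increase, so the new rate is 3.03 times the old one; that reading, the 10% baseline, and the list size are assumptions for illustration, and only the 203% figure comes from the text.

```python
def rate_after_lift(baseline_rate, lift_percent):
    """Rate after a relative increase of lift_percent (203 means +203%)."""
    return baseline_rate * (1 + lift_percent / 100)


def extra_opens(list_size, baseline_rate, lift_percent):
    """Additional opens per mailing compared with the baseline rate."""
    new_rate = rate_after_lift(baseline_rate, lift_percent)
    return round(list_size * (new_rate - baseline_rate))


# Hypothetical baseline and list size; only the 203% figure comes from the text.
baseline = 0.10
print(f"new open rate: {rate_after_lift(baseline, 203):.1%}")
print("extra opens per 10,000 emails:", extra_opens(10_000, baseline, 203))
```

With these assumed numbers, a 10% open rate becomes 30.3%, which is 2,030 additional opens for every 10,000 emails sent.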

The result that is easiest to determine when testing the subject line is the open rate. But you also need to track how it affects your conversion rate.

You cannot afford not to test subject lines. They are the most important part of your entire message. But what exactly should you test? Here are 6 possible parameters to check:

1. Length: Which works best - a long or a short subject line?

The MailChimp website claims that 28-39 characters is the sweet spot. That said, testing in practice shows that shorter subject lines sometimes work better.

2. Curiosity: Which works best - a subject line that reveals the content of the email, or one that arouses curiosity?

Some marketers repeat, like a mantra, that you should always disclose the content of the email in its subject line. Others insist that curiosity is the only sure way to increase the open rate. So which is better?

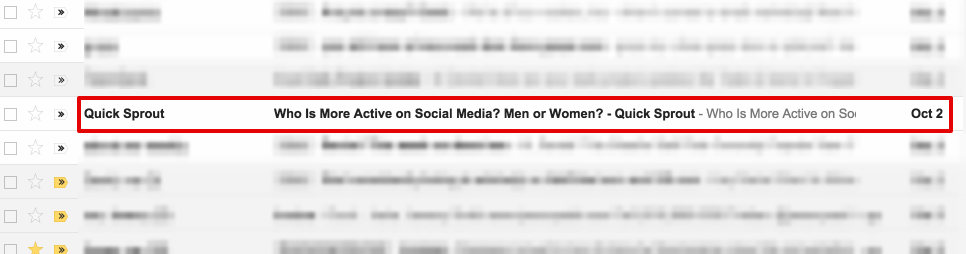

The subject line of the Quicksprout email reads: “Who is more active on social media? Men or women?”

Obviously, you will not know this until you check.

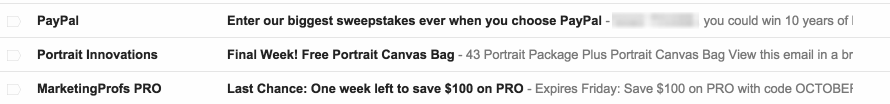

3. Marketing: Which works best - a subject line that pushes people to buy, or one that offers value to the reader?

Subject lines that offer the reader some value have been found to get a higher open rate. People look at their inbox and think: “What's in it for me?” If your email promises something good, they will most likely open it.

Note that these subject lines emphasize the benefit to the reader and, to a lesser extent, the thing being sold. Each of them offers some kind of value.

4. Uppercase letters: Which works best - a subject line with words written entirely in capitals, or one written in lowercase?

Most of us know that capital letters can scare away a significant number of readers. However, some marketers have used this technique quite successfully. Will it work with your emails?

5. Questions: Which works best - a subject line that contains a question, or one that does not?

Questions make readers think. To be more precise, they make readers think about your email. If the answer is not obvious, or if readers want to find out whether their answer is correct, your click count will grow.

6. Greetings: Which works best - a subject line that addresses the reader by name, or one that does not?

Addressing readers by name can be very powerful, or it can turn them away from the email completely. This is a tricky problem, because some readers like personalization, while others see it as tacky, artificial, or even an invasion of personal space.

The best way to find out is to test. Once you find subject lines that work for you, you will hold one of the strongest trump cards in email marketing.

Sender: Which works best - sending the email on behalf of the company or on behalf of a person?

Almost every email client shows the sender's name before the user opens the message.

In fact, the sender's name is the first thing people notice, even before they read the subject line. That is because many email clients display the sender's name ahead of the subject.

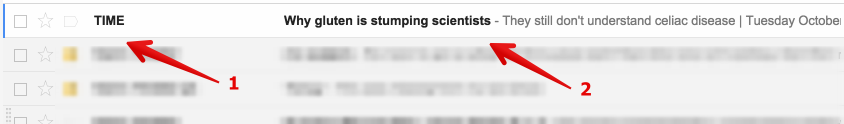

Here's how Gmail displays messages in the inbox: 1) The sender’s name, 2) The subject of the message, followed by a small preview of it.

It follows that the sender has a huge impact on the open rate and on the metrics that depend on it.

The best test is to send the same email both on behalf of an individual and on behalf of the company. Intuitively, people seem more likely to open a message from a person than to trust some faceless corporate entity.

But can you be so sure of that? Only if you check it yourself.

Greeting: Which works best - personalized or non-personalized greeting?

In all forms of marketing, personalization is seen as a major breakthrough.

However, some evidence suggests that it may not be that effective. Have you ever felt that your privacy was being intruded on? If so, you know that personalization can feel a little intimidating.

Back in 2012, researchers concluded that personalized emails do not impress customers. A report by Sunil Wattal of Temple University's Fox School of Business says:

Given the increased interest in cybersecurity caused by phishing, identity theft and credit card fraud, many buyers will be suspicious of any emails, especially with a personalized greeting.

Now, after several major cybersecurity scandals, buyers are probably even more cautious. But are your customers that careful? This has been tested more than once, and the results vary greatly, although the differences are often quite small.

Foolishadventure ran a test: a personalized email versus a non-personalized one. In the first mailing:

The personalized version's open rate was slightly higher, by 1%. Its click-through rate, in turn, was 2% higher.

However, in subsequent testing, the opposite was true. The personalized greeting had a lower open rate and CTR.

The point here is not that "personalization is better" or "generic is better", but that Foolishadventure should run a couple more tests.

Another key point is that it is you who should test to find out what the results will be.
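Differences of a point or two, like those above, are hard to tell apart from noise unless each version goes to a large number of recipients. The sketch below uses a standard normal-approximation formula for comparing two proportions to estimate how many recipients each version needs; the function name, the default 5% significance level and 80% power, and the example rates are assumptions for illustration, not figures from the Foolishadventure test.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(rate_a, rate_b, alpha=0.05, power=0.8):
    """Approximate recipients needed per version to detect rate_a vs rate_b."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    mean_rate = (rate_a + rate_b) / 2
    numerator = (
        z_alpha * sqrt(2 * mean_rate * (1 - mean_rate))
        + z_beta * sqrt(rate_a * (1 - rate_a) + rate_b * (1 - rate_b))
    ) ** 2
    return ceil(numerator / (rate_a - rate_b) ** 2)


# Hypothetical rates: a one-point difference versus a five-point difference.
print(sample_size_per_variant(0.20, 0.21))
print(sample_size_per_variant(0.20, 0.25))
```

Under these assumptions, detecting a one-point difference takes roughly twenty times as many recipients as detecting a five-point difference, which is one reason small tests on small lists so often contradict each other.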

Length: Which works best - the full text in the email, or a teaser with a link to click?

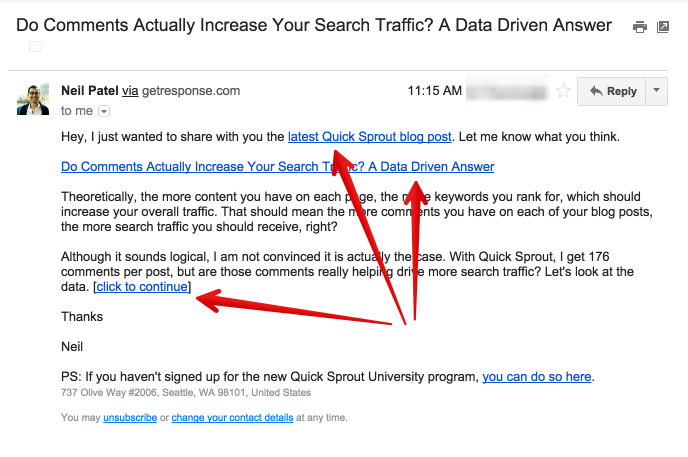

To read most of Neil Patel's emails in full, you have to click a link in them. Why? It comes down to the goal of his email marketing. In the case of Quicksprout, in the email shown here, Neil tries to give subscribers the most complete information available online and wants them to get the full experience of being on his blog: commenting and interacting at a deeper level. This is why they are given three links to follow.

Other marketers take the long-form approach. In particular, Ramit Sethi of IWT writes really long emails, and his email marketing is beyond praise.

Both approaches work. What you test depends on the goal of your email marketing. Do you want to drive traffic, improve conversions, or grow your readership? Decide on the goal of your marketing, or let the results of your testing show you.

Call to action: Which works best - a button or text?

Any good email contains a call to action (CTA) - something you ask the reader to do. It may be a simple “read the rest of the article”, or a bigger ask such as “register and get free trial access”.

Should you embed the call to action in a line of text? Should you use a button? Or both?

The CTA is one of the most important questions in email. The biggest and most shocking mistake you can make in email marketing is to leave out the call to action altogether! Whichever form you choose, put a CTA in your email, and then find out which form gives you the higher conversion.

This test is especially valuable because it lets you take a closer look at the metrics that matter most in email marketing - conversion and click-through.

You can (and should) run more tests on your call to action. As soon as you know which form converts best, you can start testing the color of the button, its size, and other details. You should also try different wording for the call to action, and even different goals for it.

Test Plan

So, you have read all this, and now you are thinking: “Oh, great. Now what?”

Let's briefly outline what to do next. First, ask your email marketing provider how to set up and run A/B tests. Second, run a test - just one. Once you have the results, move on to the next test.

Below is a list of the tests in the order in which you should run them. They work like an inverted pyramid: the first tests maximize the number of emails that get opened, which gives the later tests a larger pool of readers to convert.

1. Timing: Which day of the week or time of day gives the highest open rate?

2. Subject line: Which subject line increases open rates and conversion rates?

3. Sender: Which works best - sending the email on behalf of the company or on behalf of a person?

4. Greeting: Which works best - a personalized or non-personalized greeting?

5. Length: Which works best - the full text in the email, or a teaser with a link to click?

6. Call to action: What works best - a button or text?

Once you have completed these six basic tests, move on to more advanced ones, or simply start over and rerun each test.

Which A/B tests have you used? And which ones made a real difference?