Camel pull or Camel integration. Part 1

The story of one project.

Have you ever dreamed of camels? Neither had I. But once you are in your third year of working with Camel, camels are not the only thing you start dreaming about.

In this series I will share my experience, write about camels, and teach you how to cook them. There are three parts: the first is for those interested in the story and the creative struggles; the second is more technical and covers integration patterns and how to apply them; the third is about errors and debugging.

If you need to connect your services, here you will find out why Camel is a good fit. If you want to learn something new, we will start with the basics. And if you enjoy stories and the quirks that every team has, read on.

Integration task

I'll start with why we needed a service bus. We are developing a large system that, behind its back, we affectionately call the monster. The monster turned out big and scary, but in reality it is just one of many BPM systems (business process management systems). It all started a few years ago. Once, at a meeting, the project manager spoke about plans for the future:

- Colleagues, in the near future we plan to integrate with a large number of internal and external systems. Now we need to work out a systematic approach so that our analysts can begin preparing tasks.

Then he talked for a while about the systems we would have to integrate with. They included both well-known external systems, for example elibrary.ru, and internal ones that were yet to be created. By the end of the talk, the characteristic features of integrating with each type of system had been identified. For external systems there was great uncertainty in the description of the processes and of the domain objects (business objects) to be exchanged. On the other hand, the direction of data transfer (import or export) was clear, and additional requirements were emerging: protecting the data transmission channels, guaranteeing delivery, and building tools for correcting erroneous data. For the second group, the internal systems, there was more clarity. The functional purpose of each system was clearly described, which made it possible to work out the set of objects to be transferred. For these systems the uncertainty was of a different kind: will we do it or not, will we have time to implement it or not. Without going into the details of the application area, I will confine myself to the typical tasks we concentrated on.

- Information must be loaded into the main system using a standard protocol. At the time of the discussion, the protocols mentioned for external systems were HTTP and FTP; for internal ones, JMS, HTTP, NFS, SMB and/or an RMI interface. What architecture would let us solve this problem?

- Suppose you need to export information from the main system and transfer it to an external system using one of the standard protocols. How could such a problem be solved?

- Or you need to transfer one, two, n business objects. How could we prepare the data, and what format should we use?

- Suppose you want to send an email through a mail server that is temporarily unavailable. The user would either have to wait until the server is available again or receive a message saying that the work cannot be finished now. How could we build a system that guarantees delivery and does not block users?

- Suppose we need to collect information from an external system at 1 or 3 in the morning, but our system is down for maintenance at that time. How do we make sure that the import and export processes do not stop while the main system is unavailable?

- And the last task: you must be able to quickly change the login and password for an external system, or switch the communication protocol from FTP to SFTP. How could we make the settings flexible to change without disrupting the operation of the main system?
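The mail-server task above (guaranteed delivery without blocking the user) is essentially a store-and-forward problem. Below is a minimal sketch of the idea in plain Java, not tied to any framework. The `Outbox` class and its method names are hypothetical, and it keeps messages in memory only, whereas a real solution would rely on a persistent queue.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.function.Predicate;

/**
 * Toy store-and-forward outbox (hypothetical, for illustration only).
 * Callers enqueue a message and return immediately; a background job
 * later calls flush() until the transport accepts the messages.
 */
final class Outbox {
    private final Deque<String> pending = new ArrayDeque<>();
    // Returns true when the message was delivered, false when the server is unavailable.
    private final Predicate<String> transport;

    Outbox(Predicate<String> transport) {
        this.transport = transport;
    }

    /** Non-blocking for the user: the message is only stored. */
    synchronized void submit(String message) {
        pending.addLast(message);
    }

    /**
     * Tries to deliver stored messages in order. Stops at the first failure
     * and keeps the remaining messages for the next attempt.
     * Returns the number of messages delivered in this call.
     */
    synchronized int flush() {
        int delivered = 0;
        while (!pending.isEmpty()) {
            if (!transport.test(pending.peekFirst())) {
                break; // transport is down: retry later, nothing is lost
            }
            pending.removeFirst();
            delivered++;
        }
        return delivered;
    }

    synchronized int pendingCount() {
        return pending.size();
    }
}
```

The user-facing call is `submit`, which never waits for the mail server; `flush` would be triggered by a timer. Swapping the in-memory deque for a broker queue is what makes the delivery survive restarts.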

After discussing the tasks, we decided to create a stand-alone application that would connect the main system with the others. The idea of using an intermediate link is not new; in the integration world it is known as an enterprise service bus, or ESB. Based on the wording of the tasks, let's outline the functionality our application needed. It must be able to:

- interact with other systems using standard protocols: HTTP, FTP, as well as JMS or an RMI interface

- read, write and create files on the local file system, to work with NFS

- send mail through an SMTP server

- parse one of the transport formats: XML or JSON

- have flexible settings

- describe format conversion rules in one of two programming languages, Java or Groovy. We had worked with these languages, so we could count on not having extra learning overhead.

- the ability to work with XSLT and XPath would be an added plus

From reasoning to practice

After some time, when the search was only gaining momentum, the analysts wrote the first specification for a customer request:

- Guys, we have written a specification here, and it needs to be done quickly. The customer wanted it yesterday, so do your best.

This happens to us often, but we always manage to deliver new functionality on time. This time was no exception: a large amount of work and tight deadlines awaited us. Although the service bus could not be used for this particular specification, we treated it as the first practical task for building a prototype.

In the meantime, we had to choose the technologies our prototype would be built on. Reinventing the wheel and starting from scratch is fun, but too expensive. Proprietary solutions were also ruled out: the result was needed quickly, and approvals and financial matters take time. So we turned to open source projects. At that time the choice was small, so we could appreciate the merits of the “camel” at first sight.

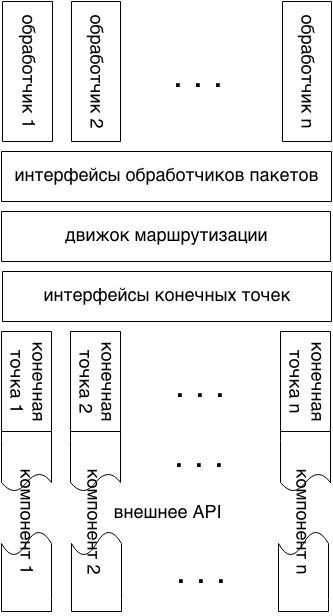

As the diagram shows, Apache Camel is a modular, easily extensible framework for integrating applications. Its main structural elements are the component model, the routing mechanism and the message processing mechanism. The component model is a set of factories that create routing endpoints; an endpoint may, for example, be an abstraction that sends messages to a JMS queue on a broker. The routing mechanism connects endpoints with message processing abstractions. Finally, the message processing mechanism lets you manipulate message data: convert it to another format, validate it, add new content, log it and much more. All three architectural parts are modular, and thanks to this Camel's capabilities keep expanding. You can read about the architecture in detail on Wikipedia and on the official website.

Although it had appeared only recently, Apache Camel had already acquired a solid collection of components. We found components that covered all our requirements: JMS, HTTP, FTP, file, SMTP, XPath, XSLT, XStream, Groovy, Java. Like the author of Which Integration Framework Should You Use - Spring Integration, Mule ESB or Apache Camel?, we chose Camel. That article compares three frameworks: Spring Integration, Mule ESB and Apache Camel. The author describes the key advantage as follows:

... Apache Camel due to its awesome Java, Groovy and Scala DSLs, combined with many supported technologies.

The ability to use a fluent Java DSL instead of clumsy XML became a big advantage for us. The question arises: what makes it clumsy? XML is a great markup language, but JSON and YAML have long been preferred over it, thanks to their simplicity, better readability, lower overhead and simpler parsing algorithms. Programming languages such as Java, Groovy and Scala are fully supported by modern IDEs, which means that, unlike with XML, debugging and refactoring are possible. We had no doubts about Camel, and it became the basis of our service bus. The fact that the project was already used by other companies added to our confidence that the choice was right.
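As an aside, the three building blocks Camel is made of (a component model, routing and message processing) can be pictured with a toy model in plain Java. This is only an illustration: the `Component`, `Endpoint`, `Route` and `InMemoryComponent` types below are invented for the sketch and are far simpler than Camel's real interfaces with similar names.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;

/** An endpoint is where a message ends up (toy version, invented for this sketch). */
interface Endpoint {
    void send(String body);
}

/** Component model: a factory that creates endpoints from an address. */
interface Component {
    Endpoint createEndpoint(String address);
}

/** Routing: ties a chain of processing steps to a target endpoint. */
final class Route {
    private final List<UnaryOperator<String>> processors = new ArrayList<>();
    private Endpoint target;

    /** Message processing: each step transforms the message body. */
    Route process(UnaryOperator<String> step) {
        processors.add(step);
        return this;
    }

    Route to(Endpoint endpoint) {
        this.target = endpoint;
        return this;
    }

    /** Runs the body through every step in order, then hands it to the endpoint. */
    void handle(String body) {
        if (target == null) {
            throw new IllegalStateException("Route has no target endpoint");
        }
        String current = body;
        for (UnaryOperator<String> step : processors) {
            current = step.apply(current);
        }
        target.send(current);
    }
}

/** A fake component whose endpoints record messages in memory (a stand-in for a queue). */
final class InMemoryComponent implements Component {
    final List<String> delivered = new ArrayList<>();

    @Override
    public Endpoint createEndpoint(String address) {
        return body -> delivered.add(address + " <- " + body);
    }
}
```

Because each part is behind its own small interface, a new transport means a new `Component` only, and the routes and processing steps stay untouched. That is the sense in which the modularity described above lets the framework keep growing.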

The main question remained - how to integrate Camel into our monster.

The agony of choice: JMS vs RMI

The integration of the service bus with our monster could be implemented with any of the many components Camel supports. Based on the tasks above, we formulated the requirements: communication must be stable and must guarantee message delivery. We shortlisted three options: two synchronous ones, RMI and HTTP, and one asynchronous, JMS. Of the three, we settled on the two that were simplest for our Java projects: JMS and RMI. JMS (Java Message Service) is a messaging standard; it defines the rules for sending, receiving, creating and browsing messages. RMI (Remote Method Invocation) is a programming interface for remote procedure calls; it lets code in one JVM call methods of objects living in another. A standard remote call involves serializing Java objects and transmitting them. In fairness, contrasting JMS with RMI is not entirely correct, because JMS can serve as a transport and be an integral part of RMI. What we are comparing is the standard RMI implementation and one JMS implementation, ActiveMQ.

Previously, we had used RMI to integrate two applications. Why was it chosen then? When both applications are written in Java, nothing is simpler than RMI: it is enough to describe an interface and register an object that implements it. But we ran into problems with RMI when transferring large amounts of data between the applications: memory filled up and the applications “collapsed”. We looked for ways to solve this and discussed it with JVM developers at JavaOne. It turned out that the garbage collectors inside a virtual machine and the distributed garbage collector are two different things. It all came down to the fact that for a standard garbage collector you can choose its type and tune its parameters, whereas for the distributed one there was no such option.

As for other differences, RMI limited integration to applications running on the JVM, while JMS did not. Besides the difficulties described, there was also a desire to learn something new: to abandon RMI and use an alternative solution.

The first prototype of Camel

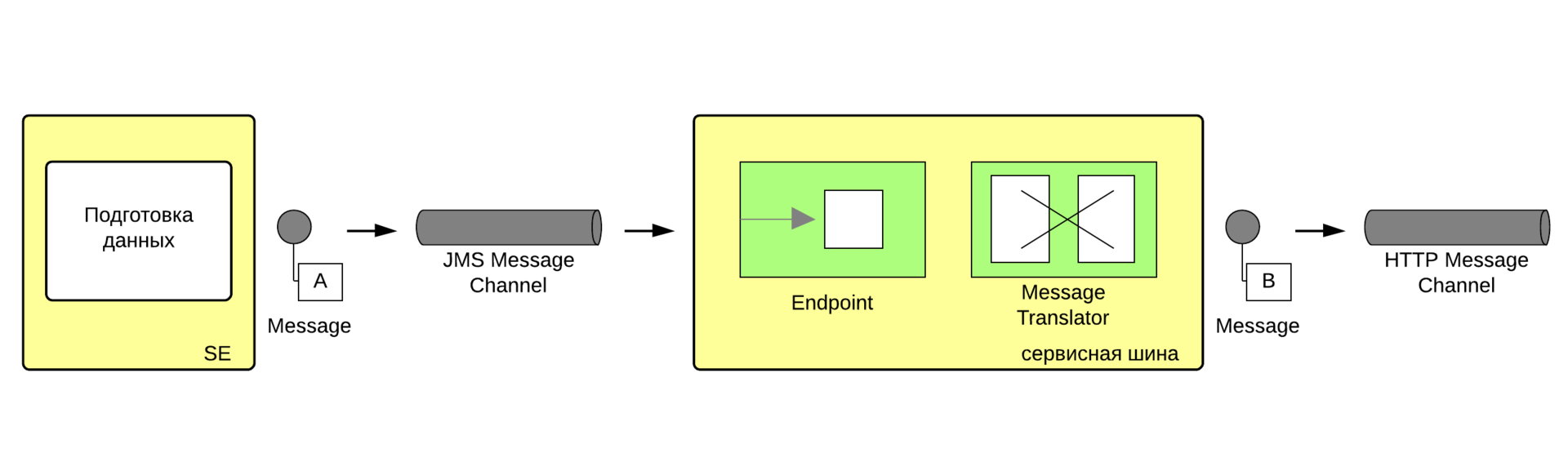

Let's get back to creating the prototype. The first practical task for the service bus was this: the user starts a data export, the system prepares the data and sends it to the service bus. All the delivery work then rests with the bus. The figure shows the problem statement in the notation of enterprise integration patterns (EIP).

The service bus receives messages from a JMS channel, converts them and sends them over HTTP. The JMS channel and the HTTP sending channel marked in the figure were to be implemented with the Camel JMS and Jetty components. The data conversion could be implemented in Java and/or with a template engine such as Apache Velocity (VM templates). In Java DSL, the proposed data transfer scheme takes a single statement. Example:

    from("jms:queue:se.export")
        .setHeader(Exchange.HTTP_METHOD, constant(org.apache.camel.component.http.HttpMethods.POST))
        .process(new JmsToHttpMessageConvertor())
        .inOnly("jetty:{{externalsystem.uri}}");

The example above shows a route written in Java DSL. A route describes the path a message travels. Camel routes can be written in two ways: Java DSL and XML DSL. A route has one start point and one or more end points, marked by the from and to tokens respectively, and describes the message path from the start point to the end points. If the end points are listed sequentially, the message is sent to the first one, the service bus waits for the response, and that response is then sent to the next point. There are also routes that choose the required endpoint (Dynamic Router) or send a message to several points at once (Recipient List). The argument of from and to is a string containing a URI. The URI is a triple consisting of the Camel component name, the resource identifier and the connection parameters. Let's look at an example:

    from("jms:queue:se.export?timeToLive=10000")

This describes an entry point that uses the JMS component. The JMS component reads data from the resource queue:se.export. Here queue is the type of the message channel, which can be either a queue or a topic. Next comes the channel name, se.export; the message broker will create a queue with this name. The last part of the URI holds the endpoint parameters: timeToLive=10000 says that a message has a lifetime of 10 seconds.
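The three-part structure of an endpoint URI can be illustrated with a small parser. This is a sketch in plain Java that only mirrors the description above; `EndpointUri` is a hypothetical class, and Camel's own URI handling is more elaborate. The sample string in the usage note uses `timeToLive`, which is how the JMS component spells this option.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical holder for the three parts of an endpoint URI: component, resource, parameters. */
final class EndpointUri {
    final String component;
    final String resource;
    final Map<String, String> parameters;

    private EndpointUri(String component, String resource, Map<String, String> parameters) {
        this.component = component;
        this.resource = resource;
        this.parameters = parameters;
    }

    /** Splits "component:resource?key=value&key2=value2" into its parts. */
    static EndpointUri parse(String uri) {
        int colon = uri.indexOf(':');
        if (colon <= 0) {
            throw new IllegalArgumentException("Missing component name: " + uri);
        }
        String component = uri.substring(0, colon);
        String rest = uri.substring(colon + 1);

        // Everything before '?' is the resource, everything after it is the parameter list.
        int question = rest.indexOf('?');
        String resource = question < 0 ? rest : rest.substring(0, question);

        Map<String, String> parameters = new LinkedHashMap<>();
        if (question >= 0) {
            for (String pair : rest.substring(question + 1).split("&")) {
                if (pair.isEmpty()) {
                    continue; // tolerate "a?b=1&&c=2" and a trailing '?'
                }
                int eq = pair.indexOf('=');
                if (eq < 0) {
                    parameters.put(pair, "");
                } else {
                    parameters.put(pair.substring(0, eq), pair.substring(eq + 1));
                }
            }
        }
        return new EndpointUri(component, resource, parameters);
    }
}
```

For `EndpointUri.parse("jms:queue:se.export?timeToLive=10000")` the component is `jms`, the resource is `queue:se.export`, and the parameter map holds `timeToLive` with the value `10000`.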

The example shows how we planned to organize the data transfer; the next article will contain more real code and examples.

So, we solved the data transfer problem and created a prototype of the integration bus, which was built on Camel and was almost ready for deployment. What remained was to make it correctly and conveniently configurable.

Prototype setup

This topic matters a lot to me, because practical advice and implementation examples are hard to find.

Our set of environments is as follows:

Each developer has his own copy of the software and fully configures it before launching it. There are two test environments for manual testing and, of course, the production system. The requirements were:

- fine-tuning on each environment should be done simply by editing a minimal number of parameters in the system configuration files

- large-scale customization (adding new features) should roll out seamlessly to all environments

In addition, while the build tool (Maven) could be used on the developers' machines, it was not available on the test environments or the production server.

This task has plenty of difficulties: Camel is tied to a JMS broker, which forces you to use either different channels or different message brokers for different environments. We took the simplest path and ran an ActiveMQ broker embedded in the bus. With that, all that remains is to provide connection settings for the different servers.

Let's move on to examples of using parameters in Camel settings:

- Example from camel-config.xml

classpath:system.properties classpath:smtp.properties

This example sets two configuration files for Spring.

- An example that uses the settings specified by the two files from the previous example.

The properties themselves are referenced with the string "${config.someService.routeStartTime}".

- An example in which several settings files are passed to the Camel context

…

- An example of using parameters in Camel routes in Java DSL

    from("jms:queue:email.send")
        .setHeader("to", simple("${headers.email}"))
        .setHeader("from", simple("${properties:config.smtp.from}"))
        .to("{{config.smtps.server}}?username={{config.smtps.user}}&password={{config.smtps.password}}&contentType=text/html&consumer.delay=60000");

Several ways of addressing parameters are used here at once:

- in the Simple language, with the string “${properties:config.smtp.from}”

- in the endpoint URI, with the string “{{config.smtps.server}}”

Parameter names can be arbitrary; the strings are taken from the example above.
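To show what the `{{...}}` placeholders in an endpoint URI amount to, here is a simplified resolver in plain Java. It is a sketch under stated assumptions, not Camel's properties component: `PlaceholderResolver` is a hypothetical class, the server address and user name in the usage note are made-up values, and only the `{{key}}` form is handled.

```java
import java.util.Properties;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper that substitutes {{key}} placeholders with values from Properties. */
final class PlaceholderResolver {
    // Matches {{some.key}} and captures the key between the double braces.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\{\\{([^{}]+)\\}\\}");

    static String resolve(String template, Properties properties) {
        Matcher matcher = PLACEHOLDER.matcher(template);
        StringBuffer result = new StringBuffer();
        while (matcher.find()) {
            String key = matcher.group(1).trim();
            String value = properties.getProperty(key);
            if (value == null) {
                // Failing fast makes a missing setting visible at startup, not at send time.
                throw new IllegalArgumentException("Unknown property: " + key);
            }
            // quoteReplacement keeps '$' and '\' in values from being treated as regex syntax.
            matcher.appendReplacement(result, Matcher.quoteReplacement(value));
        }
        matcher.appendTail(result);
        return result.toString();
    }
}
```

With `config.smtps.server` set to `smtps://mail.example.org:465` and `config.smtps.user` set to `bus`, resolving `{{config.smtps.server}}?username={{config.smtps.user}}` gives `smtps://mail.example.org:465?username=bus`. Each environment then needs only its own properties file, while the route text stays the same.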

Let's imagine a real problem:

there is a service that sends emails through the service bus to an SMTP server; on the production system it must send messages to the actual users, while on the test system all emails must go to a single mailbox.

The task then turns into adding different logic to some routes depending on whether they run on the test or the production system.

Here is an example of how such a task can be solved using parameters in Camel routes.

    from("jms:queue:event.recoverypass")
        .setHeader("to", isDebug() ? simple("${properties:config.smtp.to}") : simple("${headers.email}"))
        .setHeader("from", simple("${properties:config.smtp.from}"))
        … // some other headers
        .choice()
            .when(header("password").isNotNull())
                .setHeader("subject", simple("${properties:config.passwordNotify}"))
                .to("velocity:vm/email/newPasswordNotify.vm")
            .otherwise()
                .setHeader("subject", simple("${properties:config.recoverypass}"))
                .to("velocity:vm/email/recoveryPassword.vm")
        .end()
        .to("{{config.smtps.server}}?username={{config.smtps.user}}&password={{config.smtps.password}}&contentType=text/html&consumer.delay=60000");

The sample code above is the route for the password recovery service. It implements the following logic: the user clicks the reset password button and is sent an email with a temporary link for generating a new password; the user follows the link, and the same route sends the new password by mail. This is probably a good place to end the first part; all that remains is to take stock.

Summary

The bus prototype was completed: several routes for sending messages appeared, along with configuration files that made the bus easy to configure and deploy. After these first steps in mastering Camel, the great potential of the approach was already evident. The simplicity and conciseness of routes is captivating; it seems that everything can be done in one line. But keep in mind that working with routes requires a change in thinking. Programmers who have not dealt with them before would do best to turn straight to the book “Enterprise Integration Patterns”. The effect of understanding integration patterns is comparable to the effect of knowing design patterns in classic OOP.

That's all for now. See you in the next part; as a reminder, it will be devoted to Camel use cases.