EMC XtremIO and VDI: effective, but still raw

A couple of days ago, our two-week trial of EMC XtremIO came to an end.

CROC has already shared its impressions of a similar trial, but mainly as synthetic test results and enthusiastic statements, which look impressive for any storage system but are not very informative. I currently work on the customer side rather than for an integrator, so the applied aspect matters more to us, and the testing was organized accordingly. But first things first.

General information

XtremIO is a (relatively) new EMC product, or rather a product of XtremIO, a company acquired by EMC. It is an all-flash array built from five modules: two single-unit (1U) Intel servers acting as controllers, two Eaton UPSs, and one 25-drive disk shelf. Together these form a brick, XtremIO's unit of expansion.

The solution's killer feature is inline ("on the fly") deduplication. This was of particular interest to us because we run VDI with full clones, and we have a lot of them. Marketing promised that they would all fit on a single brick (brick capacity: 7.4 TB). Right now they occupy 70+ TB on a conventional array.

I forgot to photograph the subject of this story, but the CROC post shows everything in detail. I am even fairly sure it is the very same array.

Installation and setup

The device arrived mounted in a mini-rack. Two of us naively went to pick it up and nearly strained ourselves just dragging it from the car to the trolley. Once it was disassembled into components, however, I carried it into the data center and mounted it alone without much difficulty. Mounting the rails involves driving a large number of screws, so I spent a long time with a screwdriver, bent over at a right angle, but the cabling was a pleasure: all cables are of necessary and sufficient length, plug in and out easily, and yet are protected against accidental disconnection. (This was especially appreciated after testing another flash storage system, Violin, where for some reason the cable connecting two adjacent sockets was one and a half meters long, and one of the Ethernet patch cords, once inserted, could not be pulled out without an additional flat tool such as a screwdriver.)

A specially trained engineer came to configure the array. It was very interesting to learn that, at the moment, updating the management software involves data loss. So does adding a new brick to the system. The simultaneous failure of two disks also leads to data loss (a second disk can be lost safely only after the rebuild has finished), but this is promised to be fixed in the next update. As for me, I would rather they handled the first two points properly.

To fully configure and manage the array, the system requires a dedicated server (XMS, the XtremIO Management Server). It can be ordered as a physical addition to the kit (I suspect this raises the cost of the solution by the price of the server's weight in gold), or you can simply deploy a virtual machine (Linux) from a template. You then upload the software archive to it and install it, and after that perform the setup using half a dozen default accounts. Overall, the initial configuration process differs from that of ordinary storage, and not, I would say, in the direction of simplicity. I hope it will be improved.

Operation, deduplication, and the incident

Without further ado, we decided to move part of the production VDI onto the array under test and see what would happen. We were particularly interested in dedup efficiency. I did not believe in the 10:1 ratio: even though we use full clones, profiles and some user data live inside the VMs (although their volume is usually small relative to the OS), and besides, I am simply skeptical of advertised miracles.

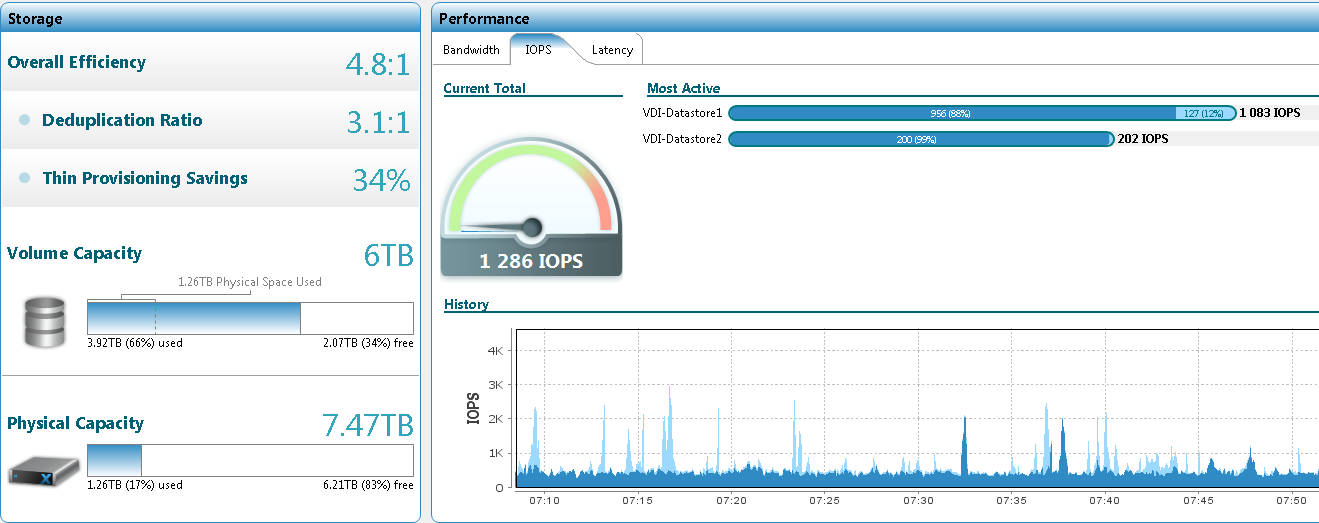

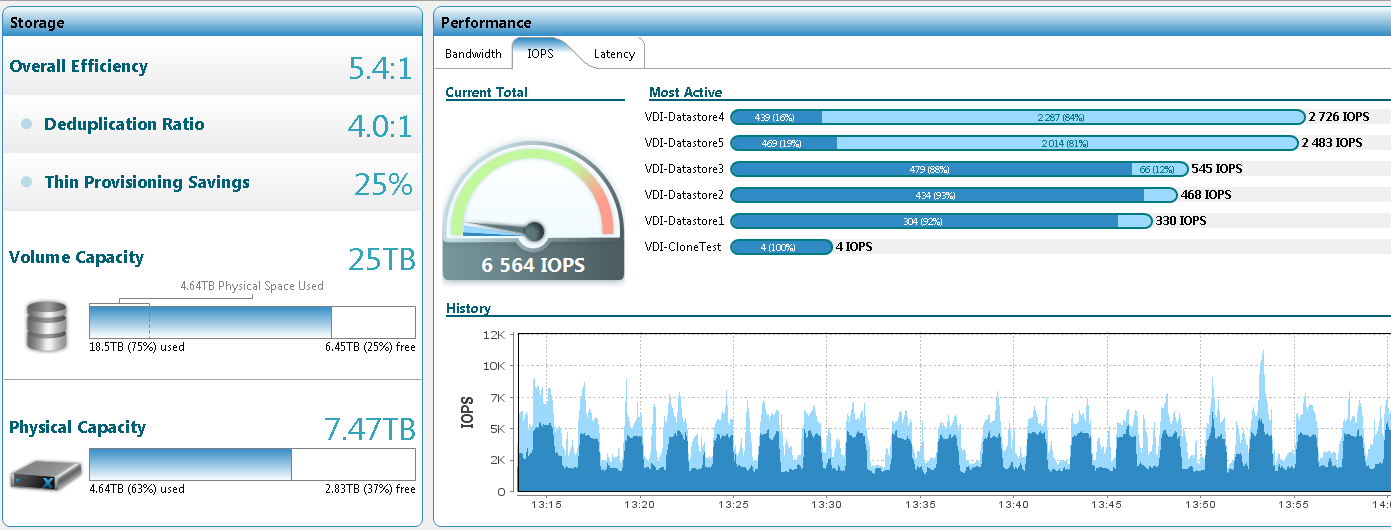

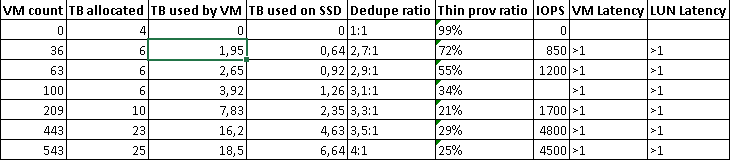

The first 36 VMs we moved took up 1.95 TB on the datastore and 0.64 TB on the drives. The deduplication ratio was 2.7:1. Admittedly, the first batch consisted of the virtual desktops of IT department staff, who especially like to keep everything inside their PC, whether physical or virtual. Many even have additional drives. As the system filled up with new machines, the ratio grew, but only modestly and without sudden jumps. Here's an example screenshot of a system with hundreds of virtual machines:

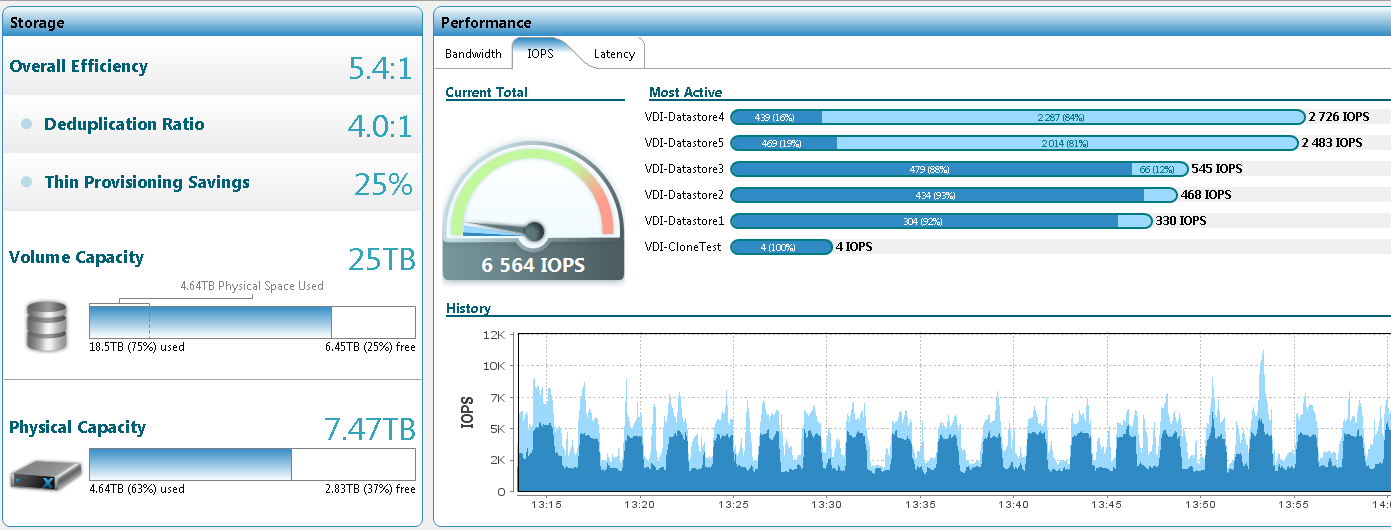

And here's 450: The

average size of vmdk is 43 GB. Inside is Windows 7 EE.

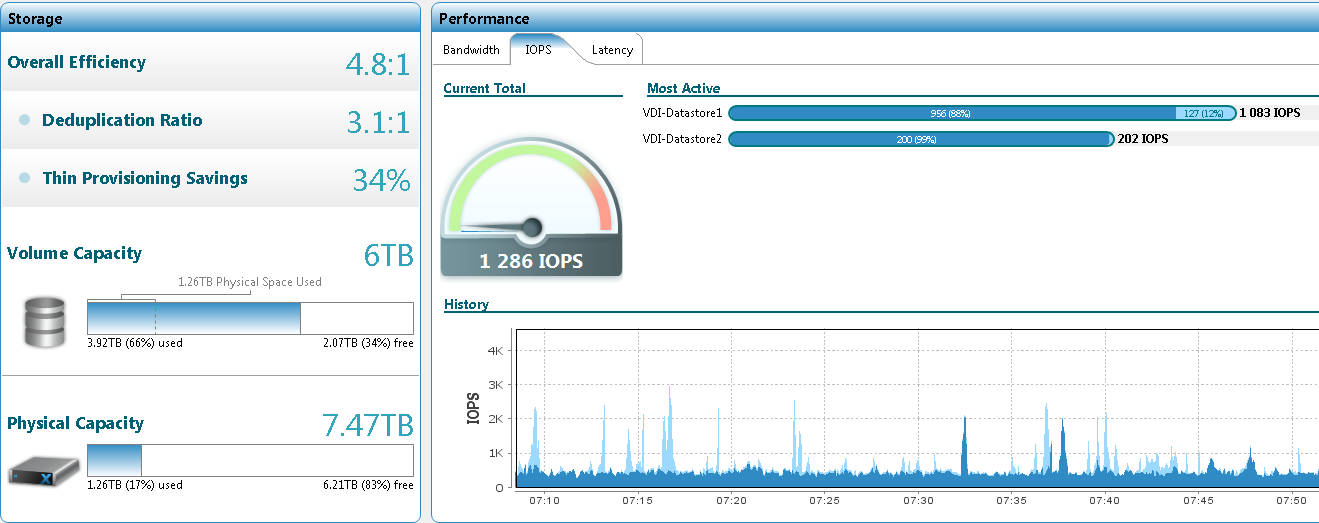

At that point I decided to stop and set up a new test: create a new datastore and fill it with "clean" clones freshly deployed from the template, checking both deployment time and dedup. The results are impressive:

100 clones created;

4 TB of datastore space filled;

disk space usage increased by 10 GB.

Screenshot (compare with the previous one):

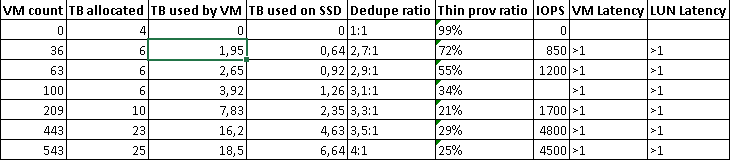
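
As a rough back-of-the-envelope check of the clean-clone result, the three figures above can be turned into a ratio. This is only a sketch: it assumes decimal units (1 TB = 1000 GB) and treats the 10 GB growth as the entire physical cost of the 100 clones. The function and variable names are made up for illustration.

```python
def logical_to_physical_ratio(logical_gb: float, physical_gb: float) -> float:
    """Return how many GB of logical data are stored per GB of physical flash."""
    if physical_gb <= 0:
        raise ValueError("physical_gb must be positive")
    return logical_gb / physical_gb


# Figures quoted in the text; decimal units (1 TB = 1000 GB) are an assumption.
CLONES = 100
DATASTORE_FILLED_GB = 4 * 1000   # "4 TB" of datastore space filled
PHYSICAL_GROWTH_GB = 10          # disk space usage increased by 10 GB

avg_clone_gb = DATASTORE_FILLED_GB / CLONES
clean_clone_ratio = logical_to_physical_ratio(DATASTORE_FILLED_GB, PHYSICAL_GROWTH_GB)
physical_per_clone_mb = PHYSICAL_GROWTH_GB * 1000 / CLONES

print(f"average clone size:      {avg_clone_gb:.0f} GB")
print(f"clean-clone ratio:       {clean_clone_ratio:.0f}:1")
print(f"physical cost per clone: {physical_per_clone_mb:.0f} MB")
```

Under these assumptions, each freshly deployed clone costs on the order of 100 MB of flash, roughly 400:1, which is far above the 2.7:1 seen on desktops that had already been in use.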

Here is the complete table with the results:

There was also a plan to power on these 100 clones and see (a) how the system behaves during a boot storm and (b) how the dedup ratio changes after power-on and some period of the clean systems running. However, these activities had to be postponed, because something happened that could be called unpleasant behavior: one of the five LUNs locked up and stalled the entire cluster.

It must be admitted that the root cause lies in the host configuration. Because IBM SVC is used as a storage aggregator (whether that was a mistake is another question), Hardware Assisted Locking had to be disabled on the hosts. It is the most important VAAI primitive: when metadata changes, it allows locking only a small region of the datastore rather than the whole datastore. So why the LUN got locked is understandable. Why it did not unlock, not so much. And it is completely incomprehensible why it stayed locked even after it was disconnected from all hosts (this was necessary, because until we did so no VM could be powered on or migrated, and as luck would have it, at that very moment some Very Important People in the company needed to restart their machines) and after every conceivable and inconceivable timeout had passed. According to the "old-timers", similar behavior was seen on old IBM DSxxxx arrays, where, it seems, there was a button for releasing the lock.

No such button is provided here. As a result, 89 people were left without access to their desktops for three hours, and we were already thinking about restarting the array and looking for the safest and most reliable way to do it (restarting the controllers one by one did not help).

EMC support came to the rescue. First they spent a long time digging the right engineer out of their depths, and he then spent a long time checking and rechecking, looking through every tab and console. The solution itself turned out to be simple and elegant:

vmkfstools --lock lunreset /vmfs/devices/disks/vml.02000000006001c230d8abfe000ff76c198ddbc13e504552432035

That is, a LUN reset (unlock) command that can be issued from any host.

Live and learn.

The following week I frantically moved the desktops back to the HDD storage, postponing all remaining tests until later, if time allowed. It did not: the array was taken from us two days earlier than promised, because the next tester was not in Moscow but in Ufa (the suddenness and organization of the return procedure deserve a few separate, not entirely printable remarks).

Summary

Conceptually, I liked the solution. If we tidy up our VDI infrastructure a little by moving user data to a file server, all of our 70 TB could be placed, if not on one brick, then on two or three. That is 12-18 rack units versus a whole rack. And, of course, one cannot help liking the speed of svMotion and clone deployment (under a minute). However, it is still raw. Data loss during updates and scaling cannot be considered acceptable in the enterprise. And the reservation lock incident was also unsettling.
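
The sizing argument can be made explicit with a small calculation. This is a sketch under stated assumptions: the 70 TB and 7.4 TB figures come from the text, the 6U brick height is inferred from "12-18 units" for two or three bricks, and the helper name is hypothetical. It shows the data-reduction ratio the array would have to sustain for the whole VDI to fit on one, two, or three bricks.

```python
VDI_LOGICAL_TB = 70.0     # current footprint on the conventional array
BRICK_CAPACITY_TB = 7.4   # brick capacity quoted in the text
BRICK_HEIGHT_U = 6        # assumption: 12-18U for 2-3 bricks implies 6U each


def required_ratio(logical_tb: float, bricks: int,
                   brick_tb: float = BRICK_CAPACITY_TB) -> float:
    """Minimum logical-to-physical ratio needed to fit logical_tb on the bricks."""
    if bricks < 1:
        raise ValueError("bricks must be at least 1")
    return logical_tb / (bricks * brick_tb)


for bricks in (1, 2, 3):
    ratio = required_ratio(VDI_LOGICAL_TB, bricks)
    print(f"{bricks} brick(s), {bricks * BRICK_HEIGHT_U}U: needs about {ratio:.1f}:1")
```

At the 2.7:1 ratio measured on the first batch of desktops, even three bricks (about 3.2:1 required) would fall slightly short, which is why moving user data out of the VMs matters for this plan.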

On the other hand, it somehow looks like an unfinished Nutanix. I can judge the latter only by presentations, though, and those are always beautiful.

And about the price. CROC in its posts mentioned a hard-to-believe figure of 28 million. I heard about six and a half, and that was not the minimum. However, I now have neither access to nor interest in price negotiations, so I will not vouch for it.
