Balancing traffic between web servers using IP CEF on network equipment

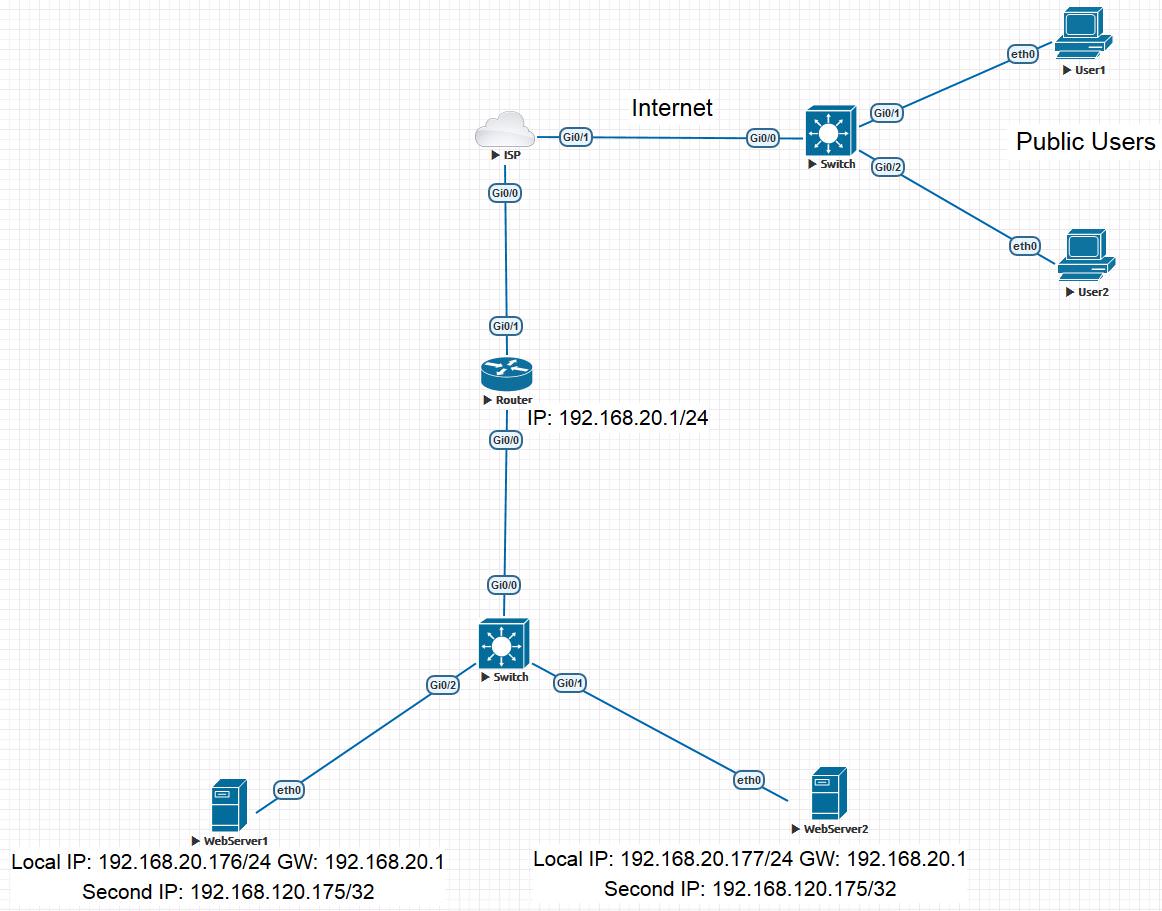

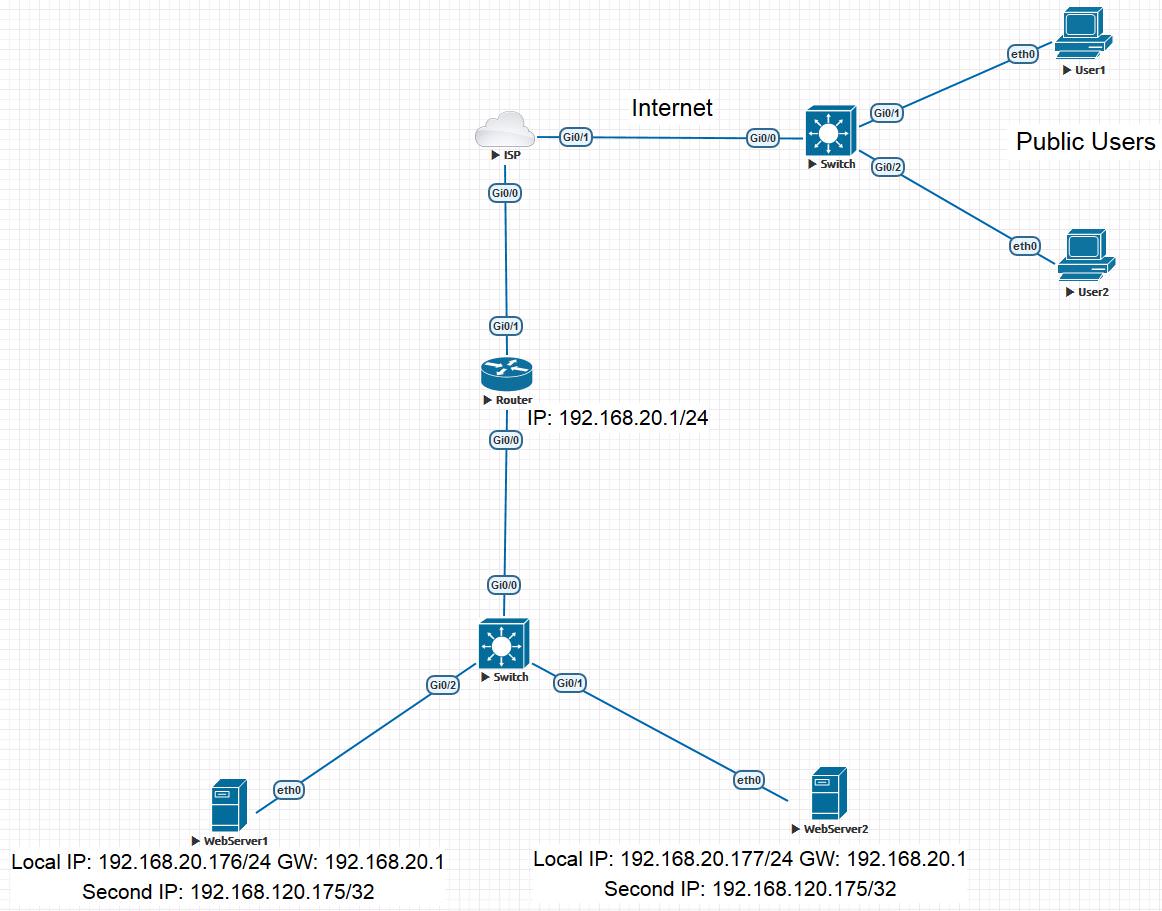

The task was to build a fault-tolerant setup for two web servers and, if possible, to distribute load between them, since at times a single database could not cope with all the requests. Buying dedicated equipment was not an option, so I came up with the following scheme. The idea may not be original, but I did not find anything like it on the Internet.

Our topology is:

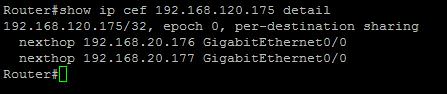

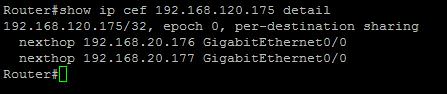

A Cisco router connects the web servers to the Internet. The two web servers run CentOS 7 with nginx; their IP addresses are 192.168.20.176/24 and 192.168.20.177/24, respectively. For the scheme to work, both web servers must be given the same secondary IP address. It can be any private IP address that is not used elsewhere in your network. I chose 192.168.120.175 and configured it as a secondary address on eth0, the main interface of each web server. On CentOS, this is done by creating the file ifcfg-eth0:0 in the /etc/sysconfig/network-scripts/ directory. File contents:

    TYPE="Ethernet"
    DEVICE=eth0:0
    BOOTPROTO="static"
    IPADDR=192.168.120.175
    NETMASK=255.255.255.255
    ONBOOT="yes"

Note that the mask 255.255.255.255 is used. This avoids IP conflicts, because the web server will not use this address to originate traffic. In effect, the address behaves like a loopback interface on each web server.

After that, the router can balance the load using static routing. On Cisco routers this is done with IP CEF (Cisco Express Forwarding). Other vendors may differ in the details.

On Cisco, load sharing can work in two modes:

- Per-destination (the default). This is the option we need. All packets of one flow are sent to the same one of the two servers. A hash is computed over the source and destination IP addresses, and depending on its value either the first route (server) or the second is selected. Later we will change this behavior slightly.

- Per-packet. This option does not suit us, because balancing is done packet by packet: roughly speaking, the first packet takes the first route and the second packet takes the second, so packets of a single TCP connection would end up on different servers.
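As a rough sketch of where this mode sits in the configuration, per-destination sharing can be stated explicitly as shown here. This assumes that GigabitEthernet0/0 is the router interface facing the web servers. Since per-destination is normally the default, the fragment is an illustration rather than a required step:

```
! Assumption: GigabitEthernet0/0 faces the web servers
! CEF switching is usually enabled by default; shown here for completeness
ip cef
!
interface GigabitEthernet0/0
 ip load-sharing per-destination
```

Replacing `per-destination` with `per-packet` on the same interface would select the second mode, which is not what this scheme needs.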

We add two routes with the following commands:

    ip route 192.168.120.175 255.255.255.255 GigabitEthernet0/0 192.168.20.176
    ip route 192.168.120.175 255.255.255.255 GigabitEthernet0/0 192.168.20.177

Both routes are then installed in the routing table, and traffic is distributed across them. It is also worth checking that the balancing method suits the traffic: the source IP address varies, but the destination IP always stays the same. Given the NAT, this can make the distribution uneven. To improve it, you can include the source port in the hash, since it differs from one client session to the next. To do this, use the following command:

    ip cef load-sharing algorithm include-ports source

You also need to configure static NAT to redirect web requests to 192.168.120.175:

    ip nat inside source static tcp 192.168.120.175 80 interface GigabitEthernet0/1 80

What do we get? Requests from Internet users arrive at our router, which distributes them between the servers per flow, based on the TCP source port. Each new session from a client may land on a different server.
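To check that the router really uses both paths, a few standard show commands can help. The sketch below is only an example: the client address 203.0.113.10 and source port 50000 are placeholder values, and the port keywords of `show ip cef exact-route` may not be available on every IOS release:

```
! Both next hops should be listed for the /32 prefix
show ip route 192.168.120.175
! The same prefix as seen by CEF
show ip cef 192.168.120.175 detail
! Which next hop CEF selects for one particular flow (placeholder client and port)
show ip cef exact-route 203.0.113.10 src-port 50000 192.168.120.175 dest-port 80
! Translations created by the static NAT rule
show ip nat translations
```

Repeating the exact-route command with different source ports should show the selected next hop changing between 192.168.20.176 and 192.168.20.177.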

What happens if one of the servers fails? The route leading to that server has to be removed from the routing table. For this to happen when only the server goes down, rather than the router's own interface, you can use IP SLA. Monitor the status of the servers by pinging them every 10 seconds:

    ip sla 10
     icmp-echo 192.168.20.176
     frequency 10
    ip sla schedule 10 life forever start-time now
    ip sla 20
     icmp-echo 192.168.20.177
     frequency 10
    ip sla schedule 20 life forever start-time now

Next, bind each operation to a tracking object and attach the objects to the corresponding routes. The track keyword on a static route refers to a tracking object, not directly to an IP SLA operation:

    track 10 ip sla 10 reachability
    track 20 ip sla 20 reachability
    ip route 192.168.120.175 255.255.255.255 GigabitEthernet0/0 192.168.20.176 track 10
    ip route 192.168.120.175 255.255.255.255 GigabitEthernet0/0 192.168.20.177 track 20

IP SLA on Cisco routers can also monitor via HTTP GET requests, which detects a failed web server not only when the host is off the network but also when the web service itself is down.
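A minimal sketch of such an HTTP check for the first server might look like the fragment below. The operation and track number 11, the URL path `/`, and the 60-second frequency are assumptions made for illustration. Which HTTP results count as a failure depends on the IOS release, so verify this on your own equipment:

```
! Hypothetical numbering: operation 11 and track 11 for the first server
ip sla 11
 http get http://192.168.20.176/
 frequency 60
ip sla schedule 11 life forever start-time now
!
track 11 ip sla 11 reachability
```

The static route to 192.168.20.176 would then reference `track 11` instead of the ICMP-based object, and the second server would get an analogous operation of its own.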

Thus, this scheme requires neither additional equipment nor any extra software on the web servers. All you need is a router capable of balancing traffic.