HP Dynamic VPN Technology. Part 1

Introduction

Greetings to all readers of our blog.

This series of articles is devoted to our wonderful solution called HP Dynamic VPN (HP DVPN).

In this first article of the series, I will explain what HP DVPN is and describe its features and typical application scenarios. The following articles will focus on the practical implementation of DVPN capabilities and on configuring the equipment itself.

What is DVPN?

In short, DVPN is an architecture that allows multiple branches or regional offices (spokes) to dynamically create secure IPsec VPN tunnels over any IP transport to a central office or data center (hub).

Who can benefit from this, and how?

Suppose an organization has many branches or remote offices scattered across a city or even across the whole country (a bank or a retail chain, for example; there are many such organizations). The task is to interconnect all these branches over a single transport network and to connect them to the central office or data center that hosts the enterprise's main IT resources.

The task may seem trivial, but there are some nuances:

- Some branches may have only one way to connect to the outside world: an Internet channel from an ISP (simply because there are no alternatives, or the alternatives are not economically justified). In this case, renting VPN services from telecom operators to connect all branches to a single WAN “cloud” is not an option.

- Data transmitted over the transport network must be protected. Sending confidential information over the Internet unprotected is not safe at all, and not everyone is willing to fully trust the telecom operator in the rented-VPN option.

- How long will it take to deploy such a network? Days? Weeks? And what if there are thousands of branches? Does the equipment at each branch have to be configured? Do existing devices on the network have to be reconfigured when a new branch is added? What if some branches need to exchange data directly, bypassing the center?

Because of these nuances and pitfalls, the obvious solutions, such as the already mentioned option of renting a dedicated VPN network from a telecom operator or connecting branches directly to the center over the Internet, do not suit us.

What are acceptable options for solving this problem? The first thing that comes to mind is to use secure IPsec VPN tunnels between the center and the branches over the Internet in a star topology: each branch builds an IPsec tunnel to the center, and the center acts as the hub for the VPN tunnels coming from the branches. In this case, traffic between branches also passes through the center.

This solution has been used by many organizations for years and is supported on equipment from many vendors (since IPsec is a universally recognized standard). For our task, however, it has some very significant drawbacks:

- Because a separate IPsec tunnel has to be configured in the center for every new branch, the solution scales poorly, and new nodes take longer to join the transport network. How long does it take to configure a new IPsec tunnel in the center and then mirror the same tunnel settings at the branch? And if we make a mistake in the settings, where do we look for the problem, and how long will it take?

- If branches must be able to communicate directly, bypassing the center (to reduce latency and optimize the load on communication channels), then for N nodes in the transport network we will have to configure N * (N - 1) / 2 tunnels (the so-called “Full Mesh” topology). Imagine the amount of work for a network with even a few dozen nodes.

- And what if some of the nodes (for example, those in regional offices) later need to move to more reliable transport and connect to each other over dedicated channels from a telecom operator with guaranteed quality of service, while the remaining nodes still connect via the Internet? Will the configurations on these nodes have to be completely redone?
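To put the scaling problem in numbers, the short sketch below compares how many statically configured IPsec tunnels a star needs (one per branch, with the center counted among the N nodes) against the N * (N - 1) / 2 tunnels of a full mesh. The function names are illustrative only.

```python
def star_tunnels(n_nodes: int) -> int:
    """Hub-and-spoke: each of the n_nodes - 1 branches has one tunnel to the center."""
    return max(n_nodes - 1, 0)


def full_mesh_tunnels(n_nodes: int) -> int:
    """Full mesh: one tunnel for every pair of nodes, N * (N - 1) / 2."""
    return n_nodes * (n_nodes - 1) // 2


for n in (10, 50, 100, 1000):
    print(f"{n:>5} nodes: star {star_tunnels(n):>5}, full mesh {full_mesh_tunnels(n):>7}")
```

With 50 nodes, a star needs 49 tunnels while a full mesh already needs 1,225, and each of those tunnels has to be configured on both of its ends.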

What does the DVPN architecture offer?

To address these problems and shortcomings, we developed the HP Dynamic VPN (HP DVPN) architecture, which lets you connect up to 3,000 nodes in a single secure transport network (a DVPN domain).

The main features of this architecture:

- DVPN dynamically establishes IPsec VPN tunnels between the nodes of a DVPN domain over any IP transport (Internet or WAN).

- To connect a new node to the network, you can take the configuration of an already connected node, change only the branch-specific IP addresses of its network interfaces, and upload it to the new device. The IPsec VPN tunnel to the center comes up automatically, and the new branch is connected to the center.

- DVPN is optimized for star (Hub-and-Spoke) topologies. Exactly our case.

- In addition, DVPN can be configured to work in Full Mesh mode. In this mode, IPsec VPN tunnels are created dynamically and directly between branches as soon as they start exchanging data.

- DVPN uses the standard IPsec protocol to create tunnels, with all its advantages (an open standard, many encryption and authentication options, dynamic rekeying, etc.).

- The configuration on the central site (Hub) is updated dynamically when a new Spoke node is added to the network. At the same time, the other Spoke nodes automatically learn about the new node and can exchange data with it.

- No manual changes to the configuration of the equipment in the center or at other branches are required.

- In summary, DVPN provides an automated, secure transport architecture that acts as an overlay on top of an existing IP network, including the Internet.
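The on-demand behaviour of Full Mesh mode can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not HP's implementation: the class `SpokeSketch`, its `next_hop_for` method, and the `resolver` dictionary (a stand-in for queries to the VAM server) are all hypothetical. Traffic goes via the Hub until the first packet for a known peer arrives; at that point the spoke records a direct tunnel to the peer's public address.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SpokeSketch:
    """Toy model of a Spoke that builds spoke-to-spoke tunnels on demand (hypothetical)."""

    private_ip: str             # this spoke's overlay address
    hub_private_ip: str         # overlay address of the Hub
    resolver: dict[str, str]    # stand-in for VAM server queries: private IP -> public IP
    full_mesh: bool = True      # False = pure Hub-and-Spoke behaviour
    tunnels: dict[str, str] = field(default_factory=dict)  # direct peers: private -> public

    def next_hop_for(self, dst_private_ip: str) -> str:
        """Return the overlay peer that traffic for dst_private_ip is tunneled to."""
        if not self.full_mesh or dst_private_ip == self.hub_private_ip:
            return self.hub_private_ip
        if dst_private_ip not in self.tunnels:
            public_ip = self.resolver.get(dst_private_ip)
            if public_ip is None:
                # Peer unknown to the resolver: keep sending via the Hub.
                return self.hub_private_ip
            # First packet to this peer: record a direct tunnel to its public address.
            self.tunnels[dst_private_ip] = public_ip
        return dst_private_ip
```

For example, `SpokeSketch("10.0.0.2", "10.0.0.1", {"10.0.0.3": "198.51.100.3"}).next_hop_for("10.0.0.3")` returns `"10.0.0.3"` and adds a direct tunnel entry, whereas the same call with `full_mesh=False` returns the Hub's address. The addresses are documentation examples.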

DVPN Architecture Components

DVPN consists of five main components:

- VAM server (up to 2 per domain for fault tolerance)

- VAM stands for VPN Address Management.

- It is installed in the center, registers VAM clients, and collects address information from all nodes of the DVPN domain.

- It maintains the mapping between each node's public IP address (in the underlying transport network) and its private IP address (in the overlay network), and supplies this information to nodes on request. In effect, it is an address resolution server that works much like DNS.

- It can run on the same device as the Hub.

- VAM client

- It registers its public IP address, private IP address, and VAM node identifier on the VAM server.

- It resolves the addresses of other nodes by querying the VAM server.

- Hub and Spoke devices are VAM clients.

- Hub (up to 2 per domain for fault tolerance)

- A router located in the center that terminates the IPsec VPN tunnels from Spokes.

- Allows Spoke connections to be balanced between two Hubs in Active/Active mode.

- Spoke

- A router installed in a branch that creates an IPsec tunnel to the Hub and tunnels traffic towards the center.

- In Full Mesh mode, it can also dynamically create IPsec tunnels with other Spokes.

- Authentication Server (RADIUS / TACACS)

- Provides centralized authentication of VAM clients on the VAM server.

- Optional: the VAM server can also authenticate VAM clients locally.
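The VAM server's role as an address resolution service can be modelled as a small registry. This is a minimal sketch assuming local authentication with per-node passwords. `VamRegistrySketch`, `register`, and `resolve` are hypothetical names, not HP's API or the real VAM protocol, and the plain password check stands in for the local or RADIUS/TACACS authentication described above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Registration:
    node_id: str     # VAM node identifier
    private_ip: str  # address in the overlay network
    public_ip: str   # address in the underlying transport (e.g. the Internet)


class VamRegistrySketch:
    """Toy model of a VAM-server-like registry (hypothetical, not HP's API)."""

    def __init__(self, local_users: dict[str, str]) -> None:
        self._local_users = local_users  # node_id -> password for local authentication
        self._by_private_ip: dict[str, Registration] = {}

    def register(self, node_id: str, password: str, private_ip: str, public_ip: str) -> bool:
        """Authenticate the client, then store (or overwrite) its address mapping."""
        if self._local_users.get(node_id) != password:
            return False  # a centralized RADIUS/TACACS check could replace this lookup
        self._by_private_ip[private_ip] = Registration(node_id, private_ip, public_ip)
        return True

    def resolve(self, private_ip: str) -> str | None:
        """Answer a client query: which public address belongs to this overlay address?"""
        reg = self._by_private_ip.get(private_ip)
        return reg.public_ip if reg is not None else None
```

In this sketch, a re-registration simply overwrites the old entry, which is one simple way to handle a branch whose ISP-assigned address changes. A real deployment would also need registration timeouts and secure credential storage.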

What does it look like?

Below are simplified diagrams of typical DVPN networks in the Hub-and-Spoke and Full Mesh topologies, respectively.

The Hub-and-Spoke topology is characterized by the following features:

- DVPN is an Overlay Non-Broadcast Multiple-Access (NBMA) infrastructure that runs on top of an existing IP transport network and addresses DVPN nodes by private IP addresses within the same Overlay network segment.

- Spoke nodes do not communicate with each other directly; all traffic between them passes through the central Hub node.

- DVPN is optimized for Hub-and-Spoke transport topologies, because by default it builds an overlay network with the same topology.

- In a Hub-and-Spoke topology, one DVPN domain can contain up to 3,000 Spoke nodes per Hub, with dynamic BGP routing between nodes.

The Full Mesh topology has its own characteristics:

- Like the Hub-and-Spoke topology, Full Mesh DVPN is an NBMA overlay infrastructure that works on top of any IP transport.

- In the Full Mesh or Partial Mesh topology (where only some of the nodes have direct connections to each other), traffic can be transmitted directly over dynamically created IPsec tunnels between Spoke nodes, offloading the channels of the central Hub node.

- The Full Mesh topology scales less well than the Hub-and-Spoke topology. The exact limits depend on many factors, including the performance of the DVPN routers at the nodes.

HP hardware with DVPN support

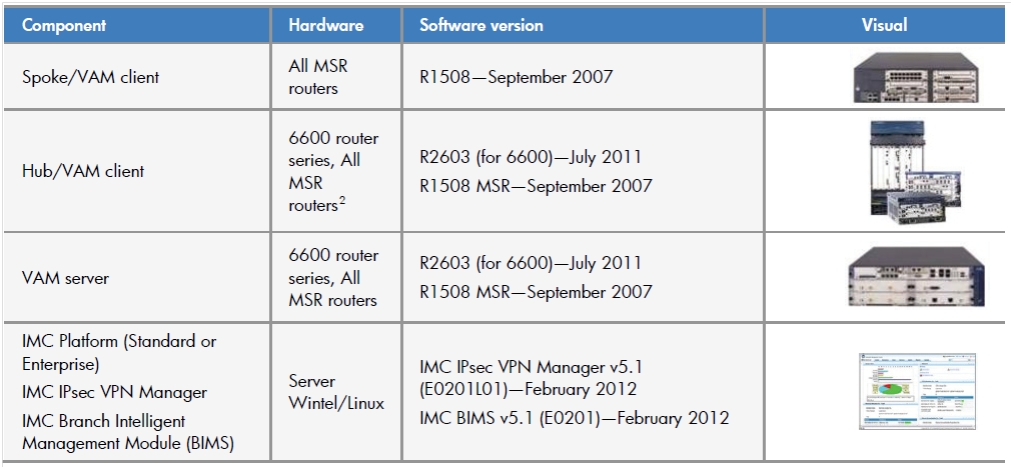

The table lists the equipment that supports DVPN, with recommendations on which component of the DVPN architecture each model is best suited for.

The table shows that DVPN is supported on almost all HP router series (HP MSR and HP 6600), with the exception of the HP 8800 series.

More information on the HP DVPN-enabled product portfolio is available here.

DVPN and dynamic routing

Without dynamic routing between nodes, all the simplicity of configuration and scaling that the DVPN architecture offers would come to naught. With a large number of nodes, static routing is clearly impractical, so DVPN supports the following dynamic routing protocols:

- BGP for large-scale networks (more than 50-100 nodes, up to a maximum of 3,000 per domain)

- OSPF in NBMA mode

- OSPF in broadcast mode for the Full Mesh topology

DVPN Scaling and Resiliency

As already mentioned, DVPN scales up to 3,000 Spoke nodes per domain. If necessary, the number of domains can be increased to 10 (on an HP 6600 router acting as the Hub), which allows networks of up to 30,000 nodes!
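The capacity arithmetic behind these figures is simple. The sketch below uses only the two limits quoted in this article (3,000 Spokes per domain, 10 domains on an HP 6600 Hub) to compute how many domains a given number of branches requires. The helper names are hypothetical, and the calculation ignores other factors such as Hub redundancy and router performance.

```python
SPOKES_PER_DOMAIN = 3000     # per-domain Spoke limit quoted in this article
MAX_DOMAINS_ON_HP6600 = 10   # domain limit quoted for an HP 6600 acting as Hub


def domains_needed(spokes: int, per_domain: int = SPOKES_PER_DOMAIN) -> int:
    """Minimum number of DVPN domains for a given Spoke count (ceiling division)."""
    if spokes < 0:
        raise ValueError("spokes must be non-negative")
    return -(-spokes // per_domain)


def fits_on_one_hp6600_hub(spokes: int) -> bool:
    """True if the Spokes fit within the quoted domain limit of a single HP 6600 Hub."""
    return domains_needed(spokes) <= MAX_DOMAINS_ON_HP6600


print(SPOKES_PER_DOMAIN * MAX_DOMAINS_ON_HP6600)  # 30000
```

For instance, 7,500 branches need `domains_needed(7500) == 3` domains, while 30,001 branches exceed the 10-domain limit.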

To ensure fault tolerance, DVPN networks duplicate the devices acting as the VAM server, the Hub router, and the authentication server (if one is used).

In this case, the VAM client on each Spoke node registers with both VAM servers, and two independent DVPN domains are created on the Spoke router. Within them, IPsec VPN tunnels are built simultaneously to the primary and the backup Hub routers.

With this approach, dynamic routing protocols can balance traffic between the IPsec VPN tunnels of both DVPN domains (primary and backup).
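One common way routers spread traffic over several equal-cost paths is per-flow hashing (ECMP). The sketch below uses that technique to illustrate balancing and failover between the two DVPN domains. It is an assumption for illustration only and does not describe how HP routers make the choice. The tunnel names `dvpn-primary` and `dvpn-backup` and the function `pick_tunnel` are hypothetical.

```python
from __future__ import annotations

import zlib


def pick_tunnel(flow: tuple[str, str, int, int, str], tunnels_up: dict[str, bool]) -> str | None:
    """Choose a tunnel for a flow (src IP, dst IP, src port, dst port, protocol).

    Flows are spread over every tunnel that is currently up; if one DVPN domain
    fails, all flows move to the remaining tunnel.
    """
    candidates = sorted(name for name, up in tunnels_up.items() if up)
    if not candidates:
        return None  # both domains are down: no path to the center
    key = "|".join(str(part) for part in flow).encode()
    # A stable hash keeps all packets of one flow on the same tunnel.
    return candidates[zlib.crc32(key) % len(candidates)]
```

Hashing per flow rather than per packet avoids reordering packets within a single TCP session, while different flows still use both tunnels when both domains are healthy.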

Below is a more detailed diagram of building a DVPN network using redundancy.

Management mechanisms

The HP Intelligent Management Center (IMC) management system makes networks built on the DVPN architecture even easier to manage and monitor.

For example, its Branch Intelligent Management System (BIMS) module makes it possible to eliminate manual configuration of Spoke routers in branches entirely by provisioning them automatically over the TR-069 protocol (CPE WAN Management Protocol).

You can read more about IMC and the BIMS module in upcoming articles of our HP Networking series on the company blog.

Conclusion

The DVPN architecture allows you to quickly deploy highly scalable and secure corporate data networks of virtually any size and topology for enterprises with geographically distributed units (branches), over any existing L3 transport (including the Internet, dedicated WAN channels, etc.).

What's next?

In the next article of the DVPN series, we will walk through the configuration of a typical DVPN network in detail.

Stay tuned for updates to our blog!