QSAN storage as a competitor to Tier 1 brands

Modern IT infrastructure is hard to imagine without virtualization, and virtualization shows its full potential when paired with a centralized storage system. Storage systems are also needed for other tasks: large-scale video surveillance projects, storing large volumes of data, media workflows and so on.

Among the storage systems currently on the market, we want to draw your attention to the XCubeSAN series from the Taiwanese manufacturer Qsan Technology.

QSAN appeared as an independent company in 2008. Initially, the team developed and manufactured RAID controllers on an OEM basis for various storage vendors. A little later, with backing from ODM giants such as Compal and Gigabyte (yes, Gigabyte makes not only the motherboards and video cards everyone knows, but also a lot of Enterprise-sector hardware), it switched to producing its own storage systems. The manufacturer has been present in Russia almost since its founding (that is, for almost 9 years), and in that time it has come a long way from an unknown vendor to a supplier of solutions that can successfully compete with the so-called Tier 1 brands.

QSAN XCubeSAN is the latest generation of the company's storage systems, packing in as many technologies as possible. The manufacturer's main motto is to make Enterprise functionality available to small and medium-sized companies. The first thing to note is that the vendor fully and officially supports third-party disks. So no one will tie your hands (or empty your pockets) when you choose a disk subsystem. We should add right away, though, that in the Enterprise segment sticking to the compatibility list is a must; otherwise you may run into very unpleasant problems in operation.

Third-party drive support matters even more given the growing popularity of SSD-based (and even All Flash) storage. For some types of branded hard drives, prices close to retail are still achievable, but for SSDs even large project discounts will not bring a branded drive close to the cost of, say, an HGST or Intel drive. This means that an All Flash configuration based on QSAN will come out considerably cheaper than one from a Tier 1 brand.

Storage Hardware Components

The QSAN XCubeSAN line consists of three series: XS1200, XS3200 and XS5200. They differ in processor type (Pentium or Xeon, with 2, 4 or 8 cores), which in turn affects peak performance. The rest of the hardware and the software are identical. Therefore, this review does not focus on a particular model, since the information applies to essentially all of them (with some caveats, of course).

4 types of enclosures are available:

- 2U 12 bay LFF

- 3U 16 bay LFF

- 4U 24 bay LFF

- 2U 26 bay SFF

All LFF (3.5") models also accept SFF (2.5") disks and SSDs without any additional options.

26 drives in a 2U enclosure

The first three enclosure types will hardly surprise anyone, but the 2U26 chassis is a very interesting form factor. Such high drive density is achieved with thinner drive trays and a special arrangement of the chassis stiffening ribs. At the moment no other manufacturer offers such a solution: the typical number of bays in 2U is 24-25. The two extra bays are far from superfluous, since they allow a more flexible approach to building RAID groups without skimping on hot spares.

Universal drive tray

Next come the internal components: power supplies, cooling modules and controllers. All of them are duplicated for fault tolerance and, of course, support hot swapping. You can order a storage system with a single controller, but the industry concluded long ago that what you pay extra for a second controller buys the customer peace of mind and continuous access to the services hosted on the storage system.

Rear panel

The controller is based on an Intel Xeon or Pentium D-1500 series processor, which is designed specifically for embedded solutions. RAM is DDR4 with mandatory ECC support. The board has 4 memory slots (2 on the entry-level model) and supports dual-channel mode with a maximum capacity of 128GB (32GB on the entry-level model). The operating system is installed on a SATA DOM module.

Controller top view

For communication with the outside world, there are two 10GbE iSCSI RJ-45 ports (backward compatible with 1GbE), a dedicated management port, and two miniSAS HD ports for connecting expansion shelves over 12G SAS. There are also connectors for a console and for a UPS via COM or USB ports.

Controller, rear view

In addition to the integrated interfaces, there are two slots for expansion cards: PCI-E x8 Gen3 and PCI-E x4 Gen2. A variety of host interface cards are supported:

- 4x 16Gb FC (SFP +)

- 2x 16Gb FC (SFP +)

- 2x 10Gb iSCSI (10GBASE-T)

- 4x 10Gb iSCSI (SFP +)

- 4x 1Gb iSCSI (1000BASE-T)

Expansion Cards

You can combine interfaces freely, including mixing Fibre Channel and iSCSI in the same system. The only limitation is that the port configuration must be the same in both controllers. In the maximum configuration, a dual-controller storage system can have up to 16 16G FC ports or up to 20 10G iSCSI ports. Chasing maximum figures is a thankless task, of course, but in practice a large number of interfaces not only improves performance in a number of scenarios, it also lets you do without expensive Fibre Channel or 10Gb Ethernet switches when connecting up to 6-10 servers.
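The maximum port counts quoted above can be reproduced with simple arithmetic. The sketch below assumes that both expansion slots of each controller take a 4-port card and that the two onboard 10GbE ports count toward the iSCSI total; the function and constant names are illustrative only.

```python
# Assumptions (not stated explicitly in the article): both slots of each
# controller hold a 4-port host card, and onboard 10GbE ports are counted.
ONBOARD_ISCSI_10G = 2      # built-in 10GbE iSCSI ports per controller
SLOTS_PER_CONTROLLER = 2   # host card slots per controller
CONTROLLERS = 2            # dual-controller system


def max_ports(ports_per_card, onboard=0):
    """Total host ports across both controllers (identical configuration)."""
    return CONTROLLERS * (onboard + SLOTS_PER_CONTROLLER * ports_per_card)


max_fc_16g = max_ports(4)                                 # 4x 16Gb FC cards only
max_iscsi_10g = max_ports(4, onboard=ONBOARD_ISCSI_10G)   # 4x 10Gb cards + onboard
print(max_fc_16g, max_iscsi_10g)
```

Under these assumptions the totals match the 16 FC and 20 iSCSI ports given in the text.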

To protect the controller cache from a sudden power outage, the Cache-to-Flash module is used. It consists of a battery or a capacitor and an SSD with a PCI-E M.2 interface. Such a fast drive is needed to copy the contents of the cache while the battery keeps the controller powered. The entire operation takes no more than 2 minutes, even with the maximum cache size of 128GB. The battery capacity is enough for 3-4 such cycles, so you can rest easy even with repeated power cuts. Note also that servicing the Cache-to-Flash module does not require removing a power supply unit, let alone a controller: the module is hot-swappable and accessible from the rear panel.
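A quick back-of-the-envelope estimate shows why a PCI-E drive is used here. The sketch assumes decimal gigabytes and that the whole 128GB cache is flushed within the full 2-minute window; the helper name is made up for illustration.

```python
def min_flush_rate_gb_s(cache_gb, window_s):
    """Minimum sustained write rate needed to dump the cache in time."""
    return cache_gb / window_s


# 128GB of cache, 2 minutes (120 s) of battery-backed time per flush.
rate = min_flush_rate_gb_s(128, 120)
print(f"{rate:.2f} GB/s")
```

The result is a little over 1 GB/s of sustained writes, which is more than a SATA 6Gb/s interface can carry, so a PCI-E attached SSD is the natural choice.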

To expand disk capacity, you can connect XCubeDAS shelves, which come in the same enclosures as the storage systems themselves: 2U12, 3U16, 4U24 and 2U26. There are no restrictions on how the “head” and the shelves are matched; any combination is allowed. The maximum number of shelves is 10, which in most cases is more than enough, since a single system can then hold up to 286 disks.
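The 286-disk figure follows directly from the enclosure sizes: a 26-bay head plus ten 26-bay shelves. A minimal sketch (the function name is illustrative):

```python
MAX_SHELVES = 10  # limit stated in the article


def max_drives(head_bays, shelf_bays, shelves=MAX_SHELVES):
    """Total drive bays for a head unit plus identical expansion shelves."""
    if not 0 <= shelves <= MAX_SHELVES:
        raise ValueError("between 0 and 10 shelves are supported")
    return head_bays + shelves * shelf_bays


print(max_drives(26, 26))   # 2U26 head with ten 2U26 shelves
print(max_drives(24, 24))   # 4U24 head with ten 4U24 shelves
```

Since heads and shelves can be mixed, the same function can be called per shelf type and the results summed for a mixed configuration.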

QSAN also has its own know-how in the way expansion shelves are connected, aimed at fault tolerance. Physically, a shelf is connected to the “head” with two SAS cables, as with all other vendors. Logically, however, each storage controller sees both shelf controllers, including via its neighbor over an internal bus. As a result, in the event of a cross-failure, where a storage controller and the JBOD controller on the other path both fail, the system keeps working (provided, of course, that no one has pulled the cables between the components).

Storage connectivity and expansion shelves

Admittedly, such an incident is unlikely, but with additional protection in place (at no extra cost), working with the solution is that much more reassuring.

Since we have touched on know-how, it is worth mentioning support for Wake-on-SAS technology, which controls the power of the expansion shelves over the SAS cables. This makes it possible to power the expansion shelves on and off together with the “head” automatically and in the correct order. Storage systems are not shut down often, of course, but when the moment comes, having automation guard the administrator's actions is far from superfluous. For example, switching off a shelf before the “head” can destroy a RAID group if that group is spread across several enclosures.

In summary, the QSAN XCubeSAN hardware can be used to build a wide range of solutions (from simple, budget configurations to very advanced ones), integrates with any SAN (including heterogeneous ones), and lets you use third-party hard drives and SSDs.

Storage Software Features

The system runs SANOS, a Linux-like operating system of QSAN's own design, now in its fourth version. It is managed through a browser. The interface is available in several languages, including Russian. Standard HTTP and HTTPS are supported, with the option to change port numbers for greater security. The interface does not require Java, Flash or other third-party tools. Management over SSH is also possible (with slightly reduced functionality).

The interface consists of a vertical menu of the main functions and a viewing area that takes up most of the screen. Getting used to navigation and controls takes a few minutes, since everything is quite intuitive; if you have ever worked with any vendor's storage system, you will easily figure it out. Where needed, the interface offers explanatory hints about parameter values. Documentation exists, of course, and should be read before use.

Management interface

An important feature of the QSAN XCubeSAN management concept is that it places minimal restrictions on configurations and offers the widest possible range of settings. No one will force predefined disk configurations on you, for example. Most key functions offer ample room for customization rather than a simple on/off switch. The limit on what you can configure is therefore your own common sense, not the software.

During operation, the system notifies the administrator of any problems. QSAN XCubeSAN can send this information by e-mail, forward messages to a syslog server and issue SNMP traps. The current status is also available in the WebGUI: detailed monitoring of hardware sensors and the current performance of the whole system, individual disks, volumes and I/O ports.

Monitoring

We also want to single out integration with uninterruptible power supplies. Communication with the UPS is supported via COM and USB ports as well as over Ethernet. This connection lets the UPS command the storage system to shut down. Most often, in a sudden power outage it is enough to shut down the servers correctly, after which the storage system can simply lose power. But if you organize emergency shutdown by the book, this integration is very useful, since it lets you shut down the entire infrastructure properly, storage included.

Speaking of possible equipment incidents, we cannot ignore the current trend among most vendors to have equipment automatically send status information to cloud services, where it is analyzed with Big Data techniques to predict possible failures. The service is certainly useful, but in our country it meets fierce resistance from users who do not want to share such data with outsiders. The reasons vary: security policies at the site, fear that confidential information will be sent, or the fact that the service is paid. Partly for this reason, QSAN does not offer automatic collection of status data from its storage systems. Instead, for effective diagnostics the system has an extended logging mode covering all internal processes, so the administrator only needs to send a debug info file to technical support for engineers to pinpoint the source of the problem as accurately as possible.

Firmware is updated on the fly without stopping the system. For modern storage this is already a de facto standard, but it still deserves a mention.

Storage space in QSAN XCubeSAN is organized around the now-standard pool concept. Physical disks are combined into RAID groups, which in turn form pools. Pools are popular because, unlike classic RAID groups, they add a layer of disk space virtualization that makes a number of operations far less painful for the data. First among these is a routine task such as expanding storage space. Adding new disks to a RAID group has always been a very risky operation, since no RAID algorithm protects the data while the group is being restructured. In addition, restructuring is very slow (depending on the size and type of disks, it can take several days or even weeks), and the disks are under increased load during that time, which can only hasten the failure of one of them. If a disk does fail, all data in the group is lost. Restoring from a backup and then somehow re-entering the data changed since that backup was made does nothing to make administrators keen on array expansion.

With pools, by contrast, everything is quite simple. Expanding a pool means creating one or more additional RAID groups and joining them to the existing ones. The administrator then has access to the combined space as a single whole, even though it consists of several pieces. For maximum performance, it is recommended to expand a pool with groups of the same RAID level as the original. If necessary, though, you can “glue” RAID5 and RAID6 groups, for example, into one pool. Just remember that performance will then be limited by the slowest link.

Within a single pool you can combine not only groups with different RAID levels but also different types of disks. Moreover, the storage system can automatically move data between disks according to how much that data is in demand. This functionality is called tiering. A tiering pool can have up to three levels:

- SSD drives - the highest, fastest level, the most frequently used (“hot”) data

- Fast drives SAS 10K and 15K - medium level

- Slow and capacious disks 7.2K - the lower level, the least used (“cold”) data

For a tiering pool, you can flexibly schedule when and with what priority data is migrated (as often as every hour). A reasonable setting is 1-2 migrations a day, so that they do not strongly affect current tasks yet still pay off in final performance. Importantly, all these settings can be changed on the fly.

For volumes created in a tiering pool, you can specify the initial placement and the direction in which data may move. Detailed statistics are available for every volume: what was moved and where.
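The general idea of tiering can be illustrated with a toy relocation planner. This is only a sketch of the concept, not QSAN's algorithm: the function name, the per-block hit counters and the capacity units are all assumptions made for the example. The hottest blocks fill the fastest tier first, and whatever is left spills down to slower tiers.

```python
def plan_tiers(access_counts, tier_capacity):
    """Assign blocks to tiers, hottest blocks to the fastest tier first.

    access_counts: {block_id: number of accesses since the last migration}
    tier_capacity: list of (tier_name, capacity_in_blocks), fastest first
    Blocks beyond the total capacity are left unassigned.
    """
    # Sort by descending access count; ties broken by block id for determinism.
    ranked = sorted(access_counts, key=lambda b: (-access_counts[b], b))
    placement = {}
    start = 0
    for name, capacity in tier_capacity:
        for block in ranked[start:start + capacity]:
            placement[block] = name
        start += capacity
    return placement


counts = {"a": 90, "b": 5, "c": 40, "d": 1}
tiers = [("ssd", 1), ("sas", 2), ("nl-sas", 10)]
placement = plan_tiers(counts, tiers)
print(placement)
```

A scheduled migration, as described above, would amount to re-running such a plan at the chosen interval and moving only the blocks whose tier changed.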

In addition to tiering, SSD caching is another way to improve performance. In this case, frequently requested data is copied to dedicated SSDs. We want to stress right away that in QSAN storage systems the SSD cache works not only for reads but also for writes. The cache physically resides on dedicated SSDs that are not available for data storage. There can be several SSDs in the cache (including ones of different capacities), and they are all used together. If a write cache is used, the number of SSDs must be a multiple of two. This is needed for data protection (mirroring): if one of the SSDs fails, the cache contents not yet written to the disks must not be lost. A read-only cache needs no such protection, since it holds only a copy of data that is already on the disks.

Cache statistics

Unlike other vendors' products, where the SSD cache has only a single on/off setting, in QSAN XCubeSAN this functionality is not a “black box”. For every volume that needs caching, you select a “behavior profile” that determines how its data is cached. There are several predefined profiles (database, file server, web server), and you can create a new one for your own needs. For a custom profile, you specify which blocks to cache and set the number of read/write requests after which a block is copied to the cache. Thanks to this flexibility, the QSAN XCubeSAN storage system can deliver better performance in a number of user tasks than storage systems from other manufacturers.
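The threshold-based admission described above can be sketched as follows. This is a simplified model under assumed names (`ThresholdCache`, `read_threshold`, `write_threshold`), not the vendor's implementation: a block is copied to the cache once it has been read or written the configured number of times.

```python
from collections import Counter


class ThresholdCache:
    """Toy cache admission: populate a block after N reads or M writes."""

    def __init__(self, read_threshold, write_threshold):
        self.read_threshold = read_threshold
        self.write_threshold = write_threshold
        self.reads = Counter()
        self.writes = Counter()
        self.cached = set()

    def access(self, block, op):
        """Record one request; return True if the block is cached afterwards."""
        if op == "read":
            counter, threshold = self.reads, self.read_threshold
        elif op == "write":
            counter, threshold = self.writes, self.write_threshold
        else:
            raise ValueError("op must be 'read' or 'write'")
        counter[block] += 1
        if counter[block] >= threshold:
            self.cached.add(block)
        return block in self.cached


# A hypothetical profile: cache after 2 reads, or after the very first write.
cache = ThresholdCache(read_threshold=2, write_threshold=1)
first_read = cache.access(7, "read")
second_read = cache.access(7, "read")
first_write = cache.access(9, "write")
print(first_read, second_read, first_write)
```

Different profiles would then simply be different pairs of thresholds: a low read threshold suits read-heavy workloads, while a high one keeps one-off scans from evicting useful data.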

SSD cache settings

No modern storage system is complete without snapshots, an important data-protection feature, and QSAN is no exception. Snapshots use copy-on-write technology. They can be created manually or on a schedule, as often as every 15 minutes. Integration with Microsoft VSS allows application-consistent snapshots. Snapshots can also be mounted as volumes, including with write access.
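For readers unfamiliar with copy-on-write, here is a minimal in-memory sketch of the idea. The `Volume` class is purely illustrative and much simpler than any real storage implementation: taking a snapshot copies nothing, and the old contents of a block are preserved only at the moment that block is first overwritten.

```python
class Volume:
    """Toy block volume with copy-on-write snapshots."""

    def __init__(self, blocks):
        self.blocks = list(blocks)
        self.snapshots = []  # one dict per snapshot: block index -> old data

    def snapshot(self):
        """Create an (initially empty) snapshot and return its id."""
        self.snapshots.append({})
        return len(self.snapshots) - 1

    def write(self, index, data):
        # Before overwriting, save the old data for every snapshot that has
        # not yet preserved this block (copy on first write only).
        for snap in self.snapshots:
            snap.setdefault(index, self.blocks[index])
        self.blocks[index] = data

    def read_snapshot(self, snap_id, index):
        # Unchanged blocks are read straight from the live volume.
        return self.snapshots[snap_id].get(index, self.blocks[index])


vol = Volume(["A", "B", "C"])
s0 = vol.snapshot()
vol.write(0, "A2")
s1 = vol.snapshot()
vol.write(0, "A3")
print(vol.blocks, vol.read_snapshot(s0, 0), vol.read_snapshot(s1, 0))
```

This also shows why snapshots are cheap to create but add some overhead to the first write of each block afterwards.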

Functions built on snapshot technology have not been overlooked either: replication and cloning. They differ in that cloning creates a copy of a volume within a single system, while replication creates a copy on another physical storage system. Both operations are block-level and asynchronous. The shortest possible interval is 15 minutes. The replication target does not have to be exactly the same storage model; the main thing is that it is also a QSAN storage system. iSCSI serves as the transport. Replication works over low-speed links, including the Internet. Multipath I/O is supported for fault tolerance and for using the bandwidth of all available links. And so that replication does not interfere with the company's main services, you can enable traffic shaping in the settings. Various replication topologies are supported: one-way and two-way, one-to-many and many-to-one. This makes it possible to build disaster recovery solutions in different configurations.

It is worth noting that, of all the functionality listed, only two technologies are paid and licensed separately: tiering and SSD caching. The remaining functions are available out of the box without any restrictions. Even these two licenses are purchased once for the entire life cycle, regardless of data volume, the number of expansion shelves and so on. And do not forget that Qsan storage systems support third-party SSDs, so quite often it makes sense simply to place performance-critical data on solid-state drives and not bother with tiering at all.

XCubeSAN is QSAN's flagship platform, and it continues to evolve. In particular, features such as QoS, synchronous replication and integration with VMware SRM are under development. All of this will reach users in future firmware updates. Even with its current functionality, XCubeSAN holds up well against competitors, which allows it to serve as the core of the IT infrastructure of a company of any scale.

Testing in practice

After becoming familiar with the hardware and software features of the QSAN XCubeSAN storage system, the time has come to test this platform in action.

Today we can say with confidence that the performance of any hard-disk configuration on modern storage systems from different vendors is roughly the same and is determined by the performance of the disks themselves. There may be differences due to caching algorithms or the way RAID groups are implemented, but these differences hardly merit detailed study. In the Enterprise segment, the key characteristics that directly affect the choice of equipment are trouble-free operation as part of the overall hardware and software stack and qualified technical support. So no one is interested in hunting for performance differences between storage systems with a magnifying glass, as consumer hardware reviews do. Performance still matters, however, in some top-end configurations, where something other than the drives may become the bottleneck.

To saturate the storage controllers with requests and push the system to its limit during a test, a fairly large number of SSDs is required. Unfortunately, we do not have that many permanently on hand. Therefore, in this article we present the results of tests conducted by StorageReview for their reviews of the QSAN XCubeSAN storage systems. They used 24 Toshiba PX04SV SAS 3.0 SSDs. That number of SSDs is not enough to overload the storage controllers, but it is enough to show what the XCubeSAN series is capable of.

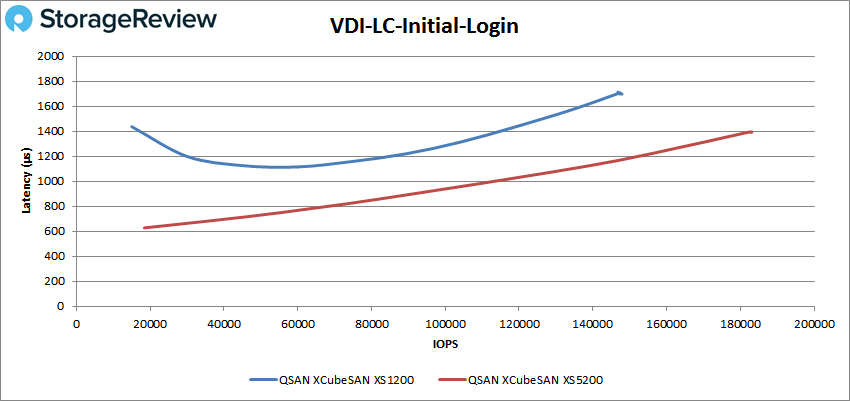

The tests used two 26-bay systems: the entry-level XS1200 series and the top-end XS5200 series. Besides answering questions about overall performance, this also shows what a more powerful processor in the storage system provides (the series also differ in the amount of pre-installed cache memory, but in these tests that figure was the same).

If you are not interested in test graphs, feel free to skip to the conclusions. For everyone else, here are the details.

Test bench:

4-node cluster based on Dell PowerEdge R740xd servers

- 8 Intel Xeon Gold 6130 CPUs for 269GHz in the cluster (two per node, 2.1GHz, 16 cores, 22MB cache)

- 1TB RAM (256GB per node, 16GB x 16 DDR4, 128GB per CPU)

- 4 x Emulex 16Gb dual-port FC HBA

- 4 x Mellanox ConnectX-4 rNDC 25GbE dual-port NIC
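The aggregate figures in the list above (269GHz and 1TB) can be checked with a few lines of arithmetic. The sketch simply multiplies out the per-node values given in the list; variable names are illustrative.

```python
NODES = 4
CPUS_PER_NODE = 2
CORES_PER_CPU = 16
BASE_GHZ = 2.1          # Xeon Gold 6130 base clock as listed
DIMMS_PER_NODE = 16
DIMM_GB = 16

total_cpus = NODES * CPUS_PER_NODE
total_ghz = total_cpus * CORES_PER_CPU * BASE_GHZ      # sum of core clocks
ram_per_node_gb = DIMMS_PER_NODE * DIMM_GB
total_ram_gb = NODES * ram_per_node_gb

print(total_cpus, round(total_ghz), ram_per_node_gb, total_ram_gb)
```

The "GHz in the cluster" number is therefore just the sum of base clocks over all 128 cores, rounded up from 268.8.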

Two RAID10 pools of 12 disks each were created on the storage system, one pool per storage controller. One volume of ~5TB was created on each pool.

The test software was Oracle's VDBench, which, in addition to random and sequential access tests, emulates SQL and Oracle database workloads as well as VDI environments.

Profiles used:

- 4K Random Read: 100% Read, 128 threads, 0-120% iorate

- 4K Random Write: 100% Write, 64 threads, 0-120% iorate

- 64K Sequential Read: 100% Read, 16 threads, 0-120% iorate

- 64K Sequential Write: 100% Write, 8 threads, 0-120% iorate

- Synthetic Database: SQL and Oracle

- VDI Full Clone and Linked Clone Traces

The test results show very respectable performance from the QSAN XCubeSAN storage system. In the most popular tests, it achieved more than 400K IOPS at 4K for reads and more than 270K IOPS at 4K for writes with latency not exceeding 2ms. At peak, disregarding latency, the figures reach 450K/300K IOPS for reads/writes. The result is, of course, not on the level of a full-fledged All Flash Array, but the cost of the solution is much lower.
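To put these IOPS figures into perspective, they can be converted to bandwidth and, using Little's law, to the number of requests in flight. The sketch assumes that "4K" means 4096-byte blocks and treats the quoted 2ms as an upper bound on latency, so the queue depth is an upper estimate.

```python
BLOCK_BYTES = 4 * 1024  # assumption: a 4K block is 4096 bytes


def throughput_gb_s(iops, block_bytes=BLOCK_BYTES):
    """Bandwidth in decimal GB/s for a given IOPS rate and block size."""
    return iops * block_bytes / 1e9


def in_flight(iops, latency_s):
    """Little's law: average outstanding requests = rate x time in system."""
    return iops * latency_s


read_gb_s = throughput_gb_s(400_000)
write_gb_s = throughput_gb_s(270_000)
queue = in_flight(400_000, 0.002)
print(f"{read_gb_s:.2f} GB/s read, {write_gb_s:.2f} GB/s write, "
      f"up to {queue:.0f} requests in flight")
```

In other words, 400K random 4K reads per second correspond to roughly 1.6 GB/s, well within what the FC links of the test bench can carry.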

The entry-level model lags behind the top-end one both in maximum performance (by up to 1.5 times) and in latency (barely noticeable in synthetic tests, almost twofold in real-world workloads). Even so, it can still deliver more than 200K IOPS in most real-world workloads at reasonable latency.

Verdict

The ability to use third-party disks is by no means unique among storage vendors. Quite naturally, though, it is associated with the rather peculiar way such vendors organize support: communication in English only, long waits for spare parts, sometimes with surprises such as having to handle customs clearance yourself, and so on. It is therefore not surprising that in the Enterprise segment, despite their higher cost, products from Tier 1 manufacturers, which take full care of after-sales support, remain popular.

The standard warranty for Qsan storage includes Russian-language 9x5 NBD support for 3 years from the date of purchase. When a warranty case arises, the vendor ships an advance replacement part at its own expense from a warehouse in Moscow, so in most cases the customer actually waits just one working day for a new component. If necessary, additional service packages can be purchased, including on-site service and extension of the warranty period to 5 years.

We also want to note that the end of the warranty does not mean the end of technical support. After the warranty period, customers will still have access to the firmware and the ability to create service tickets.

Summing up our review of the Qsan XCubeSAN storage system, we would like to note that the product turned out to be very interesting, with the hardware and software capabilities needed in real-world operation. Performance is quite high, and technical support meets the expectations set by Enterprise products.