Failover Cluster PostgreSQL + Patroni. Implementation Experience

In the article, I will tell you how we approached the PostgreSQL fault tolerance issue, why this has become important to us, and what happened in the end.

We have a highly loaded service: 2.5 million users worldwide, 50K + active users every day. The servers are located in Amazone in one region of Ireland: there are constantly 100+ different servers in operation, of which almost 50 are with databases.

The whole backend is a large monolithic stateful Java application that keeps a constant websocket connection to the client. With the simultaneous work of several users on one board, they all see the changes in real time, because we record each change in the database. We have approximately 10K queries per second to our databases. At peak load in Redis, we write at 80-100K queries per second.

Initially, our service worked with Redis, a key-value repository that stores all the data in the server’s RAM.

Pros of Redis:

Cons Redis for us:

We took into account the disadvantages and realized that we need to move to something more convenient, with normal transactions and less dependence on latency. Conducted a study, analyzed many options and chose PostgreSQL.

We have been moving to a new database for 1.5 years and have only transferred a small part of the data, so now we are working simultaneously with Redis and PostgreSQL. More information about the stages of moving and switching data between databases is written in an article by my colleague .

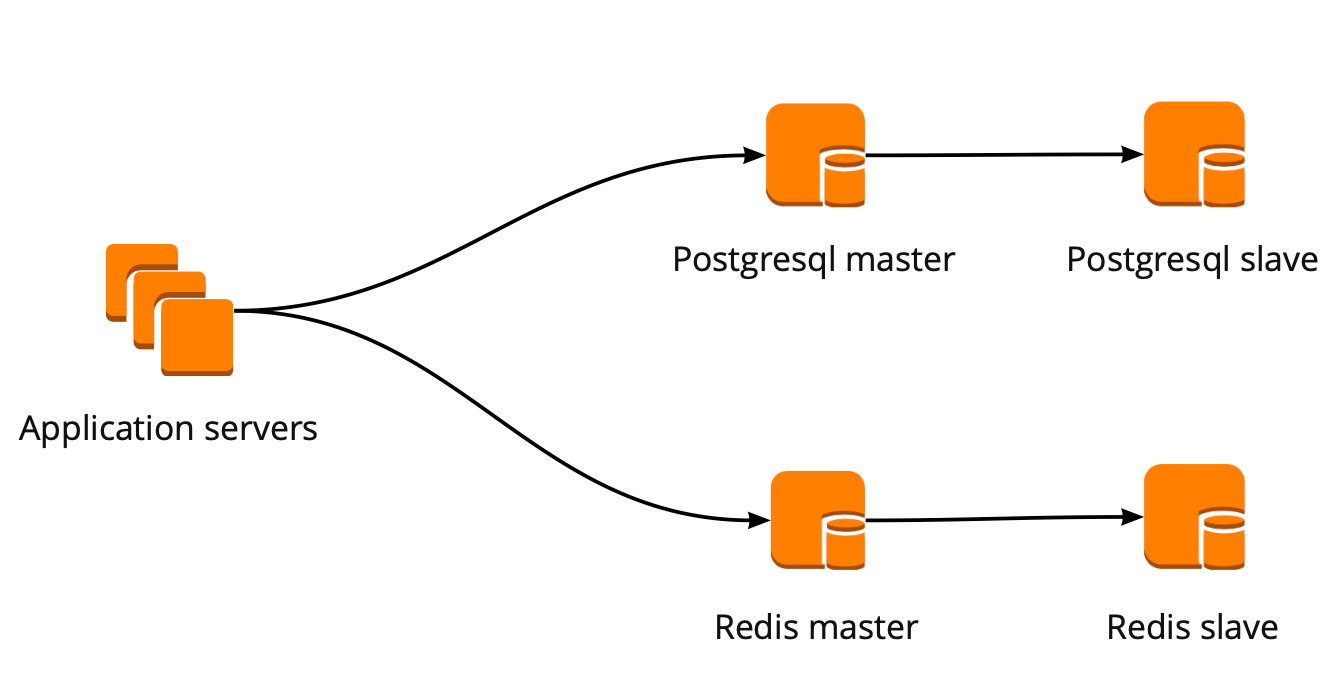

When we just started moving, our application worked directly with the database and turned to the Redis and PostgreSQL wizard. The PostgreSQL cluster consisted of a master and an asynchronous replica replica. This is how the database operation scheme looked:

While we were moving, the product also developed: the number of users and the number of servers that worked with PostgreSQL increased, and we began to miss connections. PostgreSQL creates a separate process for each connection and consumes resources. You can increase the number of connections up to a certain point, otherwise there is a chance to get non-optimal database operation. The ideal option in this situation would be the choice of a connection manager who will stand in front of the base.

We had two options for the connection manager: Pgpool and PgBouncer. But the first does not support the transactional mode of working with the database, so we chose PgBouncer.

We have set up the following working scheme: our application accesses one PgBouncer, followed by Masters PostgreSQL, and behind each master, one replica with asynchronous replication.

At the same time, we could not store the entire amount of data in PostgreSQL, and the speed of working with the database was important for us, so we started sharding PostgreSQL at the application level. The scheme described above is relatively convenient for this: when adding a new PostgreSQL shard, it is enough to update the PgBouncer configuration and the application can immediately work with the new shard.

This scheme worked until the only PgBouncer instance died. We are located in AWS, where all instances are running on hardware that periodically dies. In such cases, the instance simply moves to the new hardware and works again. This happened with PgBouncer, but it became unavailable. The result of this fall was the inaccessibility of our service for 25 minutes. AWS recommends using redundancy on the user side for such situations, which was not implemented with us at that time.

After that, we seriously thought about the fault tolerance of PgBouncer and PostgreSQL clusters, because a similar situation could happen again with any instance in our AWS account.

We built the PgBouncer fault tolerance scheme as follows: all application servers access the Network Load Balancer, behind which there are two PgBouncer. Each of the PgBouncer looks at the same master PostgreSQL of each shard. If the AWS instance crashes again, all traffic is redirected through another PgBouncer. Fault tolerance Network Load Balancer provides AWS.

This scheme allows you to easily add new PgBouncer servers.

In solving this problem, we considered different options: self-written failover, repmgr, AWS RDS, Patroni.

They can monitor the work of the master and, in case of its fall, promote the replica to the master and update the configuration of PgBouncer.

The advantages of this approach are maximum simplicity, because you yourself write scripts and understand exactly how they work.

Minuses:

Self-written failover looks very complicated and requires non-trivial support. With a single PostgreSQL cluster, this will be the easiest option, but it does not scale, so it is not suitable for us.

Replication Manager for PostgreSQL clusters, which can manage the operation of a PostgreSQL cluster. At the same time, there is no automatic failover “out of the box” in it, so for work you will need to write your own “wrapper” on top of the ready-made solution. So everything can turn out even more complicated than with self-written scripts, so we did not even try Repmgr.

It supports everything you need for us, knows how to backup and supports a connection pool. It has automatic switching: upon the death of the master, the replica becomes the new master, and AWS changes the dns record to the new master, while the replicas can be in different AZs.

The disadvantages include the lack of subtle settings. As an example of fine-tuning: on our instances there are restrictions for tcp connections, which, unfortunately, cannot be done in RDS:

In addition, the AWS RDS price is almost two times higher than the regular instance price, which was the main reason for rejecting this decision.

This is a python template for managing PostgreSQL with good documentation, automatic failover and github source code.

Pros of Patroni:

Minuses:

As a result, to create a failover cluster, we chose Patroni.

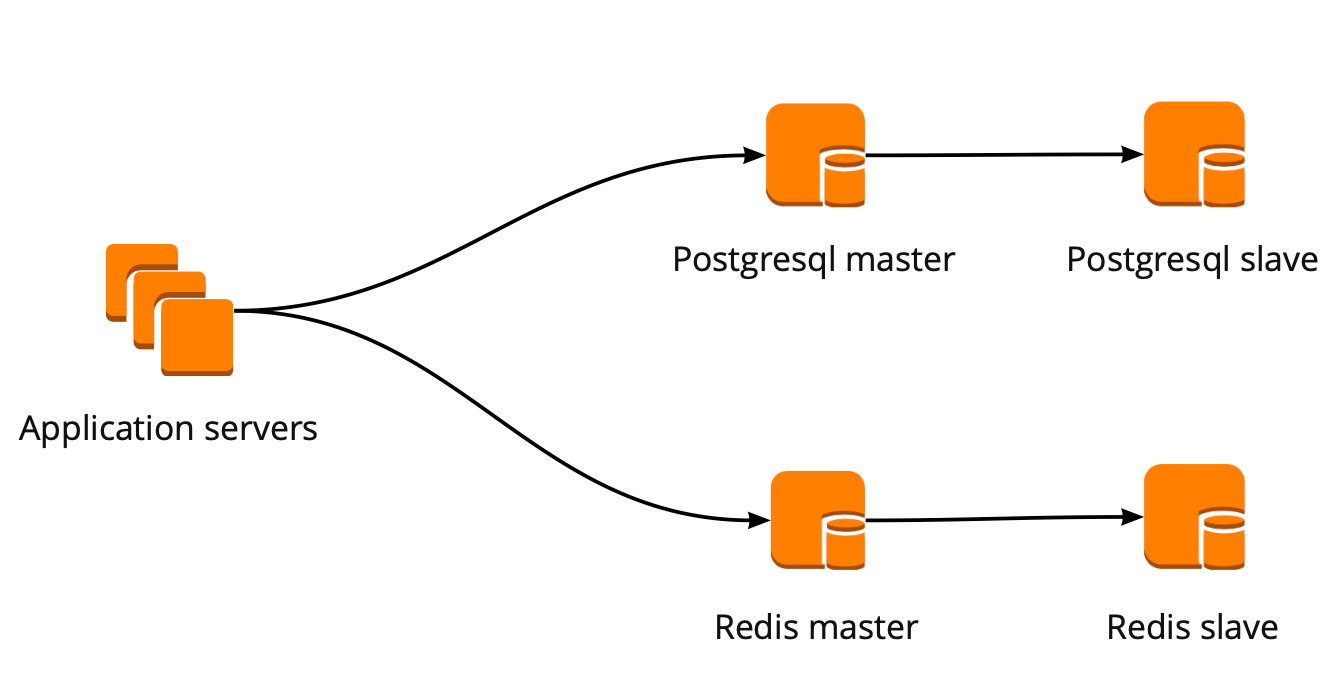

Before Patroni, we had 12 PostgreSQL shards in configuration, one master and one replica with asynchronous replication. Application servers accessed the databases through the Network Load Balancer, behind which there were two instances with PgBouncer, and behind them were all PostgreSQL servers.

To implement Patroni, we needed to select a distributed cluster configuration repository. Patroni works with distributed configuration storage systems such as etcd, Zookeeper, Consul. We just have a full-fledged Consul cluster on prod that works in conjunction with Vault and we don’t use it anymore. A great reason to start using Consul for its intended purpose.

We have a Consul cluster, which consists of three nodes, and a Patroni cluster, which consists of a leader and a replica (in Patroni, a master is called a cluster leader, and slaves are called replicas). Each instance of a Patroni cluster constantly sends cluster status information to Consul. Therefore, from Consul you can always find out the current configuration of the Patroni cluster and who is the leader at the moment.

To connect Patroni to Consul, it is enough to study the official documentation, which says that you need to specify the host in http or https format, depending on how we work with Consul, and the connection scheme, optionally:

It looks simple, but here pitfalls begin. With Consul we are working on a secure connection via https and our connection config will look like this:

But that doesn't work. At start, Patroni cannot connect to Consul, because it tries to follow http anyway.

The source code for Patroni helped deal with the problem. Good thing it is written in python. It turns out the host parameter is not parsed at all, and the protocol must be specified in the scheme. Here is the working configuration block for working with Consul with us:

So, we have chosen storage for a configuration. Now you need to understand how PgBouncer will switch its configuration when changing the leader in the Patroni cluster. The documentation does not answer this question, because there, in principle, work with PgBouncer is not described.

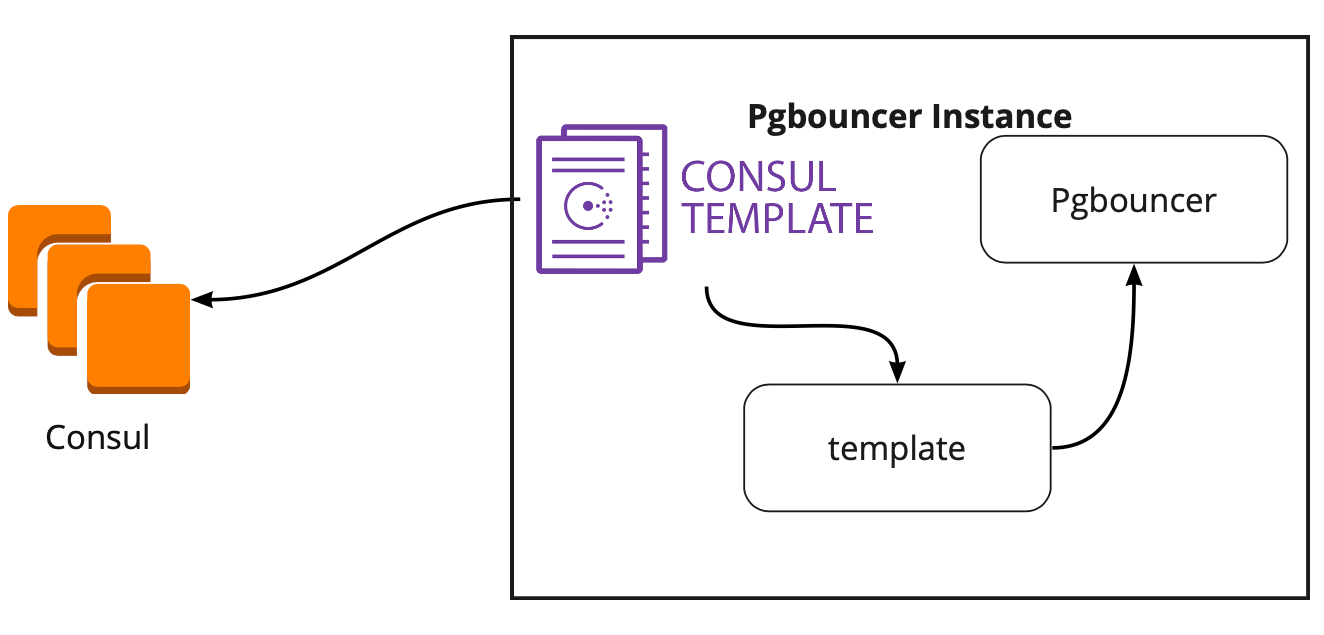

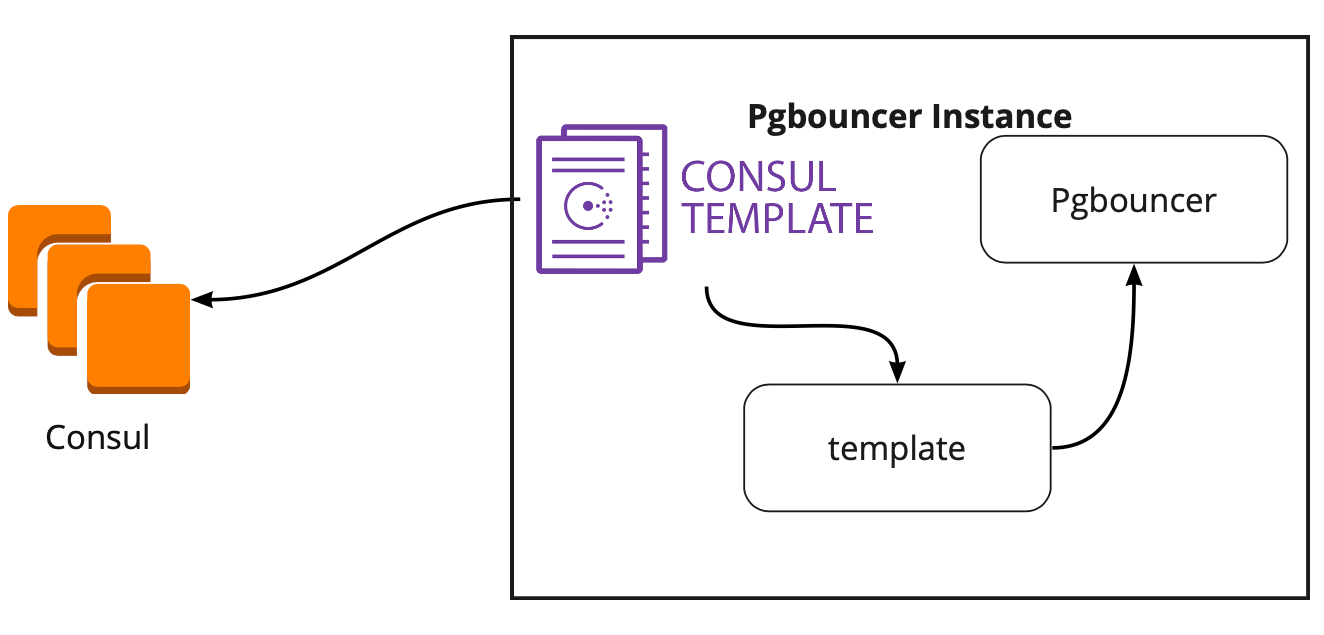

In search of a solution, we found an article (I don’t remember the name, unfortunately), where it was written that the Consul-template helped a lot in connecting PgBouncer and Patroni. This prompted us to study the work of the Consul-template.

It turned out that the Consul-template constantly monitors the configuration of the PostgreSQL cluster in Consul. When the leader changes, he updates the PgBouncer configuration and sends a command to reboot it.

The big plus of template is that it is stored as code, so when adding a new shard, it is enough to make a new commit and update the template in automatic mode, supporting the principle of Infrastructure as code.

As a result, we got the following working scheme:

All application servers turn to the balancer → two PgBouncer instances are behind it → Сonsul-template is launched on each instance, which monitors the status of each Patroni cluster and monitors the relevance of the PgBouncer config, which sends requests to the current leader of each a cluster.

Before launching the program, we launched this circuit on a small test environment and checked the operation of automatic switching. They opened the board, moved the sticker and at that moment “killed” the leader of the cluster. In AWS, just turn off the instance through the console.

The sticker returned back within 10-20 seconds, and then again began to move normally. This means that the Patroni cluster worked correctly: it changed the leader, sent the information to Consul, and the Consul-template immediately picked up this information, replaced the PgBouncer configuration and sent the command to reload.

Everything works perfectly! But new questions arise: How will it work under high load? How to quickly and safely roll everything into production?

The test environment in which we conduct load testing helps us answer the first question. It is completely identical to production in architecture and has generated test data, which are approximately equal in volume to production. We decide to just “kill” one of the PostgreSQL wizards during the test and see what happens. But before that, it is important to check the automatic rolling, because on this environment we have several PostgreSQL shards, so we will get excellent testing of configuration scripts before selling.

Both tasks look ambitious, but we have PostgreSQL 9.6. Maybe we will immediately upgrade to 11.2?

We decide to do this in 2 stages: first upgrade to 11.2, then launch Patroni.

To quickly upgrade the version of PostgreSQL, you must use the -k option , which creates a hard link on the disk and there is no need to copy your data. On bases of 300-400 GB, the update takes 1 second.

We have a lot of shards, so the update needs to be done automatically. To do this, we wrote Ansible playbook, which performs the entire update process for us:

It is important to note here that before starting the upgrade, it is necessary to execute it with the --check parameter to be sure of the possibility of an upgrade. Our script also makes the substitution of configs for the upgrade. The script we completed in 30 seconds, this is an excellent result.

To solve the second problem, just look at the configuration of Patroni. In the official repository there is an example configuration with initdb, which is responsible for initializing a new database when Patroni is first launched. But since we have a ready-made database, we just deleted this section from the configuration.

When we started installing Patroni on a ready-made PostgreSQL cluster and running it, we faced a new problem: both servers started as leader. Patroni knows nothing about the early state of the cluster and tries to start both servers as two separate clusters with the same name. To solve this problem, delete the data directory on the slave:

This must be done only on slave!

When connecting a clean replica, Patroni makes a basebackup leader and restores it to the replica, and then catches up with the current state by wal-logs.

Another difficulty we encountered is that all PostgreSQL clusters are called main by default. When each cluster knows nothing about the other, this is normal. But when you want to use Patroni, then all clusters must have a unique name. The solution is to change the cluster name in the PostgreSQL configuration.

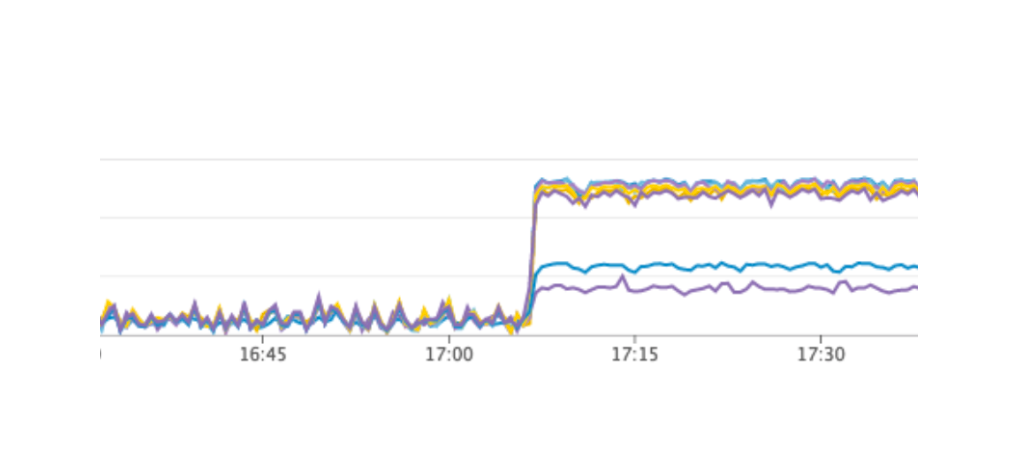

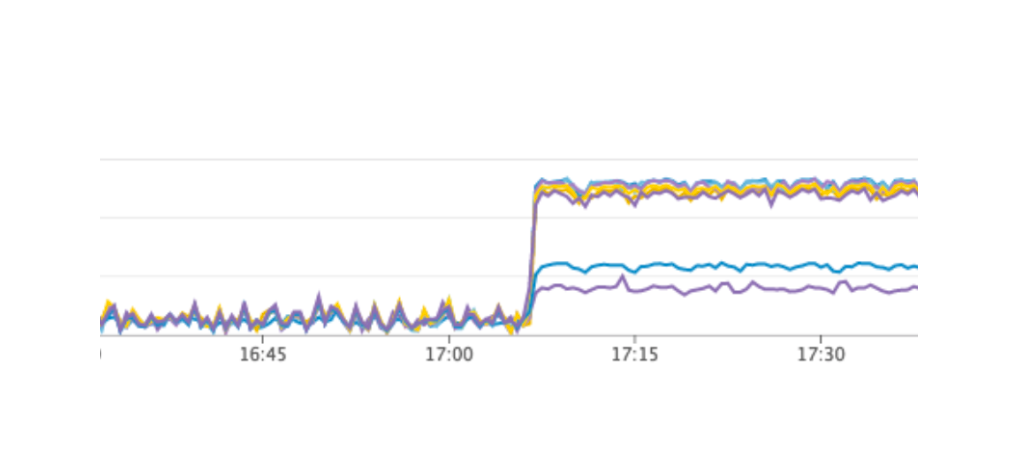

We launched a test that simulates the work of users on the boards. When the load reached our average daily value, we repeated the exact same test, we turned off one instance with leader PostgreSQL. The automatic failover worked as we expected: Patroni changed the leader, Consul-template updated the configuration of PgBouncer and sent the command to reload. According to our graphs in Grafana, it was clear that there are delays of 20-30 seconds and a small amount of errors from servers related to connecting to the database. This is a normal situation, such values are valid for our failover and definitely better than the downtime of the service.

As a result, we got the following plan:

At the same time, our scheme allows you to make the first item at almost any time, we can take turns removing each PgBouncer from work and execute a deployment on it and launching the consul-template. So we did.

For quick rolling, we used Ansible, since we already checked all the playbook on a test environment, and the execution time of the full script was from 1.5 to 2 minutes for each shard. We could roll everything out alternately for each shard without stopping our service, but we would have to turn off every PostgreSQL for a few minutes. In this case, users whose data is on this shard could not fully work at this time, and this is unacceptable to us.

The way out of this situation was the planned maintenance, which takes place every 3 months. This is a window for scheduled work when we completely turn off our service and update database instances. There was one week left until the next window, and we decided to just wait and further prepare. During the wait, we additionally made sure: for each PostgreSQL shard we raised a spare replica in case of failure in order to save the latest data, and added a new instance for each shard, which should become a new replica in the Patroni cluster so as not to execute a command to delete data . All this helped to minimize the risk of error.

We restarted our service, everything worked as it should, users continued to work, but on the graphs we noticed an abnormally high load on the Consul server.

Why didn’t we see it on the test environment? This problem illustrates very well that it is necessary to follow the principle of Infrastructure as code and refine the entire infrastructure, starting with test environments and ending with production. Otherwise, it is very easy to get the kind of problem that we got. What happened Consul first appeared on production, and then on test environments, as a result, on test environments, the version of Consul was higher than on production. Just in one of the releases, a CPU leak was solved when working with consul-template. Therefore, we just updated Consul, thus solving the problem.

However, we got a new problem that we were not even aware of. When updating Consul, we simply remove the Consul node from the cluster using the consul leave command → Patroni connects to another Consul server → everything works. But when we reached the last instance of the Consul cluster and sent the consul leave command to it, all Patroni clusters simply restarted, and in the logs we saw the following error:

The Patroni cluster was unable to obtain information about its cluster and restarted.

To find a solution, we contacted the authors of Patroni through issue on github. They suggested improvements to our configuration files:

We were able to repeat the problem on a test environment and tested these parameters there, but, unfortunately, they did not work.

The problem is still unresolved. We plan to try the following solutions:

We understand the place where the error occurred: the problem is probably using the default timeout, which is not overridden through the configuration file. When the last Consul server is removed from the cluster, the entire Consul cluster freezes for more than a second, because of this Patroni cannot get the state of the cluster and completely restarts the entire cluster.

Fortunately, we did not meet any more errors.

After the successful launch of Patroni, we added an additional replica in each cluster. Now in each cluster there is a semblance of a quorum: one leader and two replicas - to insure against the case of split-brain when switching.

Patroni has been working at production for more than three months. During this time, he has already managed to help us out. Recently, the leader of one of the clusters died in AWS, the automatic failover worked, and users continued to work. Patroni completed his main task.

A small summary of the use of Patroni:

We have a highly loaded service: 2.5 million users worldwide, 50K + active users every day. The servers are located in Amazone in one region of Ireland: there are constantly 100+ different servers in operation, of which almost 50 are with databases.

The whole backend is a large monolithic stateful Java application that keeps a constant websocket connection to the client. With the simultaneous work of several users on one board, they all see the changes in real time, because we record each change in the database. We have approximately 10K queries per second to our databases. At peak load in Redis, we write at 80-100K queries per second.

Why we switched from Redis to PostgreSQL

Initially, our service worked with Redis, a key-value repository that stores all the data in the server’s RAM.

Pros of Redis:

- High response rate, as everything is stored in memory;

- Convenience of backup and replication.

Cons Redis for us:

- There are no real transactions. We tried to simulate them at the level of our application. Unfortunately, this did not always work well and required writing very complex code.

- The amount of data is limited by the amount of memory. As the amount of data increases, memory will grow, and in the end, we will run into the characteristics of the selected instance, which in AWS requires stopping our service to change the type of instance.

- It is necessary to constantly maintain a low latency level, as We have a very large number of requests. The optimal delay level for us is 17-20 ms. At the level of 30-40 ms, we get long answers to the requests of our application and the degradation of the service. Unfortunately, this happened with us in September 2018, when one of the Redis instances for some reason received a latency 2 times higher than usual. To solve the problem, we stopped the service in the middle of the day for unscheduled maintenance and replaced the problematic Redis instance.

- It is easy to get data inconsistency even with minor errors in the code and then spend a lot of time writing code to fix this data.

We took into account the disadvantages and realized that we need to move to something more convenient, with normal transactions and less dependence on latency. Conducted a study, analyzed many options and chose PostgreSQL.

We have been moving to a new database for 1.5 years and have only transferred a small part of the data, so now we are working simultaneously with Redis and PostgreSQL. More information about the stages of moving and switching data between databases is written in an article by my colleague .

When we just started moving, our application worked directly with the database and turned to the Redis and PostgreSQL wizard. The PostgreSQL cluster consisted of a master and an asynchronous replica replica. This is how the database operation scheme looked:

PgBouncer Deployment

While we were moving, the product also developed: the number of users and the number of servers that worked with PostgreSQL increased, and we began to miss connections. PostgreSQL creates a separate process for each connection and consumes resources. You can increase the number of connections up to a certain point, otherwise there is a chance to get non-optimal database operation. The ideal option in this situation would be the choice of a connection manager who will stand in front of the base.

We had two options for the connection manager: Pgpool and PgBouncer. But the first does not support the transactional mode of working with the database, so we chose PgBouncer.

We have set up the following working scheme: our application accesses one PgBouncer, followed by Masters PostgreSQL, and behind each master, one replica with asynchronous replication.

At the same time, we could not store the entire amount of data in PostgreSQL, and the speed of working with the database was important for us, so we started sharding PostgreSQL at the application level. The scheme described above is relatively convenient for this: when adding a new PostgreSQL shard, it is enough to update the PgBouncer configuration and the application can immediately work with the new shard.

PgBouncer Fault Tolerance

This scheme worked until the only PgBouncer instance died. We are located in AWS, where all instances are running on hardware that periodically dies. In such cases, the instance simply moves to the new hardware and works again. This happened with PgBouncer, but it became unavailable. The result of this fall was the inaccessibility of our service for 25 minutes. AWS recommends using redundancy on the user side for such situations, which was not implemented with us at that time.

After that, we seriously thought about the fault tolerance of PgBouncer and PostgreSQL clusters, because a similar situation could happen again with any instance in our AWS account.

We built the PgBouncer fault tolerance scheme as follows: all application servers access the Network Load Balancer, behind which there are two PgBouncer. Each of the PgBouncer looks at the same master PostgreSQL of each shard. If the AWS instance crashes again, all traffic is redirected through another PgBouncer. Fault tolerance Network Load Balancer provides AWS.

This scheme allows you to easily add new PgBouncer servers.

Creating a PostgreSQL Failover Cluster

In solving this problem, we considered different options: self-written failover, repmgr, AWS RDS, Patroni.

Self-written scripts

They can monitor the work of the master and, in case of its fall, promote the replica to the master and update the configuration of PgBouncer.

The advantages of this approach are maximum simplicity, because you yourself write scripts and understand exactly how they work.

Minuses:

- The master might not die; instead, a network failure could occur. Failover, without knowing this, will advance the replica to the master, and the old master will continue to work. As a result, we get two servers in the role of master and we don’t know which of them has the latest actual data. This situation is also called split-brain;

- We were left without a replica. In our configuration, the master and one replica, after switching the replica, it moves to the master and we no longer have replicas, so we have to manually add a new replica;

- We need additional monitoring of failover operation, while we have 12 PostgreSQL shards, which means we must monitor 12 clusters. If you increase the number of shards, you must still remember to update failover.

Self-written failover looks very complicated and requires non-trivial support. With a single PostgreSQL cluster, this will be the easiest option, but it does not scale, so it is not suitable for us.

Repmgr

Replication Manager for PostgreSQL clusters, which can manage the operation of a PostgreSQL cluster. At the same time, there is no automatic failover “out of the box” in it, so for work you will need to write your own “wrapper” on top of the ready-made solution. So everything can turn out even more complicated than with self-written scripts, so we did not even try Repmgr.

AWS RDS

It supports everything you need for us, knows how to backup and supports a connection pool. It has automatic switching: upon the death of the master, the replica becomes the new master, and AWS changes the dns record to the new master, while the replicas can be in different AZs.

The disadvantages include the lack of subtle settings. As an example of fine-tuning: on our instances there are restrictions for tcp connections, which, unfortunately, cannot be done in RDS:

net.ipv4.tcp_keepalive_time=10

net.ipv4.tcp_keepalive_intvl=1

net.ipv4.tcp_keepalive_probes=5

net.ipv4.tcp_retries2=3

In addition, the AWS RDS price is almost two times higher than the regular instance price, which was the main reason for rejecting this decision.

Patroni

This is a python template for managing PostgreSQL with good documentation, automatic failover and github source code.

Pros of Patroni:

- Each configuration parameter is painted, it is clear how it works;

- Automatic failover works out of the box;

- It is written in python, and since we write a lot in python ourselves, it will be easier for us to deal with problems and, possibly, even help the development of the project;

- It fully controls PostgreSQL, allows you to change the configuration on all nodes of the cluster at once, and if a cluster restart is required to apply the new configuration, then this can be done again using Patroni.

Minuses:

- From the documentation it is not clear how to work with PgBouncer. Although it’s difficult to call it a minus, because the task of Patroni is to manage PostgreSQL, and how connections to Patroni will go is our problem;

- There are few examples of implementation of Patroni on large volumes, while many examples of implementation from scratch.

As a result, to create a failover cluster, we chose Patroni.

Patroni Implementation Process

Before Patroni, we had 12 PostgreSQL shards in configuration, one master and one replica with asynchronous replication. Application servers accessed the databases through the Network Load Balancer, behind which there were two instances with PgBouncer, and behind them were all PostgreSQL servers.

To implement Patroni, we needed to select a distributed cluster configuration repository. Patroni works with distributed configuration storage systems such as etcd, Zookeeper, Consul. We just have a full-fledged Consul cluster on prod that works in conjunction with Vault and we don’t use it anymore. A great reason to start using Consul for its intended purpose.

How Patroni Works with Consul

We have a Consul cluster, which consists of three nodes, and a Patroni cluster, which consists of a leader and a replica (in Patroni, a master is called a cluster leader, and slaves are called replicas). Each instance of a Patroni cluster constantly sends cluster status information to Consul. Therefore, from Consul you can always find out the current configuration of the Patroni cluster and who is the leader at the moment.

To connect Patroni to Consul, it is enough to study the official documentation, which says that you need to specify the host in http or https format, depending on how we work with Consul, and the connection scheme, optionally:

host: the host:port for the Consul endpoint, in format: http(s)://host:port

scheme: (optional) http or https, defaults to httpIt looks simple, but here pitfalls begin. With Consul we are working on a secure connection via https and our connection config will look like this:

consul:

host: https://server.production.consul:8080

verify: true

cacert: {{ consul_cacert }}

cert: {{ consul_cert }}

key: {{ consul_key }}But that doesn't work. At start, Patroni cannot connect to Consul, because it tries to follow http anyway.

The source code for Patroni helped deal with the problem. Good thing it is written in python. It turns out the host parameter is not parsed at all, and the protocol must be specified in the scheme. Here is the working configuration block for working with Consul with us:

consul:

host: server.production.consul:8080

scheme: https

verify: true

cacert: {{ consul_cacert }}

cert: {{ consul_cert }}

key: {{ consul_key }}Consul-template

So, we have chosen storage for a configuration. Now you need to understand how PgBouncer will switch its configuration when changing the leader in the Patroni cluster. The documentation does not answer this question, because there, in principle, work with PgBouncer is not described.

In search of a solution, we found an article (I don’t remember the name, unfortunately), where it was written that the Consul-template helped a lot in connecting PgBouncer and Patroni. This prompted us to study the work of the Consul-template.

It turned out that the Consul-template constantly monitors the configuration of the PostgreSQL cluster in Consul. When the leader changes, he updates the PgBouncer configuration and sends a command to reboot it.

The big plus of template is that it is stored as code, so when adding a new shard, it is enough to make a new commit and update the template in automatic mode, supporting the principle of Infrastructure as code.

New architecture with Patroni

As a result, we got the following working scheme:

All application servers turn to the balancer → two PgBouncer instances are behind it → Сonsul-template is launched on each instance, which monitors the status of each Patroni cluster and monitors the relevance of the PgBouncer config, which sends requests to the current leader of each a cluster.

Manual testing

Before launching the program, we launched this circuit on a small test environment and checked the operation of automatic switching. They opened the board, moved the sticker and at that moment “killed” the leader of the cluster. In AWS, just turn off the instance through the console.

The sticker returned back within 10-20 seconds, and then again began to move normally. This means that the Patroni cluster worked correctly: it changed the leader, sent the information to Consul, and the Consul-template immediately picked up this information, replaced the PgBouncer configuration and sent the command to reload.

How to survive under high load and maintain a minimum downtime?

Everything works perfectly! But new questions arise: How will it work under high load? How to quickly and safely roll everything into production?

The test environment in which we conduct load testing helps us answer the first question. It is completely identical to production in architecture and has generated test data, which are approximately equal in volume to production. We decide to just “kill” one of the PostgreSQL wizards during the test and see what happens. But before that, it is important to check the automatic rolling, because on this environment we have several PostgreSQL shards, so we will get excellent testing of configuration scripts before selling.

Both tasks look ambitious, but we have PostgreSQL 9.6. Maybe we will immediately upgrade to 11.2?

We decide to do this in 2 stages: first upgrade to 11.2, then launch Patroni.

PostgreSQL Update

To quickly upgrade the version of PostgreSQL, you must use the -k option , which creates a hard link on the disk and there is no need to copy your data. On bases of 300-400 GB, the update takes 1 second.

We have a lot of shards, so the update needs to be done automatically. To do this, we wrote Ansible playbook, which performs the entire update process for us:

/usr/lib/postgresql/11/bin/pg_upgrade \

--link \

--old-datadir='' --new-datadir='' \

--old-bindir='' --new-bindir='' \

--old-options=' -c config_file=' \

--new-options=' -c config_file='It is important to note here that before starting the upgrade, it is necessary to execute it with the --check parameter to be sure of the possibility of an upgrade. Our script also makes the substitution of configs for the upgrade. The script we completed in 30 seconds, this is an excellent result.

Launch Patroni

To solve the second problem, just look at the configuration of Patroni. In the official repository there is an example configuration with initdb, which is responsible for initializing a new database when Patroni is first launched. But since we have a ready-made database, we just deleted this section from the configuration.

When we started installing Patroni on a ready-made PostgreSQL cluster and running it, we faced a new problem: both servers started as leader. Patroni knows nothing about the early state of the cluster and tries to start both servers as two separate clusters with the same name. To solve this problem, delete the data directory on the slave:

rm -rf /var/lib/postgresql/This must be done only on slave!

When connecting a clean replica, Patroni makes a basebackup leader and restores it to the replica, and then catches up with the current state by wal-logs.

Another difficulty we encountered is that all PostgreSQL clusters are called main by default. When each cluster knows nothing about the other, this is normal. But when you want to use Patroni, then all clusters must have a unique name. The solution is to change the cluster name in the PostgreSQL configuration.

Load test

We launched a test that simulates the work of users on the boards. When the load reached our average daily value, we repeated the exact same test, we turned off one instance with leader PostgreSQL. The automatic failover worked as we expected: Patroni changed the leader, Consul-template updated the configuration of PgBouncer and sent the command to reload. According to our graphs in Grafana, it was clear that there are delays of 20-30 seconds and a small amount of errors from servers related to connecting to the database. This is a normal situation, such values are valid for our failover and definitely better than the downtime of the service.

Patroni's output to production

As a result, we got the following plan:

- Deploy Consul-template to the PgBouncer server and launch;

- PostgreSQL updates to version 11.2;

- Cluster name change;

- Starting a Patroni cluster.

At the same time, our scheme allows you to make the first item at almost any time, we can take turns removing each PgBouncer from work and execute a deployment on it and launching the consul-template. So we did.

For quick rolling, we used Ansible, since we already checked all the playbook on a test environment, and the execution time of the full script was from 1.5 to 2 minutes for each shard. We could roll everything out alternately for each shard without stopping our service, but we would have to turn off every PostgreSQL for a few minutes. In this case, users whose data is on this shard could not fully work at this time, and this is unacceptable to us.

The way out of this situation was the planned maintenance, which takes place every 3 months. This is a window for scheduled work when we completely turn off our service and update database instances. There was one week left until the next window, and we decided to just wait and further prepare. During the wait, we additionally made sure: for each PostgreSQL shard we raised a spare replica in case of failure in order to save the latest data, and added a new instance for each shard, which should become a new replica in the Patroni cluster so as not to execute a command to delete data . All this helped to minimize the risk of error.

We restarted our service, everything worked as it should, users continued to work, but on the graphs we noticed an abnormally high load on the Consul server.

Why didn’t we see it on the test environment? This problem illustrates very well that it is necessary to follow the principle of Infrastructure as code and refine the entire infrastructure, starting with test environments and ending with production. Otherwise, it is very easy to get the kind of problem that we got. What happened Consul first appeared on production, and then on test environments, as a result, on test environments, the version of Consul was higher than on production. Just in one of the releases, a CPU leak was solved when working with consul-template. Therefore, we just updated Consul, thus solving the problem.

Restart Patroni cluster

However, we got a new problem that we were not even aware of. When updating Consul, we simply remove the Consul node from the cluster using the consul leave command → Patroni connects to another Consul server → everything works. But when we reached the last instance of the Consul cluster and sent the consul leave command to it, all Patroni clusters simply restarted, and in the logs we saw the following error:

ERROR: get_cluster

Traceback (most recent call last):

...

RetryFailedError: 'Exceeded retry deadline'

ERROR: Error communicating with DCS

LOG: database system is shut downThe Patroni cluster was unable to obtain information about its cluster and restarted.

To find a solution, we contacted the authors of Patroni through issue on github. They suggested improvements to our configuration files:

consul:

consul.checks: []

bootstrap:

dcs:

retry_timeout: 8We were able to repeat the problem on a test environment and tested these parameters there, but, unfortunately, they did not work.

The problem is still unresolved. We plan to try the following solutions:

- Use Consul-agent on each instance of the Patroni cluster;

- Fix the problem in the code.

We understand the place where the error occurred: the problem is probably using the default timeout, which is not overridden through the configuration file. When the last Consul server is removed from the cluster, the entire Consul cluster freezes for more than a second, because of this Patroni cannot get the state of the cluster and completely restarts the entire cluster.

Fortunately, we did not meet any more errors.

Results of using Patroni

After the successful launch of Patroni, we added an additional replica in each cluster. Now in each cluster there is a semblance of a quorum: one leader and two replicas - to insure against the case of split-brain when switching.

Patroni has been working at production for more than three months. During this time, he has already managed to help us out. Recently, the leader of one of the clusters died in AWS, the automatic failover worked, and users continued to work. Patroni completed his main task.

A small summary of the use of Patroni:

- Convenience of change of a configuration. It is enough to change the configuration on one instance and it will be pulled over the entire cluster. If a reboot is required to apply the new configuration, Patroni will report this. Patroni can restart the entire cluster with a single command, which is also very convenient.

- Automatic failover works and has already managed to help us out.

- PostgreSQL update without application downtime. You must first upgrade the replicas to the new version, then change the leader in the Patroni cluster and update the old leader. In this case, the necessary testing of automatic failover occurs.