How we broke GlusterFS

The story began a year ago, when our friend, colleague and great expert in enterprise marketing came to us and said: “Guys, I have a fancy storage system with all the fashionable features lying around. 90 TB.” We saw no particular need for it but, of course, did not refuse. We set up a couple of backups there and safely forgot about it for a while.

Periodically, tasks came up such as transferring large files between hosts, shipping WAL for PostgreSQL replicas, and so on. Gradually, we moved all the scattered odds and ends related to our project into this fridge. We set up rotation and alerts for successful and not-so-successful backup attempts. Over the year, this storage became one of the important pieces of infrastructure for our operations group.

Everything was fine until our expert came back and said he wanted his gift returned. Urgently.

The choice was small: scatter everything across random places again, or build our own fridge out of sticks, with blackjack. By that time we had learned our lessons and had seen plenty of not-so-fault-tolerant systems, so fault tolerance had become second nature to us.

Of the many options, Gluster caught our eye. Or Glaster; call it what you like, as long as it gets the job done. So we started tormenting it.

What is Gluster and why do we need it?

It is a distributed file system that has long been friends with OpenStack and is integrated into oVirt/RHEV. Although our IaaS does not run on OpenStack, Gluster has a large, active community and native QEMU support through the libgfapi interface. This way we kill two birds with one stone:

- We get storage for backups that is fully supported by us. No more waiting anxiously for the vendor to ship a replacement for a broken part.

- We test a new type of storage (volume type) that we can offer our customers in the future.

Hypotheses that we tested:

- That Gluster works. Confirmed.

- That it is fault tolerant: we can reboot any node and the cluster keeps working with the data available; we can reboot several nodes and no data is lost. Confirmed.

- That it is reliable, that is, it does not crash on its own, does not leak memory, and so on. Partly true: it took us a long time to understand that the problem was not in our hands and heads but in the striped volume type, which did not work stably in any of the configurations we built (details at the end).

Experiments with various configurations and versions took a month, followed by trial operation in production as the second destination for our technical backups. We wanted to watch how it behaved for six months before relying on it fully.

How to deploy

We had more than enough sticks for the experiments: a rack of Dell PowerEdge R510 servers and a pile of not very fast 2 TB SATA disks inherited from our old S3.

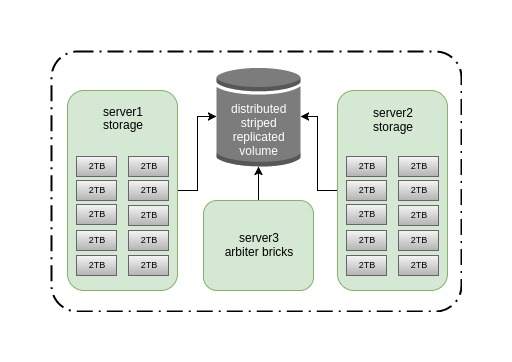

We estimated that we would not need more than 20 TB of storage. After that it took about half an hour to put 10 disks into each of two old Dell PowerEdge R510 servers, pick another server for the arbiter role, download the packages and deploy everything. The result is the following setup.

We chose a striped-replicated volume with an arbiter because it is fast (data is spread evenly across several bricks) and reliable enough (replica 2): it can survive the failure of one node without ending up in split-brain. How wrong we were...

The main drawback of our cluster in its current configuration is a very narrow link, only 1G, but it is enough for our purpose. So this post is not about testing the speed of the system but about its stability and what to do when things break. In the future we plan to move it to 56G InfiniBand with RDMA and run performance tests, but that is a completely different story.

I will not go deep into the process of assembling the cluster; everything here is quite simple.

Create directories for bricks:

for i in {0..9} ; do mkdir -p /export/brick$i ; done

Create XFS on the brick disks:

for i in {b..k} ; do mkfs.xfs /dev/sd$i ; done

Add mount points to /etc/fstab:

/dev/sdb /export/brick0/ xfs defaults 0 0

/dev/sdc /export/brick1/ xfs defaults 0 0

/dev/sdd /export/brick2/ xfs defaults 0 0

/dev/sde /export/brick3/ xfs defaults 0 0

/dev/sdf /export/brick4/ xfs defaults 0 0

/dev/sdg /export/brick5/ xfs defaults 0 0

/dev/sdh /export/brick6/ xfs defaults 0 0

/dev/sdi /export/brick7/ xfs defaults 0 0

/dev/sdj /export/brick8/ xfs defaults 0 0

/dev/sdk /export/brick9/ xfs defaults 0 0
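
The ten entries above follow a regular pattern, so they can also be generated rather than typed. A minimal sketch, assuming the disks are sdb through sdk and map to brick0 through brick9 in that order; the function name gen_fstab is made up for illustration:

```shell
# Print one fstab line per brick disk.
# Assumption: sdb..sdk map to brick0..brick9 in this order.
gen_fstab() {
    i=0
    for letter in b c d e f g h i j k; do
        printf '/dev/sd%s /export/brick%d/ xfs defaults 0 0\n' "$letter" "$i"
        i=$((i + 1))
    done
}

# Only prints the lines; review them before appending to /etc/fstab.
gen_fstab
```

The function only prints, so nothing is changed until you append the output to /etc/fstab yourself.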

Mount:

mount -a

Add the directory for the volume, which will be called holodilnik, to the bricks:

for i in {0..9} ; do mkdir -p /export/brick$i/holodilnik ; done

Next, we need to join the cluster hosts together and create a volume.

Install the packages on all three hosts:

pdsh -w server[1-3] -- yum install glusterfs-server -y

Start Gluster:

systemctl enable glusterd

systemctl start glusterd

It is useful to know that Gluster runs several processes. Here is what each is for:

glusterd = management daemon

The main daemon: it manages volumes and controls the other daemons responsible for bricks and data recovery.

glusterfsd = per-brick daemon

Each brick gets its own glusterfsd daemon.

glustershd = self-heal daemon

Responsible for rebuilding data on replicated volumes after a cluster node goes down.

glusterfs = usually client-side, but also NFS on servers

For example, it comes with the glusterfs-fuse native client package.

Peer the nodes:

gluster peer probe server2

gluster peer probe server3

We assemble the volume. The order of the bricks matters here: the bricks of one replica set follow one another:

gluster volume create holodilnik stripe 10 replica 3 arbiter 1 transport tcp server1:/export/brick0/holodilnik server2:/export/brick0/holodilnik server3:/export/brick0/holodilnik server1:/export/brick1/holodilnik server2:/export/brick1/holodilnik server3:/export/brick1/holodilnik server1:/export/brick2/holodilnik server2:/export/brick2/holodilnik server3:/export/brick2/holodilnik server1:/export/brick3/holodilnik server2:/export/brick3/holodilnik server3:/export/brick3/holodilnik server1:/export/brick4/holodilnik server2:/export/brick4/holodilnik server3:/export/brick4/holodilnik server1:/export/brick5/holodilnik server2:/export/brick5/holodilnik server3:/export/brick5/holodilnik server1:/export/brick6/holodilnik server2:/export/brick6/holodilnik server3:/export/brick6/holodilnik server1:/export/brick7/holodilnik server2:/export/brick7/holodilnik server3:/export/brick7/holodilnik server1:/export/brick8/holodilnik server2:/export/brick8/holodilnik server3:/export/brick8/holodilnik server1:/export/brick9/holodilnik server2:/export/brick9/holodilnik server3:/export/brick9/holodilnik force
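
The brick list in that command is long but regular, so it can be generated. A minimal sketch that reproduces the same order (for each brick index: server1, server2, then server3, which holds the arbiter brick in the command above). The function name brick_list is made up, and the final line only prints the command instead of running it:

```shell
# Print the brick arguments in the order used above:
# for each brick index, the two data copies first, then the arbiter.
brick_list() {
    i=0
    while [ "$i" -le 9 ]; do
        for host in server1 server2 server3; do
            printf '%s:/export/brick%d/holodilnik ' "$host" "$i"
        done
        i=$((i + 1))
    done
}

# Print the full command for review instead of executing it.
echo gluster volume create holodilnik stripe 10 replica 3 arbiter 1 transport tcp $(brick_list) force
```

Generating the list avoids the easiest mistake here, which is getting a single brick out of order and silently changing which bricks form a replica set.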

We had to try a large number of combinations of parameters, kernel versions (3.10.0, 4.5.4) and GlusterFS versions (3.8, 3.10, 3.13) before Gluster started to behave stably.

We also arrived experimentally at the following parameter values:

gluster volume set holodilnik performance.write-behind on

gluster volume set holodilnik nfs.disable on

gluster volume set holodilnik cluster.lookup-optimize off

gluster volume set holodilnik performance.stat-prefetch off

gluster volume set holodilnik server.allow-insecure on

gluster volume set holodilnik storage.batch-fsync-delay-usec 0

gluster volume set holodilnik performance.client-io-threads off

gluster volume set holodilnik network.frame-timeout 60

gluster volume set holodilnik performance.quick-read on

gluster volume set holodilnik performance.flush-behind off

gluster volume set holodilnik performance.io-cache off

gluster volume set holodilnik performance.read-ahead off

gluster volume set holodilnik performance.cache-size 0

gluster volume set holodilnik performance.io-thread-count 64

gluster volume set holodilnik performance.high-prio-threads 64

gluster volume set holodilnik performance.normal-prio-threads 64

gluster volume set holodilnik network.ping-timeout 5

gluster volume set holodilnik server.event-threads 16

gluster volume set holodilnik client.event-threads 16

Additional useful settings:

sysctl vm.swappiness=0

sysctl vm.vfs_cache_pressure=120

sysctl vm.dirty_ratio=5

# a redirect cannot target a glob that matches several files, so loop over the disks

for q in /sys/block/sd[b-k]/queue ; do echo "deadline" > $q/scheduler ; echo "256" > $q/nr_requests ; done

echo "16" > /proc/sys/vm/page-cluster

blockdev --setra 4096 /dev/sd[b-k]

It is worth adding that these parameters suit our backup workload, that is, linear operations. For random reads and writes you would need to pick different values.

Now let us look at the pros and cons of the different ways to connect to Gluster and at the results of the negative test cases.

To connect to the volume, we tested all the main options:

1. Gluster Native Client (glusterfs-fuse) with the backupvolfile-server parameter.

Cons:

- additional software has to be installed on clients;

- speed.

Plus/minus:

- data stays unavailable for a long time when one of the cluster nodes goes down. This is fixed with the network.ping-timeout parameter on the server side: with the value set to 5, the share hangs for 5 seconds.

Plus:

- it works quite stably; there were no widespread problems with broken files.

2. Gluster Native Client (glusterfs-fuse) + VRRP (keepalived).

We configured a floating IP between two cluster nodes and shut one of them down.

Minus:

- installation of additional software.

Plus:

- a configurable failover timeout when a cluster node goes down.

As it turned out, neither the backupvolfile-server parameter nor keepalived is required: the client connects to the Gluster daemon (at whatever address), learns the remaining addresses and starts writing to all cluster nodes. In our case, we saw symmetric traffic from the client to server1 and server2. Even if you give it a VIP address, the client will still use the GlusterFS cluster addresses. We concluded that backupvolfile-server is useful at startup: if the client tries to connect to a GlusterFS server that is unavailable, it will then contact the host specified in backupvolfile-server.

Comment from official documentation:

The FUSE client allows the mount to happen with a GlusterFS “round robin” style connection. In /etc/fstab, the name of one node is used; however, internal mechanisms allow that node to fail, and the clients will roll over to other connected nodes in the trusted storage pool. The performance is slightly slower than the NFS method based on tests, but not drastically so. The gain is automatic HA client failover, which is typically worth the effect on performance.

3. NFS-Ganesha server with Pacemaker.

The recommended connection type if for some reason you do not want to use the native client.

Cons:

- even more additional software;

- fuss with Pacemaker;

- we ran into a bug.

4. NFSv3 and NLM + VRRP (keepalived).

Classic NFS with lock support and a floating IP between two cluster nodes.

Pluses:

- fast failover when a node fails;

- keepalived is easy to set up;

- nfs-utils is installed on all our client hosts by default.

Cons:

- the NFS client hangs in D state after a few minutes of rsync to the mount point;

- the client node goes down entirely: BUG: soft lockup - CPU stuck for Xs!

- we caught many cases where files broke with errors such as Stale file handle, Directory not empty on rm -rf, Remote I/O error, and so on.

The worst option. Moreover, it was deprecated in later versions of GlusterFS. We recommend it to no one.

As a result, we chose glusterfs-fuse without keepalived and with the backupvolfile-server parameter, since in our configuration it was the only option that proved stable, despite its relatively low speed.
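
For reference, a native-client mount with a fallback volfile server can be expressed as a single /etc/fstab line. A minimal sketch that only prints such a line; the helper name fuse_fstab_line, the choice of server2 as the fallback and the _netdev option are assumptions for illustration, and newer GlusterFS releases spell the option backup-volfile-servers:

```shell
# Print an /etc/fstab entry for the native (FUSE) client.
# Arguments: primary server, fallback server, volume name, mount point.
fuse_fstab_line() {
    printf '%s:/%s %s glusterfs defaults,_netdev,backupvolfile-server=%s 0 0\n' \
        "$1" "$3" "$4" "$2"
}

fuse_fstab_line server1 server2 holodilnik /mnt/holodilnik
```

The named servers matter only while the client fetches the volume description at mount time; after that it talks to all bricks directly.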

Besides setting up a highly available solution, in production we must be able to restore the service after a failure. So, once we had a stable working cluster, we moved on to destructive tests.

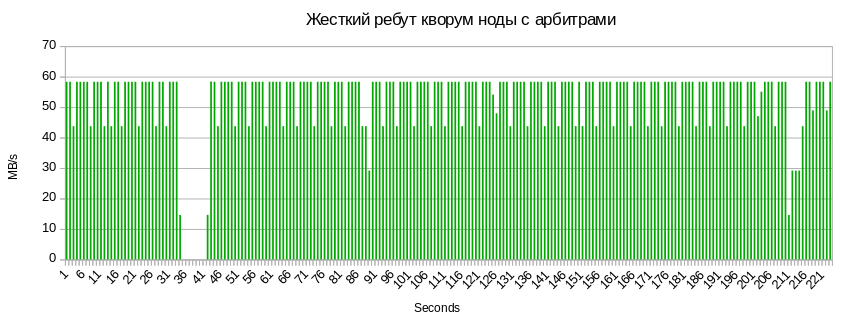

Abnormal shutdown of a node (cold reboot)

We started an rsync of a large number of files from one client, hard powered off one of the cluster nodes and got some very amusing results. After the node went down, writing first stopped for 5 seconds (the network.ping-timeout 5 parameter is responsible for this). After that, the write speed to the share doubled, since the client could no longer replicate the data and sent all the traffic to the remaining node, still limited by our 1G link.
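
The doubling follows from simple arithmetic: the client itself sends every byte to each data replica over its single link. A rough sketch of the theoretical ceiling, not a measurement; it ignores protocol overhead and the small amount of metadata sent to the arbiter, and the function name is made up:

```shell
# Upper bound on client write throughput in MB/s (integer arithmetic).
# $1 = client link speed in Mbit/s, $2 = number of full data copies written.
write_ceiling_mbs() {
    echo $(( $1 / 8 / $2 ))
}

write_ceiling_mbs 1000 2   # both data nodes up
write_ceiling_mbs 1000 1   # one data node down
```

With a 1G link this gives 62 and 125, which matches the factor of two we observed.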

When the server booted up, the automatic self-heal process started in the cluster, handled by the glustershd daemon, and the speed dropped significantly.

This is how you can see the number of files being healed after a node failure:

gluster volume heal holodilnik info

...

Brick server2:/export/brick1/holodilnik

/2018-01-20-weekly/billing.tar.gz

Status: Connected

Number of entries: 1

Brick server2:/export/brick5/holodilnik

/2018-01-27-weekly/billing.tar.gz

Status: Connected

Number of entries: 1

Brick server3:/export/brick5/holodilnik

/2018-01-27-weekly/billing.tar.gz

Status: Connected

Number of entries: 1

...


When healing finished, the counters dropped back to zero and the write speed returned to its previous values.
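
To watch the heal progress as a single number, the per-brick counters can be summed. A minimal sketch, assuming the output format shown above; the function name pending_heals is made up, and it reads the command output on standard input:

```shell
# Sum the "Number of entries" lines from "gluster volume heal <vol> info".
# Reads the command output on stdin and prints the total.
pending_heals() {
    awk -F': ' '/^Number of entries:/ { total += $2 } END { print total + 0 }'
}

# Usage on a cluster node (not run here):
#   gluster volume heal holodilnik info | pending_heals
```

A total of 0 means the counters have returned to zero on all bricks.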

Disk failure and replacement

The failure of a brick disk, as well as its replacement, did not slow down writes to the share at all. The likely reason is that the bottleneck here, again, is the link between the cluster nodes rather than disk speed. As soon as we get additional InfiniBand cards, we will run tests with a wider link.

Note that when you replace a failed disk, the new one should come back under the same device name (/dev/sdX). It often happens that a new drive is assigned the next free letter. I strongly recommend not putting it into service that way, because after the next reboot it will take the old name, the block device names will shift and the bricks will not come up. So a few extra steps are needed.

Most likely the problem is that mount points for the failed disk are still present somewhere in the system. So unmount it:

umount /dev/sdX

Also check which process may be holding this device:

lsof | grep sdX

And stop that process.

After that, a rescan is needed.

Look in dmesg -H for more detailed information about the location of the failed disk:

[Feb14 12:28] quiet_error: 29686 callbacks suppressed

[ +0.000005] Buffer I/O error on device sdf, logical block 122060815

[ +0.000042] lost page write due to I/O error on sdf

[ +0.001007] blk_update_request: I/O error, dev sdf, sector 1952988564

[ +0.000043] XFS (sdf): metadata I/O error: block 0x74683d94 ("xlog_iodone") error 5 numblks 64

[ +0.000074] XFS (sdf): xfs_do_force_shutdown(0x2) called from line 1180 of file fs/xfs/xfs_log.c. Return address = 0xffffffffa031bbbe

[ +0.000026] XFS (sdf): Log I/O Error Detected. Shutting down filesystem

[ +0.000029] XFS (sdf): Please umount the filesystem and rectify the problem(s)

[ +0.000034] XFS (sdf): xfs_log_force: error -5 returned.

[ +2.449233] XFS (sdf): xfs_log_force: error -5 returned.

[ +4.106773] sd 0:2:5:0: [sdf] Synchronizing SCSI cache

[ +25.997287] XFS (sdf): xfs_log_force: error -5 returned.

Where sd 0:2:5:0 means:

h == host adapter ID (first one being 0)

c == SCSI channel on the host adapter (here 2), which is also the PCI slot

t == ID (5), which is also the slot number of the failed disk

l == LUN (first one being 0)

echo 1 > /sys/block/sdY/device/delete

echo "2 5 0" > /sys/class/scsi_host/host0/scan

where sdY is the incorrect name of the replaced drive.
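
The mapping from the h:c:t:l address in dmesg to the rescan command can be sketched as a small helper. This is an illustration only: the function name is made up, and it prints the command rather than executing it, so it is safe to try anywhere:

```shell
# Turn an "h:c:t:l" address from dmesg into the matching rescan command.
# The command is only printed, not executed.
scsi_rescan_cmd() {
    old_ifs=$IFS
    IFS=:
    set -- $1          # split the address into host, channel, target, LUN
    IFS=$old_ifs
    printf 'echo "%s %s %s" > /sys/class/scsi_host/host%s/scan\n' "$2" "$3" "$4" "$1"
}

scsi_rescan_cmd 0:2:5:0
```

For the address above it prints the same rescan line we used: the host number selects the scsi_host directory, and channel, target and LUN go into the scan file.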

Next, to replace the brick, we need to create a new directory for mounting, create the file system and mount it:

mkdir -p /export/newvol/brick

mkfs.xfs /dev/sdf -f

mount /dev/sdf /export/newvol/

Replace the brick:

gluster volume replace-brick holodilnik server1:/export/sdf/brick server1:/export/newvol/brick commit force

Start healing:

gluster volume heal holodilnik full

gluster volume heal holodilnik info summary

Arbiter failure:

The same 5-7 seconds of share unavailability, plus a 3-second dip caused by syncing metadata to the quorum node.

Summary

The results of the destructive tests pleased us, and we partially put it into production, but our joy did not last long...

Problem 1, a known bug

When deleting a large number of files and directories (about 100,000), we ran into this “beauty”:

rm -rf /mnt/holodilnik/*

rm: cannot remove ‘backups/public’: Remote I/O error

rm: cannot remove ‘backups/mongo/5919d69b46e0fb008d23778c/mc.ru-msk’: Directory not empty

rm: cannot remove ‘billing/2018-02-02_before-update_0.10.0/mongodb/’: Stale file handle

I read about 30 such reports, the earliest dating from 2013. None of them offers a solution.

Red Hat recommends upgrading, but that did not help us.

Our workaround is simply to clean up the remains of the broken directories in the bricks on all nodes:

pdsh -w server[1-3] -- rm -rf /export/brick[0-9]/holodilnik/

But it gets worse.

Problem 2, the worst

We tried to unpack an archive with a large number of files onto the share backed by the striped volume and got a tar xvfz hanging in uninterruptible sleep, which can only be cured by rebooting the client node.

Realizing that we could not go on living like this, we turned to the last configuration we had not yet tried, one that did not inspire much confidence in us: erasure coding. Its only difficulty is understanding how the volume is assembled.

After running all the same destructive tests, we got the same pleasant results. We uploaded millions of files to it and deleted them. Try as we might, we did not manage to break the dispersed volume. We did see a higher CPU load, but so far that is not critical for us.

It now backs up a part of our infrastructure and serves as a file dump for our internal applications. We want to live with it for a while and see how it behaves under different loads. So far it is clear that the striped volume type behaves strangely and the rest works very well. Next in our plans: build a 50 TB dispersed 4 + 2 volume on six servers with a wide InfiniBand link, run performance tests and keep digging deeper into how it works.
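
For planning such a volume, the raw capacity follows from the data-to-redundancy ratio: in a 4 + 2 layout, four of every six bricks hold data. A small sketch of that arithmetic, with a made-up function name and integer terabytes; the create command in the comment is a hypothetical outline, since we have not yet chosen hosts or brick paths:

```shell
# Raw capacity needed for a dispersed volume.
# $1 = usable TB wanted, $2 = data bricks, $3 = redundancy bricks.
raw_tb_needed() {
    echo $(( $1 * ($2 + $3) / $2 ))
}

raw_tb_needed 50 4 2

# Hypothetical outline of the matching create command (not run here):
#   gluster volume create <name> disperse 6 redundancy 2 <six bricks, one per server>
```

So 50 TB of usable space in a 4 + 2 layout needs 75 TB of raw disk, and the volume survives the loss of any two of the six bricks.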