UIKit Performance Optimization

- Translation

Although many articles and WWDC session videos are devoted to UIKit performance, the topic remains unclear to many iOS developers. For this reason, we decided to collect the most interesting questions and problems on which the speed and smoothness of an application's UI primarily depend.

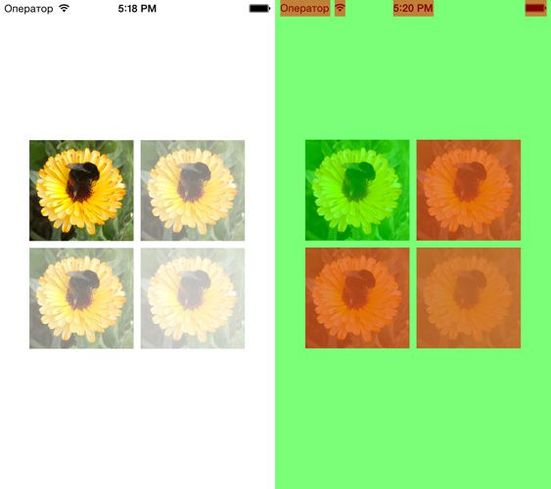

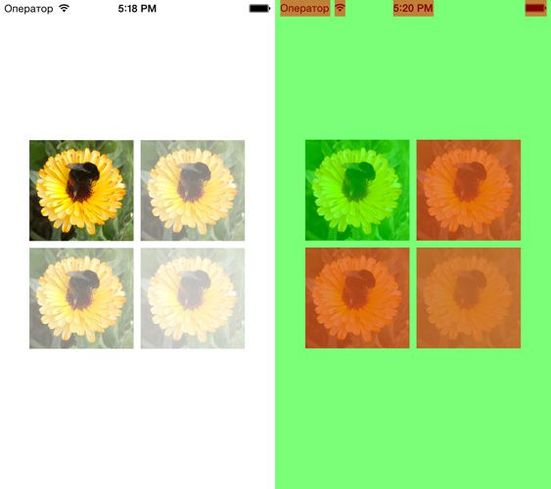

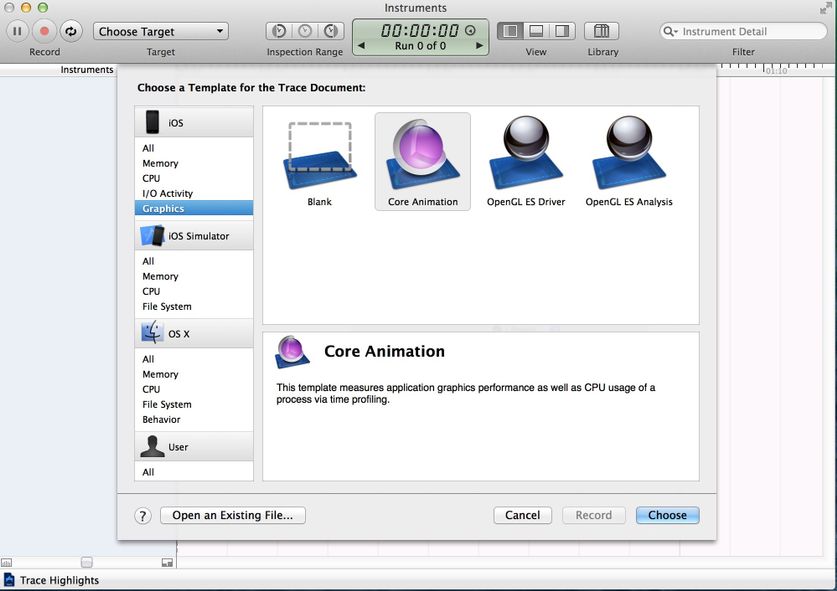

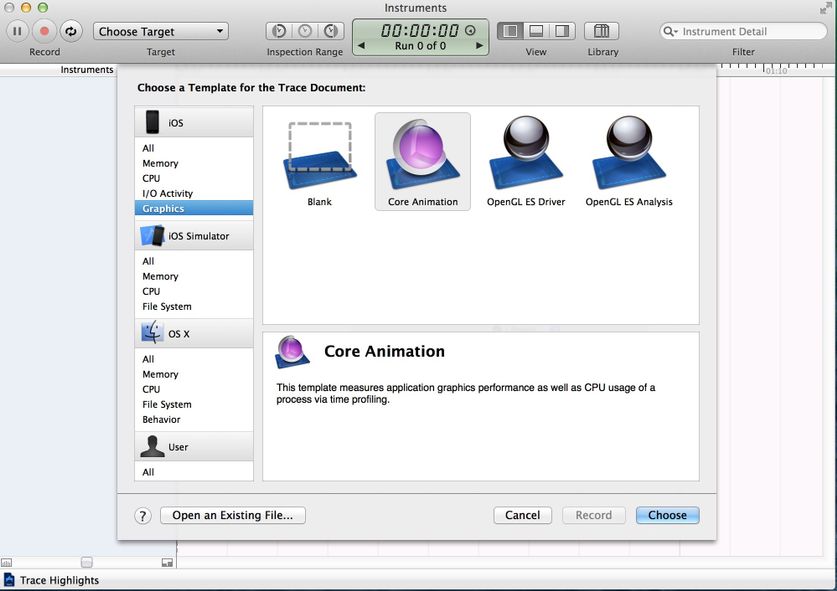

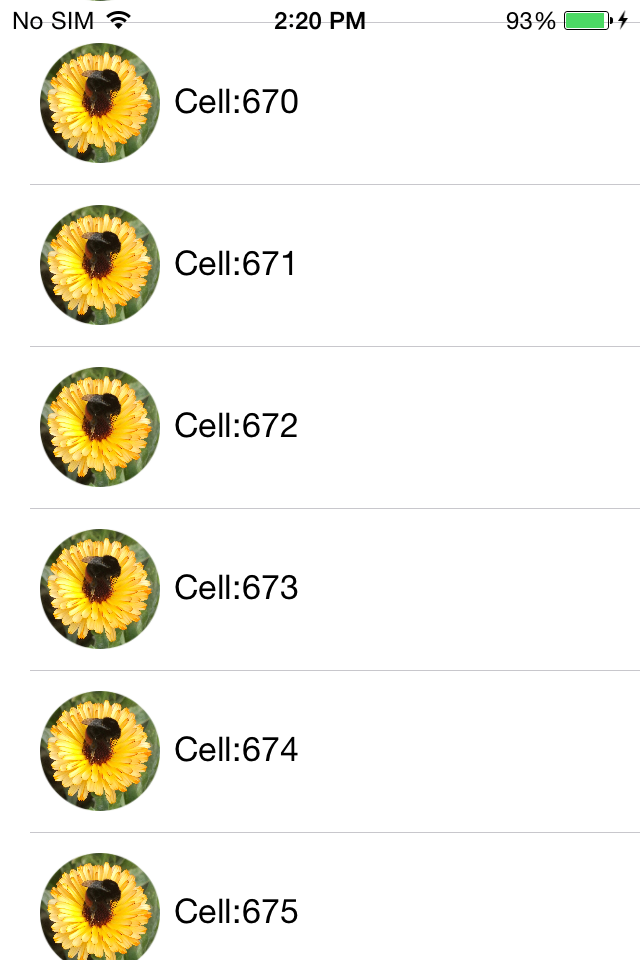

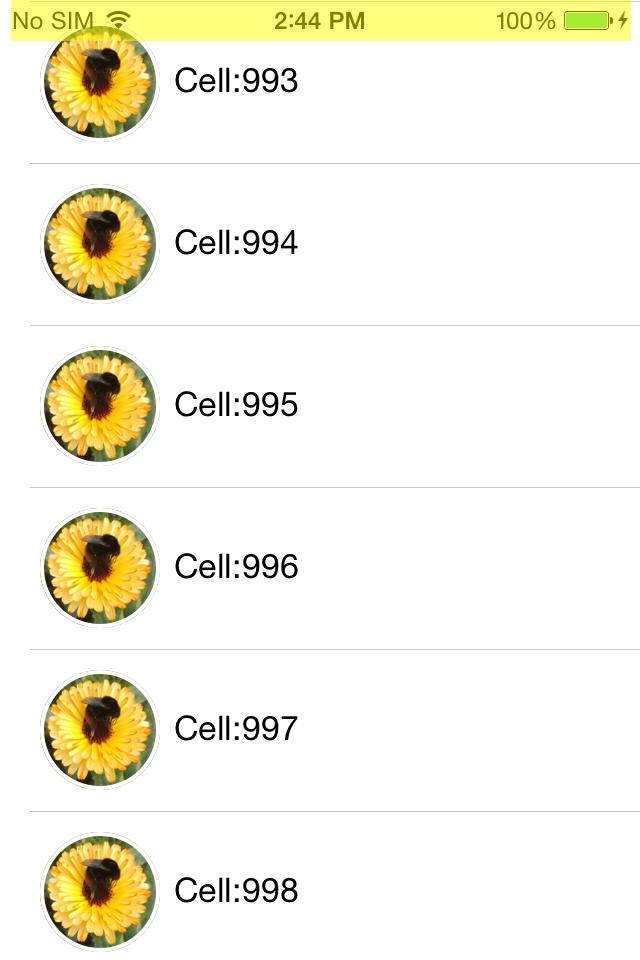

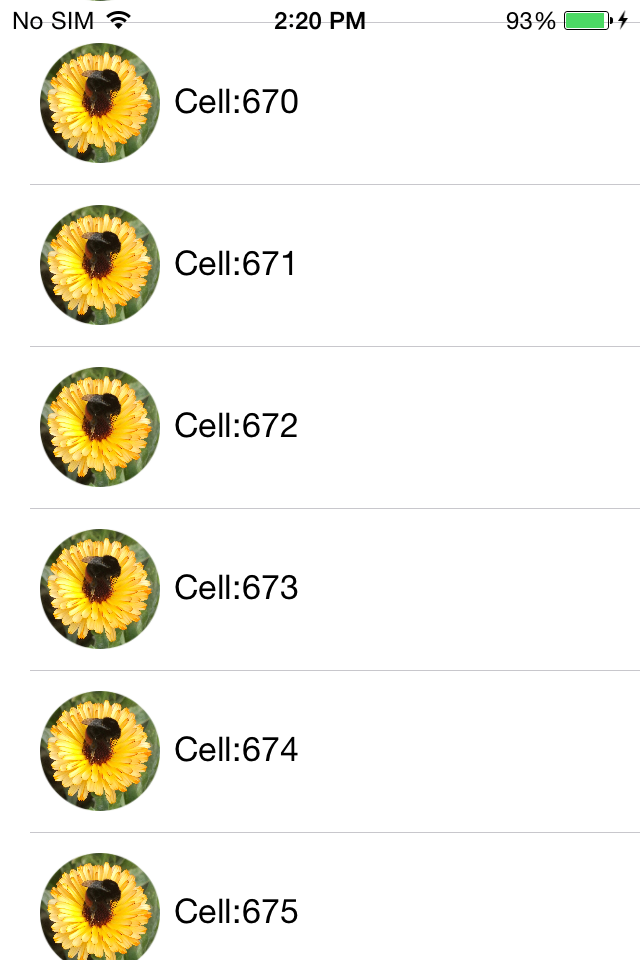

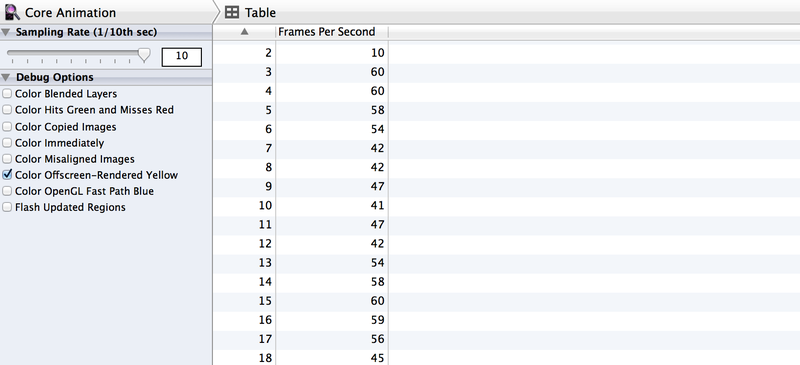

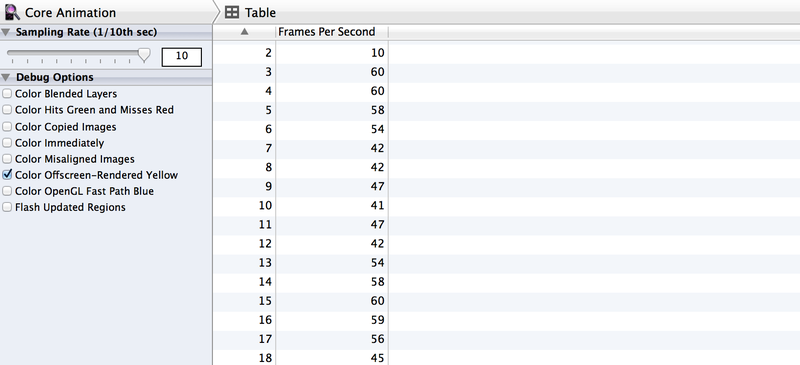

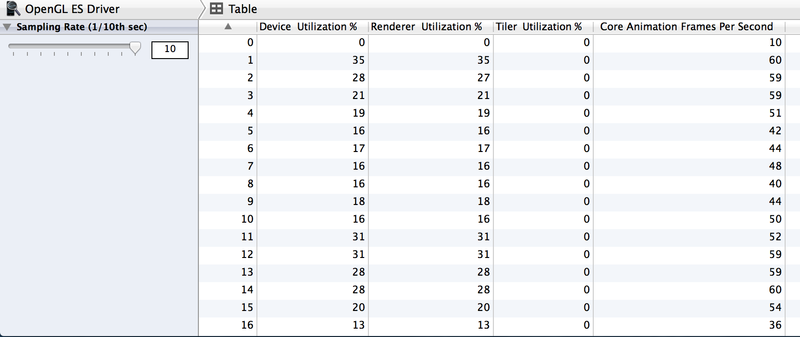

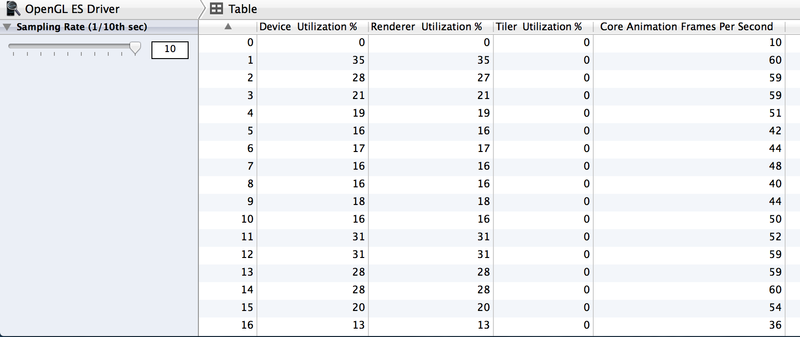

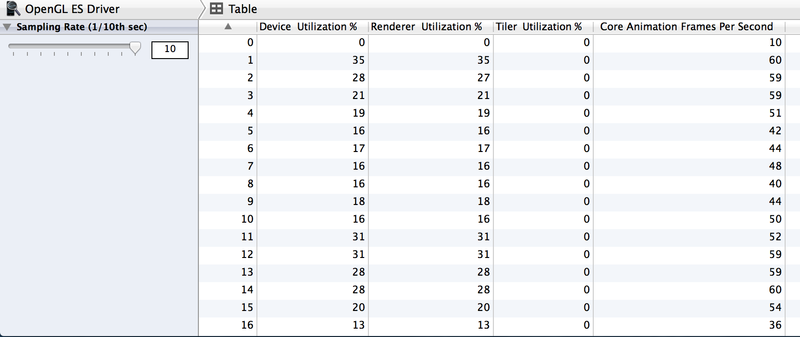

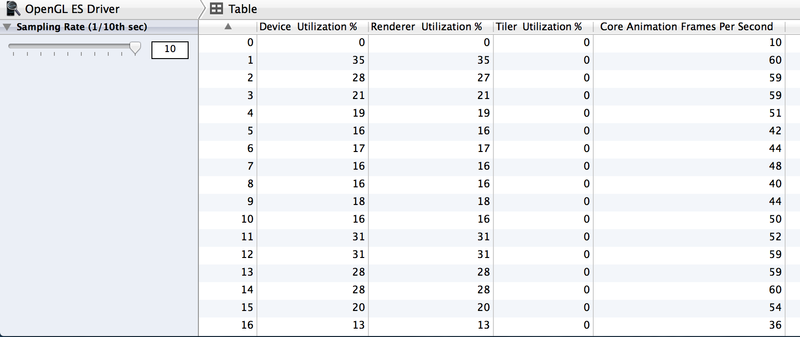

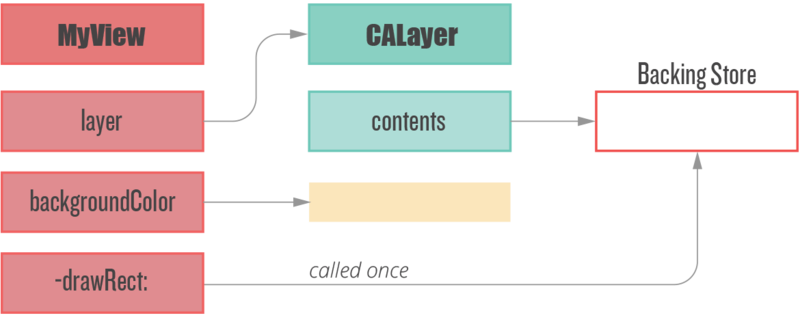

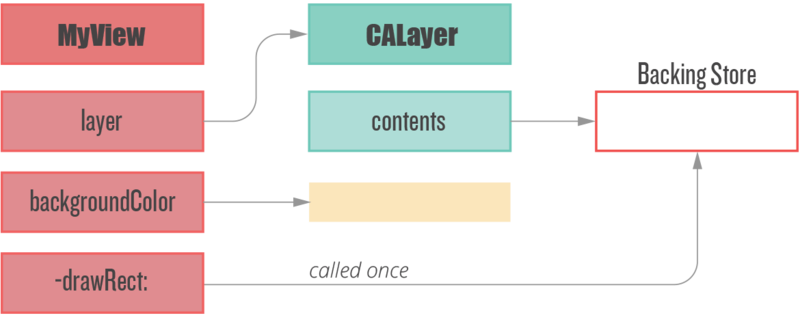

The first problem to pay attention to is the mixing of colors.

From the author of the translation

The original pictures and code are deliberately kept in this article, so that everyone can explore the topic and run their own experiments in newer versions of Xcode and Instruments.

Color blending

Blending is the step of frame rendering that determines the final color of a pixel. Each UIView (strictly speaking, each CALayer) contributes to that final color, for example through the combination of the alpha, backgroundColor, and opaque properties of all the views stacked above it.
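As a rough illustration of what blending costs per pixel, the sketch below computes standard source-over compositing for a single color channel. The function name YALBlendChannel is hypothetical, and the formula is the textbook one rather than code taken from Core Animation.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper, for illustration only: source-over compositing of a
// single color channel. `top` and `bottom` are channel values in 0...1 and
// `alpha` is the opacity of the top layer.
static double YALBlendChannel(double top, double bottom, double alpha) {
    return top * alpha + bottom * (1.0 - alpha);
}
```

With alpha equal to 1.0 the result is simply `top`, which is why a fully opaque layer lets the renderer ignore whatever lies beneath it.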

Let's start with the most used UIView properties, such as UIView.alpha, UIView.opaque, and UIView.backgroundColor.

Opacity vs Transparency

UIView.opaque is a hint to the rendering system that lets it treat the view as a fully opaque surface, which improves rendering performance. Opaque means: “don’t draw anything underneath this surface.” With opaque set, the renderer can skip drawing the layers underneath, so no blending takes place and the color of the topmost view is used for the pixel.
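A minimal sketch of putting this hint to use on a label follows. The helper name YALMakeOpaqueLabel is hypothetical, and the sketch assumes the background color passed in has an alpha of 1.0; with a translucent background the label would still be blended.

```objc
#import <UIKit/UIKit.h>

// Hypothetical helper: configures a label so the renderer can treat it as opaque.
// Assumption: `background` is a fully opaque color (alpha == 1.0).
static UILabel *YALMakeOpaqueLabel(CGRect frame, UIColor *background) {
    UILabel *label = [[UILabel alloc] initWithFrame:frame];
    // A solid background plus opaque = YES tells the renderer that nothing
    // underneath the label has to be blended with it.
    label.backgroundColor = background;
    label.opaque = YES;
    return label;
}
```

Whether the label really stops being blended is best confirmed with the “Color Blended Layers” option rather than assumed.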

Alpha

If alpha is less than 1, opaque is ignored, even if it is set to YES.

    - (void)viewDidLoad {
        [super viewDidLoad];
        UIView *view = [[UIView alloc] initWithFrame:CGRectMake(35.f, 35.f, 200.f, 200.f)];
        view.backgroundColor = [UIColor purpleColor];
        // alpha < 1 makes the view translucent, so it is blended even though opaque is YES.
        view.alpha = 0.5f;
        [self.view addSubview:view];
    }

Although opaque defaults to YES, the result is still blended, because setting alpha below 1 made the view translucent.

How to check?

Note: to get accurate information about real performance, test the application on a real device, not in the simulator. An iOS device's CPU is slower than your Mac's, so the results differ considerably.

The Debug menu of the iOS Simulator contains the item “Color Blended Layers”. It highlights blended layers, that is, places where several translucent layers overlap. Layers that are drawn on top of one another with blending are highlighted in red, while layers drawn without blending are highlighted in green.

To use the Core Animation instrument in Instruments, you must connect a real device. It offers the same “Color Blended Layers” option.

Alpha channel image

The same question arises for UIImageView: how does the image's alpha channel affect blending? We will also look at the effect of the view's own alpha property. Let's use a category on UIImage to produce a copy of an image with a custom alpha:

    - (UIImage *)imageByApplyingAlpha:(CGFloat)alpha {
        // NO = non-opaque context, so the result carries an alpha channel; 0.0 = use the screen scale.
        UIGraphicsBeginImageContextWithOptions(self.size, NO, 0.0f);
        CGContextRef ctx = UIGraphicsGetCurrentContext();
        CGRect area = CGRectMake(0, 0, self.size.width, self.size.height);
        // Flip the coordinate system so CGContextDrawImage draws the image upright.
        CGContextScaleCTM(ctx, 1, -1);
        CGContextTranslateCTM(ctx, 0, -area.size.height);
        CGContextSetBlendMode(ctx, kCGBlendModeMultiply);
        CGContextSetAlpha(ctx, alpha);
        CGContextDrawImage(ctx, area, self.CGImage);
        UIImage *newImage = UIGraphicsGetImageFromCurrentImageContext();
        UIGraphicsEndImageContext();
        return newImage;
    }

Consider four cases:

- The UIImageView has the default alpha value (1.0) and the image has no alpha channel.

- The UIImageView has the default alpha value (1.0) and the image's alpha has been changed to 0.5.

- The UIImageView has a modified alpha value, but the image has no alpha channel.

- The UIImageView has a modified alpha value and the image's alpha has been changed to 0.5.

    - (void)viewDidLoad {
        [super viewDidLoad];
        UIImage *image = [UIImage imageNamed:@"flower.jpg"];
        UIImage *imageWithAlpha = [image imageByApplyingAlpha:0.5f];

        // 1st case
        [self.imageViewWithImageHasDefaulAlphaChannel setImage:image];

        // 2nd case
        [self.imageViewWihImageHaveCustomAlphaChannel setImage:imageWithAlpha];

        // 3rd case
        self.imageViewHasChangedAlphaWithImageHasDefaultAlpha.alpha = 0.5f;
        [self.imageViewHasChangedAlphaWithImageHasDefaultAlpha setImage:image];

        // 4th case
        self.imageViewHasChangedAlphaWithImageHasCustomAlpha.alpha = 0.5f;
        [self.imageViewHasChangedAlphaWithImageHasCustomAlpha setImage:imageWithAlpha];
    }

The simulator highlights the blended layers. Even when the UIImageView's alpha property has its default value of 1.0, an image with a modified alpha channel still produces a blended layer.
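Since the image itself can cause blending, it can help to check whether a given image carries an alpha channel at all. The sketch below does this with CGImageGetAlphaInfo. The helper name YALImageHasAlphaChannel is hypothetical, and the check only says that the bitmap format includes alpha, not that any pixel is actually translucent.

```objc
#import <UIKit/UIKit.h>

// Hypothetical helper: reports whether the image's bitmap format includes an
// alpha channel. It does not inspect individual pixels.
static BOOL YALImageHasAlphaChannel(UIImage *image) {
    CGImageRef cgImage = image.CGImage;
    if (cgImage == NULL) {
        return NO; // e.g. a CIImage-backed UIImage; treated as "unknown / no alpha" here
    }
    switch (CGImageGetAlphaInfo(cgImage)) {
        case kCGImageAlphaNone:
        case kCGImageAlphaNoneSkipFirst:
        case kCGImageAlphaNoneSkipLast:
            return NO;
        default:
            return YES;
    }
}
```

For images that are known to be fully opaque, exporting them without an alpha channel is one way to keep them out of the red areas.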

Apple's official documentation encourages developers to pay attention to blending:

“To significantly improve the performance of your application, reduce the amount of red when mixing colors. Using color blending often slows down scrolling.”

Drawing a translucent layer requires extra computation: the system must blend the layer with what lies beneath it to determine the resulting color, and then draw it.

Offscreen rendering

Offscreen rendering is rendering that cannot be done with the GPU's hardware acceleration; the CPU has to be used instead.

At a low level, it looks like this: while rendering a layer that needs offscreen rendering, the GPU stops the rendering process and hands control to the CPU. The CPU then performs all the necessary operations (for example, runs whatever you put into drawRect:) and returns control to the GPU together with the finished layer. The GPU renders it and the drawing process continues.

In addition, offscreen rendering requires extra memory for the so-called backing store. Layers drawn with hardware acceleration do not need it.

[Figures: on-screen rendering and off-screen rendering]

Which effects and settings lead to offscreen rendering? Let's look at them:

- a custom drawRect: (any implementation, even one that just fills the background with a color)

- a corner radius on a CALayer (combined with masksToBounds)

- a shadow on a CALayer

- a mask on a CALayer

- any custom drawing using CGContext

Offscreen rendering is easy to detect with the Core Animation instrument in Instruments if you enable the Color Offscreen-Rendered Yellow option. Places where offscreen rendering occurs are covered with a yellow overlay.

Let's consider several cases and measure performance. We will try to find a solution that improves performance while still realizing your design.

Testing Environment:

- Device: iPhone 4 with iOS 7.1.1 (11D201)

- Xcode: 5.1.1 (5B1008)

- MacBook Pro 15 Retina (ME294) with OS X 10.9.3 (13D65)

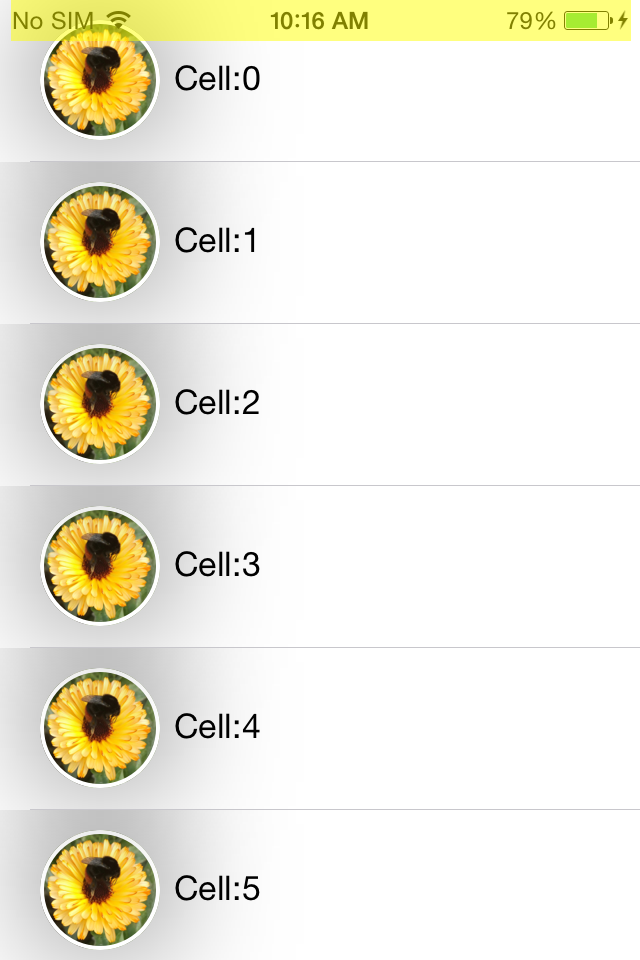

Rounding corners

Create a simple table view with a custom cell, and add a UIImageView and a UILabel to the cell. Remember the good old days when buttons were round? To achieve this fantastic effect in the table view, we need to set CALayer.cornerRadius and set CALayer.masksToBounds to YES.

    - (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath {
        NSString *identifier = NSStringFromClass(CRTTableViewCell.class);
        CRTTableViewCell *cell = [self.tableView dequeueReusableCellWithIdentifier:identifier];
        // cornerRadius together with masksToBounds triggers offscreen rendering for every cell.
        cell.imageView.layer.cornerRadius = 30;
        cell.imageView.layer.masksToBounds = YES;
        cell.imageView.image = [UIImage imageNamed:@"flower.jpg"];
        cell.textLabel.text = [NSString stringWithFormat:@"Cell:%ld",(long)indexPath.row];
        return cell;
    }

Although we achieved the desired effect, even without Instruments it is obvious that performance is very far from the recommended 60 FPS. But we will not gaze into a crystal ball for unverified numbers. Instead, we will simply measure performance with Instruments.

First of all, enable the Color Offscreen-Rendered Yellow option. The UIImageView in every cell is covered with a yellow overlay.

It is also worth checking the Core Animation and OpenGL ES Driver instruments.

What does the OpenGL ES Driver instrument give us? To understand it, let's look inside the GPU. The GPU consists of two components, the Renderer and the Tiler. The Renderer's job is to draw data, but the order and composition are determined by the Tiler. The Tiler's job is to divide the frame into tiles and determine which pixels are visible. Only then are the visible pixels passed to the Renderer (that is, rendering is deferred).

If Renderer Utilization is above roughly 50%, the animation may be fill-rate limited. If Tiler Utilization is above 50%, the animation may be geometry limited, which most likely means there are too many layers on screen.

It is now clear that we must look for a different approach that achieves the desired effect and improves performance at the same time. Let's use a category on UIImage to round the corners without using the cornerRadius property:

    @implementation UIImage (YALExtension)

    // Draws the image into a bitmap context clipped to a rounded rect,
    // so no cornerRadius/masksToBounds is needed at display time.
    - (UIImage *)yal_imageWithRoundedCornersAndSize:(CGSize)sizeToFit {
        CGRect rect = (CGRect){0.f, 0.f, sizeToFit};
        UIGraphicsBeginImageContextWithOptions(sizeToFit, NO, UIScreen.mainScreen.scale);
        CGContextAddPath(UIGraphicsGetCurrentContext(),
                         [UIBezierPath bezierPathWithRoundedRect:rect cornerRadius:sizeToFit.width].CGPath);
        CGContextClip(UIGraphicsGetCurrentContext());
        [self drawInRect:rect];
        UIImage *output = UIGraphicsGetImageFromCurrentImageContext();
        UIGraphicsEndImageContext();
        return output;
    }

    @end

Now let's change the data source's implementation of tableView:cellForRowAtIndexPath:.

    - (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath {
        NSString *identifier = NSStringFromClass(CRTTableViewCell.class);
        CRTTableViewCell *cell = [self.tableView dequeueReusableCellWithIdentifier:identifier];
        cell.myTextLabel.text = [NSString stringWithFormat:@"Cell:%ld",(long)indexPath.row];
        UIImage *image = [UIImage imageNamed:@"flower.jpg"];
        // The corners are baked into the bitmap, so the layer needs no mask.
        cell.imageViewForPhoto.image = [image yal_imageWithRoundedCornersAndSize:cell.imageViewForPhoto.bounds.size];
        return cell;
    }

The drawing code runs only once, when the object is first displayed on screen. The result is cached by the CALayer and afterwards displayed without any additional drawing. Although this is slower than Core Animation's own methods, it turns per-frame offscreen rendering into a one-time cost.
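In the data source method above, the rounding still runs every time a cell is configured. One way to make it a one-time cost per image is to keep the rounded result in a cache. The sketch below is an assumption-laden variant, not the article's code: the function name YALCachedRoundedImage and the cache key format are hypothetical, it uses UIGraphicsImageRenderer (iOS 10 and later) instead of the older context functions, and it crops to a circle by using half of the shorter side as the radius.

```objc
#import <UIKit/UIKit.h>

// Hypothetical helper: rounds `image` to `size` once and returns the cached
// result on later calls. `key` identifies the source image (e.g. its name).
static UIImage *YALCachedRoundedImage(UIImage *image, NSString *key, CGSize size) {
    static NSCache<NSString *, UIImage *> *cache;
    static dispatch_once_t onceToken;
    dispatch_once(&onceToken, ^{ cache = [NSCache new]; });

    // A renderer cannot produce an image for an empty size, so fall back to the input.
    if (size.width <= 0 || size.height <= 0) {
        return image;
    }

    NSString *cacheKey = [NSString stringWithFormat:@"%@-%@", key, NSStringFromCGSize(size)];
    UIImage *cached = [cache objectForKey:cacheKey];
    if (cached != nil) {
        return cached;
    }

    CGRect rect = (CGRect){CGPointZero, size};
    CGFloat radius = MIN(size.width, size.height) / 2.0; // assumption: circular crop
    UIGraphicsImageRenderer *renderer = [[UIGraphicsImageRenderer alloc] initWithSize:size];
    UIImage *rounded = [renderer imageWithActions:^(UIGraphicsImageRendererContext *context) {
        // Clip to the rounded rect, then draw the source image inside it.
        [[UIBezierPath bezierPathWithRoundedRect:rect cornerRadius:radius] addClip];
        [image drawInRect:rect];
    }];

    [cache setObject:rounded forKey:cacheKey];
    return rounded;
}
```

NSCache may evict entries under memory pressure, in which case the image is simply rounded again on the next request.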

Before we get back to measuring performance, let's check the off-screen rendering again.

57-60 FPS! We have roughly doubled performance and reduced both Tiler Utilization and Renderer Utilization.

Avoid abuse of drawRect method overrides

Keep in mind that overriding -drawRect: leads to offscreen rendering, even when all you do is fill the background with a color. This matters especially if you are tempted to implement drawRect: for something as simple as setting a background color, instead of using UIView's backgroundColor property.

This approach is irrational for two reasons.

First, system UIViews may implement their own drawing methods to display their content, and Apple obviously works on optimizing them. In addition, we must remember the backing store: a new backing image for the view, with pixel dimensions equal to the view's size multiplied by contentsScale, which is cached until the view next needs to update it.

Second, if we avoid abusing drawRect:, we do not need to allocate extra memory for the backing store and zero it out every time a new drawing cycle runs.
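To get a feel for the memory involved, the backing store size can be estimated from the view size and contentsScale. The sketch below is a back-of-the-envelope calculation under a stated assumption of 4 bytes per pixel (8-bit RGBA); the function name YALBackingStoreBytes is hypothetical, and real backing stores may use a different pixel format or row padding.

```objc
#import <CoreGraphics/CoreGraphics.h>
#import <math.h>

// Hypothetical helper: rough estimate of the backing store size in bytes.
// Assumption: 4 bytes per pixel (RGBA, 8 bits per channel), no row padding.
static size_t YALBackingStoreBytes(CGSize viewSize, CGFloat contentsScale) {
    size_t widthInPixels = (size_t)ceil(viewSize.width * contentsScale);
    size_t heightInPixels = (size_t)ceil(viewSize.height * contentsScale);
    return widthInPixels * heightInPixels * 4;
}
```

For example, a 320 x 480 point view at a contentsScale of 2 comes to 640 x 960 pixels, or about 2.4 MB under this assumption, for a single view that overrides drawRect:.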

CALayer.shouldRasterize

Another way to reduce the cost of offscreen rendering is the CALayer.shouldRasterize property. The layer is rendered once and the result is cached until the layer needs to be drawn again.

However, despite the potential gain, if the layer has to be redrawn too often, the extra caching cost makes the property useless, because the system rasterizes the layer again after every redraw.

In the end, whether to use CALayer.shouldRasterize depends on the specific use case and on what Instruments shows.
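A minimal sketch of enabling rasterization follows. The helper name YALEnableRasterization is hypothetical. Setting rasterizationScale is included because its default of 1.0 produces a blurry bitmap on Retina screens.

```objc
#import <UIKit/UIKit.h>

// Hypothetical helper: asks Core Animation to cache the layer as a bitmap.
static void YALEnableRasterization(CALayer *layer) {
    layer.shouldRasterize = YES;
    // Match the screen scale; the default rasterizationScale is 1.0.
    layer.rasterizationScale = [UIScreen mainScreen].scale;
}
```

This is best suited to layers whose content stays the same between frames; for content that changes constantly, the cache is rebuilt each time and the setting only adds work.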

Shadows & shadowPath

Shadows can make a user interface look nicer. On iOS, adding a shadow is very easy:

    cell.imageView.layer.shadowRadius = 30;
    cell.imageView.layer.shadowOpacity = 0.5f;

With “Color Offscreen-Rendered Yellow” enabled, we can see that the shadow adds offscreen rendering: Core Animation has to compute the shape of the shadow in real time, which lowers the FPS.

What does Apple say?

“Allowing Core Animation to determine the shape of the shadow can affect the performance of your application. Instead, define the shape of the shadow using the shadowPath property of CALayer. When using shadowPath, Core Animation uses the specified shape to render and cache the shadow effect. For layers whose shape does not change or rarely changes, this significantly improves performance by reducing the amount of rendering performed by Core Animation.”

So we should give Core Animation a shadow path (a CGPath) that it can cache, which is quite easy to do:

    UIBezierPath *shadowPath = [UIBezierPath bezierPathWithRect:cell.imageView.bounds];
    [cell.imageView.layer setShadowPath:shadowPath.CGPath];

With one line of code, we avoided off-screen rendering and greatly improved performance.
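One caveat is that a shadow path does not follow the view when its bounds change. A possible way to keep the two in sync is to rebuild the path during layout, as in the sketch below. The class name YALShadowView is hypothetical, and the shadow values simply mirror the ones used earlier in this section.

```objc
#import <UIKit/UIKit.h>

// Hypothetical view subclass: keeps layer.shadowPath in sync with its bounds.
@interface YALShadowView : UIView
@end

@implementation YALShadowView

- (instancetype)initWithFrame:(CGRect)frame {
    self = [super initWithFrame:frame];
    if (self) {
        self.layer.shadowRadius = 30;
        self.layer.shadowOpacity = 0.5f;
    }
    return self;
}

- (void)layoutSubviews {
    [super layoutSubviews];
    // Rebuild the path on every layout pass so it always matches the current bounds.
    self.layer.shadowPath = [UIBezierPath bezierPathWithRect:self.bounds].CGPath;
}

@end
```

For a view with rounded corners, bezierPathWithRoundedRect:cornerRadius: would be the matching path to supply.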

So, as you can see, many UI performance issues can be solved quite easily. One small remark - do not forget to measure performance before and after optimization :)
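Instruments remains the reference tool for these measurements, but a rough in-app frame counter can be handy for quick before-and-after comparisons. The sketch below uses CADisplayLink; the class name YALFPSCounter and its onUpdate block are hypothetical and not part of the original article.

```objc
#import <UIKit/UIKit.h>
#import <QuartzCore/QuartzCore.h>

// Hypothetical helper: counts display-link callbacks and reports the average
// frames per second roughly once per second.
@interface YALFPSCounter : NSObject
@property (nonatomic, copy) void (^onUpdate)(double fps);
- (void)start;
- (void)stop;
@end

@implementation YALFPSCounter {
    CADisplayLink *_link;
    CFTimeInterval _windowStart;
    NSUInteger _frames;
}

- (void)start {
    if (_link != nil) { return; }
    _frames = 0;
    _windowStart = 0;
    _link = [CADisplayLink displayLinkWithTarget:self selector:@selector(tick:)];
    // Common modes keep the link firing while a scroll view is tracking touches.
    [_link addToRunLoop:[NSRunLoop mainRunLoop] forMode:NSRunLoopCommonModes];
}

- (void)stop {
    // The display link retains its target, so it must be invalidated explicitly.
    [_link invalidate];
    _link = nil;
}

- (void)tick:(CADisplayLink *)link {
    if (_windowStart == 0) {
        _windowStart = link.timestamp; // first callback only opens the window
        return;
    }
    _frames++;
    CFTimeInterval elapsed = link.timestamp - _windowStart;
    if (elapsed >= 1.0) {
        if (self.onUpdate) { self.onUpdate(_frames / elapsed); }
        _frames = 0;
        _windowStart = link.timestamp;
    }
}

@end
```

A typical use would be to create the counter in a debug build, log the value from onUpdate while scrolling the table view, and call stop when the screen goes away.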

Useful links and resources

- WWDC 2011 Video: Understanding UIKit Rendering

- WWDC 2012 Video: iOS App Performance: Graphics and Animations

- Book: iOS Core Animation: Advanced Techniques by Nick Lockwood

- iOS image caching. Libraries benchmark

- WWDC 2014 Video: Advanced Graphics and Animations for iOS Apps