How to make a 64k intro

Intro to intros

The demoscene is about creating cool pieces that run in real time (as if “spinning inside your computer”). These are called demos. Some of them are really small, say 64k or less, and these are called intros. The name comes from the crack intros that advertised or presented cracked programs. So an intro is just a small demo.

I have noticed that many people are interested in the demoscene but have no idea how demos are actually made. This article is a brain dump and post-mortem of our fresh intro Guberniya. I hope it will be interesting to both newcomers and seasoned veterans. It covers almost all the techniques used in the demo and should give a good idea of how to make one. I will refer to people by their nicknames in this article, because that is the custom on the scene.

Windows binary: guberniya_final.zip (61.8 kB) (somewhat broken on AMD cards)

Guberniya in a Nutshell

This is a 64k intro released at the Revision 2017 demoparty. Some numbers:

- C++ and OpenGL, dear imgui for the GUI

- 62976-byte binary for Windows, packed with kkrunchy

- mostly raymarching (a variant of ray casting; translator's note)

- group of 6 people

- one artist :)

- done in four months

- ~8300 lines of C++, not counting library code and whitespace

- 4840 lines of GLSL shaders

- ~350 git commits

Development

Demos are usually released at demoparties, where the audience watches them and votes for a winner. Releasing at a demoparty is good motivation, because you get a hard deadline and a passionate audience. In our case it was Revision 2017, a big demoparty traditionally held over the Easter weekend. You can look at some photos to get an idea of the event.

The number of commits per week. The biggest spike is us frantically hacking right before the deadline. The last two bars are changes for the final version, made after the demoparty.

We started working on the demo in early January and released it at Easter in April, during the event. You can watch a recording of the whole competition if you wish :)

Our team consisted of six people: cce (that's me), varko, noby, branch, msqrt and goatman.

Design and influence

The song was ready fairly early, so I tried to sketch something based on its motifs. It was clear that we needed something big and cinematic with memorable parts.

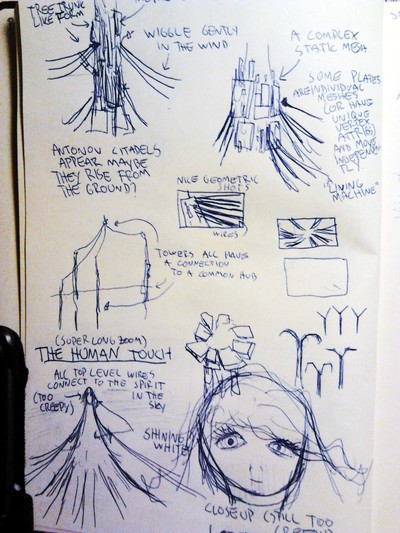

The first visual ideas revolved around wires and their use. I really like the work of Viktor Antonov, so the first drafts borrow heavily from Half-Life 2:

The first drafts of the citadel towers and ambitious human characters. Full size.

Viktor Antonov's concept art from Half-Life 2: Raising the Bar.

The similarities are quite obvious. In the landscape scenes, I also tried to convey the mood of Eldion Passageway by Anthony Shimes.

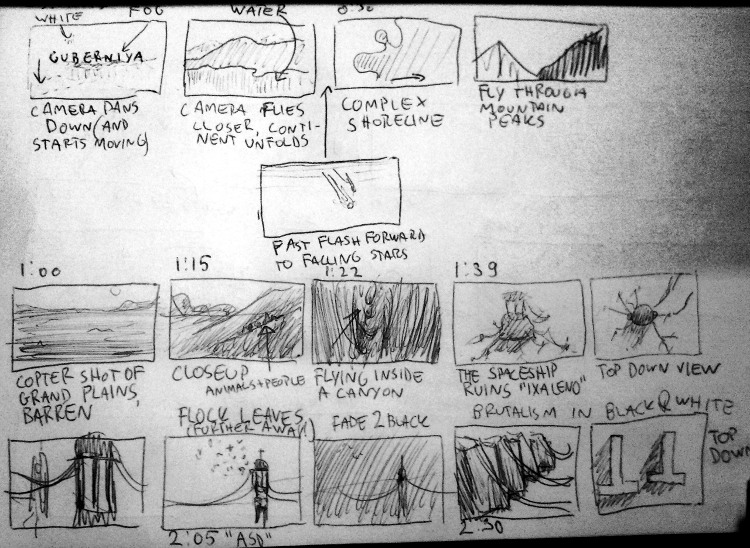

The landscape was inspired by this glorious video about Iceland, and also by Koyaanisqatsi, I guess. I had big plans for the story, as depicted in the storyboard:

This storyboard differs from the final version of the intro. For example, the brutalist architecture was cut. Full storyboard.

If I were to do it again, I would settle for just a couple of photos that set the mood. That means less work and more room for imagination. But at least drawing helped me organize my thoughts.

Ship

The spaceship was designed by noby. It is a combination of numerous Mandelbrot fractals intersected with geometric primitives. The ship design was left a little unfinished, but we felt it was better not to touch it in the final version.

The spaceship is a raymarched distance field, like everything else.

We had another ship shader that did not make it into the intro. Looking at the design now, it is very cool, and it is a shame there was no place for it.

A spaceship design by branch. Full size.

Implementation

We started with the code base of our old intro Pheromone (YouTube). It had basic windowing functionality and OpenGL function loading, along with a file system utility that packed files from the data directory into the executable using bin2h.

Workflow

We used Visual Studio 2013 to compile the project because it did not compile in VS2015. Our standard library replacement did not get along with the updated compiler and produced amusing errors like the following:

Visual Studio 2015 did not get along with our code base.

For some reason, we still stuck with VS2015 as the editor and simply compiled the project with the v120 platform toolset.

Most of my work on the demo looked like this: shaders open in one window, and the end result with console output in the others. Full size.

We made a simple global keystroke check that reloads all shaders when it detects the CTRL+S combination:

    // Listen to CTRL+S.
    if (GetAsyncKeyState(VK_CONTROL) && GetAsyncKeyState('S'))
    {
        // Wait for a while to let the file system finish the file write.
        if (system_get_millis() - last_load > 200) {
            Sleep(100);
            reloadShaders();
        }
        last_load = system_get_millis();
    }

It worked really well and made live-editing shaders much more fun. No need to hook file system events or anything like that.
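The timing logic in the snippet above can be separated from the Win32 calls, which makes it easier to reason about. The following is a minimal sketch, not the intro's actual code: `ReloadDebouncer` and its members are hypothetical names, and the caller is assumed to supply the key state and a millisecond clock.

```cpp
#include <cstdint>

// Hypothetical helper: decides whether a reload should fire, given whether
// the hotkey is currently held and the current time in milliseconds.
struct ReloadDebouncer {
    std::uint64_t last_trigger_ms = 0;   // last time the hotkey was seen down
    std::uint64_t min_interval_ms = 200; // same threshold as the snippet above

    bool shouldReload(bool hotkey_down, std::uint64_t now_ms) {
        if (!hotkey_down) return false;
        const bool fire = (now_ms - last_trigger_ms) > min_interval_ms;
        // Refreshed on every poll while the keys are held, so a long press
        // does not retrigger as long as polls arrive within the interval.
        last_trigger_ms = now_ms;
        return fire;
    }
};
```

In a polling loop this could be used as `if (debouncer.shouldReload(keys_down, system_get_millis())) reloadShaders();`, with `keys_down` computed from the two `GetAsyncKeyState` calls.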

GNU Rocket

For animation and direction, we used Ground Control, a fork of GNU Rocket. Rocket is a program for editing animation curves; it connects to the demo over a TCP socket. Keyframes are sent when the demo requests them. This is very convenient, because you can edit and recompile the demo without closing the editor and without risking losing the sync position. For the final version, the keyframes are exported to a binary format. However, there are some annoying limitations.

Tool

Changing the point of view with the mouse and keyboard is very convenient to choose camera angles. Even a simple GUI helps a lot when the little things matter.

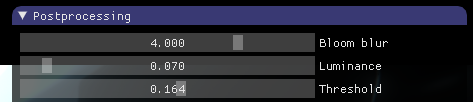

Unlike some , we did not have a tool for demos, so we had to create it as we worked. The magnificent dear imgui library makes it easy to add features as needed.

For example, if you need a few sliders to control color parameters, all you have to do is add these lines to the rendering loop (not to separate GUI code):

    imgui::Begin("Postprocessing");
    imgui::SliderFloat("Bloom blur", &postproc_bloom_blur_steps, 1, 5);
    imgui::SliderFloat("Luminance", &postproc_luminance, 0.0, 1.0, "%.3f", 1.0);
    imgui::SliderFloat("Threshold", &postproc_threshold, 0.0, 1.0, "%.3f", 3.0);
    imgui::End();

End result:

These sliders were easy to add.

The camera position can be saved to a .cpp file by pressing F6, so that it is in the demo after the next compile. This removes the need for a separate data format and the matching serialization code, but such a solution can also get rather messy.

Making small binaries

The key to a small binary is to throw away the standard library and compress the compiled binary. We used the Tiny C Runtime Library by Mike_V as the basis for our own library implementation.

Compressing the binary is handled by kkrunchy, a tool made for exactly this purpose. It works on standalone executables, so you can write your demo in C++, Rust, Object Pascal or anything else. To be honest, size was not a big issue for us. We did not store much binary data such as images, so there was room to maneuver. I did not even have to strip the comments from the shaders!

Floating point

The floating point code caused some headaches by generating calls to functions of the nonexistent standard library. Most of them were eliminated by disabling SSE vectorization with the /arch:IA32 compiler switch and by removing calls to ftol with the /QIfist flag, which generates code that does not save and restore the FPU control word to force truncation. This is not a problem, because you can set the floating point rounding mode to truncate at the start of your program with the following code from Peter Schoffhauser:

    // set rounding mode to truncate
    // from http://www.musicdsp.org/showone.php?id=246
    static short control_word;
    static short control_word2;

    inline void SetFloatingPointRoundingToTruncate()
    {
        __asm
        {
            fstcw   control_word                // store fpu control word
            mov     dx, word ptr [control_word]
            or      dx, 0x0C00                  // rounding: truncate
            mov     control_word2, dx
            fldcw   control_word2               // load modified control word
        }
    }

You can read more about these things at benshoof.org.

POW

The pow call still generates a call to the internal function __CIpow, which does not exist. I could not work out its signature myself, but I found an implementation in Wine's ntdll.dll, which made it clear that it expects two double-precision numbers in registers. After that, it was possible to write a wrapper that calls our own pow implementation:

    double __cdecl _CIpow(void) {
        // Load the values from registers to local variables.
        double b, p;
        __asm {
            fstp qword ptr p
            fstp qword ptr b
        }
        // Implementation: http://www.mindspring.com/~pfilandr/C/fs_math/fs_math.c
        return fs_pow(b, p);
    }

If you know a better way to deal with this, please let us know.

WinAPI

If you cannot rely on SDL or something similar, you have to use plain WinAPI to do what is needed to get a window on the screen. If you run into problems, here are some things that might help:

Please note that in the last example, we load function pointers only for the OpenGL functions that are actually used in the production. It might be a good idea to automate this. The functions are looked up by string identifiers that are stored in the executable, so the fewer functions you load, the more space you save. The Whole Program Optimization option might remove all unreferenced string literals, but we did not use it because of a problem with memcpy.
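One way to keep the list of loaded functions short and explicit is a single name table that is resolved in a loop. The sketch below illustrates this idea only; it is not the intro's loader. `AnyProc`, `Resolver`, `kGlNames`, `g_gl` and `loadGlFunctions` are hypothetical names, and the resolver is passed in as a plain function pointer so that the example does not depend on Windows headers.

```cpp
#include <cstddef>

// Generic function pointer type; on Windows this role is played by PROC.
using AnyProc = void (*)();
// Hypothetical resolver shape: takes a function name, returns its address.
using Resolver = AnyProc (*)(const char* name);

// Only the functions the program actually calls are listed, so only these
// strings end up in the executable.
static const char* const kGlNames[] = {
    "glCreateShader",
    "glShaderSource",
    "glCompileShader",
};
constexpr std::size_t kGlCount = sizeof(kGlNames) / sizeof(kGlNames[0]);

static AnyProc g_gl[kGlCount]; // resolved pointers, same order as kGlNames

// Fills g_gl and returns the number of names that failed to resolve.
std::size_t loadGlFunctions(Resolver resolve) {
    std::size_t missing = 0;
    for (std::size_t i = 0; i < kGlCount; ++i) {
        g_gl[i] = resolve(kGlNames[i]);
        if (!g_gl[i]) ++missing;
    }
    return missing;
}
```

On Windows the resolver would be a thin wrapper around `wglGetProcAddress`, which requires a current OpenGL context, and each entry of `g_gl` would be cast to the matching function type before the call.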

Rendering techniques

Rendering is mostly done by raymarching, and for convenience we used the hg_sdf library. Inigo Quilez (from now on simply iq) has written a lot about this and many other techniques. If you have ever visited ShaderToy, this should all be familiar.

In addition, we had the raymarcher output a depth buffer value, so that we could combine SDFs (signed distance fields) with rasterized geometry and also apply post-processing effects.

Shading

We used the standard Unreal Engine 4 shading (here is a large pdf describing it) with a bit of GGX. It is not very noticeable, but it makes a difference in the highlights. From the very beginning, we planned to have uniform lighting for both raymarched and rasterized shapes. The idea was to use deferred rendering and shadow maps, but that did not work out at all.

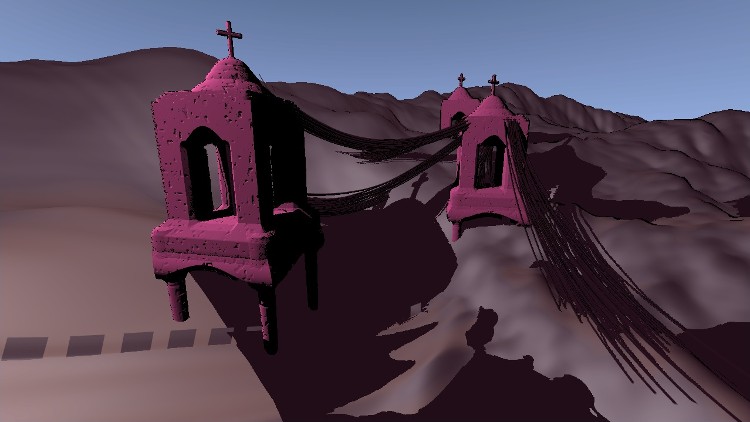

An early experiment with shadow mapping. Notice that both the towers and the wires cast a shadow on the raymarched terrain and also intersect correctly. Full size.

It is incredibly difficult to render large terrains correctly with shadow maps, because of the wildly varying screen-to-shadow-map-texel ratio and other precision problems. I also had no desire to start experimenting with cascaded shadow maps. On top of that, raymarching the same scene from several points of view is really slow. So we simply decided to scrap the whole unified lighting system. This turned out to be a huge problem later, when we tried to match the lighting of the rasterized wires to the raymarched scene geometry.

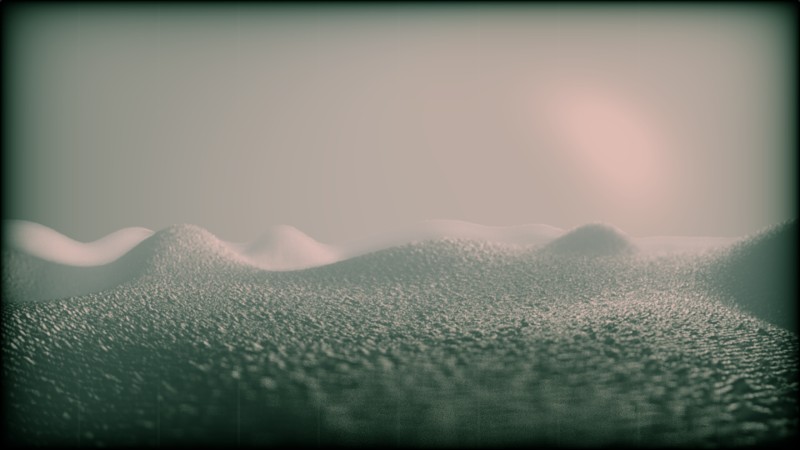

Terrain

The raymarched terrain is generated with value noise with analytic derivatives. [1] The generated derivatives were of course used for shading, but also to control the ray step length to speed up traversal over smooth regions, as in iq's examples. If you want to know more, read an old article about this technique or play around with a cool rainforest scene on ShaderToy. The terrain height map became more realistic once msqrt implemented exponentially distributed noise.
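To show what "noise with analytic derivatives" means in practice, here is a minimal CPU sketch of 2D value noise that returns its partial derivatives together with the value. It follows the widely published smoothstep-interpolated formulation; the hash function and all names are assumptions made for this example and are not taken from the intro's shaders.

```cpp
#include <cmath>
#include <cstdint>

struct NoiseD {
    float value; // noise value in [0, 1)
    float dx;    // partial derivative with respect to x
    float dy;    // partial derivative with respect to y
};

// Hypothetical integer hash mapping a lattice point to [0, 1).
float latticeHash(int x, int y) {
    std::uint32_t h = std::uint32_t(x) * 374761393u + std::uint32_t(y) * 668265263u;
    h = (h ^ (h >> 13)) * 1274126177u;
    h ^= h >> 16;
    return float(h & 0xFFFFFFu) / 16777216.0f;
}

NoiseD valueNoiseD(float x, float y) {
    const float x0 = std::floor(x), y0 = std::floor(y);
    const int ix = int(x0), iy = int(y0);
    const float fx = x - x0, fy = y - y0;

    // Smoothstep fade u(f) = 3f^2 - 2f^3 and its derivative 6f(1 - f).
    const float ux = fx * fx * (3.0f - 2.0f * fx);
    const float uy = fy * fy * (3.0f - 2.0f * fy);
    const float dux = 6.0f * fx * (1.0f - fx);
    const float duy = 6.0f * fy * (1.0f - fy);

    // Random values at the four corners of the cell.
    const float a = latticeHash(ix, iy);
    const float b = latticeHash(ix + 1, iy);
    const float c = latticeHash(ix, iy + 1);
    const float d = latticeHash(ix + 1, iy + 1);
    const float k = a - b - c + d;

    NoiseD n;
    n.value = a + (b - a) * ux + (c - a) * uy + k * ux * uy;
    n.dx = dux * ((b - a) + k * uy); // chain rule through u(fx)
    n.dy = duy * ((c - a) + k * ux); // chain rule through u(fy)
    return n;
}
```

Because the derivative comes out of the same evaluation, a terrain shader gets a surface normal without extra noise samples, and can take longer ray steps where the slope is small.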

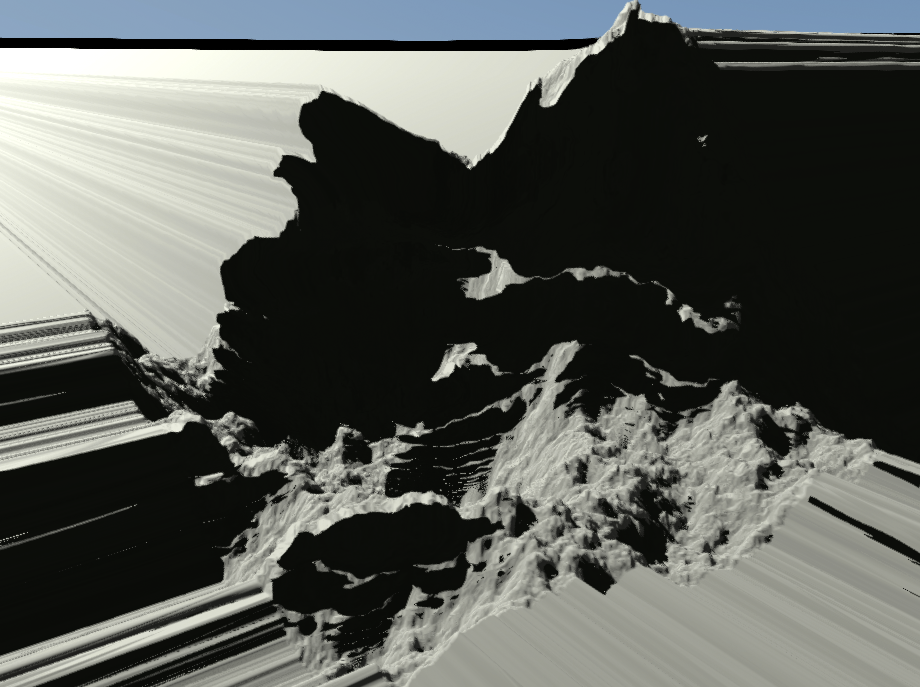

The first tests of my own value noise implementation.

A terrain implementation by branch that we decided not to use. I don’t remember why. Full size.

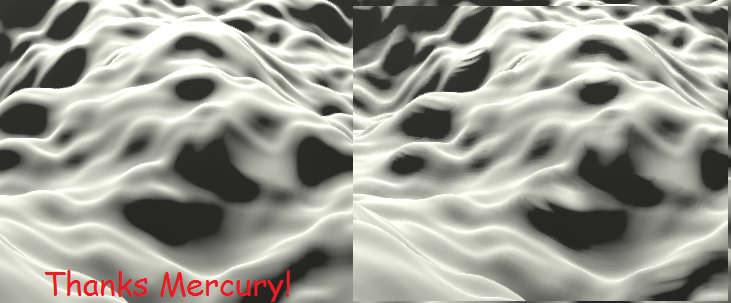

The landscape effect is very slow to compute because we brute-force the shadows and reflections. The shadows use a small soft shadow hack in which the penumbra size is determined by the shortest distance encountered while marching the shadow ray. They look pretty good in action. We also tried bisection tracing to speed up the effect, but it produced too many artifacts. On the other hand, the raymarching tricks from Mercury (another demogroup) helped us improve quality a little without losing speed.
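The soft shadow hack described above can be sketched on the CPU in a few lines. This is a minimal illustration of the well-known distance field penumbra trick, assuming a stand-in scene consisting of a single unit sphere; `sceneSdf`, `softShadow` and the sharpness parameter `k` are names chosen for this example, not the intro's code.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { float x, y, z; };

static Vec3 along(Vec3 o, Vec3 d, float t) {
    return {o.x + d.x * t, o.y + d.y * t, o.z + d.z * t};
}

// Hypothetical stand-in scene: a unit sphere at the origin.
static float sceneSdf(Vec3 p) {
    return std::sqrt(p.x * p.x + p.y * p.y + p.z * p.z) - 1.0f;
}

// Marches from `origin` towards the light along the unit vector `dir`.
// Returns 0 for full shadow, 1 for fully lit, values in between for penumbra.
// Larger k gives harder shadows.
float softShadow(Vec3 origin, Vec3 dir, float t_min, float t_max, float k) {
    float res = 1.0f;
    float t = t_min;
    for (int i = 0; i < 128 && t < t_max; ++i) {
        const float h = sceneSdf(along(origin, dir, t));
        if (h < 1e-4f) return 0.0f;     // the ray hit an occluder
        res = std::min(res, k * h / t); // the nearest miss sets the penumbra
        t += h;                         // sphere tracing step
    }
    return res;
}
```

The ratio `h / t` approximates how wide a cone around the shadow ray stays unoccluded, so a ray that only grazes an object yields a partially lit result instead of a hard edge.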

Terrain rendering with improved fixed-point iterations (left) compared to regular raymarching (right). Note the unpleasant ripple artifacts in the picture on the right.

The sky is generated with almost the same techniques as described in Behind Elevated by iq, slide 43: a few simple functions of the ray direction vector. The sun writes quite large values into the framebuffer (above 100), which also adds some natural-looking color.

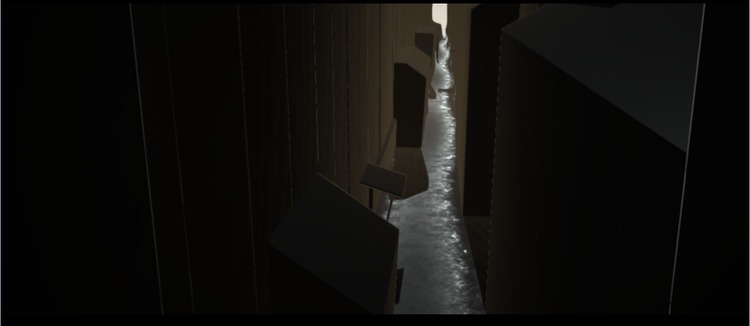

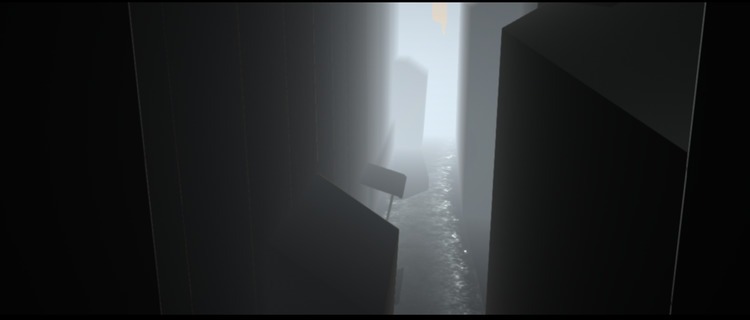

Lane scene

This is a view created under the influence of Fan Ho photos . Our post-processing effects really made it possible to create a solid scene, although the initial geometry is pretty simple.

An ugly distance field with some repeating fragments. Full size .

Added a bit of fog with an exponential change in distance. Full size .

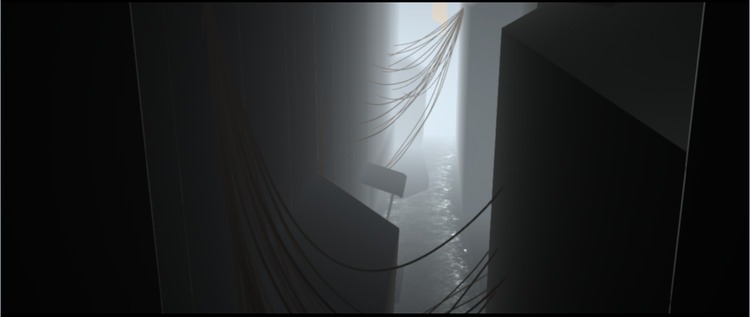

Wires make the scene more interesting and realistic. Full size .

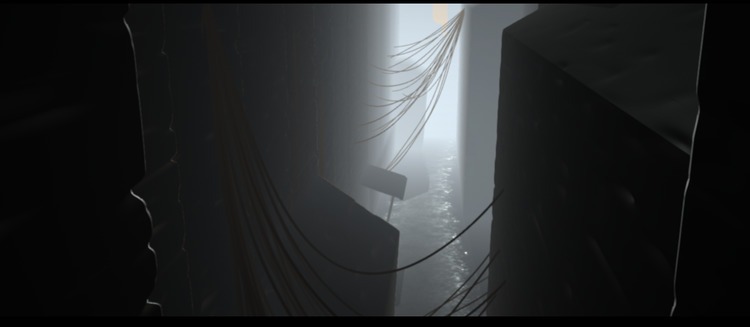

In the final version, a bit of noise was added to the distance field to create the impression of brick walls. Full size .

When post-processing added color gradient, chromaticity, chromatic aberration and glare. Full size.

Modeling with distance fields

The B-52 bombers are a good example of modeling with SDFs. They were much simpler during development, but we polished them for the final release. From a distance they look quite convincing:

Bombers look fine from a distance. Full version.

However, they are just a bunch of capsules. Admittedly, it would have been easier to model them in some 3D package, but we had no suitable tool at hand, so we took the quicker route. Just for reference, here is what the distance field shader looks like: bomber_sdf.glsl.

However, they are actually very simple. Full size.

Characters

The first four frames of a goat animation.

The animated characters are just packed 1-bit bitmaps. During playback, the frames blend smoothly from one to the next. The material was provided by the mysterious goatman.
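As an illustration of how little data such an animation needs, the sketch below unpacks 1-bit frames and crossfades between two of them. The bit order (most significant bit first), the linear blend and the function names are assumptions made for this example; the article does not describe the intro's actual packing format.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Expands a 1-bit-per-pixel frame into floats (0.0 or 1.0).
// Assumes the most significant bit of each byte is the leftmost pixel.
std::vector<float> unpackFrame(const std::uint8_t* bits, std::size_t pixel_count) {
    std::vector<float> out(pixel_count);
    for (std::size_t i = 0; i < pixel_count; ++i) {
        const std::uint8_t byte = bits[i / 8];
        out[i] = float((byte >> (7 - i % 8)) & 1u);
    }
    return out;
}

// Linear crossfade between two unpacked frames of equal size, t in [0, 1].
std::vector<float> blendFrames(const std::vector<float>& a,
                               const std::vector<float>& b, float t) {
    std::vector<float> out(a.size());
    for (std::size_t i = 0; i < a.size(); ++i) {
        out[i] = a[i] + (b[i] - a[i]) * t;
    }
    return out;
}
```

At one bit per pixel, a 64x64 frame takes only 512 bytes before compression, which is why this representation suits a 64k budget.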

The goatherd with his friends.

Post processing

The post-processing effects were written by varko. The pipeline is as follows:

- Apply shading from the G-buffer.

- Calculate the depth of field.

- Extract the bright parts for bloom.

- Perform N separable Gaussian blurs.

- Calculate fake lens flares and spotlight flares.

- Composite everything together.

- Smooth the edges with FXAA (thanks mudlord).

- Color correction.

- Gamma correction and subtle grain.

The lens flares largely follow the technique described by John Chapman. They were sometimes difficult to work with, but the end result delivers.

We tried to use the depth of field effect tastefully. Full size.

The depth of field effect (based on the DICE technique) is done in three passes. The first computes the size of the circle of confusion for each pixel, and the other two passes each apply two blurs over rotated areas. We also do iterative refinement (in particular, we apply multiple Gaussian blurs) when needed. This implementation worked well for us and was fun to play with.
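The first pass needs some formula for the circle of confusion. One common choice is the thin-lens model shown below. This is only a sketch under that assumption: the article does not say which formula the intro uses, and the function and parameter names are hypothetical.

```cpp
#include <cmath>

// Thin-lens circle of confusion diameter, in the same length unit as the
// inputs. aperture: lens aperture diameter; focal_length: lens focal length;
// focus_dist: distance that is in perfect focus; object_dist: distance of
// the shaded point. Requires focus_dist > focal_length and object_dist > 0.
float circleOfConfusion(float aperture, float focal_length,
                        float focus_dist, float object_dist) {
    return std::fabs(aperture * focal_length * (object_dist - focus_dist) /
                     (object_dist * (focus_dist - focal_length)));
}

// Converts the diameter on the sensor to a blur diameter in pixels.
float cocInPixels(float coc, float sensor_width, float image_width_px) {
    return coc / sensor_width * image_width_px;
}
```

A shader would evaluate this per pixel from the linearized depth and store the result for the blur passes; points at the focus distance get a diameter of zero and stay sharp.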

Depth of field effect in action. The red picture shows the calculated circle of confusion for the DOF blur.

Color correction

Rocket has an animated parameter pp_index that is used to switch between color correction profiles. Each profile is simply a different branch of a big if statement in the post-processing shader:

    vec3 cl = getFinalColor();
    if (u_GradeId == 1) {
        cl.gb *= UV.y * 0.7;
        cl = pow(cl, vec3(1.1));
    } else if (u_GradeId == 2) {
        cl.gb *= UV.y * 0.6;
        cl.g = 0.0+0.6*smoothstep(-0.05,0.9,cl.g*2.0);
        cl = 0.005+pow(cl, vec3(1.2))*1.5;
    } /* etc.. */

It is very simple, but it works quite well.

Physical modeling

The demo has two simulated systems: wires and a flock of birds. They were also written by varko.

Wires

Wires add realism to the scene. Full size.

The wires are treated as chains of springs. They are simulated on the GPU using compute shaders. We run the simulation in many small steps because of the instability of the Verlet integration method we use here. The compute shader also writes the wire geometry (a series of triangular prisms) to a vertex buffer. Unfortunately, for some reason the simulation does not work on AMD cards.
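The following CPU sketch shows the general shape of such a simulation: a chain of unit point masses joined by springs, advanced with position Verlet in several substeps per frame. It is an illustration under assumptions and not the intro's compute shader: it works in 2D, pins both ends of the wire, and the stiffness, damping and all names are chosen for this example.

```cpp
#include <cmath>
#include <cstddef>
#include <utility>
#include <vector>

struct Vec2 { float x, y; };

struct Wire {
    std::vector<Vec2> pos;  // current positions; first and last are pinned
    std::vector<Vec2> prev; // positions one substep ago (start equal to pos)
    float rest_length;      // rest length of each spring segment
    float stiffness;        // spring constant (unit point masses assumed)
    float damping;          // fraction of velocity lost per substep
};

// Adds the acceleration that the spring between points i and j exerts on i.
static void addSpring(const Wire& w, std::size_t i, std::size_t j, Vec2& acc) {
    const float dx = w.pos[j].x - w.pos[i].x;
    const float dy = w.pos[j].y - w.pos[i].y;
    const float len = std::sqrt(dx * dx + dy * dy);
    if (len <= 0.0f) return;
    const float f = w.stiffness * (len - w.rest_length) / len;
    acc.x += f * dx;
    acc.y += f * dy;
}

// One position Verlet substep: x' = x + (x - x_prev) * (1 - damping) + a * dt^2.
void stepWire(Wire& w, float dt) {
    const float keep = 1.0f - w.damping;
    for (std::size_t i = 1; i + 1 < w.pos.size(); ++i) {
        Vec2 acc = {0.0f, -9.81f}; // gravity
        addSpring(w, i, i - 1, acc);
        addSpring(w, i, i + 1, acc);
        const Vec2 p = w.pos[i], q = w.prev[i];
        // The new position is written into prev[i]; buffers are swapped below.
        w.prev[i].x = p.x + (p.x - q.x) * keep + acc.x * dt * dt;
        w.prev[i].y = p.y + (p.y - q.y) * keep + acc.y * dt * dt;
    }
    std::swap(w.pos, w.prev);
}

// Advances one frame using several small substeps to keep the springs stable.
void simulateFrame(Wire& w, float frame_dt, int substeps) {
    const float dt = frame_dt / float(substeps);
    for (int s = 0; s < substeps; ++s) stepWire(w, dt);
}
```

Stiff springs force a small time step, which is why one rendered frame is split into many substeps; on the GPU each point would typically be handled by one compute shader invocation.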

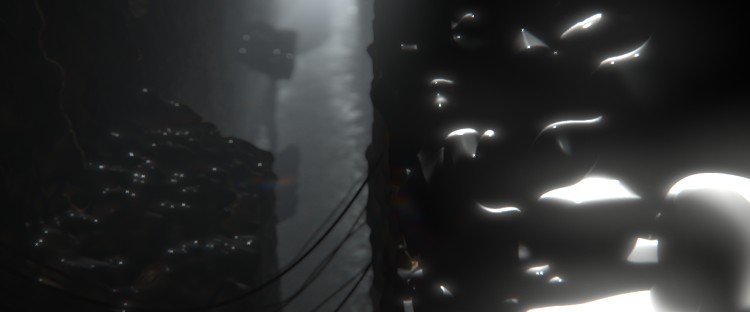

Flock of birds

Birds give a sense of scale.

The flock model consists of 512 birds, of which the first 128 are leaders. The leaders follow a swirling noise pattern, while the rest follow them. I think that in real life birds follow the movements of their closest neighbors, but this simplification looks good enough. The flock is rendered as GL_POINTS whose size is modulated to give the impression of flapping wings. I think this rendering technique was also used in Half-Life 2.

Music

Usually, music for a 64k intro is made with a VST plugin: this way musicians can use their usual tools to compose. A classic example of this approach is farbrausch's V2 Synthesizer.

That was a problem. I did not want to use a ready-made synthesizer, but I knew from earlier failed experiments that making my own virtual instrument would be a lot of work. I remember really liking the mood of element/gesture 61%, a demo branch made with a paulstretched ambient soundtrack. That made me want to do the same thing in 4k or 64k.

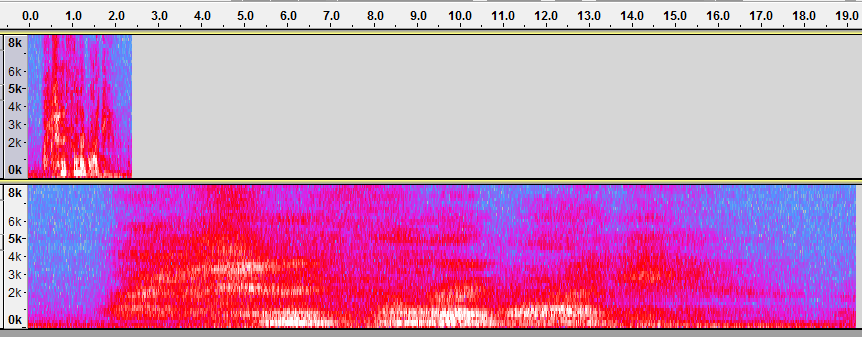

Paulstretch

Paulstretch is a great tool for really extreme time stretching of music. If you have not heard of it, you should definitely listen to what it does to the Windows 98 startup sound. Its internal algorithm is described in this interview with the author, and it is also open source.

Original sound (top) and stretched sound (bottom), created with the Paulstretch effect for Audacity. Notice also how the frequencies are smeared across the spectrum (vertical axis).

Essentially, as it stretches the original signal it also scrambles the phases in frequency space, so that instead of metallic artifacts you get an ethereal echo. This requires many Fourier transforms, and the original application uses the Kiss FFT library for this. I did not want to depend on an external library, so in the end I implemented a simple discrete Fourier transform.
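The phase scrambling step can be sketched with a naive discrete Fourier transform. The code below processes a single window only: it keeps each frequency bin's magnitude and replaces its phase with a random one. The windowing, overlap-add and the actual stretching that Paulstretch performs are left out, and the function names and the use of `std::mt19937` are assumptions made for this example rather than the intro's implementation.

```cpp
#include <cmath>
#include <complex>
#include <cstddef>
#include <random>
#include <vector>

using Cplx = std::complex<double>;

// Naive O(N^2) DFT. sign = -1 gives the forward transform, sign = +1 the
// inverse (including the 1/N normalization).
std::vector<Cplx> dft(const std::vector<Cplx>& in, int sign) {
    const std::size_t n = in.size();
    const double two_pi = 2.0 * std::acos(-1.0);
    std::vector<Cplx> out(n);
    for (std::size_t k = 0; k < n; ++k) {
        Cplx sum(0.0, 0.0);
        for (std::size_t t = 0; t < n; ++t) {
            const double angle = sign * two_pi * double(k * t % n) / double(n);
            sum += in[t] * std::polar(1.0, angle);
        }
        out[k] = (sign > 0) ? sum / double(n) : sum;
    }
    return out;
}

// Keeps each bin's magnitude but replaces its phase with a random one.
std::vector<double> randomizePhases(const std::vector<double>& window,
                                    std::mt19937& rng) {
    const std::size_t n = window.size();
    std::vector<Cplx> spec =
        dft(std::vector<Cplx>(window.begin(), window.end()), -1);
    std::uniform_real_distribution<double> phase(0.0, 2.0 * std::acos(-1.0));
    // Bins k and n-k must stay complex conjugates so the result is real.
    // DC (and the Nyquist bin for even n) are left untouched.
    for (std::size_t k = 1; 2 * k < n; ++k) {
        spec[k] = std::polar(std::abs(spec[k]), phase(rng));
        spec[n - k] = std::conj(spec[k]);
    }
    const std::vector<Cplx> time = dft(spec, +1);
    std::vector<double> out(n);
    for (std::size_t i = 0; i < n; ++i) out[i] = time[i].real();
    return out;
}
```

The output has the same magnitude spectrum as the input window, so it keeps the timbre while losing the original transients, which is what produces the smeared, echo-like character.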

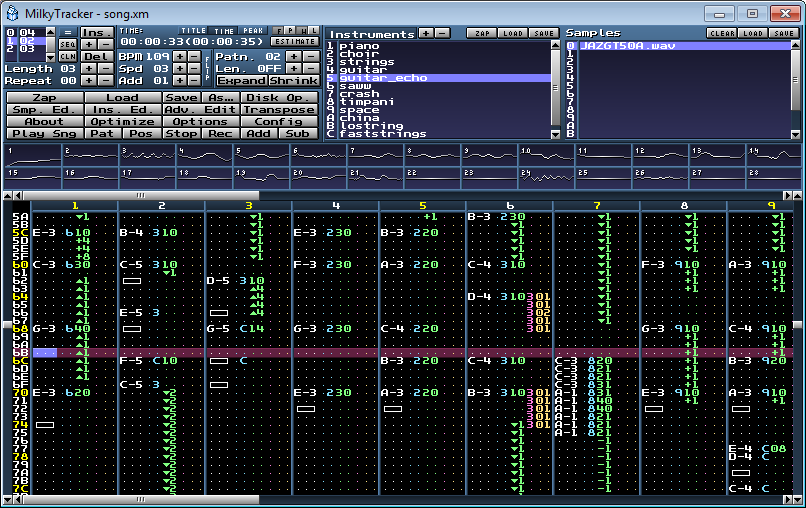

Tracker module

Now it was possible to churn out reams of ambient drone, given some meaningful audio as source material. So I decided to use a tried and tested technology: tracker music. It is a lot like MIDI [2], but packed into a single file together with the samples. For example, the kasparov demo by elitegroup (YouTube) uses a module with added reverb. If it worked 17 years ago, why not now?

I used gm.dls, the MIDI sound bank built into Windows (again an old trick), and made a song with MilkyTracker in the XM module format. This format was used in many MS-DOS demos in the 90s.

I used MilkyTracker to compose the original song. The final module file is stripped of instrument samples, and the offset and length parameters from gm.dls are used in their place.

The catch with gm.dls is that the 1996 Roland instruments sound very dated and low quality. But it turned out that this is not a problem if you drown them in a ton of reverb! Here is an example in which a short test song is played first, followed by the stretched version:

Surprisingly atmospheric, don't you agree? So yes, I made a song that mimics Hollywood music, and it worked out great. That is pretty much all there is to the music side.

Acknowledgments

Thanks to varko for helping with some of the technical details of this article.

Additional materials

- Ferris of Logicoma shows off his 64k demo toolkit

- Be sure to watch Engage first, their entry in the same competition we took part in

- Sources of some demos by Ctrl-Alt-Test

- There is both 4k and 64k code.

- They also have an intro: H-Immersion

1. You can also compute analytic derivatives for gradient noise: https://mobile.twitter.com/iquilezles/status/863692824100782080

2. My first thought was to simply use MIDI instead of a tracker module, but there did not seem to be a simple way to render a song to an audio buffer on Windows. Apparently it is possible somehow with the DirectMusic API, but I could not figure out how.