Questionnaire errors. Mistake 2: the wording of the questionnaire. 13 cases of misunderstanding and manipulation in surveys (part 1)

I continue sharing our experience of mistakes and discoveries in questionnaire research. In the first article, I described how to attract relevant respondents and increase the return rate of completed questionnaires.

Read the first article: "Questionnaire errors. Mistake 1: sampling bias. 8 ways to attract the right respondents".

In this article, I will explain why the comprehensibility of a questionnaire for survey participants matters much more than it seems at first glance. We will also look at examples of manipulating respondents' opinions, falsifying survey results and using surveys for marketing purposes.

The main advantage of questionnaires, wide coverage and quick results, is also their main disadvantage. Because we lose direct contact with the individual respondent, we are forced to make a costly assumption: "all respondents understand the meaning of the questionnaire and fill it out correctly." If you make this assumption, you can breathe out and treat the survey results as relevant facts and opinions. If you do not make it, the study can turn into a chain of cross-checks and approvals and eventually grind to a complete halt.

To be honest, after going through all the trouble of attracting respondents and getting the questionnaires back, the last thing a researcher wants is to doubt that they were filled out correctly. It is even more upsetting to realize that the questionnaires were spoiled because participants misunderstood the instructions or the questions. To protect yourself and the customer from these doubts, you can go through a number of preparatory stages that significantly increase confidence in the study. We will cover these stages in the next article. First, let's figure out where difficulties of understanding can arise and what they can lead to.

Misunderstanding can arise in at least five parts of a questionnaire study: words and special terms, the instructions, the wording of the questions, the focus of the questions, and the evaluation criteria.

1. Misunderstanding of words and special terms

Everything is simple here. Respondents often do not understand words that seem obvious to the researcher, and this is not limited to professional jargon. Sometimes even ordinary, everyday Russian words cause difficulties for survey participants, but the researcher rarely has a chance to find out.

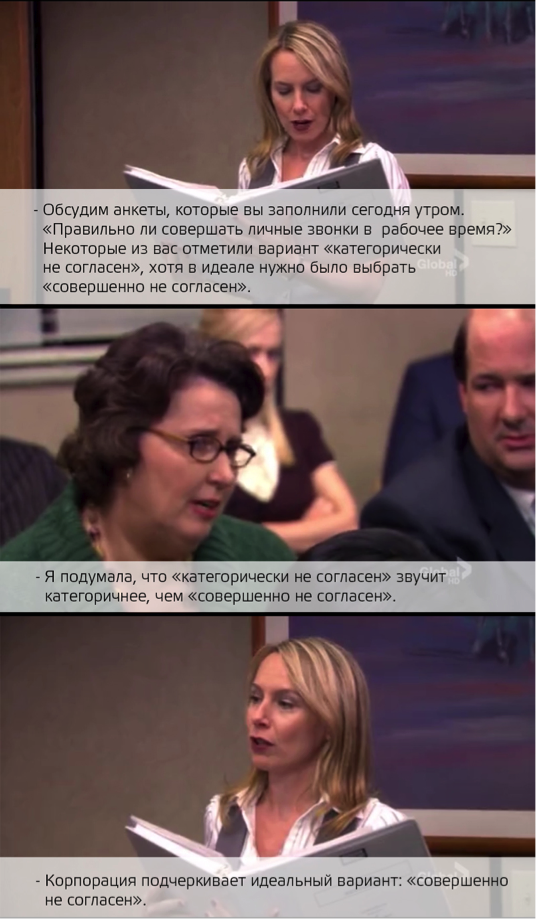

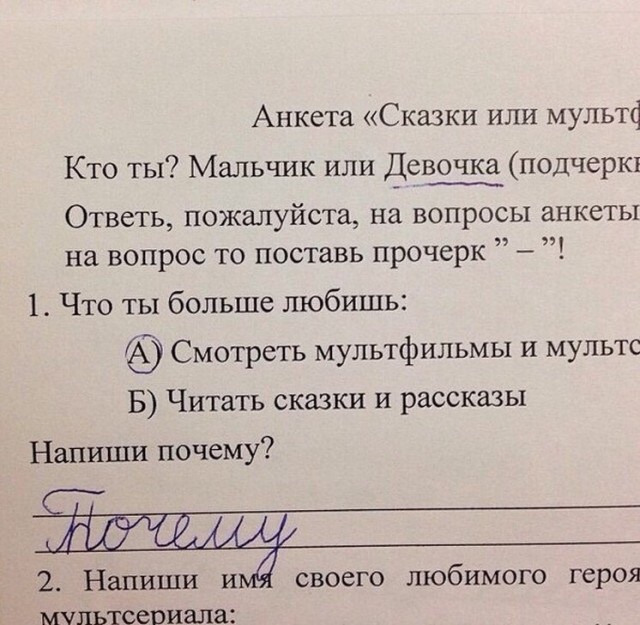

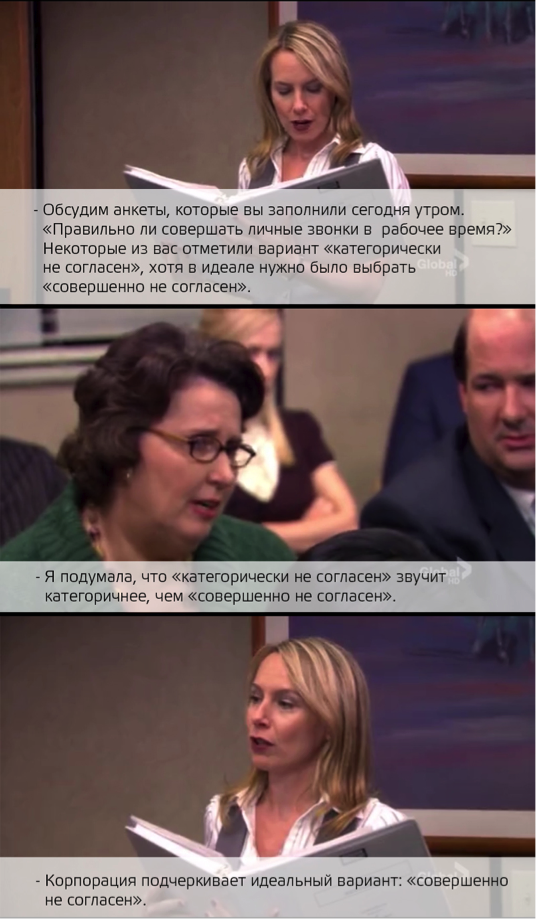

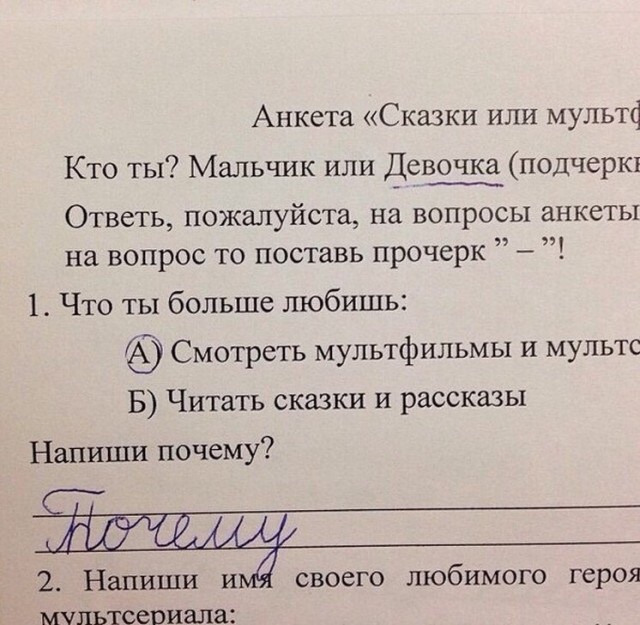

In the picture: a user comment on a questionnaire survey published on the site (source: pikabu.ru).

The second threat is slang in questionnaires: participants may not understand it, or it may annoy them. This happens when a researcher tries to win over the target audience by using its slang without actually belonging to that culture.

2. Misunderstanding of the instructions

Misunderstanding the instructions is the most obvious point in this article and might seem hardly worth mentioning. Almost all researchers explain in the introduction what the study is about and how to fill out the form. Almost all respondents have already taken part in some kind of poll, and today you rarely hear the anxious questions we were asked about 8 years ago: "Should I put a plus sign or a tick in the box?" and "Can I cross out an answer if I change my mind?"

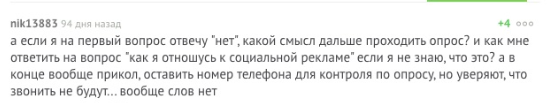

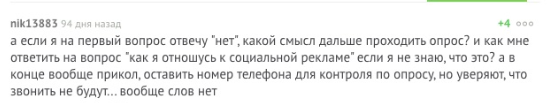

In the picture: a questionnaire with inaccurate instructions for applicants (source: pikabu.ru).

Despite respondents' general experience, usability problems with questionnaires come back when the researcher tries new formats (for example, online surveys and questionnaires on mobile devices with non-obvious instructions or no way to skip a question). They also come back when the researcher forgets to state whether one or several answers may be chosen, or fails to give proper instructions for difficult questions (for example, ranking or pairwise comparison). A researcher is lucky to learn about a participant's difficulties at all, as in the example in the picture below.

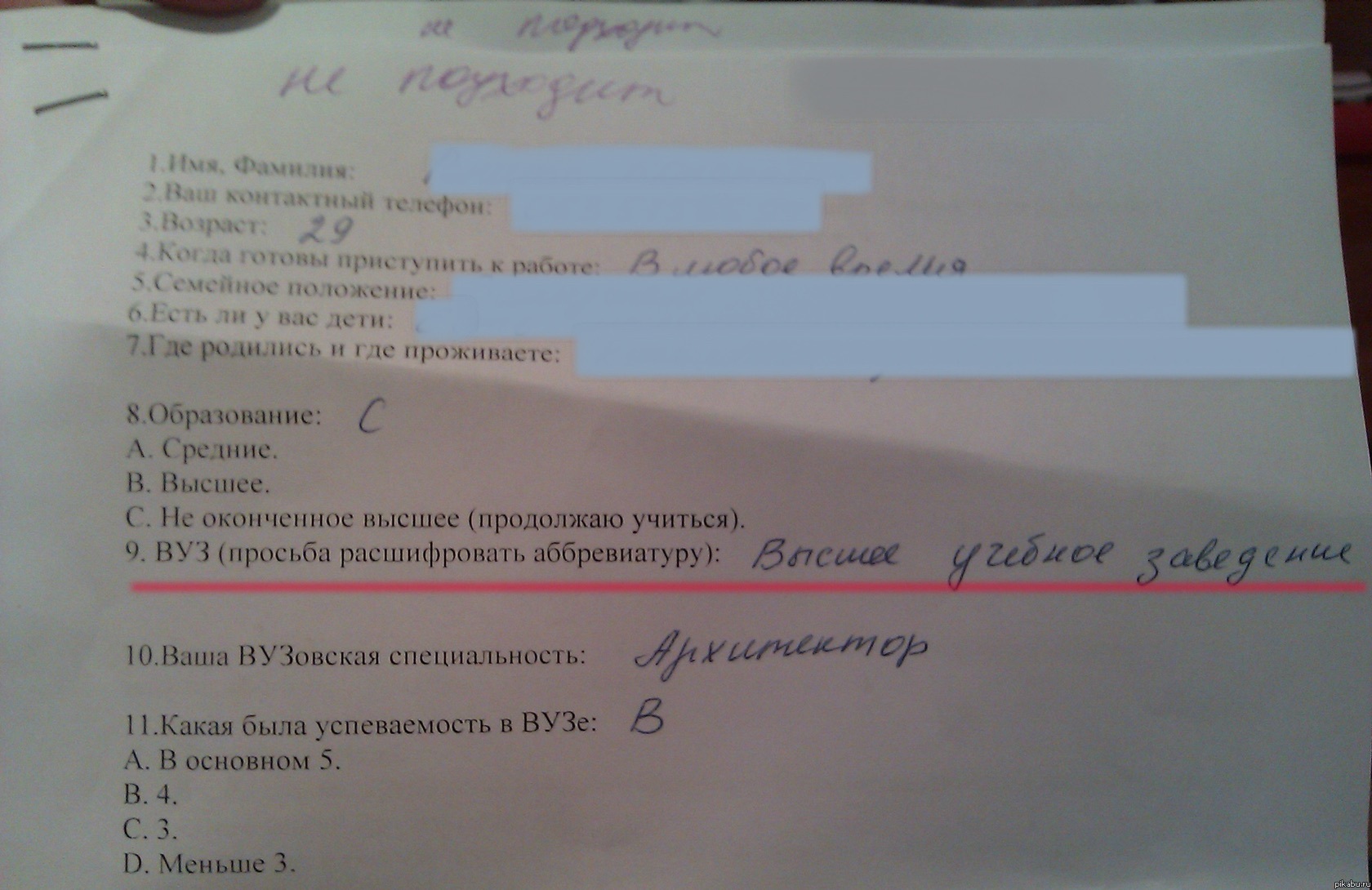

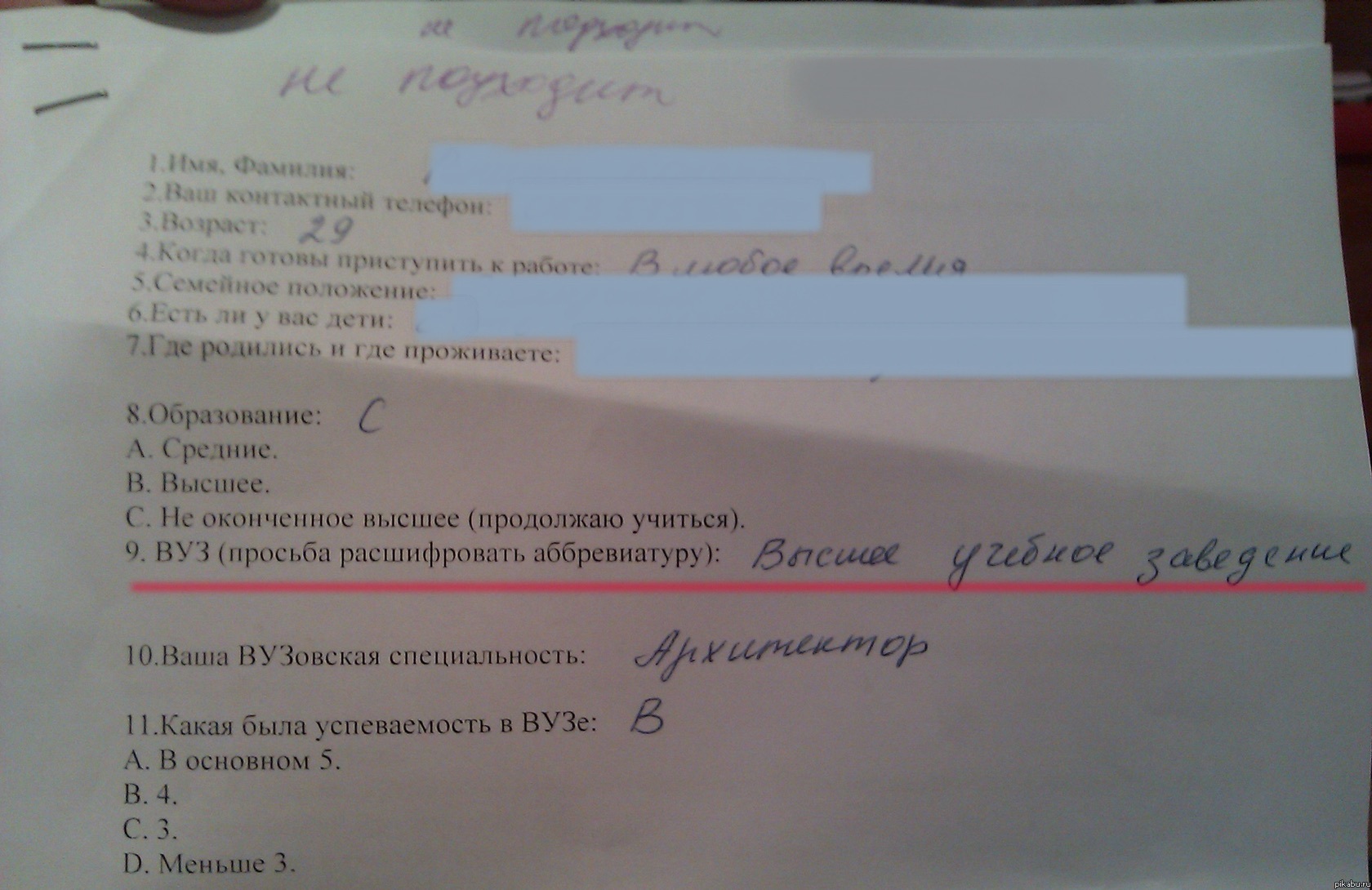

In the picture: a user’s comment on a questionnaire published on the site (source: pikabu.ru)

What seems obvious to the researcher and to most respondents can be difficult for less experienced participants.

In the picture: a questionnaire with inaccurate instructions that are not adapted to the survey participant (source: adme.ru).
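The design slips listed above can be caught with a simple check before a questionnaire goes out. These slips are: no indication of whether one or several answers are allowed, no instruction for ranking or pairwise questions, and no way to skip a question. Below is a minimal Python sketch, assuming each question is described by a kind, an instruction text and a "skippable" flag. `Item`, `instruction_problems` and the kind names are hypothetical, not the schema of any real survey platform.

```python
from dataclasses import dataclass

# Hypothetical question kinds; a real survey platform will use its own schema.
CHOICE_KINDS = {"single", "multiple"}
COMPLEX_KINDS = {"ranking", "pairwise"}


@dataclass
class Item:
    text: str
    kind: str                # one of CHOICE_KINDS or COMPLEX_KINDS
    instruction: str = ""    # instruction shown to the respondent
    skippable: bool = False  # can the respondent move on without answering?


def instruction_problems(item: Item) -> list[str]:
    """Return human-readable warnings about one questionnaire item."""
    if item.kind not in CHOICE_KINDS | COMPLEX_KINDS:
        raise ValueError(f"unknown question kind: {item.kind}")
    problems = []
    if item.kind in CHOICE_KINDS and not item.instruction:
        problems.append("does not say whether one or several answers are allowed")
    if item.kind in COMPLEX_KINDS and not item.instruction:
        problems.append(f"{item.kind} question has no instruction")
    if not item.skippable:
        problems.append("respondent cannot skip the question")
    return problems


survey = [
    Item("Which features do you use?", "multiple"),
    Item("Rank these features by importance", "ranking",
         "Put 1 next to the most important feature.", True),
]
for item in survey:
    for problem in instruction_problems(item):
        print(f"{item.text!r}: {problem}")
```

Whether a forced answer is acceptable is a design decision. The check only makes the decision visible before respondents run into it.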

3. Misunderstanding of the wording of questions

There is one rule in designing a questionnaire or planning an interview: "A question is almost the answer." An informative answer can only be obtained with a carefully thought-out question. Creating such questions takes a lot of preparatory work (I will describe its stages in the next part of the article).

But our experience with researchers shows that almost no one pays attention to this preparation. Responsibility for the answer is often placed on the respondent. The researcher makes a major assumption: that respondents have already formed an opinion on our question and really want to express it. Often this assumption is completely wrong. Respondents often do not share the researchers' concerns, and often do not even understand the language of their questions.

In the picture: a still from the film "The Hitchhiker's Guide to the Galaxy."

Wikipedia: "The Ultimate Answer to the Great Question of Life, the Universe and Everything (...)" is "Forty-two." The reaction was:

"Forty-two!" squealed Loonquawl. "And that's all you can say after seven and a half million years of work?"

"I checked everything very carefully," said the computer, "and I can state with complete certainty that this is the answer. To be absolutely honest with you, I think the whole problem is that you yourselves never knew what the question was."

"But it was the Great Question! The Ultimate Question of Life, the Universe and Everything!" Loonquawl almost howled.

"Yes," said the computer, in the voice of a martyr enlightening a hopeless fool. "And what is that question?"

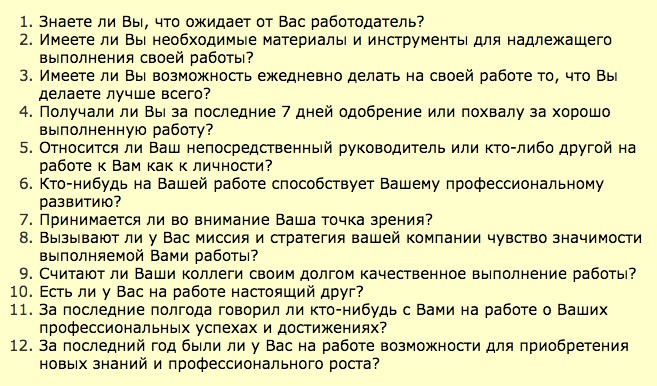

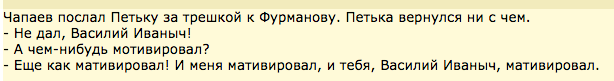

In the picture: one of the Russian translations of Gallup's employee engagement questionnaire (source: antropos.ru).

We could not find any publications describing the adaptation and validation of the Gallup questionnaire (mandatory steps when using a translated instrument). Nevertheless, you can find several free translations and many recommendations to use it in business publications. There are even more offers from consulting companies that use these 12 unadapted, unvalidated questions as their only tool for measuring employee engagement.

Naturally, when a client sees only aggregated engagement indicators in the report (or, even better, growth in those indicators), he rarely asks what methods were used to obtain them.
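Adaptation and validation involve much more than arithmetic. They include translation checks, pilot testing and comparison with other measures. One quantitative check commonly reported in such publications is internal-consistency reliability, often expressed as Cronbach's alpha. Below is a minimal Python sketch of that one formula, assuming complete numeric answers to items meant to measure the same construct. A good alpha on its own does not make a translated questionnaire adapted or valid.

```python
from statistics import pvariance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items score matrix.

    responses[r][i] is respondent r's score on item i. Uses
    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores),
    with population variances throughout (the ratio is the same with sample variances).
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("need at least two items")
    if any(len(row) != k for row in responses):
        raise ValueError("every respondent must answer every item")
    item_columns = list(zip(*responses))  # transpose: one tuple per item
    sum_item_var = sum(pvariance(col) for col in item_columns)
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return k / (k - 1) * (1 - sum_item_var / total_var)
```

For example, `cronbach_alpha([[1, 2], [2, 1], [3, 3]])` gives about 0.67. Items that always move together push alpha toward 1.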

4. Misunderstanding of the focus of the questions

Misunderstanding the focus of a question is a much more complex and widespread mistake in questionnaire research. Sometimes it is impossible to predict how a person will interpret a seemingly unambiguous question.

There is an elegant solution to this problem, which we will discuss in the next part. In the meantime, let's look at cases where researchers deliberately hide the true meaning of a question or muddle the wording to get the answer they want.

The illusion of choice. Social manipulation has a well-known move: giving the other person the illusion of choice, where any of his answers benefits the manipulator. A classic sales example comes from fast-food restaurants. When a customer orders coffee, the server asks: "Would you like a muffin or a pie with your coffee?" (The question "Would you like anything with your coffee?" is considered much less effective for sales.) This move is often used in questionnaires when the organizer of the study is interested in particular answers.

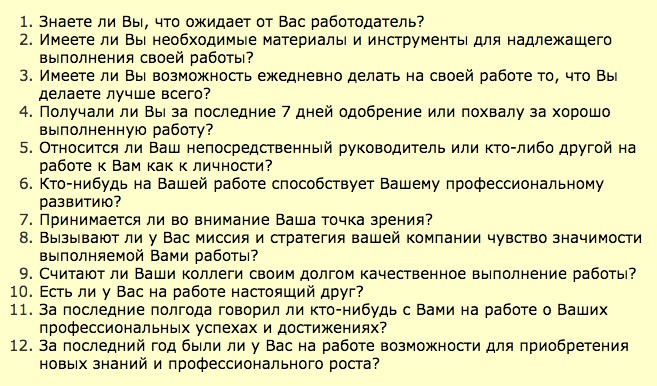

In the picture: a fragment of a feedback questionnaire for game participants.

Questions 5, 6, and 9 offer only an illusion of choice. They look fairly complete. But if you look closely, every answer either expresses a positive assessment or shifts the responsibility onto the respondent. The logic is "the game was fine, it's just me who still doesn't understand the standards of behavior that have been explained to me for several months now" or "I already understand the standards well".

The last question is another example of the illusion of choice: all its options are positive. When we wrote it, we knew how the results would look in the report. The positive effects we had built into the questionnaire in advance would show up in the charts, and the manager would be pleased to look at them. A person reading such a report rarely asks whether the questionnaire offered any negative answers at all.
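A reviewer can make the "were negative answers even possible?" check explicit. Below is a minimal Python sketch, assuming the reviewer hand-codes each answer option as positive (+1), neutral or responsibility-shifting (0), or negative (-1). `Question` and `balance_report` are hypothetical names, not part of any survey tool.

```python
from dataclasses import dataclass


@dataclass
class Question:
    text: str
    # Hypothetical hand-coding of each answer option by the reviewer:
    # +1 = positive, 0 = neutral or shifts responsibility to the respondent, -1 = negative.
    option_valences: list[int]


def balance_report(question: Question) -> dict:
    positive = sum(1 for v in question.option_valences if v > 0)
    negative = sum(1 for v in question.option_valences if v < 0)
    neutral = len(question.option_valences) - positive - negative
    return {
        "positive": positive,
        "neutral": neutral,
        "negative": negative,
        # No negative option at all: every possible answer reads as approval.
        "illusion_of_choice": negative == 0,
        # Negative options exist but are not on an equal footing with positive ones.
        "unbalanced": negative != positive,
    }


q = Question("What did the game give you?", [1, 1, 1, 0])
print(balance_report(q))
```

The coding step is the real work here. The code only makes sure nobody skips counting.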

Placing emphasis. If the customer of the research does not look at the original text of the questionnaire and does not ask which options participants were offered, the organizers can change the meaning of the results by shifting the emphasis.

In the picture: a fragment of a news item about the results of an opinion poll (source: www.solidarnost.org).

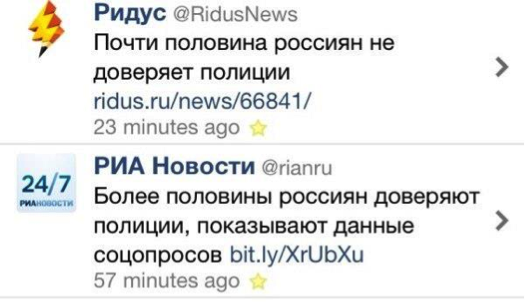

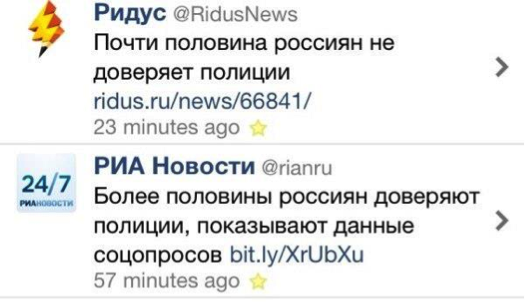

In the picture: a screenshot of a news feed showing how different outlets placed the emphasis (source: joyreactor.cc).

Substitution of concepts. A common practice is to hand the customer only the results of the research, without first examining the raw data and how they were collected. This practice leaves a huge field for falsifying data, and for manipulating the results without any falsification at all. In such cases, it is important to examine the logic and focus of the questions before analyzing the results.

Training and consulting companies sometimes offer to evaluate the effect of their own staff training or of the changes they implemented, and to give the customer a report on return on investment. Such offers look especially amusing for exactly this reason. In feedback questionnaires, contractors may ask employees about something else entirely and then substitute the concepts in the final report. Yet customers usually accept such reports readily: they look authoritative and require no effort from internal departments.

Suggestive wording. The tone or direction of a question can lead the participant to a particular answer. The organizer benefits from such answers by confirming his own position (for example, when faking support for a theory or justifying managerial decisions).

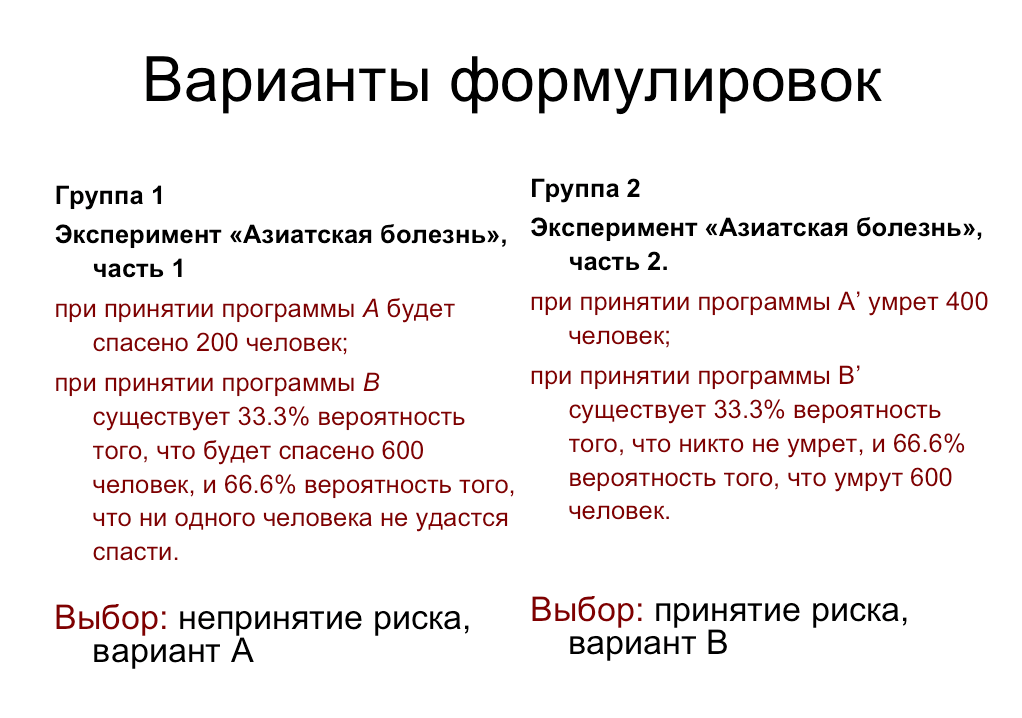

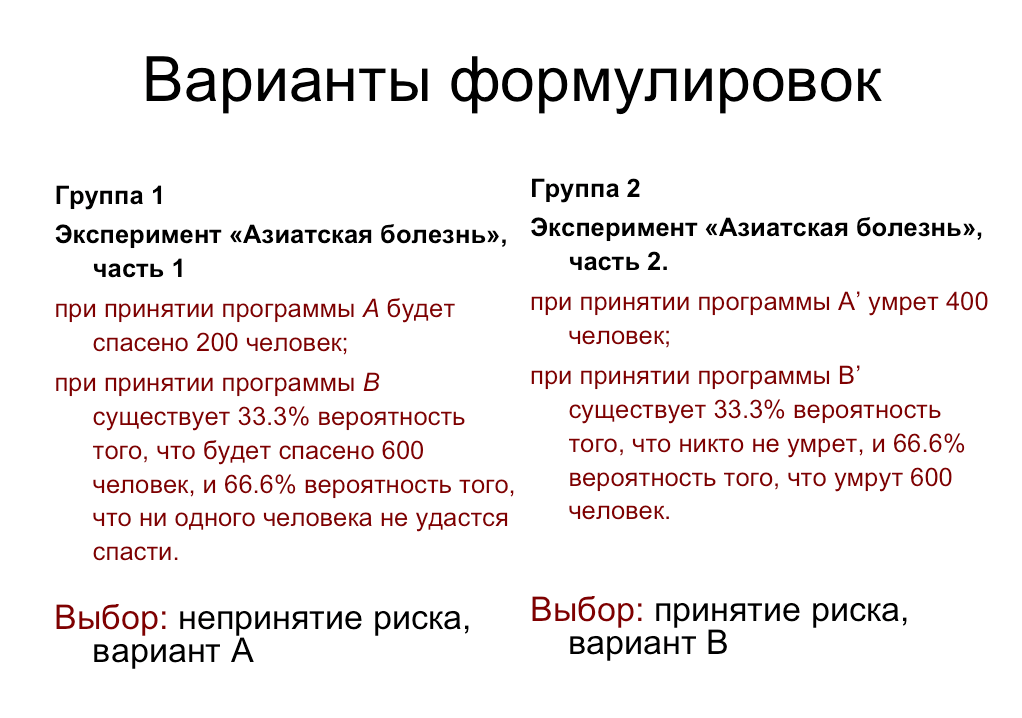

In the picture: the wording of the "programs" in the "Asian disease" experiment and the participants' preferred answers.

Another well-known example comes from D. Kahneman's research. One group of subjects is asked: "Did Gandhi live to be 114? How old was he when he died?" Another group is asked: "Did Gandhi live to be 35? How old was he when he died?" The first group will estimate Gandhi's lifespan as much longer than the second.

So by cleverly wording the questions, emphasizing positive options or using suggestive numbers, researchers create an "anchoring effect" and can manipulate participants' answers.

The second way to use suggestive wording is to gradually change the participant's beliefs through the questionnaire itself. The questions are arranged so that the respondent is pushed, question by question, to answer in a certain way. He then meets his own answers as new ideas and gradually changes his point of view. Need-creating questions take this method further (see the next paragraph).

Surveys that create needs. Creating needs in participants with well-crafted questions is another manipulation and sales tactic, and questionnaires give selling companies good support here.

Hardly anyone is fooled anymore by pseudo-sociological polls like "Do you care for your skin properly?" or "Do you know everything about cleansing the body?", where the third question turns out to be "Are you familiar with the products of company N?". About ten years ago such polls were very popular in our country.

Today the tactics of creating needs are used more subtly. The first option is to use a questionnaire to covertly inform the participant about the company's range and new products, which creates awareness and arouses curiosity (see figure).

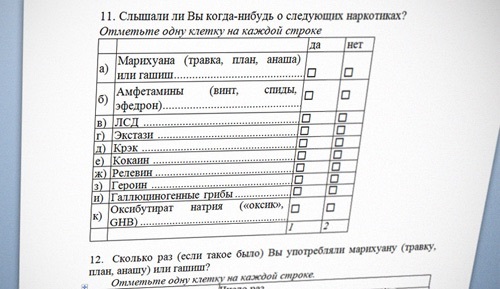

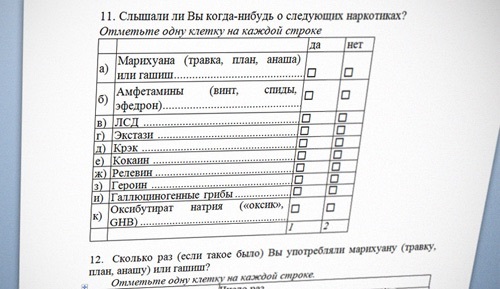

In the picture: a fragment of a questionnaire recommended by the Ministry of Health for identifying adolescents at risk of drug use. The questions also included: "How many times, if ever, have you sniffed inhalants (glue, aerosols, gasoline) specifically to get 'unusual sensations'?" The questionnaires were intended for children aged 10-13, which caused a storm of indignation among parents. (Sources: Ridus.ru, RG.ru.)

The second option for creating needs is to offer the client a questionnaire that supposedly helps him figure out what type of service (product) he actually needs. This is not necessarily pure evil. Such questionnaires really do help clarify the client's needs, but they are also very good at selling.

The sales mechanism is that, under the guise of a survey:

a) the respondent is led to the idea that he lacks something or uses something inefficiently (which creates a feeling of anxiety);

b) the respondent is told about new services (products, options) that will help solve this problem, which he did not know about before the survey.

An approximate structure of a need-creating questionnaire:

1. Introductory neutral questions on the topic ("icebreakers").

2. Questions about the respondent's current situation that gradually lead him to the idea that he is missing something or that something is out of date.

3. Questions checking whether the respondent understands the consequences of this shortage and what it may mean for him or his business personally.

4. Questions about whether the respondent is familiar with certain solutions (goods, services, technologies).

5. Questions informing the respondent exactly how these solutions help cope with his problem.

6. Questions nudging him toward a purchase (informing him about the price, discounts, terms).

7. Questions confirming that buying this solution will solve the respondent's problems and bring him additional benefits.

In this example the sales intent is still plainly visible. But the closer the respondent is to being a customer (for example, he fills out a questionnaire on the product's website) and the closer he is to a purchase, the more readily he will accept such a questionnaire. It also becomes easier to increase his purchase through additional sales.

The third type of need-creating questionnaire, very popular now, is the entertaining test. Internet users are still drawn to tests like "Are you good at computer games?", where a few questions listing a certain company's gaming innovations can be slipped in. Another example is "Do you know how to manage your time?", where it is natural to mention several specialized mobile apps you want to sell. Such tests sometimes look professional and are fun to take, even when their advertising nature is obvious.

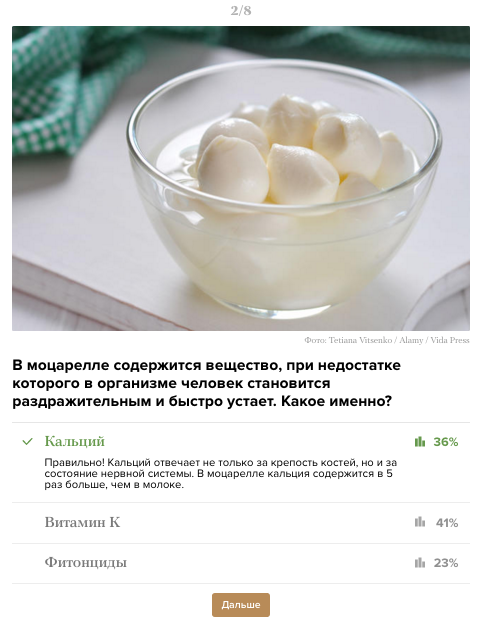

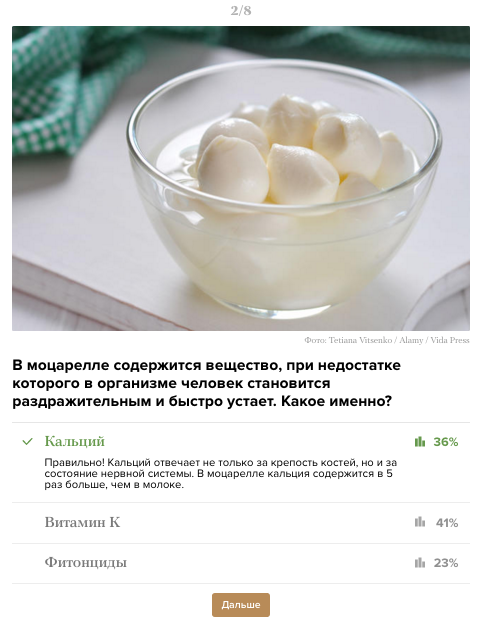

In the picture: one of the questions from a quiz on Italian cheeses (source).

Polls with a shifted purpose. These stand apart from the list of polls with hidden motives. They are used mainly not for manipulation but for a subtler study of respondents' motives when direct questions cannot reveal them.

5. Misunderstanding of the evaluation criteria

Researchers do not always spell out the evaluation criteria, expecting them to be intuitive to participants.

The usual way out of such situations is a Likert scale, which gives more detailed wording for each rating (for example, from "I strongly dislike it" to "I like it very much", or from "Never" to "Very often"). You can find or devise many variants of this scale for a wide range of questions. But it has drawbacks too, because people judge things like the frequency of an action subjectively and differently. If we need a more or less accurate figure rather than people's opinion about the frequency, it is better to phrase the answers as exact intervals (for example: "Less often than once a year" - "..." - "Several times a day").

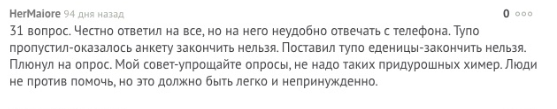

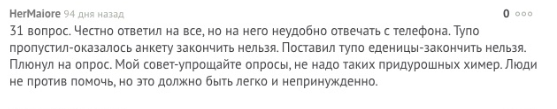

In the picture: participants' comments on a questionnaire survey published on the site (source: pikabu.ru).

Developing the answer options becomes especially important when the questionnaire is used to assess complex, subjectively significant parameters. In such cases respondents tend toward "mental economy". They pick ratings that match their general impression of the object without thinking about the particular question (for example, "I like the game overall, so I'll put 4s and 5s everywhere; it doesn't matter whether I'm rating the sound or the level of feedback from players").

Such averaged ratings are common in 360-degree employee evaluations. When rating colleagues, people tend to give positive scores to those they like and neutral or negative scores to those they dislike, whatever the parameter being rated. A disliked employee may be a strong leader or never get into conflicts, but people "can't bring themselves" to give him high scores even on these points. As a result, such an assessment is useless to the organizers of the study, since it does not reveal the company's real problem areas among its employees.

The same happens when evaluating user experience with IT products. If the user generally likes the site or the company, he will tend to give high ratings across the board so as "not to offend anyone". Such a survey will not help developers improve the product.

In such cases, the height of the researcher's craft is developing "behavioral" answer options. These describe examples of how a quality shows up in a person's behavior (or, when evaluating a product, in how the user interacts with it). With behavioral options it is impossible to tell unambiguously which one is positive and which is negative, so the respondent has to choose the option closest to reality.

In the picture: an example of a question assessing "team orientation" in a 360-degree employee evaluation. Each option contains both positive and negative traits. This increases the likelihood of an honest assessment, as opposed to simply assigning the employee high or low scores (source).

Developing behavioral assessment options is a difficult step. It is especially hard to choose adequate options when it is not entirely clear how the quality shows up in real behavior (or in user interaction with the product). For example, when evaluating sound effects in a game, which should be the highest score? One candidate is "The sounds do not distract from the game and create a good background". Another is "The sounds play a crucial role, help follow the dynamics of the game and prompt players to act". To make the options match real user experience as closely as possible, researchers use the operationalization stage, which I will discuss in detail in the next part of the article.
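The averaged ratings described above (the same high scores for every parameter) can at least be detected after the fact. Below is a minimal Python sketch, assuming numeric scores per rater and parameter. `flag_uniform_raters` and its `min_spread` cut-off are hypothetical. The check only shows the symptom: a rater who genuinely sees a colleague as uniformly strong will be flagged too. Behavioral answer options address the cause.

```python
from statistics import pstdev


def flag_uniform_raters(ratings: dict[str, dict[str, int]], min_spread: float = 0.5) -> list[str]:
    """Return IDs of raters whose scores barely differ across parameters.

    ratings maps a rater ID to {parameter: score}. min_spread is a
    hypothetical cut-off on the population standard deviation of one
    rater's scores; choose it for your own scale, it is not a standard value.
    """
    flagged = []
    for rater, scores in ratings.items():
        values = list(scores.values())
        if len(values) < 2:
            continue  # a single score says nothing about spread
        if pstdev(values) < min_spread:
            flagged.append(rater)
    return flagged


ratings = {
    "rater_a": {"leadership": 5, "conflict_free": 5, "teamwork": 5, "deadlines": 5},
    "rater_b": {"leadership": 5, "conflict_free": 2, "teamwork": 4, "deadlines": 3},
}
print(flag_uniform_raters(ratings))
```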

I have tried to describe cases where survey steps that seem obvious to research organizers are misunderstood by participants. Respondents may misunderstand words and phrases, the wording of questions, the instructions, the evaluation criteria and the focus of questions. These difficulties lead to irrelevant data being collected or questionnaires not being returned.

We also looked at cases where respondents' confusion is provoked deliberately in order to manipulate the survey results. These manipulations include:

- providing the illusion of choice;

- placing emphasis;

- substitution of concepts;

- suggestive wording;

- use of the anchoring effect;

- creating needs: 1) through information and curiosity, 2) through provoking anxiety, 3) through entertaining tests;

- polls with a shifted purpose.

To check whether researchers are manipulating your opinion when presenting questionnaire results, you can ask them several questions about the research procedure:

1. Ask to see the original text of the questionnaire and try filling it out yourself before looking at the results (better still, before approving the study).

2. Imagine how your typical client or employee would fill out this questionnaire (would all the terms and wording be clear to him?).

3. Pay attention to the wording of the questions and answer options: are there negative options, and do they have equal standing with the positive ones?

4. Check whether the questions contain suggestive wording or "anchors".

5. Look at how clearly and concretely the evaluation criteria are formulated: do they provoke "mental economy", that is, neutral or uniformly positive answers? Ideally, the criteria are formulated as behavioral examples.

6. When analyzing the final report, check which questions the conclusions are based on. Do the researchers substitute concepts, or present respondents' opinions as facts?

7. If a foreign instrument is used (for example, one from a well-known company), look for publications describing its translation, adaptation and validation. Without these procedures, an instrument cannot be considered an authoritative research method, regardless of its developer's status.

8. Request the exact breakdown of survey participants by group (gender, age, social status, or departments and positions) to rule out sampling bias (more on this in the previous article, about the sample).
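Once the customer has the breakdown from item 8, comparing it with the population takes only simple arithmetic. Below is a minimal Python sketch, assuming the population shares are known from an outside source such as HR records. `composition_gaps` is a hypothetical helper, the numbers are made up for illustration, and the researcher decides what size of gap is acceptable.

```python
def composition_gaps(counts: dict[str, int], expected_shares: dict[str, float]) -> dict[str, float]:
    """Observed share minus expected share for each group.

    counts: completed questionnaires per group (e.g. gender, department).
    expected_shares: each group's share in the population being studied;
    these must come from an outside source (HR records, statistics), not the survey.
    Positive values mean the group is over-represented, negative under-represented.
    """
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no completed questionnaires")
    return {
        group: counts.get(group, 0) / total - share
        for group, share in expected_shares.items()
    }


# Hypothetical numbers, for illustration only.
gaps = composition_gaps({"women": 30, "men": 10}, {"women": 0.5, "men": 0.5})
for group, gap in gaps.items():
    print(f"{group}: {gap:+.0%}")
```

Groups missing from `counts` count as zero, so a department that returned no questionnaires shows up as fully under-represented rather than silently disappearing.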

In the following articles:

Mistake 2. The wording of the questions: why do you assume you are understood? (Part 2)

Mistake 3. Types of lies in polls: why do you believe the answers?

Mistake 4. Opinion is not behavior: are you really asking what you want to know?

Mistake 5. Types of polls: do you need to find out or to confirm?

Mistake 6. Split and saturate the sample: averages do not help you understand anything.

Mistake 7. The notorious "Net Promoter Score" is NOT an elegant solution.

At least the following parts of a questionnaire study can be distinguished where misunderstanding may arise:

1. Misunderstanding of words and special terms.

2. Misunderstanding of the instructions.

3. Misunderstanding of the wording of the questions.

4. Misunderstanding of the focus of the questions.

5. Misunderstanding of the evaluation criteria.

1. Misunderstanding of words and special terms


Example 1: We studied people of different social status and age groups. Among other methods, we used the T. Leary test. It is an authoritative psychological test, adapted for Russian-speaking respondents and used for decades to assess personality and interpersonal relations. Thousands of studies have been conducted in Russia with this test. Several hundred people had already taken part in our study when we came to survey students at a prestigious university. The students filled out the questionnaires right in the classroom. A few minutes later they began raising their hands and saying that they did not understand some words in the test. Here is the list: soft-bodied, self-incriminating, tyrannical, conceited, flexible, easily located, easily stumbled. This was a revelation for us. It turned out that the adaptation of this classic, authoritative test was already outdated for some respondents of this age and status. The hundreds of questionnaires already collected from respondents of other ages and statuses were now also in doubt. People had not asked us what the words meant, which means they either understood them or were too embarrassed to ask and answered at random.


Example 2: We studied communication between departments in an IT company. In interviews, several of its developers said they got very angry when HR managers copied their way of speaking and inserted English-derived jargon into internal surveys and newsletters. The developers often did this themselves, yet they were annoyed when employees outside their line of work picked up the same slang.

2. Misunderstanding of the instructions


Example 1: We conducted a study among employees of a company with branches in small cities and towns. Branch representatives had come to the capital for training, so it seemed a good idea to survey them all after the training. We addressed the staff, gave instructions and handed out the questionnaires. We promised to e-mail the results to each participant or to bring them the next day. The questionnaire was fairly long, and people filled it out in silence. As soon as the first participant handed in a completed form, a woman at the back of the room got up and headed for the door. When we asked where her questionnaire was, she replied: "I don't understand what this e-mail is, and I don't understand where and what you want to send me. I'd better go." It turned out she had sat there for more than half an hour without understanding our words, too embarrassed to ask about the instructions. For us it was a glaring case that showed how far misunderstanding of the instructions can go. With remote and online surveys, the researcher usually has no way of knowing what went wrong: questionnaires are either not returned or filled out incorrectly.

Example 2. Once we organized a large-scale testing of people of different ages with psychological questionnaires that included a lie scale. By the rules of such tests, results must not be interpreted if the lie score exceeds the established threshold. One participant got a critical score on the lie scale. When we declined to interpret her results, she was extremely surprised: she said she always tries to tell the truth and would certainly not lie in a psychological test. We went through her answers with her. Among the items were, for example, "I always cross the road on a green light", "I always tell the truth" and other items obviously tied to social desirability. She had answered "Yes" to all of them. Then I asked:

"Well, if you think about it, have you really never crossed the road on a red light in your whole life?"

"Of course I have. But I tried to answer the way I think one should behave, not the way I actually behave. All sorts of things happen in life."

It turned out that the woman had understood even a detailed and clear test instruction in her own way.
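The rule "do not interpret results above the lie-scale threshold" works mechanically. Answering "Yes" to every socially desirable statement, as this participant did, pushes the score to its maximum no matter what the respondent meant. Below is a minimal Python sketch, assuming one point per "Yes". The item list and the threshold are hypothetical, and the third item is illustrative only. Real tests use their own validated items, keys and cut-offs.

```python
# Hypothetical lie-scale items: statements that hardly anyone can honestly
# endorse without exception. Real tests use their own validated items and keys.
LIE_ITEMS = (
    "I always cross the road on a green light.",
    "I always tell the truth.",
    "I have never been late for anything.",  # illustrative item, not from the source
)


def lie_score(answers: dict[str, bool]) -> int:
    """One point for every 'Yes' to a lie-scale item; unanswered items count as 'No'."""
    return sum(1 for item in LIE_ITEMS if answers.get(item, False))


def interpretable(answers: dict[str, bool], threshold: int) -> bool:
    """Results may be interpreted only if the lie score does not exceed the threshold."""
    return lie_score(answers) <= threshold


# A respondent who answers "Yes" to everything, as in the example above.
all_yes = {item: True for item in LIE_ITEMS}
print(lie_score(all_yes), interpretable(all_yes, threshold=1))
```

The score cannot tell a deliberate lie from an honest misreading of the instruction. Only a conversation with the respondent revealed the latter here.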

Example 3: We were studying styles of behavior in professional relationships among company directors. As always, we managed to recruit far more women, and the sample was becoming heavily skewed. So we were very happy to learn that the next day we would receive several questionnaires from men, directors of large state organizations. We were ready to start processing the next day, but in the evening one of the directors called me. He said cheerfully that he had filled out our questionnaires together with several other directors. He wanted clarification on some situations in the test, because they had disagreed about which answers to choose. It turned out that the directors, who had come for professional development training, decided to combine socializing with an interesting test. They filled out our questionnaires together in a hotel room, vigorously discussing each situation, arguing and trying to convince each other.

3. Misunderstanding of the wording of questions

There is a rule for designing a questionnaire or planning an interview: "A question is almost the answer." An informative answer can only be obtained from a very well thought-out question. Creating such questions requires a lot of preparatory work (I will describe the stages of this preparation in the next part of the article).

But our experience with researchers shows that almost no one pays attention to such preparation. Responsibility for the answer is often shifted onto the respondent, with the tacit assumption that respondents already have a formed opinion on our question and genuinely want to express it. Often this assumption is completely wrong. Respondents frequently do not share the researchers' concerns, and the language of the questions is often not understood by them either.

In the picture: A still from the movie "The Hitchhiker's Guide to the Galaxy."

Wikipedia: The Answer to the Ultimate Question of Life, the Universe and Everything (...) was "Forty-two." The reaction was:

"Forty-two!" squealed Loonquawl. "Is that all you have to show for seven and a half million years of work?"

"I checked it very thoroughly," said the computer, "and I can state with complete certainty that this is the answer. I think the problem, to be absolutely honest with you, is that you yourselves never knew what the question was."

"But it was the Great Question! The Ultimate Question of Life, the Universe and Everything!" Loonquawl almost howled.

"Yes," said the computer, in the voice of one long-suffering and enlightening a hopeless fool. "But what actually is the question?"

Example 1: A top manager of a manufacturing company once shared a research idea with me. She was finishing her MBA at the time and had become interested in studying the workplace motivation of workers. Motivation is a complex topic to study, so we asked what methods she would use. "What methods are needed here?" she was surprised. "I'll hand them slips of paper with the question 'What motivates you?' and collect the answers." It is a pity that our communication did not continue, and we never found out how the factory workers received her questionnaires or how she used the results of such a study.

Example 2: Another example of a mismatch between the language of research questionnaires and the language of the production workers who fill them out. The HR director of a large industrial holding once boasted to us in a private conversation that over several years he had managed to increase staff engagement by 12% (!). Factory workers and middle managers are a specific group to research. How did he measure their engagement? It turned out he used the Gallup questionnaire.

A clarification is needed here. The biggest drawback of using this questionnaire is not even the vague wording of its questions, which has not been adapted for blue-collar workers (for example, questions No. 5, 6, 8), but its use as a test that computes a single integral engagement score. In other words, even if participants do not understand the wording, the researcher will not analyze the answers item by item or look for outliers, but will simply calculate the total score. The text of the questionnaire is shown in the picture.

In the picture: One of the versions of the Russian translation of the Gallup staff engagement questionnaire (Source: antropos.ru).
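The gap between a total score and item-level analysis is easy to see in code. Below is a minimal Python sketch, assuming answers are coded 1-5 with one row per respondent; the data, the number of items and the 1.5 spread threshold are hypothetical and are not taken from the Gallup questionnaire. The total score gives one number per person, while a per-item look exposes an item that respondents answered at opposite extremes.

```python
from statistics import mean, pstdev

# Hypothetical answers: one row per respondent, one column per item, coded 1-5.
RESPONSES = [
    [4, 1, 4],
    [4, 5, 5],
    [5, 1, 4],
    [4, 5, 4],
]

def total_scores(responses):
    """The integral score: one number per respondent, item-level detail is lost."""
    return [sum(row) for row in responses]

def item_report(responses):
    """Per-item (item_number, mean, population std. deviation), items numbered from 1."""
    report = []
    for i in range(len(responses[0])):
        column = [row[i] for row in responses]
        report.append((i + 1, mean(column), pstdev(column)))
    return report

def flag_items(report, max_spread=1.5):
    """Items whose answers are so spread out that respondents may have read them differently."""
    return [item for item, _, spread in report if spread > max_spread]

print(total_scores(RESPONSES))
print(flag_items(item_report(RESPONSES)))
```

A large spread does not prove misunderstanding, since respondents may genuinely disagree; it only marks the item for a closer look, which a single integral score never prompts.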

We were unable to find any publications describing the adaptation and validation of the Gallup questionnaire (mandatory steps when using translated instruments). Nevertheless, one can find several free translations of it, many recommendations for its use in business publications, and even more offers from consulting companies that use these 12 unvalidated and unadapted questions as their only tool for measuring staff engagement.

Naturally, when a client sees only aggregated engagement scores in the report and, even better, growth in those scores, he rarely asks what methods were used to obtain them.

4. Misunderstanding of the focus of the survey

Misunderstanding the direction of a question is a much more complex and widespread error in questionnaire research. Sometimes it is impossible to predict how a person will interpret a seemingly unambiguous question.

There is an elegant solution to this problem, which we will discuss in the next part. In the meantime, let us look at cases where researchers deliberately hide the true meaning of a question or muddle the wording in order to get the desired answer.

The illusion of choice. In the practice of social manipulation, there is a technique of giving the other person the illusion of choice, in which any answer benefits the manipulator. A classic sales example from fast-food restaurants: when a customer orders coffee, the server asks, "Would you like a muffin or a pie with your coffee?" (The question "Would you like anything with your coffee?" is considered much less effective from a sales point of view.) This technique is often used in questionnaires when the organizer of the research is interested in particular answers.

Example: A few years ago, our company ran a corporate game for middle and senior managers of a well-known factory. Shortly before that, a controlling stake in the plant had been bought by a German company, which immediately began implementing its corporate standards of conduct. In Russian, these standards were a set of rules that were not quite correctly translated and not always clear to the Russian mentality, and we were given the task of designing the game so that management would adopt these standards. Only a few hours were allotted for the game, and we explained right away that in such a short time it is impossible to form behavioral patterns or even to develop acceptance of unfamiliar norms. We agreed that the goal of the game would be to reduce the emotional tension that the new standards had caused. So, we developed games and contests,

The game went well: all the managers were engaged and left satisfied. A few days later, the company's HR department asked us to run a survey of satisfaction with the game so that they could report to management. We understood that the employees had not adopted the new norms, let alone turned them into habits. But we had achieved our goal of reducing stress. So our questionnaire focused exclusively on positive emotions after the game (see figure).

In the picture: A fragment of the feedback questionnaire for the game participants.

Questions 5, 6, and 9 are questions with the illusion of choice. They look fairly complete. But if you look closely, every answer either reflects a positive assessment or shifts responsibility onto the respondent (along the lines of "the game wasn't bad; I just still don't understand the standards of conduct that have been explained to me for several months now", or "I already understand the essence of the standards well").

The last question is another example of the illusion of choice: all of its options are positive. When writing it, we knew how the results would look in the report: how the positive effects we had built into the questionnaire in advance would appear in the charts, and how pleasant it would be for the manager to look at them. A person viewing such a report rarely asks whether the questionnaire offered any negative answers at all.

Emphasis

If the client of the research is not interested in the original text of the questionnaire and does not check which options were offered to participants, the organizers can change the meaning of the results by placing emphasis.

Example: The recent publication of the results of a Russian public opinion survey caused heated discussion on the Internet (see figure). The discussion mostly revolves around the term "satisfactory". For our article, what is interesting is how the survey results were transformed in the headline through wordplay in Russian. The headline reads "Half of Russians are satisfied with the country's economy", while the body of the article says that only 6% rated the economy positively. If only the "significant" results had been presented in the report and the positive-rating option had been left out, then, thanks to the richness of meanings in the language, the word "satisfies" would have been perceived as a very good poll result (rather than as a mediocre grade of 3 out of 5).

In the picture: a fragment of a news item about the results of the opinion poll (source: www.solidarnost.org).

Example 2: Another amusing example of emphasis. If you type the phrase "almost half of Russians do not trust the police" into a search engine, you get links to vedomosti.ru, rbc.ru, echo.msk.ru and others. If you type "almost half of Russians trust the police", you get links to ria.ru, news.sputnik.ru, www.business-gazeta.ru, ridus.ru (surprisingly, the last source does not match the screenshot).

In the picture: a screenshot of a news feed showing how different publications place the emphasis (source: joyreactor.cc).

Substitution of concepts. In fact, the practice of giving the client only the research results, without first examining the raw data and how it was collected, leaves a huge field for falsifying data, and for manipulating the results even without any falsification. In such cases, it is important to examine the logic and focus of the questionnaire's questions before analyzing its results.

That is why offers from training and consulting companies to assess the effect of their own staff training or implementation projects themselves, and to give the client a return-on-investment report, look especially amusing. In feedback questionnaires, contractors may ask employees about something else entirely and then substitute concepts in the final report. Yet clients usually accept such reports readily, since they look quite authoritative and require no effort from internal departments.

Example: Recently we were introduced to a company that provides PR services. It was presented to us with pride as one of the few in the industry that gives a clear percentage report on the KPIs (key performance indicators) of its work. Indeed, it is not easy to evaluate the effectiveness of marketing and PR campaigns and to separate the measurements from all the influencing market conditions. We were interested in their method. When we asked how exactly they measure the effectiveness of PR activity and calculate the percentages, it turned out that the plan served as the unit of measurement (for example, "write 10 articles in industry publications"), and the report calculated the share of the plan completed (by this company's logic, if 9 articles are written, the PR KPI is 90% achieved). Of course, there is a distinction between process KPIs and outcome KPIs, and there are businesses where precise execution of the process matters. But this does not apply to areas where the process is worthless if the result is not achieved. And I suspect that by giving the client a numerical report on the effectiveness of the PR campaign, these contractors made a crude substitution of concepts.

Suggestive wording. The tone or orientation of a question can lead the participant to a specific answer. The research organizer benefits from such answers by confirming his own position (for example, when confirming theories or justifying management decisions).

Example: This effect is well illustrated by the "prospect theory" of A. Tversky and D. Kahneman and their research on how people save mental effort (D. Kahneman received the Nobel Prize for research into the irrational nature of human decision-making; A. Tversky had died by then).

In one study of how decisions depend on the way information is presented, known as the "Asian disease" problem, participants were given the following task: "Imagine that the United States is preparing for the outbreak of an unusual 'Asian disease' that is expected to kill 600 people. Two alternative programs to combat the disease have been proposed. Which one would you choose?" The same information was then presented to two different groups of participants in different wording (with positive and negative framing). The authors showed that, in an effort to save effort when making decisions, people prefer to rely on intuition, past experience, and simpler, more optimistic-sounding options.

In the picture: The wording of the "programs" in the "Asian disease" experiment and the participants' preferences.
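The two framings in this experiment describe equivalent outcomes, and a short calculation makes that explicit. The sketch below uses the figures of Tversky and Kahneman's original formulation as it is usually cited (200 people saved for sure, or a 1/3 chance of saving all 600; 400 people die for sure, or a 1/3 chance that nobody dies) and converts every program into expected lives saved. The function and variable names are illustrative.

```python
from fractions import Fraction

TOTAL = 600  # people expected to die if nothing is done

def expected_saved(outcomes):
    """outcomes: list of (probability, people_saved) pairs; returns the expected number saved."""
    return sum(p * saved for p, saved in outcomes)

programs = {
    # Positive ("saved") framing
    "A": [(Fraction(1), 200)],
    "B": [(Fraction(1, 3), 600), (Fraction(2, 3), 0)],
    # Negative ("die") framing, converted to people saved = TOTAL - deaths
    "C": [(Fraction(1), TOTAL - 400)],
    "D": [(Fraction(1, 3), TOTAL - 0), (Fraction(2, 3), TOTAL - 600)],
}

for name, outcomes in programs.items():
    print(name, expected_saved(outcomes))
```

All four programs come out at 200 expected lives saved, and A and C describe literally the same outcome. Only the wording differs, yet participants' preferences reversed between the two framings.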

Another well-known example from D. Kahneman's research: if one group of subjects is asked, "Did Gandhi live to 114? How old was he when he died?" and another group, "Did Gandhi live to 35? How old was he when he died?", the first group will estimate Gandhi's lifespan as much longer than the second.

Thus, by cleverly wording questionnaire questions, emphasizing positive options or using suggestive numbers, researchers create an "anchoring effect" and can manipulate participants' answers.

The second way to use suggestive wording is to gradually change the participant's beliefs through the questionnaire itself. Here the questions are arranged so that, from question to question, the respondent is forced to answer in a certain way, encounters his own answers as new ideas, and gradually changes his point of view. An extension of this method is need-creating questions (see the next paragraph).

Example: I myself once became the victim of an aggressive manipulative survey. It was conducted by a reputable organization that invited people from all over the world to take an online survey. The study was presented as "Evaluate how well you eat." The beginning of the questionnaire was standard, with questions about various foods, but gradually it narrowed down to filtering questions about meat (do you eat meat, how many times a week, etc.). After my answers, the following questions started asking me to look at photographs and videos of how animals are slaughtered on farms, how carcasses are butchered, how waste is disposed of, etc. Very soon the questions took on this character: "Knowing how animals suffer on farms, will you still eat meat regularly?" and "Do you think you should switch to plant proteins, which are just as healthy and nutritious, to stop the abuse of animals?" The questions rained down; no one was interested in my diet anymore. It seemed that the flow could only be ended by answering in the spirit of "I have understood everything and will now go and convince everyone I meet." This was the first time filling out a questionnaire had been such a traumatic experience for me. I stopped answering, and it took me a while to regain confidence in my own views on nutrition, which this survey had badly shaken.

Need-creating surveys. Creating needs in participants through carefully worded questions is another tactic of manipulation and sales, and questionnaires give selling companies good support here.

Hardly anyone is fooled anymore by pseudo-sociological polls like "Do you care for your skin properly?" or "Do you know everything about cleansing the body?", where the third question is already "Are you familiar with the products of company N?". About ten years ago such polls were very popular in our country.

Today the tactics of creating needs are used more subtly. The first option is to covertly inform the participant, through the questionnaire, about the company's product range and new products, which creates awareness and arouses curiosity (see figure).

In the picture: a fragment of a questionnaire recommended by the Ministry of Health to identify adolescents at risk of drug use. Among the questions was also: "How many times, if ever, have you sniffed inhalants (glue, aerosols, gasoline) specifically to get 'unusual sensations'?" The questionnaires were meant to be handed out to children aged 10-13, which caused a storm of indignation among parents. (Sources: Ridus.ru, RG.ru).

The second option for creating needs is to offer the client a questionnaire that supposedly helps him figure out what type of service (product) he actually needs. This is not necessarily an unambiguous "policy of evil": such questionnaires really do help clarify the client's needs, but they also help sell very well.

The essence of the sale is that, under the guise of a survey:

a) the respondent is led to the idea that he lacks something or that something is used inefficiently (creating a feeling of anxiety);

b) the respondent is informed about new services (products, options) that will help solve his problem (which he was not aware of before the survey).

An approximate outline of a need-creating questionnaire:

1. Introductory neutral questions on the topic ("icebreakers").

2. Questions about the respondent's current situation that gradually lead him to the idea that he is missing something or that something is outdated.

3. Questions checking whether the respondent understands the consequences of this shortage and what it may mean for him personally or for his business.

4. Questions about whether the respondent is familiar with certain solutions (products, services, technologies).

5. Questions informing the respondent how exactly these solutions help cope with his problem.

6. Questions that steer toward a purchase (informing about the price, discount, terms).

7. Questions confirming that by purchasing this solution the respondent will solve his problems and gain additional benefits.

Example: If I wanted to sell this article using a questionnaire, I would first ask a few formal questions about your experience in questionnaire research and whether you have special training in conducting research (this is where the work on anxiety begins). Then I would ask what procedures you use to establish and prove that respondents correctly understand the instructions, wording and evaluation criteria in your surveys. Then I would ask whether you are familiar with such-and-such examples from your field, where the results of expensive polls had to be thrown out because not all respondents correctly understood the instructions or the essence of the questions. I would mention how embarrassed the organizers of such polls at such-and-such a company were. Then I would ask whether you are familiar with the procedures of conceptualization, operationalization, validation, adaptation, testing and pilot launch, and to what extent you use them. I would then give examples of how these procedures saved expensive research. And finally, I would ask what would need to happen in your company to change its approach to survey research, and how much, in your opinion, an article might be worth that contains an algorithm for applying these procedures to make your research reliable and bring success to your company and to you personally.

In this example, the selling intent is still obvious ("sewn with white thread", as the Russian saying goes), but the closer your respondent is to customer status (for example, filling out a questionnaire on the product's website) and the closer he is to a purchase, the better he will receive such a questionnaire and the easier it will be to increase his purchase through additional sales.

The third type of need-creating questionnaire, very popular now, is the entertaining test. Internet users are still drawn to tests like "Are you good at computer games?" (where you can casually add a few questions listing a certain company's gaming innovations) or "Do you know how to manage your time?" (where it is appropriate to mention several specialized mobile apps you want to sell), etc. Such tests sometimes look professional and deliver positive emotions, even when their advertising nature is obvious.

Example: A few days ago, a friend suggested I take a test on Italian cheeses on the Meduza website. The test was marked with a "partner material" icon, and the cheese producer's brand was mentioned in the title, but my friend had not noticed. He read the questions aloud, and I listened. The test was genuinely interesting.

At some point, already craving a salad with juicy, healthy mozzarella, I jokingly exclaimed:

"Looks like they're selling these cheeses!"

"You're right, look, it says here at the end of the test: 'Well, of course, now we simply have to tell you that U. also produces all the cheeses we mentioned in the test, and more. They are fresh and healthy, and even the calcium in them tastes of Italy.'"

Although we know a lot about marketing tricks and manipulation, we still took this test positively and became interested in the company: the format and usefulness of the content outweighed our awareness of the test organizers' true goals.

One of the test questions is presented in the figure.

In the picture: One of the questions from the Italian cheese test (Source).

Polls with a shifted purpose. These stand apart from the list of polls with hidden motives, since they are used mainly not for manipulation but for a more elegant study of respondents' motives when direct questions will not work.

Example: A student of mine, the director of a private furniture factory, told me about this survey. She was trying to understand the reasons for poor sales in the company's stores. A few months earlier, the factory (which previously produced only upholstered furniture) had started producing kitchens. Accordingly, the company stores began selling kitchens in addition to upholstered furniture. But against the background of stable upholstered furniture sales, kitchens sold very poorly. The director hypothesized that the reason was not poor product quality (she had been able to check real demand) but the sellers' biased attitude: they were sabotaging sales. It was impossible to ask the sellers about this directly, so she organized a survey among them along the lines of "Why, in your opinion, are kitchens not selling well?" The sellers were delighted to finally have the chance to voice all their dissatisfaction with the innovation, and answered the questionnaire eloquently. In this way she managed to confirm her hypothesis, collect the sellers' main prejudices against the new product, understand their resistance and obtain a basis for handling objections (in her case, the objections of the sellers themselves rather than of customers).

5. Misunderstanding of the evaluation criteria

Researchers do not always explain the assessment criteria, expecting them to be intuitive for participants.

Example: Of the dozens of questionnaires sent to me for review, typically about 30% contain questions asking respondents to rate a parameter (service, product) from 1 to 5 points but give no explanation of the values, or even an indication of which end of the scale is positive. Sometimes the whole study goes to waste: the organizers notice diametrically opposed ratings among respondents and only then realize that some respondents put "1" thinking it meant "first place" in a ranking, while others used "1" as the lowest score.

A common way out of such situations is the Likert scale, which gives more detailed wording for each point (for example, from "I strongly dislike it" to "I like it very much", or from "Never" to "Very often"). You can find or devise many wordings of this scale for a variety of questions. But this scale also has drawbacks, since different people may understand, for example, the frequency of an action differently. And if we need not their opinion about frequency but a more or less accurate figure, it is better to give the answer options as exact intervals (for example: "Less often than once a year" - "..." - "Several times a day").
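The "1 means first place" versus "1 means lowest score" confusion leaves a recognizable trace in the data: answers pile up at both ends of the scale while the average looks unremarkable. A minimal Python sketch of such a check, assuming a 1-5 item; the data and the 30% threshold are hypothetical, and this is a heuristic rather than a validated test.

```python
from collections import Counter
from statistics import mean

# Hypothetical answers to one unlabeled 1-5 item.
RATINGS = [1, 1, 1, 5, 5, 5, 1, 5, 4, 2]

def polarity_warning(ratings, low=1, high=5, min_share=0.3):
    """True if answers pile up at BOTH ends of the scale.

    A large share of 1s together with a large share of 5s may mean that
    respondents disagreed about which end is "best". Genuine polarization
    produces the same pattern, so a flag calls for checking, not discarding.
    """
    counts = Counter(ratings)
    n = len(ratings)
    return counts[low] / n >= min_share and counts[high] / n >= min_share

print(mean(RATINGS), polarity_warning(RATINGS))
```

Here the mean is exactly 3, a seemingly neutral result, while 80% of the answers sit at the two extremes. Labeling the scale remains the real fix; a check like this only helps to notice the problem in data that has already been collected.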

In the picture: participants' comments on a questionnaire survey published on the site (source: pikabu.ru).

Developing answer options becomes especially important when the questionnaire is used to assess complex, subjectively significant parameters. In such cases, respondents are prone to "mental economy" and choose ratings that match their overall impression of the object without thinking about the specific question (for example, "I generally like the game, so I'll put 4s and 5s everywhere; it doesn't matter whether I'm rating the sound or the level of feedback from players").

Such averaged ratings are common in 360-degree employee evaluations. When rating colleagues, people tend to give positive scores to those they like and neutral or negative scores to those they dislike, regardless of the parameter being rated (a disliked employee may be a strong leader or never get into conflicts with anyone, but raters "can't bring themselves" to give him high scores even on these criteria). As a result, such an assessment is completely useless to the organizers of the study, since it does not reveal the real problem areas among the company's employees.

The situation is exactly the same when evaluating the user experience of IT products. If a user generally likes the site or the company, he will tend to give high ratings across the board so as "not to offend anyone". Such a survey will not help developers improve the product.
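A simple way to spot this "mental economy" in 360-degree or UX data is to look at how much each rater's scores vary across different parameters. A minimal Python sketch, assuming ratings are stored per rater for one person being evaluated on a 1-5 scale; the rater names, competencies and the threshold are hypothetical.

```python
# Hypothetical 360-degree ratings of ONE employee: rater -> {competency: score 1-5}.
RATINGS = {
    "rater_a": {"leadership": 5, "teamwork": 5, "low_conflict": 4},
    "rater_b": {"leadership": 2, "teamwork": 2, "low_conflict": 2},
    "rater_c": {"leadership": 4, "teamwork": 2, "low_conflict": 5},
}

def flag_flat_raters(ratings, max_range=1):
    """Raters whose scores vary by at most max_range across all competencies.

    A flat profile suggests a global like/dislike judgment rather than
    parameter-by-parameter rating. It is a signal to look closer, not proof:
    some employees really are uniformly strong or weak.
    """
    flagged = []
    for rater, scores in ratings.items():
        values = list(scores.values())
        if max(values) - min(values) <= max_range:
            flagged.append(rater)
    return sorted(flagged)

print(flag_flat_raters(RATINGS))
```

With these data, the two raters who give "all 4s and 5s" or "all 2s" are flagged, while the rater whose scores differ by competency is not.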

Example: A funny thing happened to an acquaintance of mine when the Odnoklassniki social network was just starting to grow. She created her account much later than her friends and, not yet knowing the unwritten rules of behavior on the site, began browsing her friends' profiles and rating their photos. Very soon several of her friends blocked her. She was extremely surprised and managed to get one of them to talk so she could find out why. It turned out that her friends (who had long since finished school) were deeply hurt by the low ratings she had given their photos. "How can you not understand? You lowered my overall score with your 2s!" one of them admitted. My acquaintance, unlike most Odnoklassniki users, had been convinced that the photo rating mechanism existed so that people could sincerely comment on the quality of the shot, the color, the perspective, etc., and not to perform the social function of supporting friends.

In such cases, the height of the researcher's skill is developing "behavioral" answer options that describe examples of how a quality shows up in a person's behavior (or, when evaluating a product, in particular aspects of the user's interaction with it). With behavioral options, it is impossible to tell unambiguously which one is positive and which is negative, so the respondent has to choose the option closest to reality.

Example: The following behavioral options could be offered to assess conflict-proneness: from "This person never raises his voice and always stays away from any disagreements or arguments" (the lowest score) to "This person reacts sharply when colleagues do not accept his point of view or when their behavior seems unfair to him; he may raise his voice or even resolve disagreements by force" (the highest score). The remaining intermediate options are worded in the same spirit.

In the picture: An example of a question assessing "team orientation" in a 360-degree employee evaluation. Each option contains both positive and negative traits, which increases the likelihood of an honest assessment, as opposed to simply assigning high or low scores to an employee (Source).
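One way to keep a behaviorally anchored item honest during development is to treat it as a data structure in which every scale point must have its own behavioral description. A minimal Python sketch, assuming a 5-point scale (the article does not specify the number of points); the two anchors are the conflict-proneness examples given above, and the class and field names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class BehavioralItem:
    """A rating item whose every scale point is described by observable behavior."""
    quality: str
    scale_max: int
    anchors: dict[int, str] = field(default_factory=dict)  # score -> behavior description

    def missing_anchors(self) -> list[int]:
        """Scale points that still have no behavioral description."""
        return [p for p in range(1, self.scale_max + 1) if p not in self.anchors]

CONFLICT_PRONENESS = BehavioralItem(
    quality="conflict-proneness",
    scale_max=5,  # assumption: a 5-point scale
    anchors={
        1: "Never raises his voice and always stays away from any disagreements or arguments.",
        5: "Reacts sharply when colleagues reject his view or act unfairly; "
           "may raise his voice or even resolve disagreements by force.",
    },
)

print(CONFLICT_PRONENESS.missing_anchors())
```

The check reports points 2 to 4 as missing: the intermediate options still have to be written in the same spirit, and that is exactly the difficult step the article turns to next.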

Developing behavioral assessment options is a difficult step. It is especially hard to choose adequate options when it is not entirely clear how the quality shows up in real behavior (or in the user's interaction with the product). For example, when evaluating sound effects in a game, should the highest score be "The sounds don't distract from the game and create a good background" or "The sounds play a crucial role, help track the dynamics of the game and prompt players to act"? To make the evaluation options match real user experience as closely as possible, the operationalization stage is used, which I will discuss in detail in the next part of the article.

Brief conclusions

I have tried to describe examples where survey steps that seem obvious to the research organizers are misunderstood by participants. Respondents may misunderstand words and phrases, the wording of questions, the questionnaire instructions, the evaluation criteria and the focus of the questions. These difficulties lead to the collection of irrelevant information or to questionnaires not being returned.

We also examined examples where the confusion of respondents is provoked intentionally in order to manipulate the results of the survey. Among such manipulations:

- providing the illusion of choice;

- placing emphasis;

- substitution of concepts;

- suggestive wording;

- use of the anchoring effect;

- creating needs: 1) through information and curiosity, 2) through provoking anxiety, 3) through entertaining tests;

- polls with a shifted purpose.

To judge whether researchers are manipulating your opinion when presenting the results of a questionnaire survey, you can ask them several questions about the research procedure:

1. Ask to see the original text of the questionnaire and try to fill it out yourself before looking at the results (or better yet, before approving the study).

2. Imagine how your typical client or employee would fill out this questionnaire (whether all terms and wording will be clear to him).

3. Pay attention to the wording of the questions and answer options: are there negative options, and do they carry equal weight with the positive ones?

4. Check whether the questions contain any suggestive wording or "anchors".

5. Look at how clearly and concretely the evaluation criteria are formulated in the questionnaire (do they invite "mental economy", that is, neutral or uniformly positive answers?). Ideally, the assessment criteria are formulated as behavioral examples.

6. When reading the final report, check which questionnaire questions the conclusions are based on: do the researchers substitute concepts, or present respondents' opinions as facts?

7. If a foreign instrument is used (for example, one from a well-known company), look for publications describing the procedure for its translation, adaptation and validation. Without these procedures, an instrument cannot be considered an authoritative research method, regardless of the status of its developer.

8. Request the exact breakdown of survey participants by group (gender, age, social status, or departments and positions) to check for sampling bias (more on this in the previous article about sampling).
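Checklist points 3 and 5 can be partly mechanized once a reviewer has labeled each answer option by tone. A minimal Python sketch, assuming a hypothetical data model in which the tone labels come from a human reviewer (the code only tallies them); the questions are illustrative and only loosely modeled on the game-feedback example.

```python
from dataclasses import dataclass, field

@dataclass
class Option:
    text: str
    tone: str  # assigned by a human reviewer: "positive", "neutral" or "negative"

@dataclass
class Question:
    number: int
    options: list[Option] = field(default_factory=list)
    unlabeled_scale: bool = False  # e.g. "rate from 1 to 5" with no meaning given for the ends

def audit(questions):
    """Return (question_number, issue) pairs for checklist points 3 and 5."""
    issues = []
    for q in questions:
        if q.options:
            tones = [o.tone for o in q.options]
            positive, negative = tones.count("positive"), tones.count("negative")
            if negative == 0:
                issues.append((q.number, "no negative option"))
            elif positive != negative:
                issues.append((q.number, "positive and negative options are unbalanced"))
        if q.unlabeled_scale:
            issues.append((q.number, "scale ends are not labeled"))
    return issues

# Hypothetical questionnaire fragment.
QUESTIONS = [
    Question(1, [Option("Very useful", "positive"), Option("Useful", "positive"),
                 Option("Hard to say", "neutral")]),
    Question(2, [Option("Yes", "positive"), Option("Partly", "neutral"), Option("No", "negative")]),
    Question(3, unlabeled_scale=True),
]

for number, issue in audit(QUESTIONS):
    print(f"Question {number}: {issue}")
```

The code cannot tell whether an option is genuinely negative or merely shifts responsibility onto the respondent, as in the game questionnaire; that judgment stays with the reviewer.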

In the following articles:

Mistake 2. The wording of the questions: why do you think you are understood? (Part 2)

Mistake 3. Types of lies in polls: why do you believe the answers?

Mistake 4. Opinion is not equal to behavior: are you really asking what you want to know?

Mistake 5. Types of polls: do you need to find out or to confirm?

Mistake 6. Segment and saturate the sample: the average does not help you understand anything.

Mistake 7. The notorious "Net Promoter Score" is NOT an elegant solution.