Solvability beltway problems in polynomial time

Students get acquainted with the beltway problem by taking bioinformatics courses, in particular, one of the most qualitative and closest in spirit for programmers - the Bioinformatics course (Pavel Pevzner) at the course of the University of California San Diego. The problem draws attention to the simplicity of the statement, its practical importance, and the fact that it is still considered unsolved, with the seeming opportunity to solve it by simple coding. The problem is put this way. Is it possible in polynomial time to recover the coordinates of a set of pointsif the set of all pair distances between them is known ?

This seemingly simple task is still on the list of unsolved problems of computational geometry. Moreover, the situation does not even concern points in multidimensional spaces, all the more crooked, the problem is the simplest option - when all points have integer coordinates and are localized on one line.

Over the past almost half a century since this task was perceived by mathematicians as a challenge (Shamos, 1975), some results were obtained. Consider two cases possible for a one-dimensional problem:

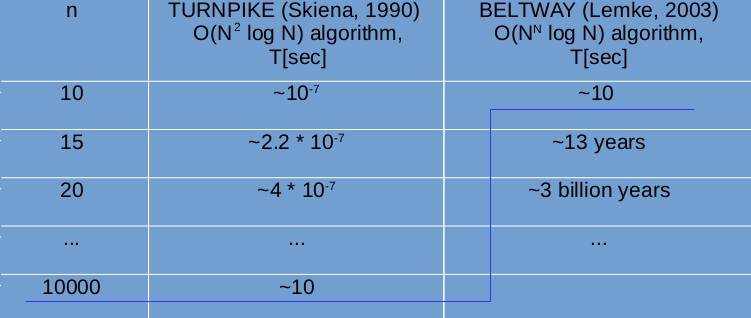

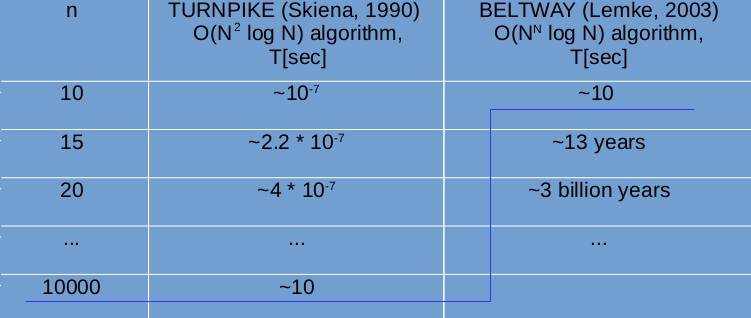

These two cases have received different names for a reason - various efforts are required to solve them. And indeed, the first problem was solved fairly quickly (in 15 years) and a backtracking algorithm was built, which restores the solution on average in quadratic time.where - the number of points (Skiena, 1990); for the second task, this has not been done so far, and the best proposed algorithm has exponential computational complexity(Lemke, 2003). The picture below shows an estimate of how long your computer will work to get a solution for a set with a different number of points.

That is, for a psychologically acceptable time (~ 10 sec.), You can restore manyup to 10 thousand points in the turnpike case and only ~ 10 points for the beltway case, which is completely useless for practical applications.

Small clarification. It is believed that the turnpike problem is solved from the point of view of use in practice, that is, for the vast majority of the data encountered. At the same time, the objections of pure mathematicians to the fact that there are special data sets for which the time for obtaining a solution is exponential are ignored.(Zhang, 1982). In contrast to turnpike, beltway, the problem with its exponential algorithm can in no way be considered practically solved.

At the end of the 20th century, a new pathway for the synthesis of biomolecules was discovered, called the non-ribosomal route of synthesis. Its main difference from the classical synthesis pathway is that the final result of the synthesis is not encoded in DNA at all. Instead, the DNA contains only the code of the “tools” (many different synthetases) that these objects can assemble. Thus, biomachine engineering is substantially enriched, and a cell instead of one type of collector (ribosomes) that works with only 20 standard parts (standard amino acids, also called proteinogenic), many other types of collectors appear that can work with more than 500 standard and non-standard parts (non-proteinogenic amino acids and their various modifications). And these collectors can not just join parts in chains, but also to receive rather intricate designs - cyclic, branching, and also designs with many cycles and branching. All this significantly increases the cell's arsenal for different occasions of its life. The biological activity of such structures is high, the specificity (selectivity of action) is also, the variety of properties is not limited. The class of these cell products in the English-language literature is called NRPs (non-ribosomal products, or non-ribosomal peptides). Biologists hope that it is among these products of cell metabolism that one can find new pharmacological preparations with high efficiency and no side effects due to their specificity. The biological activity of such structures is high, the specificity (selectivity of action) is also, the variety of properties is not limited. The class of these cell products in the English-language literature is called NRPs (non-ribosomal products, or non-ribosomal peptides). Biologists hope that it is among these products of cell metabolism that one can find new pharmacological preparations with high efficiency and no side effects due to their specificity. The biological activity of such structures is high, the specificity (selectivity of action) is also, the variety of properties is not limited. The class of these cell products in the English-language literature is called NRPs (non-ribosomal products, or non-ribosomal peptides). Biologists hope that it is among these products of cell metabolism that one can find new pharmacological preparations with high efficiency and no side effects due to their specificity.

The only question is, how and where to look for NRPs? They are very effective, therefore the cell has absolutely no need to produce them in large quantities, and their concentrations are negligible. So chemical methods of extraction with their low accuracy ~ 1% and huge expenses for reagents and time are useless. Further. They are not encoded in DNA, which means all the databases that are accumulated in deciphering the genome, and all methods of bioinformatics and genomics are also useless. The only way to find something remains physical methods, and, namely, mass spectroscopy. Moreover, the detection rate of a substance in modern spectrometers is so high that it allows you to find insignificant quantities, total> ~ 800 molecules (atto-molar range, or concentration).

"

"

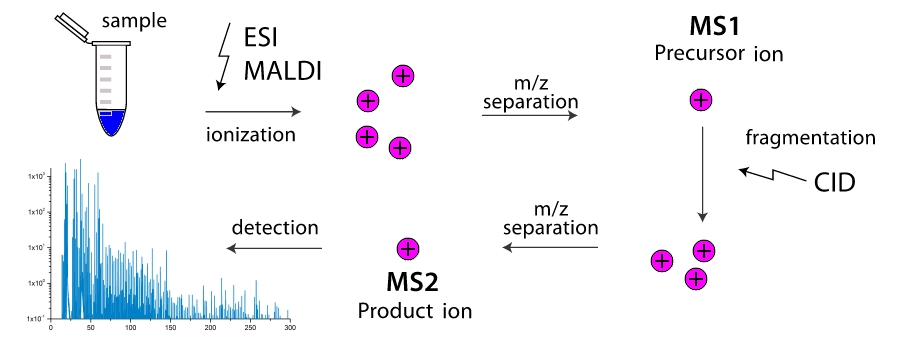

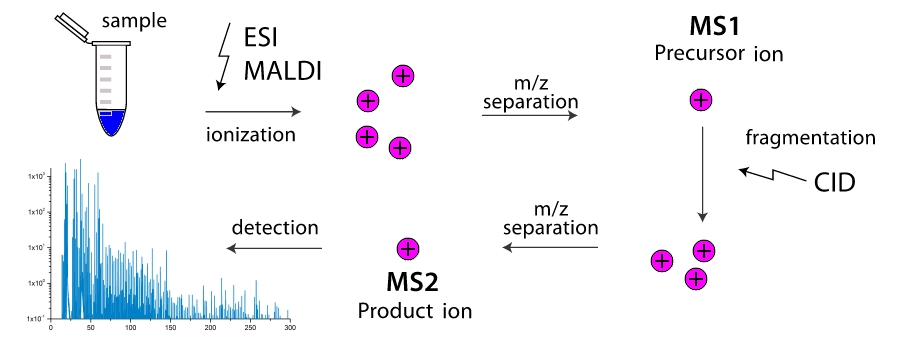

And how does a mass spectrometer work? In the working chamber of the device, molecules break down into fragments (more often by colliding with each other, less often due to external influence). These fragments are ionized and then accelerated and separated in an external electromagnetic field. Separation occurs either by the time it reaches the detector, or by the rotation angle in a magnetic field, because the fragment’s mass is higher and its charge is lower, the more clumsy it is. Thus, the mass spectrometer “weights” the mass of fragments, moreover, vanie "can be a multi-step, multiple pieces of filtering out the one mass (more precisely one value) and driving them through fragmentation with further separation. Two-stage mass spectrometers are widely distributed and are called tandem, multistage mass spectrometers are extremely rare, and are simply referred to aswhere - number of stages. And here the task appears, as knowing only the masses of various fragments of a molecule to know its structure? Here we come to turnpike and beltway problems, for linear and cyclic peptides, respectively.

Let me explain in more detail how the task of restoring the structure of a biomolecule is reduced to these problems using the example of a cyclic peptide.

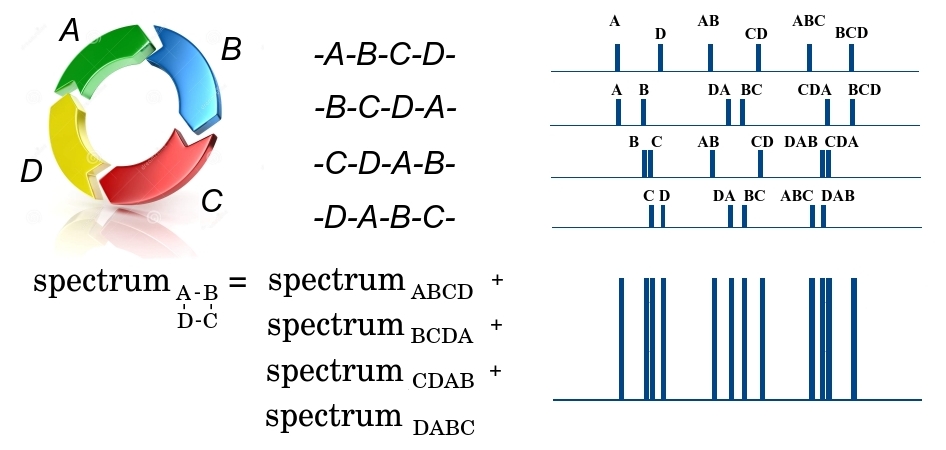

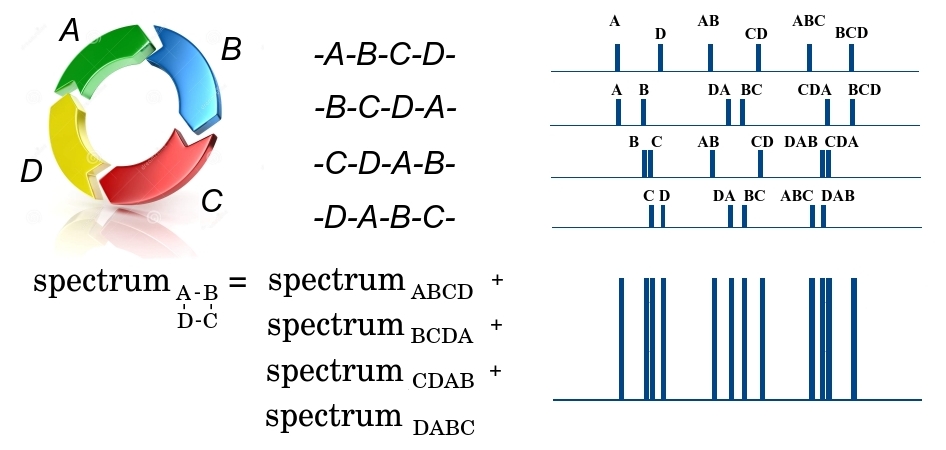

The cyclic ABCD peptide at the first stage of fragmentation can be broken in 4 places, either by the DA bond, or by AB, BC or CD, forming 4 possible linear peptides - either ABCD, BCDA, CDAB or DABC. Since a huge number of identical peptides pass through the spectrometer, at the output we will have fragments of all 4 types. Moreover, we note that all the fragments have the same mass, and cannot be separated at the first stage. At the second stage, the linear fragment of ABCD can be broken in three places, forming smaller fragments with different masses A and BCD, AB and CD, ABC and D, and forming the corresponding mass spectrum. In this spectrum, the mass of the fragment is plotted along the x axis, and the number of small fragments with a given mass along the y axis. Similarly, the spectra are formed for the remaining three linear fragments of BCDA, CDAB and DABC. Since all 4 large fragments passed to the second stage, all their spectra are added. So, the output is some masswhere - some mass, and - the multiplicity of its repetition. At the same time, we do not know which fragment this mass belongs to or whether this fragment is unique, since different fragments can have the same mass. The further the bonds in the peptide are from each other, the greater the mass of the peptide fragment is between them. That is, the task of restoring the order of elements in a cyclic peptide is reduced to a beltway problem, in which the elements of the set are bonds in the peptide, and elements of the set - masses of fragments between bonds.

From my own experience and from communicating with people who tried or actually did something to solve this problem, I noticed that the vast majority of people try to solve it either in the general case or for integer data in a narrow range like this (1, 50). Both options are doomed to failure. For the general case, it was proved that the total number of solutions for beltway problems in the one-dimensional case limited to values (Lemke, 2003) which means the existence of an exponential number of solutions in the asymptotics . Obviously, if the number of solutions grows exponentially, then the time to obtain them cannot grow more slowly. That is, for the general case, a polynomial time solution is impossible. As for the integer data in a narrow range, here everything can be checked experimentally, since it is not too difficult to write code that searches for a solution using the exhaustive search method. For smallThis code will not take very long. The results of testing such a code will show that, under such conditions, for data a complete number of different solutions for any set already at small also growing extremely sharply.

Learning about these facts, you can put up and give up on this task. I admit that this is one of the reasons why the beltway problem is still considered unsolved. However, loopholes do exist. Recall that the exponential functionbehaves very interesting. At first, it grows scary slowly, rising from 0 to 1 for an infinitely large interval fromto 0, then its growth accelerates more and more. However, the smaller the valuethe greater should be the value so that the result of the function transcends a specified value . As such a value is convenient to choose the number, before it is the only solution, after it there are many solutions. Question. Did anyone check the dependence of the number of decisions on what data gets into the input? Yes, I did. There is a remarkable phD dissertation of a Croatian woman mathematician Tamara Dakis (Dakic, 2000), in which she defined what conditions the input data should satisfy in order for the problem to be solved in polynomial time. The most important of them are the uniqueness of the solution and the absence of duplication in the set of input data. The level of her phD dissertation is very high. It is a pity that this student was limited only by the turnpike problem, convinced that if she had turned her interest in the beltway problem that would have been solved long ago.

It was possible to discover the data for which it is possible to construct the desired algorithm by accident. It took additional ideas. The main idea arose from the observation (see above) that the spectrum of a cyclic peptide is the sum of the spectra of all linear peptides that are formed during single ring breaks. Since the amino acid sequence in a peptide can be recovered from any such linear peptide, the total number of lines in the spectrum is significant (in time where - the number of amino acids in the peptide) is excessive. The only question is which lines should be excluded from the spectrum in order not to lose the possibility of restoring the sequence. Since mathematically both tasks (recovery of a cyclic peptide sequence from the mass spectrum and the beltway problem) are isomorphic, it is also possible to puncture many.

Thinning operations were built using local symmetries of the set (Fomin, 2016a).

Theorems have been proved that both operations thin out many but there will still be enough elements in it to restore the solution. . Using these operations, the operation was built and implemented on the c ++ algorithm to solve the beltway problem (Fomin, 2016b). The algorithm differs little from the classical backtracking algorithm (that is, we try to build a solution by iterating over possible options and make returns if we get an error during construction). The only difference is that for the account of the narrowing of the settry us there are significantly fewer options.

Here is an example of how manywhen thinning.

Computational experiments were performed for randomly generated cyclic peptides of lengthfrom 10 to 1000 amino acids (y-axis on a logarithmic scale). The abscissa axis also on a logarithmic scale shows the number of elements in sets thinned by different operations. in units . Such a presentation is absolutely unusual, because I will decipher how it is read by example. Look at the left chart. Let the peptide have a length. For him, the number of elements in the set equally (this is the point on the upper dotted straight line ). After removing from the set repeating elements, the number of elements in reduced to (circles with crosses). After symmetrization the number of elements falls to (black circles), and after the convolution by partial solution before (crosses). Total amount of the setreduced by only 20 times. For a peptide of the same length, but in the right chart, the reduction is from before that is 100 times.

Note that the generation of test examples for the left diagram is done in such a way that the level of duplication at was in the range from 0.1 to 0.3, and for the right - less than 0.1. The level of duplication is defined aswhere - the number of unique elements in the set . This definition gives a natural result: in the absence of duplicates in the set its power is equal to and , with the maximum possible duplication and . How to make provide a different level of duplication, I will say a little later. The diagrams show that the lower the level of duplication, the more thinned outat number of elements in thinned generally reaches its limit because the thinned set is less than elements cannot be obtained (operations retain enough elements to obtain a solution in which elements). The fact of narrowing the power setto the lower limit is very important, it is he who leads to dramatic changes in the computational complexity of obtaining a solution.

After inserting thinning operations into the backtracking algorithm and in solving the beltway problem, a complete analog of what Tamara Dakis was talking about with respect to the turnpike problem was revealed. I will remind you. She said that for turnpike problems it is possible to get a solution in polynomial time if the solution is unique and there is no duplication in. It turned out that a complete lack of duplication is not necessarily necessary (it is hardly possible for real data), it is enough that its level will be sufficiently small. The figure below shows how long it takes to get a solution to the beltway problem depending on the peptide length and the level of duplication in.

In the figure, both the x-axis and y-axis are given on a logarithmic scale. This allows you to clearly see whether the dependence of the count time on the length of the sequenceexponential (straight line) or polynomial (log-shaped curve). As can be seen in the diagrams, with a low level of duplication (right diagram), the solution is obtained in polynomial time. Well, to be more precise, the solution is obtained in quadratic time. This occurs when thinning operations reduce the power of the set to a lower limit., there are few points left in it, returns when selecting options become single, and as a matter of fact, the algorithm ceases to sort out the options, but simply constructs a solution from what is left.

PS Well, I will reveal the last secret regarding the generation of sets in different levels of duplication. This is due to the different accuracy of data presentation. If the data is generated with low accuracy (for example, rounding to integers), then the level of duplication becomes high, more than 0.3. If the data is generated with good accuracy, for example, up to 3 decimal places, then the level of duplication decreases sharply, less than 0.1. And from here follows the last most important remark.

For real data, in conditions of constantly increasing measurement accuracy, the beltway problem becomes solvable in real time.

Literature.

1. Dakic, T. (2000). On the Turnpike Problem. PhD thesis, Simon Fraser University.

2. Fomin. E. (2016a) A Pair of Distances ^ 2 Steps for the Sequencing Problem: I. Theory. J Comput Biol. 2016, 23 (9): 769-75.

3. Fomin. E. (2016b) wise Distance Pair Ste Pair 2 wise Ste ps wise 2 wise Pair Pair 2 ps 2 2 wise Algorithm. J Comput Biol. 2016, 23 (12): 934-942.

4. Lemke, P., Skiena, S., and Smith, W. (2003). Reconstructing Sets From Interpoint Distances. Discrete and Computational Geometry Algorithms and Combinatorics, 25: 597–631.

5. Skiena, S., Smith, W., and Lemke, P. (1990). Reconstructing sets from interpoint distances. In Proceedings of the Sixth ACM Symposium on Computational Geometry, pages 332–339. Berkeley, CA

6. Zhang, Z. 1982. An exponential example for a partial digest mapping algorithm. J. Comp. Biol. 1, 235–240.

This seemingly simple task is still on the list of unsolved problems of computational geometry. Moreover, the situation does not even concern points in multidimensional spaces, all the more crooked, the problem is the simplest option - when all points have integer coordinates and are localized on one line.

Over the past almost half a century since this task was perceived by mathematicians as a challenge (Shamos, 1975), some results were obtained. Consider two cases possible for a one-dimensional problem:

- points are located on the line (turnpike problem)

- points are located on a circle (beltway problem)

These two cases have received different names for a reason - various efforts are required to solve them. And indeed, the first problem was solved fairly quickly (in 15 years) and a backtracking algorithm was built, which restores the solution on average in quadratic time.where - the number of points (Skiena, 1990); for the second task, this has not been done so far, and the best proposed algorithm has exponential computational complexity(Lemke, 2003). The picture below shows an estimate of how long your computer will work to get a solution for a set with a different number of points.

That is, for a psychologically acceptable time (~ 10 sec.), You can restore manyup to 10 thousand points in the turnpike case and only ~ 10 points for the beltway case, which is completely useless for practical applications.

Small clarification. It is believed that the turnpike problem is solved from the point of view of use in practice, that is, for the vast majority of the data encountered. At the same time, the objections of pure mathematicians to the fact that there are special data sets for which the time for obtaining a solution is exponential are ignored.(Zhang, 1982). In contrast to turnpike, beltway, the problem with its exponential algorithm can in no way be considered practically solved.

The importance of solving beltway problems in terms of bioinformatics

At the end of the 20th century, a new pathway for the synthesis of biomolecules was discovered, called the non-ribosomal route of synthesis. Its main difference from the classical synthesis pathway is that the final result of the synthesis is not encoded in DNA at all. Instead, the DNA contains only the code of the “tools” (many different synthetases) that these objects can assemble. Thus, biomachine engineering is substantially enriched, and a cell instead of one type of collector (ribosomes) that works with only 20 standard parts (standard amino acids, also called proteinogenic), many other types of collectors appear that can work with more than 500 standard and non-standard parts (non-proteinogenic amino acids and their various modifications). And these collectors can not just join parts in chains, but also to receive rather intricate designs - cyclic, branching, and also designs with many cycles and branching. All this significantly increases the cell's arsenal for different occasions of its life. The biological activity of such structures is high, the specificity (selectivity of action) is also, the variety of properties is not limited. The class of these cell products in the English-language literature is called NRPs (non-ribosomal products, or non-ribosomal peptides). Biologists hope that it is among these products of cell metabolism that one can find new pharmacological preparations with high efficiency and no side effects due to their specificity. The biological activity of such structures is high, the specificity (selectivity of action) is also, the variety of properties is not limited. The class of these cell products in the English-language literature is called NRPs (non-ribosomal products, or non-ribosomal peptides). Biologists hope that it is among these products of cell metabolism that one can find new pharmacological preparations with high efficiency and no side effects due to their specificity. The biological activity of such structures is high, the specificity (selectivity of action) is also, the variety of properties is not limited. The class of these cell products in the English-language literature is called NRPs (non-ribosomal products, or non-ribosomal peptides). Biologists hope that it is among these products of cell metabolism that one can find new pharmacological preparations with high efficiency and no side effects due to their specificity.

The only question is, how and where to look for NRPs? They are very effective, therefore the cell has absolutely no need to produce them in large quantities, and their concentrations are negligible. So chemical methods of extraction with their low accuracy ~ 1% and huge expenses for reagents and time are useless. Further. They are not encoded in DNA, which means all the databases that are accumulated in deciphering the genome, and all methods of bioinformatics and genomics are also useless. The only way to find something remains physical methods, and, namely, mass spectroscopy. Moreover, the detection rate of a substance in modern spectrometers is so high that it allows you to find insignificant quantities, total> ~ 800 molecules (atto-molar range, or concentration).

"

" And how does a mass spectrometer work? In the working chamber of the device, molecules break down into fragments (more often by colliding with each other, less often due to external influence). These fragments are ionized and then accelerated and separated in an external electromagnetic field. Separation occurs either by the time it reaches the detector, or by the rotation angle in a magnetic field, because the fragment’s mass is higher and its charge is lower, the more clumsy it is. Thus, the mass spectrometer “weights” the mass of fragments, moreover, vanie "can be a multi-step, multiple pieces of filtering out the one mass (more precisely one value) and driving them through fragmentation with further separation. Two-stage mass spectrometers are widely distributed and are called tandem, multistage mass spectrometers are extremely rare, and are simply referred to aswhere - number of stages. And here the task appears, as knowing only the masses of various fragments of a molecule to know its structure? Here we come to turnpike and beltway problems, for linear and cyclic peptides, respectively.

Let me explain in more detail how the task of restoring the structure of a biomolecule is reduced to these problems using the example of a cyclic peptide.

The cyclic ABCD peptide at the first stage of fragmentation can be broken in 4 places, either by the DA bond, or by AB, BC or CD, forming 4 possible linear peptides - either ABCD, BCDA, CDAB or DABC. Since a huge number of identical peptides pass through the spectrometer, at the output we will have fragments of all 4 types. Moreover, we note that all the fragments have the same mass, and cannot be separated at the first stage. At the second stage, the linear fragment of ABCD can be broken in three places, forming smaller fragments with different masses A and BCD, AB and CD, ABC and D, and forming the corresponding mass spectrum. In this spectrum, the mass of the fragment is plotted along the x axis, and the number of small fragments with a given mass along the y axis. Similarly, the spectra are formed for the remaining three linear fragments of BCDA, CDAB and DABC. Since all 4 large fragments passed to the second stage, all their spectra are added. So, the output is some masswhere - some mass, and - the multiplicity of its repetition. At the same time, we do not know which fragment this mass belongs to or whether this fragment is unique, since different fragments can have the same mass. The further the bonds in the peptide are from each other, the greater the mass of the peptide fragment is between them. That is, the task of restoring the order of elements in a cyclic peptide is reduced to a beltway problem, in which the elements of the set are bonds in the peptide, and elements of the set - masses of fragments between bonds.

Anticipation of the possibility of the existence of an algorithm with a polynomial time to solve beltway problems

From my own experience and from communicating with people who tried or actually did something to solve this problem, I noticed that the vast majority of people try to solve it either in the general case or for integer data in a narrow range like this (1, 50). Both options are doomed to failure. For the general case, it was proved that the total number of solutions for beltway problems in the one-dimensional case limited to values (Lemke, 2003) which means the existence of an exponential number of solutions in the asymptotics . Obviously, if the number of solutions grows exponentially, then the time to obtain them cannot grow more slowly. That is, for the general case, a polynomial time solution is impossible. As for the integer data in a narrow range, here everything can be checked experimentally, since it is not too difficult to write code that searches for a solution using the exhaustive search method. For smallThis code will not take very long. The results of testing such a code will show that, under such conditions, for data a complete number of different solutions for any set already at small also growing extremely sharply.

Learning about these facts, you can put up and give up on this task. I admit that this is one of the reasons why the beltway problem is still considered unsolved. However, loopholes do exist. Recall that the exponential functionbehaves very interesting. At first, it grows scary slowly, rising from 0 to 1 for an infinitely large interval fromto 0, then its growth accelerates more and more. However, the smaller the valuethe greater should be the value so that the result of the function transcends a specified value . As such a value is convenient to choose the number, before it is the only solution, after it there are many solutions. Question. Did anyone check the dependence of the number of decisions on what data gets into the input? Yes, I did. There is a remarkable phD dissertation of a Croatian woman mathematician Tamara Dakis (Dakic, 2000), in which she defined what conditions the input data should satisfy in order for the problem to be solved in polynomial time. The most important of them are the uniqueness of the solution and the absence of duplication in the set of input data. The level of her phD dissertation is very high. It is a pity that this student was limited only by the turnpike problem, convinced that if she had turned her interest in the beltway problem that would have been solved long ago.

Obtaining a polynomial algorithm for solving beltway problems

It was possible to discover the data for which it is possible to construct the desired algorithm by accident. It took additional ideas. The main idea arose from the observation (see above) that the spectrum of a cyclic peptide is the sum of the spectra of all linear peptides that are formed during single ring breaks. Since the amino acid sequence in a peptide can be recovered from any such linear peptide, the total number of lines in the spectrum is significant (in time where - the number of amino acids in the peptide) is excessive. The only question is which lines should be excluded from the spectrum in order not to lose the possibility of restoring the sequence. Since mathematically both tasks (recovery of a cyclic peptide sequence from the mass spectrum and the beltway problem) are isomorphic, it is also possible to puncture many.

Thinning operations were built using local symmetries of the set (Fomin, 2016a).

- Symmetrization. The first operation is to select an arbitrary element of the set and remove from all elements except those that have symmetric pairs about points and where - the circumference (I remind you that in the beltway case all points are located on the circle).

- Partial solution convolution. The second operation uses the presence of a guess about the solution, that is, knowledge of individual points that belong to the solution, the so-called partial solution. Here from the setall elements are also deleted, except those that satisfy the condition - if we measure the distances from the point being checked to all points of the partial solution, then all the obtained values are in. I will specify that if at least one of the distances obtained is absent inthen the dot is ignored.

Theorems have been proved that both operations thin out many but there will still be enough elements in it to restore the solution. . Using these operations, the operation was built and implemented on the c ++ algorithm to solve the beltway problem (Fomin, 2016b). The algorithm differs little from the classical backtracking algorithm (that is, we try to build a solution by iterating over possible options and make returns if we get an error during construction). The only difference is that for the account of the narrowing of the settry us there are significantly fewer options.

Here is an example of how manywhen thinning.

Computational experiments were performed for randomly generated cyclic peptides of lengthfrom 10 to 1000 amino acids (y-axis on a logarithmic scale). The abscissa axis also on a logarithmic scale shows the number of elements in sets thinned by different operations. in units . Such a presentation is absolutely unusual, because I will decipher how it is read by example. Look at the left chart. Let the peptide have a length. For him, the number of elements in the set equally (this is the point on the upper dotted straight line ). After removing from the set repeating elements, the number of elements in reduced to (circles with crosses). After symmetrization the number of elements falls to (black circles), and after the convolution by partial solution before (crosses). Total amount of the setreduced by only 20 times. For a peptide of the same length, but in the right chart, the reduction is from before that is 100 times.

Note that the generation of test examples for the left diagram is done in such a way that the level of duplication at was in the range from 0.1 to 0.3, and for the right - less than 0.1. The level of duplication is defined aswhere - the number of unique elements in the set . This definition gives a natural result: in the absence of duplicates in the set its power is equal to and , with the maximum possible duplication and . How to make provide a different level of duplication, I will say a little later. The diagrams show that the lower the level of duplication, the more thinned outat number of elements in thinned generally reaches its limit because the thinned set is less than elements cannot be obtained (operations retain enough elements to obtain a solution in which elements). The fact of narrowing the power setto the lower limit is very important, it is he who leads to dramatic changes in the computational complexity of obtaining a solution.

After inserting thinning operations into the backtracking algorithm and in solving the beltway problem, a complete analog of what Tamara Dakis was talking about with respect to the turnpike problem was revealed. I will remind you. She said that for turnpike problems it is possible to get a solution in polynomial time if the solution is unique and there is no duplication in. It turned out that a complete lack of duplication is not necessarily necessary (it is hardly possible for real data), it is enough that its level will be sufficiently small. The figure below shows how long it takes to get a solution to the beltway problem depending on the peptide length and the level of duplication in.

In the figure, both the x-axis and y-axis are given on a logarithmic scale. This allows you to clearly see whether the dependence of the count time on the length of the sequenceexponential (straight line) or polynomial (log-shaped curve). As can be seen in the diagrams, with a low level of duplication (right diagram), the solution is obtained in polynomial time. Well, to be more precise, the solution is obtained in quadratic time. This occurs when thinning operations reduce the power of the set to a lower limit., there are few points left in it, returns when selecting options become single, and as a matter of fact, the algorithm ceases to sort out the options, but simply constructs a solution from what is left.

PS Well, I will reveal the last secret regarding the generation of sets in different levels of duplication. This is due to the different accuracy of data presentation. If the data is generated with low accuracy (for example, rounding to integers), then the level of duplication becomes high, more than 0.3. If the data is generated with good accuracy, for example, up to 3 decimal places, then the level of duplication decreases sharply, less than 0.1. And from here follows the last most important remark.

For real data, in conditions of constantly increasing measurement accuracy, the beltway problem becomes solvable in real time.

Literature.

1. Dakic, T. (2000). On the Turnpike Problem. PhD thesis, Simon Fraser University.

2. Fomin. E. (2016a) A Pair of Distances ^ 2 Steps for the Sequencing Problem: I. Theory. J Comput Biol. 2016, 23 (9): 769-75.

3. Fomin. E. (2016b) wise Distance Pair Ste Pair 2 wise Ste ps wise 2 wise Pair Pair 2 ps 2 2 wise Algorithm. J Comput Biol. 2016, 23 (12): 934-942.

4. Lemke, P., Skiena, S., and Smith, W. (2003). Reconstructing Sets From Interpoint Distances. Discrete and Computational Geometry Algorithms and Combinatorics, 25: 597–631.

5. Skiena, S., Smith, W., and Lemke, P. (1990). Reconstructing sets from interpoint distances. In Proceedings of the Sixth ACM Symposium on Computational Geometry, pages 332–339. Berkeley, CA

6. Zhang, Z. 1982. An exponential example for a partial digest mapping algorithm. J. Comp. Biol. 1, 235–240.