CROC's integrated development stand for 1C and beyond

Development experience accumulated on large, complex projects takes the form of useful tools and engineering practices, and these should feed back into the development process and be rethought over time. Recognizing that this experience is a valuable artifact in its own right, and wanting to keep growing, we concluded that the tools and practices had to be introduced into our current processes. So we launched a fundamental revision of our approach to solution design and to the development process as a whole. There is no point in describing the typical limitations and shortcomings of the "classic" approach to team development in the 1C world; much has already been said on the subject. I will describe only the patterns that allowed us to make these flaws small and almost harmless.

So get acquainted, integrated development booth!

Designing the architecture of the stand, we sought to cover the entire life cycle of the solutions implemented on the projects, implement the experience gained, standardize the processes, automate the routine. At the same time, it was necessary to lay the development potential, to preserve the ability to scale and maximum simplicity, in terms of maintenance, development and user experience. Tools and techniques that have proven their effectiveness in practice should be added to the process. And all useless, interfering with the work should be removed.

The life cycle of any solution practically does not change depending on the scale and complexity of the project. It includes: analysis, design, development, testing, implementation, maintenance, decommissioning. To obtain maximum efficiency, each of these processes should be verified and coordinated with the preceding and subsequent processes, combined into a kind of automated conveyor that can be replicated for an unlimited number of projects. The task was solved by the implementation of the system in the form of microservices connected via exported APIs in isolated containers, which can be deployed both in the cloud service and in the local network of the enterprise.

Here is our stack, which implements the process:

We tried to minimize the use of paid services with closed source code, which had a positive impact on the cost of ownership. Virtually all services are open source and run on Linux.

The process is designed so that we get the most out of each member of the development team, and standardization and automation eliminates excessive complexity and routine.

One of the most significant stages at the start of a project is to design a future solution based on requirements analysis. The main task is to understand the most clearly, unambiguously and quickly the architecture of the future solution, in terms understood by both the developer / engineer and the consultant. Describe the metadata, algorithms realizable business processes. At the same time, I wanted to maximize the use of ready-made templates that can be quickly customized for specific conditions, which can be adapted to the input data and receive project documentation at the output.

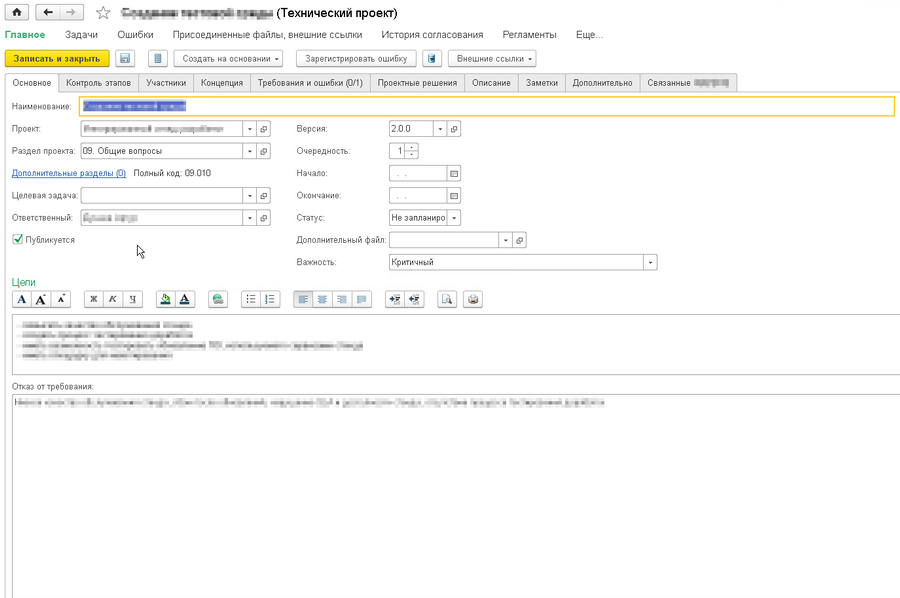

We implemented the configuration of "1C: DSS" as a single interface for designing application solutions, significantly reworked the concept of describing business processes and functions, as well as the design of TP (FDR). We also automated the processes of generating documentation through integration with the functionality of “1C: DO” and microservices for generating documents in the docx format.

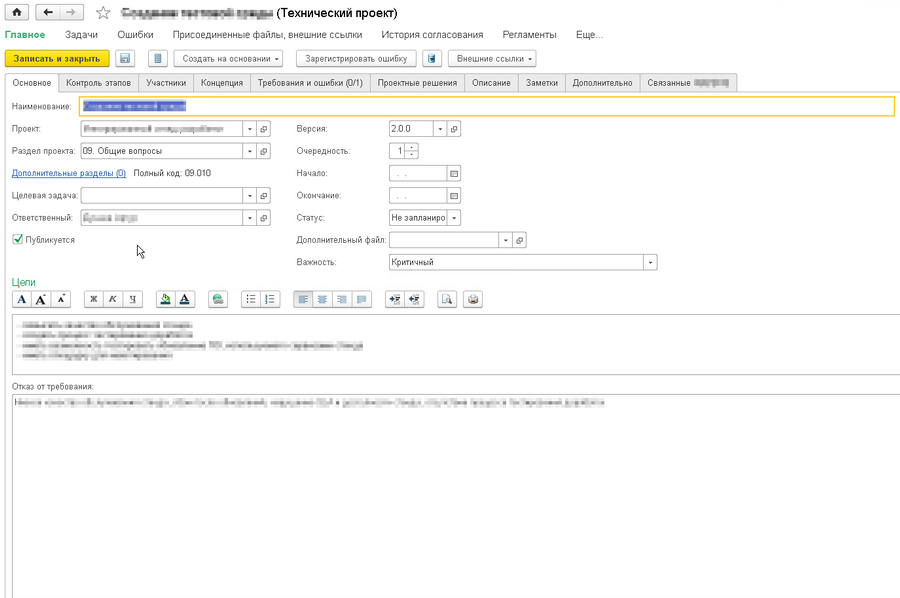

"1C: DSS." Editing information about the project:

"1C: DSS." Report on the processes:

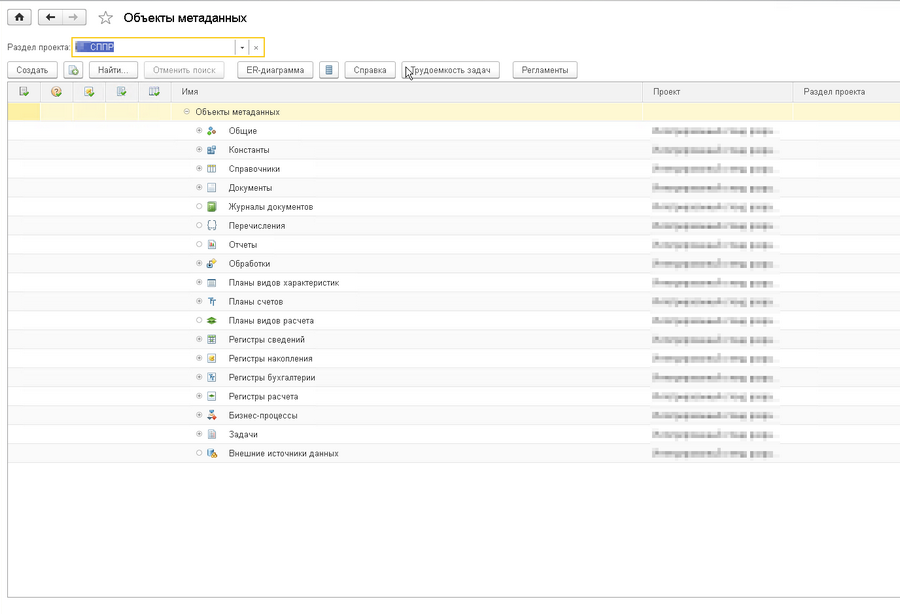

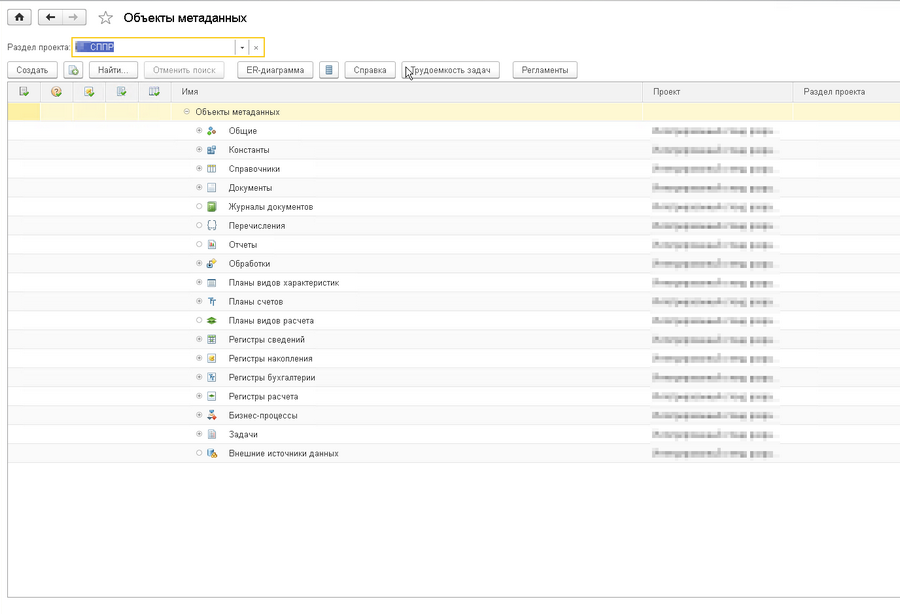

"1C: DSS." Editing object metadata:

"1C: DSS". Editing a business process diagram:

By the way, we can visualize the relationship between business processes and objects in the system, create a list of improvements based on the registered requirements and obtain project documentation automatically, which simplifies the change management process. So, plan in detail the development process, see the complexity, the connectedness of tasks, more accurately determine the timing and order of their implementation.

Of course, one cannot say that the design process has changed dramatically, but the unification of organizational approaches and the automation of many functions already contribute to the quality of projects.

We have tried to focus on the possibility of comprehensive, continuous, automated quality control of the developed code in order to ensure compliance with the standard established by CROC. Moreover, even if we involve third-party development teams, the methods, tools, and development standards of which may differ significantly from ours.

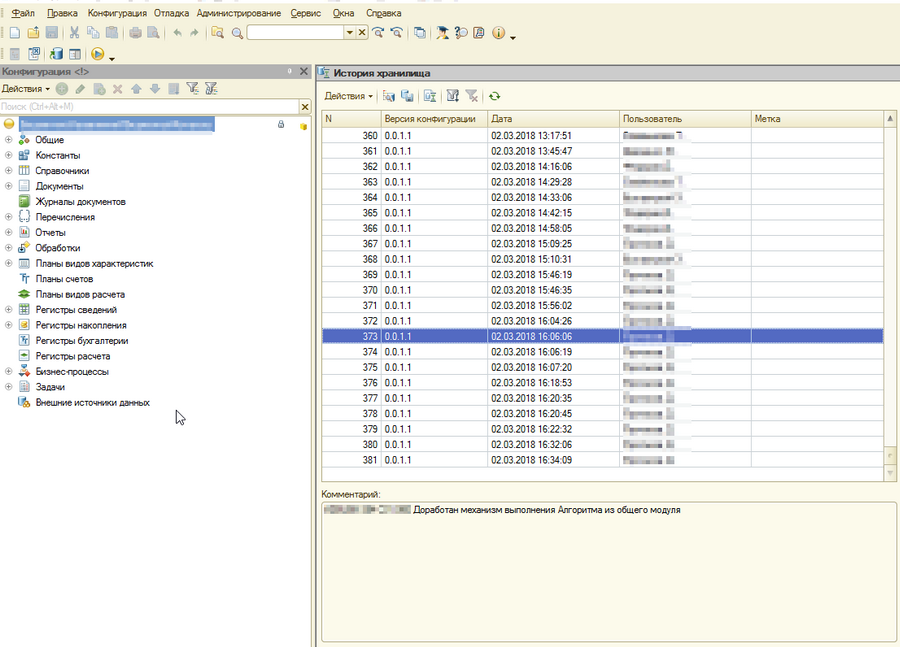

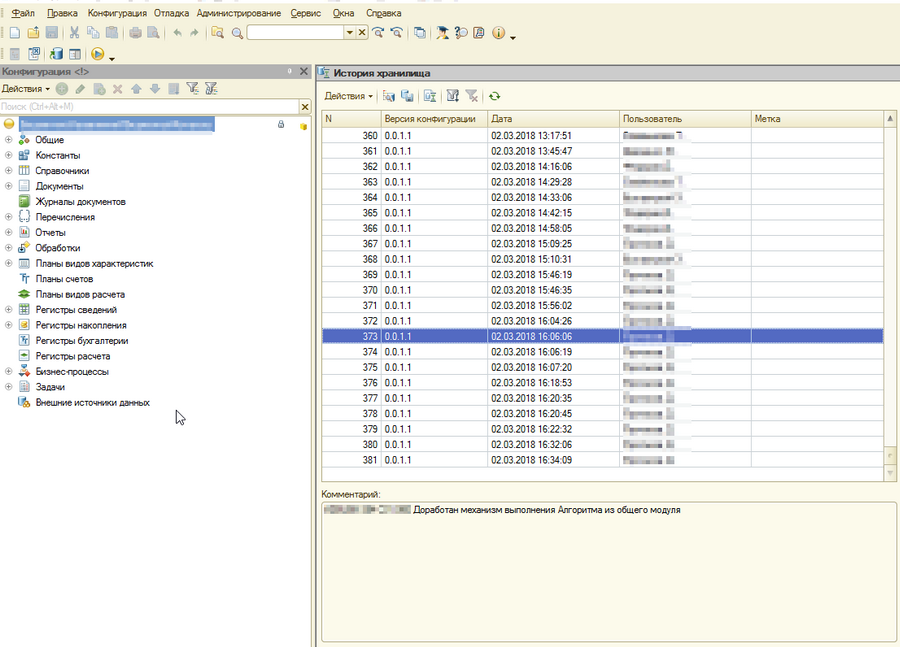

At the stand, each developer commit automatically starts the procedure for parsing the configuration in the directory and file structure through the Gitsync service. The resulting changes are indexed and placed in the Git repository. In our case, this is the Gitlab service. The commit message is automatically generated from the text of the comment entered when changes are placed in the configuration repository, and the author of the commit in the version control system is associated with the user of the configuration repository. During the analysis of the text commentary, we can get information about the development task and labor costs, transfer it to the task tracking system, for example, Jira. It gives a comprehensive picture of the development history. For example, we can find the author by line of code,

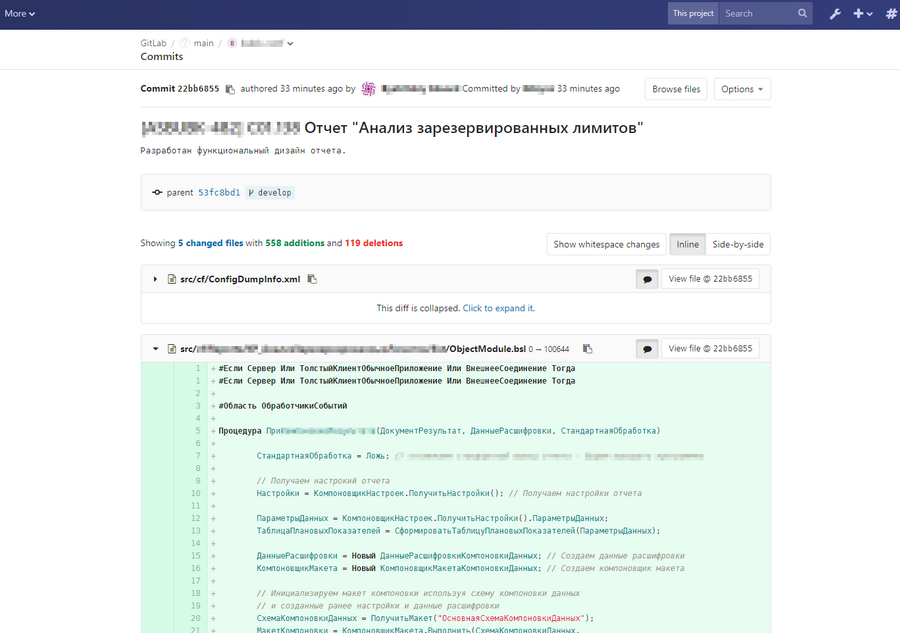

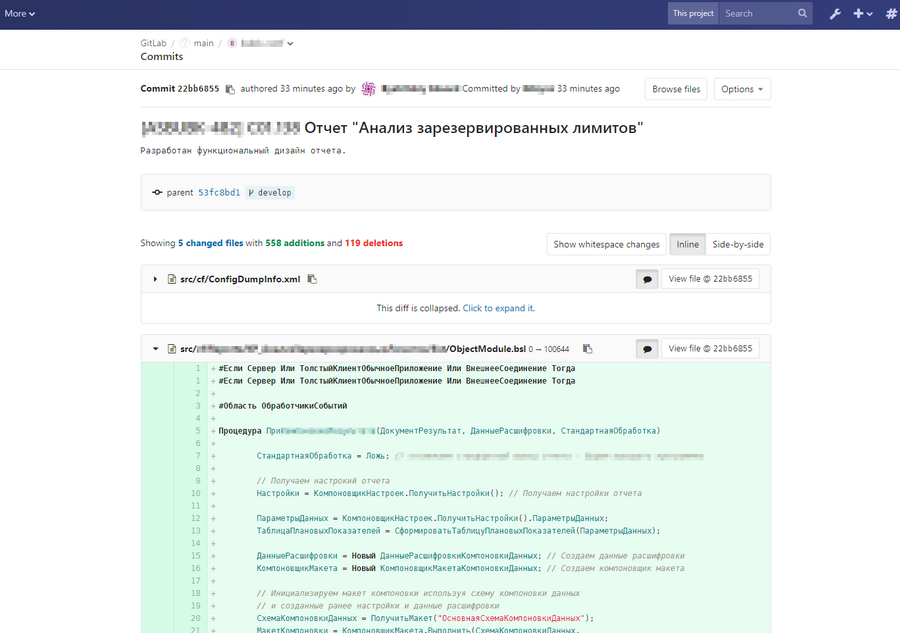

Gitlab. Now it is possible to comment on any line placed by the commit code:

Gitlab. Conduct a “Code Review” with syntax highlighting:

Gitlab. Get a clear picture of changes in the code in the new commit:

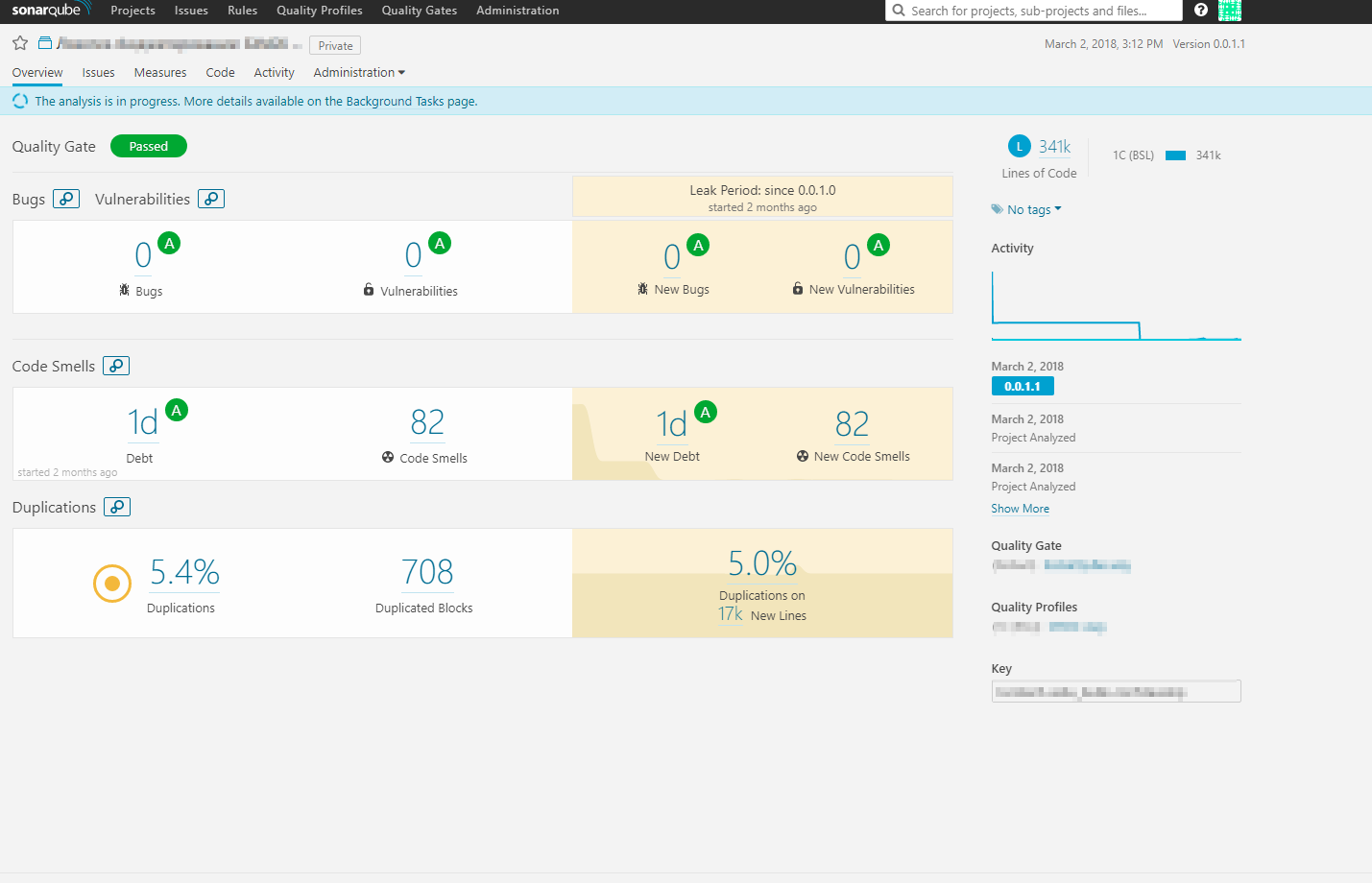

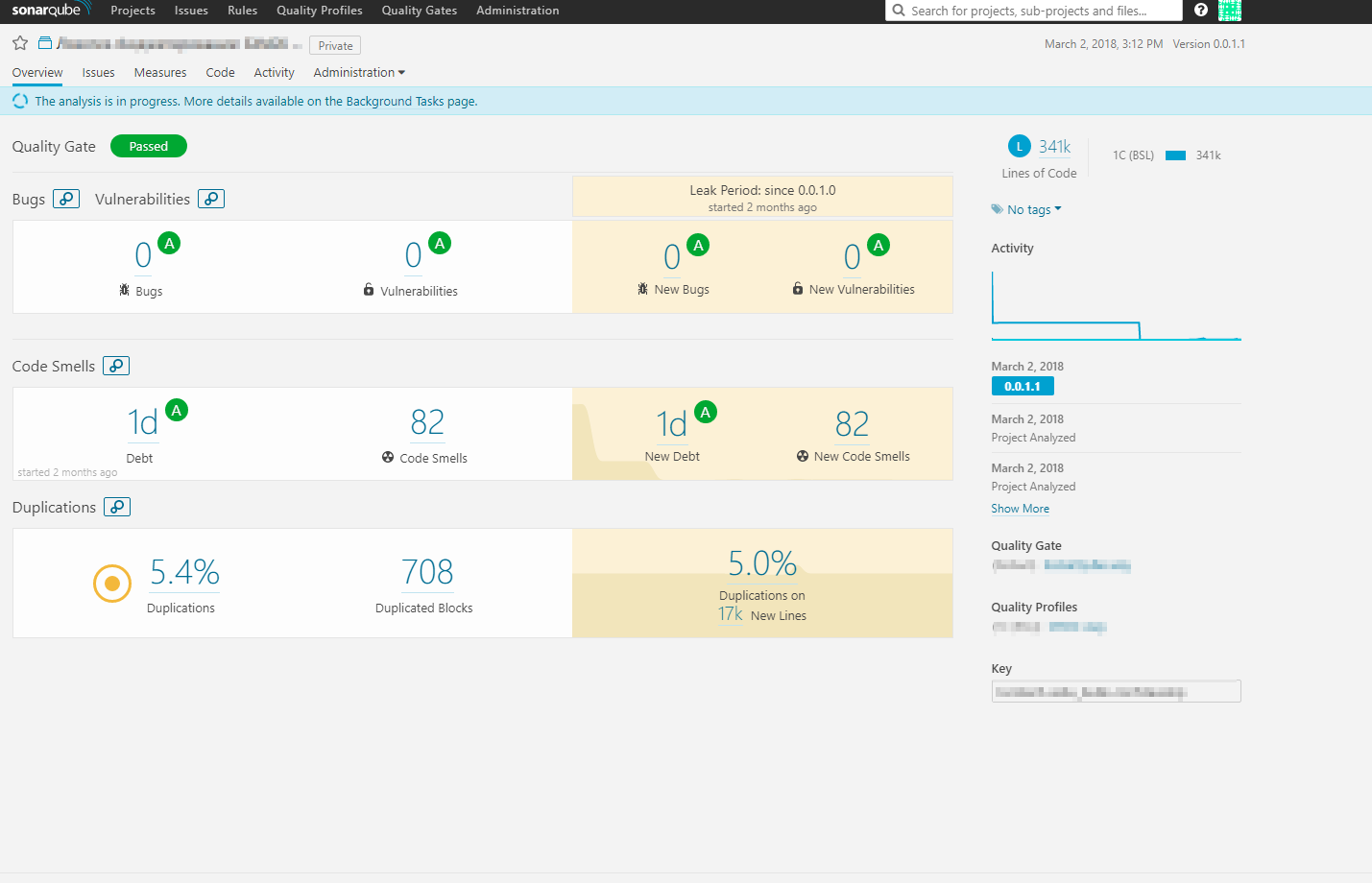

After each commit in the repository, the static code inspection procedure by the SonarQube service automatically starts. The BSL commit code is checked for compliance with a set of rules (quality profile) describing the code development standard. The procedure is performed both for the code of the configuration being developed, and for external mechanisms: extensions, external reports and processing, and, in principle, any other project code, even in other languages.

SonarQube:

Each check updates the information of tracked codebase quality metrics, such as:

The metrics collected as a result of the check provide a visual representation of the current state of the project code base, allow us to assess the quality, identify risks, and promptly correct errors.

The quality profile can be extended by its own sets of rules through XPath, as well as by issuing new rules as part of its own implementation of the plug-in for 1C. This allows you to flexibly manage quality requirements based on the realities of a particular solution.

Automated start of configuration parsing, code inspection, automated testing, etc. launches a continuous integration service (Jenkins service). The number and nature of such assembly lines can be changed in accordance with the specifics of a project.

Despite the relative complexity of the process described, all pipeline mechanisms that perform the routine are hidden in the cloud service. And the developer deals with the configurator interface, which is familiar to him, and can also develop his skills using more advanced tools. For example, git, repository for external mechanisms in conjunction with third-party code editors and SonarLint, SourceTree, etc.

In the general case, the developer connects the information base to the 1C configuration storage service, writes the code and places it into this service, thereby starting the process hidden from it on the stand. If, as a result of checking a commit, the code reveals flaws, the developer receives an email notification (or chat bot message) with a link to the error description and recommendations for correction and a temporary assessment of labor costs in the SonarQube service interface. After correcting the error, a new commit occurs and the process is repeated, the edited tasks are automatically closed ... technical debt is reduced. By the same logic, an automated testing process is built, where each commit initiates the launch of the deployment of the test environment, connects the necessary tests from the test library.

It is difficult to overestimate the effect of continuous, comprehensive verification of the code, followed by automated testing of the developed functionality. This allows you to get rid of far-reaching consequences, make the development stages transparent, and together with properly aligned processes, objectively assess the qualifications of developers, which eliminates the risks of dependence on contractors. Evaluating the parameters of the current code base, we can quickly identify and mitigate emerging risks, correct design flaws, and respond to changing requirements in a timely manner.

The modular organization of the architecture of the stand allows you to build in the process of new modules, replicate the solution for the required number of projects. Schematically, it looks like this:

Above, I have already spoken about the service of continuous testing, which is integrated into the pipeline of the development process. At the moment, the test of the smoke tests and unit tests has been implemented on the stand. The autotest functionality is implemented using the xUnitFor1C framework.

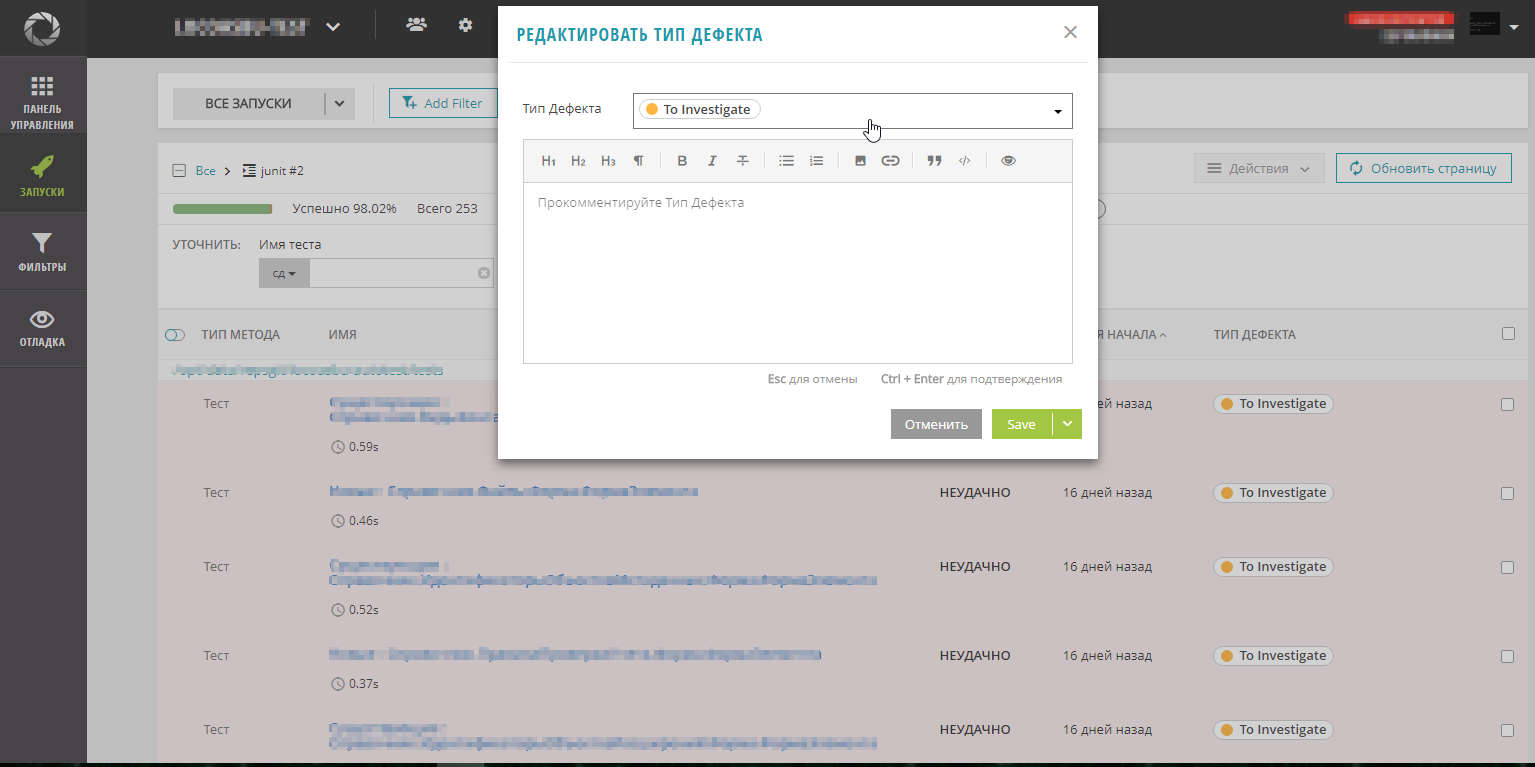

Starting the testing process, as well as code inspection, is tied to a commit event in the project repository. For unit tests, development of a test is implied in parallel with the development of a functional. Immediately before their execution, an automated deployment of the latest version of the configuration to the prepared test information base is performed. Then a client is launched that executes the test scripts for the already implemented functionality. Tight integration of automated testing services with BSL SonarQube plugin allows you to calculate such a parameter as code coverage tests. The result of the test run is a report that is loaded into the ReportPortal test analysis and visualization system. This service is a report portal that aggregates test run data (statistics and results), Basic system training is done on categorizing falls and further automatically determining the causes. All parameters of test runs are available in a convenient web interface with various boards for graphs, diagrams (widgets). To extend the functions of the portal it is possible to use your own extensions.

The automated testing service is another step that reduces the risk of getting a release code that breaks the previously implemented functionality or works with errors.

ReportPortal. Dashboard:

ReportPortal. Failed test runs:

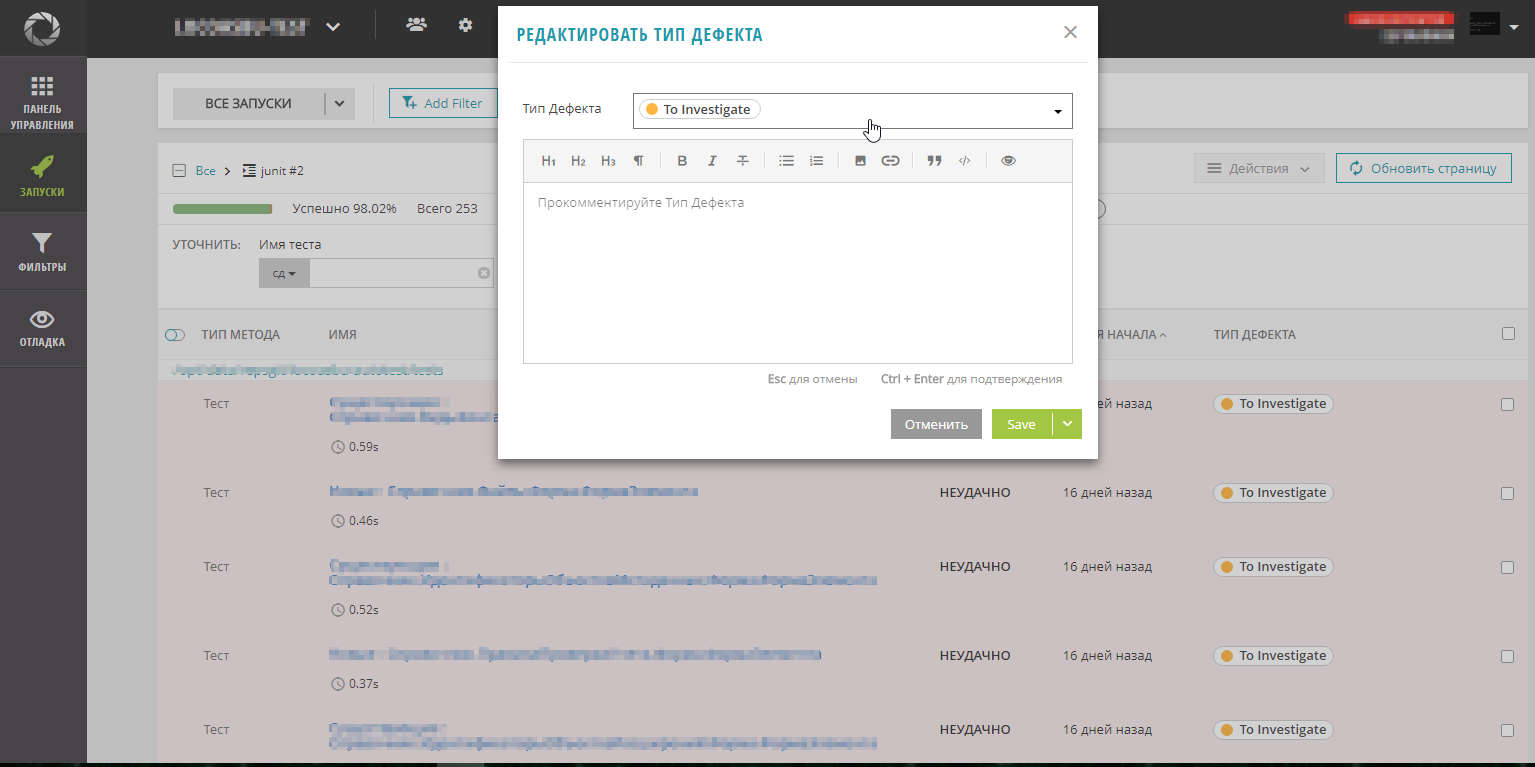

ReportPortal. Types of defects:

ReportPortal. Editing defect types:

Evaluating the results of the work done, we see that from the conceived already implemented, which ideas are already working successfully, which still have to be implemented.

Of the plans for the near future development of the stand - the creation of a portal service management stand. The web interface of the portal will allow you to work with applications for connecting projects to the stand, with a calculator of the cost of services, with their automated deployment on request for a project. As a result, the manager can immediately receive a cost estimate of the selected services and estimate the costs, determine the developers for the project.

We are planning to integrate the portal with the cloud solution for operating 1C systems. This will help to quickly deploy replicable standard solutions in conjunction with the development services implemented on the stand for more efficient migration of the customer’s systems to the CROC cloud.

We also plan to integrate the management portal with the automated configuration management service (deployment, configuration, removal). This will reduce deployment time, simplify system configuration and maintenance. And to increase the level of security, we will introduce a pass-through authentication service across all the services of the stand in order to use the same account in all of them.

If we consider the whole process from the point of view of the history of the complete solution development cycle, then the created pipeline will allow collecting and aggregating a large number of various metrics, both for the project artifacts and for the specialists who took part in it. Such a detailed retrospective will contribute to the accumulation and reuse of experience in solving complex problems, to form teams of successful developers for more efficient and harmonious work.

UPD. At the request of comments we add a list of open-use products that we use, with links.

So, meet the integrated development stand!

General principles of architecture

In designing the architecture of the stand, we sought to cover the entire life cycle of the solutions delivered on our projects, to put our accumulated experience to use, to standardize processes, and to automate routine work. At the same time, we had to leave room for growth and keep the system scalable and as simple as possible to maintain, extend, and use. Tools and techniques that had proven effective in practice were to be added to the process, and everything useless or obstructive removed.

Process

The life cycle of a solution hardly changes with the scale and complexity of the project. It includes analysis, design, development, testing, implementation, maintenance, and decommissioning. For maximum efficiency, each of these stages should be verified and coordinated with the stages before and after it, and all of them combined into an automated pipeline that can be replicated for any number of projects. We solved this by implementing the system as microservices that run in isolated containers and communicate through exported APIs; they can be deployed either in a cloud service or on the enterprise's local network.

Here is our stack, which implements the process:

We tried to minimize the use of paid, closed-source services, which had a positive effect on the cost of ownership. Virtually all of the services are open source and run on Linux.

The process is designed so that we get the most out of each member of the development team, while standardization and automation eliminate excessive complexity and routine.

Design (Application Design Engineering Service)

One of the most significant stages at the start of a project is designing the future solution based on requirements analysis. The main task is to arrive, clearly, unambiguously, and quickly, at an architecture expressed in terms that both the developer or engineer and the consultant understand, and to describe the metadata, the algorithms, and the business processes to be implemented. At the same time, we wanted to make maximum use of ready-made templates that can be quickly adapted to specific conditions and input data and that produce project documentation as output.

We adopted the "1C: DSS" configuration as a single interface for designing application solutions and significantly reworked the way business processes and functions are described, as well as the design of the TP (FDR). We also automated documentation generation by integrating with the functionality of "1C: DO" and with microservices that generate documents in docx format.

"1C: DSS." Editing information about the project:

"1C: DSS." Report on the processes:

"1C: DSS." Editing object metadata:

"1C: DSS". Editing a business process diagram:

Incidentally, we can visualize the relationships between business processes and objects in the system, build a list of improvements from the registered requirements, and obtain project documentation automatically, which simplifies change management. This lets us plan the development process in detail, see the complexity and interdependence of tasks, and determine their timing and order of implementation more accurately.

Of course, we cannot say that the design process has changed dramatically, but unifying the organizational approaches and automating many functions already contributes to project quality.

Development (Continuous Integration, Inspection and Testing Services)

We focused on comprehensive, continuous, automated quality control of the code being developed, to ensure that it complies with the standard established by CROC. This holds even when we involve third-party development teams whose methods, tools, and development standards may differ significantly from ours.

On the stand, each developer commit automatically triggers the Gitsync service, which unpacks the configuration into a structure of directories and files. The resulting changes are indexed and placed in a Git repository; in our case, this is GitLab. The commit message is generated automatically from the comment entered when the changes are placed in the configuration repository, and the commit author in the version control system is matched to the user of the configuration repository. By analyzing the comment text, we can extract information about the development task and the labor costs and pass it to a task tracking system such as Jira. This gives a comprehensive picture of the development history. For example, we can find the author of any line of code.
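The comment analysis described above could be sketched roughly as follows. This is a minimal illustration in Python, not the stand's actual implementation: the comment convention (a Jira-style task key plus a `#time` tag), the `CommitInfo` type, and the `parse_commit_comment` function are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Assumed comment convention (hypothetical): "<TASK-KEY> free text #time 1h 30m"
TASK_RE = re.compile(r"\b([A-Z][A-Z0-9]+-\d+)\b")
TIME_RE = re.compile(r"#time\s+(?:(\d+)h)?\s*(?:(\d+)m)?")


@dataclass
class CommitInfo:
    task_key: Optional[str]  # e.g. "ERP-142", or None if no key was found
    minutes_spent: int       # labor costs extracted from the #time tag
    message: str             # comment text with the #time tag removed


def parse_commit_comment(comment: str) -> CommitInfo:
    """Extract the task key and time spent from a repository comment."""
    task = TASK_RE.search(comment)
    time = TIME_RE.search(comment)
    minutes = 0
    if time:
        hours, mins = time.group(1), time.group(2)
        minutes = int(hours or 0) * 60 + int(mins or 0)
    message = TIME_RE.sub("", comment).strip()
    return CommitInfo(task.group(1) if task else None, minutes, message)


info = parse_commit_comment("ERP-142 Fix invoice posting #time 1h 30m")
print(info.task_key, info.minutes_spent, info.message)
```

The extracted key and minutes could then be sent to the task tracker by whatever integration the project uses; that step is deliberately left out here.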

GitLab. We can now comment on any line of code added by a commit:

GitLab. Conducting a code review with syntax highlighting:

GitLab. Getting a clear picture of the code changes in a new commit:

After each commit to the repository, static code inspection by the SonarQube service starts automatically. The committed BSL code is checked for compliance with a set of rules (a quality profile) that describes the coding standard. The check covers both the code of the configuration being developed and external mechanisms: extensions, external reports and data processors, and, in principle, any other project code, even in other languages.

SonarQube:

Each check updates the tracked quality metrics of the code base, such as:

- technical debt: the total labor costs needed to correct the identified errors;
- quality threshold: the maximum permissible levels of errors, vulnerabilities, and other code flaws, beyond which a release is considered impossible;
- code duplication: the number of lines of code repeated multiple times within the configuration being developed;
- cyclomatic and cognitive complexity: measures that flag algorithms with a large number of branches, which complicate maintenance and further development of the code.
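To make the idea of a quality threshold concrete, here is a small Python sketch of how collected metrics might be compared with permissible limits. It does not use the SonarQube API; the `Metrics` fields, the limit values in `THRESHOLDS`, and the function names are assumptions chosen for illustration only.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Metrics:
    bugs: int
    vulnerabilities: int
    duplicated_lines_pct: float
    technical_debt_minutes: int


# Hypothetical limits; a real project would set its own.
THRESHOLDS: Dict[str, float] = {
    "bugs": 0,
    "vulnerabilities": 0,
    "duplicated_lines_pct": 3.0,
    "technical_debt_minutes": 480,
}


def failed_conditions(metrics: Metrics,
                      thresholds: Dict[str, float] = THRESHOLDS) -> List[str]:
    """Return the names of the metrics that exceed their permitted limit."""
    return [name for name, limit in thresholds.items()
            if getattr(metrics, name) > limit]


def release_allowed(metrics: Metrics) -> bool:
    """A release is allowed only if no threshold is exceeded."""
    return not failed_conditions(metrics)


current = Metrics(bugs=2, vulnerabilities=0,
                  duplicated_lines_pct=5.0, technical_debt_minutes=120)
print(release_allowed(current), failed_conditions(current))
```

Keeping the limits in one dictionary means a project can tighten or relax them without touching the checking logic.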

The metrics collected by the check give a visual picture of the current state of the project's code base and allow us to assess quality, identify risks, and correct errors promptly.

The quality profile can be extended with custom rule sets written in XPath, or with new rules shipped in your own implementation of the plug-in for 1C. This lets you manage quality requirements flexibly, based on the realities of a particular solution.

Configuration parsing, code inspection, automated testing, and so on are launched automatically by the continuous integration service (Jenkins). The number and nature of these pipelines can be changed to suit the specifics of a project.
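The order of such a pipeline can be illustrated with a short Python sketch. It is not a Jenkins pipeline definition and does not call Jenkins; the stage names and the `run_pipeline` function are assumptions that only show the general idea of running stages in sequence and stopping at the first failure.

```python
from typing import Callable, List, Tuple

# A stage is a name plus an action that reports success or failure.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[Tuple[str, str]]:
    """Run stages in order; once one fails, mark the rest as skipped."""
    results: List[Tuple[str, str]] = []
    failed = False
    for name, action in stages:
        if failed:
            results.append((name, "skipped"))
            continue
        ok = action()
        results.append((name, "passed" if ok else "failed"))
        failed = not ok
    return results


# Hypothetical stages; the lambdas stand in for real work.
stages: List[Stage] = [
    ("parse configuration", lambda: True),
    ("code inspection", lambda: False),
    ("automated tests", lambda: True),
]
for name, status in run_pipeline(stages):
    print(f"{name}: {status}")
```

Because the stage list is plain data, the set of stages can differ from project to project, which matches the configurable pipelines described above.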

Despite the relative complexity of the process described, all of the pipeline mechanisms that perform the routine work are hidden in the cloud service. The developer works with the familiar Configurator interface and can also build up skills with more advanced tools: for example, Git, a repository for external mechanisms combined with third-party code editors and SonarLint, Sourcetree, and so on.

In the typical case, the developer connects the information base to the 1C configuration repository service, writes code, and places it in that service, thereby starting a process on the stand that is hidden from the developer. If checking the commit reveals flaws in the code, the developer receives an email notification (or a chat bot message) with a link to the SonarQube interface, which shows a description of the error, recommendations for fixing it, and an estimate of the time required. Once the error is corrected, a new commit is made and the process repeats: the corrected issues are closed automatically and technical debt goes down. Automated testing follows the same logic: each commit triggers deployment of the test environment and pulls in the necessary tests from the test library.

It is difficult to overestimate the effect of continuous, comprehensive code verification followed by automated testing of the developed functionality. It helps us avoid far-reaching consequences, makes the development stages transparent, and, together with properly organized processes, allows developers' qualifications to be assessed objectively, which eliminates the risk of dependence on contractors. By evaluating the parameters of the current code base, we can quickly identify and mitigate emerging risks, correct design flaws, and respond to changing requirements in time.

The modular architecture of the stand allows new modules to be built into the process and the solution to be replicated for as many projects as required. Schematically, it looks like this:

Testing (continuous testing service)

I have already mentioned the continuous testing service, which is integrated into the development pipeline. At the moment, smoke tests and unit tests are run on the stand. The autotest functionality is implemented with the xUnitFor1C framework.

Like code inspection, the testing process is triggered by a commit event in the project repository. For unit tests, the test is expected to be developed in parallel with the functionality. Immediately before the tests run, the latest version of the configuration is automatically deployed to a prepared test information base. Then a client is launched that executes the test scripts for the functionality already implemented. Tight integration of the automated testing services with the SonarQube BSL plugin makes it possible to calculate test code coverage. The result of a test run is a report that is loaded into ReportPortal, a test analysis and visualization system. This service is a reporting portal that aggregates test run data (statistics and results). The system undergoes basic training in categorizing failures so that it can then determine their causes automatically. All parameters of test runs are available in a convenient web interface with dashboards of charts and diagrams (widgets). The portal's functions can be extended with your own extensions.
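As a rough illustration of the aggregation a reporting portal performs, the Python sketch below summarizes one test run and groups failures by defect type. It does not use the ReportPortal API; the `RunItem` type, the `summarize` function, and the choice of "To Investigate" as the fallback category for uncategorized failures are assumptions made for the example.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class RunItem:
    name: str
    passed: bool
    defect_type: Optional[str] = None  # set only for categorized failures


def summarize(items: List[RunItem]) -> Dict[str, object]:
    """Aggregate statistics for one test run and count failures by defect type."""
    total = len(items)
    passed = sum(1 for item in items if item.passed)
    # Failures without a category fall into an assumed default bucket.
    defects = Counter(item.defect_type or "To Investigate"
                      for item in items if not item.passed)
    return {
        "total": total,
        "passed": passed,
        "failed": total - passed,
        "pass_rate": round(100.0 * passed / total, 1) if total else 0.0,
        "defects": dict(defects),
    }


run = [
    RunItem("smoke: open main form", True),
    RunItem("unit: invoice posting", False, "Product Bug"),
    RunItem("unit: price calculation", False),
    RunItem("smoke: open report", True),
]
print(summarize(run))
```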

The automated testing service is another step that reduces the risk of releasing code that breaks previously implemented functionality or works with errors.

ReportPortal. Dashboard:

ReportPortal. Failed test runs:

ReportPortal. Types of defects:

ReportPortal. Editing defect types:

Further development

Looking back at the work done, we can see which of our ideas have already been implemented and are working successfully, and which are still to come.

Among the plans for the near future is a management portal for the stand's services. The portal's web interface will let you work with requests to connect projects to the stand, use a calculator for the cost of services, and deploy the services automatically on request for a project. As a result, a manager can immediately get a cost estimate for the selected services, assess the expenses, and assign developers to the project.

We plan to integrate the portal with our cloud solution for operating 1C systems. This will help to deploy replicable standard solutions quickly together with the development services implemented on the stand, for more efficient migration of customers' systems to the CROC cloud.

We also plan to integrate the management portal with an automated configuration management service (deployment, configuration, removal). This will reduce deployment time and simplify system configuration and maintenance. And to raise the level of security, we will introduce single sign-on across all of the stand's services, so that the same account can be used in each of them.

If we look at the whole process as the history of a complete solution development cycle, the pipeline we have built will make it possible to collect and aggregate a large number of metrics, both for the project artifacts and for the specialists who took part. Such a detailed retrospective will help us accumulate and reuse experience in solving complex problems and form teams of successful developers who work together more efficiently and harmoniously.

UPD. At readers' request in the comments, we are adding a list of the products we use, with links.

| Product | Vendor / Author | Reference 1 | Reference 2 |
|---|---|---|---|
| 1C | 1C | http://v8.1c.ru | |
| 1C: DSS | 1C | http://v8.1c.ru/model/ | |
| 1C: DO | 1C | http://v8.1c.ru/doc8/ | |
| Git | Software Freedom Conservancy | https://ru.wikipedia.org/wiki/Git | |
| GitLab | GitLab | https://ru.wikipedia.org/wiki/GitLab | https://about.gitlab.com/ |
| Jira | Atlassian | https://ru.wikipedia.org/wiki/Jira | https://ru.atlassian.com/software/jira |
| ReportPortal | EPAM Systems, Inc. | https://github.com/reportportal | https://rp.epam.com/ui/ |
| SonarQube | SonarSource | https://ru.wikipedia.org/wiki/SonarQube | https://github.com/SonarSource/sonarqube |
| Jenkins | Kosuke Kawaguchi | https://ru.wikipedia.org/wiki/Jenkins | https://github.com/jenkinsci |
| Sourcetree | Atlassian | https://www.sourcetreeapp.com/ | |
| xUnitFor1C | artbear | https://github.com/xDrivenDevelopment/xUnitFor1C | https://github.com/artbear |
| Gitsync | EvilBeaver | https://github.com/oscript-library/gitsync | https://github.com/EvilBeaver |
| SonarQube 1C (BSL) Plugin | Silver bulleters | https://silverbulleters.org/sonarqube | https://github.com/silverbulleters/sonar-1c-bsl-public |