Assessing test coverage on a project

The best way to evaluate how well we tested a product is to analyze the defects we missed: the ones our users, implementers, and business ran into. They tell us a lot: what we checked insufficiently, which areas of the product deserve more attention, what percentage of defects we miss, and how that percentage changes over time. This metric (perhaps the most common one in testing) is fine, but... By the time we have released the product and learned about the missed defects, it may be too late: an angry article about us has appeared on Habr, competitors are rapidly spreading the criticism, customers have lost trust in us, and management is unhappy.

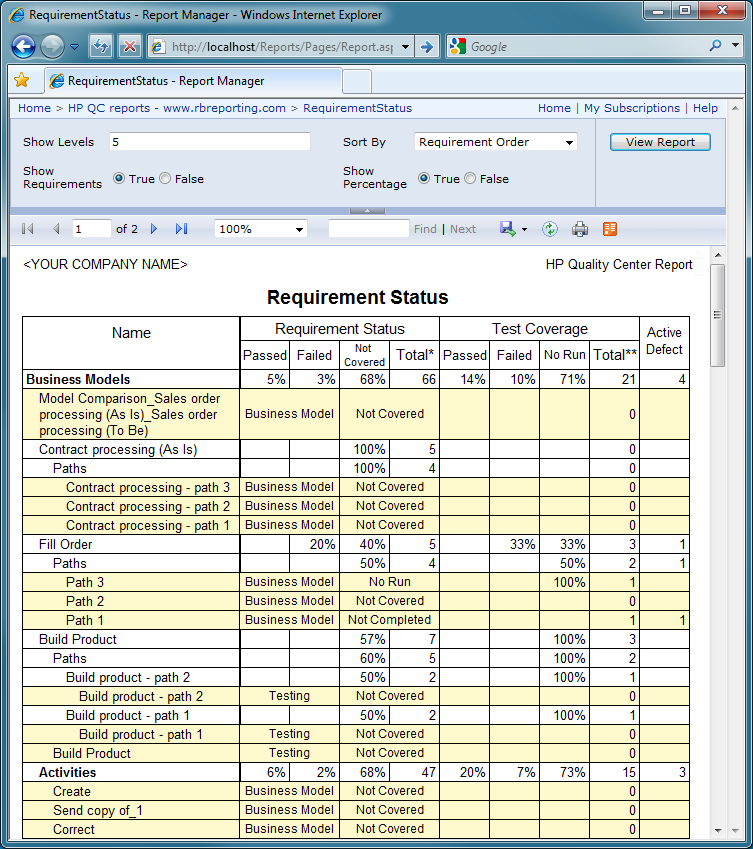

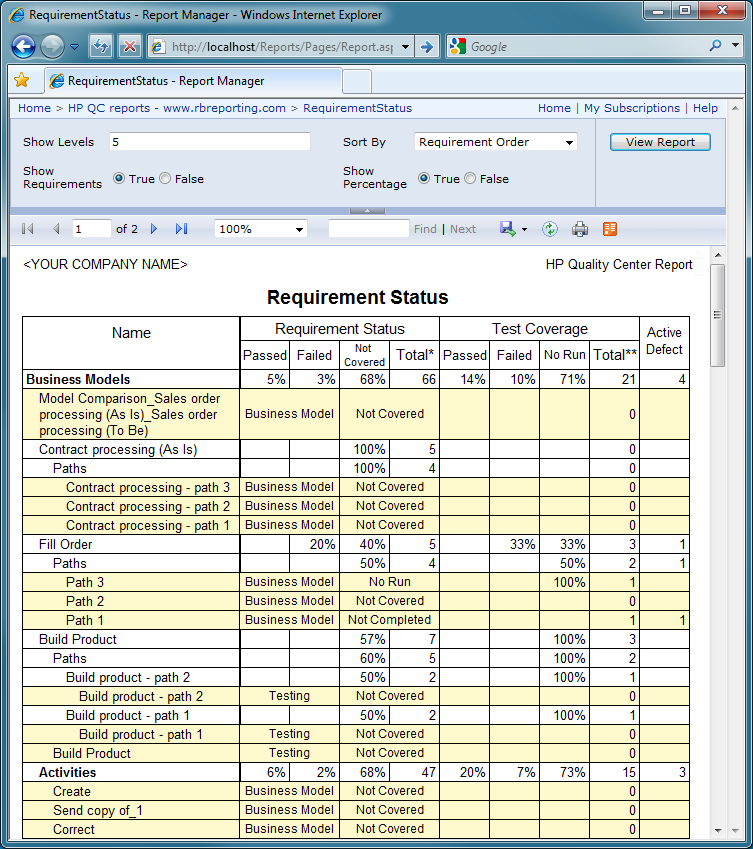

To prevent this, we usually try to evaluate the quality of testing in advance, before the release: how well and how thoroughly are we testing the product? Which areas lack attention, where are the main risks, how much progress have we made? To answer all these questions, we evaluate test coverage.

Why evaluate?

Any metric costs time. You could spend that time testing, filing bugs, or writing automated tests. What magical benefit do test coverage metrics give us that justifies sacrificing testing time?

- Finding weak areas. Naturally, we need this not to lament over them, but to know where improvements are needed. Which functional areas are not covered by tests? What have we not checked? Where is the risk of missing defects greatest?

- We rarely get 100% from a coverage assessment. What should we improve? Where should we go next? What is the percentage now? How much will a given task raise it? How quickly will we reach 100%? All these questions bring transparency and clarity to our process, and a coverage assessment answers them.

- Focus of attention. Let's say our product has about 50 functional areas. A new version comes out, we start testing the first area, and we find typos there, buttons that have shifted by a couple of pixels, and other trifles... And now the testing time is up, and this one area has been tested in detail... What about the remaining 49? A coverage assessment lets us prioritize tasks based on current realities and deadlines.

How to evaluate?

Before implementing any metric, it is important to decide how you will use it. Start by answering exactly that question, and most likely you will immediately see how best to calculate it. In this article I will only share some examples and my own experience of how this can be done. The point is not to copy the solutions blindly, but to give your imagination something to build on while you work out the solution that suits you best.

Evaluating requirements coverage by tests

Suppose you have analysts on your team, and they are not wasting their time. Their work has produced requirements in an RMS (Requirements Management System): HP QC, MS TFS, IBM DOORS, Jira (with additional plug-ins), etc. The requirements they enter into this system meet the requirements for requirements (sorry for the tautology): they are atomic, traceable, specific... In short, ideal conditions for testing. What can we do in this case? With a scripted approach, we link requirements and tests. We keep the tests in the same system, link each requirement to its tests, and at any moment we can pull a report showing which requirements have tests and which do not, when those tests were last run, and with what result.

We get a coverage map, write tests for all uncovered requirements, everyone is happy and satisfied, and we miss no defects...
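The requirement-to-test report described above can be sketched in a few lines. This is only an illustration under assumptions: the `LinkedTest` and `Requirement` classes, the status names, the `REQ-3` "Autosave" entry, and all dates are hypothetical, and a real RMS would produce such a report through its own reporting tools.

```python
from dataclasses import dataclass, field


@dataclass
class LinkedTest:
    test_id: str
    last_run: str   # ISO date of the most recent run (hypothetical data)
    passed: bool    # result of that run


@dataclass
class Requirement:
    req_id: str
    title: str
    tests: list = field(default_factory=list)  # tests linked to this requirement


def coverage_report(requirements):
    """Return one row per requirement: (id, status, number of linked tests)."""
    rows = []
    for req in requirements:
        if not req.tests:
            status = "no tests"
        elif all(t.passed for t in req.tests):
            status = "passed"
        else:
            status = "failed"
        rows.append((req.req_id, status, len(req.tests)))
    return rows


# Hypothetical requirements, for illustration only.
requirements = [
    Requirement("REQ-1", "HTML formatting must be supported",
                [LinkedTest("T-1", "2024-01-10", True),
                 LinkedTest("T-2", "2024-01-11", False)]),
    Requirement("REQ-2", "Pop-up on unsupported file format",
                [LinkedTest("T-3", "2024-01-10", True)]),
    Requirement("REQ-3", "Autosave"),
]

report = coverage_report(requirements)
covered = sum(1 for _, status, _ in report if status != "no tests")
coverage_percent = round(100 * covered / len(report))

for row in report:
    print(row)
print(f"{coverage_percent}% of requirements have at least one test")
```

Note that the percentage here only counts requirements with at least one linked test; it says nothing about whether those tests are sufficient.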

Okay, let's come back down to earth. Most likely, you do not have detailed requirements, the ones you have are not atomic, some have been lost altogether, and there is no time to document every test, or even every other one. You can despair and cry, or you can accept that testing is a compensating process: the worse things are with analysis and development on the project, the harder we have to work to compensate for the problems of the other participants. Let's look at the problems one by one.

Problem: requirements are not atomic.

Analysts, too, sometimes suffer from muddled thinking, and that usually spells trouble for the whole project. For example, you are developing a text editor, and the system contains (among others) two requirements: “HTML formatting must be supported” and “when a file of an unsupported format is opened, a pop-up window with a question should appear”. How many tests does a basic check of the first requirement need? And of the second? The answers most likely differ by a factor of about a hundred! We cannot say that a single test is enough for the first requirement, but for the second it most likely is.

So the presence of a test for a requirement guarantees nothing at all! What do our coverage statistics mean in this case? Just about nothing! We will have to solve this:

- Automatic calculation of requirements coverage by tests can be switched off in this case; it carries no meaning anyway.

- For each requirement, starting with the highest priority, we prepare tests. While preparing them, we analyze which tests this requirement needs and how many will be enough. We carry out a full-fledged test analysis rather than brushing it off with “there is one test, fine”.

- Depending on the system used, we export the tests for the requirement and... test these tests! Are there enough of them? Ideally, of course, this should be done together with the analyst and the developer of the functionality. Print out the tests, lock your colleagues in a meeting room, and do not let them out until they say “yes, these tests are enough”. (With written sign-off, those words tend to be said as a mere formality, without any analysis of the tests. In a face-to-face discussion, your colleagues will pour out a bucket of criticism, missed tests, misunderstood requirements, and so on. That is not always pleasant, but it is very useful for testing!)

- Once the tests for the requirement have been finalized and their completeness agreed, the requirement can be marked in the system with the status “covered by tests”. This tells us far more than “there is at least 1 test”.
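To make the difference between the two statuses concrete, here is a minimal sketch that computes both numbers for the two text-editor requirements from the example. The field names (`tests`, `sufficiency_agreed`) and the values are assumptions made for illustration, not fields of any particular RMS.

```python
def share(requirements, predicate):
    """Percentage of requirements for which predicate(requirement) is true."""
    if not requirements:
        return 0.0
    matching = sum(1 for r in requirements if predicate(r))
    return 100.0 * matching / len(requirements)


# Hypothetical data: each requirement has exactly one test, but only for the
# second one has the team agreed that the tests are sufficient.
requirements = [
    {"id": "REQ-1", "title": "HTML formatting must be supported",
     "tests": 1, "sufficiency_agreed": False},
    {"id": "REQ-2", "title": "Pop-up on unsupported file format",
     "tests": 1, "sufficiency_agreed": True},
]

# "There is at least 1 test": looks perfect, means little.
naive = share(requirements, lambda r: r["tests"] >= 1)

# "Covered by tests" after review with the analyst and developer.
agreed = share(requirements, lambda r: r["sufficiency_agreed"])

print(f"at least one test: {naive:.0f}%")
print(f"sufficiency agreed: {agreed:.0f}%")
```

The first number reports full coverage even though a single test cannot verify HTML formatting support; the second reflects what the team has actually agreed on.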

Of course, this kind of sign-off process takes a lot of resources and time, especially at first, before the practice is established. So apply it only to high-priority requirements and new features. Over time you will bring the rest of the requirements up to the same level, and everyone will be happy! But... what if there are no requirements at all?

Problem: There are no requirements at all.

They are simply absent from the project: things are discussed orally, and everyone does what they want or can, as they understand it. We test the same way. As a result, we get a huge number of problems, not only in testing and development but also in features that were implemented incorrectly from the start, because something completely different was wanted! Here I could advise the option “identify and document the requirements yourself”, and I have even used that strategy a couple of times in my practice, but in 99% of cases the testing team has no resources for it. So let's take a much less resource-intensive route:

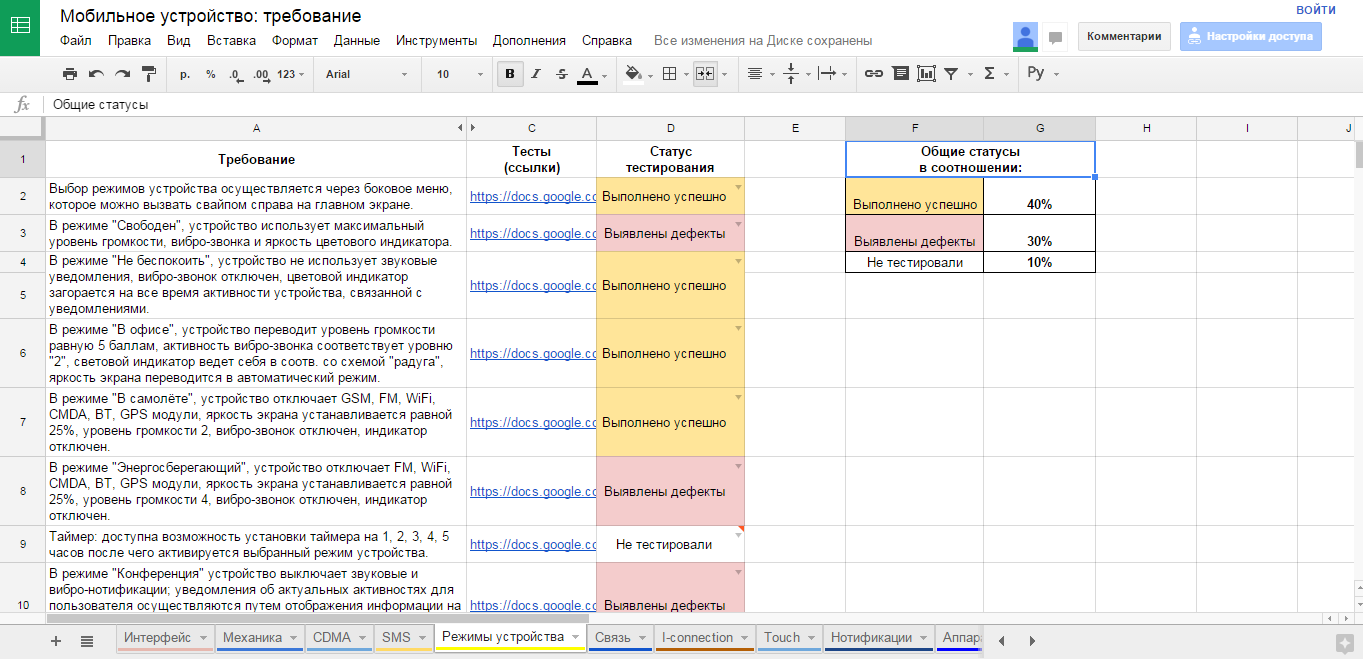

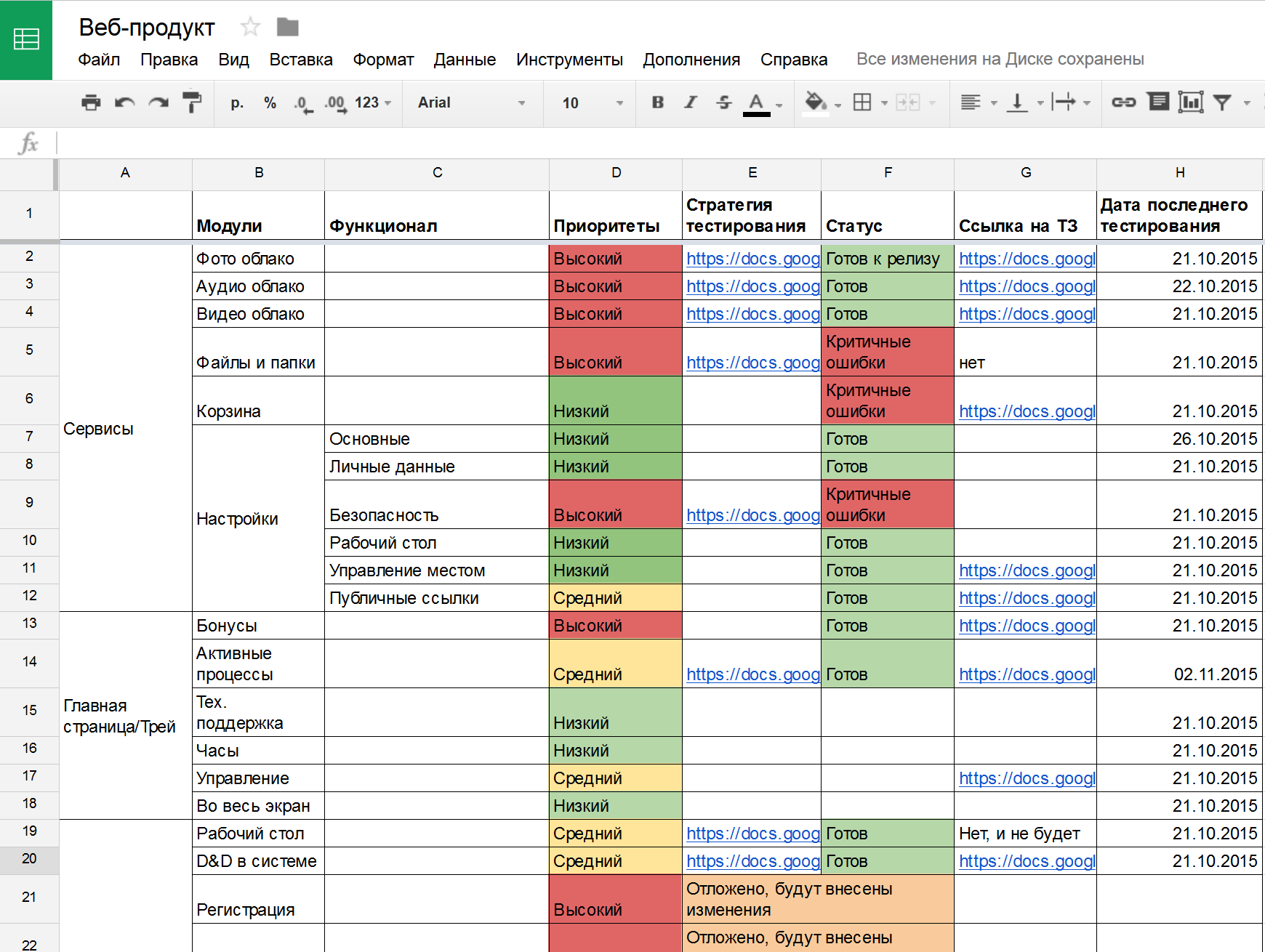

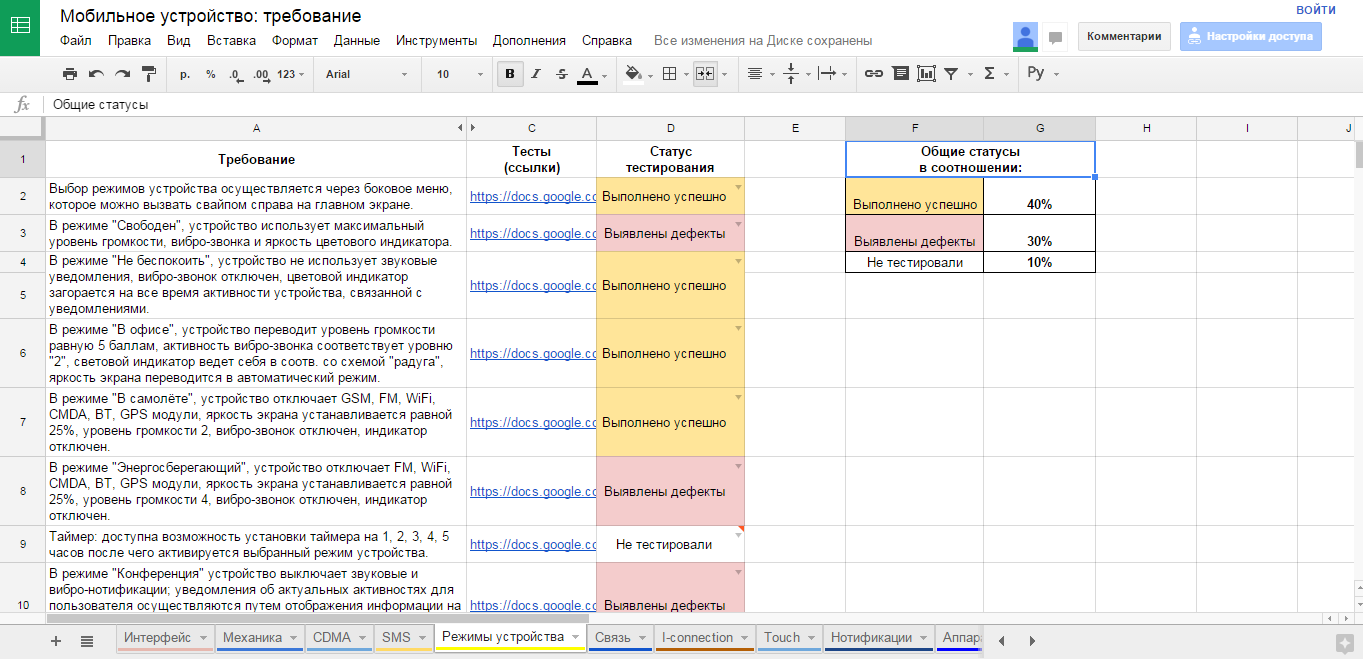

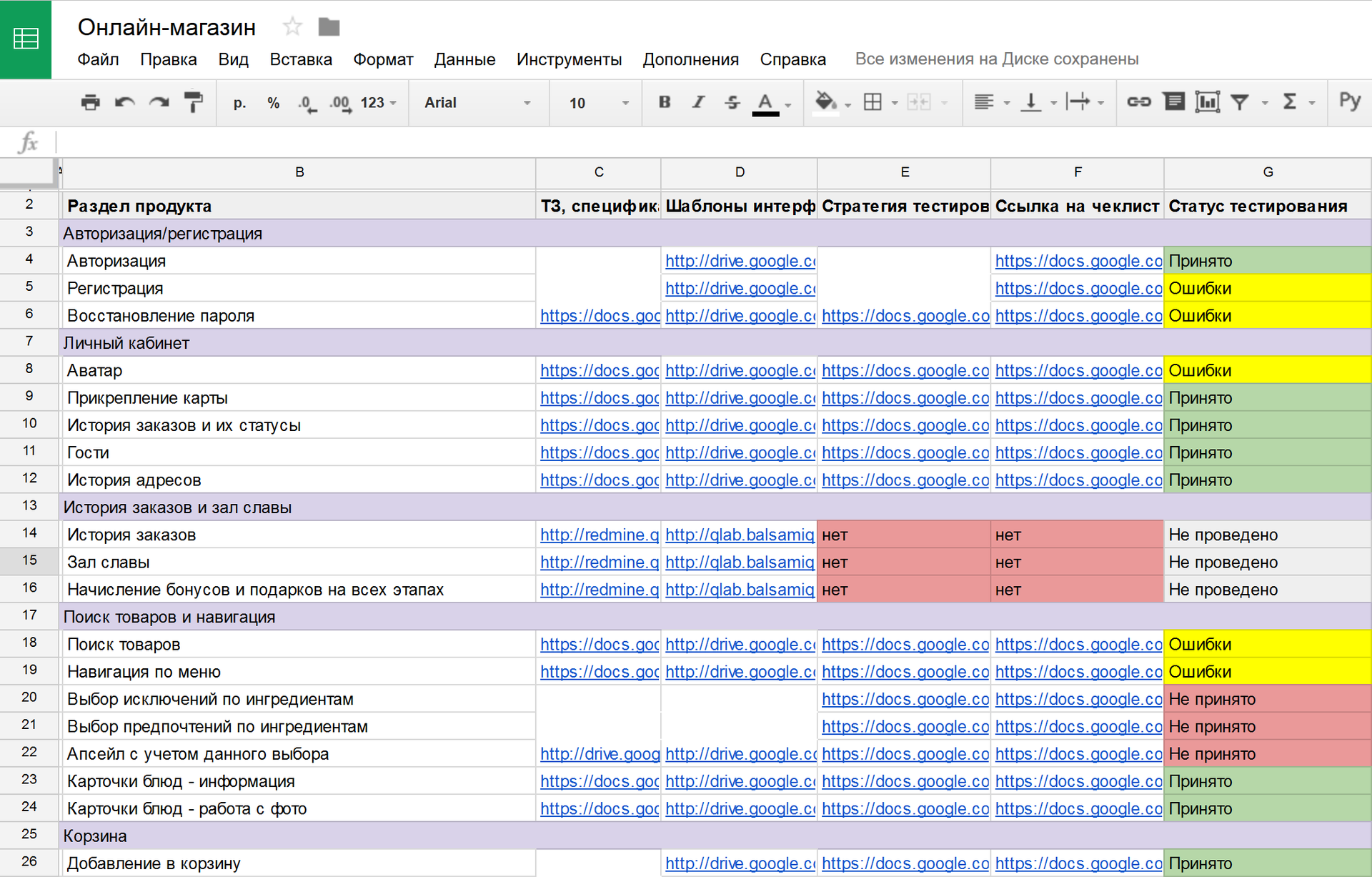

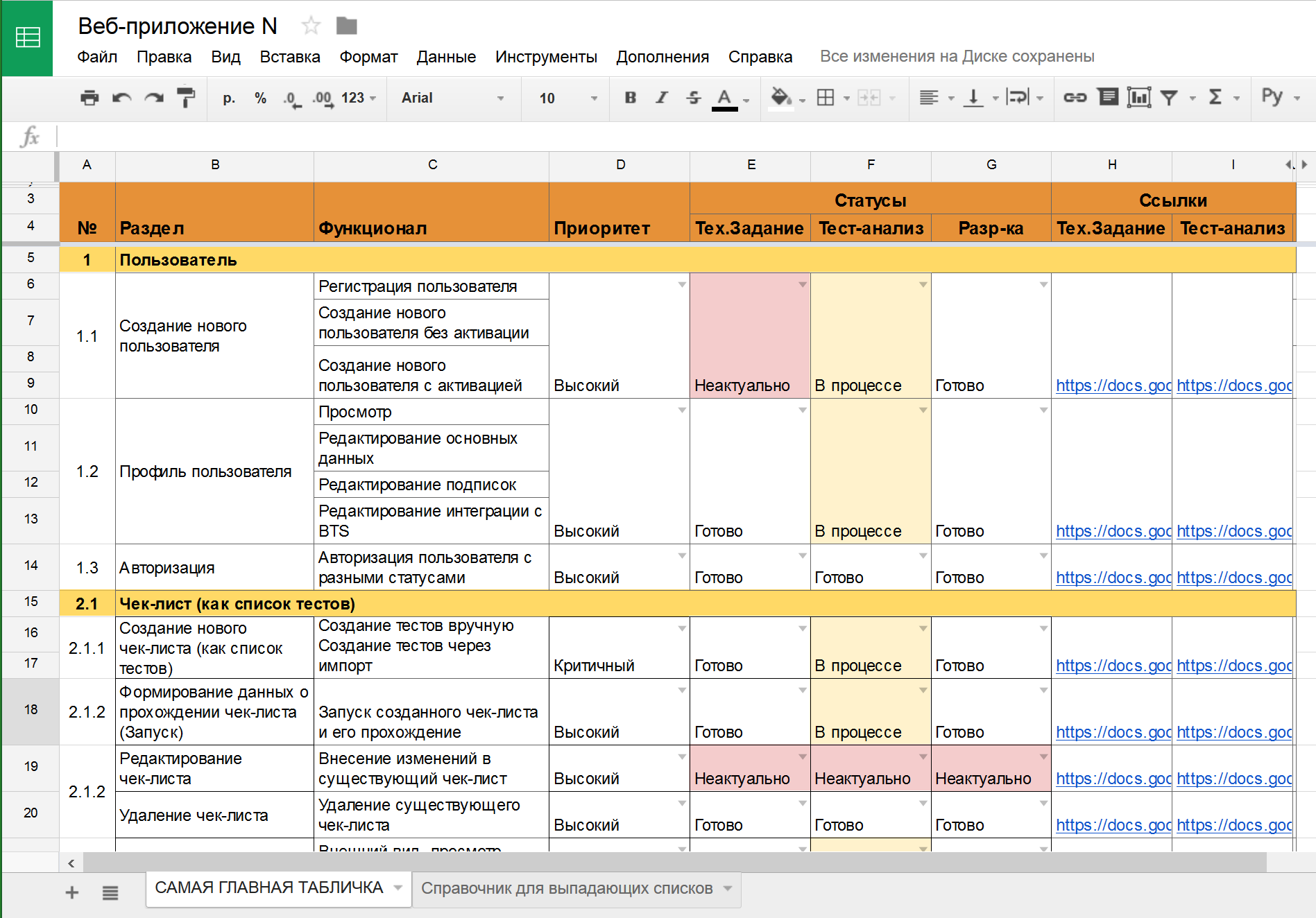

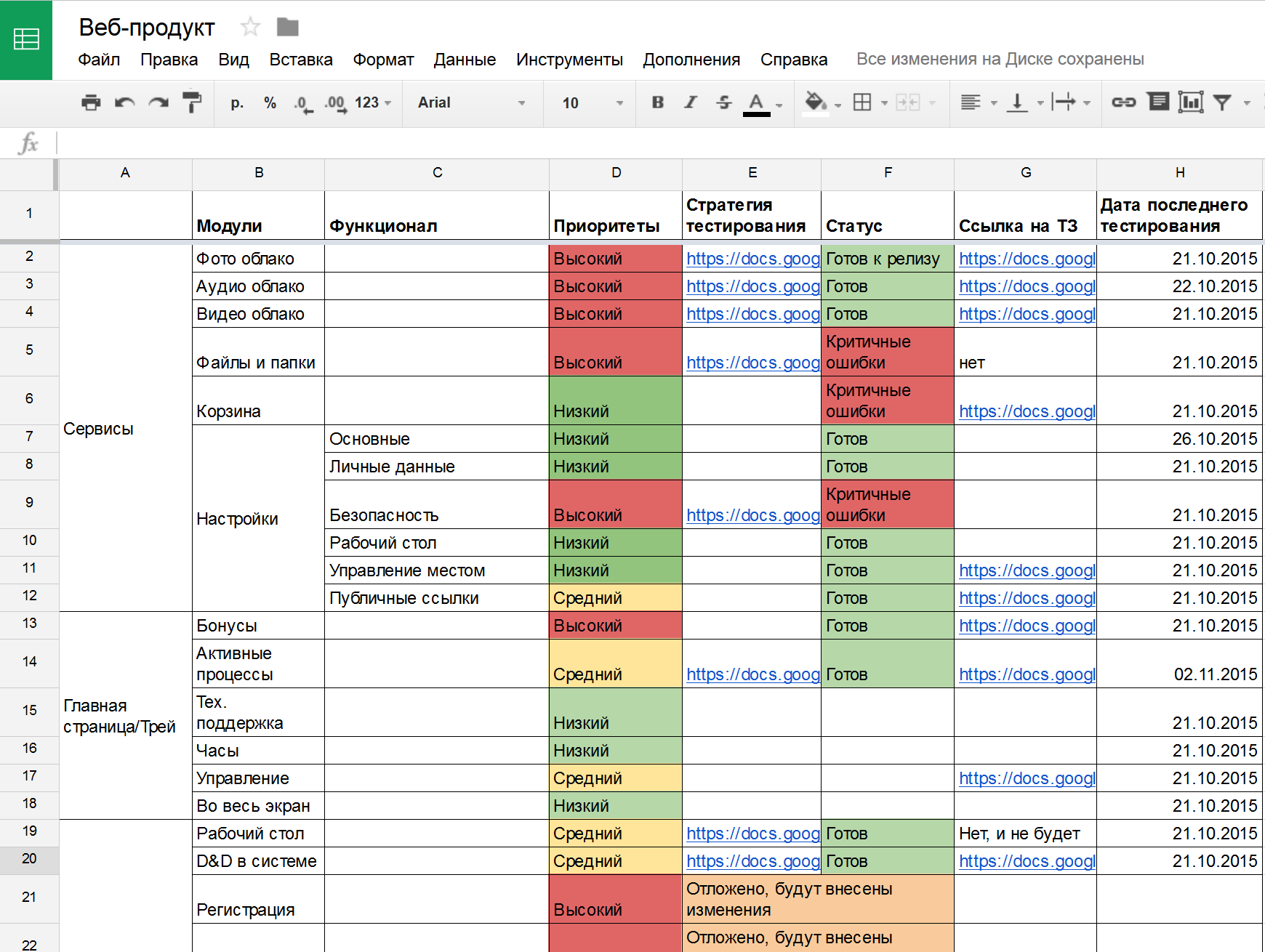

- Create a feature list. Yourself! As a Google Sheets table, as PBIs in TFS, whatever you like, as long as it is not free text: we still need to collect statuses! We add all the functional areas of the product to this list and try to pick one common level of decomposition (you can list software objects, or user scenarios, or modules, or web pages, or API methods, or screen forms...), but not all of them at once! ONE decomposition format, the one that makes it easiest and most obvious for you not to miss anything important.

- We agree on the COMPLETENESS of this list with analysts, developers, the business, and within our own team... Do everything you can not to lose important parts of the product! How deep the analysis goes is up to you. In my practice, only a few products ever had more than 100 pages in the table, and those were giant products. Most often, 30-50 rows is an achievable result that can then be processed thoroughly. In a small team with no dedicated test analysts, a longer feature list will be too hard to maintain.

- After that, we go through the priorities and process each row of the feature list as described in the requirements section above. We write tests, discuss them, and agree on their sufficiency. We mark the status of each feature for which the tests are sufficient. We get status, progress, and a broader set of tests thanks to the communication with the team. Everyone is happy!
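The feature list from the steps above is essentially a small table with a priority and a status per row. The sketch below shows one possible shape for it; the `Feature` class, the three status names, and the sample features are assumptions for illustration, and in practice the same data could just as well live in a spreadsheet.

```python
from dataclasses import dataclass

# Hypothetical status values; pick whatever fits your process.
NOT_ANALYZED = "not analyzed"
TESTS_DRAFTED = "tests drafted"
TESTS_AGREED = "tests agreed"


@dataclass
class Feature:
    name: str
    priority: int                 # 1 = highest priority
    status: str = NOT_ANALYZED


def next_to_process(features):
    """Features that still need work, highest priority first."""
    pending = [f for f in features if f.status != TESTS_AGREED]
    return sorted(pending, key=lambda f: f.priority)


def progress(features):
    """Short progress summary for the whole list."""
    done = sum(1 for f in features if f.status == TESTS_AGREED)
    return f"{done}/{len(features)} features have agreed tests"


# Hypothetical feature list for a text editor.
features = [
    Feature("Print", priority=3),
    Feature("Open file", priority=1, status=TESTS_AGREED),
    Feature("HTML formatting", priority=2, status=TESTS_DRAFTED),
]

queue = [f.name for f in next_to_process(features)]
summary = progress(features)
print(queue)
print(summary)
```

Keeping one row per feature at a single level of decomposition is what makes the status column and the progress figure meaningful.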

But... what if requirements exist, but not in a traceable format?

Problem: requirements are not traceable.

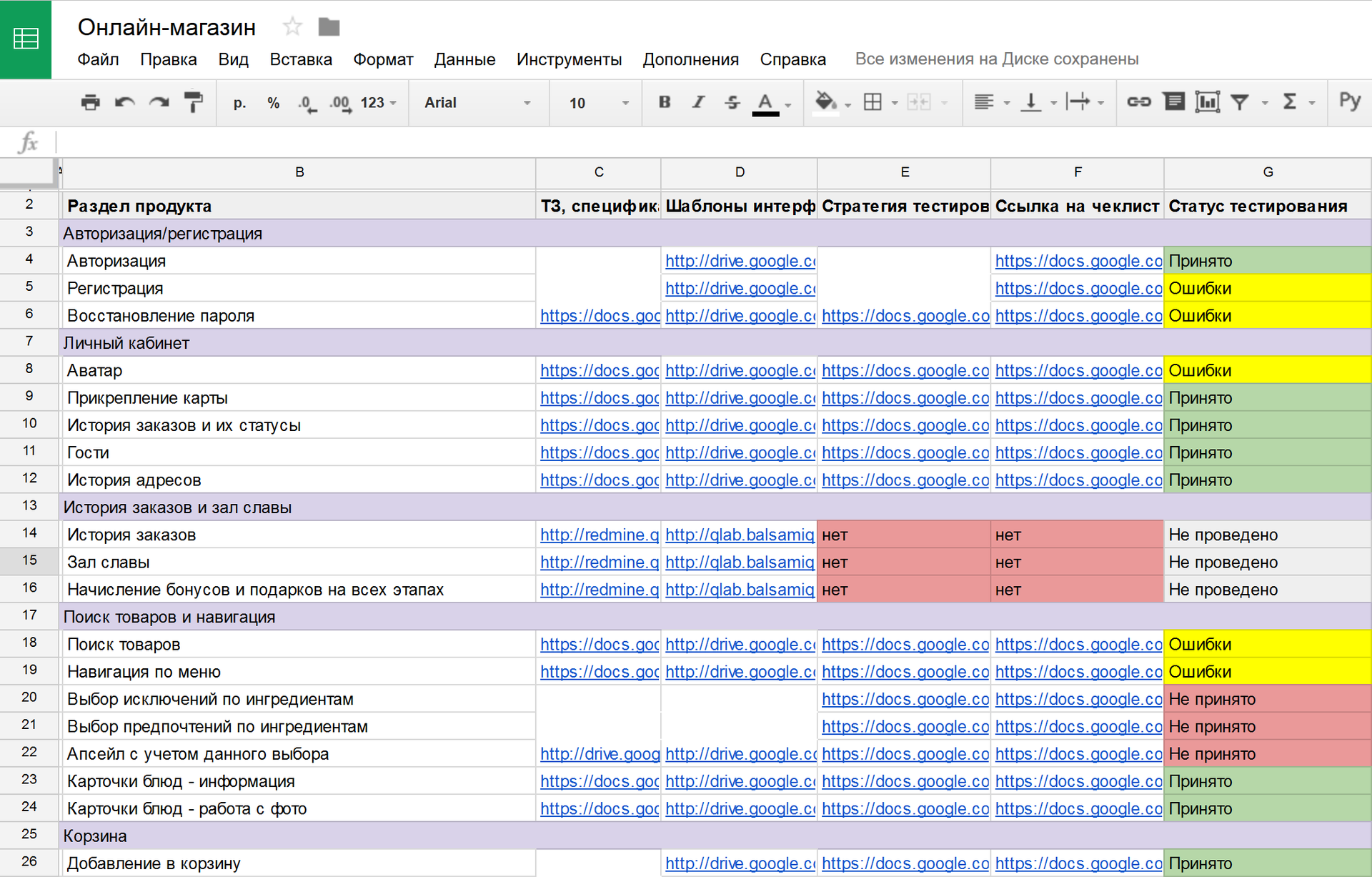

The project has a huge amount of documentation, the analysts type at 400 characters per minute, you have specifications, technical specifications, instructions, and reference notes (most often at the customer's request), all of this serves as requirements, and everyone on the project has long been confused about where to find what.

We repeat the steps from the previous section and help the whole team restore order!

- We create a feature list (see above), but without a detailed description of the requirements.

- For each feature, we collect links to the technical specifications, specifications, instructions, and other documents.

- We go through the priorities, prepare tests, and agree on their completeness. Everything is the same, except that by gathering all the documents in one table we gain easier access to them, transparent statuses, and consistent tests. As a result, everything is great and everyone is happy!

But... not for long... It seems that over the past week the customer service analysts have updated 4 different specifications!!!

Problem: requirements change all the time.

Of course, it would be nice to test a fixed system, but our products are usually alive. The customer asked for something, legislation external to our product changed, and somewhere the analysts found an analysis error from the year before last... Requirements live a life of their own! What to do?

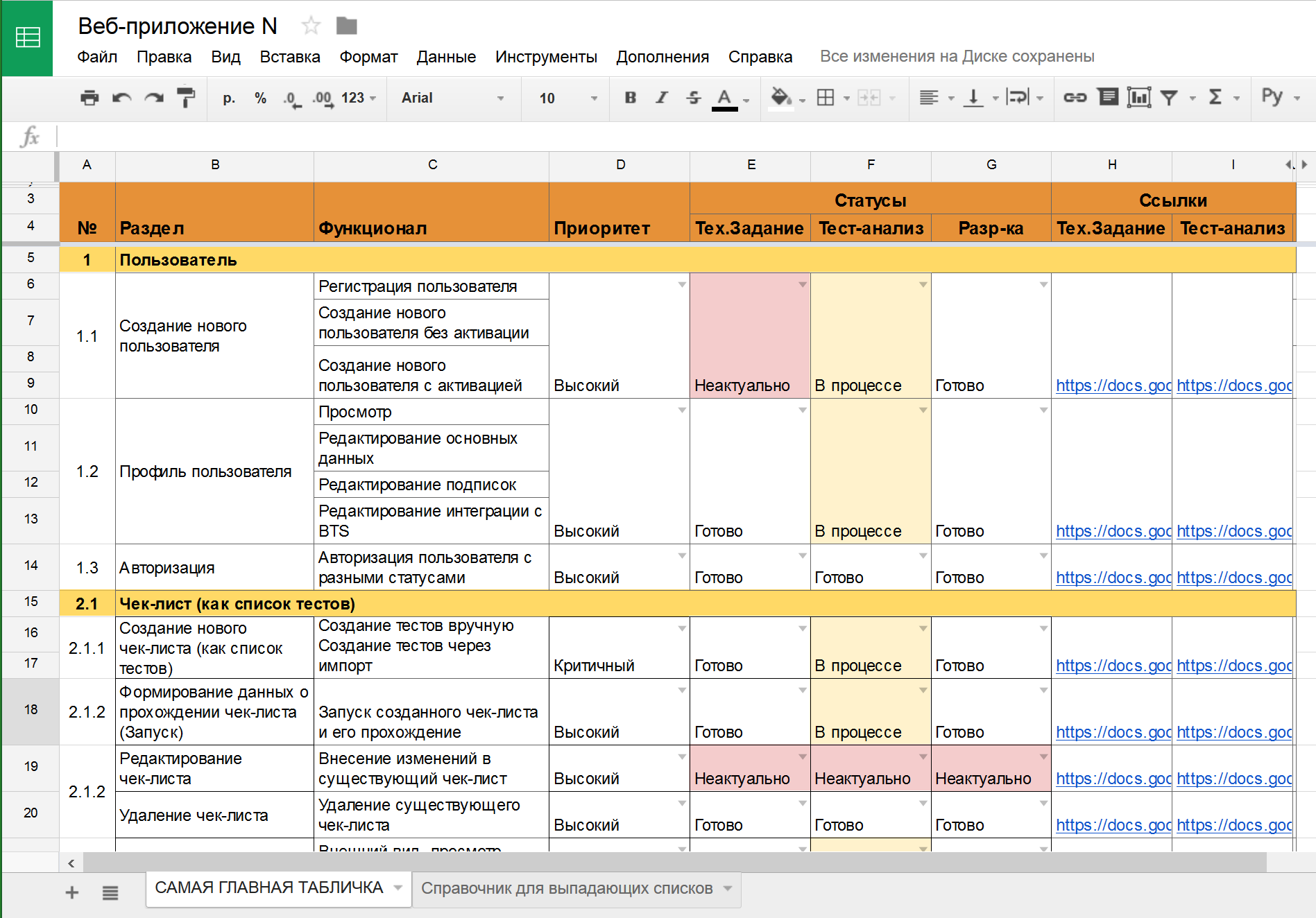

- Suppose you already have links to the technical specifications and other specifications in the form of a feature list, PBIs, requirements, wiki notes, etc. Suppose you already have tests for these requirements. And now a requirement changes! This may show up as a change in the RMS, a task in the TMS (Task Management System), or an email. In any case, the result is the same: your tests are out of date! Or may be out of date. So they need updating (test coverage of an old version of the product does not really count, does it?).

- In the feature list, in the RMS, in the TMS (Test Management System: TestRail, Sitechco, etc.), the tests must be marked as out of date, without fail and immediately! In HP QC or MS TFS this can happen automatically when requirements are updated; in a Google Sheets table or a wiki you will have to do it by hand. But you should see it right away: the tests are out of date! That means a full second pass lies ahead: update, redo the test analysis, rewrite the tests, agree on the changes, and only then mark the feature or requirement as “covered by tests” again.
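One simple way to make this visible right away is to store, next to each feature, the requirement version for which the tests were last agreed. The sketch below assumes a hypothetical `CoverageEntry` record with integer version numbers; it is not how HP QC or MS TFS implement this, only an illustration of the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CoverageEntry:
    feature: str
    requirement_version: int                        # bumped on every change
    tests_agreed_for_version: Optional[int] = None  # None = never agreed

    @property
    def status(self):
        if self.tests_agreed_for_version is None:
            return "no agreed tests"
        if self.tests_agreed_for_version < self.requirement_version:
            return "tests outdated"
        return "covered by tests"

    def requirement_changed(self):
        """Call when the RMS, a task, or an email announces a change."""
        self.requirement_version += 1

    def tests_reagreed(self):
        """Call only after the tests were updated and agreed again."""
        self.tests_agreed_for_version = self.requirement_version


# Hypothetical walk through the cycle for one feature.
entry = CoverageEntry("HTML formatting", requirement_version=1,
                      tests_agreed_for_version=1)
history = [entry.status]

entry.requirement_changed()   # the requirement was updated
history.append(entry.status)

entry.tests_reagreed()        # test analysis redone, changes agreed
history.append(entry.status)

print(history)
```

Because the status is derived from the two version numbers, nobody has to remember to flip it by hand when a requirement changes; only the version bump is needed.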

This way we get all the benefits of a test coverage assessment, and over time at that! Everyone is happy!!! But...

But you paid so much attention to working with requirements that now you do not have enough time either to test or to document tests. In my opinion (and there is room for a holy war here!), requirements are more important than tests, so better this way round! At least the requirements are in order, the whole team is in the loop, and the developers are building exactly what is needed. BUT THERE IS NO TIME LEFT FOR TEST DOCUMENTATION!

Problem: Not enough time to document tests.

In fact, the cause of this problem may be not only a lack of time but also your deliberate choice not to document tests (we don't like doing it, we want to avoid the pesticide paradox, the product changes too often, etc.). But how do we evaluate test coverage in this case?

- You still need requirements, either as full requirements or as a feature list, so some of the sections above will still apply, depending on how the analysts on the project work. Got the requirements or the feature list?

- We briefly describe and verbally agree on a testing strategy, without documenting specific tests! The strategy can be recorded in a table column, on a wiki page, or in a requirement in the RMS, and again it must be agreed upon. Within this strategy, checks will be carried out differently each time, but you will know when the feature was last tested and under which strategy. And that, you must admit, is not bad either! And everyone will be happy.
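Even without documented tests, the "strategy plus last tested date" record can answer a useful question: which features have not been looked at recently? The sketch below is an assumption-laden illustration: the log structure, the strategy texts, the dates, and the `max_age_days` threshold are all hypothetical and not part of the approach described above.

```python
from datetime import date


def needs_attention(log, today, max_age_days):
    """Features never tested, or last tested more than max_age_days ago."""
    result = []
    for feature, entry in log.items():
        last = entry["last_tested"]
        if last is None or (today - last).days > max_age_days:
            result.append(feature)
    return result


# Hypothetical strategy log: one agreed strategy per feature, no test cases.
strategy_log = {
    "HTML formatting": {
        "strategy": "exploratory session over combinations of tags",
        "last_tested": date(2024, 3, 1),
    },
    "Open file": {
        "strategy": "smoke check with supported and unsupported formats",
        "last_tested": date(2024, 3, 15),
    },
    "Print": {
        "strategy": "not agreed yet",
        "last_tested": None,
    },
}

# max_age_days=14 is an arbitrary threshold chosen for the example.
stale = needs_attention(strategy_log, today=date(2024, 3, 20), max_age_days=14)
print(stale)
```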

But... what other “but” is there? Which one???

Tell us, we will find a way around them all, and may quality products be with us!