Working with the mdadm utility: changing the array type and chunk size, expanding an array

Introduction

I was prompted to write this article by the lack of clear information in Russian on working with the mdadm utility, which implements RAID of various levels on Linux. The basics of the utility are, in principle, covered in sufficient detail elsewhere; you can read about them, for example, here: one, two, three.

Convert RAID1 to RAID5

The main task was converting a two-disk RAID1 array, created during the Ubuntu installation, to RAID5. I started, as usual, with Google and came across an earlier article on Habr. Unfortunately, when tested on virtual machines, that method reliably broke booting from the root partition. I could not determine whether this was caused by a newer version of mdadm or by the specifics of my test setup (the root partition is on the array, so the work has to be done from a live CD).

System version

root@u1:/home/alf# uname -a

Linux u1 3.2.0-23-generic #36-Ubuntu SMP Tue Apr 10 20:39:51 UTC 2012 x86_64 x86_64 x86_64 GNU/Linux

The disk partitioning in my case could not be simpler. I modeled it on a test virtual machine, allocating 20 GB for the disks:

The root partition is on the devices /dev/sda2 and /dev/sdb2, which are assembled into an array named /dev/md0 in the installed system. The boot area is present on both disks; /boot is not split off into a separate partition.

mdadm v3.2.5, which Ubuntu installs by default, can convert RAID levels in the direction 1 -> 5 but not back. The conversion is done with the array-modification command --grow (-G). Before converting, plug in a USB flash drive and mount it to hold the backup file; if something fails, we will restore the array from it. We mount it at /mnt/sdd1:

mkdir /mnt/sdd1

mount /dev/sdd1 /mnt/sdd1

mdadm --grow /dev/md0 --level=5 --backup-file=/mnt/sdd1/backup1

The operation usually completes very quickly and painlessly. Note that the resulting two-disk RAID5 array is in effect a RAID1 array that can be expanded. It becomes a full-fledged RAID5 only once at least one more disk is added.

It is extremely important to remember that our boot files are on this array, and that after a reboot GRUB will not automatically load the modules it needs to start from it. To fix this, update the bootloader configuration.

update-grub

If you did forget to run the update and, after the reboot, you get

cannot load raid5rec

grub rescue>

do not despair. Boot from a LiveCD, copy the /boot directory to the USB flash drive, reboot from the main partition into grub rescue> again, and load the RAID5 module from the flash drive following the usual instructions, for example like this.

Add the line

insmod /boot/grub/raid5rec.mod

After booting from the main partition, do not forget to run update-grub.

RAID5 array expansion

Expanding the converted array is described in every source and poses no problem. Do not power the machine off during the process.

- Clone the partition layout of an existing disk

sfdisk -d /dev/sda | sfdisk /dev/sdc

- Add the disk to the array

mdadm /dev/md0 --add /dev/sdc2

- Expand the array

mdadm --grow /dev/md0 --raid-devices=3 --backup-file=/mnt/sdd1/backup2

- Wait for the reshape to finish, watching the progress

watch cat /proc/mdstat

- Install the boot sector on the new disk

grub-install /dev/sdc

- Update the bootloader configuration

update-grub

- Grow the file system to fill the available space; this works on a live, mounted partition

resize2fs /dev/md0

- Inspect the resulting array

mdadm --detail /dev/md0

- Check the free space

df -h
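As a rough sanity check on what mdadm --detail and df -h should report after the reshape, the usable size of a RAID5 array is the per-disk size multiplied by (number of disks - 1). The function below is a minimal sketch of that arithmetic only. The name raid5_capacity_kib is made up for illustration, and the per-disk figure is the Used Dev Size value from this article's mdadm --detail output.

```shell
# Usable RAID5 capacity in KiB: one disk's worth of space goes to parity.
# raid5_capacity_kib is a hypothetical helper, not part of mdadm.
# The body runs in a subshell so its variables stay local.
raid5_capacity_kib() (
    per_disk_kib=$1
    disks=$2
    echo $((per_disk_kib * (disks - 1)))
)

raid5_capacity_kib 20967352 2   # two disks: 20967352, same as the RAID1 it came from
raid5_capacity_kib 20967352 3   # three disks: 41934704
```

The file system size shown by df -h will be somewhat smaller than this figure because of file system overhead.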

Changing the Chunk Size of an Existing RAID5 Array

RAID1 has no notion of chunk size, because a chunk is the size of the block that is written to the different disks in turn, and RAID1 does not stripe data this way. Once the array type is converted to RAID5, the parameter appears in the detailed array information:

mdadm --detail /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Tue Oct 29 03:57:36 2013

Raid Level : raid5

Array Size : 20967352 (20.00 GiB 21.47 GB)

Used Dev Size : 20967352 (20.00 GiB 21.47 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Tue Oct 29 04:35:16 2013

State : clean

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Layout : left-symmetric

Chunk Size : 8K

Name : u1:0 (local to host u1)

UUID : cec71fb8:3c944fe9:3fb795f9:0c5077b1

Events : 20

Number Major Minor RaidDevice State

0 8 2 0 active sync /dev/sda2

1 8 18 1 active sync /dev/sdb2

In this case the chunk size is chosen by the following rule: the greatest common divisor of the array size (here 20967352K) and the maximum automatically assigned chunk size (64K). In my case the largest possible chunk size is 8K (20967352 / 8 = 2620919, which is not divisible by 2 any further).
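The rule can be sketched as a small shell function. Because the 64K cap is a power of two, the greatest common divisor is simply the largest power of two, up to the cap, that divides the array size. The function name largest_chunk_kib is hypothetical; it only reproduces the arithmetic described here and is not taken from the mdadm source.

```shell
# Largest power-of-two chunk size (KiB) that divides the array size evenly,
# capped at max_kib (64 by default, as in the article).
# largest_chunk_kib is an illustrative helper, not an mdadm command.
largest_chunk_kib() (
    size_kib=$1
    max_kib=${2:-64}
    chunk=1
    while [ $((chunk * 2)) -le "$max_kib" ] &&
          [ $((size_kib % (chunk * 2))) -eq 0 ]; do
        chunk=$((chunk * 2))
    done
    echo "$chunk"
)

largest_chunk_kib 20967352   # array size from the article; prints 8
```

Passing a different cap as the second argument shows whether a given array size would already accept a larger chunk.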

If you plan to add disks later and grow the array, it makes sense to change the chunk size at some point. There is an interesting article about performance on this subject.

To change the chunk size, the array size must first be made a multiple of the new chunk size. Before shrinking the array it is important to shrink the file system on it: if you cut the array under a live file system that fills it completely, you will most likely overwrite the file system's superblock and are guaranteed to corrupt the file system.

How do we calculate the required size for the chunk size we want? Take the current maximum size of the array in kilobytes.

mdadm --detail /dev/md0 | grep Array

Divide it, discarding the remainder, by the desired chunk size and by (the number of disks in the array - 1, for RAID5). Multiply the resulting number back by the chunk size and by (the number of disks in the array - 1); the result is the size we need.

(41934704/256) / 2 = 81903; 81903 * 256 * 2 = 41934336
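The same calculation as a minimal shell sketch, using integer division to discard the remainder. The helper names aligned_array_kib and per_device_kib are invented for this example. The second helper derives the per-disk value that the later mdadm --grow --size step expects.

```shell
# Round the array size down to a whole number of full stripes.
# A full RAID5 stripe holds chunk_kib on each of the (disks - 1) data disks.
# Both helpers are illustrative; they are not part of mdadm.
aligned_array_kib() (
    array_kib=$1
    chunk_kib=$2
    data_disks=$(($3 - 1))
    stripes=$((array_kib / chunk_kib / data_disks))   # integer division
    echo $((stripes * chunk_kib * data_disks))
)

# Size used on each member disk for a given total RAID5 array size.
per_device_kib() (
    echo $(($1 / ($2 - 1)))
)

aligned_array_kib 41934704 256 3   # prints 41934336
per_device_kib 41934336 3          # prints 20967168
```

The first result is the new file system and array size; the second is the figure to pass to --size.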

A file system that is mounted and in use cannot be shrunk, and since this is the root partition, we reboot from any LiveCD (I like RescueCD; it loads mdadm automatically).

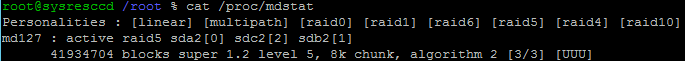

- Check the running arrays

cat /proc/mdstat

We find that our array is in read-only mode.

- Reassemble it as usual

mdadm --stop /dev/md127

mdadm --assemble --scan

- Check the file system on the array for errors, correcting any that are found

e2fsck -f /dev/md127

- Shrink the file system. We pass -S 256 so that the utility knows this file system will live on a RAID array, and append the letter K to the new size so that the utility does not interpret the number as a block count

resize2fs -p -S 256 /dev/md127 41934336K

- To resize the array we again use the --grow option of mdadm. Keep in mind that the size is changed on each disk, so the parameter is not the total array size but the size used on each disk; the total must therefore be divided by (the number of disks in the array - 1). The operation is instant

41934336 / 2 = 20967168

mdadm --grow /dev/md127 --size=20967168

- Reboot into the normal system and change the chunk size. Do not forget to mount the USB flash drive for the backup file first, then wait for the array to finish resynchronizing

mdadm --grow /dev/md0 --chunk=256 --backup-file=/mnt/sdd1/backup3

watch cat /proc/mdstat

The operation is long, much longer than adding a disk, so be prepared to wait. As before, do not power the machine off.
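Since the reshape runs for a long time, it can be handy to pull just the percentage out of /proc/mdstat instead of watching the whole file. The sketch below assumes the usual progress line of the form "reshape = 5.7% (done/total) ..."; the helper name reshape_progress and the sample text are invented for illustration and are not output from the author's machine.

```shell
# Print the progress percentage from mdstat-style text read on stdin.
# reshape_progress is a hypothetical helper; it prints nothing when no
# reshape, resync or recovery progress line is present.
reshape_progress() {
    awk '/reshape|resync|recovery/ {
        for (i = 1; i <= NF; i++) {
            field = $i
            sub(/^=/, "", field)          # handles the fused form "=100.0%"
            if (field ~ /^[0-9.]+%$/) { print field; exit }
        }
    }'
}

# Illustrative sample input, not real output from the article's system.
reshape_progress <<'EOF'
md0 : active raid5 sdc2[2] sdb2[1] sda2[0]
      41934336 blocks super 1.2 level 5, 8k chunk, algorithm 2 [3/3] [UUU]
      [=>...................]  reshape =  5.7% (1203200/20967168) finish=45.1min speed=7296K/sec
EOF
```

On a live system the same function would be fed from the real file, for example with reshape_progress < /proc/mdstat.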

Array recovery

If something failed at some stage of working with the array through mdadm, then to restore the array from the backup (you did remember to specify a backup file, didn't you?) boot from a LiveCD and reassemble the array, listing the member disks in the correct order and specifying the backup file to load

mdadm --assemble /dev/md0 /dev/sda2 /dev/sdb2 /dev/sdc2 --backup-file=/mnt/sdd1/backup3

In my case, the final chunk-size change put the array into the reshape state, but the rebuild itself did not start for a long time. I had to reboot from the LiveCD and restore the array. After that the rebuild proceeded, and when it finished the Chunk Size was the new one, 256K.

Conclusion

I hope this article helps you modify your home storage systems painlessly, and perhaps someone will come to the view that the mdadm utility is not as incomprehensible as it seems.

Read more

www.spinics.net/lists/raid/msg36182.html

www.spinics.net/lists/raid/msg40452.html

enotty.pipebreaker.pl/2010/01/30/zmiana-parametrow-md-raid-w-obrazkach

serverhorror.wordpress.com/2011/01/27/migrating-raid-levels-in-linux-with-mdadm

lists.debian.org/debian-kernel/2012/09/msg00490.html

www.linux.org.ru/forum/admin/9549160

For gourmets: fossies.org/dox/mdadm-3.3/Grow_8c_source.html