Legendary Intel Core i7-2600K: testing Sandy Bridge in 2019 (part 2)


In accordance with our processor testing policy, we take a premium motherboard with a suitable socket and equip the system with sufficient memory that runs at the maximum frequency supported by the manufacturer. Testing is also performed when possible with JEDEC settings.

We recognize that some users dispute this approach, pointing out that the maximum supported frequency is sometimes quite low, that faster memory is available at a similar price, or that sticking to supported frequencies can limit performance. Although these comments make sense, in the end very few consumers use memory profiles (XMP or others), because they require interaction with the BIOS, and most users fall back on the supported JEDEC speeds - this includes both home users and system builders who may want to shave a couple of cents off the cost or stay within the limits set by the manufacturer. Where possible, we will expand testing to add faster memory modules - either in this review or later.

Thank you very much ...

We must thank the companies listed below for kindly providing equipment for our test benches. Some of this hardware is not in this particular test system, but is used in other tests.

In order to keep up with the times, we have to update our software again. In updates, we usually move to the latest operating system, the latest patches, the latest software versions and graphics drivers, and add new tests or remove old ones. As regular readers know, our processor testing consists of a set of automated tests, and depending on how the latest software behaves, the suite has to change: tests are updated, removed, or rewritten completely. The last time we rewrote the tests from scratch, it took most of a month, including regression testing (testing old processors).

One of the key elements of our test update for 2018 (and 2019) is that our scripts and systems are prepared for protection against Spectre and Meltdown. This means that our BIOSes must be updated with the latest microcode, and all steps are performed on an operating system with the security updates applied. In this case, we use Windows 10 x64 Enterprise 1709 with the April security updates, which mitigate the Smeltdown threat (as we call the two vulnerabilities in one word). Users may ask why we are not using Windows 10 x64 RS4, the latest major update. This is due to some new features that give mixed results. Instead of spending several weeks studying whether they can be turned off, we continue to work with RS3.

Our previous set of benchmarks was divided into several segments, depending on what the test relates to and how it is perceived. Our new test suite follows similar rules, and we run tests based on:

Depending on the direction of the review, the order of these guidelines may change, and some of them may remain outside the scope of the review. All test results will be stored in our Bench database, which now has a new section “CPU 2019”.

In each section, we will conduct such tests:

Our power tests create the maximum workload for each thread in the system and then read the power registers on the chip to obtain details such as the power consumption of the cores, the whole chip, DRAM, and I/O, as well as per-core power. The result depends on how much information the chip manufacturer exposes: sometimes there really is a lot, sometimes almost nothing.

We currently use POV-Ray as the main power test, as it seems to hit deep into the system and is very consistent. To limit the number of cores during testing, we use an affinity mask controlled from the command line.
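As a rough illustration of how such an affinity mask can be built, here is a minimal Python sketch. It assumes the Windows `start /affinity <hexmask>` switch is used to launch the workload and that, with SMT enabled, sibling logical processors are numbered consecutively. The function names and the `bench.exe` program are hypothetical and not taken from the actual test scripts.

```python
def affinity_mask(num_cores: int, threads_per_core: int = 1) -> str:
    """Return a hex mask that selects one logical CPU on each of the first num_cores cores.

    Assumption: logical CPUs of one physical core are numbered consecutively,
    so core k owns bits k*threads_per_core .. k*threads_per_core + (threads_per_core - 1).
    """
    if num_cores < 1 or threads_per_core < 1:
        raise ValueError("num_cores and threads_per_core must be positive")
    mask = 0
    for core in range(num_cores):
        mask |= 1 << (core * threads_per_core)
    return format(mask, "X")


def build_command(num_cores: int, program: str, threads_per_core: int = 1) -> list:
    """Build a Windows command line that starts `program` pinned to the chosen cores."""
    mask = affinity_mask(num_cores, threads_per_core)
    # `start /wait /affinity <hex>` are standard cmd.exe switches; the program name is a placeholder.
    return ["cmd", "/c", "start", "/wait", "/affinity", mask, program]


if __name__ == "__main__":
    # Four cores without SMT, then one thread on each of four SMT cores.
    print(affinity_mask(4))
    print(affinity_mask(4, threads_per_core=2))
    print(build_command(2, "bench.exe"))
```

The resulting list could be passed to `subprocess.run` on a Windows machine; on other platforms the mask logic still applies, but the launch command would differ.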

These tests are carried out with all turbo modes in the system disabled, forcing it to run at the base frequency. They combine memory latency checks (Intel Memory Latency Checker works equally well on both platforms) with AIDA64 to measure cache bandwidth.

We recently automated about a dozen games at four different performance levels. Most games will have frame time data, but because of automation difficulties some will not. The idea is to get a good overview of a selection of different genres and engines. At the moment, we have automated the following games:

For our CPU Gaming tests, we will use an NVIDIA GTX 1080. For the pure CPU tests, we use an RX460, since we now have several units for parallel testing.

In previous years, we tested several GPUs on a small number of games. This time, following my Twitter poll, which came out exactly 50:50, we do the opposite: more games, fewer GPUs.

From time to time we get the same comment: automation is not the best way to test. There is a high barrier to entry, and this limits the tests that can be run. From our point of view, although it takes time to program the tests properly (and to prepare them correctly), automation offers the following advantages:

Our test software collates all the results and sends the data from running tests to a central repository, so I can analyze the data as it arrives. This also acts as a manual check in case any data looks abnormal.

We have one main limitation, and it applies to our gaming tests. We run several tests through one Steam account, and some games (e.g. GTA) are online only. Since Steam allows one account to run a game on only one system at a time, our game script checks the Steam API to determine whether we are “online” or not, and runs offline tests until the account is free to log in on that system. Depending on the number of games that strictly require online mode, this may become a bottleneck.
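One way to read this scheduling rule is sketched below in Python. This is only an illustration under stated assumptions: the `account_is_free` callable stands in for whatever check the real script performs against Steam, and the tuple layout and function name are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# Each pending test is a (name, requires_online) pair; the layout is illustrative only.
Test = Tuple[str, bool]


def next_test(pending: List[Test], account_is_free: Callable[[], bool]) -> Optional[Test]:
    """Choose the next game test for one machine sharing a single account.

    If the account is free, prefer an online-only title so it does not pile up
    at the end of the queue. Otherwise, pick a test that can run offline.
    Returns None when nothing can run right now.
    """
    free = account_is_free()
    if free:
        for test in pending:
            if test[1]:
                return test
        return pending[0] if pending else None
    for test in pending:
        if not test[1]:
            return test
    return None


if __name__ == "__main__":
    queue = [("Offline title", False), ("GTA", True)]
    print(next_test(queue, lambda: False))
    print(next_test(queue, lambda: True))
```

Preferring online-only titles whenever the account is free is a design choice of this sketch, aimed at the bottleneck described above; the actual script may order its tests differently.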

As always, we welcome benchmark requests. They help us understand the workloads users run and plan our testing accordingly.

A note on software packages: we have had requests for tests of software such as ANSYS and other professional-grade packages. The difficulty in testing this software is licensing and scale. Most of these companies do not particularly care that we run tests, and state that this is not part of their plans. Others, such as Agisoft, are more than willing to help. If you are involved in developing such software packages, the best way to see us use them is to lend us a hand. We have special versions of the software for some tests, and if we can get something that works and is relevant to our audience, it will be easy for us to add it to the test suite.

The “System tests” section focuses on real-world testing of what the consumer faces, with a slight bias toward throughput. In this section, we look at application load time, image processing, simple physics, emulation, neural simulation, optimized compute, and 3D model development, using readily available and customizable software. Although some of the simpler tests overlap with what large suites such as PCMark cover (we publish those values in the office tests section), different perspectives are still worth considering. For every test, we explain in detail what is being tested and how exactly we test it.

All our test results can also be found on our Bench page.

One of the most important aspects of the user experience and workflow is how fast the system responds. A good test of this is application load time. Most programs these days, when stored on an SSD, load almost instantly, but some office tools need to preload assets before they are ready to go. Most operating systems also use caching, so software that is loaded frequently (web browser, office tools) can be initialized much faster.

In the previous test suite, we measured how long it took to load a large PDF in Adobe Acrobat. Unfortunately, this test was a nightmare to program and refused to move to Win10 RS3 without a fight. In the meantime, we discovered an application that can automate this kind of test, and we decided to use GIMP, a popular free and open source photo editor and the main alternative to Adobe Photoshop. We configured it to load a large 50 MB design template, and performed the load 10 times with a 10-second interval between runs. Due to caching, the first 3-5 results are often slower than the rest, and the time to cache can be inconsistent, so we take the average of the last five results.
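The "ten runs, average the last five" rule can be expressed in a few lines of Python. This is a minimal sketch; the function name and the sample timings are made up for illustration and are not measured results.

```python
from statistics import mean


def cached_load_time(run_times, keep_last=5):
    """Average only the last `keep_last` runs, discarding the cache warm-up runs."""
    if len(run_times) < keep_last:
        raise ValueError("not enough runs to average")
    return mean(run_times[-keep_last:])


if __name__ == "__main__":
    # Illustrative timings in seconds: the first runs are slower while caches warm up.
    sample = [9.0, 8.0, 7.0, 6.0, 5.0, 4.0, 4.0, 4.0, 4.0, 4.0]
    print(cached_load_time(sample))
```

Discarding the first five runs trades some measurement time for a result that reflects steady-state, cached loading rather than disk and cache warm-up.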

Even overclocked, the 2600K cannot reach the performance of the 7700K, while the 9700K, with its higher single-threaded frequency, takes a worthy first place.

The FCAT software was designed to detect micro-stuttering and dropped frames in graphics tests where two video cards are combined to render a scene. Because of game engines and graphics drivers, not all GPU combinations worked perfectly, which led to software that captures a color overlay on each rendered frame, together with raw recording of the output using a video capture device.

The FCAT software takes the recorded video, in our case 90 seconds of 1440p footage from Rise of the Tomb Raider, and converts the color data into frame time data, so the system can display the “observed” frame rate and correlate it with the power consumption of the video accelerators. Because of how quickly it was put together, this test is single-threaded. We start the process and report the completion time as the result.

FCAT is another single-threaded test, so we see the same differences in performance: an overclocked 2600K cannot quite match the stock 7700K, and the 9700K pulls ahead.

Our 3DPM test is a custom benchmark designed to model six different algorithms for moving particles in three-dimensional space. The algorithms were developed as part of my Ph.D. thesis. Although they ultimately work best on a GPU, they give a good idea of how instruction streams are interpreted by various microarchitectures.

The key part of the algorithms is random number generation - we use a relatively fast generator, which ends up creating dependency chains in the code. The main update compared to the primitive first version of this code is that the false sharing problem in the caches, which was the main bottleneck, has been resolved. We are also considering AVX2 and AVX512 versions of this test for future reviews.

For this test, we run a stock set of particles through the six algorithms for 20 seconds each, with 10-second pauses, and report the total rate of particle movement in millions of operations (movements) per second.

3DPM v2.1 can be downloaded from our server: 3DPMv2.1.rar (13.0 MB)

Since the 2600K does not have AVX2, it lags far behind the 7700K / 9700K when the program is optimized for new instructions.

One of the most frequently requested tests in our suite is console emulation. Being able to pick up a game from an older system and run it is very attractive, and it depends on the effort put into the emulator: it takes a much more powerful x86 system to accurately emulate an older non-x86 console, especially if the code for that console was written to exploit certain physical quirks and hardware bugs.

For our test, we use the popular Dolphin emulation software and run a compute project through it to determine how closely our processors can emulate a console. In this test, a Nintendo Wii would take about 1050 seconds.

The latest version of Dolphin can be downloaded here.

Dolphin saw significant gains in the Haswell/Broadwell era, which led to an incredible increase in performance from the 2600K to the 7700K. Unfortunately, for various reasons, the overclocked processor failed this test.

This benchmark was originally designed to model and visualize the activity of neurons and synapses in the brain. The software comes with various predefined modes, and we chose a small benchmark that simulates a brain of 32 thousand neurons / 1.8 billion synapses, which is equivalent to the brain of a sea slug.

We report the results as the ability to simulate in real time, so any result above 1.0 is suitable for real-time operation. Of the two modes, “without synapse firing”, which is heavy on DRAM, and “with synapse firing”, which loads the processor, we select the latter. Despite our choice, the test is still affected by DRAM speed.
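To make the real-time threshold concrete, here is a minimal Python sketch. It assumes the reported score is the ratio of simulated time to wall-clock time; that definition, the function names, and the sample numbers are assumptions for illustration, not taken from DigiCortex itself.

```python
def realtime_factor(simulated_seconds: float, wall_seconds: float) -> float:
    """Ratio of simulated time to elapsed wall-clock time (assumed score definition)."""
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    return simulated_seconds / wall_seconds


def runs_in_real_time(factor: float) -> bool:
    """A factor of 1.0 or more means the simulation keeps pace with real time."""
    return factor >= 1.0


if __name__ == "__main__":
    # Illustrative numbers only: 10 s of simulated activity computed in 20 s and in 8 s.
    for wall in (20.0, 8.0):
        factor = realtime_factor(10.0, wall)
        print(factor, runs_in_real_time(factor))
```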

DigiCortex can be downloaded here

For the memory-related tests, we ran the systems at the supported frequencies specified by Intel, with the exception of the overclocked system, which received a significant boost from DDR3-1333 to DDR3-2400. The results show an increase in performance, but even the stock 7700K is faster. The move to the 9700K shows a further gain due to its additional cores.

I first heard about y-Cruncher as a tool for computing various mathematical constants. But after I started talking with its developer, Alex Yee, a researcher from NWU and now a software optimization developer, I realized that he has optimized the software to an incredible degree to get the best performance. Naturally, any simulation that takes 20+ days will benefit from a 1% increase in performance! Alex started y-Cruncher as a high school project, and the project is still kept up to date. Alex works on it constantly to take advantage of the latest instruction sets, even before they are available in hardware.

For our test, we run y-Cruncher v0.7.6 through all the available optimized binaries, single-threaded and multi-threaded, including the binaries optimized for AVX-512. The test calculates 250 million digits of Pi, and we use both the single-threaded and multi-threaded versions of this test.

Users can download y-Cruncher from Alex's website.

y-Cruncher is another benchmark that uses as many AVX features as possible for acceleration, demonstrating that the modern descendants of Sandy Bridge have additional benefits.

One of the ISVs we have worked with for several years is Agisoft. The company develops software called PhotoScan, which converts a series of 2D images into a 3D model. It is an important tool in model development and archiving, and relies on a number of single-threaded and multi-threaded algorithms to get from one side of the computation to the other.

In our test, we take version 1.3.3 of the software with a large data set of 84 photos of 18 megapixels each. We run the test through a fairly fast set of algorithms, but one still more rigorous than our 2017 test. We report the total time to complete the process.

The Agisoft Photoscan website can be found here.

Since this is a test with variable multithreading, overclocking 2600K gives a significant jump in performance compared to the stock version. However, the default 7700K makes another leap of almost the same size. And 9700K, thanks to a large number of cores, just laughs at the rest of the chips in this comparison.

In a professional environment, rendering is often the main processor workload. It is used in various forms, from 3D rendering to rasterization, in tasks such as games or ray tracing, and relies on the software's ability to manage meshes, textures, collisions, aliasing, and physics (in animation). Most renderers offer CPU code paths, while some use GPUs and select environments use FPGAs or specialized ASICs. However, for large studios, processors are still the main hardware.


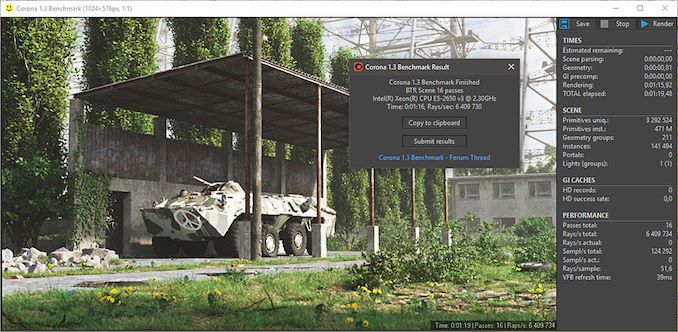

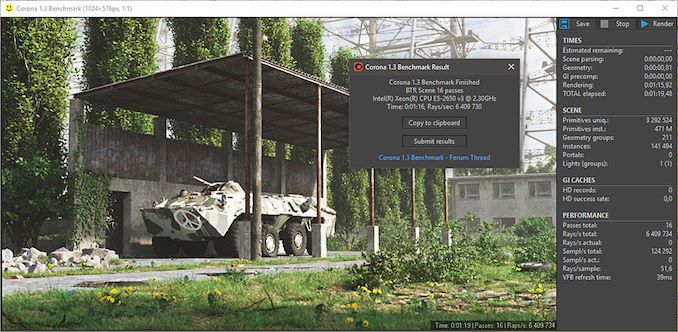

An advanced, performance-oriented renderer for software such as 3ds Max and Cinema 4D, the Corona benchmark renders a generated scene as a standard under version 1.3 of the software. Normally the GUI version of the benchmark shows the scene being built and lets the user see the result as a “time to complete”.

We contacted the developer, who gave us a command-line version of the test that outputs the results directly. Instead of reporting the time to build the scene, we report the average number of rays per second over six runs, since a rate of completed work per unit of time is visually easier to understand.

We see a noticeable difference in performance between the 7700K and the 2600K, due to microarchitecture updates and frequency. However, overclocking the 2600K only halves this gap.

A high-end rendering tool, Blender is an open source product with many settings and configurations, used by many high-end animation studios around the world. The organization recently released a Blender benchmark package, a couple of weeks after we had narrowed down the Blender test for our new suite, but their test can take more than an hour. For our results, we run one of the subtests in that package from the command line - the standard “bmw27” scene in “CPU only” mode - and measure the time to complete the render.

Blender can be downloaded at .

We see the same picture with the Blender test: overclocking only halves the performance gap between the 2600K and the stock 7700K. Overclock the 7700K as well and the gap becomes larger.

As stated above, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. In addition, there are many frameworks and APIs in which you can program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

In our test, we run the simple “Ball” scene using both the C++ and OpenCL code paths, but in CPU mode. The scene starts with a rough render and slowly improves in quality over two minutes, giving a final result that can be described as the “average number of thousands of rays per second”.

POV-Ray 3.7.1: ray tracing

The Persistence of Vision ray tracing engine is another well-known benchmarking tool. It was dormant for some time until AMD released its Zen processors, when suddenly both Intel and AMD started pushing code into the main branch of the open source project. For our test, we use the built-in all-core benchmark, called from the command line.

POV-Ray can be downloaded here

POV-Ray stands out from previous tests, and shows what role AVX2 plays in modern processors. POV-Ray also prefers cores rather than threads, so having eight real cores gives the 9700K a pretty good advantage.

The Office test suite is designed to focus on more standard industry benchmarks that focus on office workflows. These are more synthetic tests, but we also test the compiler performance in this section. For users who need to evaluate the equipment as a whole, these are usually the most important criteria to consider.


Futuremark, now known as UL, has been developing tests that have become industry standards for two decades. The latest system test suite is PCMark 10, in which several tests have been improved over PCMark 8 and more attention is paid to OpenCL, specifically in use cases such as video streaming.

PCMark splits its scores into roughly 14 different areas, including application startup, web browsing, spreadsheets, photo editing, rendering, video conferencing, and physics. We publish all of this data in our Bench database, but the overall score is the key indicator for this review.

A tool like PCMark does not really show the true scale of the differences, except in the main tests that are fully multithreaded, where the 9700K has the advantage. The 7700K outperforms the stock 2600K by only 17%, and the gap shrinks to 5% against the overclocked version. Perhaps this is also an indicator of how often you would feel the difference between a new 7700K and an overclocked 2600K: 5% of the time. Of course, this depends on your workload mix.

A large number of AnandTech readers are software engineers interested in how the hardware performs. Although compiling the Linux kernel is the “standard” test for reviewers who compile often, our test is a little more varied - we use the Windows instructions to compile Chrome, specifically a Chrome 56 build from March 2017, as that was current when we created the test. Google gives quite detailed instructions on how to compile under Windows after downloading 400,000 files from the repository.

In our test, following the instructions from Google, we use the MSVC compiler, and ninja to control compilation. As expected, this is a test with variable multithreading, and with variable requirements for DRAM, which benefit from faster caches. The results obtained in our test are the time spent on compilation, which we convert to the number of compilations per day.
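The conversion from compile time to compilations per day is simple arithmetic: divide the number of seconds in a day by the time one compile takes. A minimal Python sketch follows; the function name and the sample duration are illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def compiles_per_day(compile_seconds: float) -> float:
    """Convert the wall-clock time of one full compile into compiles per day."""
    if compile_seconds <= 0:
        raise ValueError("compile_seconds must be positive")
    return SECONDS_PER_DAY / compile_seconds


if __name__ == "__main__":
    # Illustrative value: a one-hour build corresponds to 24 builds per day.
    print(compiles_per_day(3600))
```

Reporting a rate rather than a duration means that higher is better, which matches how most of the other charts are read.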

This compilation test prefers the physical cores of the 9700K over SMT, and again we see that the overclocked 2600K sits midway between the stock 2600K and the stock 7700K. Even without overclocking, the 7700K is stronger.

Alongside PCMark there is 3DMark, Futuremark's (UL's) suite of gaming tests. Each gaming test consists of one or two GPU-heavy scenes as well as a physics test, depending on when the test was written and which platform it targets. The main tests, in order of increasing difficulty, are Ice Storm, Cloud Gate, Sky Diver, Fire Strike and Time Spy.

Some of the subtests offer other variants, such as Ice Storm Unlimited, which is designed for mobile platforms with off-screen rendering, or Fire Strike Ultra, which targets high-end 4K systems with many added features. It is worth noting that Time Spy currently has an AVX-512 mode (which we may use in the future).

As for our tests, we send the results of every physics test to Bench, but for the review we stick to the results of only the most demanding scenes: Ice Storm Unlimited, Cloud Gate, Sky Diver, Fire Strike Ultra and Time Spy.

A common tool for cross-platform testing on mobile devices, PCs and Macs, GeekBench 4 is the ultimate exercise in synthetic testing, using a range of algorithms that look for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I am including this test because it is so frequently requested, although the results are very synthetic. Many users attach great importance to its results because it is compiled for different platforms (albeit with different compilers).

We record the ratings of the main subtests (Crypto, Integer, Floating Point, Memory) in our database of test results, but for the review we publish only general single-threaded and multi-threaded results.

With the increase in the number of streams, video blogs and video content in general, coding and transcoding tests are becoming increasingly important. Not only are there more and more home users and gamers engaged in converting video files and video streams, but servers that process data streams need encryption on the fly, as well as compression and decompression of logs. Our coding tests are aimed at such scenarios, and take into account the opinion of the community in order to provide the most relevant results.


A popular open source tool, Handbrake is software for converting video in any way possible, and in a sense it is the reference point. The danger here lies in version numbers and optimization. For example, recent versions of the software can take advantage of AVX-512 and OpenCL to speed up certain types of transcoding and certain algorithms. The version we use is CPU only, with standard transcoding options.

We split Handbrake into several tests using a native recording from a Logitech C920 1080p60 webcam (essentially a stream recording). The recording is converted into two streaming formats and one archival format. The output parameters used:

Of all our archiving / unarchiving tests, 7-zip is the most popular and has a built-in benchmark. We added the latest version of this software to our test suite, and we launch the benchmark from the command line. The results of archiving and unarchiving are displayed as a single total score.

This test clearly shows that modern multi-die processors have a big difference in performance between compression and decompression: they perform well in one and poorly in the other. In addition, we are actively discussing how the Windows Scheduler places each thread. When we have more results, we will be happy to share our thoughts on this.

Please note: if you plan to publish compression data anywhere, please include the decompression results as well. Otherwise you are presenting only half of the result.

When I need a compression tool, I usually choose WinRAR. Many users of my generation were already using it more than two decades ago. The interface has not changed much, although the integration with Windows right-click commands is a very nice plus. It does not have a built-in benchmark, so we compress a directory containing more than thirty 60-second video files and 2000 small web files at the normal compression setting.

WinRAR has variable multithreading and is sensitive to caching, so in our test we run it 10 times and take the average of the last five runs, so that the result reflects processor performance only.

WinRAR finally allows the overclocked 2600K to almost equal the stock 7700K. This is a multi-threading test, and it does not seem to use any of the new instructions offered by the 7700K. However, a larger number of cores in 9700K, as opposed to threads, brings a big bonus, as does the upgraded DRAM.

A number of platforms, especially mobile devices, encrypt file systems by default to protect content. On Windows devices, encryption is often handled by BitLocker or third-party software. In the AES encryption test, we use the discontinued TrueCrypt and its built-in benchmark, which checks several encryption algorithms directly in memory.

The data from this test is the combined AES encryption/decryption performance, measured in gigabytes per second. The software uses AES instructions if the processor supports them, but does not use AVX-512.

Because they target low-end or small form factor systems, web tests are usually difficult to standardize. Modern web browsers update frequently, with no option to disable those updates, so it is difficult to maintain a common platform. The rapid pace of browser development means that versions (and performance figures) can change from week to week. Despite this, web tests are often an important indicator for users: much of today's office work involves web applications, especially email and office applications, as well as interfaces and development environments. Our web test suite includes several industry standard tests, as well as several popular but somewhat outdated tests.

We also included our obsolete but still popular tests in this section.


The company behind the XPRT test suites, Principled Technologies, recently released its latest web test, and instead of adding a year to the name, simply called it “3”. This latest (at least for now) test builds on its predecessors: user interaction tests, office compute, graphing, list sorting, HTML5, image manipulation, and in some cases even AI tests.

For our benchmark, we run the standard test, which goes through the benchmark list seven times and produces a final score. We run this test four times and report the average.

Users can access the WebXPRT test at

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are nonetheless still in use today. This is still a relevant test, especially for users who work with web applications that are not the latest on the market, and there are many such users. Web frameworks evolve quickly and with high turnover: frameworks are rapidly developed, built into applications, used, and then developers move on to the next one. Porting an application to a new framework is a difficult task, especially at such a pace of development cycles. For this reason, many applications are “stuck in time” and remain relevant to users for many years.

As with WebXPRT3, the main benchmark runs through the test set seven times and produces a final score. We repeat this four times and report the average.

Our newest web test is Speedometer 2, which runs through a number of JavaScript frameworks to do just three simple things: build a list, enable each item in the list, and remove the list. All frameworks implement the same visual cues, but, obviously, they do so in different ways.

Our test runs through the entire list of frameworks and produces a final score labeled “rpm” (runs per minute), one of the benchmark's internal metrics. We report this value as the final result.

A popular web test for several years, but no longer updated, is Octane from Google. Version 2.0 performs a couple of dozen compute-related tasks, such as regular expressions, cryptography, ray tracing, emulation, and the calculation of the Navier-Stokes equations.

The test assigns a score to each subtest and returns the geometric mean as the final result. We run the complete benchmark four times and average the final results.
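For readers unfamiliar with the geometric mean, the Python sketch below shows how a set of subtest scores can be combined this way. It illustrates the general formula only and is not Octane's own code; the function name and the sample scores are invented.

```python
import math


def geometric_mean(scores):
    """Geometric mean of positive scores, computed via logarithms to avoid overflow."""
    if not scores:
        raise ValueError("at least one score is required")
    if any(s <= 0 for s in scores):
        raise ValueError("scores must be positive")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


if __name__ == "__main__":
    # Illustrative subtest scores: one very high result does not dominate the total.
    print(geometric_mean([2.0, 8.0]))
    print(geometric_mean([100.0, 100.0, 10000.0]))
```

Compared with an arithmetic mean, the geometric mean weights relative changes equally across subtests, so doubling the score of any single subtest moves the total by the same factor regardless of that subtest's absolute scale.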

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that performs relatively monotonous computational mechanics, such as audio processing or image filtering. Kraken seems to produce highly variable results depending on the browser version, as it is a test that browsers are heavily optimized for.

The main benchmark passes through each of the subtests ten times, and returns the average completion time for each cycle in milliseconds. We run the full benchmark four times, and measure the average result.

The first “legacy” test in the suite is the first version of our 3DPM test. This is the ultimate naive version of the code, as if it had been written by a scientist with no knowledge of how computer hardware, compilers, or optimization work (which is how it was at the very beginning). The test represents a large body of scientific simulation in the wild, where getting an answer matters more than how fast it is computed (getting a result in 4 days is acceptable if it is correct; spending a year learning to program and then getting the result in 5 minutes is not).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling in release mode, and enabling OpenMP in the main compute loops. The loops were not tuned for function size, and the most serious slowdown is false sharing in the cache. The code also has long dependency chains based on random number generation, which reduces performance on some microarchitectures.

3DPM v1 as well as 3DPM v2 can be downloaded here: 3DPMv2.1.rar (13.0 MB)

This transcoding test is very old; AnandTech used it back in the days of the Pentium 4 and Athlon II processors. In it, a standardized 720p video is encoded in two passes, and the benchmark reports the frames per second of each pass. The test is single-threaded, and on some architectures we run into the IPC (instructions per clock) limit.


Testing equipment

In accordance with our processor testing policy, we take a premium motherboard with a suitable socket and equip the system with sufficient memory that runs at the maximum frequency supported by the manufacturer. Testing is also performed when possible with JEDEC settings.

We acknowledge that some users dispute this approach, pointing out that the maximum supported frequency is sometimes quite low, that faster memory is available at a similar price, or that running at the supported frequencies can reduce performance. Although these comments make sense, in the end very few consumers use memory profiles (XMP or others), because they require interaction with the BIOS, and most users fall back on the supported JEDEC speeds. This includes both home users and industry users who may want to shave a couple of cents off the cost or stay within the limits set by the manufacturer. Where possible, we will expand testing to include faster memory modules, either in this review or later.

Thank you very much ...

We must thank the companies listed below for the kindly provided equipment for our test benches. Some of this hardware is not included in this test system, but is used in other tests.

Our new test suite for 2019 and 2020:

Hardened against Spectre and Meltdown

In order to keep up with the times, we have to update our software again. In updates, we usually use the latest operating system, the latest fixes, the latest software versions and graphics drivers, as well as add new tests or delete old ones. As regular readers know, our processor testing consists of a set of automated tests, and depending on how the latest software works, the package has to be changed: update or delete tests, or completely redo it. The last time we rewrote tests from scratch, it took most of the month, including regression testing (testing old processors).

One of the key elements of our test update for 2018 (and 2019) is that our scripts and systems are prepared to protect against Spectre and Meltdown. This means that our BIOSes must be updated with the latest microcode, and all tests are run on an operating system with the security updates installed. In this case, we use Windows 10 x64 Enterprise 1709 with the April security updates, which mitigate the Smeltdown threat (as we call the two vulnerabilities in one word). Users may ask why we are not using Windows 10 x64 RS4, the latest major update. This is due to some new features that give mixed results. Instead of spending several weeks working out how to turn them off, we will continue to work with RS3.

Our previous set of benchmarks was divided into several segments, depending on what the test relates to and how it is perceived. Our new test suite follows similar rules, and we run tests based on:

- Power

- Memory

- Office

- System

- Render

- Encoding

- Web

- Legacy

- Integrated gaming

- CPU gaming

Depending on the focus of the review, the order of these sections may change, and some of them may be left out of the review entirely. All test results will be stored in our Bench database, which now has a new “CPU 2019” section.

In each section, we run the following tests:

Power

Our power tests create the maximum workload on every thread in the system and then read the power registers on the chip to find details such as core power, package power, DRAM power, I/O power, and per-core power. The result depends on how much information the chip manufacturer exposes: sometimes there is a lot, sometimes almost nothing.

We are currently using POV-Ray as the main power test, as it seems to hit deep into the system and is very consistent. To limit the number of cores during testing, we use an affinity mask set from the command line.
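The affinity-mask idea can be sketched roughly as follows. This is only an illustration, not the script used for the review: the helper names and the `benchmark.exe` placeholder are assumptions. It relies on the Windows `start /affinity` command, which takes a hexadecimal mask in which bit n selects logical processor n.

```python
def affinity_mask(n_cpus: int) -> int:
    """Return a bitmask with the lowest n_cpus bits set (bit n = logical CPU n)."""
    if n_cpus < 1:
        raise ValueError("need at least one logical CPU")
    return (1 << n_cpus) - 1


def start_command(n_cpus: int, program: str) -> str:
    """Build a cmd.exe line that launches `program` restricted to n_cpus logical CPUs."""
    # `start /affinity` expects the mask as a hexadecimal number without a 0x prefix.
    return f"start /wait /affinity {affinity_mask(n_cpus):X} {program}"


if __name__ == "__main__":
    # "benchmark.exe" is a placeholder, not the actual POV-Ray invocation.
    for n in (1, 2, 4, 8):
        print(start_command(n, "benchmark.exe"))
```

Note that on a processor with SMT, neighboring bits may refer to two threads of the same physical core, so a real script would have to choose the bits according to the topology of the chip under test.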

Memory

These tests are run with all turbo modes disabled, so that the system runs at its base frequency. They include both a memory latency check (Intel Memory Latency Checker works equally well on both platforms) and AIDA64 to measure cache bandwidth.

Office

- Chromium Compile: Windows VC++ compile of Chrome 56 (as in 2017)

- PCMark10: The overall score goes in the review; sub-test results can be seen in Bench

- 3DMark Physics: We run every sub-test for Bench, but report the most important ones (new)

- GeekBench4: By request (new)

- SYSmark 2018: Recently released by BAPCo; we are currently working on adding it to our automated test suite (new, not yet available)

System

- Application Load: GIMP 2.10.4 (new)

- FCAT: 90-second ROTR 1440p recording processing (same as in 2017)

- 3D Particle Movement: Particle distribution test (as in 2017) - and also we have versions for AVX2 and AVX512, which we will try to add later;

- Dolphin 5.0: Console emulator (as in 2017)

- DigiCortex: Sea slug brain simulator (as in 2017)

- y-Cruncher v0.7.6: Pi calculation with optimized instruction set of new CPUs (new)

- Agisoft Photoscan 1.3.3: 2D to 3D Conversion Tool (updated)

Render

- Corona 1.3: Rendering performance in 3dsMax, Cinema4D (as in 2017)

- Blender 2.79b: bmw27 rendering on CPU (upgraded to 2.79b)

- LuxMark v3.1 C++ and OpenCL: Testing different rendering code paths (as in 2017)

- POV-Ray 3.7.1: Built-in benchmark (updated)

- CineBench R15: An outdated Cinema4D test is likely to remain in Bench (same as in 2017)

Encoding

- 7-zip 1805: built-in benchmark (upgraded to v1805)

- WinRAR 5.60b3: Compression test of a folder containing video and web files (updated to 5.60b3)

- AES Encryption: AES performance in memory. Somewhat outdated test. (stayed from 2017)

- Handbrake 1.1.0: the Logitech C920 1080p60 initial file is transcoded into three formats for streaming / storage:

- 720p60, x264, 6000 kbps CBR, Fast, High Profile

- 1080p60, x264, 3500 kbps CBR, Faster, Main Profile

- 1080p60, HEVC, 3500 kbps VBR, Fast, 2-Pass Main Profile

Web

- WebXPRT3: Latest WebXPRT Test (updated)

- WebXPRT15: Similar to 3, but somewhat outdated. (stayed from 2017)

- Speedometer2: Javascript Framework test (new)

- Google Octane 2.0: Deprecated but popular web test (left over from 2017)

- Mozilla Kraken 1.1: Deprecated but popular web test (left from 2017)

Legacy (set has not changed since 2017)

- 3DPM v1: Old version of 3DPM

- x264 HD 3.0: Old Transcoding Benchmark

- Cinebench R11.5 and R10: Representatives of Various Coding Methodologies

Integrated and CPU Gaming

We recently automated about a dozen games at four different performance levels. Most games will have frame time data, but because of automation difficulties, some will not. The idea is to get a good overview across a selection of genres and engines. At the moment, we have automated the following games:

For our CPU Gaming tests, we use an NVIDIA GTX 1080. For the pure CPU tests, we use an RX460, since we now have several units for parallel testing.

In previous years, we tested several GPUs on a small number of games. This time, thanks to my survey on Twitter, which gave exactly 50:50, we do the opposite: more games, fewer GPUs.

Scale Up vs. Scale Out: Automation Benefits

From time to time we get the same comment: automation is not the best way to test. There is a high barrier to entry, and it limits which tests can be run. From our point of view, although it takes time to program properly (and to prepare the test correctly), automation has the following advantages:

- It guarantees consistent cooldown breaks between tests, rather than a random recovery time that depends on whether I happen to be looking at the screen;

- Allows us to test multiple systems simultaneously. I currently use five systems in my office (limited by the number of 4K monitors and free space), which means that we can check more equipment at the same time;

- We can leave the tests to run at night, which helps a lot in time;

- Adding new tests is very easy with the right approach.
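The first point in the list above, fixed cooldown breaks, can be illustrated with a very small harness. This is a sketch under stated assumptions rather than our actual tooling: `run_suite`, the 60-second default, and the injectable `sleep` and `clock` parameters are all hypothetical names chosen for the example.

```python
import time
from typing import Callable, Dict, Iterable, Tuple


def run_suite(
    tests: Iterable[Tuple[str, Callable[[], None]]],
    cooldown_s: float = 60.0,  # assumed value, for illustration only
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.perf_counter,
) -> Dict[str, float]:
    """Run each (name, test) pair in order and pause for the same time after each."""
    results: Dict[str, float] = {}
    for name, test in tests:
        start = clock()
        test()
        results[name] = clock() - start
        # The pause is identical every time, whether or not anyone is watching.
        sleep(cooldown_s)
    return results


if __name__ == "__main__":
    demo = [("spin", lambda: sum(range(100_000)))]
    print(run_suite(demo, cooldown_s=0.1))
```

Because the pause is a parameter rather than a human decision, every system in a batch gets the same recovery time between workloads.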

Our test software collates all the results and sends the data from the running tests to a central repository, so I can analyze the data as it arrives. This also serves as a manual check in case any data looks abnormal.

We have one main limitation, and it applies to our gaming tests. We run several tests through one Steam account, and some games (e.g. GTA) are online-only. Since Steam allows one account to run a game on only one system at a time, our game script checks the Steam API to determine whether we are “online” or not, and runs the offline tests until the account is free to log in on that system. Depending on the number of games that strictly require online mode, this can become a bottleneck.

Benchmark Bundle Updates

As always, we accept benchmark requests. They help us understand the workloads users run and plan our testing accordingly.

Software Package Note: We have had requests to test software such as ANSYS or other professional-level packages. The drawback of testing this software is licensing and scale. Most of these companies do not particularly care about us running tests and say it is not part of their plans. Others, such as Agisoft, are more than willing to help. If you are involved in developing such software packages, the best way to see us use them is to get in touch. We have special versions of the software for some tests, and if we can get something that works and is relevant to our audience, it will be easy for us to add it to the test suite.

System tests

The “System tests” section focuses on testing under the real conditions a consumer faces, with a slight bias toward throughput. In this section, we look at application load time, image processing, simple physics, emulation, neural modeling, optimized computation, and 3D model development, using readily available and customizable software. Although some of these tests overlap with what large suites such as PCMark cover (we publish those values in the office tests section), it is still worth looking at them from different angles. For every test, we explain in detail what is being tested and how.

All our test results can also be found on our Bench page.

Application Load: GIMP 2.10.4

One of the most important aspects of user experience and workflow is how responsive the system is. A good test here is application load time. Most programs these days load almost instantly when stored on an SSD, but some office tools need to preload assets before they are ready to go. Most operating systems also use caching, so software that is loaded frequently (web browser, office tools) can initialize much faster.

In the previous test suite, we measured how long it took to load a large PDF in Adobe Acrobat. Unfortunately, that test was a nightmare to program and refused to move to Win10 RS3 without a fight. In the meantime, we found an application whose loading we can automate, so we decided to use GIMP, a popular free and open-source photo editor and the main alternative to Adobe Photoshop. We configured it to load a large 50 MB design template and performed the load 10 times with 10 seconds between runs. Due to caching, the first 3-5 results are often slower than the rest, and the time to cache can be inconsistent, so we take the average of the last five results.
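The "average of the last five of ten runs" rule is simple enough to write down. The sketch below is illustrative only: the function name is hypothetical and the timings are made up, not measured results.

```python
from statistics import mean
from typing import Sequence


def steady_state_time(times_s: Sequence[float], keep_last: int = 5) -> float:
    """Average the last `keep_last` samples, discarding the cache-warming runs before them."""
    if len(times_s) < keep_last:
        raise ValueError("not enough runs to average")
    return mean(times_s[-keep_last:])


if __name__ == "__main__":
    # Made-up load times in seconds: the early runs are slower while caches warm up.
    runs = [9.8, 8.9, 7.4, 6.3, 6.1, 6.0, 6.2, 6.0, 6.1, 6.2]
    print(f"{steady_state_time(runs):.2f} s")
```

Dropping the early runs keeps inconsistent cache-warming behavior out of the reported figure.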

Even overclocked, 2600K cannot reach the performance of 7700K, while 9700K with a higher single-threaded frequency takes a worthy first place.

FCAT: image processing

FCAT software was designed to detect micro-stuttering, dropped frames, and runt frames in graphics tests where two video cards are paired to render a scene. Because of game engines and graphics drivers, not all GPU combinations worked well, so the software added a color to each rendered frame, and the raw output was recorded with a video capture device.

FCAT software takes the recorded video, in our case 90 seconds of 1440p footage from Rise of the Tomb Raider, and converts the color data into frame time data, so that the system can display the “observed” frame rate and correlate it with the power consumption of the video accelerators. Because of how quickly it was put together, this test is single-threaded. We run the process and report the completion time as the result.

FCAT is another single-threaded test, so we see the same differences in performance: an overclocked 2600K cannot quite compare with the stock 7700K, and 9700K breaks ahead.

3D Particle Movement v2.1: Brownian motion

Our 3DPM test is a custom benchmark that models six different algorithms for moving particles in three-dimensional space. The algorithms were developed as part of my Ph.D. thesis; they ultimately work best on a GPU, and they give a good idea of how instruction streams are interpreted by different microarchitectures.

The key part of the algorithms is random number generation: we use relatively fast generation, which ends up creating dependency chains in the code. The main update compared to the primitive first version of this code is that the false sharing problem in the caches, which was the main bottleneck, has been resolved. We are also considering AVX2 and AVX512 versions of this test for future reviews.
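To show what a dependency chain in random number generation looks like, here is a minimal sketch using a textbook linear congruential generator. This is not the generator used in 3DPM; the function name and the constants (the well-known Numerical Recipes 32-bit LCG parameters) are chosen purely for illustration.

```python
from typing import Iterator


def lcg_stream(seed: int, count: int) -> Iterator[float]:
    """Yield `count` pseudo-random floats in [0, 1) from a 32-bit LCG."""
    state = seed & 0xFFFFFFFF
    for _ in range(count):
        # Each new state is computed from the previous one, so the updates
        # form a serial chain that cannot be reordered or run in parallel.
        state = (1664525 * state + 1013904223) & 0xFFFFFFFF
        yield state / 2**32


if __name__ == "__main__":
    for value in lcg_stream(seed=2019, count=5):
        print(f"{value:.6f}")
```

However cheap each step is, step n cannot start before step n-1 has finished, which is why the latency of the multiply-add matters more here than the raw throughput of the core.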

For this test, we run a stock set of particles through the six algorithms for 20 seconds each, with 10-second pauses, and report the total rate of particle movement in millions of operations (movements) per second.

3DPM v2.1 can be downloaded from our server: 3DPMv2.1.rar (13.0 MB)

Since the 2600K does not have AVX2, it lags far behind the 7700K / 9700K when the program is optimized for new instructions.

Dolphin 5.0: console emulation

One of the most frequently requested tests in our suite is console emulation. Being able to pick a game from an old system and run it is very attractive, and it depends on the effort of the emulator: it takes a much more powerful x86 system to accurately emulate an old non-x86 console, especially if the code for that console was written to exploit particular physical quirks and hardware bugs.

For our test, we use the popular Dolphin emulation software and run a compute project through it to determine how closely our processors can emulate the console. In this test, a real Nintendo Wii would take about 1050 seconds.

The latest version of Dolphin can be downloaded here.

Dolphin saw significant gains in the Haswell / Broadwell era, which led to a dramatic increase in performance from the 2600K to the 7700K. Unfortunately, for various reasons, the overclocked processor failed this test.

DigiCortex 1.20: Modeling the brain of a marine slug

This benchmark was originally designed to model and visualize the activity of neurons and synapses in the brain. The software comes with various predefined modes, and we chose a small benchmark that simulates a brain of 32 thousand neurons / 1.8 billion synapses, which is equivalent to the brain of a sea slug.

We report the result as the fraction of real time at which the data can be simulated, so any result above “one” is suitable for real-time operation. Of the two modes, the “non-firing” mode, which is DRAM-heavy, and the “firing” mode, which loads the processor, we choose the latter. Despite this choice, the test is still affected by DRAM speed.

DigiCortex can be downloaded here

For the memory-related tests, we ran the systems at the supported frequencies specified by Intel, with the exception of the overclocked system, which got a significant memory boost from DDR3-1333 to DDR3-2400. The results show an increase in performance, but even the stock 7700K is faster. And moving to the 9700K shows a further gain due to the additional cores.

y-Cruncher v0.7.6: Calculations optimized for microarchitecture

I once heard about y-Cruncher as a tool for computing various mathematical constants. But after I started talking with its developer, Alex Yee, an NWU researcher and now a software optimization developer, I realized that he has optimized the software to an incredible degree to get the best performance. Naturally, any simulation that takes 20+ days benefits from a 1% performance gain! Alex started y-Cruncher as a high school project, and he now keeps it up to date to take advantage of the latest instruction sets, even before they become available in hardware.

For our test, we run y-Cruncher v0.7.6 through all the available optimized binaries, single-threaded and multi-threaded, including the binaries optimized for AVX-512. The test calculates 250 million digits of Pi, and we use both the single-threaded and multi-threaded versions of this test.

Users can download y-cruncher from Alex's website.

Y-cruncher is another benchmark that uses as many AVX features as possible to accelerate, demonstrating that modern descendants of Sandy Bridge have additional benefits.

Agisoft Photoscan 1.3.3: Convert 2D Image to 3D Model

One of the ISVs we have been working with for several years is Agisoft. The company develops software called PhotoScan, which converts a series of 2D images into a 3D model. This is an important tool in model development and archiving, and it relies on a number of single-threaded and multi-threaded algorithms to get from one side of the computation to the other.

In our test, we take version 1.3.3 of the software with a large data set: 84 photos of 18 megapixels each. We run the test with a fairly fast set of algorithms, but one that is still more demanding than our 2017 test. We report the total time to complete the process.

The Agisoft Photoscan website can be found here.

Since this is a test with variable multithreading, overclocking 2600K gives a significant jump in performance compared to the stock version. However, the default 7700K makes another leap of almost the same size. And 9700K, thanks to a large number of cores, just laughs at the rest of the chips in this comparison.

Rendering Tests

In a professional environment, rendering is often the main processor workload. It is used in various forms, from 3D rendering to rasterization, in tasks such as games or ray tracing, and it relies on the software's ability to manage meshes, textures, collisions, aliasing, and physics (in animation). Most renderers offer CPU code paths, some use GPUs, and select environments use FPGAs or dedicated ASICs. For large studios, however, CPUs are still the main hardware.

All our test results can also be found on our Bench page.

Corona 1.3: Performance Render

An advanced performance-oriented renderer for software such as 3ds Max and Cinema 4D, the Corona benchmark renders a standard generated scene using version 1.3 of the renderer. Normally, the GUI version of the benchmark shows the scene being built and lets the user see the result as “time to complete”.

We contacted the developer, who gave us a command-line version of the test that outputs the results directly. Instead of reporting the time taken to build the scene, we report the average number of rays per second over six runs, since a rate of work done per unit of time is easier to interpret visually.

We see a noticeable difference in performance between 7700K and 2600K, due to microarchitecture updates and frequency, however, overclocking 2600K only halves this gap.

Blender 2.79b: 3D Creation Suite

A high-end rendering tool, Blender is an open-source product with many settings and configurations, and it is used by many high-end animation studios around the world. The organization released a Blender benchmark suite a couple of weeks after we had narrowed down the Blender test for our new package, but their test can take more than an hour. To get our results, we run one of the subtests from that suite from the command line, the standard “bmw27” scene in “CPU only” mode, and measure the time to complete the render.

Blender can be downloaded at .

We see the same picture in the Blender test: overclocking only halves the performance gap between the 2600K and the stock 7700K. Overclock the 7700K as well and the gap becomes larger.

LuxMark v3.1: LuxRender via various code paths

As stated above, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. In addition, there are many frameworks and APIs in which you can program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

In our test, we run a simple “Ball” scene using both the C++ and OpenCL code paths, but in CPU mode. The scene starts with a rough render and slowly improves in quality over two minutes, giving a final result that can be described as “average thousands of rays per second”.

POV-Ray 3.7.1: ray tracing

The ray tracing engine Persistence of Vision is another well-known benchmarking tool that went into hibernation for some time until AMD released its Zen processors when suddenly both Intel and AMD started pushing code into the main branch of an open source project. For our test, we use the built-in test for all cores, called from the command line.

POV-Ray can be downloaded here

POV-Ray stands out from previous tests, and shows what role AVX2 plays in modern processors. POV-Ray also prefers cores rather than threads, so having eight real cores gives the 9700K a pretty good advantage.

Office tests

The Office test suite is designed to focus on more standard industry benchmarks that focus on office workflows. These are more synthetic tests, but we also test the compiler performance in this section. For users who need to evaluate the equipment as a whole, these are usually the most important criteria to consider.

All our test results can also be found on our Bench page.

PCMark 10: Industry Standard

Futuremark, now known as UL, has been developing tests that have become industry standards for two decades. The last set of system tests is PCMark 10, where several tests are improved compared to PCMark 8, and more attention is paid to OpenCL, specifically in cases such as video streaming.

PCMark splits its scores into roughly 14 different areas, including launching applications, web pages, spreadsheets, photo editing, rendering, video conferencing, and physics. We publish all of this data in our Bench database, but the overall score is the key indicator for the current review.

A tool like PCMark does not actually show the real scale of the differences, with the exception of the main tests, which are fully multithreaded, and in which the 9700K takes advantage. The 7700K outperforms the regular 2600K by only 17%, and the gap is reduced to 5% compared to the overclocked version. Perhaps this is also an indicator of how often you feel the difference between the new 7700K and the overclocked 2600K: 5% of the time. Of course, it depends on your load balance.

Chromium Compile: Windows VC++ Compile of Chrome 56

A large number of AnandTech readers are software engineers who look at how the hardware performs. Although compiling the Linux kernel is the “standard” test for reviewers who compile often, our test is a little more varied: we use the Windows instructions to compile Chrome, specifically the Chrome 56 build from March 2017, which was current when we created the test. Google gives quite detailed instructions on how to compile under Windows after downloading 400,000 files from the repository.

In our test, following Google's instructions, we use the MSVC compiler with ninja to manage the compilation. As expected, this is a variably threaded test with variable DRAM requirements, and it benefits from faster caches. The result of our test is the time taken for the compilation, which we convert into the number of compiles per day.
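The conversion from compile time to compiles per day is plain arithmetic: divide the number of seconds in a day by the measured time. A minimal sketch, with a hypothetical function name and a made-up example time:

```python
SECONDS_PER_DAY = 24 * 60 * 60


def compiles_per_day(compile_time_s: float) -> float:
    """Convert one measured compile time in seconds into compiles per day."""
    if compile_time_s <= 0:
        raise ValueError("compile time must be positive")
    return SECONDS_PER_DAY / compile_time_s


if __name__ == "__main__":
    # 5400 s (90 minutes) is an illustrative value, not a measured result.
    print(f"{compiles_per_day(5400):.1f} compiles per day")
```

Expressing the result as a rate means that higher is better, in line with most of the other charts.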

This compilation test prefers the 9700K's cores over SMT, and again we see that the overclocked 2600K sits in the middle between the stock 7700K and the stock 2600K. Even without overclocking, the 7700K is stronger.

3DMark Physics: computing physics in games

Alongside PCMark is 3DMark, Futuremark's (UL's) set of gaming tests. Each gaming test consists of one or two GPU-heavy scenes as well as a physics test, depending on when the test was written and what platform it targets. The main tests, in order of increasing difficulty, are Ice Storm, Cloud Gate, Sky Diver, Fire Strike and Time Spy.

Some of the subtests offer other options, such as Ice Storm Unlimited, which is designed for mobile platforms with off-screen rendering, or Fire Strike Ultra, which is designed for high-performance 4K systems with a lot of added features. It is worth noting that Time Spy currently has the AVX-512 mode (which we can use in the future).

As for our tests, we send the results of each physics test to Bench, but for the review we stick to the results of only the most demanding scenes: Ice Storm Unlimited, Cloud Gate, Sky Diver, Fire Strike Ultra and Time Spy.

GeekBench4: Synthetic Test

A common tool for cross-platform testing across mobile devices, PCs and Macs, GeekBench 4 is the ultimate synthetic test of a system, using a range of algorithms that look for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I am including this test due to popular demand, although the results are very synthetic. Many users attach great importance to its results because it is compiled for different platforms (albeit with different compilers).

We record the ratings of the main subtests (Crypto, Integer, Floating Point, Memory) in our database of test results, but for the review we publish only general single-threaded and multi-threaded results.

Encoding tests

With the growing number of streams, video blogs and video content in general, encoding and transcoding tests are becoming increasingly important. Not only are more and more home users and gamers converting video files and video streams, but the servers that process data streams also need on-the-fly encryption, as well as compression and decompression of logs. Our encoding tests target these scenarios and take community feedback into account in order to provide the most relevant results.

All our test results can also be found on our Bench page.

Handbrake 1.1.0: Streaming and Archival Video Transcoding

A popular open-source tool, Handbrake is software for converting video in any way possible and is, in a sense, the reference standard. The danger here lies in version numbers and optimization. For example, recent versions of the software can take advantage of AVX-512 and OpenCL to speed up certain types of transcoding and certain algorithms. The version we use is pure CPU work, with standard transcoding options.

We split Handbrake into several tests, using a native recording from a Logitech C920 1080p60 webcam (essentially a stream recording). The recording is converted into two streaming formats and one archival format. The output parameters used are:

- 720p60 at 6000 kbps constant bit rate, fast setting, high profile

- 1080p60 at 3500 kbps constant bit rate, faster setting, main profile

- 1080p60 HEVC at 3500 kbps variable bit rate, fast setting, main profile

7-zip v1805: Popular Open-Source Archiver

Of all our archiving / unarchiving tests, 7-zip is the most popular and has a built-in benchmark. We added the latest version of this software to our test suite, and we launch the benchmark from the command line. The results of archiving and unarchiving are displayed as a single total score.

This test clearly shows that modern multi-die processors have a large performance gap between compression and decompression: they do well in one and poorly in the other. In addition, we are actively discussing how the Windows Scheduler places each thread. When we have more results, we will be happy to share our thoughts.

Please note: if you plan to publish the compression data anywhere, please include the decompression results as well. Otherwise you are presenting only half of the result.

WinRAR 5.60b3: Archiver

When I need a compression tool, I usually choose WinRAR. Many users of my generation used it more than two decades ago. The interface has not changed much, although integration with right-click commands in Windows is a very nice plus. It does not have a built-in benchmark, so we start compressing a directory containing more than 30 60-second video files and 2000 small web files with normal compression speed.

WinRAR is variably threaded and sensitive to caching, so we run the test 10 times and average the last five runs, so that the result reflects processor performance only.

WinRAR finally allows the overclocked 2600K to almost equal the stock 7700K. This is a multi-threading test, and it does not seem to use any of the new instructions offered by the 7700K. However, a larger number of cores in 9700K, as opposed to threads, brings a big bonus, as does the upgraded DRAM.

AES Encryption: File Protection

A number of platforms, especially mobile devices, encrypt their file systems by default to protect content. On Windows devices, encryption is often provided by BitLocker or third-party software. For the AES encryption test, we use the discontinued TrueCrypt and its built-in benchmark, which tests several encryption algorithms directly in memory.

The result of this test is the combined AES encryption / decryption performance, measured in gigabytes per second. The software uses AES instructions if the processor supports them, but does not use AVX-512.

Web Tests and Legacy Tests

Because they focus on low-end or small form factor systems, web tests are usually difficult to standardize. Modern web browsers update frequently, often with no way to disable the updates, so it is difficult to maintain a common platform. The rapid pace of browser development means that versions (and performance figures) can change from week to week. Despite this, web tests are often an important indicator for users: much of today's office work revolves around web applications, especially email and office applications, as well as interfaces and development environments. Our web test suite includes several industry-standard tests, as well as several popular but somewhat outdated ones.

We also included our obsolete but still popular tests in this section.

All our test results can also be found on our Bench page.

WebXPRT 3: Web tasks of the modern world, including AI

The company behind the XPRT test suites, Principled Technologies, recently released its latest web test, and instead of adding a year to the name, they simply called it “3”. This latest (at least for now) test builds on its predecessors: user interaction tests, office computing, graphing, list sorting, HTML5, image manipulation, and in some cases even AI tests.

For our benchmark, we run the standard test, which goes through the list of workloads seven times and gives a final result. We run this test four times and report the average.

Users can access the WebXPRT test at

WebXPRT 2015: Testing HTML5 and Javascript Web UX

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks, which, however, are used today. This is still a relevant test, especially for users who interact with not the latest web applications on the market, and there are many such users. The development of web frameworks is very fast and highly fluid. The frameworks are quickly developed, built into applications, used, and then the developers move on to the next. Adaptation of the application to the new framework is a difficult task, especially with such a speed of development cycles. For this reason, many applications are "stuck in time", and remain relevant for users for many years.

As with WebXPRT 3, the main benchmark runs through the list of workloads seven times and produces a final score. We repeat this four times and report the average.

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which runs through a series of JavaScript frameworks to do just three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues but, obviously, do so in different ways.

Our test runs through the entire list of frameworks and produces a final score expressed as "rpm", one of the benchmark's internal metrics. We report this value as the final result.

Google Octane 2.0: Core Web Compute

Octane from Google was a popular web test for several years but is no longer updated. Version 2.0 performs a couple of dozen compute-related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and returns the geometric mean as the final result. We run the complete benchmark four times and average the final results.
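As a rough illustration of the scoring described above, the sketch below computes a geometric mean over sub-test scores and then averages several runs. The function names and the sample scores are invented for the example; this is not Octane's own code and the numbers are not measured results.

```python
import math


def geometric_mean(scores):
    """Geometric mean of a non-empty list of positive sub-test scores."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty list of positive numbers")
    # Averaging the logarithms avoids overflow when many large scores
    # would otherwise be multiplied together.
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def average_of_runs(runs):
    """Arithmetic mean of the per-run geometric means."""
    per_run = [geometric_mean(run) for run in runs]
    return sum(per_run) / len(per_run)


# Hypothetical sub-test scores for four runs (illustrative values only).
runs = [
    [100.0, 400.0],
    [100.0, 400.0],
    [200.0, 800.0],
    [200.0, 800.0],
]
final_score = average_of_runs(runs)
```

A geometric mean is a common choice for this kind of composite score because a single very high sub-test result cannot dominate the total the way it would in an arithmetic mean.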

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This older test exercises similar computational mechanics, such as audio processing and image filtering. Kraken seems to produce highly variable results depending on the browser version, because browsers are heavily optimized for it.

The main benchmark runs through each of the sub-tests ten times and returns the average completion time of each loop in milliseconds. We run the full benchmark four times and report the average.
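The aggregation described above can be sketched as follows, assuming each run yields ten loop times in milliseconds. The function name and the timings are hypothetical; Kraken's own reporting code is not shown in the source.

```python
from statistics import mean


def kraken_style_result(runs_ms):
    """Mean loop time per run, then the mean across runs (milliseconds)."""
    if not runs_ms or any(len(loops) == 0 for loops in runs_ms):
        raise ValueError("every run needs at least one loop time")
    return mean(mean(loops) for loops in runs_ms)


# Hypothetical timings: four runs of ten loops each (not measured data).
runs_ms = [
    [1000.0] * 10,
    [1100.0] * 10,
    [900.0] * 10,
    [1000.0] * 10,
]
result_ms = kraken_style_result(runs_ms)
```

Because the result is a time rather than a score, lower values are better, unlike the score-based tests earlier in this section.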

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM test. This is the ultimate naïve version of the code, as if it had been written by a scientist with no knowledge of how computer hardware, compilers, or optimization work (which, at the very beginning, it was). It represents a large body of scientific simulation in the wild, where getting the answer matters more than getting it fast: a result in 4 days is acceptable if it is correct, whereas spending a year learning to program in order to get the result in 5 minutes is not.

In this version, the only real optimizations were the compiler flags (-O2, -fp:fast), compiling in release mode, and enabling OpenMP in the main compute loops. The loops were not tuned for function size, and the most serious slowdown is false sharing in the cache. The code also has long dependency chains based on random number generation, which reduces performance on some compute microarchitectures.
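To make the dependency-chain point concrete, here is a minimal sketch of a random number generator in which every value depends on the previous one. The source does not say which generator 3DPM uses; the linear congruential generator and its constants below are assumptions chosen only for illustration, and Python is used to show the data dependency rather than the performance effect.

```python
MODULUS = 2**32
MULTIPLIER = 1664525    # assumed LCG constants, for illustration only
INCREMENT = 1013904223


def lcg_next(state):
    """One generator step; the output depends on the previous state."""
    return (MULTIPLIER * state + INCREMENT) % MODULUS


def serial_chain(seed, count):
    """One long chain: value i cannot be computed before value i-1."""
    values = []
    state = seed
    for _ in range(count):
        state = lcg_next(state)
        values.append(state)
    return values


def independent_chains(seeds, count):
    """Several chains advanced in lockstep. Within a step, no chain
    needs the result of any other chain."""
    states = list(seeds)
    history = [[] for _ in seeds]
    for _ in range(count):
        states = [lcg_next(s) for s in states]
        for chain, state in zip(history, states):
            chain.append(state)
    return history
```

In compiled code, a single long chain like `serial_chain` leaves the processor waiting on each previous result, whereas the per-step work in `independent_chains` has no cross-chain dependencies and could in principle be overlapped.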

3DPM v1 as well as 3DPM v2 can be downloaded here: 3DPMv2.1.rar (13.0 MB)

x264 HD 3.0: legacy transcoding test

This transcoding test is very old; AnandTech used it back in the days of the Pentium 4 and Athlon II processors. A standardized 720p video is transcoded with a two-pass conversion, and the benchmark reports the frames per second of each pass. The test is single-threaded, and on some microarchitectures we run into an IPC (instructions-per-clock) wall.
