How One PostgreSQL Configuration Change Improves Slow Query Performance 50 Times

Hello, Khabrovites! I bring to your attention a translation of the article "How a single PostgreSQL config change improved slow query performance by 50x" by Pavan Patibandla. It helped me a lot to improve the performance of PostgreSQL.

At Amplitude, our goal is to provide easy-to-use interactive product analytics so that everyone can find answers to their questions about the product. To ensure usability, Amplitude must provide these answers quickly. Therefore, when one of our customers complained about how long it took to load the drop-down list of event properties in the Amplitude user interface, we started a detailed study of the problem.

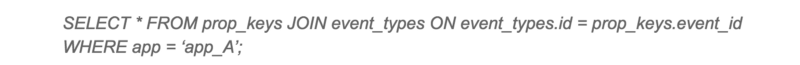

By tracking the delay at different levels, we realized that it took 20 seconds for one particular PostgreSQL query to complete. This came as a surprise to us, since both tables have indexes in the join column.

Slow query

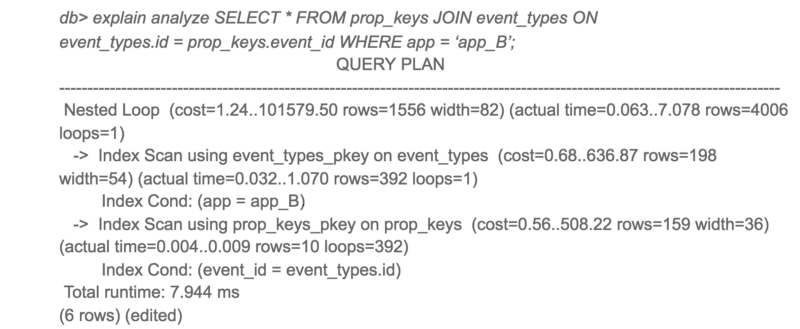

The PostgreSQL execution plan for this query was unexpected for us. Despite the fact that both tables have indexes, PostgreSQL decided to perform a Hash Join with sequential scanning of a large table. Sequentially scanning a large table took up most of the query time.

Slow Query Execution Plan

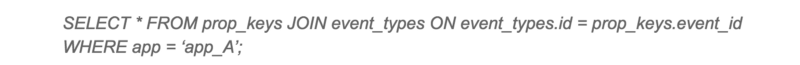

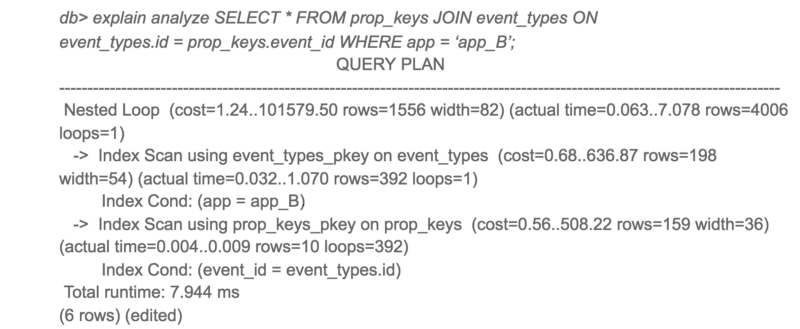

I initially suspected that this might be due to fragmentation. But after checking the data, I realized that data is only added to this table and practically not deleted from there. Since clearing the place with VACUUM won't help much here, I started digging further. Then I tried the same request on another client with a good response time. To my surprise, the query execution plan looked completely different!

The execution plan of the same request on another client

It is interesting that application A gained access only to 10 times more data than application B, but the response time was 3000 times longer.

To see alternative PostgreSQL query plans, I turned off the hash connection and restarted the query.

Alternative execution plan for slow query

Here you go! The same request completes 50 times faster when using a nested loop instead of a hash join. So why did PostgreSQL choose the worst plan for application A?

With a closer look at the estimated cost and actual lead time for both plans, the estimated ratios of cost and actual lead time were very different. The main culprit for this discrepancy was the cost estimate of sequential scanning. PostgreSQL estimates that sequential scans would be better than 4000+ index scans, but in fact, index scans were 50 times faster.

This led me to the random_page_cost and seq_page_cost configuration options . PostgreSQL defaults 4and 1 for random_page_cost , seq_page_cost , which are configured for the HDD, where random access to the disk is more expensive than sequential access. However, these costs were inaccurate for our deployment using the gp2 EBS volume , which are solid state drives. For our deployment, random and sequential access is almost the same.

I changed the value of random_page_cost to 1 and retried the request. This time, PostgreSQL used the Nested Loop, and the query ran 50 times faster. After the change, we also noticed a significant decrease in the maximum response time from PostgreSQL.

The overall performance of the slow query has improved significantly.

If you use SSD and use PostgreSQL with the default configuration, I advise you to try setting up random_page_cost and seq_page_cost . You may be surprised at the dramatic performance improvement.

I’ll add on my own that I set the minimum parameters seq_page_cost = random_page_cost = 0.1, to give priority to the data in memory (cache) over processor operations, since I have allocated a large amount of RAM for PostgreSQL (the size of the RAM exceeds the size of the database on the disk). It is not very clear why the postgres community still uses the default settings that are relevant for a server with a small amount of RAM and HDDs, and not for modern servers. Hopefully this will be fixed soon.

At Amplitude, our goal is to provide easy-to-use interactive product analytics so that everyone can find answers to their questions about the product. To ensure usability, Amplitude must provide these answers quickly. Therefore, when one of our customers complained about how long it took to load the drop-down list of event properties in the Amplitude user interface, we started a detailed study of the problem.

By tracking the delay at different levels, we realized that it took 20 seconds for one particular PostgreSQL query to complete. This came as a surprise to us, since both tables have indexes in the join column.

Slow query

The PostgreSQL execution plan for this query was unexpected for us. Despite the fact that both tables have indexes, PostgreSQL decided to perform a Hash Join with sequential scanning of a large table. Sequentially scanning a large table took up most of the query time.

Slow Query Execution Plan

I initially suspected that this might be due to fragmentation. But after checking the data, I realized that data is only added to this table and practically not deleted from there. Since clearing the place with VACUUM won't help much here, I started digging further. Then I tried the same request on another client with a good response time. To my surprise, the query execution plan looked completely different!

The execution plan of the same request on another client

It is interesting that application A gained access only to 10 times more data than application B, but the response time was 3000 times longer.

To see alternative PostgreSQL query plans, I turned off the hash connection and restarted the query.

Alternative execution plan for slow query

Here you go! The same request completes 50 times faster when using a nested loop instead of a hash join. So why did PostgreSQL choose the worst plan for application A?

With a closer look at the estimated cost and actual lead time for both plans, the estimated ratios of cost and actual lead time were very different. The main culprit for this discrepancy was the cost estimate of sequential scanning. PostgreSQL estimates that sequential scans would be better than 4000+ index scans, but in fact, index scans were 50 times faster.

This led me to the random_page_cost and seq_page_cost configuration options . PostgreSQL defaults 4and 1 for random_page_cost , seq_page_cost , which are configured for the HDD, where random access to the disk is more expensive than sequential access. However, these costs were inaccurate for our deployment using the gp2 EBS volume , which are solid state drives. For our deployment, random and sequential access is almost the same.

I changed the value of random_page_cost to 1 and retried the request. This time, PostgreSQL used the Nested Loop, and the query ran 50 times faster. After the change, we also noticed a significant decrease in the maximum response time from PostgreSQL.

The overall performance of the slow query has improved significantly.

If you use SSD and use PostgreSQL with the default configuration, I advise you to try setting up random_page_cost and seq_page_cost . You may be surprised at the dramatic performance improvement.

I’ll add on my own that I set the minimum parameters seq_page_cost = random_page_cost = 0.1, to give priority to the data in memory (cache) over processor operations, since I have allocated a large amount of RAM for PostgreSQL (the size of the RAM exceeds the size of the database on the disk). It is not very clear why the postgres community still uses the default settings that are relevant for a server with a small amount of RAM and HDDs, and not for modern servers. Hopefully this will be fixed soon.